In the world of modern computing, where data flows ceaselessly between processors, memory, and networks, there is a fundamental concept that casual observers often overlook but that is critical to the correct interpretation and operation of digital systems: endianness. Endianness is a property of processor architectures, file formats, and communication protocols alike. It dictates the order in which the bytes of multi-byte data (such as integers, floating-point numbers, or memory addresses) are stored in memory and transmitted across data links. Understanding endianness isn’t merely an academic exercise; it’s a cornerstone for building robust, interoperable, and efficient technology, especially in an era defined by diverse hardware architectures, distributed systems, AI, and autonomous technologies. From the embedded systems controlling drones to the high-performance computing clusters processing vast datasets for machine learning, the seemingly simple choice of byte order can spell the difference between seamless functionality and catastrophic data corruption.

The Fundamental Concept: Byte Ordering in Digital Systems

At its core, endianness is about how a computer organizes bytes. When we deal with single bytes (8 bits), there’s no ambiguity. But when a piece of data, such as a 32-bit integer or a 64-bit floating-point number, occupies more than one byte of memory, the question arises: which byte comes first? This seemingly trivial decision has profound implications for how different computer systems communicate and interpret data.

Defining Endianness: Big-Endian and Little-Endian

There are two primary forms of endianness:

-

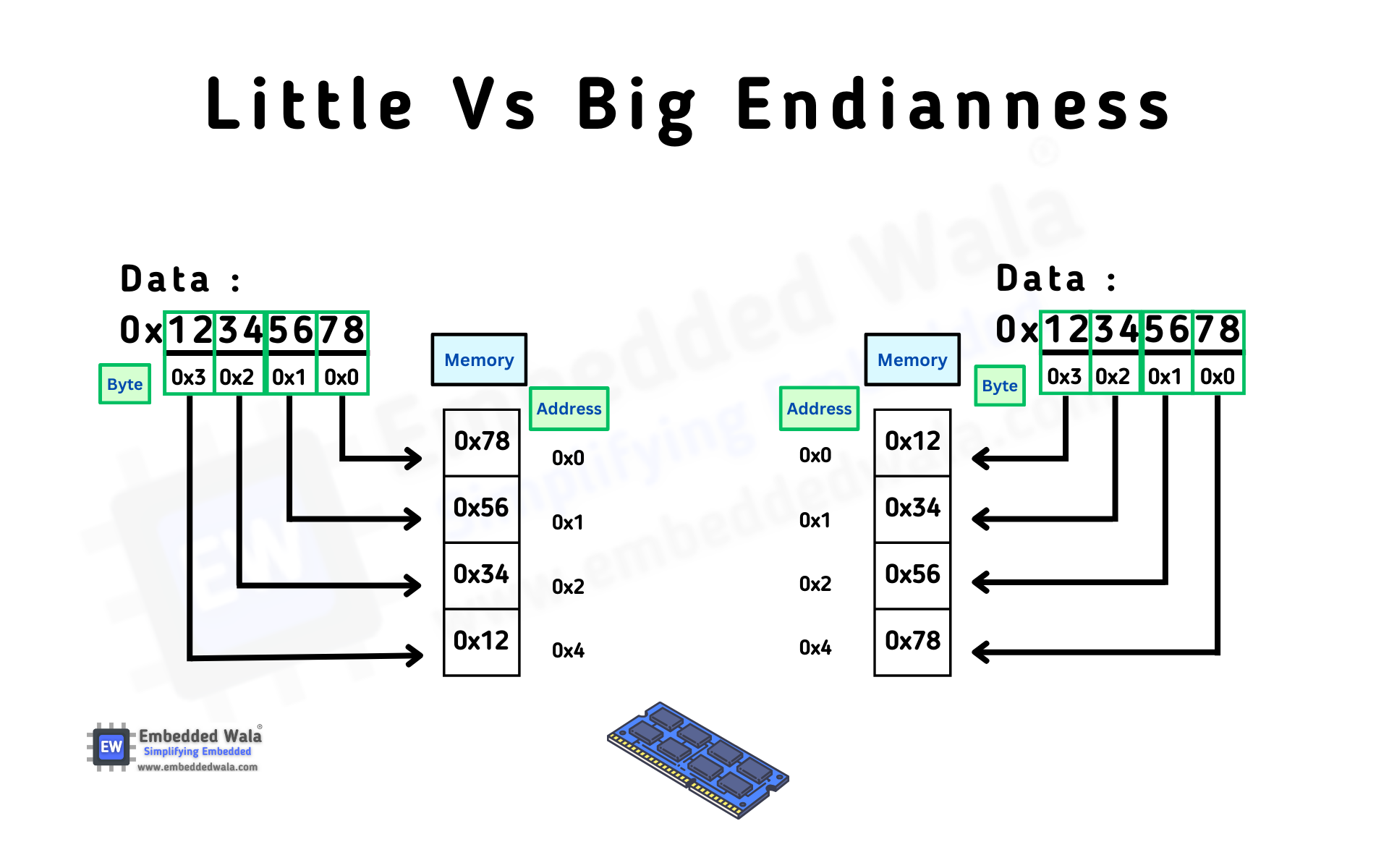

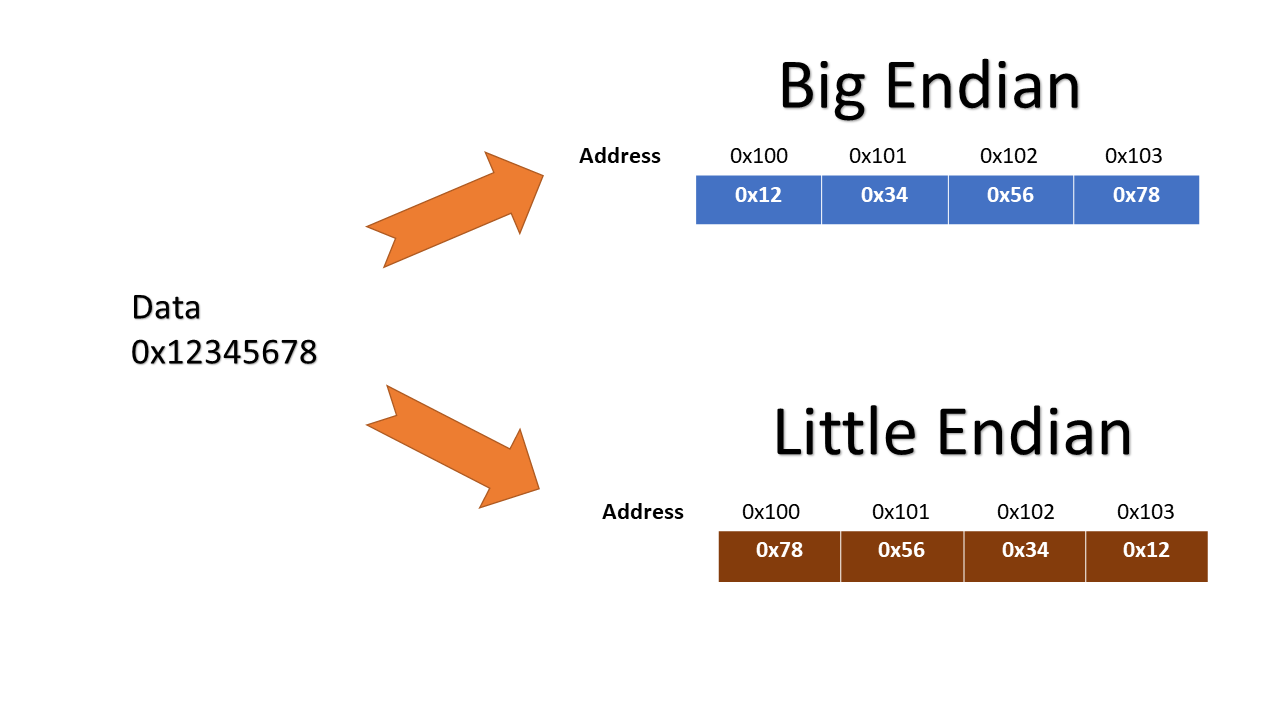

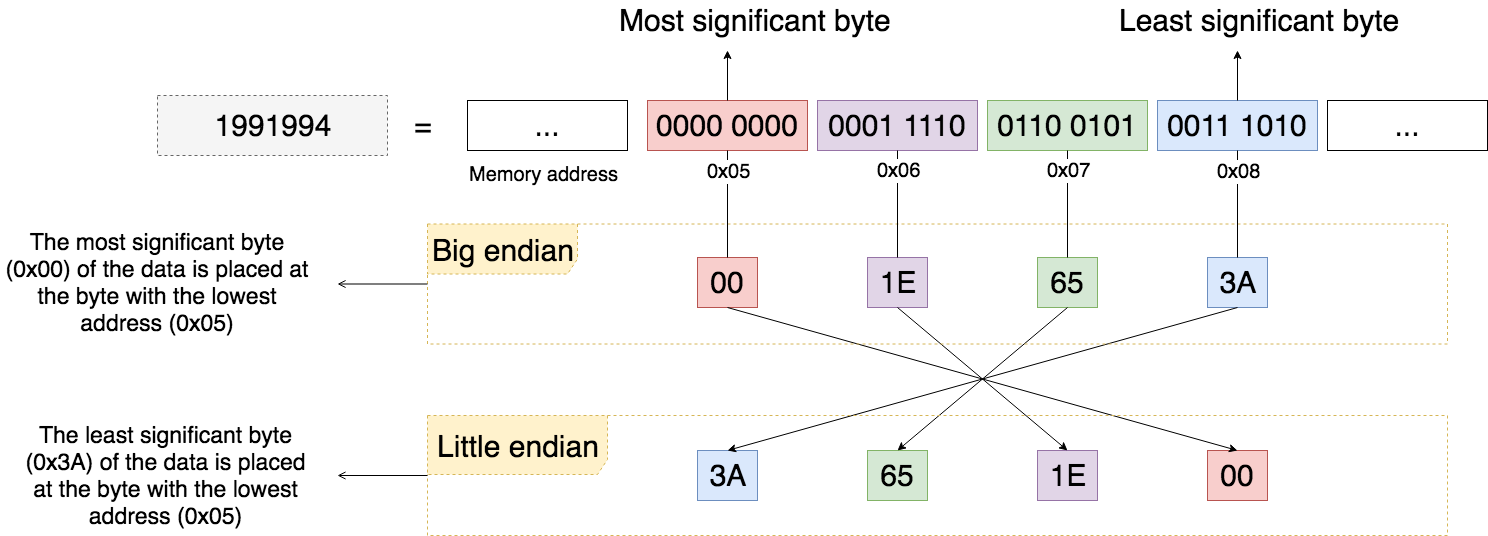

- Big-Endian: In a big-endian system, the most significant byte (MSB) of a multi-byte data type is stored at the lowest memory address, and the least significant byte (LSB) is stored at the highest memory address. Think of it like reading numbers from left to right, as we do in conventional writing: the “biggest” part of the number comes first. For example, if the hexadecimal value 0x12345678 (where 0x12 is the MSB and 0x78 is the LSB) is stored in memory starting at address 0x1000:

  - 0x1000: 0x12
  - 0x1001: 0x34
  - 0x1002: 0x56
  - 0x1003: 0x78

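Big-endian is also known as “network byte order” because the Internet protocol suite uses it for multi-byte header fields. It is also the native order of processors such as the Motorola 68000 family and IBM’s System/360 and z/Architecture mainframes.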

- Little-Endian: Conversely, in a little-endian system, the least significant byte (LSB) is stored at the lowest memory address, and the most significant byte (MSB) is stored at the highest memory address. This is akin to writing numbers right-to-left, with the “smallest” part first. Using the same hexadecimal value 0x12345678 at address 0x1000:

  - 0x1000: 0x78
  - 0x1001: 0x56
  - 0x1002: 0x34
  - 0x1003: 0x12

The vast majority of modern consumer PCs, based on Intel x86 or AMD64 architectures, are little-endian.
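To see both layouts concretely, the short Python sketch below uses the built-in `int.to_bytes` method to lay out 0x12345678 in each byte order. It prints every byte next to its address. The base address 0x1000 is an illustrative assumption chosen to match the example above, and `sys.byteorder` reports the native order of whatever host runs the script.

```python
import sys

VALUE = 0x12345678
BASE_ADDRESS = 0x1000  # illustrative address, matching the example above


def layout(value: int, byteorder: str) -> list[tuple[int, int]]:
    """Return (address, byte) pairs for a 32-bit value in the given byte order."""
    raw = value.to_bytes(4, byteorder)  # byteorder is "big" or "little"
    return [(BASE_ADDRESS + offset, b) for offset, b in enumerate(raw)]


if __name__ == "__main__":
    for order in ("big", "little"):
        print(f"{order}-endian:")
        for address, byte in layout(VALUE, order):
            print(f"  0x{address:04X}: 0x{byte:02X}")
    print(f"This host is {sys.byteorder}-endian.")
```

Because the byte order is passed explicitly, the output is the same on every machine. Only the last line depends on the host.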

Why Does Endianness Exist? A Historical Perspective

The emergence of two distinct endian conventions is largely attributed to historical design choices made by early computer architects. Danny Cohen applied the terms “big-endian” and “little-endian” to computing in his 1980 note “On Holy Wars and a Plea for Peace,” drawing from Jonathan Swift’s satire Gulliver’s Travels, in which two warring factions fight over whether to break eggs at the big end or the little end.

The choice between big-endian and little-endian often came down to processor design philosophy and optimization. Big-endian layouts match the order in which people write numbers, which makes memory dumps easy to read. They also put the most significant byte, and therefore the sign bit, at the starting address, which is convenient for sign tests and magnitude comparisons. Little-endian layouts put the low-order byte at the starting address, so the same address can be used to read a value as a byte, a 16-bit word, or a 32-bit double word without adjusting the pointer. This makes converting between data sizes simple. There was no universally “better” choice, leading to a bifurcation that continues to influence modern computing.
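The little-endian property described above can be checked with Python’s `struct` module. In a little-endian encoding, reading 1, 2, or 4 bytes from the same starting offset yields the low-order byte, 16 bits, or 32 bits of the value. A big-endian encoding yields the high-order bits instead. The 64-bit value used here is arbitrary, and `narrow_reads` is a hypothetical helper written for this illustration.

```python
import struct

VALUE = 0x1122334455667788  # arbitrary 64-bit example value

little = struct.pack("<Q", VALUE)  # '<' = little-endian, Q = unsigned 64-bit
big = struct.pack(">Q", VALUE)     # '>' = big-endian


def narrow_reads(buf: bytes, prefix: str) -> tuple:
    """Read 8-, 16- and 32-bit unsigned integers, all from offset 0 of buf."""
    return tuple(struct.unpack_from(prefix + code, buf, 0)[0] for code in "BHI")


for name, buf, prefix in (("little", little, "<"), ("big", big, ">")):
    byte, half, word = narrow_reads(buf, prefix)
    print(f"{name}: byte=0x{byte:02X} half=0x{half:04X} word=0x{word:08X}")
# little: byte=0x88 half=0x7788 word=0x55667788  (low-order bits of VALUE)
# big: byte=0x11 half=0x1122 word=0x11223344     (high-order bits of VALUE)
```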

The Ramifications of Misunderstanding Endianness

The critical issue arises when data is moved between systems with different endian conventions. Suppose a big-endian system writes the value 0x12345678 to a network socket, and a little-endian system copies those four bytes directly into a native integer without conversion. The receiver will interpret them as 0x78563412. This mismatch leads to incorrect values, corrupted data, and potentially system crashes or security vulnerabilities. In interconnected systems, where data exchange is constant, such misinterpretations can undermine the reliability and integrity of everything from sensor networks to autonomous vehicles.
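The misreading is easy to reproduce. In this sketch, the sender’s bytes are produced in big-endian order with `int.to_bytes`. The receiver then decodes them twice: once with the agreed order, and once naively as little-endian. The naive decode mimics what a little-endian host does if it simply copies the bytes into a native integer.

```python
SENT_VALUE = 0x12345678

# Bytes as a big-endian sender would put them on the wire.
wire = SENT_VALUE.to_bytes(4, "big")

# Receiver that honours the agreed (big-endian) order.
correct = int.from_bytes(wire, "big")

# Receiver that wrongly treats the bytes as its native little-endian order.
naive = int.from_bytes(wire, "little")

print(f"correct 0x{correct:08X}")  # prints "correct 0x12345678"
print(f"naive   0x{naive:08X}")    # prints "naive   0x78563412"
```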

Endianness in Modern Tech & Innovation

In the rapidly evolving landscape of technology and innovation, endianness is far from an arcane curiosity; it is a vital consideration that impacts interoperability, data integrity, and the very foundation of heterogeneous computing environments. Its influence is felt across various cutting-edge fields.

Data Communication Protocols and Interoperability

One of the most significant domains where endianness plays a crucial role is data communication. Networks are inherently heterogeneous. A packet might originate from an Intel-based (little-endian) server, traverse a big-endian router, and be received by an ARM-based (potentially bi-endian) embedded system or another little-endian client. To ensure seamless communication, a common standard for byte order must be established. The Internet protocol suite (TCP/IP) mandates “network byte order,” which is explicitly big-endian. This means that multi-byte fields in protocol headers, such as IP addresses, port numbers, and packet lengths, must be converted to big-endian before transmission and converted back to the host’s native byte order upon reception. Application payloads follow whatever byte order their own format defines. Failure to adhere to this standard would result in garbled headers, failed connections, and broken communication, undermining the global connectivity on which cloud computing and distributed services depend.
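As an illustration of network byte order, the following sketch packs and unpacks an 8-byte UDP header using Python’s `struct` module. The header consists of four 16-bit fields defined in RFC 768: source port, destination port, length, and checksum. In a `struct` format string, the `!` prefix means network (big-endian) byte order with no padding. The port numbers and length are arbitrary example values. The checksum is left at zero for simplicity (over IPv4, zero means “no checksum computed”) rather than calculated.

```python
import struct

UDP_HEADER = struct.Struct("!HHHH")  # '!' = network byte order, no padding


def pack_udp_header(src_port: int, dst_port: int, length: int, checksum: int = 0) -> bytes:
    """Serialize the four 16-bit UDP header fields in big-endian order."""
    return UDP_HEADER.pack(src_port, dst_port, length, checksum)


def unpack_udp_header(data: bytes) -> tuple[int, int, int, int]:
    """Parse the first 8 bytes of data as a UDP header."""
    return UDP_HEADER.unpack_from(data, 0)


header = pack_udp_header(src_port=5000, dst_port=53, length=40)
print(header.hex(" "))            # prints "13 88 00 35 00 28 00 00"
print(unpack_udp_header(header))  # prints "(5000, 53, 40, 0)"
```

Because the byte order is part of the format string, the same bytes are produced on little-endian and big-endian hosts alike.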

Embedded Systems and IoT Devices

The proliferation of embedded systems and Internet of Things (IoT) devices is a hallmark of modern innovation. From smart home sensors to industrial control systems and, crucially, drone flight controllers, these devices often run on resource-constrained microcontrollers and microprocessors whose endianness varies widely. For instance, the ARM Cortex-M architecture allows either byte order, although the choice is fixed by the chip vendor and most parts are little-endian. PIC microcontrollers are typically little-endian, and older PowerPC chips were typically big-endian.

When designing or integrating these devices, especially within a larger IoT ecosystem, endianness becomes a critical consideration. Imagine a drone’s flight controller (an embedded system) communicating with its GPS module, IMU (Inertial Measurement Unit), or a ground control station. If the GPS module sends latitude/longitude coordinates as a 32-bit float in little-endian format, but the flight controller’s processor expects big-endian data, the drone might misinterpret its position, leading to erratic flight, navigation errors, or even crashes. Similarly, telemetry data streamed from a drone to a ground station must be correctly ordered to provide accurate real-time insights for autonomous flight algorithms or pilot intervention. Ensuring correct byte order is paramount for the safety, reliability, and functionality of autonomous and smart devices.
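A safer pattern is to decode every field with an explicitly stated byte order rather than relying on the host’s. The sketch below assumes a hypothetical little-endian telemetry frame: a 32-bit millisecond timestamp, followed by latitude and longitude as 32-bit floats. The layout, field names, and coordinate values are invented for illustration and do not correspond to any particular GPS module’s protocol.

```python
import struct
from typing import NamedTuple

# Hypothetical frame layout: '<' = little-endian, I = uint32, f = float32.
FRAME = struct.Struct("<Iff")


class Fix(NamedTuple):
    timestamp_ms: int
    latitude: float
    longitude: float


def decode_fix(frame: bytes) -> Fix:
    """Decode a frame using the byte order the (hypothetical) sender documents."""
    return Fix(*FRAME.unpack(frame))


# Simulate a sender producing a little-endian frame (arbitrary example values).
raw = FRAME.pack(123456, 47.3977, 8.5456)
fix = decode_fix(raw)
print(fix)

# Decoding the same bytes with the wrong byte order yields meaningless values.
wrong = Fix(*struct.unpack(">Iff", raw))
print(wrong)
```

The decoded coordinates differ slightly from the inputs only because of float32 rounding. The wrongly ordered decode, by contrast, produces values unrelated to the drone’s position.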

Artificial Intelligence and Machine Learning Data Pipelines

The advancements in Artificial Intelligence and Machine Learning are heavily reliant on the efficient processing and exchange of vast amounts of data. This data often originates from diverse sources, is processed on different hardware architectures (CPUs, GPUs, TPUs), and is consumed by various models and applications. Endianness can introduce subtle yet critical issues in this pipeline.

Consider a scenario where a machine learning model is trained on a supercomputing cluster that might use big-endian architectures, but the inference is deployed on a cloud-based server or an edge device (like an AI-powered drone) running a little-endian processor. When serializing and deserializing data (e.g., images, sensor readings, model weights, feature vectors), byte order must be meticulously managed. If a feature vector represented as an array of floating-point numbers is saved to a file on a big-endian system and then loaded directly into memory on a little-endian system without proper conversion, the numerical values will be corrupted. This can lead to erroneous predictions, model failures, or incorrect classifications, severely impacting the reliability of AI-driven applications such as object detection in autonomous vehicles, remote sensing image analysis, or predictive maintenance in industrial IoT. Data integrity through correct endian handling is non-negotiable for trustworthy AI systems.
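One way to guard such a pipeline is to fix the on-disk byte order and convert at the boundary. This sketch uses Python’s standard `array` module and `sys.byteorder` to write a float32 feature vector in an explicitly big-endian layout and read it back correctly on any host. It assumes a hypothetical file format that specifies big-endian storage and a platform whose C `float` is 4 bytes, which holds on mainstream platforms. NumPy expresses the same idea with byte-order-qualified dtypes such as `'>f4'`.

```python
import array
import sys

FILE_BYTEORDER = "big"  # byte order the (hypothetical) file format specifies


def encode_features(values: list[float]) -> bytes:
    """Serialize float32 values in FILE_BYTEORDER, whatever the host order is."""
    buf = array.array("f", values)
    if buf.itemsize != 4:
        raise RuntimeError("this sketch expects a 4-byte C float")
    if sys.byteorder != FILE_BYTEORDER:
        buf.byteswap()  # convert native order -> file order
    return buf.tobytes()


def decode_features(data: bytes) -> list[float]:
    """Deserialize float32 values stored in FILE_BYTEORDER into native floats."""
    buf = array.array("f")
    buf.frombytes(data)
    if sys.byteorder != FILE_BYTEORDER:
        buf.byteswap()  # convert file order -> native order
    return buf.tolist()


features = [0.5, -1.25, 3.0]
blob = encode_features(features)
print(blob.hex(" "))           # prints "3f 00 00 00 bf a0 00 00 40 40 00 00"
print(decode_features(blob))   # prints "[0.5, -1.25, 3.0]"
```

The example values are exactly representable in float32, so the round trip is exact. Arbitrary doubles would be rounded to float32 precision on encoding.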

Practical Implications and Mitigation Strategies

Addressing endianness is a fundamental aspect of robust software and hardware design in any interconnected and diverse technological landscape. Proactive strategies are essential to prevent data corruption and ensure seamless operation.

Network Byte Order: A Standard Solution

As highlighted, the Internet Protocol (IP) suite standardizes on big-endian as network byte order. Multi-byte protocol fields must therefore be converted to big-endian before transmission and back to the host’s native byte order upon reception. The BSD/POSIX socket API (declared in <arpa/inet.h>, with equivalents in Winsock and in other languages) provides four functions for this purpose: htons (host to network short), htonl (host to network long), ntohs (network to host short), and ntohl (network to host long). In these names, “short” means a 16-bit value and “long” means a 32-bit value. The functions hide the host’s endianness: they swap bytes on little-endian hosts and leave values unchanged on big-endian ones. Adhering to this convention is crucial for any network-aware application, from web servers to drone telemetry systems, ensuring universal interoperability.
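Python exposes the same four conversions in its `socket` module as `socket.htons`, `socket.htonl`, `socket.ntohs`, and `socket.ntohl`. The sketch below shows that the host-to-network functions swap bytes on a little-endian host, and that converting to network order and back always restores the original value. The port and address are arbitrary example values.

```python
import socket
import sys

PORT = 0x1F90          # 8080, a 16-bit example value
ADDRESS = 0xC0A80001   # 192.168.0.1 as a 32-bit example value

net_port = socket.htons(PORT)     # host -> network, 16-bit
net_addr = socket.htonl(ADDRESS)  # host -> network, 32-bit

print(f"host byte order: {sys.byteorder}")
print(f"htons(0x{PORT:04X}) = 0x{net_port:04X}")      # 0x901F on little-endian hosts
print(f"htonl(0x{ADDRESS:08X}) = 0x{net_addr:08X}")  # 0x0100A8C0 on little-endian hosts

# Converting back with ntohs/ntohl restores the original value on every host.
assert socket.ntohs(net_port) == PORT
assert socket.ntohl(net_addr) == ADDRESS
```

In Python, the `struct` `!` prefix or `int.to_bytes(..., "big")` is usually more convenient because it produces the wire bytes directly. The htonl-style functions matter most in C, where integers are copied into buffers as raw memory.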

Software Development Best Practices

For developers working on cross-platform applications or systems that interact with diverse hardware, explicit handling of endianness is a non-negotiable best practice.

- Data Serialization Formats: When designing data formats for storage or transmission, specify the endianness. Text formats such as XML and JSON are endian-neutral because they encode numbers as text. Protocol Buffers, although binary, avoids ambiguity because its wire format defines the byte order (varints and little-endian fixed-width fields). Any custom binary format must explicitly define its byte order.

- Byte Swapping Functions: Implement or utilize robust byte-swapping functions when reading or writing binary data to or from systems of different endianness. Many programming languages and libraries offer utilities for this (e.g., ByteBuffer in Java, the struct module in Python, std::byteswap in C++23).

- Configuration and Compilation Flags: Be aware of compiler and architecture-specific flags that might affect endianness, especially in embedded development where code might be compiled for different target processors.

- Testing: Thoroughly test applications on both big-endian and little-endian systems (or using emulators) to catch endian-related bugs early in the development cycle. This is particularly vital for autonomous systems where incorrect data interpretation can have severe physical consequences.
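To illustrate the practices above, the sketch below writes a 32-bit byte swap with shifts and masks, the same operation `std::byteswap` performs in C++23. It also exercises both byte orders through an explicit round-trip check, which runs even on a little-endian machine. `bswap32` and `check_round_trip` are hypothetical helper names, and this is a sketch rather than a substitute for testing on real big-endian hardware or an emulator.

```python
import struct


def bswap32(value: int) -> int:
    """Reverse the four bytes of a 32-bit unsigned integer using shifts and masks."""
    if not 0 <= value <= 0xFFFFFFFF:
        raise ValueError("value must fit in 32 unsigned bits")
    return (
        ((value & 0x000000FF) << 24)
        | ((value & 0x0000FF00) << 8)
        | ((value & 0x00FF0000) >> 8)
        | ((value & 0xFF000000) >> 24)
    )


def check_round_trip(value: int) -> None:
    """Serialize and parse a value in both byte orders and compare with bswap32."""
    big = struct.pack(">I", value)
    little = struct.pack("<I", value)
    # The two encodings are byte-reversed copies of each other...
    assert big == little[::-1]
    # ...so reading one with the other's order is exactly a byte swap.
    assert struct.unpack("<I", big)[0] == bswap32(value)
    # Each order round-trips when written and read consistently.
    assert struct.unpack(">I", big)[0] == value
    assert struct.unpack("<I", little)[0] == value


for sample in (0, 1, 0x12345678, 0x80000000, 0xFFFFFFFF):
    check_round_trip(sample)
print("all byte-order checks passed")
```

Edge values such as 0x80000000 and 0xFFFFFFFF are included because they exercise the highest byte, where shift-and-mask mistakes tend to show up.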

Hardware Design Considerations

Hardware architects also face decisions regarding endianness. While the choice often defaults to what’s prevalent in a specific market (e.g., little-endian for consumer devices), some processors are “bi-endian,” meaning their byte order can be configured via a pin setting or a control register. This flexibility allows a single chip design to be used in various environments, simplifying development for system integrators. However, it also introduces complexity in ensuring that all components within a system are configured to operate with a consistent endianness, or that interfaces between different endian components handle conversions appropriately at the hardware or firmware level. Proper hardware design must factor in the intended interoperability and communication needs, ensuring that memory controllers, peripherals, and communication interfaces align with the chosen endian convention.

Case Studies and Emerging Trends

The impact of endianness continues to be relevant, particularly as technology becomes more interconnected and reliant on diverse hardware. Its influence is apparent in complex data processing, cross-platform software, and the quest for universal data standards.

Endianness in Remote Sensing Data

Remote sensing data, collected by satellites, aerial platforms (including drones), and ground sensors, often involves large binary datasets representing imagery, spectral information, and geospatial coordinates. These datasets are frequently processed and analyzed by diverse software tools and hardware platforms. For instance, a drone equipped with a multispectral sensor might capture data, which is then processed by a powerful big-endian server for advanced analysis and finally visualized on a little-endian client workstation. If the raw binary sensor data or the intermediate processed files do not explicitly specify their endianness, or if conversion routines are not correctly applied, the resulting images could be distorted, spectral signatures misinterpreted, and geographical locations miscalculated. This directly impacts critical applications like precision agriculture, environmental monitoring, urban planning, and disaster response, where data accuracy is paramount. Standardized data formats that explicitly define byte order (or use endian-neutral representations) are essential for reliable remote sensing data pipelines.
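TIFF, the basis of the GeoTIFF format widely used for remote sensing rasters, is one example of a format that declares its own byte order. A classic TIFF file begins with `II` (little-endian) or `MM` (big-endian), followed by the number 42 and a 32-bit offset to the first image file directory, all written in the declared order. The sketch below reads only that 8-byte header. It does not handle BigTIFF or parse any image data, and the two headers it builds are synthetic.

```python
import struct

BYTE_ORDER_MARKS = {b"II": "<", b"MM": ">"}  # Intel = little, Motorola = big
TIFF_MAGIC = 42


def read_tiff_header(header: bytes) -> tuple[str, int]:
    """Return (byte order, first IFD offset) from a classic TIFF header."""
    if len(header) < 8:
        raise ValueError("a TIFF header is 8 bytes long")
    prefix = BYTE_ORDER_MARKS.get(bytes(header[:2]))
    if prefix is None:
        raise ValueError("not a TIFF file: unknown byte-order mark")
    # Every later multi-byte field uses the byte order the file just declared.
    magic, ifd_offset = struct.unpack_from(prefix + "HI", header, 2)
    if magic != TIFF_MAGIC:
        raise ValueError(f"bad TIFF magic number {magic}")
    order = "little" if prefix == "<" else "big"
    return order, ifd_offset


# Two synthetic headers describing the same layout in opposite byte orders.
little_header = b"II" + struct.pack("<HI", 42, 8)
big_header = b"MM" + struct.pack(">HI", 42, 8)
print(read_tiff_header(little_header))  # prints "('little', 8)"
print(read_tiff_header(big_header))     # prints "('big', 8)"
```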

Cross-Platform Development Challenges

Modern software development often targets multiple platforms: desktop (Windows, macOS, Linux), mobile (Android, iOS), and web. Most of these now run on little-endian x86-64 or ARM processors. Even so, applications that handle user data, file storage, or network communication still encounter big-endian data in network protocols, file formats, and older or embedded systems. For example, a mobile app on an ARM-based device might need to exchange complex data structures with a backend server running on x86, or with an older big-endian embedded system. Developers must either ensure all data is converted to a canonical byte order, such as network byte order, or meticulously implement architecture-specific conversion logic. Tools and frameworks that abstract this complexity, offering higher-level data serialization, are increasingly valuable in mitigating these challenges, allowing developers to focus on features rather than byte order minutiae.

The Future of Endianness in Heterogeneous Computing

As computing continues its trajectory towards increasingly heterogeneous architectures – combining CPUs, GPUs, FPGAs, and specialized AI accelerators – the importance of managing endianness will only grow. These different processing units might operate with different native byte orders, or they might be highly optimized for specific data access patterns that implicitly favor one endianness. Furthermore, with the rise of edge computing, where processing occurs closer to the data source (e.g., on a drone or an IoT gateway), the need for efficient and correct data exchange between diverse components becomes even more critical. Future innovations in programming languages, runtime environments, and hardware-software co-design will likely feature more robust, automatic, or transparent mechanisms for handling endianness, allowing developers to build complex, high-performance systems without being constantly bogged down by byte-order considerations. However, the fundamental concept will remain a critical piece of the puzzle for system architects and low-level engineers.

In conclusion, endianness is far more than a technicality; it’s a foundational concept in the digital realm that underpins the reliability and interoperability of modern technology. From the lowest levels of hardware design to the highest echelons of AI and autonomous systems, understanding and correctly managing byte order is indispensable for innovation that is both robust and seamless. As we push the boundaries of computing with ever more interconnected and diverse systems, the silent yet significant role of endianness will continue to shape the architecture and success of our technological future.