In consumer electronics, the term “dual-core processor” has become a ubiquitous descriptor, appearing in the specifications of everything from smartphones and laptops to drone flight controllers and other embedded systems. Understanding what a dual-core processor is, and what that implies, helps in judging the capabilities and limitations of modern technology, particularly in performance-intensive applications like aerial imaging and autonomous flight. A dual-core processor places two processing cores on a single chip, which changes how tasks are scheduled and executed compared with its single-core predecessors.

The Genesis of Multi-Core Processing

The journey towards multi-core processors is a story of overcoming fundamental physical limitations. For decades, the primary method of increasing processor performance was to increase clock speed – how many cycles a processor could execute per second. This relentless pursuit led to impressive gains, but it also encountered significant hurdles. As clock speeds climbed, so did power consumption and heat generation. Processors began to run so hot that cooling became a major engineering challenge, and the very physics of signal propagation at higher frequencies introduced new complexities. These thermal and electrical barriers meant that simply making a single core faster was becoming unsustainable and prohibitively expensive.

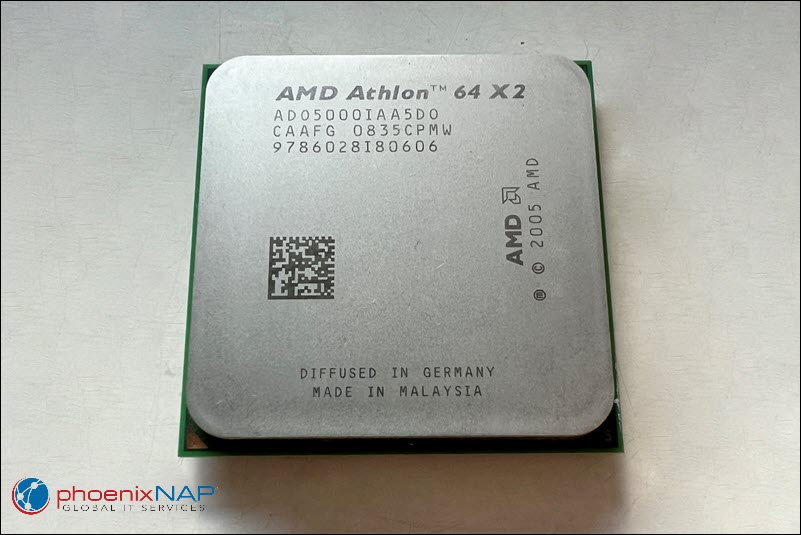

The solution, brought to mainstream desktop processors by Intel and AMD in the mid-2000s, was not to make a single core faster, but to put multiple cores on a single chip. The concept is analogous to adding more lanes to a highway rather than raising the speed limit on the existing ones. This allowed for parallel processing, where multiple tasks could be handled simultaneously, improving overall throughput and responsiveness even as individual core speeds stopped rising as quickly as they once had.

Single-Core vs. Dual-Core: A Fundamental Shift

A single-core processor, as the name suggests, contains a single processing core that handles all computational tasks. It executes one stream of instructions at a time; the operating system creates the appearance of multitasking by switching rapidly between programs. While efficient for basic operations, this can become a bottleneck when multiple demanding applications are running concurrently or when a single complex task requires significant processing power. Imagine a single chef trying to prepare a multi-course meal for a large party: they can only do one thing at a time, leading to delays.

A dual-core processor, conversely, integrates two independent processing cores onto a single integrated circuit (chip). Each of these cores has its own execution unit and can process instructions independently. This means that the processor can execute two tasks simultaneously, or it can split a single complex task that has been designed for parallel execution into two parts and process them concurrently. Using the chef analogy, a dual-core processor is like having two chefs working in the same kitchen, each capable of preparing different dishes or working on different components of the same dish, significantly increasing the overall output and efficiency.

How Dual-Core Processors Enhance Performance

The benefit of having two cores is not simply a doubling of speed for every task. The actual performance improvement depends heavily on the nature of the software and how it is designed to utilize the available processing power.

Parallel Processing and Task Management

The primary advantage of a dual-core processor lies in its ability to perform parallel processing. Operating systems and applications designed to take advantage of multi-core architectures can distribute different threads of execution – the individual sequences of instructions that make up a program – across the available cores. This leads to:

- Improved Multitasking: Running multiple applications simultaneously becomes much smoother. For instance, while a user edits a high-resolution video, the operating system's scheduler can run the editor's threads on one core and a large file download and a web browser on the other. On single-core processors, the same workload had to share one core through rapid task switching, which often produced noticeable delays and unresponsiveness.

- Faster Execution of Parallelizable Tasks: Complex computations, such as video encoding, 3D rendering, scientific simulations, and even sophisticated image processing algorithms used in drone cameras, can often be broken down into smaller, independent tasks. A dual-core processor can then assign these sub-tasks to each core, completing the overall operation much faster than a single core could.

- Enhanced Responsiveness: Even if an application is not explicitly designed for heavy parallelization, the operating system can often delegate background processes or less critical threads to one core, leaving the other core free to handle the primary, user-facing application. This prevents the system from becoming bogged down by background tasks and maintains a fluid user experience.
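The idea of splitting one parallelizable task across two cores can be sketched in Python. This is a minimal illustration rather than a tuned implementation: the function names (`split_range`, `sum_squares`, `parallel_sum_squares`) are hypothetical, and the workload (a sum of squares) stands in for any computation whose parts are independent. Worker processes are used instead of threads because, in standard CPython builds, the global interpreter lock keeps CPU-bound threads from running Python code on two cores at once.

```python
from concurrent.futures import ProcessPoolExecutor


def split_range(n, parts):
    """Split range(n) into `parts` contiguous (start, stop) chunks."""
    step, extra = divmod(n, parts)
    chunks, start = [], 0
    for i in range(parts):
        # The first `extra` chunks take one additional item each.
        stop = start + step + (1 if i < extra else 0)
        chunks.append((start, stop))
        start = stop
    return chunks


def sum_squares(chunk):
    """CPU-bound sub-task: sum of i*i over one (start, stop) chunk."""
    start, stop = chunk
    return sum(i * i for i in range(start, stop))


def parallel_sum_squares(n, workers=2):
    """Run one sub-task per worker process and combine the partial sums."""
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(sum_squares, split_range(n, workers)))
```

To try it, call `parallel_sum_squares(1_000_000)` from under an `if __name__ == "__main__":` guard, which process-based executors need on platforms that start workers by spawning a fresh interpreter. Whether the two workers actually land on separate cores is up to the operating system's scheduler.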

Cache Memory and Interconnects

Within a dual-core processor, each core typically has its own small, dedicated L1 cache, while the larger L2 cache is either private to each core or shared between both, depending on the design. Cache is a small amount of very fast memory located directly on the processor chip. It stores frequently accessed data and instructions, allowing the core to retrieve them much faster than accessing main system RAM. Private caches let each core work without competing for the same cache capacity, but the hardware must then keep the copies consistent (cache coherence) when both cores touch the same data.

Furthermore, dual-core processors employ sophisticated interconnects, such as bus interfaces, to facilitate communication between the two cores and between the cores and the rest of the system (e.g., RAM, graphics processing unit). These interconnects are critical for efficiently sharing data and coordinating tasks when necessary. The design of these interconnects plays a vital role in how effectively the two cores can work together.

Implications for Specific Applications

The impact of a dual-core processor is particularly pronounced in areas requiring significant computational power and responsiveness, making them increasingly relevant in various technology sectors:

- Smartphones and Tablets: Dual-core processors enable smoother app switching, faster web browsing, more fluid gaming experiences, and the ability to run more demanding applications like video editing suites and augmented reality experiences.

- Laptops and Desktops: For general productivity, multitasking, and content creation, dual-core processors offer a noticeable improvement over older single-core systems. This is especially true for tasks involving moderate video editing, photo manipulation, and software development.

- Embedded Systems and IoT Devices: In more specialized applications, such as the flight controllers in advanced drones, dual-core processors can manage complex tasks like sensor fusion, navigation algorithms, real-time image processing for obstacle avoidance, and communication protocols concurrently. This allows for more sophisticated autonomous capabilities and higher levels of control.

Limitations and the Path Forward

Despite their advantages, dual-core processors are not without limitations, and the technology has continued to evolve.

Amdahl’s Law and Software Optimization

A key consideration when discussing multi-core performance is Amdahl’s Law. This principle states that the overall speedup achieved by parallelizing a task is limited by the portion of the task that cannot be parallelized (the serial portion). Two cores can at best halve the run time, and only when the entire program runs in parallel. If, for example, half of a program's run time is inherently serial, two cores can speed it up by at most about 1.33 times. This is why it matters that software developers design applications to be as parallelizable as possible.
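Amdahl's Law is usually written as speedup = 1 / ((1 - p) + p / n), where p is the fraction of run time that can be parallelized and n is the number of cores. The short sketch below evaluates that formula for a few values; the function name `amdahl_speedup` and the sample fractions are chosen purely for illustration, and the result is a theoretical upper bound that ignores scheduling and communication overhead.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Upper bound on speedup when `parallel_fraction` of the run time
    can be spread evenly across `cores`."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


# Compare dual-core against higher core counts for several workloads.
for fraction in (0.50, 0.90, 1.00):
    row = ", ".join(
        f"{cores} cores: {amdahl_speedup(fraction, cores):.2f}x"
        for cores in (2, 4, 8)
    )
    print(f"{fraction:.0%} parallel -> {row}")
```

Running the loop shows how quickly the serial portion starts to dominate: adding cores helps a mostly parallel workload far more than one that is half serial.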

The Rise of More Cores

As the demand for computational power continues to grow, the industry has moved beyond dual-core processors to quad-core, hexa-core, octa-core, and even processors with dozens of cores. For certain specialized workloads, such as high-performance computing, scientific research, and complex AI model training, these higher core counts offer substantial advantages. However, for many consumer-level applications and less demanding tasks, a dual-core processor can still provide an excellent balance of performance, power efficiency, and cost.

Power Consumption and Heat

While multi-core processors were partly a solution to the heat and power issues of high clock speeds, a second core is not free: at the same clock speed and voltage, two active cores draw more power and generate more heat than one. Efficient power management techniques, such as lowering the clock speed or powering down an idle core, and adequate cooling are essential to manage these factors, especially in portable devices and compact embedded systems.
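The trade-off between clock speed, core count, and power can be made concrete with the standard approximation for dynamic (switching) power in CMOS logic: power is proportional to capacitance times voltage squared times frequency. The sketch below works in normalized units. The 20% reduction in both voltage and frequency is an illustrative assumption, not a figure from any particular chip, and static leakage power is ignored.

```python
def dynamic_power(capacitance, voltage, frequency, activity=1.0):
    """Approximate CMOS switching power: activity * C * V^2 * f."""
    return activity * capacitance * voltage ** 2 * frequency


# Baseline: one core at normalized voltage 1.0 and frequency 1.0.
single = dynamic_power(capacitance=1.0, voltage=1.0, frequency=1.0)

# Hypothetical dual-core part: each core at 80% voltage and 80% frequency.
dual = 2 * dynamic_power(capacitance=1.0, voltage=0.8, frequency=0.8)

# Best case for fully parallel work: throughput scales with cores * frequency.
throughput_ratio = (2 * 0.8) / 1.0

print(f"power ratio (dual / single): {dual / single:.3f}")
print(f"best-case throughput ratio:  {throughput_ratio:.1f}")
```

Under these assumptions the two slower cores draw roughly the same dynamic power as the single fast core while offering up to 1.6 times the throughput on fully parallel work, which is the basic reason lowering voltage and frequency per core can pay off.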

Conclusion

The dual-core processor marked a significant turning point in the evolution of computing. By enabling parallel processing, it fundamentally improved multitasking capabilities, accelerated the execution of complex tasks, and enhanced overall system responsiveness. While the trend in high-end computing has moved towards even higher core counts, dual-core processors remain a powerful and cost-effective solution for a vast range of applications, from everyday consumer electronics to sophisticated embedded systems. Understanding the principles behind dual-core architecture provides valuable insight into the performance capabilities of the devices we use daily and the ongoing advancements in computational technology.