In the rapidly evolving landscape of software development, where agility, scalability, and efficiency are paramount, containerization has emerged as a transformative paradigm. At the heart of this revolution, particularly within the Docker ecosystem, lies Docker Hub. More than just a repository, Docker Hub is a central cloud-based registry service that serves as the world’s largest library and community for container images. It acts as the indispensable connective tissue, facilitating the discovery, storage, sharing, and deployment of containerized applications across diverse environments. For developers, DevOps engineers, and organizations striving for continuous innovation and streamlined operations, understanding Docker Hub is not merely beneficial; it is essential to harnessing the full power of modern software delivery.

This deep dive explores Docker Hub’s multifaceted role, its core functionalities, and its profound impact on fostering technological advancement and collaborative development. We will unpack how this innovative platform enables everything from rapid application prototyping to large-scale enterprise deployments, firmly positioning it as a cornerstone of contemporary tech and innovation.

The Foundation of Modern Software Delivery

To truly grasp the significance of Docker Hub, one must first appreciate the fundamental concepts it supports: Docker and containerization. These technologies have revolutionized how software is built, shipped, and run, addressing long-standing challenges of environment consistency and dependency management.

Understanding Docker and Containers

Docker, at its core, is a platform designed to make it easier to create, deploy, and run applications using containers. A container packages everything needed to run an application: code, runtime, system tools, system libraries, and settings. Unlike virtual machines, containers share the host system’s kernel, making them significantly lighter-weight, faster to start, and more efficient in resource utilization. Each container still runs isolated from the host and from other containers, which provides a consistent environment from development to testing to production, effectively solving the “it works on my machine” problem.

The magic of Docker lies in its ability to package applications and their dependencies into a standardized unit for software development. These units, known as Docker images, are immutable templates that define exactly what goes into a container. Once an image is built, it can be run on any system with Docker installed and a matching operating system and CPU architecture, ensuring consistent behavior regardless of the underlying infrastructure.

The Role of Registries in the Container Ecosystem

While Docker images provide the “blueprint” for containerized applications, a mechanism is needed to store, organize, and distribute these images. This is where container registries come into play. A container registry is a centralized service for hosting and managing Docker images. It plays a role for built, ready-to-run images similar to the one a hosted Git service plays for source code.

Docker Hub stands out as the default and most widely used public registry. It provides the crucial infrastructure for developers and teams to:

- Store Images: Safely archive their application images.

- Share Images: Collaborate by easily distributing images across teams or to the public.

- Version Control: Manage different versions of images, ensuring reproducibility and facilitating rollbacks.

- Discover Images: Find pre-built images for popular software, operating systems, and tools, accelerating development.

Without a robust registry like Docker Hub, the benefits of containerization—speed, consistency, portability—would be severely hampered, making application sharing and deployment a cumbersome and error-prone process.
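To make "default registry" concrete, the sketch below expands a short image name into a fully qualified reference. It assumes the usual Docker conventions: a missing registry means `docker.io`, a single-component name on Docker Hub lives under `library/`, and a missing tag means `latest`. The function name `normalize_image_ref` is hypothetical and the parsing is simplified, so treat it as an illustration rather than a complete reference parser.

```python
def normalize_image_ref(ref: str) -> str:
    """Expand a short image reference such as 'ubuntu' to a fully qualified one."""
    # Split off an optional digest (e.g. @sha256:...) before looking for a tag.
    name, digest = ref, ""
    if "@" in name:
        name, digest = name.split("@", 1)

    # A colon counts as a tag separator only in the last path segment;
    # earlier colons belong to a registry port such as localhost:5000.
    tag = ""
    if ":" in name.rsplit("/", 1)[-1]:
        name, tag = name.rsplit(":", 1)

    # The first component is a registry host only if it looks like one.
    first, _, rest = name.partition("/")
    if rest and ("." in first or ":" in first or first == "localhost"):
        registry, path = first, rest
    else:
        registry, path = "docker.io", name

    # Official images on Docker Hub live in the 'library' namespace.
    if registry == "docker.io" and "/" not in path:
        path = "library/" + path

    suffix = (":" + tag if tag else "") + ("@" + digest if digest else "")
    return f"{registry}/{path}{suffix or ':latest'}"


if __name__ == "__main__":
    for short in ("ubuntu", "nginx:1.25", "exampleorg/api:2.0.0", "localhost:5000/app"):
        print(short, "->", normalize_image_ref(short))
```

Under these assumptions, `ubuntu` resolves to `docker.io/library/ubuntu:latest`, while a name that starts with a host-like component is left on that registry.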

Diving Deep into Docker Hub’s Core Functionalities

Docker Hub is not just a storage locker; it’s a sophisticated platform equipped with a suite of features designed to enhance developer workflows and foster a vibrant ecosystem of containerized software. These functionalities collectively empower users to manage, automate, and secure their container images effectively.

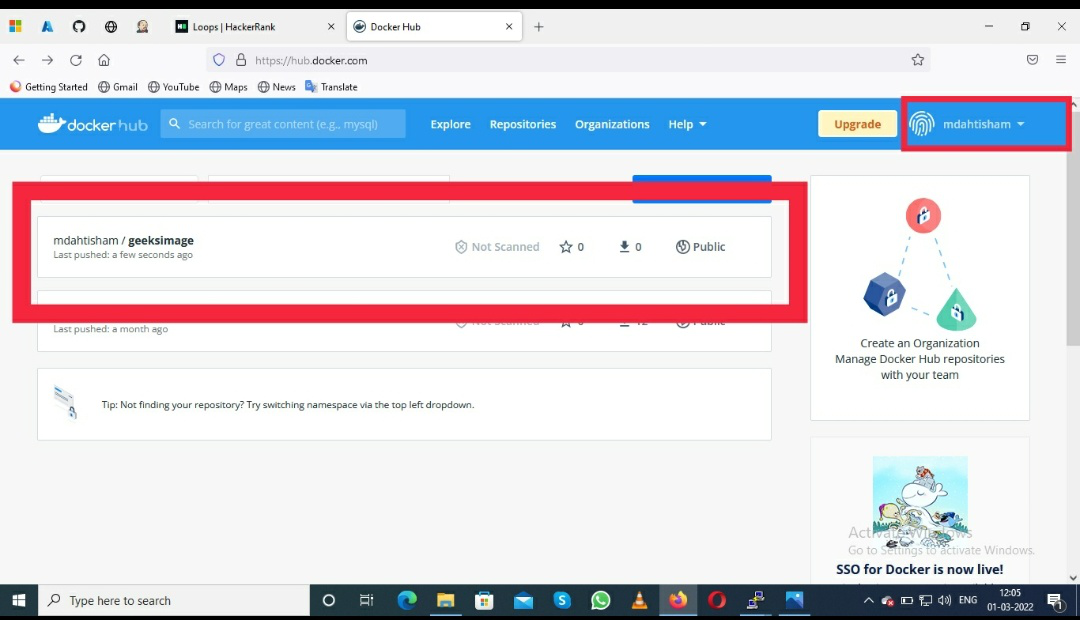

Public and Private Repositories: Sharing and Securing Images

At its heart, Docker Hub offers two primary types of repositories:

- Public Repositories: These are accessible to anyone and are ideal for sharing open-source projects, community-contributed tools, or images intended for broad consumption. The vast collection of official images for popular software (like Ubuntu, Nginx, Redis) resides in public repositories, making it incredibly easy for developers to pull and utilize trusted base images. This fosters open collaboration and accelerates innovation by providing widely available, standardized components.

- Private Repositories: For proprietary applications, sensitive data, or internal enterprise tools, private repositories provide the necessary security and access control. Users can define who has permission to push or pull images, ensuring that intellectual property remains protected. This feature is critical for organizations developing innovative solutions that require confidentiality before public release.

The flexibility to choose between public and private repositories caters to a wide spectrum of use cases, from individual hobbyists contributing to open source to large enterprises managing complex, confidential software portfolios.

Automated Builds and Webhooks: Streamlining CI/CD

One of Docker Hub’s most powerful features is its integration with source code repositories like GitHub and Bitbucket. This enables Automated Builds, where Docker Hub can automatically build Docker images from a Dockerfile whenever changes are pushed to a specified branch in the connected source code repository. This dramatically simplifies the continuous integration (CI) process:

- Developer pushes code changes to Git.

- Docker Hub detects the change via a webhook.

- Docker Hub fetches the code and builds a new Docker image.

- The newly built image is automatically pushed to the Docker Hub repository.

This automation ensures that images are always up-to-date with the latest code, reducing manual effort and potential for human error. Furthermore, Webhooks can be configured to trigger actions in other services (e.g., notifying a deployment system or a chat application) whenever an image is successfully built or pushed. This seamless integration with CI/CD pipelines is a cornerstone of agile development and rapid innovation, allowing teams to deliver new features and fixes faster and more reliably.
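On the receiving side, a webhook consumer mostly needs to work out which image was pushed. The sketch below assumes a JSON payload with a `repository.repo_name` field and a `push_data.tag` field, which matches the shape of the example payload in Docker Hub's webhook documentation; verify it against a real delivery before relying on it. The repository name, the callback URL, and the rule of skipping `latest` are all hypothetical.

```python
import json

# Hypothetical payload, trimmed to the fields this sketch reads.
SAMPLE_PAYLOAD = """
{
  "callback_url": "https://example.invalid/hook/callback",
  "push_data": {"pushed_at": 1417566161, "pusher": "examplebot", "tag": "1.4.2"},
  "repository": {"repo_name": "exampleorg/web", "namespace": "exampleorg", "name": "web"}
}
"""


def image_from_webhook(payload: dict) -> str:
    """Build the 'repo:tag' reference for the image that was just pushed."""
    repo = payload["repository"]["repo_name"]
    tag = payload["push_data"]["tag"]
    return f"{repo}:{tag}"


def should_deploy(payload: dict, watched_repo: str) -> bool:
    """Illustrative policy: deploy pushes to one repository, ignoring 'latest'."""
    repo = payload.get("repository", {}).get("repo_name")
    tag = payload.get("push_data", {}).get("tag")
    return repo == watched_repo and tag not in (None, "latest")


if __name__ == "__main__":
    event = json.loads(SAMPLE_PAYLOAD)
    if should_deploy(event, "exampleorg/web"):
        print("would deploy", image_from_webhook(event))
```

In a real pipeline these functions would sit behind an HTTP endpoint, and the deploy step would call whatever deployment system the team already uses.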

Official Images and Verified Publishers: Trust and Reliability

The sheer volume of images on Docker Hub necessitates mechanisms to ensure trust and reliability. Docker Hub addresses this through:

- Official Images: These are a curated set of Docker repositories managed by Docker, Inc. and trusted upstream vendors. They are extensively documented, regularly updated, and designed for best practices. Official Images provide a reliable and secure foundation for building applications, acting as a “gold standard” for base images (e.g., `python`, `node`, `mysql`). Their widespread use accelerates development by allowing teams to start with robust, well-maintained components.

- Verified Publishers: This program extends trust beyond official images to commercial software vendors. Verified Publishers, identified by a distinctive badge, are partners that provide high-quality, secure, and supported images for their commercial products. This gives users confidence in the provenance and integrity of third-party software images, which is crucial when integrating complex enterprise solutions or specialized innovative technologies into containerized workflows.

These mechanisms are vital for maintaining the health and security of the Docker ecosystem, empowering developers to build upon a foundation of trusted components without fear of compromise.

Docker Hub as an Enabler of Tech & Innovation

Docker Hub’s utility extends far beyond mere storage; it is a critical enabler of innovation across a multitude of technological domains. By abstracting away environmental inconsistencies and streamlining deployment, it allows developers and organizations to focus their energies on building cutting-edge solutions.

Accelerating Development of AI/ML Applications

Artificial Intelligence and Machine Learning projects often involve complex dependencies, specific library versions (e.g., TensorFlow, PyTorch), GPU drivers, and custom environments. Containerization, facilitated by Docker Hub, is a game-changer for AI/ML development:

- Reproducible Environments: AI/ML models are sensitive to their environment. Docker images ensure that the exact training and inference environment can be consistently reproduced across different machines, preventing “works on my machine” issues for data scientists.

- Scalable Deployment: Training computationally intensive models often requires distributed computing. Docker Hub enables easy distribution of identical training environments to clusters of machines, whether on-premises or in the cloud. When a model is ready for deployment, its containerized inference service can be easily scaled up or down based on demand.

- Collaboration: Data science teams can share complex AI/ML development environments and trained models as Docker images, drastically simplifying collaboration and onboarding of new team members. This allows rapid iteration and experimentation, key drivers of innovation in AI.

By providing a robust platform for sharing and managing these complex environments, Docker Hub significantly accelerates the lifecycle of AI/ML innovation, from research and development to production deployment.

Supporting Distributed Systems and Microservices Architectures

The shift towards microservices architecture, where applications are broken down into small, independently deployable services, has been a major trend in modern software development. Docker Hub is indispensable in this paradigm:

- Independent Deployment: Each microservice can be packaged into its own Docker image and managed independently on Docker Hub. This allows different teams to develop and deploy their services at their own pace, using their preferred technologies, without impacting other parts of the system.

- Service Discovery and Orchestration: While Docker Hub stores the images, orchestration tools like Kubernetes (which frequently pull images from Docker Hub) manage the deployment, scaling, and networking of these individual microservice containers. Docker Hub provides the centralized source for all these component images.

- Version Management: Managing dozens or hundreds of microservices requires precise version control. Docker Hub’s tagging and versioning capabilities ensure that specific versions of each service can be deployed and rolled back if necessary, providing stability and flexibility in complex distributed systems.

This modular approach, heavily reliant on Docker Hub for image management, enables greater agility, resilience, and scalability—hallmarks of innovative, cloud-native applications.
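The version-management point can be illustrated with a small in-memory record of which image tag each service is running. This is a sketch only: the `ReleaseHistory` class and the service and image names are hypothetical, and a real system would persist this state and let an orchestrator perform the rollout.

```python
from dataclasses import dataclass, field


@dataclass
class ReleaseHistory:
    """Tracks deployed image references per service, oldest first."""
    history: dict[str, list[str]] = field(default_factory=dict)

    def deploy(self, service: str, image: str) -> None:
        # Record the new image as the current version of the service.
        self.history.setdefault(service, []).append(image)

    def current(self, service: str) -> str:
        return self.history[service][-1]

    def rollback(self, service: str) -> str:
        # Drop the current version and return the previous one.
        versions = self.history[service]
        if len(versions) < 2:
            raise ValueError(f"no earlier version recorded for {service}")
        versions.pop()
        return versions[-1]


if __name__ == "__main__":
    releases = ReleaseHistory()
    releases.deploy("orders", "exampleorg/orders:1.3.0")
    releases.deploy("orders", "exampleorg/orders:1.4.0")
    print("rolled back to", releases.rollback("orders"))
```

Rolling back works here only because each deployment names an explicit version tag, so the previous image can still be pulled by that name.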

Facilitating Open Source Collaboration and Community Driven Innovation

Docker Hub acts as a massive public library, hosting thousands of open-source projects and community-contributed images. This collaborative aspect is a powerful engine for innovation:

- Access to Pre-built Components: Developers can quickly pull and integrate pre-built images for databases, web servers, development tools, and more, avoiding the need to “reinvent the wheel.” This accelerates development cycles and allows teams to focus on their unique value proposition.

- Community Contributions: Open-source projects often use Docker Hub to distribute their software, making it incredibly easy for users to try out and contribute to new technologies. This low barrier to entry fosters a vibrant community of innovation, where new tools and approaches are constantly being shared and improved upon.

- Standardization: The widespread adoption of Docker images and Docker Hub promotes a level of standardization in how software is packaged and run. This standardization reduces friction, encourages interoperability, and enables a more cohesive ecosystem for technological advancement.

In essence, Docker Hub amplifies the network effect of open-source development, democratizing access to powerful tools and fostering a global community of innovators.

Best Practices and Advanced Usage

To maximize the benefits of Docker Hub and maintain robust, secure containerized workflows, adhering to best practices and leveraging advanced features is crucial.

Image Tagging and Versioning Strategies

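Effective image tagging is fundamental for managing different versions of applications and ensuring reproducibility. While a simple `latest` tag might be convenient, it can be moved to a different image at any time and says nothing about which build it points to, so it’s generally recommended to: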

- Use Semantic Versioning: Tag images with meaningful version numbers (e.g., `1.0.0`, `1.0.1-beta`, `2.0.0`) that reflect changes in the application.

- Include Git SHAs: For continuous deployment scenarios, appending a Git commit SHA to the tag can link an image directly to the exact source code that built it, aiding traceability.

- Distinguish Branches: Tags like `main`, `dev`, or `feature-x` can be used for images built from different development branches.

Consistent tagging ensures that teams can reliably deploy specific versions, troubleshoot issues more effectively, and roll back to previous stable states, all critical for maintaining stable and innovative applications.
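One way to combine the three conventions above is to derive every tag for a build from a few inputs. The helper below is a minimal sketch: the function name `build_tags`, the seven-character short SHA, and the rule of replacing characters outside letters, digits, `_`, `.`, and `-` in branch names are choices made for this example, not requirements of Docker Hub.

```python
import re

# Loose semantic-version check: MAJOR.MINOR.PATCH with an optional pre-release part.
SEMVER = re.compile(r"^\d+\.\d+\.\d+(-[0-9A-Za-z.-]+)?$")


def build_tags(repo: str, version: str, git_sha: str, branch: str) -> list[str]:
    """Return the image references to push for one build."""
    if not SEMVER.match(version):
        raise ValueError(f"not a semantic version: {version!r}")
    short_sha = git_sha[:7]
    # Branch names may contain '/', which is not allowed in a tag.
    safe_branch = re.sub(r"[^A-Za-z0-9_.-]", "-", branch)
    return [
        f"{repo}:{version}",              # human-readable release
        f"{repo}:{version}-{short_sha}",  # traceable to the exact commit
        f"{repo}:{safe_branch}",          # moving tag for the branch
    ]


if __name__ == "__main__":
    for ref in build_tags("exampleorg/web", "1.0.1-beta",
                          "9fceb02d0ae598e95dc970b74767f19372d61af8", "feature/x"):
        print(ref)
```

The first two tags are meant to be written once and never moved, while the branch tag is expected to move with each build.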

Security Considerations and Image Scanning

Security is paramount in any technological endeavor, and container images are no exception. Docker Hub offers features and encourages practices to enhance image security:

- Regular Updates: Keep base images and application dependencies up-to-date to patch known vulnerabilities.

- Minimize Image Size: Smaller images have a smaller attack surface. Use multi-stage builds and lean base images (e.g., `alpine`) to reduce unnecessary components.

- Avoid Running as Root: Configure containers to run with a non-root user whenever possible to limit potential damage from compromised processes.

- Image Scanning: Docker Hub offers integrated image scanning capabilities (originally through a Snyk integration, more recently through Docker Scout) that analyze images for known vulnerabilities. Regularly scanning images and addressing reported issues is a critical component of a secure container pipeline.

By prioritizing security throughout the image lifecycle, developers can build and deploy innovative solutions with greater confidence and resilience.
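Some of these practices can be checked automatically before an image is ever pushed. The sketch below is a deliberately simple heuristic that reads a Dockerfile and reports whether its final stage would run as root. It assumes a stage with no `USER` instruction runs as root, which is Docker's default unless the base image sets a different user, and it does not handle line continuations. The function name `runs_as_root` and the sample Dockerfile are hypothetical.

```python
SAMPLE_DOCKERFILE = """
# Build stage: install the application into a separate prefix.
FROM python:3.12-alpine AS build
WORKDIR /src
COPY . .
RUN pip install --prefix=/install .

# Final stage: copy only the installed files and drop root.
FROM python:3.12-alpine
COPY --from=build /install /usr/local
RUN adduser -D app
USER app
CMD ["python", "-m", "app"]
"""


def runs_as_root(dockerfile_text: str) -> bool:
    """Heuristic: True if the final stage never switches away from root."""
    user = "root"
    for raw in dockerfile_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split()
        instruction = parts[0].upper()
        if instruction == "FROM":
            user = "root"  # assumption: each new stage starts as root
        elif instruction == "USER" and len(parts) > 1:
            user = parts[1]
    # USER may be given as name, uid, or name:group.
    return user.split(":")[0] in ("root", "0")


if __name__ == "__main__":
    print("final stage runs as root:", runs_as_root(SAMPLE_DOCKERFILE))
```

A check like this complements vulnerability scanning rather than replacing it, since it looks only at how the image is configured and not at what is installed in it.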

Integrating with Orchestration Tools (Kubernetes, Docker Swarm)

While Docker Hub manages image storage and distribution, orchestration platforms like Kubernetes and Docker Swarm are responsible for deploying and managing containers in production environments. The seamless integration between them is a cornerstone of modern infrastructure:

- Declarative Deployments: Orchestrators pull images from Docker Hub (or other registries) based on declarative configuration files. This allows users to define the desired state of their applications, and the orchestrator ensures that state is maintained.

- Automated Scaling and Healing: When an application needs to scale, the orchestrator starts additional containers from the required image, pulling it from Docker Hub onto any node that does not already have it. If a container fails, the orchestrator restarts or replaces it from the same image.

- Environment Agnostic: By pulling container images from Docker Hub, orchestration tools can deploy applications consistently across any cloud provider or on-premises infrastructure that supports them, enhancing portability and reducing vendor lock-in.

This powerful combination of Docker Hub for image management and orchestration tools for runtime management forms the backbone of scalable, resilient, and highly innovative application infrastructures.
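To show what "declarative" means in practice, the sketch below builds a minimal Kubernetes Deployment as a Python dictionary and prints it as JSON, a format Kubernetes accepts alongside YAML. The field names follow the `apps/v1` Deployment API; the service name, image reference, and replica count are hypothetical, and a production manifest would normally add resource limits, probes, and pull credentials for private repositories.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int) -> dict:
    """Describe the desired state: `replicas` containers running `image`."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            # The selector must match the labels on the pod template.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    # The orchestrator pulls this image from the registry named in it.
                    "containers": [{"name": name, "image": image}],
                },
            },
        },
    }


if __name__ == "__main__":
    manifest = deployment_manifest("web", "docker.io/exampleorg/web:1.4.2", 3)
    print(json.dumps(manifest, indent=2))
```

Changing the image tag or the replica count in this description and applying it again is how a new version is rolled out or scaled, with the orchestrator working out the steps needed to reach the new state.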

The Future of Container Registries and Docker Hub’s Evolving Role

The landscape of cloud-native development is constantly evolving, and Docker Hub continues to adapt to meet emerging challenges and opportunities. Its future role will be shaped by ongoing trends in distributed computing and edge technologies.

Expanding Cloud Native Horizons

As cloud-native architectures become more sophisticated, Docker Hub will continue to be a vital component. It’s likely to see increased integration with broader cloud-native ecosystems, including serverless functions, service meshes, and more advanced security frameworks. Enhanced capabilities for multi-platform image support (e.g., ARM, WebAssembly) will also become more prominent, catering to a wider array of innovative hardware and software environments. The ongoing evolution of container standards and tooling will undoubtedly find a central distribution point in registries like Docker Hub, ensuring broad accessibility for cutting-edge technologies.

Edge Computing and IoT Deployments

The rise of edge computing and the Internet of Things (IoT) presents new frontiers for containerization and, by extension, Docker Hub. Deploying applications to resource-constrained devices at the edge requires small, efficient, and easily distributable software packages. Docker images are perfectly suited for this, and Docker Hub provides the centralized platform to manage the vast number of images required for diverse IoT devices and edge gateways.

- Remote Updates: Edge devices can pull updated images from Docker Hub, which makes it practical to keep them on the latest, most secure versions of applications.

- Consistent Deployments: Just as in the data center, containers ensure consistent application environments across a heterogeneous landscape of edge hardware.

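- Reduced Bandwidth: Optimized container images pulled from Docker Hub can minimize the bandwidth required for deploying and updating applications to remote locations, because images are transferred layer by layer and a device only downloads the layers it does not already have.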

As AI processing moves closer to data sources at the edge, Docker Hub will play an increasingly crucial role in managing and distributing the specialized AI/ML models and inference engines required for these innovative, distributed systems.

In conclusion, Docker Hub is far more than just a place to store Docker images. It is a dynamic, collaborative, and secure platform that underpins the entire containerization movement. By providing essential services for image discovery, management, automation, and security, it empowers developers and organizations to accelerate innovation, streamline operations, and build the next generation of resilient, scalable, and cutting-edge software solutions. Its strategic importance in the ecosystem of Tech & Innovation is undeniable, and its continued evolution will undoubtedly shape the future of software development for years to come.