While the title “what is dma mean” might initially conjure images of buzzing quadcopters or cutting-edge camera technology, the true meaning of DMA lies at the very heart of how modern computing systems operate. DMA, or Direct Memory Access, is a fundamental hardware feature that lets peripheral devices transfer data to and from the system’s main memory (RAM) without the CPU copying every byte. It’s not about the flashy gadgets we see flying or capturing stunning visuals, but rather the intricate, often invisible, engineering that makes them, and everything else, possible. This article explores what DMA is, why it matters, how it works, the main ways it is implemented, and how it affects system performance and efficiency.

The Bottleneck of Traditional Data Transfer

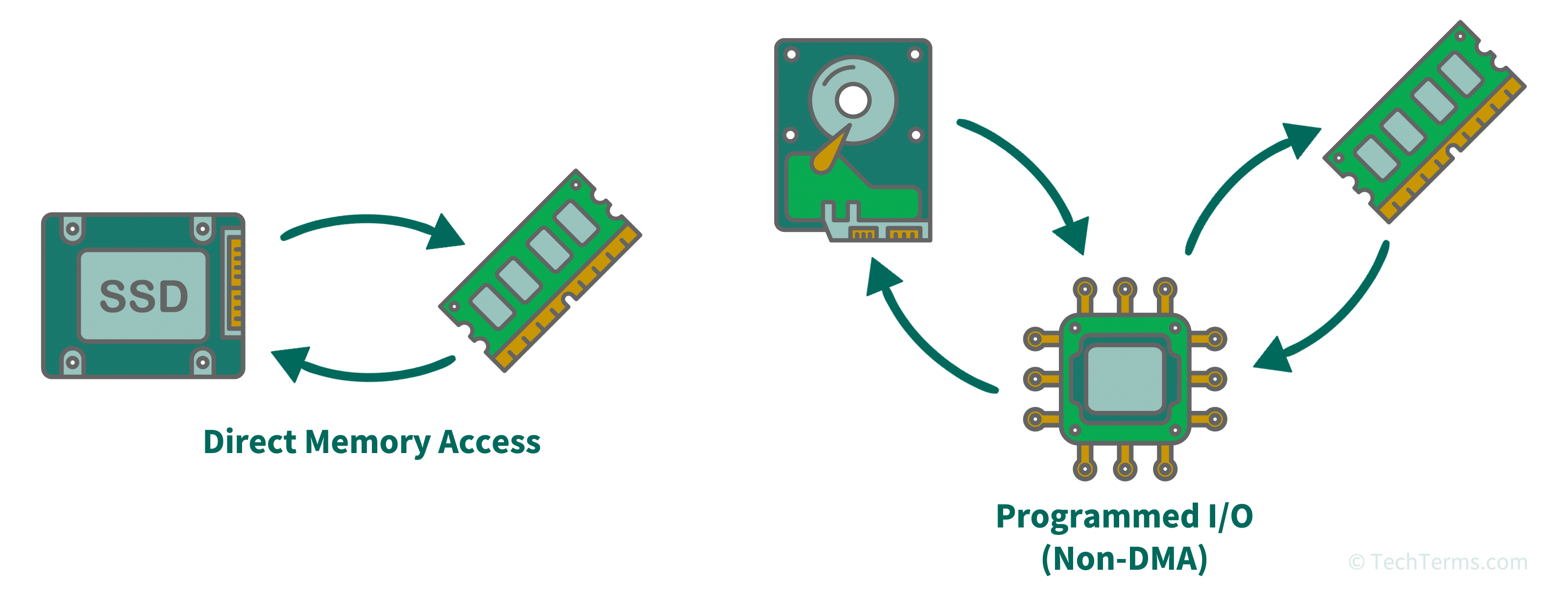

Before understanding the brilliance of DMA, it’s crucial to grasp the limitations of older, more rudimentary methods of data transfer. In a system without DMA, when a peripheral device (like a hard drive, network card, or even a graphics card) needs to send or receive data, it must engage the Central Processing Unit (CPU) in a direct, byte-by-byte dance. This process, often referred to as Programmed I/O (PIO), is inherently inefficient and creates a significant bottleneck.

Programmed I/O (PIO): The CPU as the Middleman

In a PIO system, the CPU acts as the sole conduit for all data movement between peripherals and memory. Imagine trying to move a large stack of books from one room to another, but you, the CPU, have to personally carry each individual book.

- Initiation: The CPU repeatedly reads (polls) the peripheral’s status register to find out whether it has data ready or can accept data.

- CPU Intervention: Until the device is ready, the CPU is stuck in this polling loop and cannot make progress on its other tasks.

- Data Transfer: The CPU then reads data from the peripheral’s data register, one word or byte at a time, into one of its own internal registers.

- Memory Write: The CPU then takes that data from its registers and writes it to the designated location in RAM.

- Repetition: This process is repeated for every single piece of data being transferred.
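The polling loop described in these steps can be sketched as a small Python simulation. Everything here is hypothetical: `Peripheral`, its `status_ready()` and `read_data_register()` methods, and the `delay` parameter stand in for real device registers and timing. The point is simply that the CPU itself executes every poll, every read, and every write.

```python
from collections import deque


class Peripheral:
    """Hypothetical device: each word becomes ready only after `delay` status reads."""

    def __init__(self, words, delay=2):
        self._words = deque(words)
        self._delay = delay
        self._wait = delay

    def status_ready(self):
        # Models reading the status register; the device needs `delay` extra polls per word.
        if self._wait > 0:
            self._wait -= 1
            return False
        return True

    def read_data_register(self):
        self._wait = self._delay  # the next word is not ready yet
        return self._words.popleft()


def pio_transfer(device, ram, dest, count):
    """Programmed I/O: the CPU moves every word itself. Returns CPU operations spent."""
    cpu_ops = 0
    for i in range(count):
        while True:
            cpu_ops += 1  # one status-register read (a poll)
            if device.status_ready():
                break
        word = device.read_data_register()  # peripheral -> CPU register
        cpu_ops += 1
        ram[dest + i] = word  # CPU register -> RAM
        cpu_ops += 1
    return cpu_ops


ram = [0] * 16
ops = pio_transfer(Peripheral([10, 20, 30, 40]), ram, dest=4, count=4)
print(ram[4:8], ops)  # [10, 20, 30, 40] 20
```

In this model every word costs the CPU `delay + 3` operations, of which `delay + 1` are status polls, so a slower device wastes more CPU time while the useful work stays the same.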

The Cost of Constant CPU Involvement

The implications of this constant CPU involvement are substantial:

- Wasted CPU Time: While the CPU is busy shuffling data, it cannot perform any other computational tasks. Even a routine transfer can stall the system, keeping the CPU from running applications, processing user input, or performing complex calculations.

- High Latency: Polling the device, reading each word, and writing it back all take CPU time, which adds significant latency. For applications requiring real-time data processing or high-speed I/O, this latency can be unacceptable.

- Scalability Issues: As the number of peripherals and the volume of data increase, the PIO model quickly becomes unsustainable, leading to severe performance degradation.

Interrupt-Driven I/O: A Slight Improvement

A slight evolution from pure PIO is Interrupt-Driven I/O. In this model, the CPU no longer polls; instead, the peripheral interrupts the CPU when it has data ready (typically a word or a small buffer’s worth) or can accept more. This eliminates the polling overhead, but the CPU is still responsible for moving the data itself, piece by piece, and each interrupt adds the cost of saving and restoring the CPU’s state. It’s like the person moving books no longer having to keep checking the doorway for deliveries: a doorbell rings when a box arrives, but they still have to carry every book in the box themselves.
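A minimal sketch of the interrupt-driven model, assuming a hypothetical `InterruptingDevice` that calls a registered handler whenever a word arrives and a toy `Cpu` whose `isr()` method plays the role of the interrupt service routine. The CPU can do other work between interrupts, but the handler still copies each word.

```python
class InterruptingDevice:
    """Hypothetical device that raises an interrupt each time a word arrives."""

    def __init__(self):
        self.data_register = None
        self.irq_handler = None  # set by the CPU/driver

    def word_arrives(self, word):
        self.data_register = word
        self.irq_handler(self)  # models asserting the interrupt line


class Cpu:
    def __init__(self, ram_size):
        self.ram = [0] * ram_size
        self.next_addr = 0
        self.isr_runs = 0
        self.app_work = 0

    def isr(self, device):
        # Interrupt service routine: the CPU still copies the word itself.
        self.ram[self.next_addr] = device.data_register
        self.next_addr += 1
        self.isr_runs += 1

    def run_application(self, steps):
        # Stand-in for useful work done between interrupts instead of polling.
        self.app_work += steps


cpu = Cpu(ram_size=8)
dev = InterruptingDevice()
dev.irq_handler = cpu.isr
for word in (7, 8, 9):
    cpu.run_application(100)
    dev.word_arrives(word)
print(cpu.ram[:3], cpu.isr_runs, cpu.app_work)  # [7, 8, 9] 3 300
```

The CPU no longer spins on a status register, but `isr_runs` still grows by one for every word transferred: the handler, not the hardware, moves the data.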

The DMA Advantage: Offloading the CPU

Direct Memory Access (DMA) frees the CPU from the tedious and time-consuming task of managing data transfers. Instead of the CPU acting as the intermediary, DMA introduces a dedicated hardware component, the DMA Controller (DMAC), which takes over the responsibility of moving data directly between peripherals and memory.

Introducing the DMA Controller (DMAC)

The DMAC is essentially a specialized hardware engine built for one purpose: efficient data movement. It operates independently of the CPU, allowing the CPU to focus on its core computational tasks while data is being transferred in the background. Think of the DMAC as a professional mover who can efficiently transport entire rooms of furniture without needing your constant supervision.

The DMA Transfer Process

The DMA transfer process dramatically streamlines data movement:

- CPU Initialization: The CPU initiates the DMA transfer by programming the DMAC. This involves telling the DMAC:

  - The source address of the data (either in memory or a peripheral’s buffer).

  - The destination address of the data (either in memory or a peripheral’s buffer).

  - The number of bytes or words to be transferred.

  - The direction of the transfer (read from peripheral to memory, or write from memory to peripheral).

- DMAC Takes Over: Once programmed, the DMAC requests control of the system’s bus (the communication highway between components) and, when the request is granted, becomes the bus master. This means it can initiate and control data transfers without needing the CPU’s direct involvement for each step.

- Direct Data Movement: The DMAC then reads data from the source and writes it to the destination, so the data never passes through the CPU. Meanwhile the CPU is free to execute other instructions, stalling only if it needs the bus at a moment when the DMAC is using it, which makes the system far more responsive and efficient.

- Completion Notification: Upon completing the entire transfer, the DMAC signals the CPU, usually via an interrupt. This tells the CPU that the data is ready or the operation is complete, and the CPU can then resume its interaction with the peripheral or continue with its other tasks.
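The four steps above can be modeled as a toy DMA controller. This is a sketch under stated assumptions, not any real DMAC’s register interface: `DmaChannel`, `DmaController`, `program()`, `run()` and the `on_complete` callback are hypothetical names, and the callback stands in for the completion interrupt.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, List, Optional


class Direction(Enum):
    PERIPHERAL_TO_MEMORY = "read"
    MEMORY_TO_PERIPHERAL = "write"


@dataclass
class DmaChannel:
    """The four values the CPU programs into the (hypothetical) DMAC."""
    source: int
    destination: int
    count: int
    direction: Direction


class DmaController:
    """Toy DMAC that copies words between a device buffer and RAM."""

    def __init__(self, ram: List[int], device_buffer: List[int],
                 on_complete: Callable[[], None]):
        self.ram = ram
        self.device_buffer = device_buffer
        self.on_complete = on_complete  # stands in for the completion interrupt
        self.channel: Optional[DmaChannel] = None

    def program(self, channel: DmaChannel) -> None:
        # Step 1 (CPU initialization): the CPU writes the DMAC's registers.
        self.channel = channel

    def run(self) -> None:
        ch = self.channel
        if ch is None:
            raise RuntimeError("DMAC started before it was programmed")
        if ch.direction is Direction.PERIPHERAL_TO_MEMORY:
            src, dst = self.device_buffer, self.ram
        else:
            src, dst = self.ram, self.device_buffer
        # Steps 2-3: as bus master, the DMAC moves every word with no CPU code involved.
        for i in range(ch.count):
            dst[ch.destination + i] = src[ch.source + i]
        # Step 4: a single completion notification at the end of the whole block.
        self.on_complete()


interrupts = []
ram = [0] * 8
device_buffer = [5, 6, 7, 8]
dmac = DmaController(ram, device_buffer, on_complete=lambda: interrupts.append("DMA done"))
dmac.program(DmaChannel(source=0, destination=2, count=4,
                        direction=Direction.PERIPHERAL_TO_MEMORY))
dmac.run()
print(ram, interrupts)  # [0, 0, 5, 6, 7, 8, 0, 0] ['DMA done']
```

In real hardware the copy loop runs concurrently with the CPU; the simulation runs it sequentially, but it still shows that the CPU only writes the channel settings and receives one notification per block.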

The Benefits of DMA

The advantages of this approach are profound:

- CPU Offloading: The most significant benefit is freeing up the CPU. This dramatically improves overall system performance, allowing for higher throughput and the execution of more complex applications.

- Reduced Latency: By removing the CPU as a bottleneck and performing transfers in hardware, DMA typically reduces latency for large transfers, although the cost of setting up the DMAC can outweigh the benefit for very small ones. This is critical for high-speed applications like networking, storage, and graphics.

- Increased Throughput: Because data can be transferred more quickly and without CPU intervention, DMA enables higher data throughput. This means more data can be moved in a given amount of time.

- Power Efficiency: For mobile or battery-powered devices, reducing CPU load lowers power consumption, because the processor can spend more time in low-power states while transfers complete.
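The CPU-offloading benefit can be illustrated with a deliberately simple cost model. The unit costs are assumptions, not measurements: PIO is charged two CPU operations per word (read and write, polling ignored), while DMA is charged four register writes for setup (source, destination, count, direction) plus one interrupt, regardless of size.

```python
def pio_cpu_ops(n_words: int) -> int:
    # One read from the device plus one write to RAM per word (polling ignored).
    return 2 * n_words


def dma_cpu_ops(n_words: int, setup_writes: int = 4, isr_ops: int = 1) -> int:
    # Program source, destination, count and direction, then handle one interrupt.
    # n_words is deliberately unused: the DMAC, not the CPU, moves the words.
    return setup_writes + isr_ops


for n in (1, 2, 1024):
    print(n, pio_cpu_ops(n), dma_cpu_ops(n))
# 1 2 5
# 2 4 5
# 1024 2048 5
```

Under these assumed costs DMA only pays off once a transfer exceeds a couple of words, which is consistent with some drivers falling back to programmed I/O for very small transfers.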

Types of DMA and Their Implementations

The fundamental principle of DMA is consistent, but its implementation and variations cater to different system architectures and performance requirements. The most common transfer modes are Burst DMA, Cycle Stealing DMA, and Transparent DMA, alongside more modern, integrated approaches.

Burst DMA

In Burst DMA, the DMAC acquires bus mastership and transfers an entire block of data in one continuous operation before relinquishing the bus back to the CPU. This is akin to the professional mover clearing out an entire room of furniture in one go.

- Pros: Offers the highest throughput for large data transfers as there’s no interruption or bus contention with the CPU during the transfer.

- Cons: Can lead to significant “bus starvation” for the CPU, meaning the CPU might have to wait for an extended period to access the bus, potentially impacting real-time performance if not managed carefully. This mode is ideal for transferring large files or data streams where continuous movement is paramount.
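Burst mode can be visualized as a bus-ownership timeline. In this hypothetical model each character is one bus cycle ('C' for the CPU, 'D' for the DMAC), and the CPU is assumed to want the bus on every cycle, so each 'D' is a cycle in which the CPU is stalled.

```python
def burst_dma(total_cycles: int, start: int, block_words: int) -> str:
    """Bus owner per cycle when the DMAC takes the bus at `start` for a whole block."""
    return "".join(
        "D" if start <= cycle < start + block_words else "C"
        for cycle in range(total_cycles)
    )


def longest_cpu_stall(timeline: str) -> int:
    # Longest run of consecutive cycles in which the CPU could not use the bus.
    return max(len(run) for run in timeline.split("C"))


t = burst_dma(total_cycles=12, start=2, block_words=6)
print(t, longest_cpu_stall(t))  # CCDDDDDDCCCC 6
```

The block moves in one uninterrupted run, which maximizes throughput, but the CPU is locked out for the full length of the burst.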

Cycle Stealing DMA

Cycle Stealing DMA is a more balanced approach. Here, the DMAC acquires bus mastership for one bus cycle at a time. After each data transfer (byte or word), it relinquishes the bus back to the CPU. This is like the mover carrying one piece of furniture through the doorway, then stepping aside so you can pass before fetching the next.

- Pros: Allows the CPU to regain access to the bus much more frequently, preventing severe bus starvation and maintaining better responsiveness for time-sensitive tasks.

- Cons: The overhead of acquiring and releasing the bus for each cycle can slightly reduce the maximum achievable throughput compared to Burst DMA. This mode is excellent for peripherals that require frequent but relatively small data transfers, ensuring the CPU isn’t completely blocked.
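Using the same hypothetical timeline notation ('D' for a DMAC cycle, 'C' for a CPU cycle), cycle stealing interleaves one DMA cycle with CPU cycles. The `cpu_cycles_between` parameter is an assumption of this sketch, and the arbitration cost of each hand-over is ignored.

```python
def cycle_stealing_dma(block_words: int, cpu_cycles_between: int = 1) -> str:
    """Bus owner per cycle when the DMAC steals one cycle per word."""
    timeline = []
    for _ in range(block_words):
        timeline.append("D")                       # grab the bus, move one word
        timeline.append("C" * cpu_cycles_between)  # release the bus to the CPU
    return "".join(timeline)


t = cycle_stealing_dma(block_words=6)
longest_stall = max(len(run) for run in t.split("C"))
print(t, longest_stall)  # DCDCDCDCDCDC 1
```

Six words still move, but the CPU never waits more than one cycle in a row. In real hardware each hand-over also costs some arbitration time, which this sketch leaves out and which is the source of the throughput penalty described above.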

Transparent DMA (or Hidden DMA)

Transparent DMA is the most complex of the three modes to implement. It uses only idle bus cycles: the DMAC (or logic monitoring the CPU) detects cycles in which the CPU is not using the bus and performs transfers only then, so the CPU never has to give up the bus. It’s like the mover working only when the room is completely empty and no one is around.

- Pros: Offers near-zero impact on CPU performance as it never actively competes for bus access. It effectively utilizes otherwise unused bus bandwidth.

- Cons: Its effectiveness depends on the CPU’s bus utilization. If the CPU is constantly accessing the bus, Transparent DMA will have little opportunity to operate. It has the least impact on the CPU but is typically the slowest of the three modes, since its throughput depends entirely on how many idle cycles the CPU leaves.
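Transparent DMA can be sketched as filling the gaps in a CPU bus trace. The trace format is hypothetical: 'C' means the CPU drives the bus that cycle, '.' means the bus is idle, and 'D' in the result marks a DMA transfer placed in an idle cycle.

```python
from typing import Tuple


def transparent_dma(cpu_bus_use: str, block_words: int) -> Tuple[str, int]:
    """Move words only in cycles the CPU leaves idle.

    Returns the resulting timeline and the number of words still waiting
    when the trace ends.
    """
    remaining = block_words
    timeline = []
    for owner in cpu_bus_use:
        if owner == "." and remaining > 0:
            timeline.append("D")    # use an otherwise idle cycle
            remaining -= 1
        else:
            timeline.append(owner)  # the CPU is never delayed
    return "".join(timeline), remaining


print(transparent_dma("C.C.CC..C.CC", block_words=6))  # ('CDCDCCDDCDCC', 1)
print(transparent_dma("CCCCCCCCCCCC", block_words=6))  # ('CCCCCCCCCCCC', 6)
```

The CPU’s pattern is untouched in both cases, but with a busy CPU no words move at all, which is exactly the trade-off listed under Cons.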

Bus Mastering and DMA Controllers

Modern systems implement DMA in two main ways: through dedicated DMA Controllers (DMACs), often integrated into the chipset, the system-on-chip, or even the CPU itself, and through bus-mastering peripherals that contain their own DMA engines. On a shared bus, an arbiter decides which device gets bus mastership at any given time. PCI (Peripheral Component Interconnect) was designed with bus mastering in mind, using a central arbiter that lets peripherals request and acquire control of the bus for efficient data transfers. PCI Express (PCIe) replaces the shared bus with point-to-point links, and devices perform DMA by issuing memory read and write requests directly to system memory.
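Bus arbitration on a shared bus can be illustrated with a round-robin arbiter. Round-robin is only one possible policy, chosen here as an assumption; real arbiters may use fixed priorities or other schemes. The `RoundRobinArbiter` class and the master numbering are hypothetical.

```python
from typing import List, Optional


class RoundRobinArbiter:
    """Grants the shared bus to one requesting master per cycle, rotating priority."""

    def __init__(self, n_masters: int):
        self.n = n_masters
        self.last = n_masters - 1  # so master 0 is considered first on the first cycle

    def grant(self, requests: List[bool]) -> Optional[int]:
        # Search starting just after the previous winner, so no master starves.
        for offset in range(1, self.n + 1):
            candidate = (self.last + offset) % self.n
            if requests[candidate]:
                self.last = candidate
                return candidate
        return None  # nobody requested the bus this cycle


# Hypothetical masters: 0 = CPU, 1 = storage DMA engine, 2 = network DMA engine.
arbiter = RoundRobinArbiter(3)
print([arbiter.grant([True, True, True]) for _ in range(4)])  # [0, 1, 2, 0]
print(arbiter.grant([False, True, False]))                    # 1
```

Rotating the starting point after each grant keeps any single DMA engine from monopolizing the bus when several masters request it at once.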

DMA’s Crucial Role in Modern Computing and Technology

The impact of DMA extends across virtually every aspect of modern computing. From the fundamental operations of your personal computer to the advanced capabilities of specialized hardware, DMA is an indispensable technology that underpins performance and efficiency.

Storage Devices: Hard Drives and SSDs

The speed at which data can be read from or written to storage devices is critical. DMA allows the storage controller (e.g., SATA controller, NVMe controller) to directly transfer large blocks of data to and from RAM without the CPU needing to be involved in every byte. This is why Solid State Drives (SSDs), with their high transfer rates, heavily rely on DMA to deliver their advertised performance. Without DMA, the CPU would be bogged down just managing the transfer of files, making your computer feel sluggish.

Networking: High-Speed Data Transmission

Network interface cards (NICs) constantly receive and transmit data packets. DMA enables these NICs to directly place incoming data into system memory or read outgoing data from memory for transmission, bypassing the CPU. This is essential for high-speed networking technologies like Gigabit Ethernet and 10 Gigabit Ethernet, ensuring that the network card can keep up with the data flow without overwhelming the CPU.
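Many NIC drivers organize these transfers as a ring of receive descriptors: the driver hands buffers to the NIC in advance, the NIC writes each incoming packet into the next buffer by DMA and marks it done, and the CPU later collects finished packets. The sketch below is a simplified model of that idea; `RxDescriptor`, `RxRing`, `BUFFER_SIZE` and both methods are hypothetical and do not mirror any specific NIC’s descriptor format.

```python
from dataclasses import dataclass, field
from typing import List

BUFFER_SIZE = 64  # hypothetical per-slot buffer size in bytes


@dataclass
class RxDescriptor:
    """One slot of a receive ring: a buffer the NIC may write into, plus status."""
    buffer: bytearray = field(default_factory=lambda: bytearray(BUFFER_SIZE))
    length: int = 0
    done: bool = False  # set by the NIC after its DMA write, cleared by the driver


class RxRing:
    def __init__(self, size: int):
        self.slots = [RxDescriptor() for _ in range(size)]
        self.nic_index = 0     # next slot the NIC will fill
        self.driver_index = 0  # next slot the driver will read

    def nic_dma_write(self, packet: bytes) -> bool:
        """Models the NIC writing a received packet straight into RAM."""
        slot = self.slots[self.nic_index]
        if slot.done or len(packet) > BUFFER_SIZE:
            return False  # ring full (or packet too big): the packet is dropped
        slot.buffer[: len(packet)] = packet
        slot.length = len(packet)
        slot.done = True
        self.nic_index = (self.nic_index + 1) % len(self.slots)
        return True

    def driver_poll(self) -> List[bytes]:
        """Models the CPU collecting finished packets and returning slots to the NIC."""
        packets = []
        while self.slots[self.driver_index].done:
            slot = self.slots[self.driver_index]
            packets.append(bytes(slot.buffer[: slot.length]))
            slot.done = False
            self.driver_index = (self.driver_index + 1) % len(self.slots)
        return packets


ring = RxRing(size=4)
for pkt in (b"ping", b"pong", b"data"):
    ring.nic_dma_write(pkt)
print(ring.driver_poll())  # [b'ping', b'pong', b'data']
```

The CPU touches each packet only once, when it processes it; the copy from the wire into RAM is the NIC’s job.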

Graphics Processing Units (GPUs)

While discrete GPUs have their own dedicated memory (VRAM), they often need to transfer data to and from system RAM. This includes textures, shaders, and other assets required for rendering. DMA allows the GPU to efficiently fetch this data, significantly improving rendering performance and enabling complex visual effects in games and professional applications.

Audio and Video Processing

Real-time audio and video streaming and processing demand high data throughput and low latency. DMA is used to transfer audio samples or video frames between peripherals (like audio codecs or video capture cards) and memory, allowing for smooth playback and efficient processing by the CPU and specialized co-processors.
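One common way to keep audio streaming smoothly is double (“ping-pong”) buffering: DMA fills one half of a buffer while the CPU processes the other, and an interrupt at each half-full point swaps their roles. The sketch below assumes a hypothetical sample source and runs sequentially, so it shows the ownership hand-off rather than true concurrency.

```python
from typing import Iterable, List


def double_buffered_capture(samples: Iterable[int], half_size: int) -> List[List[int]]:
    """Ping-pong buffering sketch: returns blocks in the order the CPU processed them."""
    buffers = [[0] * half_size, [0] * half_size]
    active = 0  # half currently being filled by DMA
    fill = 0
    processed = []
    for sample in samples:
        buffers[active][fill] = sample  # DMA writes one sample
        fill += 1
        if fill == half_size:           # "half complete" interrupt
            ready = active
            active ^= 1                 # DMA continues into the other half
            fill = 0
            processed.append(list(buffers[ready]))  # CPU handles the finished half
    return processed


print(double_buffered_capture(range(10), half_size=4))  # [[0, 1, 2, 3], [4, 5, 6, 7]]
```

The trailing samples 8 and 9 sit in a half that is not yet full, so the CPU has not been notified about them, just as it would not be on real hardware.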

Embedded Systems and Peripherals

In embedded systems, where resources are often constrained, DMA is vital for efficiently managing data flow from sensors, communication modules, and other peripherals. This allows the embedded processor to focus on its control tasks rather than being consumed by data movement.

The Future of DMA: Enhancements and Integrations

As technology advances, DMA continues to evolve. Newer architectures are exploring more capable DMA engines that can perform more complex operations, and tighter integration with CPUs and other components is reducing overhead further. DMA remapping, performed by an IOMMU (for example, Intel VT-d), translates and restricts the memory addresses each device may access; in virtualized environments this lets a hypervisor safely assign a hardware device directly to a virtual machine, improving both security and performance. Furthermore, the ongoing development of high-speed interconnects like PCIe ensures that the underlying infrastructure for DMA remains robust and capable of handling increasing data demands.
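DMA remapping can be illustrated with a toy IOMMU that keeps a per-device table from I/O page numbers to physical page numbers and rejects any access outside it. This is an assumption-laden simplification: real IOMMUs such as Intel VT-d use multi-level hardware page tables with permission bits, and `Iommu`, `IommuFault` and the device name `"nic0"` are hypothetical.

```python
PAGE_SIZE = 4096


class IommuFault(Exception):
    """Raised when a device tries to DMA to an address it was not granted."""


class Iommu:
    """Toy DMA-remapping unit: a per-device table from I/O page to physical page."""

    def __init__(self):
        self.tables = {}  # device id -> {io_page: physical_page}

    def map(self, device: str, io_page: int, phys_page: int) -> None:
        self.tables.setdefault(device, {})[io_page] = phys_page

    def translate(self, device: str, io_addr: int) -> int:
        io_page, offset = divmod(io_addr, PAGE_SIZE)
        table = self.tables.get(device, {})
        if io_page not in table:
            raise IommuFault(f"{device}: unmapped I/O address {io_addr:#x}")
        return table[io_page] * PAGE_SIZE + offset


iommu = Iommu()
iommu.map("nic0", io_page=0, phys_page=0x1234)  # e.g. set up by a hypervisor
print(hex(iommu.translate("nic0", 0x10)))       # 0x1234010
try:
    iommu.translate("nic0", 0x5000)             # page 5 was never mapped for this device
except IommuFault as err:
    print(err)                                  # nic0: unmapped I/O address 0x5000
```

Because every device address goes through its own table, a device assigned to one virtual machine cannot DMA into memory that belongs to another.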

In conclusion, while the initial query “what is dma mean” might seem simple, the answer reveals a fundamental technology that is absolutely critical to the functioning of virtually all modern electronic devices. DMA is the silent workhorse, the unsung hero that enables our computers, smartphones, and countless other technologies to operate with the speed, efficiency, and responsiveness we expect. It is a testament to clever engineering that offloads critical tasks from the central processor, allowing it to focus on what it does best: computation and intelligence.