In technology and innovation, particularly in the development and application of autonomous systems and sophisticated digital processes, understanding foundational economic principles is crucial. One such concept, the “discount factor,” plays a pivotal role in how we evaluate future outcomes and make decisions in dynamic environments. Although it sounds like an abstract financial term, its implications are far-reaching, affecting everything from the design of intelligent algorithms to the strategic deployment of cutting-edge technologies.

The discount factor, denoted by the Greek letter delta ($\delta$), is a fundamental component in calculating the present value of future rewards or costs. It specifies how much a reward received one period from now is worth relative to the same reward received today; with a constant per-period discount factor, a reward $t$ periods away is multiplied by $\delta^t$. A higher discount factor (closer to 1) means future rewards are valued almost as much as immediate ones, implying a greater emphasis on long-term planning and investment. Conversely, a lower discount factor signals a preference for immediate gratification, where future returns are heavily devalued. The concept is ubiquitous in economics and finance and, increasingly, in the artificial intelligence and machine learning disciplines that underpin much of modern technology.
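
As a minimal sketch, assuming a finite stream of per-period rewards and a constant per-period discount factor, the present value is the sum of the rewards with the reward received $t$ periods from now weighted by $\delta^t$. The function name `present_value` is illustrative, not taken from any particular library.

```python
def present_value(rewards, delta):
    """Discount a sequence of per-period rewards back to period 0.

    rewards[t] is the reward received t periods from now;
    delta is the per-period discount factor, assumed to lie in [0, 1].
    """
    if not 0.0 <= delta <= 1.0:
        raise ValueError("delta must lie in [0, 1]")
    # Each reward is scaled by delta**t; delta**0 == 1, so today's reward is undiscounted.
    return sum(r * delta**t for t, r in enumerate(rewards))


# Three payments of 100: now, in one period, and in two periods.
print(present_value([100, 100, 100], delta=0.9))  # 100 + 90 + 81, i.e. about 271
```

With `delta=1.0` the result is the plain sum of 300; with `delta=0.0` only the first payment of 100 counts.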

The Foundation of Temporal Value

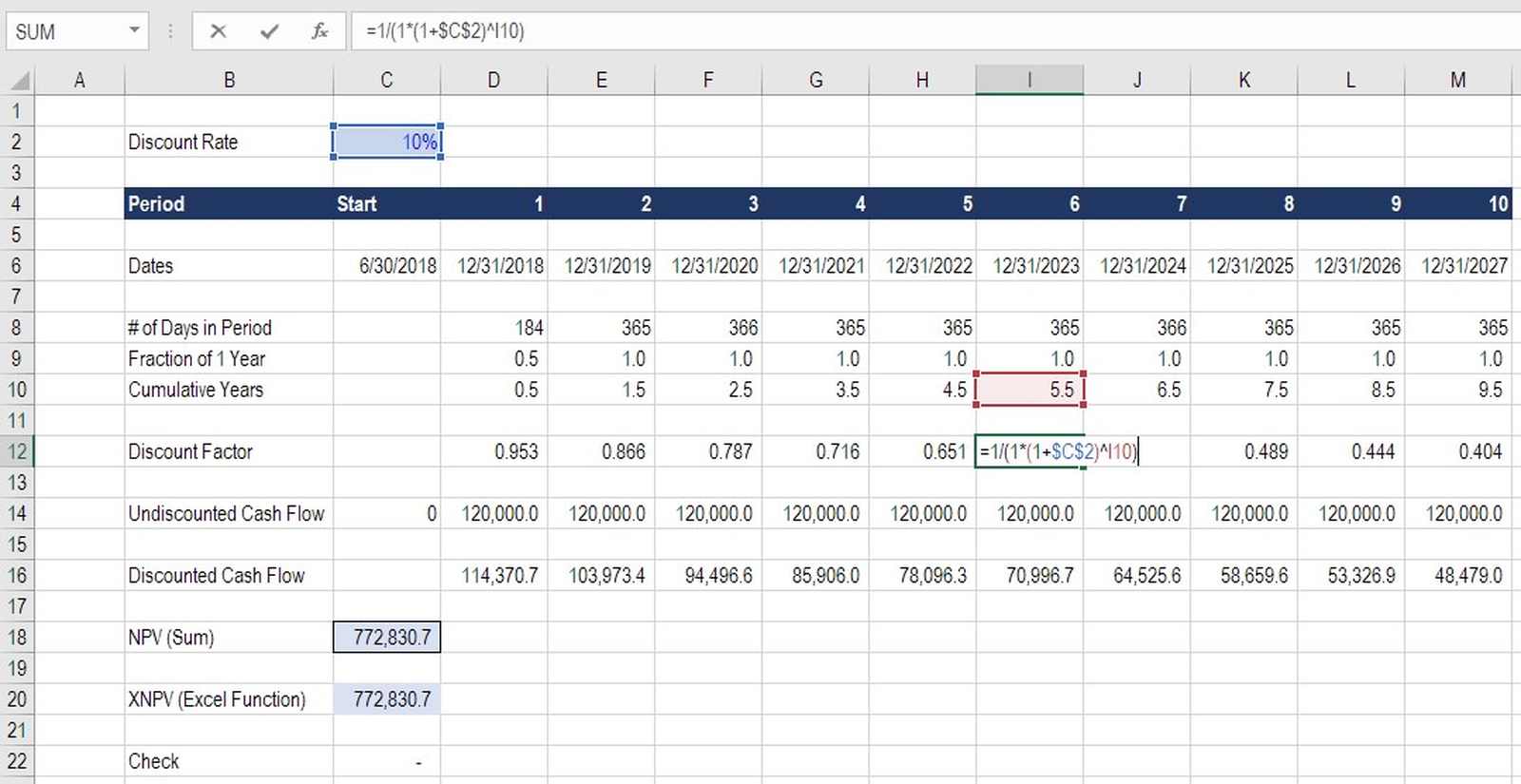

At its core, the discount factor acknowledges a fundamental truth about our perception of time and value: the “time value of money” or, more broadly, the “time value of reward.” This principle posits that a unit of currency or a unit of reward available today is worth more than the same unit available in the future. There are several reasons for this inherent preference for the present.

Opportunity Cost and Investment

One primary driver is the opportunity cost. A dollar received today can be invested and potentially grow over time, yielding a return. Therefore, receiving that dollar a year from now means forfeiting the potential earnings that could have been generated during that year. In the context of technological innovation, this translates to the ability to capitalize on early market advantages, secure funding for ongoing research and development, or deploy a product that generates revenue sooner. The faster a groundbreaking technology can be brought to market, the greater its potential to capture market share and recoup development costs.
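
If investment returns were the only reason to prefer the present, the per-period discount factor would follow directly from the per-period interest rate $r$ as $\delta = 1/(1+r)$. A minimal sketch, assuming a 5% annual rate chosen purely for illustration (the function name is hypothetical):

```python
def discount_factor_from_rate(rate):
    """Per-period discount factor implied by a per-period interest rate."""
    return 1.0 / (1.0 + rate)


rate = 0.05
delta = discount_factor_from_rate(rate)
pv = 100 * delta                  # what $100 received one year from now is worth today
print(round(pv, 2))               # 95.24
print(round(pv * (1 + rate), 2))  # investing pv today at 5% grows back to 100.0
```

At 5%, a dollar a year from now is worth about 95 cents today, and investing those 95 cents today recovers the full dollar in a year. That equivalence is exactly the opportunity cost described above.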

Risk and Uncertainty

Another significant factor is the inherent uncertainty of the future. Unexpected events, changing market conditions, technological obsolescence, or even unforeseen challenges in deployment can all diminish the value of a future reward. The longer the time horizon, the greater the accumulation of potential risks. Therefore, a rational agent will typically discount future benefits to account for this increased uncertainty. In the tech world, this might manifest as a company being more inclined to invest in a project with a shorter but certain payoff, rather than a longer-term project with a potentially larger but highly speculative outcome.
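
One simple way to model this, assumed here for illustration, is a constant and independent per-period probability $p$ that a promised reward never materializes (the project is cancelled, or the technology becomes obsolete). The expected value of a reward $t$ periods away is then scaled by $(1-p)^t$, which is the same as discounting with $\delta = 1 - p$. The function below is a hypothetical sketch of that calculation:

```python
def expected_value_with_hazard(reward, periods, p_fail):
    """Expected value of a reward promised `periods` from now, assuming an
    independent probability p_fail each period that the reward is lost."""
    survival = (1.0 - p_fail) ** periods  # probability the reward survives every period
    return reward * survival


# With a 10% chance of failure per year, a reward one year out keeps 90% of
# its expected value, while one ten years out keeps only about 35%.
print(expected_value_with_hazard(1000, 1, 0.10))
print(expected_value_with_hazard(1000, 10, 0.10))
```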

Inflation and Purchasing Power

Inflation also plays a role. Over time, the general price level of goods and services tends to rise, meaning that a unit of currency will buy less in the future than it does today. This erosion of purchasing power naturally devalues future monetary gains. While not always directly applicable to non-monetary rewards in technological contexts, the principle of diminishing future utility remains relevant.
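
To make the erosion concrete: with a constant annual inflation rate $\pi$, a nominal amount received $t$ years from now buys only what $\text{amount}/(1+\pi)^t$ buys today. A sketch, assuming a constant 3% inflation rate picked only for illustration (the function name is hypothetical):

```python
def real_value(nominal, years, inflation):
    """Today's purchasing-power equivalent of `nominal` received `years` from now,
    assuming a constant annual inflation rate."""
    return nominal / (1.0 + inflation) ** years


# 100 received in 10 years at 3% annual inflation buys roughly what 74 buys today.
print(round(real_value(100, 10, 0.03), 2))
```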

Discount Factor in Machine Learning and AI

The discount factor finds perhaps its most direct and powerful application in reinforcement learning, a subfield of machine learning where agents learn to make optimal decisions through trial and error in an environment. In reinforcement learning, an agent aims to maximize its cumulative future reward. The discount factor is used to weight these future rewards.

The Reinforcement Learning Equation

In reinforcement learning, the goal is to learn a policy that maximizes the expected sum of discounted future rewards. The value of a state under a given policy, $V(s)$, is defined as the expected discounted cumulative reward starting from state $s$. If $r_t$ is the reward received at time step $t$ and $\gamma$ (gamma, the conventional symbol in reinforcement learning, playing the same role as $\delta$) is the discount factor, the total discounted reward $R$ is given by:

$R = r_0 + \gamma r_1 + \gamma^2 r_2 + \gamma^3 r_3 + \dots = \sum_{t=0}^{\infty} \gamma^t r_t$

This equation shows that a reward received at step $t$ is multiplied by $\gamma^t$. For $\gamma < 1$ these weights shrink geometrically, so rewards further in the future contribute less to the total.
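
The discounted reward $R$ above can be computed directly as a sum, or backward from the last step using the recursion $G_t = r_t + \gamma G_{t+1}$, which gives the return from every time step in one pass. A minimal sketch for a finite episode; the reward list and function names are made up for illustration:

```python
def discounted_return(rewards, gamma):
    """R = r0 + gamma*r1 + gamma^2*r2 + ... for a finite list of rewards."""
    return sum(gamma**t * r for t, r in enumerate(rewards))


def returns_to_go(rewards, gamma):
    """Discounted return G_t from every time step t, computed backward
    with G_t = r_t + gamma * G_{t+1} (and G = 0 after the last step)."""
    g = 0.0
    out = []
    for r in reversed(rewards):
        g = r + gamma * g
        out.append(g)
    return out[::-1]  # restore chronological order


rewards = [0, 0, 0, 1]  # a single reward at the end of the episode
print(discounted_return(rewards, 0.9))  # 0.9**3, about 0.729
print(returns_to_go(rewards, 0.9))      # approximately [0.729, 0.81, 0.9, 1.0]
```

The first entry of `returns_to_go` always equals `discounted_return`; the backward form is preferred when returns from every step are needed, because it avoids recomputing each sum from scratch.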

Interpreting the Discount Factor’s Influence

The value of $\gamma$ lies between 0 and 1, inclusive. The value $\gamma = 1$ is normally used only in episodic tasks with a finite horizon; in continuing tasks, $\gamma < 1$ keeps the infinite sum finite when rewards are bounded.

- $\gamma = 0$: The agent cares only about the immediate reward. It has no foresight and acts purely on whatever offers the greatest reward right now, making it completely myopic.
- $\gamma$ close to 1 (e.g., 0.99): The agent is far-sighted and values future rewards almost as much as immediate ones. It is willing to take actions with a lower immediate reward that lead to significantly higher cumulative rewards in the long run. This is essential for tasks requiring long-term planning, such as navigating a complex maze, mastering a game like Go, or optimizing complex industrial processes.
- $\gamma$ in the middle (e.g., 0.9): The agent balances immediate and future rewards, considering a moderate horizon of future outcomes.
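
A common rule of thumb (a heuristic, not a hard cutoff) is that an agent with discount factor $\gamma < 1$ effectively looks about $1/(1-\gamma)$ steps ahead, since that is the sum of all the weights $\sum_{t=0}^{\infty} \gamma^t$. The sketch below prints, for the three regimes above, the weight on a reward 10 steps away and this effective horizon (roughly 1, 10, and 100 steps); the helper name is hypothetical:

```python
def effective_horizon(gamma):
    """Sum of the weights gamma**t over t = 0, 1, 2, ..., i.e. 1 / (1 - gamma).
    Infinite when gamma == 1."""
    return float("inf") if gamma >= 1.0 else 1.0 / (1.0 - gamma)


for gamma in (0.0, 0.9, 0.99):
    weight_10 = gamma ** 10  # how much a reward 10 steps ahead counts
    print(f"gamma={gamma}: weight at t=10 = {weight_10:.3f}, "
          f"effective horizon ~ {effective_horizon(gamma):.0f} steps")
```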

The choice of discount factor is a critical hyperparameter in reinforcement learning: it directly influences the agent’s behavior and its ability to learn effective policies. In autonomous navigation for drones or robotic vehicles, for instance, a higher discount factor lets the agent weigh the consequences of the entire journey. If the reward function penalizes collisions and energy use, the agent can then learn routes that are not the shortest in immediate terms but are safer and more efficient overall.
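
To see how $\gamma$ can flip a decision, consider a toy choice between two routes with invented reward sequences (illustrative numbers, not a real navigation model): a "shortcut" that pays 1 immediately but incurs a penalty of 2 three steps later, and a "detour" that pays nothing until a reward of 1 at step 3. The helper names below are hypothetical:

```python
def discounted_return(rewards, gamma):
    """Sum of gamma**t * r_t over a finite reward sequence."""
    return sum(gamma**t * r for t, r in enumerate(rewards))


# Hypothetical reward sequences for two routes (illustrative numbers only).
routes = {
    "shortcut": [1.0, 0.0, 0.0, -2.0],  # quick gain now, costly penalty later
    "detour": [0.0, 0.0, 0.0, 1.0],     # nothing now, modest gain later
}


def best_route(gamma):
    """Pick the route with the highest discounted return under gamma."""
    return max(routes, key=lambda name: discounted_return(routes[name], gamma))


for gamma in (0.5, 0.99):
    print(gamma, best_route(gamma))
```

The shortcut's return is $1 - 2\gamma^3$ and the detour's is $\gamma^3$, so the preference flips at $\gamma = 3^{-1/3} \approx 0.69$: a myopic agent takes the shortcut, a far-sighted one takes the detour.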

Implications for Technological Development and Strategy

The concept of the discount factor extends beyond the technicalities of reinforcement learning into the broader strategic considerations of technological development and deployment.

Investment in Long-Term vs. Short-Term Projects

When companies assess potential R&D projects, they implicitly or explicitly apply a discount factor. A project promising a quick, high return on investment will be more attractive than one with a longer development cycle and a more uncertain future payoff, especially if the company operates with a high discount rate (i.e., a low discount factor). This can lead to a bias towards incremental innovation rather than disruptive, long-term ventures.
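
A sketch with two invented projects illustrates the bias. Both cost 50 up front; one pays 30 a year for three years, the other pays 200 once in year 10. A discount factor of 0.8 corresponds to a 25% annual discount rate and 0.95 to roughly 5.3%; all figures are hypothetical.

```python
def npv(cash_flows, delta):
    """Net present value of yearly cash flows; cash_flows[0] occurs today."""
    return sum(cf * delta**t for t, cf in enumerate(cash_flows))


# Hypothetical projects with the same upfront cost of 50.
quick = [-50] + [30] * 3            # pays 30 a year for three years
moonshot = [-50] + [0] * 9 + [200]  # pays 200 once, in year 10

for delta in (0.8, 0.95):
    winner = "quick" if npv(quick, delta) > npv(moonshot, delta) else "moonshot"
    print(f"delta={delta}: quick={npv(quick, delta):.1f}, "
          f"moonshot={npv(moonshot, delta):.1f} -> {winner}")
```

With the low discount factor the quick project is worth more and the long-horizon project does not even recover its cost; with the high discount factor the ranking reverses.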

However, truly transformative technologies, such as artificial general intelligence, quantum computing, or advanced materials, require significant upfront investment and have a long path to commercial viability, so those who invest in them implicitly apply a higher discount factor (that is, a lower discount rate). These investors are willing to wait for future rewards because they see potentially immense long-term value. Venture capitalists, for example, often have a longer investment horizon and are more willing to accept the risks associated with technologies that might take a decade or more to mature.

Policy and Regulation in Emerging Technologies

Governments and regulatory bodies also grapple with discount factors when making decisions about the future of technology. For instance, when evaluating the costs and benefits of investing in new infrastructure for emerging technologies (like 5G networks or advanced battery charging stations), policymakers must decide how to value future economic benefits and societal impacts against present-day expenditures. Debates around climate change mitigation, for example, often hinge on how future environmental benefits (e.g., reduced carbon emissions) are discounted relative to the present costs of transitioning to greener technologies. A higher discount rate applied to future environmental benefits would imply less urgency in taking action today.
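
Because discounting compounds, the choice of rate dominates over long horizons. A sketch with a hypothetical benefit of 1,000,000 arriving in 100 years, evaluated at two illustrative annual rates (not the rates used in any particular study); the function name is hypothetical:

```python
def present_value_of_benefit(benefit, years, annual_rate):
    """Present value of a single benefit received `years` from now,
    discounted at a constant annual rate."""
    return benefit / (1.0 + annual_rate) ** years


benefit = 1_000_000  # hypothetical benefit, in today's currency units
for rate in (0.01, 0.05):
    pv = present_value_of_benefit(benefit, years=100, annual_rate=rate)
    print(f"rate={rate:.0%}: present value of {benefit:,} in 100 years = {pv:,.0f}")
```

At 1% the benefit is worth roughly 370,000 today; at 5% it is worth only about 7,600, which is why the choice of rate can decide whether a long-term mitigation project looks worthwhile.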

Ethical Considerations in AI Deployment

The discount factor also raises ethical considerations in AI. When an AI system makes decisions with long-term consequences (e.g., in autonomous vehicles or resource management), the discount factor in its decision-making algorithm can profoundly affect its choices. A system with a very low discount factor might prioritize short-term efficiency or safety, potentially at the expense of long-term sustainability or equity. Conversely, a system with a discount factor close to 1 might make decisions that appear suboptimal in the short term but lead to significantly better long-term outcomes, even if those outcomes are harder to predict or quantify. The discount factors embedded in AI systems that will shape our future therefore deserve careful selection and scrutiny.

In conclusion, the discount factor is a vital concept that bridges the gap between immediate actions and future consequences. It is a fundamental tool for rational decision-making, from optimizing algorithmic behavior in artificial intelligence to guiding strategic investments in technological innovation and shaping public policy. Understanding its nuances allows for a deeper appreciation of how we value progress and navigate the complexities of building a technologically advanced future.