To truly understand the modern world, its technological marvels, and the continuous wave of innovation, one must first grasp the fundamental concept of “what is digitally.” It’s more than just using a computer or having an internet connection; it represents a profound paradigm shift in how information is created, processed, stored, and transmitted. At its heart, “digitally” refers to the representation of information using discrete, numerical values, primarily binary digits (bits). This seemingly simple principle has laid the groundwork for virtually every advanced technology we encounter today, from artificial intelligence and autonomous systems to global communication networks and intricate mapping solutions. It’s the very essence that transforms raw data into actionable insights, enabling machines to “think,” systems to self-regulate, and complex environments to be understood with unprecedented precision.

In the realm of Tech & Innovation, “digitally” is not merely an adjective; it’s the operational mode, the architectural blueprint, and the functional language. It allows for the intricate algorithms of AI to learn from vast datasets, enables autonomous drones to navigate complex airspace, and permits remote sensors to capture environmental data with meticulous accuracy. Without this digital foundation, the sophisticated capabilities that define contemporary technological advancement would simply not exist. This article will delve into the multifaceted meaning of “digitally,” exploring its foundational principles, its transformative impact on various technological sectors, and its relentless drive towards a future ever more connected and intelligent.

The Foundation of the Digital Realm

At the very core of “what is digitally” lies a fundamental transformation of information itself. Moving from an analog world to a digital one represents a shift from continuous waves to discrete points, a change that underpins the reliability, precision, and immense processing power of all modern technology.

From Analog to Discrete

Historically, information was often captured and transmitted in analog form. Analog signals are continuous, mirroring the physical phenomena they represent—think of a traditional vinyl record where grooves directly correspond to sound waves, or an old-fashioned telephone transmitting variations in electrical current. However, analog signals are susceptible to noise and degradation; every copy of a copy loses fidelity.

Digital information, by contrast, breaks continuous data into discrete, quantifiable chunks. Instead of an infinite range of values, digital systems use a finite set of values, ultimately stored as “on” or “off” states. This conversion process, called analog-to-digital conversion (ADC), samples the analog signal at regular intervals and rounds each sample to the nearest of a fixed number of levels (quantization), recording it as a number. Quantization introduces a small, bounded rounding error at the moment of capture. After that, the digital data is robust: it can be copied countless times without loss of quality, transmitted over vast distances with error detection and correction, and processed exactly and repeatably, with precision limited by the number of bits used per value. This discrete nature is what makes complex computations possible and reliable.
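The sampling and quantization steps can be sketched in a few lines of Python. This is an idealized model, not how any particular ADC chip works: the input range of -1 to +1, the 8 Hz sample rate, the 3-bit resolution, and the function names `adc` and `reconstruct` are illustrative assumptions.

```python
import math

def adc(signal, sample_rate_hz, duration_s, bits, v_min=-1.0, v_max=1.0):
    """Sample a continuous signal at regular intervals and quantize each sample."""
    levels = 2 ** bits                     # number of distinct output codes
    step = (v_max - v_min) / (levels - 1)  # spacing between adjacent levels
    codes = []
    for n in range(int(sample_rate_hz * duration_s)):
        t = n / sample_rate_hz                   # regular sampling instants
        v = min(max(signal(t), v_min), v_max)    # clip to the converter's input range
        codes.append(round((v - v_min) / step))  # nearest level, stored as an integer
    return codes, step

def reconstruct(codes, step, v_min=-1.0):
    """Map integer codes back to approximate signal values."""
    return [v_min + code * step for code in codes]

def wave(t):
    """Hypothetical analog input: a 1 Hz sine wave."""
    return math.sin(2 * math.pi * t)

# Sample one second of the wave 8 times, using 3 bits (8 levels) per sample.
codes, step = adc(wave, sample_rate_hz=8, duration_s=1.0, bits=3)
approx = reconstruct(codes, step)
```

With only 3 bits there are 8 levels, so each reconstructed sample can be off by up to half a step (about 0.14 here). Adding bits shrinks that error at the cost of more data per sample.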

Binary Code: The Universal Language

The bedrock of digital representation is binary code, a system that uses only two symbols, 0 and 1, to represent all information. These binary digits, or “bits,” are the most basic unit of data in computing. Why binary? Because it’s easily represented by the physical states of electronic components—a switch being open or closed, a voltage being high or low, a magnetic polarity pointing one way or another.

All complex information, including text, images, audio, video, and, crucially, program instructions, is translated into long sequences of these 0s and 1s. A character on your screen, a pixel in a high-resolution image, or a command for an autonomous drone is ultimately a unique combination of bits. Because everything reduces to bits, disparate digital systems can exchange and interpret information as long as they agree on shared encodings and protocols (UTF-8 for text, for example), and this is what forms the interconnected web of modern technology. Without this common language, the sophisticated operations of AI, advanced sensor data processing, and complex flight control systems would be impossible.
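To make this concrete, the sketch below shows how a character, a pixel, and a number each become bits in Python. The pixel color, the 16-bit altitude field, and the helper name `to_bits` are hypothetical choices for illustration. The last lines show that the same eight bits read as the letter “A” under UTF-8 or as the integer 65, which is why communicating systems must agree on an encoding.

```python
def to_bits(data: bytes) -> str:
    """Render each byte as eight binary digits, separated by spaces."""
    return " ".join(f"{byte:08b}" for byte in data)

# Text: "A" encoded as UTF-8 is the single byte 65.
text_bits = to_bits("A".encode("utf-8"))            # '01000001'

# Image: a hypothetical orange pixel with 8-bit red, green, and blue channels.
pixel_bits = to_bits(bytes((255, 165, 0)))          # '11111111 10100101 00000000'

# Number: a hypothetical altitude of 120 m as a 16-bit big-endian unsigned integer.
altitude_bits = to_bits((120).to_bytes(2, "big"))   # '00000000 01111000'

# The same eight bits mean different things under different agreed encodings.
as_integer = int(text_bits, 2)                      # read as an unsigned integer: 65
as_text = bytes([as_integer]).decode("utf-8")       # read as UTF-8 text: 'A'
```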

Data as the New Raw Material

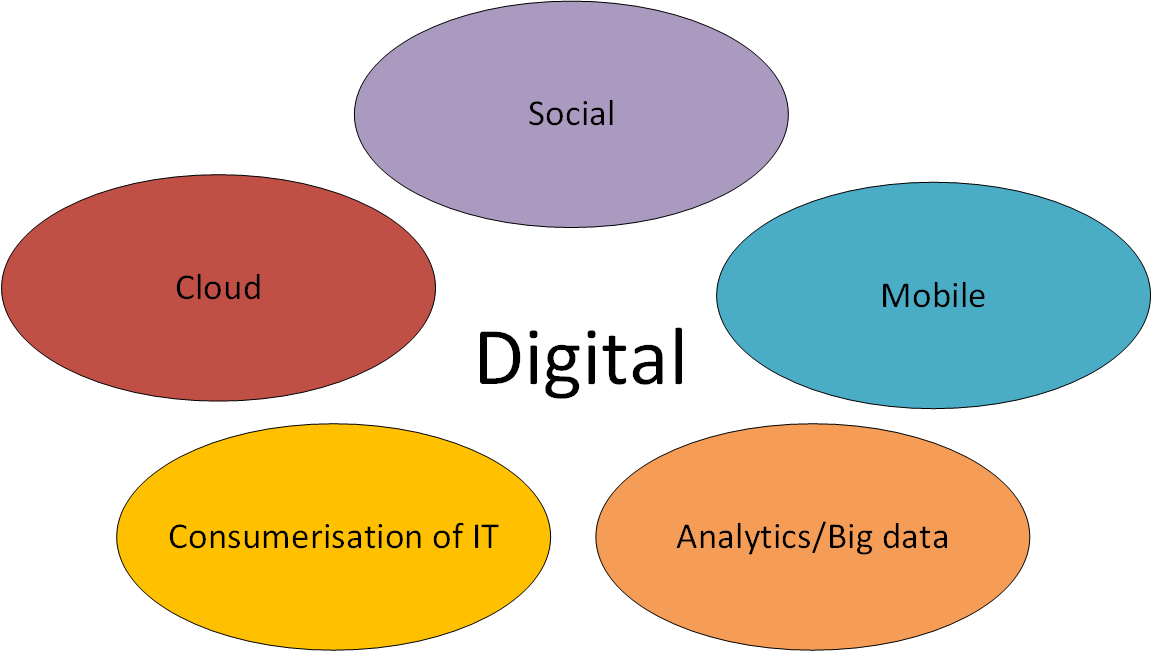

In the digital age, information itself—data—has become an invaluable resource, often referred to as the “new oil” or “new raw material.” Every interaction, every measurement, every observation captured by a sensor, and every command executed by a machine generates digital data. This explosion of data, often called “big data,” is not just a byproduct; it is the fuel for innovation.

Digital transformation turns previously unquantifiable processes into measurable data points. This allows for rigorous analysis, pattern recognition, and predictive modeling. For instance, in remote sensing, satellite images generate vast quantities of digital data about land use, weather patterns, and environmental changes. Autonomous systems rely on streams of digital sensor data—Lidar, radar, cameras—to build a real-time understanding of their environment. AI algorithms learn and improve by ingesting massive datasets. This ability to collect, store, and process digital data at scale is what drives advancements in fields like machine learning, smart cities, and precision agriculture, enabling unprecedented insights and automated decision-making.
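As a minimal illustration of predictive modeling on digitized measurements, the sketch below fits a straight-line trend to a handful of readings with ordinary least squares and extrapolates it. The soil-moisture values are invented for the example, and a linear trend is only an assumption; real precision-agriculture models are usually far richer.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

# Hypothetical daily soil-moisture readings (percent) from a single field sensor.
days = [0, 1, 2, 3, 4]
moisture = [30.1, 27.9, 26.2, 23.8, 22.0]

slope, intercept = fit_line(days, moisture)
forecast_day_7 = slope * 7 + intercept   # extrapolate the trend three days ahead
```

Here the fit gives a drying trend of about 2.03 percentage points per day and a day-7 forecast of about 15.85%.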

Digital Systems in Action: Empowering Innovation

The conceptual understanding of “digitally” comes to life through the robust digital systems that process, connect, and store information. These systems are the engines driving the innovations we see in Tech & Innovation, making complex tasks not only feasible but increasingly commonplace.

Computation and Processing Power

At the heart of any digital innovation, from complex AI models to real-time drone navigation, lies immense computation and processing power. Digital processors, such as Central Processing Units (CPUs) and Graphics Processing Units (GPUs), are designed to interpret and execute digital instructions—those sequences of 0s and 1s—at astonishing speeds. These processors perform billions of calculations per second, enabling the intricate algorithms necessary for modern applications.

For instance, AI Follow Mode on a drone requires near-real-time processing of visual data from cameras, identifying and tracking a subject while simultaneously managing flight controls. Autonomous flight systems continuously fuse sensor data: GPS, accelerometers, and gyroscopes estimate position and orientation, while Lidar and cameras build a 3D map of the environment, identify obstacles, and help plot the safest and most efficient flight path. This level of responsiveness and intelligence is entirely a product of sophisticated digital hardware executing highly optimized digital software, constantly crunching numbers to make split-second decisions.
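One common way to fuse gyroscope and accelerometer data is a complementary filter, sketched below for a single tilt angle. It is a simplified illustration under stated assumptions (a two-axis accelerometer convention where tilt = atan2(ax, az), a 100 Hz update rate, and a blending factor `alpha` of 0.98), not the algorithm any specific flight controller uses; production systems often use more elaborate estimators such as Kalman filters.

```python
import math

def complementary_filter(gyro_rates_dps, accel_samples, dt_s, alpha=0.98, initial_deg=0.0):
    """Estimate a tilt angle (degrees) by blending integrated gyro rate with accelerometer tilt.

    Assumes a simplified two-axis accelerometer where tilt = atan2(ax, az).
    """
    angle = initial_deg
    estimates = []
    for rate_dps, (ax, az) in zip(gyro_rates_dps, accel_samples):
        gyro_angle = angle + rate_dps * dt_s            # short term: integrate angular rate
        accel_angle = math.degrees(math.atan2(ax, az))  # long term: tilt from the gravity vector
        angle = alpha * gyro_angle + (1 - alpha) * accel_angle
        estimates.append(angle)
    return estimates

# Hypothetical scenario: a drone held still at a 10-degree tilt for 5 s at 100 Hz,
# with a gyroscope that reports a constant bias of 0.5 deg/s.
dt = 0.01
true_tilt = math.radians(10.0)
gyro = [0.5] * 500
accel = [(math.sin(true_tilt), math.cos(true_tilt))] * 500
estimates = complementary_filter(gyro, accel, dt)
```

The gyroscope is trusted over short intervals and the accelerometer over long ones, so the constant gyro bias produces a small, bounded offset (about 0.25 degrees here) instead of the 2.5 degrees that pure integration would accumulate over these 5 seconds.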

Connectivity and Networks

The power of digital information is greatly amplified through connectivity. Digital networks, ranging from local Wi-Fi to global internet infrastructure and emerging 5G technologies, are designed to transmit vast quantities of digital data across immense distances with remarkable speed and reliability. This flow of information is critical for virtually every aspect of modern innovation.
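Reliability on a real link starts with detecting corrupted data. The sketch below frames a message with a CRC-32 checksum using Python's standard `zlib.crc32`, then shows that a single flipped bit is caught. The frame layout (payload followed by a 4-byte big-endian checksum) and the telemetry string are assumptions for illustration; actual protocols define their own framing and pair detection with retransmission or forward error correction.

```python
import struct
import zlib

def make_frame(payload: bytes) -> bytes:
    """Append a 4-byte big-endian CRC-32 of the payload."""
    return payload + struct.pack(">I", zlib.crc32(payload))

def check_frame(frame: bytes) -> bool:
    """Recompute the CRC-32 over the payload and compare with the received value."""
    payload, trailer = frame[:-4], frame[-4:]
    (received_crc,) = struct.unpack(">I", trailer)
    return zlib.crc32(payload) == received_crc

# Hypothetical telemetry message sent from a drone to a ground station.
frame = make_frame(b"ALT=120;LAT=47.61;LON=-122.33")

# Simulate line noise by flipping a single bit in the first byte.
corrupted = bytearray(frame)
corrupted[0] ^= 0b00000100
corrupted = bytes(corrupted)
```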

Consider remote sensing and mapping: digital data from aerial drones or satellites needs to be transmitted to ground stations or cloud servers for processing and analysis. The Internet of Things (IoT) relies on billions of interconnected digital devices, from smart sensors in a factory to environmental monitors in a smart city, all communicating digitally. High-speed 5G networks are particularly transformative, enabling low-latency communication crucial for real-time control of autonomous vehicles, remote surgery, and augmented reality applications. This digital web allows for distributed computing, real-time collaboration, and the effective deployment and management of vast, interconnected technological ecosystems.

Storage and Accessibility

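The ability to store immense quantities of digital information efficiently and access it almost instantly is another cornerstone of digital innovation. Analog recordings lose quality with every copy and degrade as their medium ages. Digital data also sits on physical media that can degrade, but because it is just a sequence of bits, it can be copied exactly and verified bit for bit, and techniques such as checksums and redundant storage allow corruption to be detected and repaired. Modern data centers and cloud computing infrastructures store exabytes (billions of gigabytes) of data, making it readily available for analysis and application.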
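Bit-for-bit verification is straightforward to sketch: record a cryptographic hash when the data is written, then recompute it whenever the data is read back or copied. The example uses Python's standard `hashlib.sha256`; the record contents and the helper names `fingerprint` and `verify` are hypothetical.

```python
import hashlib

def fingerprint(data: bytes) -> str:
    """Return the SHA-256 digest of the data as a hex string."""
    return hashlib.sha256(data).hexdigest()

def verify(data: bytes, expected_digest: str) -> bool:
    """True if the data still matches the digest recorded when it was stored."""
    return fingerprint(data) == expected_digest

# Hypothetical stored record and the digest recorded alongside it at write time.
original = b"survey-tile-0042: elevation grid v1"
stored_digest = fingerprint(original)

exact_copy = bytes(original)   # a perfect bit-for-bit copy
degraded = bytearray(original)
degraded[-1] ^= 0x01           # a single bit lost to media decay
degraded = bytes(degraded)
```

Detection alone does not fix anything; in practice a mismatch triggers a restore from a redundant copy, which is the role of replication and RAID-style storage.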

This digital archive is vital for training machine learning models, which require vast datasets to identify patterns and make predictions. Mapping applications rely on digital geographic data that can be updated and queried in real-time. Cloud storage allows for scalable and secure backup of critical operational data for autonomous fleets or remote sensing missions. Furthermore, the digital format facilitates sophisticated indexing and search capabilities, allowing users and AI systems to quickly retrieve specific pieces of information from enormous repositories, transforming raw data into accessible knowledge and driving continuous improvement in algorithms and system performance.
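Indexing is what makes fast retrieval from large repositories possible. A classic structure is the inverted index, which maps each word to the set of records containing it. The minimal sketch below uses whitespace tokenization and AND queries, and the mission-log records are hypothetical; production search engines add tokenization rules, ranking, and compressed on-disk storage.

```python
from collections import defaultdict

def build_index(records: dict[str, str]) -> dict[str, set[str]]:
    """Map each lowercase word to the set of record IDs that contain it."""
    index = defaultdict(set)
    for record_id, text in records.items():
        for word in text.lower().split():
            index[word].add(record_id)
    return index

def search(index: dict[str, set[str]], query: str) -> set[str]:
    """Return the IDs of records containing every word in the query (AND semantics)."""
    words = query.lower().split()
    if not words:
        return set()
    result = set(index.get(words[0], set()))
    for word in words[1:]:
        result &= index.get(word, set())
    return result

# Hypothetical mission logs from an inspection drone fleet.
logs = {
    "flight-001": "bridge inspection crack detected",
    "flight-002": "field survey crop stress",
    "flight-003": "bridge survey no anomalies",
}
index = build_index(logs)
```

A query such as `search(index, "bridge survey")` touches only two small sets instead of scanning every log, which is why the approach scales to large archives.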

The Impact of Digitally-Driven Technologies

The abstract concept of “digitally” translates into tangible and transformative technologies that are reshaping industries and daily life. These innovations, fundamentally built upon digital principles, demonstrate the profound impact of this paradigm shift.

Artificial Intelligence and Machine Learning

Perhaps no field exemplifies the power of “digitally” more than Artificial Intelligence (AI) and Machine Learning (ML). These technologies are inherently digital, relying on digital representations of data and digital algorithms to learn, adapt, and make decisions. AI systems process vast digital datasets—be it images, text, sensor readings, or historical operational data—to identify patterns, make predictions, and automate complex tasks.

For example, AI Follow Mode in a drone uses computer vision algorithms to digitally analyze video streams, identify a target (like a person or vehicle), and calculate its trajectory. This digital information then feeds into the drone’s digital flight control system to maintain tracking. Machine learning models, trained on millions of digital examples, are what enable autonomous systems to distinguish between different objects, understand spoken commands, or even generate new content. The ability to digitally represent complex relationships and rules allows AI to simulate intelligence, driving advancements from predictive maintenance in industry to sophisticated natural language processing in communication.
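The hand-off from vision to flight control can be sketched as a simple proportional controller: the farther the tracked target's bounding box sits from the image center, the faster the drone yaws (and the camera tilts) to recenter it. This is an illustrative assumption, not how any particular product implements follow mode. The `Box` type, the gains `k_yaw` and `k_tilt`, and the 30 deg/s rate limit are hypothetical, and the bounding box is assumed to come from a separate detection model.

```python
from dataclasses import dataclass

@dataclass
class Box:
    """Hypothetical detector output: a bounding box in pixel coordinates."""
    x: float  # left edge
    y: float  # top edge
    w: float  # width
    h: float  # height

    def center(self):
        return (self.x + self.w / 2, self.y + self.h / 2)

def follow_command(box, frame_w, frame_h, k_yaw=0.1, k_tilt=0.05, max_rate_dps=30.0):
    """Return (yaw_rate, gimbal_tilt_rate) in deg/s, proportional to the target's offset."""
    cx, cy = box.center()
    err_x = cx - frame_w / 2   # positive: target is right of center
    err_y = cy - frame_h / 2   # positive: target is below center

    def clamp(v):
        # Limit commands so a large offset cannot demand an unsafe turn rate.
        return max(-max_rate_dps, min(max_rate_dps, v))

    return clamp(k_yaw * err_x), clamp(k_tilt * err_y)

# A target 200 px right of center in a 1280 x 720 frame.
yaw_rate, tilt_rate = follow_command(Box(800, 320, 80, 80), 1280, 720)
```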

Autonomous Systems and Robotics

Autonomous systems, including self-driving cars, industrial robots, and advanced drones, are prime examples of digitally-driven innovation. These systems operate through an intricate interplay of digital sensors, digital control units, and digital decision-making algorithms. Digital sensors (like Lidar, radar, and cameras) continuously capture digital data about their surroundings, building a real-time digital model of the environment.

This digital data is then processed by onboard digital computers running algorithms for obstacle avoidance, path planning, and navigation. For instance, a drone performing an autonomous inspection mission digitally processes telemetry data, GPS coordinates, and visual feeds to execute a pre-programmed flight path, detect anomalies, and even return to base if conditions change. The “autonomy” comes from the system’s ability to digitally interpret its environment and make complex operational decisions without continuous human intervention, leveraging digital logic and learned behaviors to perform tasks safely and efficiently.
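Path planning is often framed as a shortest-path search over a map of free and occupied space. The sketch below runs A* with a Manhattan-distance heuristic on a small occupancy grid (1 = obstacle). The grid, the 4-connected movement model, and the uniform step cost are simplifying assumptions; real planners work in 3D with vehicle dynamics and safety margins.

```python
import heapq

def a_star(grid, start, goal):
    """Shortest 4-connected path on an occupancy grid (0 = free, 1 = obstacle), or None."""
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance never overestimates the remaining cost on a 4-connected grid.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    frontier = [(heuristic(start), 0, start)]  # (estimated total, cost so far, cell)
    came_from = {start: None}
    best_cost = {start: 0}
    while frontier:
        _, cost, cell = heapq.heappop(frontier)
        if cell == goal:
            path = []
            while cell is not None:            # walk back from goal to start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if cost > best_cost[cell]:
            continue                           # stale entry: a cheaper route was already found
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < best_cost.get(nxt, float("inf")):
                    best_cost[nxt] = new_cost
                    came_from[nxt] = cell
                    heapq.heappush(frontier, (new_cost + heuristic(nxt), new_cost, nxt))
    return None

# Hypothetical map: a wall across the middle row with a gap on the right.
grid = [
    [0, 0, 0, 0],
    [1, 1, 1, 0],
    [0, 0, 0, 0],
]
path = a_star(grid, (0, 0), (2, 0))
```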

Remote Sensing, Mapping, and Digital Twins

Digital technology has revolutionized how we understand and interact with the physical world through remote sensing, mapping, and the creation of “digital twins.” Remote sensing involves collecting digital data about an object or area from a distance, typically using sensors on satellites, aircraft, or drones. This digital data—spectral images, elevation models, thermal readings—is then processed to generate detailed maps, monitor environmental changes, or assess infrastructure.
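A standard example of turning multispectral remote-sensing data into an environmental measure is the Normalized Difference Vegetation Index, NDVI = (NIR - Red) / (NIR + Red), which is higher where healthy green vegetation reflects strongly in the near-infrared. The sketch computes it per pixel on two tiny, hypothetical reflectance bands using plain Python lists; mapping zero-sum pixels to 0.0 is an arbitrary choice for the example.

```python
def ndvi(nir, red):
    """Per-pixel NDVI = (NIR - Red) / (NIR + Red) for two equally sized 2-D bands."""
    result = []
    for nir_row, red_row in zip(nir, red):
        row = []
        for n, r in zip(nir_row, red_row):
            total = n + r
            # Assumption for this sketch: pixels with no signal in either band map to 0.0.
            row.append((n - r) / total if total != 0 else 0.0)
        result.append(row)
    return result

# Hypothetical surface reflectance (0 to 1) for a 2 x 2 patch of an aerial image.
nir_band = [[0.50, 0.45], [0.30, 0.05]]
red_band = [[0.10, 0.15], [0.20, 0.04]]
ndvi_map = ndvi(nir_band, red_band)
```

The top-left pixel scores about 0.67 and the bottom-right about 0.11, so the top-left would be read as the greener, more vigorous vegetation.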

Geographic Information Systems (GIS) are entirely digital platforms that store, manage, analyze, and display geographically referenced information, enabling everything from urban planning to precision agriculture. Building on this, “digital twins” are virtual, digital replicas of physical objects, processes, or systems. These twins are fed real-time digital data from their physical counterparts, allowing for continuous monitoring, simulation, and optimization. For instance, a digital twin of a smart city could simulate traffic flows, energy consumption, and environmental conditions based on live digital sensor data, providing insights for better management and predictive analysis. The creation and utility of these highly detailed, dynamic digital representations would be impossible without the underlying digital infrastructure.
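The core loop of a digital twin (ingest live data, update a virtual model, run what-if simulations) can be sketched at toy scale. The example below mirrors a hypothetical drone battery rather than a city; the `BatteryTwin` class, the linear discharge model, and the 20% reserve threshold are all illustrative assumptions.

```python
from dataclasses import dataclass, field

@dataclass
class BatteryTwin:
    """Hypothetical digital twin of a drone battery, kept in sync with live telemetry."""
    capacity_wh: float
    history: list = field(default_factory=list)  # (timestamp_s, charge_wh) readings

    def ingest(self, timestamp_s: float, charge_wh: float) -> None:
        """Update the virtual model with a reading from the physical battery."""
        self.history.append((timestamp_s, charge_wh))

    @property
    def charge_wh(self) -> float:
        return self.history[-1][1] if self.history else 0.0

    def drain_rate_w(self) -> float:
        """Average discharge power (watts) over the recorded window, assuming linear drain."""
        if len(self.history) < 2:
            return 0.0
        (t0, c0), (t1, c1) = self.history[0], self.history[-1]
        return (c0 - c1) / (t1 - t0) * 3600.0  # Wh per second -> W

    def minutes_to_reserve(self, extra_load_w: float = 0.0, reserve_fraction: float = 0.2) -> float:
        """What-if simulation: minutes until charge reaches the reserve threshold."""
        usable_wh = self.charge_wh - reserve_fraction * self.capacity_wh
        load_w = self.drain_rate_w() + extra_load_w
        if usable_wh <= 0:
            return 0.0
        if load_w <= 0:
            return float("inf")
        return usable_wh / load_w * 60.0

twin = BatteryTwin(capacity_wh=100.0)
twin.ingest(0, 90.0)     # hypothetical telemetry at takeoff
twin.ingest(360, 84.0)   # six minutes later
baseline = twin.minutes_to_reserve()
with_payload = twin.minutes_to_reserve(extra_load_w=20.0)  # what if a 20 W sensor is switched on?
```

Running the same twin with a hypothetical extra 20 W load shortens the estimate from 64 to 48 minutes, the kind of question a twin lets operators explore before changing the physical system.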

The Future is Inherently Digital

As technology continues its relentless march forward, the digital paradigm is not just a passing trend but the enduring framework for future innovation. “Digitally” will become even more ingrained in every facet of our existence, presenting both immense opportunities and critical challenges.

Ubiquitous Digital Presence

The future promises an ever more ubiquitous digital presence, with digital technologies seamlessly integrated into every aspect of our lives. We are moving towards smart cities where infrastructure communicates digitally to optimize services, smart homes that anticipate our needs, and personalized healthcare systems driven by digital data from wearables and diagnostics. The concept of the Internet of Everything (IoE), where billions of devices, objects, and people are digitally interconnected, is rapidly approaching. This pervasive digital fabric will enable unprecedented levels of automation, efficiency, and personalized experiences, transforming how we live, work, and interact with our environments. From immersive augmented and virtual reality experiences to self-organizing logistical networks, the digital will be the invisible, yet omnipresent, enabler.

Ethical Considerations in a Digital World

However, the increasing dominance of “digitally” also brings forth crucial ethical considerations. As more of our lives are mediated by digital systems, questions of data privacy, cybersecurity, and algorithmic bias become paramount. Who owns the vast quantities of digital data generated? How do we ensure that AI algorithms, trained on digital datasets, do not perpetuate or amplify existing societal biases? The security of digital infrastructure, from national grids to personal devices, is a constant challenge against sophisticated cyber threats. Addressing these ethical dilemmas is not merely a technical task but a societal imperative, requiring careful regulation, transparent design, and public discourse to ensure that digital advancements serve humanity equitably and securely.

Continuous Evolution

“Digitally” is not a static state but a continuous process of evolution. The underlying technologies, from quantum computing, which promises dramatic speedups for certain classes of problems, to new communication paradigms like Li-Fi and advanced forms of digital encryption, are constantly being refined and reinvented. This ongoing evolution will push the boundaries of what is possible, enabling even more sophisticated AI, more capable autonomous systems, and entirely new ways of interacting with digital information. The future will see continued innovation in how data is collected, processed, and applied, further blurring the lines between the physical and digital realms and opening up unforeseen opportunities for exploration, creation, and problem-solving on a global scale.

In conclusion, “what is digitally” is the foundational question underpinning the entire landscape of modern Tech & Innovation. It represents the transformation of continuous information into discrete, quantifiable data, enabling unprecedented precision, speed, and versatility. From the binary code that forms its language to the powerful processors that execute its commands, the digital paradigm has given rise to artificial intelligence, autonomous systems, advanced mapping, and countless other transformative technologies. As we look to the future, the digital realm will only expand, bringing with it incredible potential for advancement, alongside critical responsibilities to ensure its ethical and beneficial deployment for all.