In an increasingly interconnected and automated world, the invisible currents of information flow constantly, driving everything from the simplest smart devices to the most sophisticated autonomous systems. At the heart of this intricate web lies a fundamental concept: the digital signal. Far more than just a technical term, digital signals represent the very language of modern technology, a binary heartbeat that powers artificial intelligence, enables autonomous flight, facilitates precise mapping, and unlocks the potential of remote sensing. Understanding what a digital signal is, how it differs from its analog counterpart, and its profound implications for technological innovation is crucial for anyone looking to grasp the essence of our digital age.

The Fundamental Shift: From Analog to Digital

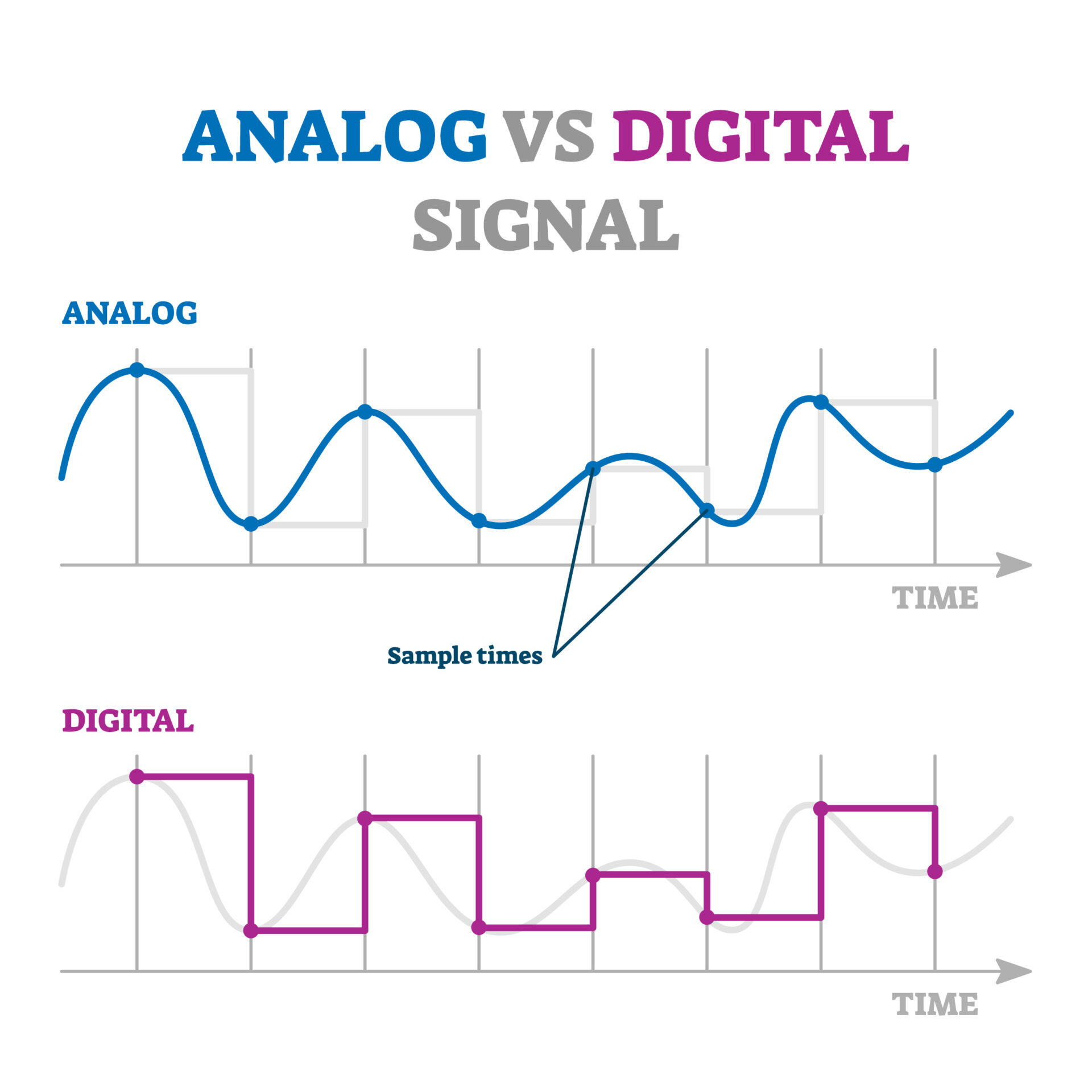

For much of human history, information was conveyed and stored in analog forms. Sounds, images, and measurements were continuous phenomena, reflected directly in the mediums used to capture or transmit them. However, as technology advanced and the need for greater precision, reliability, and versatility grew, a transformative shift occurred, paving the way for the digital revolution.

Understanding Analog Signals

An analog signal is a continuous wave that varies in amplitude or frequency to represent information. Think of a traditional vinyl record, where the grooves physically mimic the sound waves, or an old-fashioned radio broadcast, where the electromagnetic wave continuously changes to carry audio. An analog signal can take any value within its range, so it can represent even the slightest fluctuations in the original information. While intuitive and direct, this continuity also makes analog signals susceptible to noise, degradation, and distortion during transmission or copying. Any interference or small electrical fluctuation becomes part of the signal and cannot be cleanly separated from it afterward, making perfect replication or long-distance transmission challenging without loss of fidelity. For complex systems like those required for autonomous navigation or detailed remote sensing, these inherent vulnerabilities present significant hurdles.

The Digital Advantage: Precision and Robustness

The advent of digital signals introduced a paradigm shift. Unlike analog signals, digital signals are discrete; they represent information as a sequence of distinct, often binary, values. Instead of a continuous wave, a digital signal is a series of “on” or “off” states, typically represented by high or low voltages (1s and 0s). This fundamental difference confers immense advantages. Because digital signals have only a limited number of defined states, they are inherently more robust against noise. A slight fluctuation in voltage that would distort an analog signal is ignored by a digital receiver, which only checks whether the signal is above or below a certain threshold to decide if it’s a 1 or a 0. As long as noise stays too small to push the signal across that threshold, digital information can be transmitted, stored, and copied repeatedly without accumulating degradation. This precision and resilience are indispensable for the intricate operations of modern technology, from AI algorithms processing vast datasets to autonomous vehicles relying on trustworthy sensor readings for safe operation.
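The threshold idea can be illustrated with a minimal sketch. The voltage levels, the threshold, and the function names `transmit` and `receive` are assumptions chosen for illustration, not values from any particular hardware standard.

```python
import random

HIGH_V = 3.3        # assumed logic-high voltage
LOW_V = 0.0         # assumed logic-low voltage
THRESHOLD_V = 1.65  # assumed decision threshold, midway between the two


def transmit(bits, noise_amplitude, rng):
    """Map each bit to a voltage and add bounded uniform noise to it."""
    return [
        (HIGH_V if bit else LOW_V) + rng.uniform(-noise_amplitude, noise_amplitude)
        for bit in bits
    ]


def receive(voltages):
    """Recover bits by comparing each voltage against the threshold."""
    return [1 if v > THRESHOLD_V else 0 for v in voltages]


rng = random.Random(0)
sent = [1, 0, 1, 1, 0, 0, 1, 0]
noisy = transmit(sent, noise_amplitude=1.0, rng=rng)
recovered = receive(noisy)
print(recovered == sent)  # True: 1.0 V of noise never crosses the 1.65 V margin
```

The noise here is bounded below the 1.65 V margin, so every bit is recovered. If the noise amplitude exceeded that margin, some bits could flip, which is why real links add error detection and correction on top of thresholding.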

The Binary Language of Modern Technology

At its core, the digital signal communicates using a language that is remarkably simple yet incredibly powerful: binary code. This two-symbol system forms the bedrock of all digital communication and computation, enabling the complex functionalities we see in contemporary innovations.

Bits, Bytes, and Data Representation

The smallest unit of information in the digital world is a “bit,” a contraction of “binary digit.” A bit can exist in one of two states: 0 or 1. These states are physically represented in various ways: as low or high voltage, the presence or absence of a magnetic field, or light on/off. While a single bit conveys minimal information, combining multiple bits allows for the representation of much more complex data. For instance, eight bits grouped together form a “byte,” which can represent 256 different values (2^8), enough to encode a single ASCII character or an integer from 0 to 255. Larger units like kilobytes, megabytes, gigabytes, and terabytes are simply multiples of bytes, indicating the vast amounts of information that can be stored and processed. This standardized, quantized approach to data representation is what allows diverse technologies to exchange and process information consistently and efficiently.
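As a small worked example of the arithmetic above, the following sketch counts the values one byte can hold and shows the eight-bit pattern for one character. The helper name `to_bits` is hypothetical, and ASCII is assumed as the character encoding.

```python
BITS_PER_BYTE = 8
values_per_byte = 2 ** BITS_PER_BYTE  # 2^8 distinct bit patterns


def to_bits(value, width=BITS_PER_BYTE):
    """Return an unsigned integer as a zero-padded binary string."""
    if not 0 <= value < 2 ** width:
        raise ValueError(f"{value} does not fit in {width} bits")
    return format(value, f"0{width}b")


letter = "A"
code = ord(letter)    # 65 in ASCII
bits = to_bits(code)  # the same number written as eight bits

print(values_per_byte)  # 256
print(code, bits)       # 65 01000001
```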

Encoding and Decoding: Making Sense of the Signals

The raw sequence of 1s and 0s carried by a digital signal isn’t inherently meaningful to humans or even directly usable by all components. This is where encoding and decoding processes come into play. Encoding is the process of converting analog information (like a voice, an image, or a physical measurement from a sensor) into a digital format. This involves sampling the analog signal at regular intervals and then quantizing each sample, that is, assigning it a specific numerical value based on its amplitude. These numerical values are then converted into binary sequences. For example, a sensor measuring temperature might output an analog voltage. An Analog-to-Digital Converter (ADC) samples this voltage and assigns it a discrete integer code, which software can scale to a reading such as 25.3°C. In an 8.8 fixed-point representation, for instance, that reading is stored as approximately 00011001.01001101 (25 + 77/256 ≈ 25.30).
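The sampling and quantization steps can be sketched as follows. This is an idealized model, not a description of any specific ADC: the reference voltage, the 8-bit resolution, the 100 Hz sample rate, and the `sensor` waveform are all assumptions made for illustration.

```python
import math


def sample(signal, sample_rate_hz, duration_s):
    """Evaluate a continuous-time function at evenly spaced instants."""
    count = round(sample_rate_hz * duration_s)
    return [signal(i / sample_rate_hz) for i in range(count)]


def quantize(voltage, v_ref, n_bits):
    """Map a voltage in [0, v_ref] to an integer code in [0, 2**n_bits - 1]."""
    levels = 2 ** n_bits
    clamped = min(max(voltage, 0.0), v_ref)
    code = int(clamped / v_ref * levels)
    return min(code, levels - 1)  # full scale maps to the top code


def sensor(t):
    """Hypothetical sensor output: a 5 Hz sine riding on a 1.65 V offset."""
    return 1.65 + 1.0 * math.sin(2 * math.pi * 5 * t)


samples = sample(sensor, sample_rate_hz=100, duration_s=0.05)
codes = [quantize(v, v_ref=3.3, n_bits=8) for v in samples]
stream = "".join(format(c, "08b") for c in codes)

print(codes)
print(stream)
```

Each sample becomes one 8-bit code, so five samples produce a 40-bit stream. Raising `n_bits` shrinks the quantization step, and raising the sample rate captures faster changes in the input.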

Conversely, decoding is the process of converting these digital streams back into a usable format, either for human interpretation (e.g., displaying an image on a screen) or for controlling another system (e.g., sending commands to a motor). A Digital-to-Analog Converter (DAC) performs the reverse operation of an ADC, reconstructing an analog signal from the digital data. Sophisticated algorithms and protocols are also part of this encoding/decoding dance, ensuring data integrity, managing transmission speeds, and allowing for error correction, all critical for the reliability of systems like autonomous navigation and remote sensing platforms.

Digital Signals in Action: Powering Innovation

The robustness and precision of digital signals are not merely theoretical advantages; they are the bedrock upon which nearly all modern technological innovations are built. From the way our devices communicate to how complex data is processed and acted upon, digital signals are the silent orchestrators.

Communication Systems: The Backbone of Connected Devices

Digital signals are paramount in modern communication. Whether it’s your smartphone connecting to a cellular tower, a satellite transmitting data back to Earth, or a drone communicating with its ground control station, digital modulation techniques ensure reliable and high-bandwidth information exchange. For technologies like AI Follow Mode in drones, continuous and error-free digital data streams are essential for transmitting real-time video, telemetry (altitude, speed, GPS coordinates), and control commands. The digital nature of these signals allows for sophisticated error correction codes, encryption for security, and multiplexing, where multiple data streams can be sent over a single channel, maximizing efficiency. This reliable digital communication link is what enables remote sensing platforms to transmit vast amounts of image and spectral data from distant locations back for analysis.
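To make the idea of an error correction code concrete, here is a minimal sketch of a 3x repetition code with majority voting. It is one of the simplest such codes and is used here only as an illustration; the text does not say which codes drone or satellite links use, and practical systems generally rely on far more efficient schemes. The function names are hypothetical.

```python
def encode_repetition(bits, n=3):
    """Repeat every bit n times (n should be odd so a vote cannot tie)."""
    return [bit for bit in bits for _ in range(n)]


def decode_repetition(coded, n=3):
    """Majority-vote each group of n received bits back into one bit."""
    groups = (coded[i:i + n] for i in range(0, len(coded), n))
    return [1 if sum(group) > n // 2 else 0 for group in groups]


message = [1, 0, 1, 1]
coded = encode_repetition(message)  # 12 bits on the wire

corrupted = coded.copy()
corrupted[1] ^= 1  # flip one bit in the first group
corrupted[9] ^= 1  # flip one bit in the fourth group

print(decode_repetition(corrupted) == message)  # True
```

The code tolerates one flipped bit per group of three, at the cost of tripling the transmitted data. That trade-off between redundancy and throughput is the design question every error correction scheme has to answer.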

Data Processing and Storage: The Brains Behind the Operations

Beyond transmission, digital signals are fundamental to how information is processed and stored. Every sensor on an autonomous vehicle, every camera capturing images for mapping, and every piece of data informing an AI algorithm converts its raw analog input into a digital signal. This digital data can then be manipulated, analyzed, and stored by computers with incredible speed and accuracy. AI systems, in particular, thrive on digital data. Machine learning models analyze patterns in vast digital datasets (e.g., recognizing objects in digital images for obstacle avoidance, or identifying features in digital elevation models for mapping). The ability to store this data digitally without loss over long periods, and to retrieve and process it rapidly, is what allows AI to learn, adapt, and make informed decisions, transforming raw sensor input into actionable intelligence.

Advanced Control and Automation: Precision and Reliability

Autonomous flight, robotic control, and other automated systems rely on digital signals for precision and reliability. Control systems receive digital commands from processors (often driven by AI algorithms), interpret them, and then translate them into precise actions. For instance, in an autonomous drone, digital signals convey flight path commands to the flight controller, which then sends digital pulses to the motor electronic speed controllers (ESCs) to adjust propeller RPMs accurately. This digital control loop ensures stability, allows for complex maneuvers, and enables features like waypoint navigation and precise payload deployment. The ability to define exact states and control parameters digitally minimizes ambiguity and human error, making these systems efficient and dependable, especially in critical applications like infrastructure inspection or search and rescue.
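One way a flight controller might express a throttle command as a pulse is sketched below. It assumes the conventional hobby-grade PWM range of 1000 to 2000 microseconds; the text does not specify a protocol, and many ESCs use other signalling schemes, so the constants and the function name are illustrative assumptions.

```python
MIN_PULSE_US = 1000  # assumed pulse width for zero throttle
MAX_PULSE_US = 2000  # assumed pulse width for full throttle


def throttle_to_pulse_us(throttle):
    """Convert a throttle fraction (0.0 to 1.0) to a pulse width in microseconds."""
    clamped = min(max(throttle, 0.0), 1.0)  # reject out-of-range commands
    return round(MIN_PULSE_US + clamped * (MAX_PULSE_US - MIN_PULSE_US))


for throttle in (0.0, 0.25, 0.5, 1.0):
    print(throttle, throttle_to_pulse_us(throttle))
# 0.0 1000
# 0.25 1250
# 0.5 1500
# 1.0 2000
```

Clamping the input means a faulty upstream value cannot request a pulse outside the range the ESC expects, which is a small example of how defining exact states digitally reduces ambiguity.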

Advantages and Challenges of Digital Signal Implementation

While the shift to digital signals has ushered in an era of unprecedented technological advancement, it’s also important to understand the inherent benefits and the ongoing challenges engineers face in optimizing their implementation.

Key Benefits: Noise Immunity, Efficiency, and Versatility

The primary advantage of digital signals, as discussed, is their superior noise immunity. By representing data as discrete values, they are far less susceptible to degradation from electrical interference, signal attenuation, or environmental factors. This robustness helps preserve data integrity over long distances and through noisy channels. Secondly, digital signals make data compression practical. Algorithms can significantly reduce the amount of data needed to represent information, either losslessly (e.g., PNG or ZIP) or, with lossy formats such as JPEG for images and MP3 for audio, by discarding detail that is hard to perceive. This is crucial for maximizing bandwidth and storage capacity in remote sensing and high-resolution imaging applications. Finally, their versatility is unmatched. Digital data can be easily encrypted for security, multiplexed with other data streams, processed by general-purpose computers, and copied or stored without the generational loss that affects analog media. This adaptability allows for the creation of incredibly complex and integrated systems.
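The effect of compression is easy to demonstrate with Python's standard `zlib` module. Note that this is lossless compression, used here as a simple stand-in; JPEG and MP3 are lossy formats and work differently. The repetitive telemetry string is made-up sample data.

```python
import zlib

# Made-up, highly repetitive telemetry text (illustrative only).
readings = b"temp=25.3;alt=120.0;" * 200

compressed = zlib.compress(readings, 9)  # 9 = strongest compression level
restored = zlib.decompress(compressed)
ratio = len(readings) / len(compressed)

print(len(readings), len(compressed))
print(restored == readings)  # True: lossless, every byte comes back
print(f"compression ratio: {ratio:.1f}x")
```

Repetitive data like this compresses very well. Real sensor data is usually less regular, so the achievable ratio depends heavily on the content.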

Overcoming Hurdles: Bandwidth, Latency, and Security Considerations

Despite their advantages, digital signals do present their own set of challenges. One significant consideration is bandwidth. While digital signals are efficient, transmitting large volumes of digital data (e.g., 4K video streams from a drone or high-resolution lidar scans for mapping) still requires substantial bandwidth, which can be limited by regulations, hardware capabilities, and environmental factors. Closely related is latency, the delay between a signal being sent and received. In time-critical applications like autonomous navigation or real-time FPV (First Person View) drone operation, even milliseconds of delay can have significant consequences. Engineers continually strive to minimize latency through faster processors, optimized protocols, and low-latency transmission technologies. Lastly, security considerations are paramount. Because digital data is inherently easy to copy and manipulate, robust encryption, authentication protocols, and cybersecurity measures are essential to protect sensitive information transmitted by remote sensing platforms or control signals for autonomous systems from unauthorized access or malicious interference.
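A quick back-of-the-envelope calculation shows why bandwidth and latency matter. The frame size, bit depth, frame rate, vehicle speed, and delay below are illustrative assumptions, not measurements from any particular drone or link.

```python
def raw_video_bitrate_bps(width, height, bits_per_pixel, fps):
    """Bits per second needed to send video with no compression at all."""
    return width * height * bits_per_pixel * fps


def distance_during_delay_m(speed_m_s, latency_ms):
    """How far a vehicle travels before a delayed command or frame arrives."""
    return speed_m_s * latency_ms / 1000


# Assumed 4K UHD frame: 3840x2160 pixels, 24 bits per pixel, 30 frames per second.
uhd_bps = raw_video_bitrate_bps(3840, 2160, 24, 30)
print(f"{uhd_bps / 1e9:.2f} Gbit/s")  # 5.97 Gbit/s

# Assumed drone speed of 20 m/s with 50 ms of end-to-end latency.
print(distance_during_delay_m(20, 50), "m")  # 1.0 m
```

Under these assumptions, uncompressed 4K video needs roughly 6 Gbit/s, which is why practical links compress the stream. A 50 ms delay at 20 m/s means the vehicle has moved a full meter before the pilot or controller sees what happened.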

The Future of Digital Signals in Emerging Tech

The journey of digital signals is far from over. As technology continues to evolve at a rapid pace, so too does the sophistication with which we generate, transmit, and interpret these fundamental pulses of information. The future holds exciting prospects that push the boundaries of what’s possible in technology and innovation.

Pushing Boundaries with Higher Frequencies and Greater Bandwidth

The demand for more data, faster, is relentless. This drives innovation in technologies that can handle higher frequencies and greater bandwidths. 5G and future 6G networks, along with advances in optical communication and millimeter-wave technologies, are aimed directly at this challenge. These innovations will enable autonomous systems to process and transmit even larger volumes of sensor data in real time, facilitating more complex AI models, higher-resolution mapping, and more robust obstacle avoidance capabilities. Imagine drones streaming uncompressed 8K video while simultaneously transmitting precise lidar data and executing complex AI-driven decisions with very low latency: this future hinges on ever-improving digital signal capabilities.

Integration with AI and Machine Learning: Smarter Signal Processing

The synergy between digital signals and artificial intelligence is a powerful force. AI and machine learning algorithms are increasingly being used to not only interpret digital signals but also to optimize their generation and transmission. This includes dynamic bandwidth allocation, intelligent error correction tailored to environmental conditions, and predictive analytics to anticipate and mitigate signal interference. Furthermore, AI is crucial for extracting meaningful insights from the vast amounts of digital data collected by remote sensing platforms, identifying subtle patterns that would be invisible to human analysis. This “smarter” signal processing will make future autonomous systems even more reliable, efficient, and adaptable.

Quantum Computing and Beyond: The Next Frontier

Looking further ahead, quantum computing promises a significant leap in how information is processed and, by extension, how digital signals are understood and utilized. While the field is still in its early stages, quantum entanglement and superposition could fundamentally alter data representation and processing, potentially enabling computations that are impractical today. This could open doors to new forms of secure communication and to AI systems with far greater processing power, with possible applications ranging from highly secure autonomous networks to quantum-enhanced remote sensing.

Conclusion: The Indispensable Foundation of the Digital Age

From the simplest “on” or “off” state to the intricate dance of billions of bits per second, the digital signal stands as the indispensable foundation of our modern technological landscape. It is the silent, robust language that enables AI to learn, autonomous systems to navigate, mapping technologies to precisely delineate our world, and remote sensing to reveal its hidden truths. By providing unparalleled precision, resilience against noise, and immense versatility, digital signals have not only transformed how we communicate and process information but continue to drive the relentless march of innovation. As we push the boundaries of what technology can achieve, understanding and optimizing the power of the digital signal will remain paramount, ensuring a future defined by ever-smarter, more connected, and more capable systems.