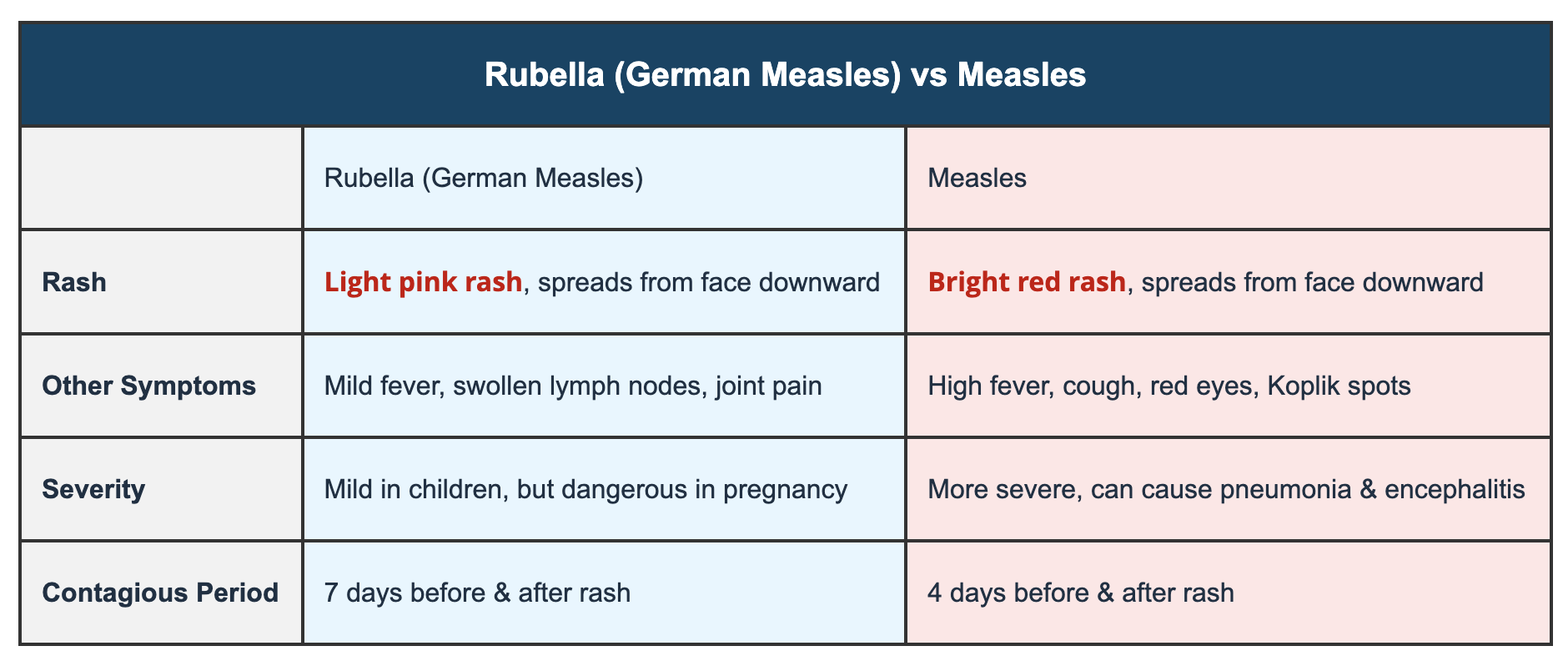

In the rapidly evolving landscape of unmanned aerial vehicles (UAVs) and advanced robotics, it pays to tell apart capabilities that look alike on the surface. Measles and German Measles share superficial similarities yet have fundamentally different causes and prognoses, and the same is true of some highly specialized drone capabilities. This article does not delve into virology or public health; it borrows that comparative framing to examine two advances in drone autonomy that are related but serve different purposes and rely on distinct underlying technologies: AI Follow Mode and Autonomous Flight Path Generation. Both make drones smarter and more independent, yet their operational characteristics, technological underpinnings, and ideal applications diverge considerably. Grasping these distinctions matters for anyone involved in drone operations, from professional cinematographers to industrial inspectors and tech innovators, because it determines whether the right tool is deployed for the right task.

Navigating Autonomy: Dissecting AI Follow Mode and Autonomous Flight Path Generation

At a glance, both AI Follow Mode and Autonomous Flight Path Generation seem to grant a drone the ability to operate without constant manual joystick input. This shared characteristic of “autonomy” can lead to a misunderstanding of their true capabilities and limitations. However, a deeper dive reveals that they address fundamentally different challenges and execute vastly different types of missions.

The Core of AI Follow Mode: Intelligent Tracking

AI Follow Mode is an intelligent feature designed primarily to keep a designated subject within the drone’s frame or at a specific relative position. It’s about tracking a dynamic target. This mode is a cornerstone for creators in aerial filmmaking, extreme sports videography, and even personal surveillance or outdoor activities where a drone acts as a mobile cameraman.

The technology behind AI Follow Mode hinges on sophisticated computer vision algorithms. The drone identifies a user-selected subject (person, vehicle, animal) and locks onto it. It then autonomously adjusts its position, altitude, and orientation to maintain the desired framing relative to the subject, even as the subject moves. This involves:

- Object Recognition: Identifying and differentiating the target from its background using neural networks and machine learning models.

- Predictive Tracking: Anticipating the subject’s movement based on its current velocity, direction, and past trajectory to ensure smooth and continuous following, even if the subject briefly goes out of sight.

- Real-time Gimbal Control: Adjusting the camera’s pan, tilt, and roll to keep the subject centered or within a pre-defined shot composition.
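As a rough illustration of the predictive tracking and gimbal control steps listed above, the following sketch uses an alpha-beta filter (a simple constant-velocity tracker) and a proportional gimbal command. It is not how any particular drone implements follow mode. The names `Track`, `update_track`, and `gimbal_rates`, the gains, and the sign conventions are assumptions chosen for illustration, and the object-recognition step is assumed to supply the subject's pixel position, or `None` while the subject is hidden.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class Track:
    """Subject position (pixels) and velocity (pixels/second) in the image."""
    x: float
    y: float
    vx: float = 0.0
    vy: float = 0.0


def update_track(track: Track, measurement: Optional[Tuple[float, float]],
                 dt: float, alpha: float = 0.5, beta: float = 0.2) -> Track:
    """Advance the track by dt seconds (dt > 0) using an alpha-beta filter.

    If measurement is None (subject occluded), the track coasts on its
    predicted position so following can continue for a short while.
    """
    # Predict where the subject should be under constant velocity.
    x_pred = track.x + track.vx * dt
    y_pred = track.y + track.vy * dt
    if measurement is None:
        return Track(x_pred, y_pred, track.vx, track.vy)
    # Correct the prediction with the detector's measurement.
    rx = measurement[0] - x_pred
    ry = measurement[1] - y_pred
    return Track(
        x_pred + alpha * rx,
        y_pred + alpha * ry,
        track.vx + (beta / dt) * rx,
        track.vy + (beta / dt) * ry,
    )


def gimbal_rates(track: Track, frame_w: float, frame_h: float,
                 gain: float = 1.0) -> Tuple[float, float]:
    """Proportional (yaw, pitch) rate commands that re-center the subject.

    Assumed convention: image y grows downward, positive yaw turns right,
    positive pitch tilts up.
    """
    err_x = (track.x - frame_w / 2) / (frame_w / 2)  # -1 (left) .. 1 (right)
    err_y = (track.y - frame_h / 2) / (frame_h / 2)  # -1 (top) .. 1 (bottom)
    return gain * err_x, -gain * err_y
```

In a real system the coasting branch would normally be limited to a short timeout, after which the drone would stop following rather than chase a stale prediction.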

The drone’s autonomy in this mode is narrowly focused: it’s intelligent about the subject and its immediate surroundings, primarily concerned with maintaining visual contact and framing. While it may incorporate basic obstacle avoidance to prevent collisions with large, stationary objects, its primary directive is to follow.
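The "follow" directive itself can be pictured as a small control loop that holds a standoff distance from the subject. The sketch below is a minimal proportional controller under stated assumptions: the subject's position relative to the drone is already known in meters, and the function name `follow_velocity`, the gain, and the speed limit are hypothetical values, not taken from any product.

```python
import math


def follow_velocity(rel_x, rel_y, desired_range, gain=0.5, max_speed=10.0):
    """Horizontal velocity command (m/s) that holds a standoff distance.

    rel_x, rel_y: subject position relative to the drone, in meters.
    Returns (vx, vy) expressed in the same axes as rel_x and rel_y.
    """
    rng = math.hypot(rel_x, rel_y)
    if rng == 0.0:
        return 0.0, 0.0  # direction undefined; command no motion
    # Positive error means the subject is too far away, so close the gap;
    # negative error means the drone is too close, so back away.
    speed = gain * (rng - desired_range)
    # Clamp so a distant subject never commands an excessive speed.
    speed = max(-max_speed, min(max_speed, speed))
    return speed * rel_x / rng, speed * rel_y / rng
```

For example, with the subject 10 m ahead and a desired range of 5 m, the command is 2.5 m/s toward the subject; at exactly 5 m the command is zero.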

Autonomous Flight Path Generation: Beyond Simple Following

In stark contrast, Autonomous Flight Path Generation refers to a drone’s ability to plan, execute, and adapt a complex flight route independently, based on a pre-defined mission or dynamic environmental conditions. It’s about executing a mission across a defined area or sequence of waypoints, rather than tracking a single entity. This capability is foundational for applications like:

- Precision Mapping and Surveying: Flying systematic grids over vast areas to collect imagery for orthomosaics, 3D models, or digital elevation models.

- Infrastructure Inspection: Following pre-programmed routes around bridges, power lines, or wind turbines to capture detailed visual data for anomaly detection.

- Search and Rescue Operations: Systematically scanning large search areas to locate missing persons or assess disaster zones.

- Package Delivery and Logistics: Navigating complex routes between pick-up and drop-off points while avoiding dynamic obstacles.

The intelligence here is global and environmental. The drone isn’t just following a subject; it’s understanding its position within a larger geographical context, navigating according to complex parameters, and making decisions to achieve a broader objective. Key technologies involved include:

- Global Navigation Satellite Systems (GNSS, of which GPS is one): For precise positioning and navigation across large areas.

- Simultaneous Localization and Mapping (SLAM): For building and updating maps of unknown environments while simultaneously tracking its own location within those maps, especially in GPS-denied environments.

- Sensor Fusion: Integrating data from multiple sensors (IMUs, lidar, radar, ultrasonic, vision cameras) to build a comprehensive understanding of its environment.

- Advanced Path Planning Algorithms: Generating optimal, collision-free routes that consider efficiency, sensor coverage, and mission objectives.

- Dynamic Obstacle Avoidance: Detecting and re-routing around unforeseen obstacles in real-time.

Autonomous Flight Path Generation signifies a higher level of environmental awareness and mission complexity, moving beyond reactive tracking to proactive, goal-oriented navigation.
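To make the mapping case concrete, here is a minimal sketch of how a systematic grid (often called a lawnmower or boustrophedon pattern) might be generated for a rectangular survey area. It assumes a flat local coordinate frame in meters and ignores terrain, wind, and turn radius; `line_spacing` and `survey_grid` are illustrative names, not functions from any mission-planning package.

```python
import math


def line_spacing(footprint_width_m, sidelap):
    """Distance between flight lines for a given image footprint width
    and side overlap fraction (0 <= sidelap < 1)."""
    if not 0.0 <= sidelap < 1.0:
        raise ValueError("sidelap must be in [0, 1)")
    return footprint_width_m * (1.0 - sidelap)


def survey_grid(width_m, height_m, spacing_m):
    """Return (x, y) waypoints that sweep a width_m x height_m rectangle
    in alternating directions, one flight line every spacing_m meters."""
    if spacing_m <= 0:
        raise ValueError("spacing_m must be positive")
    # Round up so the last line sits at or beyond the far edge of the area.
    n_lines = math.ceil(width_m / spacing_m) + 1
    waypoints = []
    for i in range(n_lines):
        x = i * spacing_m
        if i % 2 == 0:
            waypoints += [(x, 0.0), (x, height_m)]   # fly "up" the area
        else:
            waypoints += [(x, height_m), (x, 0.0)]   # fly back "down"
    return waypoints
```

Calling `survey_grid(20.0, 100.0, 10.0)` yields three flight lines at x = 0, 10, and 20 m, flown in alternating directions so the drone never has to return to the starting edge between lines.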

Under the Hood: Technological Distinctions and Capabilities

The fundamental differences in purpose between AI Follow Mode and Autonomous Flight Path Generation necessitate distinct technological architectures and computational demands. Understanding these core technological disparities reveals why one cannot simply perform the functions of the other.

Sensor Systems and Data Processing

The sensor suites and the way data is processed are primary differentiators:

- AI Follow Mode: Primarily relies on visual sensors (RGB cameras). High-resolution video streams are fed into on-board processors that run real-time object detection and tracking algorithms. The focus is on extracting specific features of the target and calculating its relative motion. While some drones might use additional short-range ultrasonic or infrared sensors for basic proximity detection, the core intelligence for following is vision-centric and focused on a single, dynamic object. Data processing prioritizes speed and accuracy in target identification and relative position calculation.

- Autonomous Flight Path Generation: Demands a far more comprehensive and diverse array of sensors for building a robust environmental model. This typically includes:

- High-precision GNSS/GPS: For global positioning accuracy.

- Inertial Measurement Units (IMUs): Accelerometers and gyroscopes for attitude and velocity estimation.

- Lidar (Light Detection and Ranging): For creating dense 3D point clouds of the environment, essential for detailed mapping and obstacle detection.

- Radar: Particularly useful in low-visibility conditions (fog, smoke) where optical sensors struggle.

- Stereo or Monocular Vision Cameras: For visual odometry, SLAM, and identifying features in the environment.

- Ultrasonic Sensors: For short-range obstacle detection.

Data processing involves sensor fusion, where inputs from all these disparate sources are integrated and interpreted to create a coherent, dynamic 3D map of the environment. This data is then used for localization, mapping, path planning, and obstacle avoidance, requiring significant computational power to process large datasets in real-time.
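Real fusion stacks are multi-dimensional and considerably more involved, but the core idea can be shown with a one-dimensional Kalman filter. In this sketch, which is an assumption-laden simplification, a velocity estimate (for instance from the IMU or visual odometry) drives the prediction and a GNSS position fix corrects it. The class name `ScalarKalman` and the noise values in the usage note are hypothetical.

```python
class ScalarKalman:
    """One-dimensional Kalman filter for position along a single axis."""

    def __init__(self, x0, p0):
        self.x = x0  # position estimate (m)
        self.p = p0  # variance of the estimate (m^2)

    def predict(self, velocity, dt, process_var):
        """Propagate the estimate using a velocity measurement.

        Uncertainty grows by process_var because dead reckoning drifts.
        """
        self.x += velocity * dt
        self.p += process_var

    def update(self, z, meas_var):
        """Blend in a position fix z whose noise variance is meas_var.

        Returns the Kalman gain: near 1 trusts the fix, near 0 trusts
        the prediction.
        """
        k = self.p / (self.p + meas_var)
        self.x += k * (z - self.x)
        self.p *= (1.0 - k)
        return k
```

Starting from `ScalarKalman(0.0, 1.0)`, a fix of 10 m with variance 1 gives a gain of 0.5 and moves the estimate to 5 m: the filter weights each source by how much it is trusted, which is the essence of fusing disparate sensors.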

Computational Demands and Algorithmic Complexity

The scale and nature of computational tasks also diverge significantly:

- AI Follow Mode: Involves relatively focused computational tasks. The drone continuously processes video frames to identify, track, and predict the movement of a specific subject. While demanding in terms of real-time processing, the scope of its ‘understanding’ is limited to the subject and its immediate interactions within the camera’s field of view. Algorithms are optimized for rapid, reactive adjustments to maintain framing.

- Autonomous Flight Path Generation: Presents a much higher level of algorithmic complexity and computational demand. The drone must manage:

- Global Path Planning: Generating an optimal route from a start point to a destination, potentially through complex terrain or airspace, considering waypoints, no-fly zones, and mission objectives.

- Local Obstacle Avoidance: Dynamically re-planning segments of the path in real-time to circumvent unexpected obstacles or dynamic changes in the environment.

- Localization and Mapping: Continuously updating its position within a 3D map of the environment, often simultaneously building or refining that map.

- Decision-Making Engines: Evaluating multiple factors (battery life, wind conditions, mission priority) to make intelligent choices during flight.

This requires powerful on-board processors, often specialized GPUs, capable of running complex AI models for perception, planning, and control across a vast environmental dataset.
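The interplay between global path planning and local re-planning can be sketched with A* search on a small occupancy grid. This is a toy two-dimensional example under simplifying assumptions (4-connected cells, unit move cost, a perfectly known map); production planners work in 3D with vehicle dynamics, and the function name `astar` is simply chosen here for illustration.

```python
import heapq


def astar(grid, start, goal):
    """Shortest 4-connected path on a grid of 0 (free) and 1 (blocked).

    start and goal are (row, col) tuples. Returns the list of cells from
    start to goal, or None if the goal cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance never overestimates on a 4-connected grid.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]
    best_cost = {start: 0}
    came_from = {}
    while open_heap:
        _, cost, cur = heapq.heappop(open_heap)
        if cost > best_cost[cur]:
            continue  # stale queue entry; a cheaper route was found already
        if cur == goal:
            path = [cur]
            while cur in came_from:
                cur = came_from[cur]
                path.append(cur)
            return path[::-1]
        r, c = cur
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < best_cost.get(nxt, float("inf")):
                    best_cost[nxt] = new_cost
                    came_from[nxt] = cur
                    heapq.heappush(
                        open_heap, (new_cost + heuristic(nxt), new_cost, nxt))
    return None
```

Local obstacle avoidance can then be approximated by marking a newly detected obstacle in the grid (for example `grid[0][1] = 1`) and calling `astar` again from the drone's current cell, which produces a detour around the blocked cell.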

Practical Applications and Operational Impact

The differences in underlying technology naturally lead to distinct practical applications and operational considerations for each autonomous mode. Choosing the right mode profoundly impacts mission success, safety, and user experience.

User Interaction and Control Paradigms

How operators interact with these modes reflects their inherent complexity:

- AI Follow Mode: Generally offers a more intuitive, ‘point-and-shoot’ interface. The user selects a subject on a screen, perhaps defines a desired distance or angle, and the drone handles the rest. Pilot intervention is minimal once the mode is active, primarily for monitoring or canceling the operation. The focus is on simplifying the camera operation for dynamic subjects.

- Autonomous Flight Path Generation: Requires more extensive pre-flight planning and configuration. Operators often use specialized ground control software to:

- Define mission parameters (waypoints, altitude, speed, camera angles, sensor triggers).

- Upload detailed maps or 3D models of the operating area.

- Establish safety parameters and contingency plans.

While the drone executes the flight path autonomously, the operator is typically responsible for rigorous pre-mission setup, continuous monitoring of telemetry, and readiness to intervene if unforeseen issues arise. The focus is on precise execution of a complex mission profile over a geographical area.
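The pre-mission setup described above amounts to building a structured mission definition and checking it before upload. The sketch below shows one possible shape for such a definition. Every field name, the 120 m default ceiling, and the list of contingency actions are hypothetical placeholders, not the format of any specific ground control software, and the real limits depend on local rules.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical set of contingency actions for this sketch.
ALLOWED_LINK_LOSS_ACTIONS = ("return_home", "hover", "land")


@dataclass
class Waypoint:
    lat_deg: float
    lon_deg: float
    alt_m: float                  # altitude above the takeoff point
    speed_mps: float = 5.0
    trigger_sensor: bool = False  # e.g. capture an image at this point


@dataclass
class Mission:
    waypoints: List[Waypoint]
    max_alt_m: float = 120.0           # placeholder ceiling; set per local rules
    on_link_loss: str = "return_home"  # contingency plan

    def validate(self) -> List[str]:
        """Return a list of human-readable problems; empty means OK."""
        errors = []
        if not self.waypoints:
            errors.append("mission has no waypoints")
        for i, wp in enumerate(self.waypoints):
            if not -90.0 <= wp.lat_deg <= 90.0:
                errors.append(f"waypoint {i}: latitude out of range")
            if not -180.0 <= wp.lon_deg <= 180.0:
                errors.append(f"waypoint {i}: longitude out of range")
            if not 0.0 < wp.alt_m <= self.max_alt_m:
                errors.append(f"waypoint {i}: altitude outside allowed band")
            if wp.speed_mps <= 0.0:
                errors.append(f"waypoint {i}: speed must be positive")
        if self.on_link_loss not in ALLOWED_LINK_LOSS_ACTIONS:
            errors.append("unknown link-loss action")
        return errors
```

Running `validate()` before every flight catches configuration mistakes on the ground, where they are cheap to fix, rather than in the air.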

Safety, Reliability, and Regulatory Considerations

The safety profiles and regulatory implications also differ:

- AI Follow Mode: Generally considered lower risk when operated within visual line of sight (VLOS) in open environments. The drone’s immediate vicinity and the subject’s movement are the primary safety concerns. Regulatory requirements are often aligned with general VLOS drone operations. However, reliable tracking is paramount: if the drone loses its lock on the subject, its behavior can become erratic unless it falls back to a safe state such as hovering.

- Autonomous Flight Path Generation: Entails significantly higher safety stakes and stricter regulatory scrutiny, especially when executed beyond visual line of sight (BVLOS). Missions often cover large areas, potentially over complex terrain or populated zones. Robustness against GPS spoofing, sensor failures, communication loss, and dynamic obstacle encounters is critical. Regulators demand highly reliable systems, extensive testing, and comprehensive risk assessments for BVLOS autonomous operations, often requiring specific certifications and operational approvals. The consequence of failure can be much greater due to the scale and complexity of the operation.
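One way to picture the contingency handling that such operations call for is a small, explicit decision function. The sketch below is purely illustrative: the action names, the ordering of checks, and the 25% battery reserve are assumptions made for this example, and real failsafe logic is defined by the autopilot, the operator's risk assessment, and the applicable regulations.

```python
def choose_failsafe(link_ok, gnss_ok, battery_pct, reserve_pct=25.0):
    """Pick a single contingency action from the current health flags.

    Checks are ordered by assumed severity for this sketch.
    """
    if not gnss_ok:
        # Without trusted positioning, navigating home is assumed unsafe
        # here, so the drone descends where it is.
        return "land_now"
    if battery_pct <= reserve_pct:
        return "return_home"
    if not link_ok:
        return "return_home"
    return "continue"
```

Keeping this logic in one small, testable function makes it easier to review and to exercise every branch before a mission is flown.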

Future Trajectories: Convergence and Specialization

As drone technology continues to mature, we observe trends towards both the convergence and further specialization of these autonomous capabilities.

Blurring Lines: Integrating Follow into Autonomous Missions

While distinct, future innovations are likely to see these functionalities integrated for enhanced mission capabilities. Imagine an autonomous mapping drone flying a pre-programmed grid over a construction site. Upon detecting an unexpected anomaly (e.g., a worker in a restricted area, a piece of equipment out of place), it could seamlessly transition into an AI Follow Mode, tracking the worker or circling the equipment to gather more detailed visual data, before rejoining its original autonomous flight path. This ‘dynamic tasking’ would combine the environmental awareness of autonomous path generation with the specific subject-tracking prowess of AI Follow Mode, offering unprecedented flexibility and responsiveness.
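The "dynamic tasking" idea can be sketched as a two-state machine that flies waypoints, switches to following when an anomaly is detected, and then resumes the route where it left off. This is a conceptual illustration only. The class `DynamicTasker`, its tick-based interface, and the follow timeout are hypothetical, and the anomaly detector is assumed to exist elsewhere.

```python
from enum import Enum, auto


class Mode(Enum):
    SURVEY = auto()
    FOLLOW = auto()


class DynamicTasker:
    """Interleaves a waypoint mission with temporary subject following."""

    def __init__(self, waypoints, max_follow_steps=50):
        self.waypoints = list(waypoints)
        self.index = 0                  # next waypoint to fly
        self.mode = Mode.SURVEY
        self.follow_steps = 0
        self.max_follow_steps = max_follow_steps

    def step(self, anomaly_visible):
        """Advance one control tick and return a (command, target) pair."""
        if self.mode is Mode.SURVEY:
            if anomaly_visible:
                # Interrupt the route; self.index remembers where to resume.
                self.mode = Mode.FOLLOW
                self.follow_steps = 0
                return ("follow", None)
            if self.index < len(self.waypoints):
                target = self.waypoints[self.index]
                self.index += 1
                return ("goto", target)
            return ("done", None)
        # FOLLOW mode: keep tracking until the anomaly disappears or the
        # follow budget runs out, then resume the original route.
        self.follow_steps += 1
        if not anomaly_visible or self.follow_steps >= self.max_follow_steps:
            self.mode = Mode.SURVEY
            return self.step(False)
        return ("follow", None)
```

Because the waypoint index is preserved while following, the drone rejoins the pre-programmed route at the next unvisited waypoint rather than restarting the mission.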

The Path Ahead: Specialized Autonomy and Human-AI Collaboration

Both AI Follow Mode and Autonomous Flight Path Generation will continue to evolve, becoming even more specialized and intelligent. Follow modes will gain more sophisticated contextual awareness, understanding not just “what” to follow but “how” to frame it creatively based on activity type. Autonomous path generation will incorporate more advanced forms of machine learning for real-time environmental interpretation, predictive maintenance scheduling based on gathered data, and dynamic adaptation to unforeseen events (e.g., severe weather). The future lies in enhancing these autonomous systems with greater AI-driven decision-making capabilities, while also fostering seamless human-AI collaboration, where operators supervise and guide intelligent agents rather than micromanage every movement.

In conclusion, just as understanding the subtle yet crucial differences between Measles and German Measles informs appropriate medical response, distinguishing between AI Follow Mode and Autonomous Flight Path Generation is vital for effectively leveraging the transformative power of drone technology. While both contribute to the overarching goal of autonomous flight, they represent distinct evolutionary paths of drone intelligence, each optimized for specific applications and driven by unique technological innovations. Recognizing these differences is the key to unlocking the full potential of UAVs in an ever-expanding array of industries and creative pursuits.