The term “DGX” refers to NVIDIA’s line of systems built for artificial intelligence and high-performance computing. These systems are engineered from the ground up to accelerate deep learning and other AI workloads, addressing the heavy computational demands of training complex neural networks and processing very large datasets. In short, a DGX system is less a general-purpose computer than an integrated AI supercomputing platform, intended to make the development and deployment of artificial intelligence faster and more accessible.

The genesis of the DGX platform lies in the recognition that traditional computing architectures were becoming a bottleneck for AI research and development. As AI models became more complex and their training datasets grew rapidly, the need for specialized hardware capable of massive parallel processing, high memory bandwidth, and rapid data throughput became paramount. NVIDIA, a company long synonymous with GPU (Graphics Processing Unit) technology, leveraged its expertise in parallel computing to create a solution that could tackle these challenges head-on.

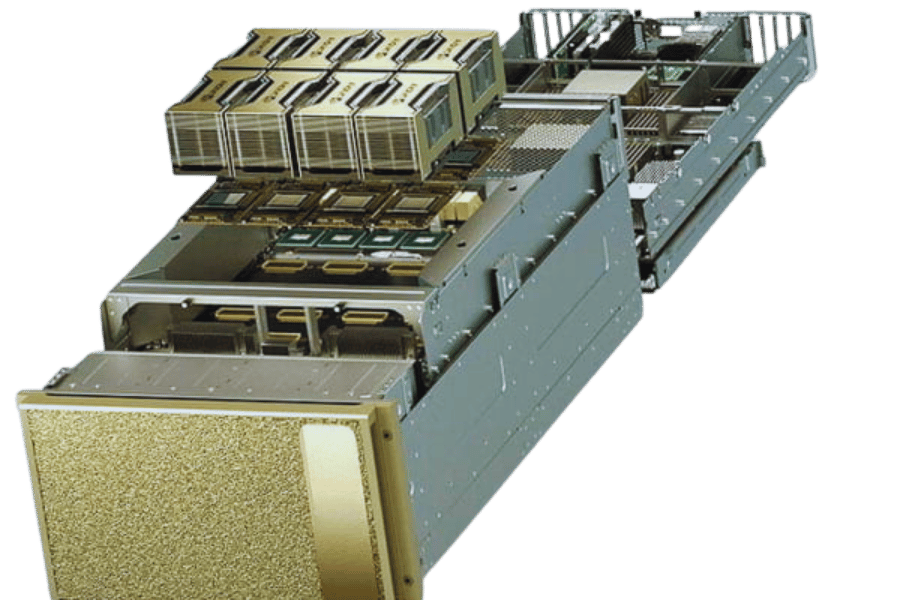

At its core, a DGX system is a carefully engineered combination of hardware and software optimized for AI. It integrates multiple high-performance GPUs, powerful CPUs, large amounts of high-speed memory, and fast storage, linked by high-bandwidth interconnects inside the system and high-speed networking between systems. This tightly coupled architecture lets data move between components with minimal latency, a critical factor for efficient deep learning training.

The Architectural Pillars of DGX

The power and efficacy of DGX systems rest on several architectural pillars that distinguish them from conventional servers. These components work together to provide an environment tuned specifically for AI development.

NVIDIA GPUs: The Engine of AI Acceleration

The heart of every DGX system is its collection of NVIDIA GPUs. These are not the GPUs found in consumer gaming PCs; they are datacenter-grade GPUs such as the NVIDIA A100 or H100 Tensor Core GPUs. These GPUs include Tensor Cores, specialized processing units that significantly accelerate the matrix multiply-accumulate operations at the heart of deep learning.

Tensor Cores and Mixed-Precision Computing

Tensor Cores perform matrix operations much faster than standard CUDA cores, particularly with mixed-precision arithmetic: inputs are stored in a lower-precision format such as FP16 or BF16, while products are accumulated in FP32 to limit rounding error. For many deep learning tasks, this dramatically increases training throughput and efficiency without a significant loss in accuracy. That speed is what enables researchers to train increasingly complex models in a reasonable timeframe.
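Why the accumulator format matters can be seen with a small pure-Python emulation. This is a sketch for illustration only, not how Tensor Cores are programmed: it uses the standard `struct` module's half-precision (`"e"`) and single-precision (`"f"`) formats to round values, feeds the same FP16 inputs into a dot product, and keeps the running sum either in FP16 or in FP32. The helper names and the input values are hypothetical.

```python
import struct
from typing import Callable, List


def to_fp16(x: float) -> float:
    """Round a float to the nearest IEEE 754 half-precision (FP16) value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x: float) -> float:
    """Round a float to the nearest IEEE 754 single-precision (FP32) value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def dot_fp16_inputs(a: List[float], b: List[float],
                    round_acc: Callable[[float], float]) -> float:
    """Dot product with FP16 inputs; round_acc sets the running sum's format."""
    total = 0.0
    for x, y in zip(a, b):
        product = to_fp16(x) * to_fp16(y)   # product of two FP16 values, computed exactly
        total = round_acc(total + product)  # keep the sum in the accumulator's format
    return total


n = 4096
a = [0.1] * n  # hypothetical activations
b = [1.0] * n  # hypothetical weights

reference = sum(to_fp16(x) * to_fp16(y) for x, y in zip(a, b))
fp16_sum = dot_fp16_inputs(a, b, to_fp16)
fp32_sum = dot_fp16_inputs(a, b, to_fp32)

print(f"reference:         {reference}")
print(f"FP16 accumulation: {fp16_sum}")
print(f"FP32 accumulation: {fp32_sum}")
```

With these inputs the FP32 accumulator reproduces the reference sum, while the FP16 accumulator stalls far short of it: once the running total is large enough, each added product is smaller than half the spacing between adjacent FP16 values and is rounded away.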

High Memory Bandwidth and Capacity

Deep learning models often require large amounts of data to be loaded and processed quickly. NVIDIA’s datacenter GPUs use high-bandwidth memory (HBM), which provides significantly more bandwidth than the GDDR memory found on most consumer GPUs. This keeps the GPUs from being starved for data, allowing them to sustain high utilization. DGX systems also carry large amounts of GPU memory, which makes it possible to train larger models and to use larger batch sizes; larger batches can raise hardware utilization and throughput, although they do not by themselves guarantee faster convergence or better accuracy.
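One common way to reason about when memory bandwidth matters is the roofline model: attainable throughput is the smaller of the peak compute rate and memory bandwidth multiplied by arithmetic intensity (FLOPs performed per byte moved). The sketch below applies it to two matrix products on an accelerator whose peak and bandwidth figures are hypothetical round numbers, not specifications of any NVIDIA GPU. It also assumes each matrix crosses memory exactly once and uses 2-byte (FP16/BF16) elements.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    """Illustrative accelerator; the numbers used below are hypothetical."""
    peak_tflops: float     # peak math throughput, TFLOP/s
    bandwidth_tb_s: float  # memory bandwidth, TB/s


def matmul_intensity(m: int, n: int, k: int, bytes_per_elem: int = 2) -> float:
    """FLOPs per byte for C = A @ B, assuming A, B, and C each cross memory once."""
    flops = 2 * m * n * k
    bytes_moved = bytes_per_elem * (m * k + k * n + m * n)
    return flops / bytes_moved


def attainable_tflops(acc: Accelerator, intensity: float) -> float:
    """Roofline model: throughput is capped by compute or by memory traffic."""
    return min(acc.peak_tflops, acc.bandwidth_tb_s * intensity)


slow_mem = Accelerator(peak_tflops=300.0, bandwidth_tb_s=1.0)  # hypothetical
fast_mem = Accelerator(peak_tflops=300.0, bandwidth_tb_s=3.0)  # hypothetical

gemv = matmul_intensity(4096, 1, 4096)     # matrix-vector product: ~1 FLOP/byte
gemm = matmul_intensity(4096, 4096, 4096)  # large square matmul: ~1365 FLOP/byte

for name, ai in [("gemv", gemv), ("gemm", gemm)]:
    print(f"{name}: {attainable_tflops(slow_mem, ai):.1f} vs "
          f"{attainable_tflops(fast_mem, ai):.1f} TFLOP/s")
```

Under these assumptions, tripling bandwidth roughly triples the throughput of the memory-bound matrix-vector product, while the large matrix multiply is limited by compute either way.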

High-Performance CPUs and System Memory

While GPUs handle the heavy lifting of deep learning computations, high-performance CPUs and ample system memory are crucial for tasks such as data preprocessing, model orchestration, and managing the overall workflow. DGX systems use server-grade CPUs that handle these supporting roles, keeping the GPUs supplied with prepared data and the system responsive. Large pools of DDR4 or DDR5 system memory, depending on the system generation, help prevent bottlenecks in the data loading and preparation stages.
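The CPU’s job of keeping the GPUs fed is essentially a producer-consumer pipeline. The minimal sketch below assumes a hypothetical `preprocess` step and uses a plain sum as a stand-in for a GPU training step: several CPU worker threads prepare batches into a bounded queue (the prefetch depth), and the consumer drains it. Python threads are used only to keep the example short; real data loaders, such as PyTorch’s `DataLoader` with `num_workers > 0`, typically use worker processes to sidestep the interpreter lock for CPU-heavy work.

```python
import queue
import threading
from typing import List, Optional


def preprocess(sample: int) -> int:
    """Hypothetical stand-in for CPU work such as decoding or augmentation."""
    return sample * 2


def cpu_worker(batches: List[List[int]], out_q: "queue.Queue[Optional[List[int]]]") -> None:
    """Preprocess the assigned batches and hand them to the consumer."""
    for batch in batches:
        out_q.put([preprocess(s) for s in batch])  # blocks while the queue is full
    out_q.put(None)  # sentinel: this worker has finished


def run_pipeline(samples: List[int], batch_size: int = 4,
                 num_workers: int = 2, prefetch: int = 8) -> List[int]:
    batches = [samples[i:i + batch_size] for i in range(0, len(samples), batch_size)]
    q: "queue.Queue[Optional[List[int]]]" = queue.Queue(maxsize=prefetch)
    # Each worker takes a strided share of the batches.
    workers = [
        threading.Thread(target=cpu_worker, args=(batches[w::num_workers], q))
        for w in range(num_workers)
    ]
    for t in workers:
        t.start()

    results = []
    done = 0
    while done < num_workers:
        batch = q.get()
        if batch is None:
            done += 1
            continue
        results.append(sum(batch))  # stand-in for a GPU training step on the batch
    for t in workers:
        t.join()
    return results


out = run_pipeline(list(range(100)))
print(len(out), sum(out))
```

The bounded queue is the key design choice: workers run ahead of the consumer by at most `prefetch` batches, so preparation overlaps with computation without unbounded memory growth.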

High-Speed Interconnects: NVLink and NVSwitch

The performance of a multi-GPU system is heavily dependent on how quickly the GPUs can communicate with each other. DGX systems employ NVIDIA’s proprietary NVLink interconnect technology. NVLink provides much higher bandwidth and lower latency communication between GPUs compared to traditional PCIe connections.

NVLink Technology

NVLink allows GPUs to share data directly, bypassing the CPU and system memory. This is particularly important for distributed training, where multiple GPUs work together to train a single model. With NVLink, GPUs can exchange gradients and intermediate results much faster, leading to significant speedups in training time.
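The gradient exchange in data-parallel training is an all-reduce: every GPU contributes its local gradients and every GPU ends up with their sum. On real hardware, libraries such as NVIDIA’s NCCL implement this collective over NVLink; the sketch below only simulates one common algorithm, ring all-reduce, in plain Python, with lists standing in for GPU buffers. It runs a reduce-scatter phase followed by an all-gather phase, so each rank sends roughly 2(n - 1)/n of its data regardless of how many ranks there are.

```python
from typing import List


def ring_allreduce(grads: List[List[float]]) -> List[List[float]]:
    """Sum equal-length gradient vectors across ranks with a ring all-reduce.

    grads[r] is the local gradient on rank r; every rank ends up with the full sum.
    """
    n = len(grads)
    length = len(grads[0])
    # Split each vector into n contiguous chunks (sizes may differ by one element).
    bounds = [(i * length // n, (i + 1) * length // n) for i in range(n)]
    bufs = [list(g) for g in grads]  # per-rank working buffers

    def chunk(rank: int, idx: int) -> List[float]:
        lo, hi = bounds[idx]
        return bufs[rank][lo:hi]

    # Phase 1, reduce-scatter: after n - 1 steps, rank r holds the complete
    # sum for chunk (r + 1) % n. Rank r sends to its ring neighbor (r + 1) % n.
    for step in range(n - 1):
        # Snapshot every message first so all ranks "send" simultaneously.
        msgs = [(r, (r - step) % n) for r in range(n)]
        msgs = [(r, idx, chunk(r, idx)) for r, idx in msgs]
        for r, idx, data in msgs:
            lo, _ = bounds[idx]
            dst = bufs[(r + 1) % n]
            for j, v in enumerate(data):
                dst[lo + j] += v  # neighbor accumulates the incoming partial sum

    # Phase 2, all-gather: circulate the completed chunks around the ring.
    for step in range(n - 1):
        msgs = [(r, (r + 1 - step) % n) for r in range(n)]
        msgs = [(r, idx, chunk(r, idx)) for r, idx in msgs]
        for r, idx, data in msgs:
            lo, hi = bounds[idx]
            bufs[(r + 1) % n][lo:hi] = data  # neighbor overwrites with the final sum

    return bufs


grads = [[float(10 * r + i) for i in range(10)] for r in range(4)]  # 4 simulated GPUs
reduced = ring_allreduce(grads)
expected = [sum(col) for col in zip(*grads)]
print(all(buf == expected for buf in reduced))
```

Because every rank only ever talks to its neighbor, the ring keeps all links busy at once; the faster each GPU-to-GPU link is, the shorter each of the 2(n - 1) steps becomes.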

NVSwitch

In DGX systems with many GPUs, such as the 16-GPU DGX-2 and the 8-GPU DGX A100 and DGX H100, NVIDIA adds NVSwitch. NVSwitch is an NVLink switch chip that connects all the GPUs in the system, so any GPU can communicate directly with any other GPU at full NVLink speed. This all-to-all fabric allows multi-GPU deep learning workloads to scale far better than they would over PCIe alone.

Fast Storage and Networking

Efficient AI development also requires fast access to large datasets. DGX systems are equipped with high-performance NVMe SSDs or other fast storage solutions to minimize data loading times. Furthermore, for large-scale deployments and distributed training across multiple DGX systems, high-speed network interfaces (e.g., InfiniBand or high-speed Ethernet) are integrated to ensure rapid communication between nodes.
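When training spans several DGX systems, each GPU process typically reads only its own portion of the dataset, which spreads the load on storage and the network. The sketch below shows one simple sharding scheme, similar in spirit to PyTorch’s `DistributedSampler`: every rank shuffles the indices with the same seed, so all ranks agree on the permutation, and each takes a disjoint strided slice. The function name and the two-node, eight-GPU layout are illustrative assumptions.

```python
import math
import random
from typing import List


def shard_indices(num_samples: int, world_size: int, rank: int,
                  seed: int = 0, epoch: int = 0) -> List[int]:
    """Return the sample indices one rank should read this epoch (hypothetical helper).

    All ranks shuffle with the same seed, so they agree on the permutation and
    each takes a disjoint strided slice of it.
    """
    indices = list(range(num_samples))
    random.Random(seed + epoch).shuffle(indices)  # reshuffle differently each epoch
    # Pad by repeating indices so every rank gets the same number of samples,
    # which keeps the ranks' training steps (and their collectives) in lockstep.
    per_rank = math.ceil(num_samples / world_size)
    total = per_rank * world_size
    while len(indices) < total:
        indices += indices[: total - len(indices)]
    return indices[rank:total:world_size]


world = 2 * 8  # illustrative: two nodes with eight GPUs each
shards = [shard_indices(1000, world, r, seed=42, epoch=3) for r in range(world)]
print([len(s) for s in shards][:3], len(set().union(*shards)))
```

Padding with a few repeated samples is a trade-off: it slightly over-weights those samples in one epoch, but it guarantees every rank performs the same number of steps, so no GPU waits on a collective that a peer will never join.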

The Software Ecosystem: DGX OS and NGC

Hardware is only one part of the equation. The true power of DGX systems is unlocked by a sophisticated software ecosystem designed specifically for AI workloads.

DGX OS: A Tailored Operating System

DGX systems run DGX OS, an Ubuntu-based Linux distribution optimized for AI and deep learning. It ships with the drivers, libraries, and tools essential for AI development preinstalled, simplifying setup and configuration and helping ensure that the hardware and software are tuned to work together for strong performance.

NVIDIA NGC: The Hub for AI Software

NVIDIA GPU Cloud (NGC) is a critical component of the DGX ecosystem. NGC provides a catalog of pre-trained AI models, optimized deep learning frameworks (like TensorFlow, PyTorch, and MXNet), development tools, and HPC applications. The frameworks and applications are distributed as containers optimized for NVIDIA hardware, so users can download and run complex AI models and frameworks with minimal setup, significantly shortening their development cycles.

Pre-trained Models and Frameworks

NGC’s catalog offers a wide array of pre-trained models for various tasks, such as image classification, object detection, natural language processing, and more. These models can be used as a starting point for fine-tuning on custom datasets, saving considerable time and computational resources. Similarly, the optimized frameworks ensure that users are leveraging the latest performance enhancements for their chosen deep learning libraries.

Containers and Portability

Because NGC software is distributed as containers (for example, Docker images), it is portable and reproducible. Users can develop and test their AI applications in a consistent environment and then deploy them across different DGX systems or cloud platforms with few compatibility issues, provided the host’s NVIDIA driver supports the CUDA version inside the container.

Use Cases and Applications of DGX

The capabilities of DGX systems make them indispensable for a wide range of demanding AI applications across various industries.

Scientific Research and Discovery

Researchers in fields like drug discovery, genomics, materials science, and astrophysics are using DGX systems to accelerate complex simulations and data analysis. Training sophisticated models to predict protein folding, analyze DNA sequences, or model complex physical phenomena becomes feasible with the immense computational power of DGX.

Autonomous Vehicles

Developing and training the AI models that power self-driving cars requires processing massive amounts of sensor data (camera, lidar, radar) and running sophisticated perception, prediction, and planning algorithms. DGX systems are crucial for training these safety-critical AI systems.

Healthcare and Medical Imaging

In healthcare, DGX systems are employed for AI-powered medical image analysis, enabling faster and more accurate diagnoses of diseases from X-rays, CT scans, and MRIs. They are also used in drug discovery and personalized medicine to develop treatments tailored to individual patient genetics.

Natural Language Processing (NLP) and Generative AI

The recent leap in the capabilities of Large Language Models (LLMs) and other generative AI technologies has relied heavily on large GPU systems such as DGX. Training these enormous models, which can understand and generate human-like text, code, and images, requires computational power and memory on a scale that only high-end AI supercomputing platforms can provide.

Financial Services

Financial institutions leverage DGX systems for fraud detection, algorithmic trading, risk management, and customer analytics. The ability to process vast financial datasets and train predictive models quickly is essential for staying competitive and secure in this rapidly evolving industry.

Manufacturing and Industrial Automation

DGX systems are used for optimizing supply chains, predictive maintenance, quality control through visual inspection, and developing intelligent robots for automated manufacturing processes.

The Future of AI Computing with DGX

The DGX platform is not static; it continuously evolves with NVIDIA’s advancements in GPU technology and AI software. Each new generation of DGX systems brings increased performance, greater efficiency, and new capabilities to push the boundaries of what’s possible in AI.

The trend towards larger and more complex AI models, coupled with the growing demand for AI applications across all sectors, ensures that the need for specialized AI supercomputing platforms like DGX will only continue to grow. DGX systems represent a significant investment, but for organizations serious about leading in AI innovation, they are an essential tool for accelerating research, development, and deployment of the next generation of intelligent systems. They embody the concept of purpose-built hardware and software for the most demanding computational challenges of our time.