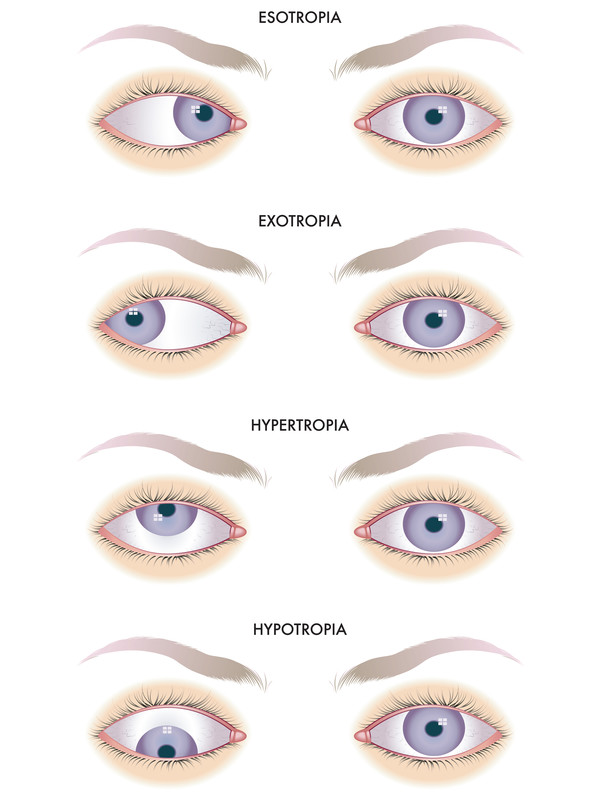

The term “crossed-eyed” typically conjures an image of a biological condition, specifically strabismus, where an individual’s eyes do not properly align with each other. However, in the rapidly evolving world of drone technology, particularly within the realm of cameras and imaging systems, this evocative phrase can be reappropriated as a potent metaphor. When we speak of a drone’s imaging system being “crossed-eyed,” we are referring to a critical misalignment or discrepancy within its visual apparatus that compromises its ability to accurately perceive, process, and interpret its surroundings. This metaphorical “crossed-eyed” state can manifest in various ways, from subtle calibration errors to significant hardware malfunctions, profoundly impacting the drone’s performance in tasks ranging from precise 3D mapping to autonomous navigation and cinematic aerial photography.

In an age where drones are increasingly relied upon for data acquisition, surveillance, scientific research, and complex operational tasks, the integrity of their visual input is paramount. A drone’s cameras are its eyes, and just as human vision requires perfect coordination for accurate perception, a drone’s multiple camera sensors must work in flawless synchronicity. This article will delve into what it means for a drone’s imaging system to be “crossed-eyed” from a technical perspective, exploring its causes, consequences, and the innovative solutions being developed to ensure these aerial platforms maintain unimpeachable visual acuity.

The Anatomy of a “Crossed-Eyed” Imaging System

For a drone’s imaging system to perform optimally, its components must be meticulously aligned and calibrated. Any deviation from this ideal state can lead to a “crossed-eyed” perception of the world, undermining the very purpose of its advanced camera technology.

Understanding Stereoscopic Vision in Drones

Many high-end drones, especially those designed for advanced functionalities like obstacle avoidance, 3D mapping, and autonomous flight, employ stereoscopic vision systems. Mimicking human binocular vision, these systems utilize two or more cameras positioned with a known baseline distance between them. By analyzing the parallax — the apparent shift in an object’s position when viewed from different angles — sophisticated algorithms can triangulate distances and construct a detailed 3D map of the environment.
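The triangulation step described above reduces to one formula when the two cameras form an ideal rectified pair: depth equals focal length times baseline divided by disparity. The sketch below illustrates that relationship only. The function name and the example numbers (a 700-pixel focal length and a 12 cm baseline) are assumptions chosen for illustration, not values from any particular drone.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Depth in meters of a point seen by an ideal rectified stereo pair.

    Uses Z = f * B / d, where f is the focal length in pixels, B is the
    baseline in meters, and d is the horizontal disparity in pixels.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_px * baseline_m / disparity_px


# Hypothetical numbers: larger disparity means a closer object.
for disparity in (4.2, 8.4, 16.8):
    depth = depth_from_disparity(700.0, 0.12, disparity)
    print(f"disparity {disparity:5.1f} px -> depth {depth:.2f} m")
```

Because depth is inversely proportional to disparity, a fixed pixel error matters far more for distant objects, where the disparity is already small.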

This stereoscopic capability is fundamental for depth perception, allowing the drone to “understand” its spatial relationship to objects. Without it, a drone cannot accurately judge distances to obstacles, gauge terrain variations, or precisely place objects within a generated 3D model. The success of such systems hinges entirely on the exact relative positioning and orientation of these cameras.

Calibration: The Foundation of Accurate Vision

Calibration is the process of precisely determining the intrinsic and extrinsic parameters of a camera system. Intrinsic parameters describe the internal characteristics of a single camera, such as focal length, principal point (the point where the optical axis meets the image sensor), and lens distortion coefficients. Extrinsic parameters, on the other hand, define the camera’s position and orientation relative to a global coordinate system or, crucially for stereoscopic systems, relative to other cameras on the drone.
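To make the split between the two parameter sets concrete, here is a minimal sketch of the standard pinhole camera model: the extrinsic parameters (a rotation and a translation) move a world point into the camera frame, and the intrinsic parameters (focal lengths and principal point) map it to pixel coordinates. Lens distortion is deliberately left out, and the function name and example values are hypothetical.

```python
def project_point(point_world, rotation, translation, fx, fy, cx, cy):
    """Project a 3D world point to pixel coordinates with a pinhole model.

    rotation is a 3x3 matrix (list of rows) and translation a 3-vector;
    together they are the extrinsics mapping world -> camera coordinates.
    fx, fy (focal lengths in pixels) and cx, cy (principal point) are the
    intrinsics. Lens distortion is ignored in this sketch.
    """
    # Extrinsics: express the point in the camera's coordinate frame.
    cam = [
        sum(rotation[i][j] * point_world[j] for j in range(3)) + translation[i]
        for i in range(3)
    ]
    if cam[2] <= 0:
        raise ValueError("point is behind the camera")
    # Intrinsics: perspective division, then scale and shift to pixels.
    u = fx * cam[0] / cam[2] + cx
    v = fy * cam[1] / cam[2] + cy
    return u, v


identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
print(project_point([1.0, 0.5, 10.0], identity, [0.0, 0.0, 0.0],
                    fx=700.0, fy=700.0, cx=640.0, cy=360.0))
```

A calibration error in either set shows up here directly: a wrong rotation or translation moves the point in the camera frame, while a wrong focal length or principal point moves it on the sensor.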

For a multi-camera drone, meticulous calibration establishes the precise geometric relationship between all its “eyes.” This includes determining the exact baseline distance between cameras, their yaw, pitch, and roll angles relative to each other, and how their optical axes converge or diverge. Both factory calibration, performed under controlled conditions, and periodic field calibration are essential to maintain accuracy. Any error in this calibration process—even a minuscule shift in a camera’s perceived position or orientation—can render the entire system “crossed-eyed,” feeding distorted spatial data into the drone’s processing unit.

When “Eyes” Don’t Align: Manifestations of the “Crossed-Eyed” State

When a drone’s imaging system is “crossed-eyed,” the visual output and subsequent data processing exhibit distinct anomalies. In 3D mapping, this could lead to warped or incomplete point clouds, inaccurate measurements, and models that don’t correctly represent reality. For obstacle avoidance systems, a “crossed-eyed” sensor array might misjudge distances, leading to phantom obstacles or, more dangerously, failing to detect actual hazards.

Operators using stereoscopic FPV (First Person View) systems might experience severe disorientation, eye strain, or even nausea as their brains struggle to reconcile conflicting visual information from the misaligned camera feeds. In advanced computer vision tasks like object tracking or precise landing, a “crossed-eyed” system would provide unreliable input, causing the drone to miss targets or land inaccurately. Fundamentally, the drone’s understanding of its environment becomes skewed, impacting every subsequent decision and action.

Root Causes of Imaging System Misalignment

Understanding the mechanisms that lead to a “crossed-eyed” drone imaging system is crucial for prevention and correction. These causes can range from physical impacts to software glitches and environmental factors.

Mechanical Stress and Vibrations

Drones are subject to dynamic forces during flight, takeoff, and landing. Hard landings, collisions, or even prolonged exposure to high-frequency vibrations from motors and propellers can physically jar camera modules out of alignment. Over time, mounting screws can loosen, gimbal mechanisms can wear, or internal components within camera housings can shift. Even seemingly minor displacements, on the order of fractions of a millimeter or fractions of a degree of rotation, can be enough to significantly alter the extrinsic parameters of a camera, introducing a “crossed-eyed” bias into the system.
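A rough calculation shows why such small rotations matter. In the sketch below, one camera of a rectified stereo pair is assumed to have rotated about its vertical axis (yaw) by a small angle that the software does not know about. Near the image center this shifts features horizontally by roughly the focal length times the tangent of the angle, which acts as a constant bias on the measured disparity. The model, the function name, and the numbers are simplifying assumptions for illustration only.

```python
import math


def depth_with_yaw_error(true_depth_m, focal_px, baseline_m, yaw_error_deg):
    """Depth reported by a stereo pair when one camera has an unmodeled yaw error.

    Small-angle model, valid near the image center: the rotation adds a
    horizontal pixel shift of f * tan(yaw) to the measured disparity.
    """
    true_disparity = focal_px * baseline_m / true_depth_m
    disparity_bias = focal_px * math.tan(math.radians(yaw_error_deg))
    measured_disparity = true_disparity + disparity_bias
    if measured_disparity <= 0:
        return math.inf  # the point appears to be at or beyond infinity
    return focal_px * baseline_m / measured_disparity


# Hypothetical setup: 700 px focal length, 12 cm baseline, object at 10 m.
for yaw in (0.0, 0.05, 0.1, 0.5):
    reported = depth_with_yaw_error(10.0, 700.0, 0.12, yaw)
    print(f"yaw error {yaw:4.2f} deg -> reported depth {reported:.2f} m")
```

Under these assumptions, a yaw error of only a tenth of a degree pulls the reported depth of a 10 m object down to about 8.7 m, and the direction of the error flips with the sign of the rotation.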

Software and Firmware Glitches

While hardware issues are often the culprit, software and firmware can also induce a “crossed-eyed” state. Errors in stored calibration files, bugs in sensor fusion algorithms, or glitches in the gimbal control software can cause the drone’s processing unit to misinterpret valid camera data or issue incorrect commands to camera positioning systems. For instance, if the software assumes a baseline distance between two stereo cameras that differs from their physical separation, every computed depth is scaled by the same wrong factor, effectively rendering the system “crossed-eyed” even if the physical cameras are perfectly aligned.
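The baseline example can be checked with a few lines of arithmetic. The sketch below assumes an ideal rectified stereo pair: the cameras produce a disparity governed by their true separation, but the software converts that disparity to depth using the baseline stored in its calibration file. The function and parameter names are hypothetical.

```python
def depth_with_wrong_baseline(true_depth_m, true_baseline_m,
                              assumed_baseline_m, focal_px=700.0):
    """Depth the software reports when its stored baseline is wrong.

    The physical cameras produce a disparity set by the TRUE baseline;
    the software then inverts Z = f * B / d using the ASSUMED baseline.
    """
    disparity_px = focal_px * true_baseline_m / true_depth_m
    return focal_px * assumed_baseline_m / disparity_px


# Hypothetical case: the calibration file says 12.6 cm, the hardware is 12 cm.
for depth in (2.0, 10.0, 50.0):
    reported = depth_with_wrong_baseline(depth, 0.12, 0.126)
    print(f"true {depth:5.1f} m -> reported {reported:.2f} m")
```

The reported depth is the true depth multiplied by the ratio of assumed to true baseline, so a 5 percent baseline error gives a 5 percent depth error at every range, regardless of focal length.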

Environmental Factors and Material Fatigue

Environmental conditions play a subtle yet significant role. Extreme temperature fluctuations can cause materials to expand and contract, potentially stressing camera mounts or distorting lens elements over time. Prolonged exposure to UV radiation can degrade plastics, leading to subtle changes in structural integrity. Additionally, dust, moisture, or other contaminants entering the camera housing can physically impede lens movement, obscure sensors, or interfere with internal wiring, leading to operational anomalies that mimic misalignment. Material fatigue, particularly in frequently stressed components, can also contribute to gradual shifts in camera positioning.

Manufacturing Tolerances and Assembly Errors

Even with stringent quality control, inherent manufacturing tolerances can mean that no two camera modules or drone frames are absolutely identical. Small deviations from design specifications during assembly can introduce initial misalignments that, while potentially compensated for by initial factory calibration, make the system more susceptible to becoming “crossed-eyed” over time or with operational stress. These subtle initial variances underscore the importance of robust calibration routines throughout a drone’s lifecycle.

The Impact of “Crossed-Eyed” Vision on Drone Applications

The consequences of a “crossed-eyed” imaging system extend far beyond mere visual distortion, directly affecting the operational efficacy and safety of drones across various critical applications.

Impaired 3D Mapping and Photogrammetry

In applications like surveying, construction site monitoring, and digital twin creation, drones equipped with high-resolution cameras are used to capture vast amounts of imagery for 3D reconstruction. A “crossed-eyed” system, with its misaligned cameras, will produce inconsistent parallax information across images. This results in inaccurate point clouds, warped geometric models, and errors in critical measurements such as volume calculations or elevation data. For industries relying on the precision of these outputs, a “crossed-eyed” drone directly translates to unreliable data, costly recalculations, and potential project delays.

Compromised Obstacle Avoidance and Autonomous Navigation

Perhaps one of the most critical impacts of “crossed-eyed” vision is on a drone’s ability to safely navigate its environment. Autonomous drones rely heavily on depth-sensing cameras (often stereoscopic or time-of-flight) to detect and avoid obstacles. If these sensors are misaligned, the drone’s perception of distance to an object will be erroneous. It might “see” a tree branch as closer or farther than it actually is, leading either to unnecessary detours when the distance is underestimated (false positives) or, more dangerously, to collisions when it is overestimated (false negatives). This directly compromises flight safety, increases the risk of damage to the drone and its surroundings, and erodes confidence in autonomous flight capabilities.

Distorted FPV and VR Experiences

For human operators utilizing stereoscopic FPV goggles or VR systems for immersive control, a “crossed-eyed” drone camera system can create a highly disorienting and uncomfortable experience. When the left and right eye feeds are not perfectly synchronized and geometrically aligned, the brain struggles to fuse the two images into a coherent 3D scene. This can lead to severe eye strain, headaches, nausea, and a profound loss of depth perception for the pilot, making precise maneuvers, particularly in complex environments, incredibly difficult and unsafe.

Reduced Image Quality and Data Integrity

Beyond 3D reconstruction, even tasks like panorama stitching, multi-spectral imaging, or general image fusion can suffer. If cameras designed to capture different spectral bands or overlapping fields of view are misaligned, the resulting composite images will have registration errors, blurring, or ghosting effects. This degrades overall image quality and compromises the integrity of the data, making it unsuitable for scientific analysis, environmental monitoring, or high-quality visual content creation.

Corrective Measures and Future Innovations

Addressing and preventing the “crossed-eyed” phenomenon is a continuous challenge that drives innovation in drone technology. A multi-faceted approach involving advanced calibration, robust design, and intelligent software is essential.

Advanced Calibration Techniques

The cornerstone of maintaining accurate vision is sophisticated calibration. Beyond manual checkerboard-based methods, advanced techniques include automated self-calibration algorithms that can run pre-flight or even dynamically during flight. These algorithms utilize feature matching across multiple camera views to refine intrinsic and extrinsic parameters without human intervention. Some systems leverage photogrammetric principles or utilize known ground control points to recalibrate the imaging system, ensuring optimal alignment throughout its operational life. The goal is to make calibration a seamless, frequent, and highly accurate process.

Robust Mechanical Design and Damping Systems

Engineers are continuously working on designing drone frames and camera mounts that are inherently more resilient to physical stress and vibrations. This includes using stronger, lighter materials, designing dampened mounting systems that absorb shocks, and developing quick-release camera payloads with precision locking mechanisms that minimize the chances of misalignment during handling or flight. The focus is on creating a stable, unyielding platform for the imaging sensors.

Real-time Sensor Fusion and Self-Correction Algorithms

The future of drone imaging lies in intelligent systems that can detect and compensate for minor misalignments on the fly. Advanced sensor fusion algorithms, leveraging data from IMUs (Inertial Measurement Units), GPS, and multiple cameras, can identify inconsistencies indicative of a “crossed-eyed” state. Machine learning and AI are being employed to develop self-correction capabilities, where the drone’s flight controller or imaging processor can adapt its interpretation of camera data or even subtly adjust gimbal positions to compensate for detected misalignment, ensuring continuous accuracy.

Enhanced Quality Control and Diagnostic Tools

Preventative measures are critical. Manufacturers are implementing more rigorous quality control during assembly and initial calibration. For users, sophisticated diagnostic software embedded in drone apps can perform pre-flight checks, alerting operators to potential “crossed-eyed” conditions or calibration drift. These tools can guide users through recalibration procedures or recommend servicing, empowering them to maintain the integrity of their drone’s vision system.
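One simple check that such a diagnostic could perform, offered here as a sketch rather than a description of any vendor’s tool, relies on a property of rectified stereo pairs: a feature matched between the left and right images should lie on the same image row. A growing average row offset therefore hints at calibration drift. The match format, the function name, and the 1-pixel threshold are all assumptions.

```python
from statistics import mean


def vertical_disparity_check(matches, threshold_px=1.0):
    """Flag possible stereo calibration drift from matched feature points.

    matches is a list of ((u_left, v_left), (u_right, v_right)) pixel pairs
    taken from a rectified stereo pair. Returns the mean absolute row
    offset and whether it stays within the (hypothetical) threshold.
    """
    if not matches:
        raise ValueError("at least one feature match is required")
    offsets = [abs(v_left - v_right) for (_, v_left), (_, v_right) in matches]
    error_px = mean(offsets)
    return error_px, error_px <= threshold_px


# Made-up matches: rows agree closely in the first set, not in the second.
aligned = [((410.0, 200.2), (398.0, 200.0)), ((120.0, 95.1), (111.5, 95.4))]
drifted = [((410.0, 203.5), (398.0, 200.0)), ((120.0, 98.0), (111.5, 95.4))]
print(vertical_disparity_check(aligned))
print(vertical_disparity_check(drifted))
```

A check like this only detects that something is off; deciding whether to recalibrate in the field or send the drone for servicing still needs the guided procedures described above.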

The Future of “Perfect Vision” in Drones

Looking ahead, innovations such as event-based cameras, which record only changes in pixel intensity, promise more robust and less data-intensive vision systems. Integration of higher-precision IMUs and the development of more resilient, modular imaging systems will further reduce the susceptibility to becoming “crossed-eyed.” The ultimate goal is to achieve near-perfect, continuous visual clarity, allowing drones to truly “see” and interact with their environment with unprecedented accuracy and reliability.

In conclusion, while the term “crossed-eyed” originates from human biology, its metaphorical application within drone imaging vividly illustrates a critical technical challenge. Ensuring that a drone’s multiple “eyes” are perfectly aligned and calibrated is not merely an aesthetic concern but a fundamental requirement for the safe, effective, and reliable operation of these sophisticated aerial platforms. As drone technology continues to advance, the ongoing pursuit of perfect visual acuity will remain a cornerstone of innovation, enabling drones to perceive and navigate their world with unparalleled precision.