Computer technology is the application of scientific and engineering knowledge to the design, development, and implementation of computing systems. It encompasses hardware, software, and the underlying principles that enable computing devices to function and interact. From the earliest mechanical calculators to the sophisticated artificial intelligence systems of today, computer technology has continuously evolved, revolutionizing how we communicate, work, learn, and experience the world around us. It’s a dynamic field, constantly pushing the boundaries of what’s possible, and its impact is felt across virtually every facet of modern life.

The Evolution of Computing: From Abacus to AI

The journey of computer technology is a fascinating narrative of human ingenuity, driven by a persistent desire to automate tasks, process information more efficiently, and solve complex problems. This evolution can be broadly categorized into distinct eras, each marked by significant breakthroughs and paradigm shifts.

Early Mechanical Marvels

The genesis of computing can be traced back to ancient times with devices like the abacus, a manual calculating tool that demonstrated the principle of representing numbers and performing arithmetic operations. However, the true dawn of mechanical computing arrived in the 17th century with pioneers like Blaise Pascal and his Pascaline, a gear-driven calculator capable of addition and subtraction. Later, Gottfried Wilhelm Leibniz refined this concept, creating a machine that could also perform multiplication and division. These early devices, while limited in scope, laid the groundwork for future innovations by proving that complex calculations could be automated.

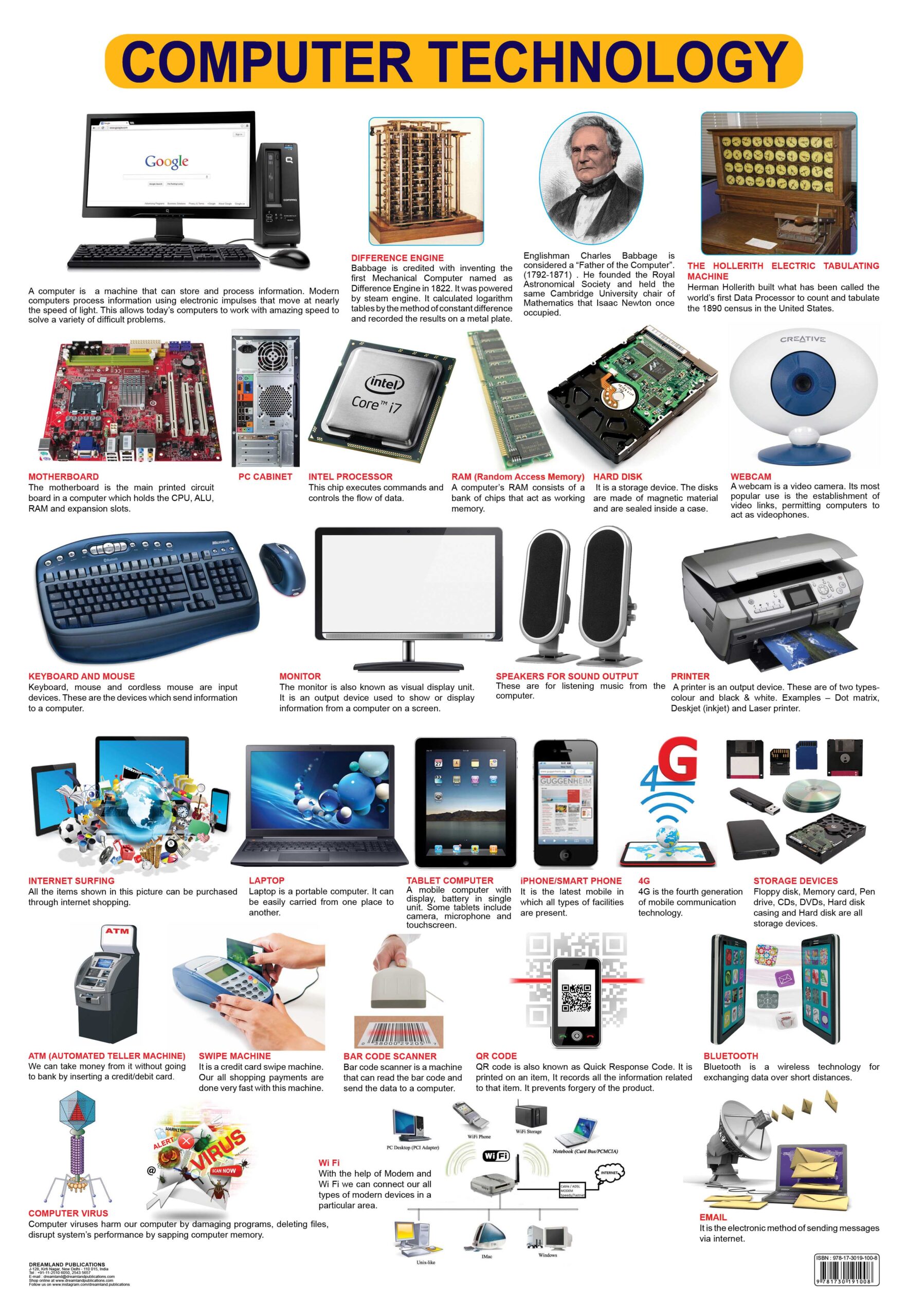

In the 19th century, Charles Babbage, often hailed as the “father of the computer,” conceptualized the Analytical Engine. This ambitious design was never completed, owing to funding difficulties and the limits of precision engineering at the time, yet it incorporated many of the fundamental concepts of modern computers, including an arithmetic unit, control flow, memory, and input/output. His collaborator, Ada Lovelace, is credited with writing what is considered the first algorithm intended to be processed by a machine, recognizing the potential for computers to go beyond mere calculation.

The Dawn of Electronic Computing

The mid-20th century witnessed a seismic shift with the advent of electronic computing. Vacuum tubes, used as fast electronic switches, allowed for the creation of machines that could perform calculations orders of magnitude faster than their mechanical predecessors. Key milestones include the Atanasoff-Berry Computer (ABC), developed between the late 1930s and 1942 and often considered the first automatic electronic digital computer, and the ENIAC (Electronic Numerical Integrator and Computer), completed in 1945. ENIAC was a colossal machine that occupied an entire room and consumed immense amounts of power, yet it represented a monumental leap forward in computational power.

The invention of the transistor in 1947 at Bell Labs, by John Bardeen, Walter Brattain, and William Shockley, was another pivotal moment. Transistors were smaller, more reliable, and consumed far less power than vacuum tubes, paving the way for smaller, more accessible computing devices. This led to the development of the second generation of computers in the late 1950s and early 1960s.

The Integrated Circuit and Personal Computing Revolution

The development of the integrated circuit (IC), or microchip, in the late 1950s, independently by Jack Kilby at Texas Instruments and Robert Noyce at Fairchild Semiconductor, was arguably the most transformative innovation in the history of computer technology. ICs allowed for the miniaturization of electronic components, packing thousands, later millions, and eventually billions of transistors onto a single chip. This led to the third generation of computers, which were significantly smaller, faster, more affordable, and more reliable.

The subsequent invention of the microprocessor, essentially an entire CPU on a single chip, in the early 1970s, ushered in the era of personal computing. New companies like Apple and Microsoft emerged, and established firms like IBM entered the market, bringing computing power into homes and businesses. The Apple II, the IBM PC, and operating systems like MS-DOS and later the graphical Windows democratized access to computing, transforming it from a specialized tool for scientists and engineers into an everyday necessity. This revolution fueled innovation in software development, networking, and a myriad of new applications.

The Internet Age and Beyond

The late 20th century and early 21st century have been defined by the explosion of the Internet. The development of the TCP/IP protocols, and later of the World Wide Web that runs on top of them, created a global network connecting billions of devices, fundamentally changing communication, commerce, and information dissemination. This era has seen the rise of cloud computing, mobile devices, and the exponential growth of data.

Today, computer technology is at the forefront of transformative advancements like Artificial Intelligence (AI), Machine Learning (ML), Big Data analytics, and the Internet of Things (IoT). These technologies are not just about processing information; they are about enabling machines to learn, adapt, and interact with the physical world in increasingly sophisticated ways, promising further radical shifts in the years to come.

The Pillars of Computer Technology: Hardware and Software

At its core, computer technology is built upon the symbiotic relationship between two fundamental components: hardware and software. Neither can function without the other, and their intricate interplay is what brings computational power to life.

Hardware: The Physical Foundation

Hardware refers to the tangible, physical components of a computer system. These are the parts you can see and touch, forming the infrastructure upon which all computational processes are executed.

Central Processing Unit (CPU)

The Central Processing Unit (CPU) is often referred to as the “brain” of the computer. It’s responsible for executing instructions and performing calculations. Modern CPUs are incredibly complex: a single chip can contain billions of transistors and carry out billions of operations per second. A CPU’s speed and efficiency are critical determinants of a computer’s overall performance.
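
To make "executing instructions" a little more concrete, here is a minimal sketch of the loop a processor repeats: fetch an instruction, work out what it means, and carry it out. The three instruction names (`LOAD`, `ADD`, `HALT`) and the single accumulator register are hypothetical, chosen only for illustration; real instruction sets are far richer.

```python
# Toy model of a CPU's fetch-decode-execute loop.
# The opcodes and the single accumulator are illustrative assumptions,
# not a real instruction set.

def run(program):
    """Execute a list of (opcode, operand) pairs and return the accumulator."""
    accumulator = 0       # the one working register in this toy machine
    program_counter = 0   # index of the next instruction to fetch

    while program_counter < len(program):
        opcode, operand = program[program_counter]  # fetch
        program_counter += 1

        if opcode == "LOAD":       # decode, then execute
            accumulator = operand
        elif opcode == "ADD":
            accumulator += operand
        elif opcode == "HALT":
            break
        else:
            raise ValueError(f"unknown opcode: {opcode}")

    return accumulator


result = run([("LOAD", 2), ("ADD", 3), ("HALT", 0)])
print(result)  # prints 5
```

A real CPU does the same kind of work in hardware, billions of times per second, with instructions encoded as binary numbers rather than Python tuples.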

Memory and Storage

Random Access Memory (RAM) is a type of volatile memory that the CPU uses to temporarily store data and instructions that are currently being processed. It allows for rapid access to information, significantly speeding up operations. However, because RAM is volatile, its contents are lost when the computer is powered off.

For long-term data storage, computers utilize storage devices such as Hard Disk Drives (HDDs) and Solid State Drives (SSDs). HDDs use spinning platters to store data magnetically, while SSDs use flash memory, offering much faster read/write speeds and, because they have no moving parts, better resistance to physical shock. The capacity of these storage devices determines how much data, software, and media a computer can hold.
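
The difference between memory and storage can be sketched in a few lines of code. In this example, a value held in a variable exists only in RAM, while a value written to a file survives on the storage device. The helper names `save_note` and `load_note` are hypothetical, and deleting the variable merely stands in for the loss of memory contents at power-off.

```python
import os
import tempfile

def save_note(path, text):
    """Write text to a file so that it persists on the storage device."""
    with open(path, "w", encoding="utf-8") as f:
        f.write(text)

def load_note(path):
    """Read the text back from the file."""
    with open(path, "r", encoding="utf-8") as f:
        return f.read()

# A temporary directory keeps the example away from the user's own files.
path = os.path.join(tempfile.mkdtemp(), "note.txt")

note = "remember me"         # held in RAM only
save_note(path, note)        # copied to storage
del note                     # drop the in-memory copy (stand-in for power-off)

restored = load_note(path)   # the stored copy is still there
print(restored)              # prints "remember me"
```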

Input and Output Devices

Input devices allow users to interact with the computer and provide it with data. Common examples include keyboards, mice, touchscreens, microphones, and scanners.

Output devices display or present the results of the computer’s processing. These include monitors, printers, speakers, and projectors. The seamless interaction between input and output devices, mediated by the computer’s internal hardware, is crucial for a functional system.

Motherboard and Peripherals

The motherboard is the main printed circuit board that connects all the other hardware components, including the CPU, RAM, storage drives, and expansion cards. It acts as the central nervous system of the computer, facilitating communication between these parts.

Other common hardware components include the graphics processing unit (GPU), which renders visual output and is increasingly used for highly parallel workloads, along with network interface cards for network connectivity, sound cards for audio, and optical drives for reading CDs and DVDs.

Software: The Intelligence and Instructions

Software, in contrast to hardware, is the set of instructions, data, or programs used to operate computers and execute specific tasks. It’s the intangible element that dictates what the hardware does and how it does it.

Operating Systems (OS)

The Operating System (OS) is the most fundamental type of software. It acts as an intermediary between the hardware and the user, managing the computer’s resources, providing a user interface, and allowing other application programs to run. Examples include Windows, macOS, and Linux. A stable and efficient OS is vital for the smooth functioning of any computing device.
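
As a small illustration of the OS acting as an intermediary, the sketch below asks the operating system for a few facts that a program cannot discover by itself. It uses only Python's standard `os` and `platform` modules; the function name `describe_environment` is a hypothetical choice for this example.

```python
import os
import platform

def describe_environment():
    """Collect a few facts that only the operating system can report."""
    return {
        "system": platform.system(),   # e.g. "Linux", "Windows", "Darwin"
        "cpu_count": os.cpu_count(),   # logical CPUs, or None if unknown
        "pid": os.getpid(),            # ID the OS assigned to this process
        "cwd": os.getcwd(),            # current working directory
    }

info = describe_environment()
for key, value in info.items():
    print(f"{key}: {value}")
```

The output depends on the machine the code runs on, which is the point: the program does not touch the hardware directly but relies on the OS to answer.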

Application Software

Application software is designed to perform specific tasks for the end-user. This category is vast and encompasses everything from word processors and spreadsheets to web browsers, video games, and sophisticated scientific simulation programs. Each application is a complex set of instructions tailored to achieve a particular objective.

System Software and Utilities

The operating system is itself the best-known example of system software, a category that also includes device drivers, firmware, and utility programs. Device drivers, for instance, allow the OS to communicate with specific hardware components. Utility programs, such as antivirus software and disk defragmenters, help maintain and optimize the computer’s performance and security.
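
A utility program can be very small. The following sketch reports how full a disk is, using `shutil.disk_usage` from Python's standard library; the function name `disk_report` and the choice to round to one decimal place are assumptions made for this example, not part of any particular tool.

```python
import shutil

def disk_report(path="."):
    """Return total, used, and free bytes, plus percent used, for the disk holding path."""
    usage = shutil.disk_usage(path)
    # Guard against a zero-size filesystem to avoid dividing by zero.
    percent_used = 100 * usage.used / usage.total if usage.total else 0.0
    return {
        "total": usage.total,
        "used": usage.used,
        "free": usage.free,
        "percent_used": round(percent_used, 1),
    }

report = disk_report()
print(f"{report['percent_used']}% of the disk is in use")
```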

Programming Languages and Development

The creation of software relies on programming languages, which are formal languages designed to communicate instructions to a computer. Languages like Python, Java, C++, and JavaScript are used by developers to write the code that forms applications and systems. The field of software development is continuously evolving, with new languages and frameworks emerging to address the ever-increasing complexity and demands of modern computing.
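
What makes a programming language "formal" is that its grammar is strict: text either follows the rules or is rejected before it ever runs. The sketch below uses Python's built-in `compile` function to check two snippets against Python's own grammar without executing them. The helper name `is_valid_python` is hypothetical.

```python
def is_valid_python(source):
    """Return True if source follows Python's grammar, False otherwise."""
    try:
        # "exec" mode parses the text as a module; nothing is executed here.
        compile(source, "<example>", "exec")
        return True
    except SyntaxError:
        return False

print(is_valid_python("total = 1 + 2"))  # prints True
print(is_valid_python("total = 1 +"))    # prints False
```

The second snippet fails because the `+` has nothing to its right. A human reader might guess the intent, but a formal language leaves no room for guessing.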

The Impact and Future of Computer Technology

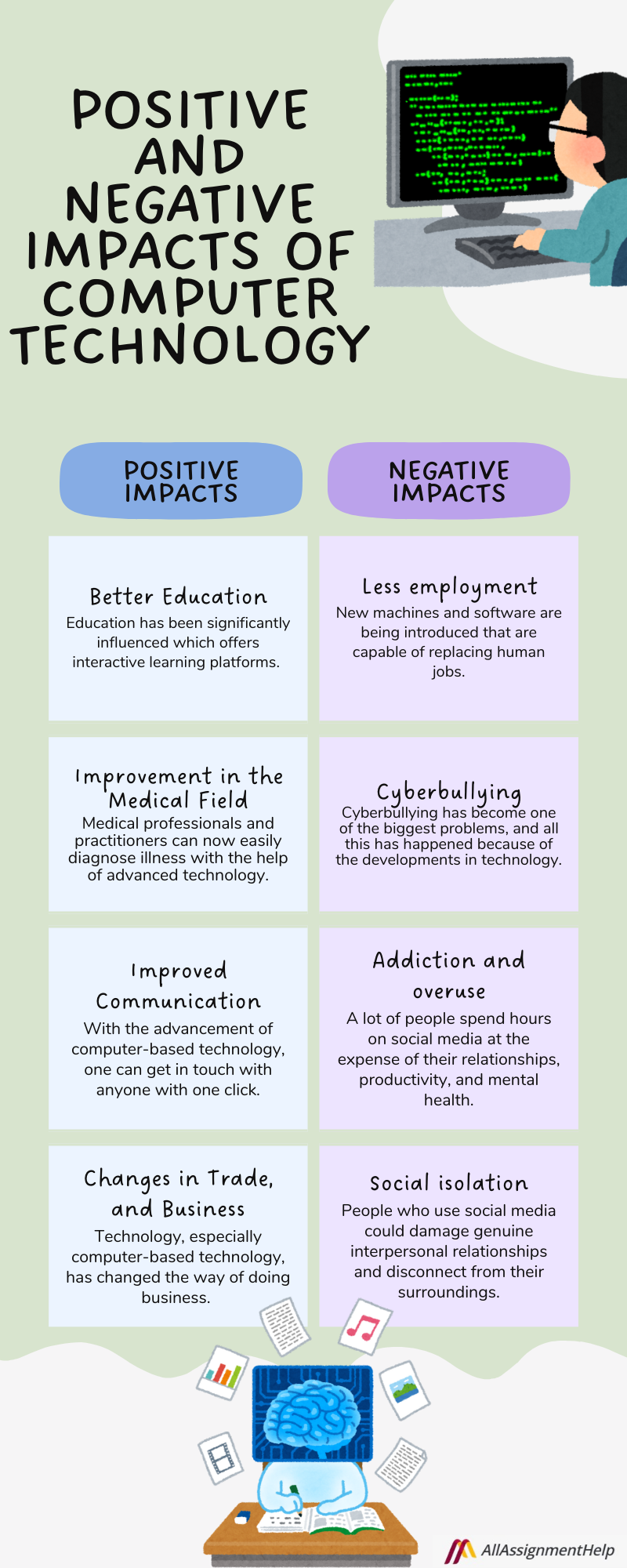

The pervasive influence of computer technology on society is undeniable, and its trajectory suggests even more profound transformations in the future. Its ability to process information at unprecedented speeds, connect individuals globally, and automate complex processes has reshaped industries, economies, and daily life.

Reshaping Industries and Economies

Computer technology has been a primary driver of economic growth and industrial transformation. The automation of manufacturing processes through robotics and AI has increased efficiency and productivity. The financial sector has been revolutionized by algorithmic trading and digital banking. The entertainment industry has been fundamentally altered by digital distribution and streaming services. Furthermore, new industries have emerged entirely due to advancements in computing, such as software development, data science, and cybersecurity. The ability to collect, analyze, and act upon vast amounts of data has become a critical competitive advantage in virtually every sector.

Enhancing Human Capabilities and Connectivity

Beyond the economic realm, computer technology has significantly enhanced human capabilities and connectivity. The internet has democratized access to information, making knowledge more readily available than ever before. Communication tools have become instantaneous and global, fostering collaboration and bridging geographical divides. From advanced medical diagnostic tools to immersive virtual reality experiences, computer technology is expanding the horizons of human potential and experience. Educational platforms, online courses, and digital learning tools are making education more accessible and personalized.

The Frontier of Innovation: AI, Quantum Computing, and Beyond

The future of computer technology is characterized by exciting and potentially paradigm-shifting innovations. Artificial Intelligence (AI) continues its rapid ascent, with advancements in machine learning enabling systems to perform tasks that were once exclusively the domain of human intelligence, such as complex problem-solving, natural language understanding, and creative generation. Quantum computing, while still in its nascent stages, promises to unlock computational power far beyond what is currently possible, with the potential to revolutionize fields like drug discovery, materials science, and cryptography.

The ongoing miniaturization of components, coupled with the exponential growth of data and the increasing interconnectivity of devices through the Internet of Things (IoT), will continue to drive innovation. We can anticipate a future where computing is even more seamlessly integrated into our environment, leading to smarter cities, more personalized healthcare, and more intuitive human-computer interactions. The continuous evolution of computer technology ensures that its impact will only deepen, shaping the future of humanity in ways we are only beginning to imagine.