In the rapidly evolving landscape of aerial imaging and remote sensing, the intersection of drone-captured data and generative artificial intelligence has introduced a new lexicon for professionals. Among the most important of these terms is the “CFG Scale.” Although it originated in diffusion-based generative models, the CFG Scale (short for Classifier-Free Guidance Scale) has become a key concept for aerial photographers, surveyors, and filmmakers who use AI-driven tools to enhance, upscale, or modify drone imagery.

Understanding the CFG Scale is no longer just for software engineers; it is an essential skill for anyone operating high-end gimbal cameras or working in post-production for aerial cinematography. It represents the fine line between a photograph that looks like a grounded, realistic representation of the earth and one that descends into over-processed, “hallucinated” digital artifacts.

Defining CFG Scale in the Context of Advanced Aerial Cameras

To understand what CFG Scale is, one must first understand how modern AI-driven image processing functions. When we take a high-resolution 4K or 8K shot from a drone, the raw data is often processed using algorithms to reduce noise, enhance dynamic range, or even “fill in” missing pixels during an upscale. In generative AI workflows—such as those used for architectural visualization or cinematic environmental replacement—the CFG Scale determines how much the AI should adhere to the specific instructions (prompts or reference images) provided by the user.

The Mechanics of Classifier-Free Guidance

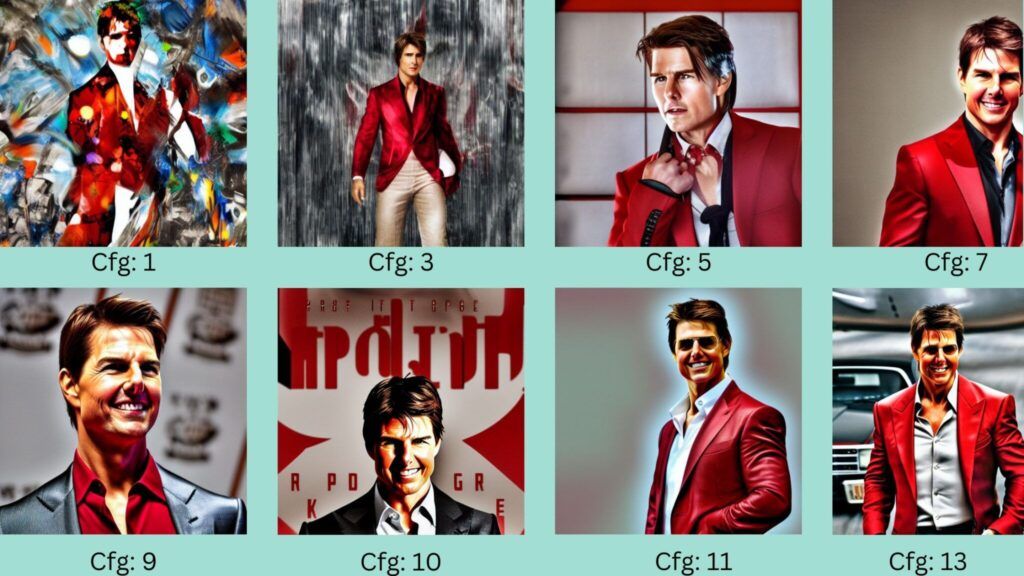

At its core, the CFG Scale is a trade-off mechanism. In the context of aerial imaging, think of it as a “fidelity vs. creativity” slider. When a drone pilot uses an AI tool to enhance a landscape shot, the software starts with a “noisy” version of the image and iteratively refines it.

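The CFG Scale tells the software how much weight it should give to the guidance (the text prompt or reference image it is conditioned on) versus how much it should rely on its own unconditioned prediction. Despite the name, no separate classifier is involved: at each denoising step the model makes one prediction with the conditioning and one without it, and the scale controls how far the result is pushed from the unconditional prediction toward the conditional one. A lower CFG value allows the AI more freedom, which can lead to smoother gradients but potentially less detail in specific areas. A higher CFG value forces the AI to follow the prompt or reference much more strictly, which increases sharpness but can eventually lead to visual “breakage.”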
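The trade-off can be made concrete with the blending rule that diffusion samplers commonly apply at each denoising step: take a prediction made without the prompt, take one made with it, and extrapolate from the first toward the second by the scale. The sketch below is a minimal illustration in plain Python. The function name `cfg_combine` and the three-value "predictions" are made up for the example and do not come from any particular tool.

```python
def cfg_combine(uncond, cond, scale):
    """Blend two noise predictions with classifier-free guidance.

    uncond: prediction made without the prompt (list of floats)
    cond:   prediction made with the prompt (same length)
    scale:  the CFG Scale; 0.0 ignores the prompt, 1.0 returns `cond` as-is,
            larger values extrapolate past `cond`
    """
    if len(uncond) != len(cond):
        raise ValueError("predictions must have the same length")
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]


# Toy predictions for three pixels (illustrative values only).
uncond = [0.10, -0.20, 0.05]
cond = [0.30, -0.10, 0.00]

for scale in (1.0, 7.0, 15.0):
    guided = cfg_combine(uncond, cond, scale)
    print(scale, [round(g, 2) for g in guided])
```

As the scale rises, the guided values move further and further beyond the conditional prediction. That growing magnitude is one intuition for why very high settings tend to produce oversaturated, harsh results.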

Why CFG Scale Matters for Post-Production

For aerial filmmakers, the CFG Scale is one of the main controls for keeping AI-assisted edits visually consistent and realistic. When performing “Generative Fill” to remove a stray vehicle from a cinematic drone shot, or using AI to turn a daytime flight into a “golden hour” masterpiece, the CFG Scale strongly shapes the result. If the scale is set too low, the AI follows the instructions only loosely and might add elements that weren’t there, such as changing the shape of a building. If set too high, the image may become “burnt,” characterized by oversaturated colors and harsh, unnatural edges.

Practical Applications in Aerial Photography and Asset Enhancement

The application of CFG Scale adjustments is most prevalent in the post-processing phase of drone imaging. As drone sensors become more powerful, the amount of data we collect is staggering, and AI is increasingly required to manage and interpret that data for creative and industrial outputs.

Upscaling and Detail Recovery

One of the most common uses for CFG Scale in drone photography is during the upscaling of FPV (First Person View) footage or low-light captures. FPV cameras often sacrifice sensor size for weight, resulting in footage that can be grainy. By running this footage through an AI upscaler, pilots can use a moderate CFG Scale (typically between 7.0 and 11.0) to “reconstruct” the fine details of grass, brickwork, or forest canopies.

At this range, the AI is guided enough to maintain the original geometry of the shot but has enough freedom to smooth out the sensor noise that a traditional sharpen filter would only exacerbate. This results in a 4K output that looks natively captured, rather than digitally enlarged.

Generative Filling and Environmental Modification

In high-end aerial filmmaking, “cleaning” the frame is a standard procedure. This involves removing power lines, construction equipment, or people from a shot to create a pristine environment. Tools that expose a CFG Scale let the editor dictate how “strictly” the AI should follow the prompt describing what belongs in the cleaned area, while the surrounding pixels supply the context it has to blend into.

In these instances, a higher CFG Scale is often preferred to ensure the “filled” area perfectly matches the lighting and texture of the original drone plate. However, if the goal is to add elements—such as digitally placing a new architectural development onto a vacant lot captured via drone—the CFG Scale must be balanced to ensure the new structures blend seamlessly with the atmospheric perspective and lens distortion of the gimbal camera.

The Golden Ratio: Adjusting CFG Scale for Realistic Textures

Finding the “sweet spot” for the CFG Scale is a technical art form. Because aerial images often involve complex textures—like the chaotic patterns of ocean waves, the intricate lattices of urban grids, or the repetitive textures of agricultural fields—the AI can easily become overwhelmed.

Identifying Artifacts and Over-Saturation

When the CFG Scale is pushed too high (usually somewhere above 15.0, though the exact threshold varies by model and tool), the image begins to suffer from “over-guidance.” In drone imagery, this manifests as:

- Color Distortions: Deep shadows might turn into pure black voids, and highlights may “glow” with unnatural neon tints.

- Structural Hallucinations: The AI may try so hard to find detail that it creates repetitive, hexagonal, or “deep dream” patterns in the foliage or pavement.

- Contrast “Crunch”: The subtle gradations of a sunset captured by a 10-bit sensor can be flattened into harsh, ugly bands.

Monitoring these artifacts is crucial for professionals. A professional aerial colorist will often work at a lower CFG Scale and use multiple passes to build up detail slowly, rather than forcing a high-scale reconstruction in a single step.
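One rough way to monitor the crushed shadows and blown highlights described above is to compare how many pixels sit at the extremes before and after an AI pass. The following is a minimal sketch, assuming luminance values already normalized to the range 0 to 1. The function names and the thresholds (`low`, `high`, `max_increase`) are hypothetical starting points, not values taken from any grading tool.

```python
def clipped_fraction(pixels, low=0.02, high=0.98):
    """Return (shadow_fraction, highlight_fraction) for luminance values in [0, 1]."""
    if not pixels:
        raise ValueError("pixels must not be empty")
    n = len(pixels)
    shadows = sum(1 for p in pixels if p <= low) / n
    highlights = sum(1 for p in pixels if p >= high) / n
    return shadows, highlights


def looks_over_guided(before, after, max_increase=0.05):
    """Flag an AI pass that raised shadow or highlight clipping by more than max_increase."""
    s0, h0 = clipped_fraction(before)
    s1, h1 = clipped_fraction(after)
    return (s1 - s0) > max_increase or (h1 - h0) > max_increase


# Toy luminance samples (illustrative values only).
before = [0.10, 0.30, 0.50, 0.70, 0.90]
after = [0.00, 0.01, 0.50, 0.99, 1.00]
print(looks_over_guided(before, after))
```

A check like this only catches clipping. Structural hallucinations and banding still need a visual review at full resolution.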

Maintaining Photorealism in Orthomosaics and Mapping

While primarily a creative tool, the concepts behind CFG Scale are beginning to influence technical mapping. When generating 3D models or orthomosaics from drone photos, AI may be used to bridge the gaps between photos. If the generative model is given too much freedom, the resulting map might look visually pleasing but be spatially inaccurate. For those in the imaging niche, understanding that guidance parameters affect the “truth” of the image is vital. In mapping, we prioritize settings that keep the output anchored to the “ground truth” of the raw pixels over the aesthetic “guesses” of the AI model.

The Synergy Between Sensor Data and Generative AI

The future of drone cameras is not just in the glass and the silicon of the sensor, but in the “inference engine” that processes the light. We are entering an era where the ISP (Image Signal Processor) inside the drone might use CFG-based logic in real time.

Imagine a drone flying in extreme low light. A standard camera would produce a dark, noisy mess. An AI-augmented camera, however, could use a diffusion model to “clean” the frame as it is recorded. In this scenario, the pilot might have a “CFG Slider” on their remote controller. Turning it up would provide a clearer, sharper image for navigation, while turning it down might preserve more of the natural (albeit noisy) atmosphere for a cinematic look.

This synergy allows for a new level of creative control. It moves the drone camera away from being a passive observer and toward being an active participant in the creation of the image. The CFG Scale is the steering wheel for that participation.

Technical Implementation: Integrating CFG into Professional Workflows

For professionals looking to integrate these concepts into their current workflow, the process usually begins in the “Digital Darkroom.” Whether you are using specialized plugins for DaVinci Resolve or standalone AI suites, the CFG Scale should be treated with the same respect as ISO or Shutter Speed.

- Baseline Testing: Always start with a neutral CFG Scale (usually around 7.0). This is a common default “balanced” setting in many diffusion-based imaging tools, though the exact default varies from tool to tool.

- The “Inching” Method: If the aerial shot lacks definition—particularly in distant horizons—increase the scale by increments of 0.5. Watch for the moment the fine details (like power lines or individual leaves) begin to look like digital paint.

- Context-Specific Scaling:

  - Urban Environments: High CFG (10.0-12.0) helps maintain the straight lines and hard edges of architecture.

  - Natural Landscapes: Lower CFG (5.0-8.0) allows for the organic, chaotic variations found in nature without forcing them into rigid patterns.

- Layering and Masking: In professional imaging, one rarely applies a single AI pass to an entire frame. A high CFG Scale might be used to enhance a specific subject (like a car or a building), while a lower scale is used for the sky and clouds to maintain a soft, natural look.
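The baseline, “inching,” and context-specific steps above can be sketched as a small sweep: start at the neutral value, climb in 0.5 increments up to the upper bound for the scene type, and stop at the last setting that still looks acceptable. This is a minimal illustration only. The names `CFG_RANGES`, `inching_schedule`, and `pick_scale` are hypothetical, and `render` and `is_acceptable` stand in for whatever AI tool and quality check a given workflow actually uses.

```python
# Ranges taken from the guidelines above; treat them as rough defaults.
CFG_RANGES = {
    "urban": (10.0, 12.0),
    "landscape": (5.0, 8.0),
}
BASELINE = 7.0  # neutral starting point
STEP = 0.5      # "inching" increment


def inching_schedule(scene, start=BASELINE, step=STEP):
    """List the CFG values to test, from `start` up to the scene's upper bound."""
    _, high = CFG_RANGES[scene]
    if start > high:
        return []
    steps = int(round((high - start) / step))
    return [start + i * step for i in range(steps + 1)]


def pick_scale(scene, render, is_acceptable):
    """Return the highest tested scale whose render passes the check.

    `render(scale)` and `is_acceptable(output)` are caller-supplied
    placeholders. Returns None if even the first value fails.
    """
    best = None
    for scale in inching_schedule(scene):
        if not is_acceptable(render(scale)):
            break  # details have started to look like digital paint
        best = scale
    return best


print(inching_schedule("landscape"))
print(len(inching_schedule("urban")))
```

In practice `is_acceptable` would usually be a person looking at the frame. The point of the sketch is the ordering: small steps up from the baseline, stopping at the first sign of artifacts rather than jumping straight to a high value.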

The mastery of the CFG Scale represents the next frontier in aerial imaging. As we move beyond simple 4K capture and into the realm of AI-augmented reality and advanced post-production, the ability to manipulate how AI interprets our aerial data will be the hallmark of the modern drone professional. By understanding and controlling the CFG Scale, we ensure that the eye in the sky remains both a precise instrument of documentation and a powerful tool for creative expression.