The processor, or Central Processing Unit (CPU), is the brain of any sophisticated electronic device. From the complex computations required for autonomous flight navigation in drones to the intricate image processing for high-resolution aerial cinematography, the CPU’s performance is paramount. However, the raw speed at which a CPU can perform calculations is often limited by how quickly it can get the data it needs. This is where the concept of cache memory becomes critically important, particularly in the demanding world of drone technology and its associated applications.

The Need for Speed: Bridging the Processor-Memory Gap

Imagine a drone pilot meticulously planning a complex aerial survey route. The flight controller needs to constantly access navigational waypoints, sensor data from GPS and IMUs (Inertial Measurement Units), and potentially even real-time environmental mapping information. All of this data resides in the main system memory, often referred to as RAM (Random Access Memory). While RAM is fast by everyday standards, a single access to it can take tens to hundreds of CPU clock cycles, during which the CPU could otherwise have executed many instructions.

This speed disparity creates a bottleneck. The CPU, capable of executing billions of instructions per second, frequently finds itself waiting for data to be fetched from RAM. This waiting period, known as latency, significantly degrades overall performance. In scenarios where split-second decisions are crucial, such as obstacle avoidance during high-speed FPV (First Person View) racing or maintaining stable footage during aggressive cinematic maneuvers, this latency can be the difference between success and catastrophic failure.

Cache memory acts as a high-speed buffer between the CPU and the main memory. It’s a small, extremely fast type of memory (usually SRAM) built directly onto the processor chip or placed very close to it. The core principle behind cache is locality of reference, which comes in two forms. Temporal locality means that data and instructions accessed recently are likely to be accessed again soon; spatial locality means that addresses close to a recently accessed one are likely to be accessed next. In simpler terms, if the CPU needs a piece of data, it’s highly probable that it will need that same piece of data again soon, or it will need data located right next to it in memory.
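To make spatial locality concrete, here is a minimal Python sketch, assuming a tiny fully associative cache of four lines, eight array elements per line, and least-recently-used eviction (all sizes are arbitrary, chosen only for illustration). It counts cache misses when a 16-by-16 array stored row by row is traversed in storage order versus column by column. The function name `count_misses` is hypothetical.

```python
from collections import OrderedDict


def count_misses(addresses, line_size=8, num_lines=4):
    """Count misses in a tiny fully associative LRU cache.

    `addresses` are element indices; `line_size` consecutive elements
    share one cache line.
    """
    cache = OrderedDict()  # line number -> None, least recently used first
    misses = 0
    for addr in addresses:
        line = addr // line_size
        if line in cache:
            cache.move_to_end(line)  # hit: mark line as most recently used
        else:
            misses += 1              # miss: load the whole line
            cache[line] = None
            if len(cache) > num_lines:
                cache.popitem(last=False)  # evict least recently used line
    return misses


ROWS, COLS = 16, 16
# The array is stored row by row: element (r, c) lives at index r * COLS + c.
row_major = [r * COLS + c for r in range(ROWS) for c in range(COLS)]
col_major = [r * COLS + c for c in range(COLS) for r in range(ROWS)]

print("row by row:", count_misses(row_major))        # row by row: 32
print("column by column:", count_misses(col_major))  # column by column: 256
```

Walking the array in storage order misses once per line and then hits on the next seven elements. The column-wise walk jumps a full row (two lines) ahead on every access and never returns to a line before it has been evicted, so every access misses.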

The cache stores copies of frequently used data and instructions from RAM. When the CPU needs to access data, it first checks the cache. If the data is found in the cache (a “cache hit”), it can be retrieved within a few clock cycles, allowing the CPU to continue its work without delay. If the data is not in the cache (a “cache miss”), the CPU then has to fetch it from the slower RAM. When this happens, the whole fixed-size block containing the requested data, called a cache line (typically 32 or 64 bytes), is copied into the cache in anticipation that the data or its neighbors will be used again soon. Serving repeated and nearby accesses from this copy significantly reduces the average time it takes for the CPU to access data, thereby boosting overall system responsiveness and performance.
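The payoff of cache hits is commonly summarized by the average memory access time (AMAT) formula: average time = hit time + miss rate × miss penalty. The Python sketch below evaluates it; the cycle counts and miss rate are hypothetical figures chosen for illustration, not measurements of any particular processor.

```python
def average_access_time(hit_time, miss_rate, miss_penalty):
    """Average memory access time (AMAT) in the same unit as the inputs.

    Every access pays the cache lookup (hit_time); the fraction that misses
    additionally pays the trip to main memory (miss_penalty).
    """
    if not 0.0 <= miss_rate <= 1.0:
        raise ValueError("miss_rate must be between 0 and 1")
    return hit_time + miss_rate * miss_penalty


# Hypothetical figures in CPU clock cycles, for illustration only.
with_cache = average_access_time(hit_time=4, miss_rate=0.05, miss_penalty=200)
print(with_cache)  # 14.0
```

Under these assumed numbers, a 95% hit rate brings the average cost of an access down to 14 cycles, compared with the 200 cycles every access would pay if it always had to go to RAM.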

Layers of Speed: Understanding Cache Hierarchies

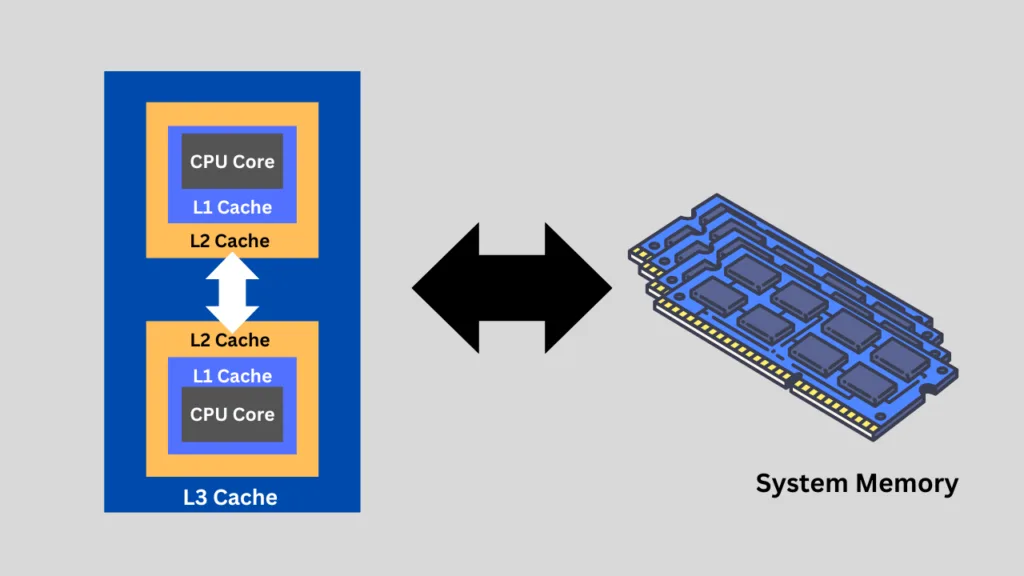

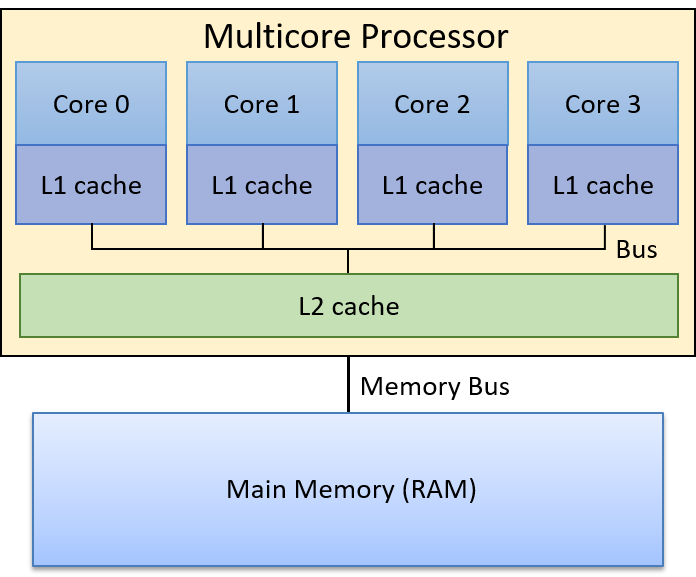

Modern processors employ a multi-level cache hierarchy to further optimize performance. This hierarchy typically consists of several levels, denoted as L1, L2, and L3 cache, each with different characteristics in terms of size, speed, and proximity to the CPU cores.

L1 Cache: The First Line of Defense

The L1 cache is the smallest and fastest cache memory. Each CPU core has its own L1 cache, which is usually divided into two parts:

- L1 Instruction Cache: Stores frequently used instructions that the CPU is about to execute.

- L1 Data Cache: Stores frequently used data that the CPU needs to operate on.

Because of its extreme proximity and small size, L1 cache offers the lowest latency, often measured in just a few CPU clock cycles. The goal of L1 cache is to provide the CPU core with virtually immediate access to the most critical pieces of data and instructions it needs for its current tasks. For a drone’s flight controller, this might include the most recent position and velocity estimates and the control outputs currently being computed.

L2 Cache: The Secondary Staging Area

The L2 cache is larger and slightly slower than L1 cache. It can be dedicated to each CPU core, or it might be shared between a small group of cores. Its primary function is to hold data and instructions that are frequently accessed but not as immediately critical as those in L1.

If a cache miss occurs in the L1 cache, the CPU will then check the L2 cache. If the data is found in L2 (an L2 hit), it is retrieved much faster than from main memory. L2 cache acts as a buffer for the L1 cache, holding data that has been recently used and might be needed again soon by the same core or its closely associated cores. In a drone’s image processing pipeline, L2 might store intermediate pixel data from recently processed frames, anticipating further enhancement or analysis.

L3 Cache: The Shared Resource Pool

The L3 cache is the largest and slowest of the on-processor caches. It is typically shared among all the CPU cores on a single processor chip. Its role is to store data that is likely to be needed by any of the cores, serving as a common pool of frequently accessed information.

When a cache miss occurs in both L1 and L2 caches, the CPU will then consult the L3 cache. An L3 hit still offers significantly faster access than retrieving data from main RAM. The L3 cache is particularly important in multi-core processors, as it lets cores share data without a round trip to main memory and reduces traffic on the memory bus, improving overall system throughput. For a drone performing simultaneous tasks like navigation, sensor fusion, and recording high-resolution video, the L3 cache can ensure that data relevant to any of these tasks is readily available to the core that needs it.
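The lookup order described above (L1, then L2, then L3, then RAM) can be sketched in Python as follows. This is a simplified model, assuming unlimited capacity at each level, levels checked one after another (real hardware often overlaps these checks), and hypothetical latencies of 4, 12, 40, and 200 cycles. The `Level` class and `lookup` function are illustrative names, not a real API.

```python
from dataclasses import dataclass, field


@dataclass
class Level:
    name: str
    latency: int                                # hypothetical cycles to check this level
    contents: set = field(default_factory=set)  # cached addresses (no size limit)


def lookup(address, levels, memory_latency):
    """Check each cache level in order and return (where found, total cycles).

    On a hit, the address is copied into the faster levels above it; on a
    miss everywhere, it is read from RAM and copied into every level.
    """
    total = 0
    for i, level in enumerate(levels):
        total += level.latency
        if address in level.contents:
            for upper in levels[:i]:
                upper.contents.add(address)
            return level.name, total
    total += memory_latency
    for level in levels:
        level.contents.add(address)
    return "RAM", total


levels = [Level("L1", 4), Level("L2", 12), Level("L3", 40)]
print(lookup(0x40, levels, memory_latency=200))  # ('RAM', 256)
print(lookup(0x40, levels, memory_latency=200))  # ('L1', 4)
levels[2].contents.add(0x80)  # pretend another core left 0x80 in the shared L3
print(lookup(0x80, levels, memory_latency=200))  # ('L3', 56)
```

The last call shows the value of a shared L3: data another core has already brought on chip costs 56 cycles in this model instead of 256.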

Cache Management: Algorithms and Strategies

The effectiveness of cache memory hinges on algorithms and strategies that determine what data to store, when to store it, and what to discard when space is limited. These decisions are made by dedicated hardware within the processor on every memory access, largely transparently to the software running on it.

Cache Coherence

In multi-core processors, especially those that might manage diverse functions within a drone (for example, one core handling flight control and another handling camera gimbal stabilization), ensuring cache coherence is vital. Cache coherence protocols prevent situations where different cores have outdated versions of the same data in their respective caches. When a piece of data is modified in one cache, coherence protocols ensure that other caches holding that same data are updated or invalidated, so that every core sees a consistent, up-to-date view of shared memory. This is critical for tasks like navigation, where precise, up-to-date positional data is essential.
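One widely taught invalidation-based scheme is the MSI protocol, in which each cached copy is Modified, Shared, or Invalid; real processors typically use extensions such as MESI or MOESI. The Python sketch below models MSI for a single shared memory location, assuming an idealized snooping bus with no timing. The `Bus` class and its methods are hypothetical. It shows a write on one core invalidating the other core’s copy, so the next read fetches the new value instead of a stale one.

```python
from enum import Enum


class State(Enum):
    MODIFIED = "M"
    SHARED = "S"
    INVALID = "I"


class Bus:
    """Toy snooping bus tracking one memory location across several caches."""

    def __init__(self, num_caches, initial_value=0):
        self.memory = initial_value
        self.states = [State.INVALID] * num_caches
        self.values = [None] * num_caches

    def read(self, cpu):
        if self.states[cpu] is State.INVALID:
            # A Modified copy elsewhere is the only up-to-date one: write it back.
            for other, state in enumerate(self.states):
                if state is State.MODIFIED:
                    self.memory = self.values[other]
                    self.states[other] = State.SHARED
            self.values[cpu] = self.memory
            self.states[cpu] = State.SHARED
        return self.values[cpu]  # Shared or Modified copies are read locally

    def write(self, cpu, value):
        for other in range(len(self.states)):
            if other == cpu:
                continue
            if self.states[other] is State.MODIFIED:
                self.memory = self.values[other]  # flush the dirty copy first
            self.states[other] = State.INVALID    # invalidate every other copy
            self.values[other] = None
        self.values[cpu] = value
        self.states[cpu] = State.MODIFIED


bus = Bus(num_caches=2)
bus.write(0, 10)      # core 0 (say, flight control) updates the value
print(bus.read(1))    # 10: core 1 gets the new value, both copies now Shared
bus.write(1, 11)      # core 1 writes; core 0's copy is invalidated
print(bus.states[0])  # State.INVALID
print(bus.read(0))    # 11: core 0 re-fetches instead of using a stale copy
```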

Replacement Policies

Since cache memory is finite, there will inevitably be times when new data needs to be brought in, and older, less frequently used data must be evicted. Various replacement policies are employed to decide which data block to remove. Common policies include:

- Least Recently Used (LRU): This policy evicts the data block that has not been accessed for the longest period. The assumption is that data used recently is more likely to be used again soon.

- First-In, First-Out (FIFO): This policy evicts the block that was loaded into the cache earliest, regardless of how recently or how often it has been used since.

- Random Replacement: This policy randomly selects a data block to evict.
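The three policies can be compared on a short access trace. The Python sketch below assumes a fully associative cache holding three blocks; the trace and the `simulate` helper are hypothetical, chosen so that the policies behave differently.

```python
import random
from collections import OrderedDict


def simulate(trace, capacity, policy, seed=0):
    """Count misses for `trace` in a fully associative cache of `capacity` blocks."""
    if policy not in ("LRU", "FIFO", "RANDOM"):
        raise ValueError(f"unknown policy: {policy}")
    rng = random.Random(seed)
    # Front of the dict = least recently used (LRU) or earliest loaded (FIFO).
    resident = OrderedDict()
    misses = 0
    for block in trace:
        if block in resident:
            if policy == "LRU":
                resident.move_to_end(block)  # a hit refreshes recency only under LRU
            continue
        misses += 1
        if len(resident) == capacity:
            if policy == "RANDOM":
                del resident[rng.choice(list(resident))]
            else:
                resident.popitem(last=False)  # evict the block at the front
        resident[block] = None
    return misses


TRACE = list("ABCADAB")  # hypothetical sequence of block accesses
for policy in ("LRU", "FIFO", "RANDOM"):
    print(policy, simulate(TRACE, capacity=3, policy=policy))
# LRU: 5 misses, FIFO: 6 misses; RANDOM depends on the seed.
```

On this trace LRU keeps block A resident because it was just reused, while FIFO evicts A simply because it arrived first and then has to fetch it again.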

The choice of replacement policy can significantly impact cache performance, and different processors may use variations or combinations of these strategies.

Write Policies

When the CPU writes data, there are different ways this operation can interact with the cache and main memory:

- Write-Through: In this policy, data is written to both the cache and main memory simultaneously. This ensures data consistency but can be slower due to the extra write operation to RAM.

- Write-Back: In this policy, data is initially written only to the cache. The modified data is then written back to main memory only when the cache block containing it is about to be evicted. This is generally faster but requires mechanisms to track modified “dirty” blocks.
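The difference in memory traffic between the two policies can be counted directly. This Python sketch assumes a small LRU cache that allocates a block on every write (write-allocate) and a hypothetical trace in which two variables are updated alternately 500 times each; `memory_writes` is an illustrative helper, not a real API.

```python
from collections import OrderedDict


def memory_writes(trace, policy, capacity=4):
    """Count writes that reach main memory for a trace of block writes.

    Assumes a fully associative LRU cache of `capacity` blocks that allocates
    a block on every write. Under write-back, a dirty block is written to
    memory when evicted, and dirty blocks left at the end are flushed so both
    policies finish with memory up to date.
    """
    if policy not in ("write-through", "write-back"):
        raise ValueError(f"unknown policy: {policy}")
    cache = OrderedDict()  # block -> dirty flag, least recently used first
    to_memory = 0
    for block in trace:
        if block in cache:
            cache.move_to_end(block)
        elif len(cache) == capacity:
            _, dirty = cache.popitem(last=False)  # evict least recently used
            if dirty:
                to_memory += 1                    # write the dirty block back
        if policy == "write-through":
            to_memory += 1         # every write also goes straight to memory
            cache[block] = False   # cached copy matches memory: never dirty
        else:
            cache[block] = True    # write-back: mark dirty, update memory later
    if policy == "write-back":
        to_memory += sum(cache.values())  # final flush of remaining dirty blocks
    return to_memory


trace = ["position", "velocity"] * 500  # hypothetical: two variables, 1,000 writes
print(memory_writes(trace, "write-through"))  # 1000
print(memory_writes(trace, "write-back"))     # 2
```

Write-through sends all 1,000 writes to memory, while write-back sends only the two final values when the dirty blocks are flushed. If the cache is too small for the data being written, blocks are evicted between writes and write-back loses most or all of this advantage.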

The trade-offs between write speed, memory traffic, and keeping main memory up to date guide the selection of write policies, often with different policies being applied to different cache levels or types of operations.

The Impact of Cache on Drone Performance

The implications of processor cache for drone technology are profound and far-reaching, touching upon nearly every aspect of their operation and capabilities.

Enhanced Navigation and Autonomy

Precise and responsive navigation is the bedrock of any autonomous drone. Cache memory allows the CPU to rapidly access and process GPS coordinates, inertial data from gyroscopes and accelerometers, and altitude readings. This rapid data retrieval is crucial for real-time trajectory calculations, obstacle detection algorithms, and maintaining stable flight paths, especially in dynamic environments or during complex maneuvers. Without efficient caching, the CPU would spend much of each processing cycle stalled on memory accesses, leaving less time for sensor processing and increasing the risk of navigation errors or delayed responses.

Real-Time Video Processing and Gimbal Stabilization

For aerial filmmaking and inspection applications, the quality and stability of the video feed are paramount. The CPU is heavily involved in processing raw camera sensor data, applying image enhancements, and managing the gimbal’s movement to ensure smooth footage. Fast access to frame data, image processing algorithms, and gimbal control commands, facilitated by cache, enables higher frame rates, reduced latency in motion prediction, and the ability to perform real-time video analytics or augmented reality overlays.

Advanced Sensing and Mapping

Drones equipped with advanced sensors like LiDAR, thermal cameras, or hyperspectral imagers generate enormous amounts of data. Cache memory plays a critical role in enabling the CPU to quickly process this data for tasks such as 3D environmental mapping, object detection, and scientific data analysis. The ability to rapidly access and manipulate point cloud data from a LiDAR scanner, for instance, is directly dependent on the efficiency of the processor’s cache.

FPV Racing and High-Speed Maneuvers

In the adrenaline-fueled world of FPV drone racing, every millisecond counts. The pilot’s inputs need to be translated into motor commands with minimal delay. The CPU’s cache helps ensure that these commands, along with sensor feedback for flight adjustments, are processed quickly, allowing for highly responsive control and the ability to execute complex aerial maneuvers at high velocities.
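A rough budget calculation shows why cache misses matter at fast control rates. The Python sketch below assumes a hypothetical 8 kHz control loop on a 480 MHz processor, with 120 cache misses per loop iteration at 100 cycles each; none of these figures come from a specific flight controller, and `miss_overhead_fraction` is an illustrative helper.

```python
def miss_overhead_fraction(loop_hz, clock_hz, misses_per_iteration, miss_penalty_cycles):
    """Fraction of each control-loop period spent stalled on cache misses."""
    cycles_per_iteration = clock_hz / loop_hz
    stall_cycles = misses_per_iteration * miss_penalty_cycles
    return stall_cycles / cycles_per_iteration


# Hypothetical figures, not taken from any specific flight controller.
share = miss_overhead_fraction(
    loop_hz=8_000, clock_hz=480_000_000, misses_per_iteration=120, miss_penalty_cycles=100
)
print(f"{share:.0%} of each loop iteration is spent waiting on memory")  # 20% ...
```

Under these assumptions each iteration has 60,000 cycles available, and the 12,000 cycles spent waiting on memory consume a fifth of that budget; halving the miss count would return half of those cycles to the control code.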

Power Efficiency

Efficient cache usage also contributes to power efficiency. Although the cache itself draws power, every access it serves avoids a trip to main memory, which is slower and consumes considerably more energy per access, so a high hit rate lowers the processor’s overall power consumption. This matters for drones, where battery life is a primary limitation, and it can contribute to longer flight times and mission durations.
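The power argument can be sketched with a simple linear energy model. The Python example below assumes hypothetical relative energy costs of 1 unit per cache access and 100 units per main-memory access, a stream of one million accesses, and a 2% miss rate; real per-access energies vary widely between chips, and `memory_energy` is an illustrative helper.

```python
def memory_energy(accesses, miss_rate, cache_energy, dram_energy):
    """Total memory-access energy: every access reads the cache, misses also read DRAM."""
    return accesses * (cache_energy + miss_rate * dram_energy)


ACCESSES = 1_000_000
# Hypothetical relative energy units per access, for illustration only.
# "No cache" is modeled as every access going to DRAM with no cache lookup cost.
no_cache = memory_energy(ACCESSES, miss_rate=1.0, cache_energy=0.0, dram_energy=100.0)
with_cache = memory_energy(ACCESSES, miss_rate=0.02, cache_energy=1.0, dram_energy=100.0)
print(f"{no_cache / with_cache:.1f}x less energy with the cache")  # 33.3x ...
```

With these assumed figures the cached configuration spends roughly 33 times less energy on memory accesses, even though every access now also pays for a cache lookup.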

In conclusion, the cache on a processor, with its hierarchical structure and sophisticated management algorithms, is not merely a technical detail; it is a fundamental component that directly underpins the performance, responsiveness, and advanced capabilities of modern drones. From the most basic flight control to the most complex aerial surveying and cinematic endeavors, efficient data access, facilitated by the processor’s cache, is indispensable.