Bootstrap sampling, a foundational technique in computational statistics, offers a powerful and versatile approach to estimating the sampling distribution of a statistic and, consequently, deriving confidence intervals and performing hypothesis tests when traditional analytical methods are intractable. While its applications span numerous scientific disciplines, it holds particular relevance within the realm of Tech & Innovation, especially in areas involving data-driven decision-making, model evaluation, and the robust analysis of complex systems that often characterize cutting-edge technological advancements.

The Core Concept: Resampling with Replacement

At its heart, bootstrap sampling is a Monte Carlo method that leverages repeated sampling from an observed dataset to simulate the process of drawing multiple independent samples from an unknown population. The key to this simulation lies in the practice of resampling with replacement.

Imagine you have a dataset representing the performance metrics of a newly developed autonomous flight system. This dataset, your “original sample,” is assumed to be a representative snapshot of the system’s true performance in the real world. To understand the variability of a specific statistic derived from this data – say, the average flight duration or the success rate of obstacle avoidance maneuvers – you would ideally collect many more independent datasets from the real world. However, this is often prohibitively expensive, time-consuming, or even impossible.

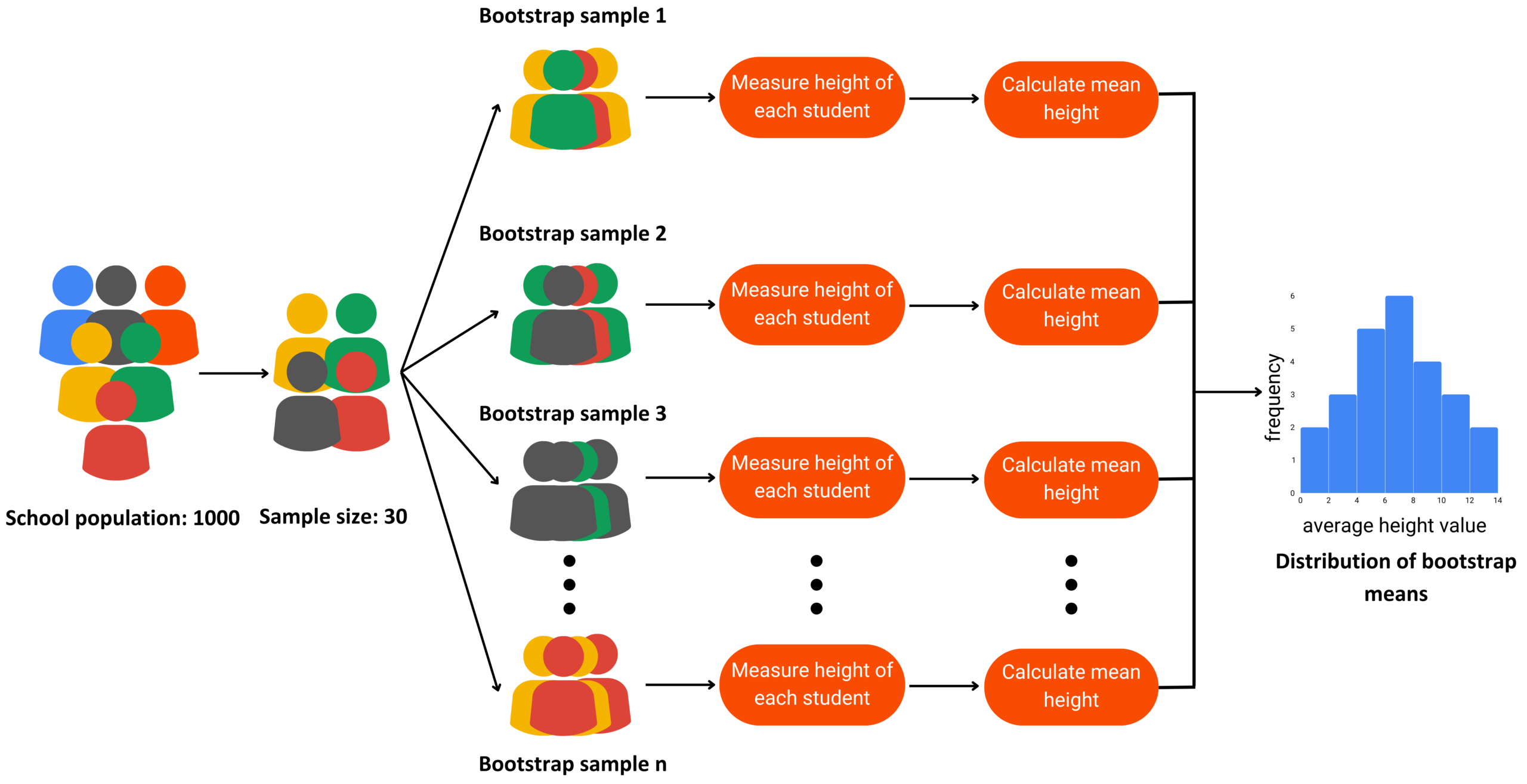

Bootstrap sampling provides an elegant workaround. It involves creating numerous “bootstrap samples” by drawing observations from your original sample with replacement. “With replacement” means that after an observation is selected for a bootstrap sample, it is returned to the original sample, allowing it to be selected again. Each bootstrap sample is typically the same size as the original sample.

How it Works in Practice

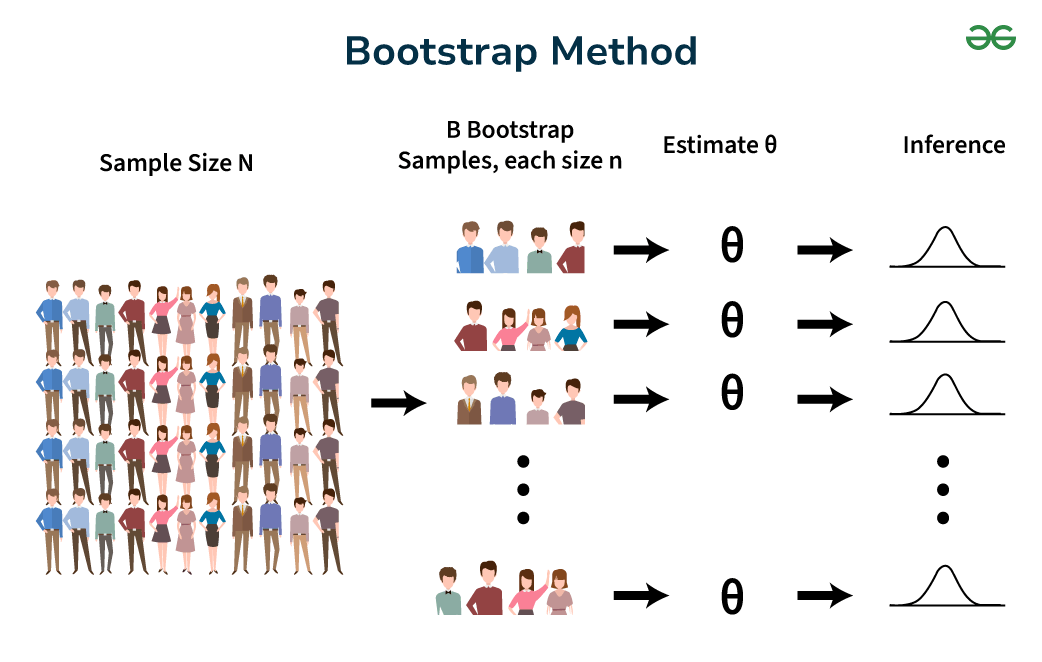

Let’s say your original sample consists of $n$ data points: $X_1, X_2, \ldots, X_n$. A single bootstrap sample, denoted as $X^{*1}$, would be a collection of $n$ observations drawn randomly from $\{X_1, X_2, \ldots, X_n\}$ with replacement. This process is repeated many times, say $B$ times, to generate $B$ bootstrap samples: $X^{*1}, X^{*2}, \ldots, X^{*B}$.

For each of these $B$ bootstrap samples, you calculate the statistic of interest. If you’re interested in the mean flight duration, you would compute the mean of $X^{*1}$, the mean of $X^{*2}$, and so on, up to the mean of $X^{*B}$. This results in a collection of $B$ estimated means, forming the “bootstrap distribution” of the mean.
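The procedure can be sketched in a few lines of Python using only the standard library. This is a minimal illustration, not a reference implementation: the flight durations are made-up values, and `bootstrap_means` is a hypothetical helper name. The standard deviation of the $B$ replicates serves as the bootstrap estimate of the standard error of the mean.

```python
import random
import statistics

def bootstrap_means(data, B=2000, seed=0):
    """Return B bootstrap replicates of the sample mean.

    Each replicate draws len(data) observations from `data` with
    replacement (one bootstrap sample X*b) and records its mean.
    """
    rng = random.Random(seed)  # seeded so the results are reproducible
    n = len(data)
    replicates = []
    for _ in range(B):
        sample = rng.choices(data, k=n)  # sampling WITH replacement
        replicates.append(statistics.fmean(sample))
    return replicates

# Hypothetical flight durations in minutes (illustrative values only).
durations = [21.4, 19.8, 23.1, 22.7, 18.9, 20.5, 24.0, 21.9, 19.2, 22.3]

boot = bootstrap_means(durations, B=2000)
print(f"sample mean:              {statistics.fmean(durations):.2f}")
print(f"bootstrap SE of the mean: {statistics.stdev(boot):.2f}")
```

The list `boot` is the bootstrap distribution of the mean; plotting it as a histogram shows its shape directly.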

Why “With Replacement” is Crucial

The “with replacement” aspect is fundamental. If sampling were done without replacement, each bootstrap sample would simply be a permutation of the original sample, so any statistic that ignores order (the mean, median, or variance) would take exactly the same value every time, yielding no information about variability. Sampling with replacement introduces variability because:

- Duplicates are likely: Some original data points will appear multiple times in a bootstrap sample.

- Some points are omitted: Conversely, some original data points might not appear in a given bootstrap sample at all.

In effect, the original sample stands in for the population, so this process approximates the variation that would occur if you were to draw many independent samples from the underlying population, even though you only have one original sample.
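Both effects can be checked directly. The sketch below uses synthetic Gaussian data (an assumption made purely for illustration) and resamples indices rather than values so that duplicates can be counted. Each original point is left out of a given bootstrap sample with probability $(1 - 1/n)^n$, which approaches $1/e \approx 0.368$ as $n$ grows, so on average only about 63.2% of the original points appear in any one bootstrap sample.

```python
import random
import statistics

rng = random.Random(42)
n = 1000
data = [rng.gauss(0.0, 1.0) for _ in range(n)]  # synthetic observations

# Without replacement: a same-size resample is only a reordering, so the
# mean never changes (fmean sums with math.fsum, which is order-independent).
shuffled = rng.sample(data, k=n)
mean_shift = abs(statistics.fmean(shuffled) - statistics.fmean(data))

# With replacement: resample indices so duplicates can be counted.
idx = rng.choices(range(n), k=n)
unique_fraction = len(set(idx)) / n
expected_fraction = 1 - (1 - 1 / n) ** n  # close to 1 - 1/e for large n

print(f"mean shift without replacement: {mean_shift}")
print(f"distinct originals in one bootstrap sample: {unique_fraction:.3f}")
print(f"theoretical expectation: {expected_fraction:.3f}")
```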

Applications in Tech & Innovation

The versatility of bootstrap sampling makes it an invaluable tool across various facets of technology development and analysis. Its ability to quantify uncertainty and assess the reliability of metrics without relying on strong distributional assumptions is particularly appealing in fields characterized by complex, emergent behaviors and data that may not conform to standard statistical models.

Evaluating Machine Learning Models

In the domain of artificial intelligence and machine learning, particularly with autonomous systems, drone navigation algorithms, or sensor fusion techniques, bootstrap sampling is widely used for model evaluation.

Estimating Model Performance Uncertainty

When developing a predictive model, such as one that forecasts optimal flight paths or identifies potential hazards for a drone, it’s crucial to understand how reliably it performs. Suppose you train a model on a dataset and achieve a certain accuracy. How confident are you in that accuracy?

Bootstrap sampling can be applied to the evaluation dataset. You can repeatedly draw bootstrap samples from your test set, re-evaluate the model on each bootstrap sample, and collect a distribution of performance metrics (e.g., accuracy, precision, recall, F1-score). This distribution allows you to:

- Calculate confidence intervals: For instance, a 95% confidence interval for the model’s accuracy can be constructed from the bootstrap distribution, most simply by taking its 2.5th and 97.5th percentiles (the percentile method). This gives a range of plausible values for the model’s true accuracy.

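- Assess model stability: If the performance metrics vary widely across bootstrap samples of the test set, the performance estimate itself is imprecise, often because the test set is small or heterogeneous. Resampling the test set does not show how the model would change with different training data; to probe that, the model must be retrained on bootstrap samples of the training set, and large swings in its performance then suggest it is highly sensitive to the specific training data and may not generalize well.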
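A minimal sketch of the test-set bootstrap with the percentile method, in plain Python. The labels and predictions are hypothetical, and `bootstrap_accuracy_ci` is an illustrative name, not a library function. Because only the (label, prediction) pairs are resampled and the model is never retrained, the interval reflects uncertainty due to the finite test set.

```python
import random
import statistics

def bootstrap_accuracy_ci(y_true, y_pred, B=2000, seed=0):
    """95% percentile bootstrap CI for classification accuracy.

    Resamples (label, prediction) pairs from the test set with
    replacement; the model itself is NOT retrained.
    """
    rng = random.Random(seed)
    pairs = list(zip(y_true, y_pred))
    n = len(pairs)
    accs = []
    for _ in range(B):
        sample = rng.choices(pairs, k=n)
        accs.append(sum(t == p for t, p in sample) / n)
    # 39 cut points at 1/40, ..., 39/40: the first and last are the
    # 2.5th and 97.5th percentiles of the bootstrap distribution.
    cuts = statistics.quantiles(accs, n=40, method="inclusive")
    return cuts[0], cuts[-1]

# Hypothetical test-set labels and model predictions (illustrative only).
y_true = [1, 0, 1, 1, 0, 1, 0, 0, 1, 1] * 20
y_pred = [1, 0, 1, 0, 0, 1, 1, 0, 1, 1] * 20

acc = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
lo, hi = bootstrap_accuracy_ci(y_true, y_pred)
print(f"accuracy = {acc:.3f}, 95% CI ~ [{lo:.3f}, {hi:.3f}]")
```

The same loop works for precision, recall, or F1: only the line that scores each resample changes.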

Hyperparameter Tuning and Model Selection

Bootstrap sampling can also aid in selecting the best model or tuning hyperparameters. By applying bootstrap to validation sets during hyperparameter optimization, you can get a more robust estimate of how each hyperparameter configuration performs, reducing the risk of selecting a configuration that performed well purely by chance on a specific split of the data.
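One way to apply this, sketched here under stated assumptions, is a paired bootstrap: draw one set of validation indices per replicate and score both configurations on that same resample. The per-example correctness vectors below are hypothetical, and `paired_bootstrap_win_rate` is an illustrative helper rather than a library API.

```python
import random

def paired_bootstrap_win_rate(correct_a, correct_b, B=2000, seed=0):
    """Fraction of bootstrap resamples in which configuration A beats B.

    correct_a[i] and correct_b[i] are 1 if the model tuned with that
    configuration handled validation example i correctly, else 0.
    The same resampled indices are scored for both configurations,
    so the comparison is paired.
    """
    rng = random.Random(seed)
    n = len(correct_a)
    wins = 0
    for _ in range(B):
        idx = rng.choices(range(n), k=n)
        score_a = sum(correct_a[i] for i in idx)
        score_b = sum(correct_b[i] for i in idx)
        wins += score_a > score_b  # ties do not count as wins
    return wins / B

# Hypothetical results on 100 validation examples: both right on 78,
# only A right on 4, only B right on 2, both wrong on 16.
correct_a = [1] * 78 + [1] * 4 + [0] * 2 + [0] * 16
correct_b = [1] * 78 + [0] * 4 + [1] * 2 + [0] * 16

rate = paired_bootstrap_win_rate(correct_a, correct_b)
print(f"A: {sum(correct_a)}%  B: {sum(correct_b)}%  "
      f"A wins in {rate:.0%} of resamples")
```

Configuration A has the higher raw accuracy here, yet it does not win in every resample; a win rate well short of 1 hints that the ranking may depend on which examples happened to land in the validation set.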

Analyzing Sensor Data and System Performance

Modern technological systems, from advanced UAVs to sophisticated mapping drones, rely on a multitude of sensors. The data generated by these sensors often exhibit complex patterns and potential noise.

Quantifying Uncertainty in Sensor Readings

Consider a system that fuses data from multiple GPS receivers and inertial measurement units (IMUs) to determine a drone’s precise location. Each sensor measurement has inherent noise. Bootstrap sampling can be used to:

- Estimate the uncertainty in calculated position: By bootstrapping the individual sensor readings, you can generate a distribution of estimated positions, providing a measure of the positional uncertainty. This is critical for applications requiring high precision, such as aerial surveying or autonomous landing.

- Assess the reliability of derived metrics: For example, in remote sensing applications, you might derive vegetation indices from multispectral imagery. Bootstrapping the pixel values can help quantify the confidence in these derived indices.
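The position case can be sketched as follows. The "fusion" step is deliberately simplified to a component-wise average of repeated fixes, an assumption standing in for a real GPS/IMU fusion filter; real sensor streams are usually time-correlated and would need the dependence-aware resampling mentioned under Important Considerations. The fixes are simulated, and `fused_position` is a hypothetical name.

```python
import random
import statistics

rng = random.Random(7)
# Hypothetical repeated fixes of a hovering drone: (east, north) offsets in
# meters from a surveyed point, simulated here with Gaussian noise.
fixes = [(rng.gauss(0.0, 1.5), rng.gauss(0.0, 1.5)) for _ in range(60)]

def fused_position(sample):
    """Toy stand-in for sensor fusion: component-wise mean of the fixes."""
    east = statistics.fmean([p[0] for p in sample])
    north = statistics.fmean([p[1] for p in sample])
    return east, north

B = 2000
# Resample whole (east, north) fixes with replacement and re-run the fusion.
boot = [fused_position(rng.choices(fixes, k=len(fixes))) for _ in range(B)]
east_se = statistics.stdev([e for e, _ in boot])
north_se = statistics.stdev([nv for _, nv in boot])

est_e, est_n = fused_position(fixes)
print(f"estimate: ({est_e:.2f}, {est_n:.2f}) m")
print(f"bootstrap SE: east {east_se:.2f} m, north {north_se:.2f} m")
```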

Robustness Testing of Algorithms

Any algorithm processing real-world data, especially in dynamic environments like flight, needs to be robust. Bootstrap sampling allows developers to test this by running the algorithm on many perturbed versions of the same dataset. Because each bootstrap sample over- or under-represents different observations, including any outliers present in the original data, one can observe how the algorithm’s output changes and identify potential failure points. Bootstrap samples only reuse observed values, however, so they cannot expose conditions that never appear in the original data.
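As a toy illustration, the sketch below compares two hypothetical altitude estimators (a plain mean and a median) on bootstrap samples of a simulated altimeter log that already contains a few glitch spikes. Using the same seed for both estimators means they see identical resamples; the smaller spread of the median-based estimate shows it is less sensitive to how many spikes a given resample happens to contain.

```python
import random
import statistics

rng = random.Random(3)
# Hypothetical altimeter log (m): 95 readings near 50 m plus 5 glitch spikes
# that are already present in the recorded data.
readings = [50.0 + rng.gauss(0.0, 0.5) for _ in range(95)]
readings += [80.0, 82.0, 79.5, 85.0, 90.0]

def bootstrap_spread(estimator, data, B=2000, seed=0):
    """Std. dev. of an estimator's output across bootstrap samples of data."""
    r = random.Random(seed)
    outputs = [estimator(r.choices(data, k=len(data))) for _ in range(B)]
    return statistics.stdev(outputs)

# Two hypothetical altitude estimators applied to the same resamples.
mean_spread = bootstrap_spread(statistics.fmean, readings)
median_spread = bootstrap_spread(statistics.median, readings)
print(f"mean-based estimate varies by   ~{mean_spread:.2f} m")
print(f"median-based estimate varies by ~{median_spread:.2f} m")
```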

Enabling Advancements in Autonomous Flight

The drive towards fully autonomous flight, whether for drones or other aerial vehicles, relies heavily on the ability to make safe and reliable decisions under uncertainty.

Evaluating Decision-Making Policies

An autonomous flight controller’s decision-making policy is crucial for safe navigation. This policy might be learned through reinforcement learning or designed through complex control logic. Bootstrap sampling can be employed to:

- Estimate the reliability of performance metrics: If a policy is evaluated based on metrics like the number of successful missions or average time to complete a task, bootstrapping these metrics on simulated or real-world flight data can provide confidence intervals, indicating how precisely these performance levels have been estimated from the available missions.

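- Test edge cases: By weighting the resampling towards specific challenging scenarios (e.g., high wind conditions, unexpected obstacles) instead of drawing every observation with equal probability as the standard bootstrap does, developers can assess how well the autonomous system’s performance holds up when those scenarios become more common.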
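The sketch below contrasts an ordinary bootstrap of a mission success rate with a weighted variant that draws high-wind missions more often. The mission log, the weighting factor of 4, and the helper `success_rate_replicates` are all hypothetical. Note that the weighted distribution no longer estimates the observed success rate; it answers a what-if question about a mission mix with more high-wind flights.

```python
import random
import statistics

# Hypothetical mission log: (succeeded, high_wind) for 100 missions.
missions = ([(True, False)] * 70 + [(False, False)] * 5
            + [(True, True)] * 15 + [(False, True)] * 10)

def success_rate_replicates(missions, wind_weight=1.0, B=2000, seed=0):
    """Bootstrap replicates of the mission success rate.

    wind_weight=1.0 gives the ordinary (uniform) bootstrap. Values above
    1.0 make high-wind missions proportionally more likely to be drawn,
    a deliberately skewed variant for stress-testing.
    """
    rng = random.Random(seed)
    weights = [wind_weight if high_wind else 1.0 for _, high_wind in missions]
    n = len(missions)
    reps = []
    for _ in range(B):
        sample = rng.choices(missions, weights=weights, k=n)
        reps.append(sum(ok for ok, _ in sample) / n)
    return reps

uniform = success_rate_replicates(missions)
stressed = success_rate_replicates(missions, wind_weight=4.0)
for name, reps in [("uniform", uniform), ("high-wind x4", stressed)]:
    cuts = statistics.quantiles(reps, n=40, method="inclusive")
    print(f"{name:>12}: mean {statistics.fmean(reps):.3f}, "
          f"95% interval [{cuts[0]:.3f}, {cuts[-1]:.3f}]")
```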

Understanding System Drift and Degradation

Over time, systems can experience drift or degradation in performance. Bootstrap sampling can be used retrospectively on historical data to assess the significance of observed changes in performance metrics. For example, if a fleet of drones shows a gradual increase in flight time failures, bootstrapping can help determine if this trend is statistically significant or within expected random variation.
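A simplified version of this check compares two periods rather than fitting a full trend. The sketch assumes each period's flights are independent, identically distributed outcomes; the counts are hypothetical, and `bootstrap_rate_diff_ci` is an illustrative name. If the resulting interval for the change in failure rate excludes zero, that is evidence the increase is more than random variation.

```python
import random
import statistics

def bootstrap_rate_diff_ci(before, after, B=5000, seed=0):
    """95% percentile CI for failure_rate(after) - failure_rate(before).

    Each period is resampled separately, with replacement, treating the
    two periods as independent samples of i.i.d. flights.
    """
    rng = random.Random(seed)
    diffs = []
    for _ in range(B):
        b = rng.choices(before, k=len(before))
        a = rng.choices(after, k=len(after))
        diffs.append(sum(a) / len(a) - sum(b) / len(b))
    cuts = statistics.quantiles(diffs, n=40, method="inclusive")
    return cuts[0], cuts[-1]

# Hypothetical outcomes per flight (1 = failure, 0 = success).
before = [1] * 6 + [0] * 194   # earlier period: 3% failure rate
after = [1] * 20 + [0] * 180   # later period: 10% failure rate

lo, hi = bootstrap_rate_diff_ci(before, after)
observed = sum(after) / len(after) - sum(before) / len(before)
print(f"observed increase: {observed:.3f}")
print(f"95% bootstrap CI: [{lo:.3f}, {hi:.3f}]")
```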

Advantages and Considerations

The power of bootstrap sampling lies in its simplicity, flexibility, and minimal assumptions. However, like any statistical technique, it also has limitations, so its advantages should be weighed against a few important considerations.

Key Advantages

- Non-parametric: It does not require assumptions about the underlying distribution of the data (e.g., normality). This is a significant advantage in technological applications where data distributions can be complex or unknown.

- Versatility: It can be used to estimate the sampling distribution of virtually any statistic, including means, medians, variances, correlations, regression coefficients, and more complex estimators.

- Intuitive: The concept of resampling to understand variability is relatively straightforward to grasp.

- Computational Feasibility: Although the bootstrap is computationally intensive, with modern computing power, performing thousands of bootstrap replications is feasible for most applications.
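The versatility point is easy to see in code: the resampling loop never changes, only the statistic passed in does. The sketch below, built on hypothetical (payload, flight time) pairs and illustrative helper names, applies one generic function to a median and to a correlation. For the correlation, whole pairs are resampled together so that the relationship between the two variables is preserved.

```python
import math
import random
import statistics

def bootstrap_se(data, statistic, B=2000, seed=0):
    """Bootstrap standard error of an arbitrary statistic.

    `data` is a list of observations (numbers or tuples) and `statistic`
    maps a list of observations to a single number.
    """
    rng = random.Random(seed)
    reps = [statistic(rng.choices(data, k=len(data))) for _ in range(B)]
    return statistics.stdev(reps)

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length lists."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

rng = random.Random(1)
# Hypothetical paired observations: (payload in kg, flight time in minutes).
pairs = []
for _ in range(80):
    payload = rng.uniform(0.0, 2.0)
    pairs.append((payload, 25.0 - 4.0 * payload + rng.gauss(0.0, 1.0)))

def median_flight_time(sample):
    return statistics.median([t for _, t in sample])

def payload_time_corr(sample):
    # Whole (payload, time) pairs were resampled together, keeping the pairing.
    return pearson([p for p, _ in sample], [t for _, t in sample])

median_se = bootstrap_se(pairs, median_flight_time)
corr_se = bootstrap_se(pairs, payload_time_corr)
print(f"median flight time {median_flight_time(pairs):.2f} min, SE {median_se:.2f}")
print(f"correlation {payload_time_corr(pairs):.3f}, SE {corr_se:.3f}")
```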

Important Considerations

- Sample Size: While bootstrap sampling can work with relatively small samples, its effectiveness increases with the size of the original sample. Very small original samples may not contain enough information to accurately represent the population.

- Representativeness of Original Sample: The bootstrap is only as good as the original sample. If the original sample is biased or not representative of the population of interest, the bootstrap estimates will also be biased.

- Independence of Observations: Bootstrap sampling assumes that the observations in the original sample are independent and identically distributed (i.i.d.). If there are strong dependencies (e.g., time series data with autocorrelation), standard bootstrap methods may need modification (e.g., block bootstrap).

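- Choice of Statistic: While the bootstrap can estimate the distribution of many statistics, its performance can vary. It is known to break down for some statistics, such as the sample maximum or other extreme order statistics, whose bootstrap distribution can be a poor approximation of the true sampling distribution. In such cases, analytical approximations or specialized variants might be more appropriate.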

- Number of Replications (B): The choice of $B$, the number of bootstrap samples, is important. A common recommendation is $B \ge 1000$, and often $B \ge 5000$ for more precise confidence intervals. The marginal gain in precision diminishes with very large $B$.
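For the dependence issue, here is a minimal sketch of one common modification, the moving block bootstrap, applied to the mean of a simulated AR(1) series standing in for autocorrelated telemetry. The AR coefficient of 0.8 and the block length of 25 are arbitrary choices for this illustration; in practice the block length has to be tuned to the data. The naive i.i.d. bootstrap is run alongside for comparison, and for positively autocorrelated data like this it understates the standard error.

```python
import random
import statistics

def moving_block_bootstrap_means(series, block_len, B=2000, seed=0):
    """Bootstrap replicates of the mean using a moving block bootstrap.

    Overlapping blocks of `block_len` consecutive values are drawn with
    replacement and concatenated until the original length is reached,
    so short-range autocorrelation inside each block is preserved.
    """
    rng = random.Random(seed)
    n = len(series)
    starts = range(n - block_len + 1)  # all overlapping block start indices
    reps = []
    for _ in range(B):
        resample = []
        while len(resample) < n:
            s = rng.choice(starts)
            resample.extend(series[s:s + block_len])
        reps.append(statistics.fmean(resample[:n]))  # trim to length n
    return reps

# Hypothetical autocorrelated telemetry: an AR(1) process with phi = 0.8.
rng = random.Random(5)
series, x = [], 0.0
for _ in range(500):
    x = 0.8 * x + rng.gauss(0.0, 1.0)
    series.append(x)

# Naive i.i.d. bootstrap of the mean, for comparison.
iid_rng = random.Random(0)
iid_means = [statistics.fmean(iid_rng.choices(series, k=len(series)))
             for _ in range(2000)]
iid_se = statistics.stdev(iid_means)
block_se = statistics.stdev(moving_block_bootstrap_means(series, block_len=25))
print(f"i.i.d. bootstrap SE: {iid_se:.3f}")
print(f"block bootstrap SE:  {block_se:.3f}")
```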

Conclusion

Bootstrap sampling stands as a cornerstone of modern statistical inference in data-rich and computationally driven fields like Tech & Innovation. Its ability to provide robust estimates of uncertainty, validate models, and analyze complex system performance without stringent distributional assumptions makes it an indispensable tool. From assessing the reliability of AI-driven autonomous flight systems to quantifying the precision of sensor data, bootstrap sampling empowers developers and researchers to make more informed decisions, build more trustworthy technologies, and push the boundaries of what is possible in the ever-evolving landscape of technological advancement.