In an increasingly data-driven world, understanding the fundamental units of digital information is a practical necessity for anyone who works with technology. Whether you are choosing a smartphone with enough storage, sizing the data an AI model needs, or processing large datasets from remote sensing platforms, knowing how digital information is measured helps users and professionals alike. Kilobytes and megabytes are among the most commonly encountered units, appearing in file sizes, download speeds, and storage capacities. The simple question, “What is bigger: megabytes or kilobytes?” is a gateway to the hierarchy of digital data units, a foundational concept in modern tech and innovation. This article explains these units, clarifies how they relate, and illustrates why they matter across several technological domains.

The Foundational Blocks of Digital Information: Bits and Bytes

At the heart of all digital data lies a binary system, a language of two states represented by 0s and 1s. This binary nature gives rise to the smallest units of information, which then combine to form larger, more comprehensible units that we interact with daily.

Bits: The Smallest Unit

A bit (binary digit) is the most elementary unit of information in computing and digital communications. It can represent one of two possible states: 0 or 1. Think of it as a single light switch that is either off (0) or on (1). While crucial for the underlying electronic processes, a single bit carries very little meaning on its own for human interpretation. Its power comes from its ability to combine with other bits to form more complex data representations. This fundamental unit is the bedrock upon which all digital data, from simple text characters to intricate algorithms driving autonomous systems, is built.

Bytes: Grouping Bits for Meaning

To create meaningful information, bits are grouped together. The most common grouping is eight bits, which form a byte. A single byte can represent a much wider range of values than a single bit: 2^8 (256) different values, enough to encode a single character (a letter, digit, or symbol) in standard character sets such as ASCII. Historically, a byte was the number of bits used to encode a single character of text, and it remains the smallest addressable unit of memory in most computer architectures. The byte is the base unit for measuring digital data, and all larger units are multiples of it.
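
As a small illustration of the eight-bit grouping, the Python sketch below computes how many values one byte can hold and shows the bit pattern behind a single ASCII character. The helper name `char_to_bits` is made up for this example.

```python
# Illustrative sketch: how eight bits make up one byte.

BITS_PER_BYTE = 8

# Number of distinct values one byte can hold: 2^8.
values_per_byte = 2 ** BITS_PER_BYTE


def char_to_bits(ch: str) -> str:
    """Return the 8-bit binary pattern of a single ASCII character."""
    code = ch.encode("ascii")[0]  # one ASCII character occupies one byte
    return format(code, "08b")    # zero-padded to eight binary digits


print(values_per_byte)                 # 256
print(char_to_bits("A"))               # 01000001
print(len("hello".encode("ascii")))    # 5 (five characters, five bytes)
```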

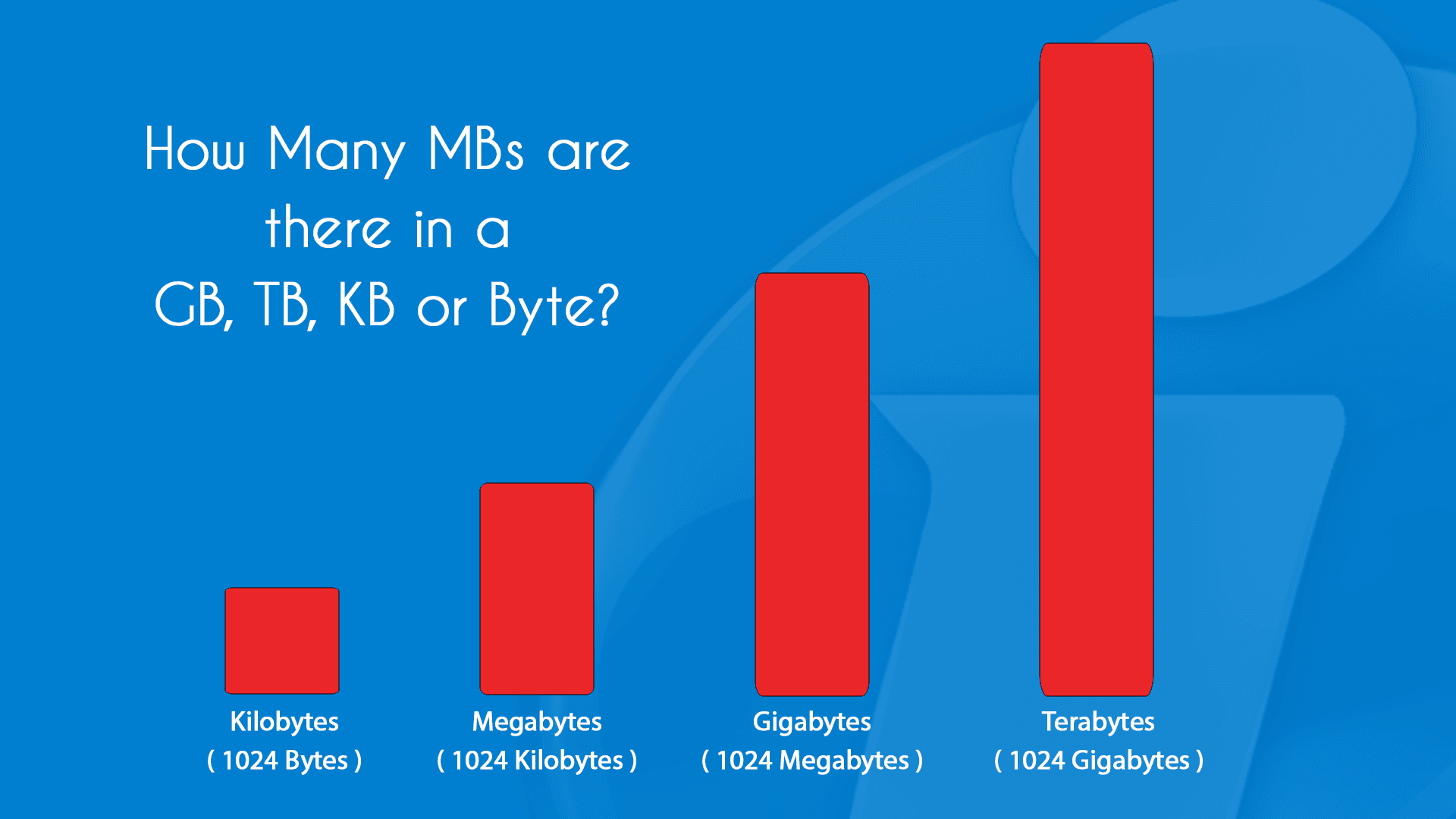

Scaling Up: Kilobytes, Megabytes, and Beyond

As digital data grew in complexity and volume, the need for larger, more convenient units of measurement became apparent. This led to the introduction of prefixes borrowed from the International System of Units (SI), albeit with a slight nuance in the digital realm.

Kilobytes (KB): The First Step Up

The kilobyte (KB) is the next significant step up from the byte. In the SI system, the prefix “kilo-” denotes a multiplier of 1,000. In computing, however, which is based on powers of two, a kilobyte has traditionally been understood as 2^10 bytes, which equals 1,024 bytes. To separate the two meanings, the IEC introduced the name kibibyte (KiB) for exactly 1,024 bytes, although “kilobyte” is still widely used for both. The gap between 1,000 and 1,024 can cause confusion, particularly when comparing advertised storage capacities (which usually use the SI definition of 1,000) with the capacity an operating system reports. For most practical purposes, especially when discussing file sizes or small data chunks, a kilobyte can be thought of as approximately one thousand bytes. Documents containing a few paragraphs of text, small images, and simple program files often fall into the kilobyte range. For example, a typical plain text email without attachments might be a few kilobytes in size.

Megabytes (MB): A Significant Leap

Building upon the kilobyte, the megabyte (MB) represents an even larger quantity of data. Using the power-of-two convention, one megabyte is 1,024 kilobytes, or 1,024 × 1,024 bytes, which equals 1,048,576 bytes. Under the SI definition, a megabyte is 1,000 kilobytes, or 1,000,000 bytes. Whichever definition is used (1,000 or 1,024), the conclusion is the same: a megabyte is roughly a thousand times larger than a kilobyte. Files that typically measure in megabytes include larger images, short video clips, MP3 audio files, and many common software applications. For instance, a high-resolution photograph can easily be several megabytes, and a short, compressed video might be tens of megabytes.
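
To make the two conventions concrete, here is a minimal Python sketch comparing the decimal and binary definitions of the kilobyte and megabyte. The constant names and the `gap_percent` helper are illustrative only and do not come from any standard library.

```python
# Illustrative constants for the two conventions described above.
KB_DECIMAL = 10 ** 3   # 1,000 bytes (SI)
KB_BINARY = 2 ** 10    # 1,024 bytes
MB_DECIMAL = 10 ** 6   # 1,000,000 bytes (SI)
MB_BINARY = 2 ** 20    # 1,024 * 1,024 bytes


def gap_percent(binary: int, decimal: int) -> float:
    """How much larger the binary unit is than the decimal one, in percent."""
    return (binary - decimal) / decimal * 100


print(MB_BINARY)                                       # 1048576
print(round(gap_percent(KB_BINARY, KB_DECIMAL), 2))    # 2.4
print(round(gap_percent(MB_BINARY, MB_DECIMAL), 2))    # 4.86
```

The gap widens with each prefix, which is why the mismatch is more noticeable on large drives than on small files.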

The Relationship: Kilobytes to Megabytes

To directly answer the question: Megabytes are significantly bigger than kilobytes.

Specifically:

- 1 Megabyte (MB) = 1,024 Kilobytes (KB) (using the binary definition prevalent in computing)

- 1 Megabyte (MB) = 1,000 Kilobytes (KB) (using the decimal definition common for marketing storage)

This means that a 5 MB file is five times as large as a 1 MB file, and that 5 MB equals exactly 5,120 KB under the binary definition (5 × 1,024) or 5,000 KB under the decimal definition (5 × 1,000). This proportional relationship is crucial for estimating data storage needs, understanding data transfer rates, and managing digital resources effectively. Recognizing this hierarchy, in which bits form bytes, bytes form kilobytes, and kilobytes form megabytes, is fundamental to navigating the digital landscape, and it becomes more important as data scales ever larger.
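
The conversion above can be sketched in a few lines of Python. The function name `mb_to_kb` and its `binary` flag are assumptions made for this example.

```python
def mb_to_kb(megabytes: float, binary: bool = True) -> float:
    """Convert megabytes to kilobytes.

    binary=True uses 1 MB = 1,024 KB; binary=False uses 1 MB = 1,000 KB.
    """
    factor = 1024 if binary else 1000
    return megabytes * factor


print(mb_to_kb(5))                 # 5120
print(mb_to_kb(5, binary=False))   # 5000
```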

Why These Units Matter in Modern Technology

Beyond simple size comparisons, the understanding of kilobytes and megabytes has profound implications for how we interact with, design, and innovate within the technological sphere. These units are critical for assessing performance, managing resources, and making informed decisions across various tech applications.

Data Storage: From Local Devices to Cloud Infrastructure

The most intuitive application of these units is in data storage. When you purchase a new device, be it a smartphone, a laptop, or an external hard drive, its storage capacity is almost always advertised in gigabytes (GB) or terabytes (TB), which are even larger units derived from megabytes. However, the files that fill this storage begin at the kilobyte and megabyte level. Understanding these foundational units allows users to gauge how many photos, documents, or applications can be stored. For cloud infrastructure and data centers, managing vast quantities of data, often scaling into petabytes and exabytes, hinges on an intimate understanding of how individual megabytes aggregate to form these colossal datasets. Efficient data storage and retrieval are paramount for the performance and scalability of modern tech services.
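
A rough way to gauge capacity is to divide the available bytes by a typical file size. The sketch below assumes decimal (SI) units for the advertised capacity and uses made-up example figures (a 64 GB device, 4 MB photos, a 256 GB drive). Both function names are hypothetical.

```python
GB_DECIMAL = 10 ** 9   # bytes in a gigabyte as used on product labels
MB_DECIMAL = 10 ** 6   # bytes in a decimal megabyte
GIB = 2 ** 30          # bytes in a binary gigabyte (gibibyte)


def files_that_fit(capacity_bytes: int, file_size_bytes: int) -> int:
    """Whole number of equally sized files that fit in the given capacity."""
    return capacity_bytes // file_size_bytes


def advertised_gb_to_gib(advertised_gb: float) -> float:
    """Capacity a binary-unit tool would report for a decimal-GB label."""
    return advertised_gb * GB_DECIMAL / GIB


# Example figures are assumptions, not measurements.
print(files_that_fit(64 * GB_DECIMAL, 4 * MB_DECIMAL))   # 16000
print(round(advertised_gb_to_gib(256), 1))               # 238.4
```

Real devices hold somewhat fewer files than this estimate, because the operating system and file system metadata also take up space.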

Network Speed and Bandwidth: Measuring Data Transfer

Data units are equally critical for understanding network performance. Internet service providers often advertise speeds in “megabits per second” (Mbps) or “gigabits per second” (Gbps), where a bit (not a byte) is the unit of transfer. While 8 bits make a byte, these measurements describe the rate at which data bits can be moved across a network. When downloading files, users typically see progress reported in “kilobytes per second” (KB/s) or “megabytes per second” (MB/s), indicating the rate at which whole bytes of data are being transferred. A clear distinction between bits and bytes is essential here to avoid confusion. A 100 Mbps internet connection theoretically allows for a maximum download speed of approximately 12.5 MB/s (100 divided by 8). Understanding this relationship helps in assessing internet plan suitability for data-intensive tasks like streaming high-definition content, conducting remote sensing data transfers, or participating in cloud-based AI training.
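
The bit-to-byte arithmetic can be sketched as follows. The function names are illustrative, and the result is a theoretical ceiling that ignores protocol overhead and network congestion.

```python
BITS_PER_BYTE = 8


def mbps_to_mb_per_s(megabits_per_second: float) -> float:
    """Convert a link speed in megabits/s to megabytes/s."""
    return megabits_per_second / BITS_PER_BYTE


def download_seconds(file_size_mb: float, link_mbps: float) -> float:
    """Best-case time to download a file of the given size."""
    return file_size_mb / mbps_to_mb_per_s(link_mbps)


print(mbps_to_mb_per_s(100))         # 12.5
print(download_seconds(500, 100))    # 40.0
```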

Software and Application Sizing: Installation and Performance

Every piece of software, from operating systems to mobile applications, has a specific file size measured in kilobytes, megabytes, or even gigabytes. Before installation, users check if they have enough free storage. Developers, on the other hand, constantly strive to optimize their code to reduce application size, thereby enhancing download speeds, minimizing storage footprint, and improving performance, especially for resource-constrained devices or in environments with limited bandwidth. The efficiency of data structures and algorithms directly impacts the number of bytes required to represent and process information, a core concern in software engineering and vital for the proliferation of lightweight, high-performance applications in areas like IoT and edge computing.
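
The pre-installation check mentioned above can be approximated with Python's standard `shutil.disk_usage`, which reports total, used, and free bytes for a path. The helper name `has_room_for` and the 250 MB figure are assumptions for illustration.

```python
import shutil


def has_room_for(install_size_bytes: int, path: str = ".") -> bool:
    """Return True if the volume holding `path` has enough free bytes."""
    free_bytes = shutil.disk_usage(path).free
    return free_bytes >= install_size_bytes


# Hypothetical 250 MB application (decimal megabytes).
app_size = 250 * 10 ** 6
print(has_room_for(app_size))
```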

Practical Implications in Advanced Tech & Innovation

The foundational understanding of kilobytes and megabytes takes on heightened significance in the realm of advanced technology and innovation, where massive datasets and intricate algorithms are the norms.

High-Resolution Data in AI and Machine Learning: Training Models

Artificial Intelligence (AI) and Machine Learning (ML) models thrive on data. The quality and quantity of this data directly impact the performance and accuracy of these systems. For instance, training an image recognition AI requires vast collections of high-resolution images, each potentially several megabytes in size. Video data, crucial for tasks like autonomous navigation or behavioral analysis, scales even faster, with each second often comprising many megabytes. The cumulative effect of millions or billions of such data points quickly leads to datasets in the gigabyte, terabyte, and even petabyte ranges. Researchers and engineers in AI must meticulously manage these data volumes, understanding how megabytes aggregate to inform decisions about storage, processing power, and data transfer pipelines essential for robust model training and deployment. Efficient handling of these data units translates directly into faster training times and more capable AI systems.
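
To see how quickly megabytes add up, the sketch below multiplies an item count by an average item size. The figures (one million images at 3 MB each) are hypothetical, and decimal units are assumed throughout.

```python
MB = 10 ** 6    # decimal megabyte, in bytes
TB = 10 ** 12   # decimal terabyte, in bytes


def dataset_size_bytes(num_items: int, avg_item_mb: float) -> int:
    """Approximate total size of a dataset of similarly sized items."""
    return int(num_items * avg_item_mb * MB)


total = dataset_size_bytes(1_000_000, 3)   # one million 3 MB images
print(total / TB)                          # 3.0 (terabytes)
```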

Remote Sensing and Mapping: Managing Gigabytes of Geospatial Data

Remote sensing technologies, from satellite imagery to advanced lidar systems, generate enormous quantities of geospatial data. Each captured pixel or data point carries information that contributes to the overall dataset. A single high-resolution satellite image can be hundreds of megabytes, and a large-scale mapping project involving continuous data acquisition can easily produce gigabytes or terabytes of data daily. Processing, storing, and transmitting this voluminous information for applications like environmental monitoring, urban planning, or disaster response necessitates a deep understanding of data units. Innovators in this field are constantly developing new compression algorithms and data management strategies to efficiently handle these massive data streams, ensuring timely insights from detailed geospatial intelligence. The efficient management of megabytes of raw sensor data is the first step towards producing actionable intelligence.
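
The uncompressed size of a raster image follows directly from its dimensions. As a sketch, assuming a hypothetical scene of 10,000 × 10,000 pixels with 4 spectral bands at 16 bits per sample:

```python
def raw_raster_bytes(width: int, height: int, bands: int,
                     bits_per_sample: int) -> int:
    """Uncompressed size of a raster: one sample per pixel per band."""
    total_bits = width * height * bands * bits_per_sample
    return total_bits // 8   # eight bits per byte


size = raw_raster_bytes(10_000, 10_000, bands=4, bits_per_sample=16)
print(size)              # 800000000
print(size / 10 ** 6)    # 800.0 (decimal megabytes)
```

Real products are often stored compressed or tiled, so on-disk sizes can be smaller than this raw figure.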

The Era of Big Data: Terabytes and Petabytes

The concept of “Big Data” refers to datasets so large and complex that traditional data processing software is inadequate to deal with them. These datasets are typically characterized by volume, velocity, and variety. While our focus began with kilobytes and megabytes, these small units are the building blocks that lead to the terabyte (1,024 GB, or approximately 1 trillion bytes) and petabyte (1,024 TB, or approximately 1 quadrillion bytes) scales of Big Data. Analyzing such immense volumes of information, often sourced from diverse sensors, IoT devices, and digital interactions, is fundamental to advancements in fields like personalized medicine, smart cities, and climate modeling. The ability to efficiently store, process, and query data at these scales depends on architectures designed with a clear understanding of how individual megabytes add up.
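
A small formatter makes the unit ladder easier to read. This is a minimal sketch: the function name `human_readable` and the unit list are assumptions, and the `base` parameter switches between the binary (1,024) and decimal (1,000) conventions.

```python
UNITS = ["B", "KB", "MB", "GB", "TB", "PB", "EB"]


def human_readable(num_bytes: int, base: int = 1024) -> str:
    """Format a byte count using the largest unit that keeps the value >= 1."""
    size = float(num_bytes)
    for unit in UNITS:
        # Stop at the first unit where the value fits, or at the last unit.
        if size < base or unit == UNITS[-1]:
            return f"{size:.2f} {unit}"
        size /= base


print(human_readable(1536))                  # 1.50 KB
print(human_readable(1024 ** 4))             # 1.00 TB
print(human_readable(10 ** 15, base=1000))   # 1.00 PB
```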

Navigating Data Efficiently: Best Practices

As the volume of digital information continues its exponential growth, efficient data management is not just convenient; it’s imperative. Understanding data units guides best practices in optimizing storage, transfer, and overall system performance.

Understanding File Compression

File compression is a vital tool for managing data efficiently. Because a megabyte represents a significant amount of information, developers and users look for ways to reduce a file’s size without losing critical data. Lossless formats such as ZIP find and encode redundancy so that the original can be restored exactly, while lossy formats such as JPEG for images, and the video codecs typically stored in MP4 files, also discard detail that viewers are unlikely to notice. Both approaches represent the same content in fewer bytes. A photograph that might originally be 10 MB can be compressed to 2 MB as a JPEG, significantly reducing storage requirements and speeding up transfer times. For large datasets in tech and innovation, advanced compression methods are critical for making massive data manageable for storage, transmission, and processing, especially in bandwidth-constrained environments.
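
Lossless compression is easy to try with Python's built-in `zlib` module, which implements the DEFLATE method commonly used inside ZIP archives. The sample data below is deliberately repetitive and invented for the demonstration, so the ratio it achieves is far better than typical real-world files would see.

```python
import zlib

# Highly redundant sample data (hypothetical sensor log lines).
original = b"sensor reading: 21.5 C\n" * 10_000

compressed = zlib.compress(original, 9)   # 9 = strongest compression level
restored = zlib.decompress(compressed)

print(len(original), "bytes before")
print(len(compressed), "bytes after")
print(restored == original)               # lossless: data restored exactly
```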

Optimizing Data Usage in Connected Devices

In an ecosystem of interconnected devices, from smart sensors to autonomous vehicles, optimizing data usage is crucial for battery life, network efficiency, and operational costs. Devices often need to transmit data wirelessly, and every kilobyte transferred consumes power and bandwidth. By intelligently processing data at the source (edge computing) and only transmitting essential information, or by using efficient data formats, innovators can reduce the megabytes sent over networks. This optimization extends the operational lifespan of remote devices, ensures more responsive AI systems, and enhances the reliability of data collection for diverse applications, from agricultural monitoring to environmental remote sensing.
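
One way to send fewer bytes is to choose a compact encoding. The sketch below compares a JSON message with a fixed binary layout built with Python's `struct` module. The field names, values, and the `<Hff` layout (one unsigned 16-bit integer and two 32-bit floats, little-endian) are assumptions for illustration.

```python
import json
import struct

# Hypothetical sensor reading.
reading = {"sensor_id": 17, "temperature_c": 21.5, "humidity_pct": 48.0}

# Text encoding: self-describing but verbose.
as_json = json.dumps(reading).encode("utf-8")

# Binary encoding: 2 + 4 + 4 bytes, field order agreed in advance.
as_binary = struct.pack(
    "<Hff",
    reading["sensor_id"],
    reading["temperature_c"],
    reading["humidity_pct"],
)

print(len(as_json), "bytes as JSON")
print(len(as_binary), "bytes as packed binary")   # 10
print(struct.unpack("<Hff", as_binary))           # (17, 21.5, 48.0)
```

The trade-off is that the binary form is no longer self-describing, so sender and receiver must agree on the layout ahead of time.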

Conclusion

The seemingly simple question, “What is bigger: megabytes or kilobytes?” unlocks a fundamental understanding of digital information that underpins virtually every aspect of modern technology and innovation. We’ve seen that megabytes are indeed substantially larger than kilobytes, serving as a critical benchmark for quantifying digital content. From the humble bit to the expansive petabyte, the hierarchy of data units provides the language for describing storage capacities, network speeds, and the demands of advanced computational tasks. For anyone involved in Tech & Innovation, whether designing AI algorithms, deploying remote sensing solutions, or simply managing digital assets, a clear grasp of these units is indispensable. It empowers more informed decisions, facilitates efficient resource management, and ultimately contributes to the development and deployment of more sophisticated, responsive, and data-aware technological solutions that continue to shape our world.