Latency, in the context of audio, refers to the delay between when an audio signal is generated or sent and when it is actually heard or processed. For most everyday audio applications, this delay is imperceptible and insignificant. However, for professionals and enthusiasts working with real-time audio, such as musicians performing live, audio engineers mixing tracks, or drone pilots utilizing FPV (First-Person View) systems, audio latency can be a critical factor impacting performance, usability, and the overall experience. Understanding and managing audio latency is paramount in these specialized fields.

The Impact of Latency in Real-Time Audio Applications

In the realm of audio, latency is not merely a technical inconvenience; it is a tangible barrier that can directly affect the quality of creative output and the efficacy of a system. For musicians, a noticeable delay between pressing a key on a MIDI controller and hearing the resulting sound can make intricate passages very difficult to perform, throwing off timing and rhythm. Similarly, during live mixing, engineers need to hear the impact of their adjustments immediately. A noticeable delay means they are reacting to stale audio, so each correction is judged against a sound that has already moved on, and errors compound.

Beyond performance, latency can also influence perception and immersion. In interactive audio experiences, where sound is directly tied to user actions, high latency can break the illusion of real-time responsiveness, leading to a disconnect between the user and the system. This is particularly relevant in applications where audio is a primary feedback mechanism.

Musicians and Live Performance

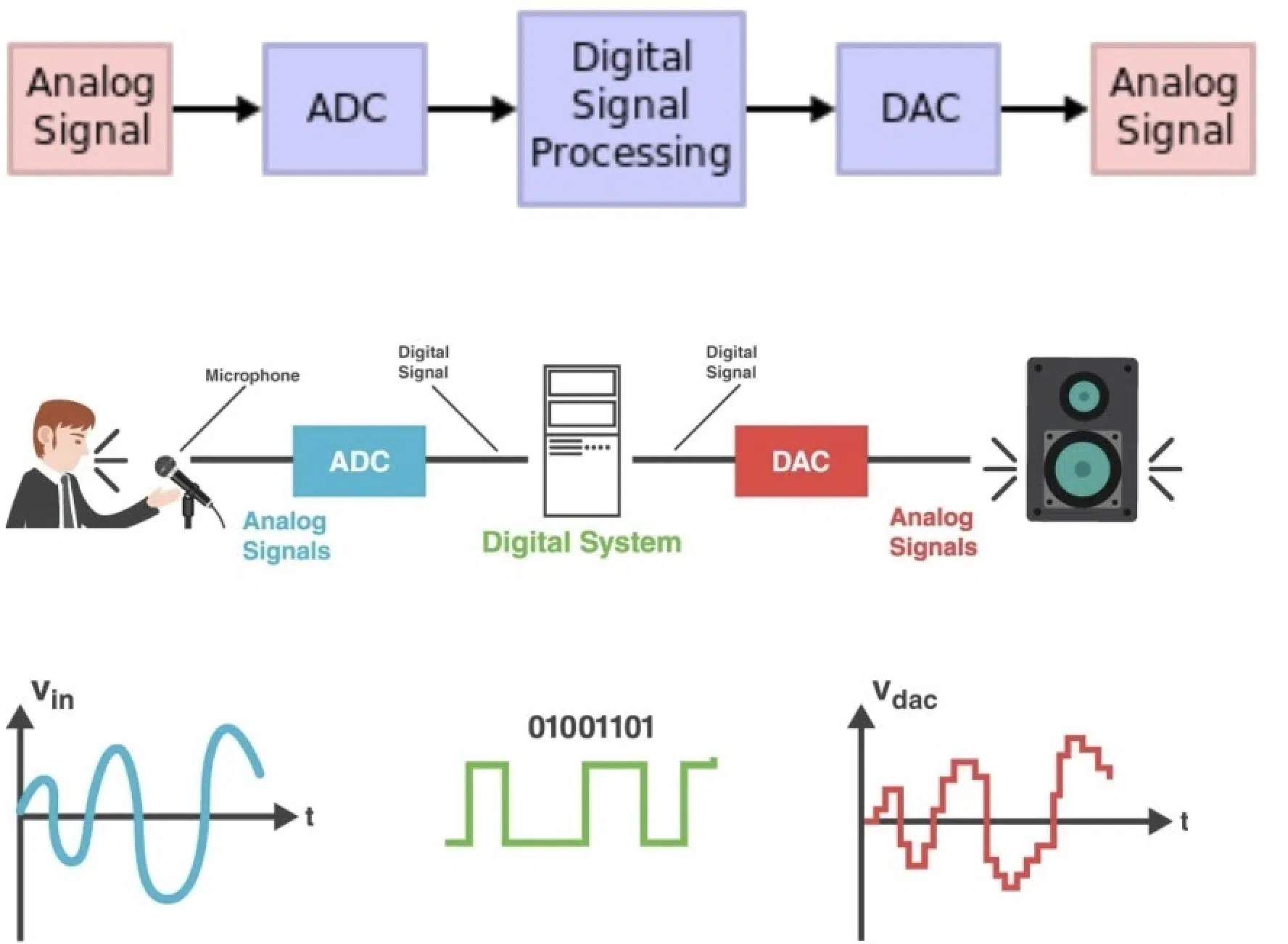

For musicians, especially those relying on digital audio workstations (DAWs) and electronic instruments, latency is a constant consideration. When a musician plays through a computer-based setup, the audio passes through several processing stages before reaching their ears. For an acoustic or electric instrument recorded through an audio interface, these are analog-to-digital conversion, digital signal processing (DSP) on the computer or dedicated hardware, and finally digital-to-analog conversion; a virtual instrument triggered over MIDI skips the input conversion but still passes through the software processing and output stages. Each of these steps contributes to the overall latency.
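Because these stages run one after another, their delays simply add up. The sketch below totals a latency budget for the chain just described; the stage names follow the paragraph, but the millisecond values are illustrative assumptions, not measurements of any particular interface or driver.

```python
# Illustrative per-stage delays in milliseconds (assumed values; measure your own system).
ASSUMED_STAGES_MS = {
    "A/D conversion": 0.5,
    "input buffer": 2.9,   # about 128 samples at 44.1 kHz
    "DSP / plugins": 0.0,  # assumes only zero-latency plugins are in use
    "output buffer": 2.9,
    "D/A conversion": 0.5,
}


def total_latency_ms(stages: dict[str, float]) -> float:
    """Stages run in series, so the total delay is the sum of the stage delays."""
    return sum(stages.values())


if __name__ == "__main__":
    for name, ms in ASSUMED_STAGES_MS.items():
        print(f"{name:>15}: {ms:4.1f} ms")
    print(f"{'total':>15}: {total_latency_ms(ASSUMED_STAGES_MS):4.1f} ms")
```

With these assumed figures the chain totals about 6.8 ms, and the two buffer stages account for most of it.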

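MIDI Latency: A common scenario is using a MIDI controller to trigger virtual instruments within a DAW. The MIDI message itself contributes comparatively little delay (on a classic 5-pin DIN connection running at 31,250 bit/s, a three-byte Note On message takes just under 1 ms to transmit); most of the delay comes from the subsequent audio generation and playback. The faster the computer and the more efficient the audio drivers, the smaller the buffers that can be used reliably, and the lower this latency can be. Musicians often strive for “near-zero” latency, aiming to keep the total delay (and, when monitoring an audio input, the round-trip time from input to output) below about 10 milliseconds (ms) for comfortable playing.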
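A little arithmetic shows why the MIDI transport is rarely the bottleneck. This sketch assumes a classic 5-pin DIN connection at its standard 31,250 bit/s, with each byte framed as one start bit, eight data bits and one stop bit; USB MIDI has different timing and is not modelled. The 256-sample, 48 kHz output buffer is an assumed DAW setting chosen for comparison.

```python
MIDI_DIN_BAUD = 31_250  # bit/s, the standard rate of a 5-pin DIN MIDI connection
BITS_PER_BYTE = 10      # each byte is framed as 1 start bit + 8 data bits + 1 stop bit


def din_transmission_ms(num_bytes: int) -> float:
    """Time to send `num_bytes` over a DIN MIDI cable, in milliseconds."""
    return num_bytes * BITS_PER_BYTE / MIDI_DIN_BAUD * 1000.0


def output_buffer_ms(buffer_size: int, sample_rate: int) -> float:
    """Duration of one audio output buffer, in milliseconds."""
    return buffer_size / sample_rate * 1000.0


if __name__ == "__main__":
    note_on = din_transmission_ms(3)        # status byte + note number + velocity
    buffer = output_buffer_ms(256, 48_000)  # assumed DAW buffer setting
    print(f"Note On over DIN: {note_on:.2f} ms")
    print(f"One 256-sample buffer at 48 kHz: {buffer:.2f} ms")
```

Even this modest buffer (about 5.33 ms) costs more than five times as much as sending the Note On message (0.96 ms), which is why buffer settings matter far more than the MIDI link itself.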

Monitoring: During live performances where digital effects or backing tracks are used, musicians need to monitor their own performance with minimal delay. This is often achieved through direct monitoring options on audio interfaces or by employing dedicated hardware monitoring systems that bypass the computer’s processing entirely.

Audio Engineering and Production

Audio engineers, whether in a studio setting or mixing live sound, rely on precise timing and immediate feedback. Latency can compromise the accuracy of their work.

Mixing and Mastering: In mixing, when applying effects, adjusting levels, or panning, engineers need to hear the consequence of each adjustment promptly. High latency makes controls feel sluggish and can lead to misjudgments, especially during fine, iterative adjustments where each change must be compared with the last. In mastering, where subtle adjustments can have a significant impact, latency can hinder the fine-tuning process.

Live Sound Reinforcement: For live sound engineers, managing latency across a complex system of microphones, mixers, signal processors, and loudspeaker systems is crucial. Delay accumulates both in the electronics and in the air, where sound travels at roughly 343 m/s (about 3 ms per metre); if signals reach different parts of a venue at different times, the result can be phase issues, audible echoes, and a generally degraded listening experience for the audience. Significant audio latency also makes it harder to keep audio synchronized with visual elements in live events.
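One routine calculation in live sound is how much to delay a fill loudspeaker placed partway into the venue so that its output arrives together with the sound from the main system. The sketch below assumes the fill sits on a straight line between the main PA and the listener, uses about 343 m/s for the speed of sound (dry air at roughly 20 °C), and ignores any processing latency already present in either signal path; the function names are illustrative.

```python
SPEED_OF_SOUND_M_S = 343.0  # approximate value in dry air at about 20 °C


def fill_delay_ms(distance_m: float, speed_m_s: float = SPEED_OF_SOUND_M_S) -> float:
    """Delay for a fill speaker placed `distance_m` further out than the main PA."""
    return distance_m / speed_m_s * 1000.0


def fill_delay_samples(distance_m: float, sample_rate: int = 48_000) -> int:
    """The same delay in whole samples, as many digital processors expect."""
    return round(fill_delay_ms(distance_m) / 1000.0 * sample_rate)


if __name__ == "__main__":
    for d in (10.0, 25.0, 50.0):
        print(f"{d:5.1f} m -> {fill_delay_ms(d):6.1f} ms ({fill_delay_samples(d)} samples)")
```

A fill 34.3 m from the main PA, for example, needs about 100 ms of delay, or 4,800 samples at 48 kHz.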

Factors Contributing to Audio Latency

Several components and processes within an audio system can introduce or exacerbate latency. Identifying these points of delay is the first step in mitigating them.

Hardware and Drivers

The quality and design of audio hardware, particularly audio interfaces, play a significant role.

Audio Interfaces: These devices convert analog audio signals to digital for processing by a computer and then convert digital signals back to analog for playback through speakers or headphones. The converters' internal digital filtering and the interface's connection to the computer (such as USB or Thunderbolt) each add a small, usually fixed, amount of latency.

Drivers: Audio drivers are the software that allows the operating system and applications to communicate with the audio hardware. Low-latency audio paths, such as ASIO (Audio Stream Input/Output) drivers on Windows or the Core Audio framework on macOS, are designed for high performance and low latency. On Windows, using the generic MME or DirectSound driver paths instead will often result in significantly higher latency.

Software Processing and Buffering

The software environment where audio is processed is a major source of latency.

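Digital Signal Processing (DSP): When audio is manipulated by effects plugins, virtual instruments, or other software-based processors, these operations take time to compute, and every buffer must be finished before the next one is due. Some algorithms also add a fixed delay of their own because they need to see upcoming samples before producing output; look-ahead limiters and linear-phase equalizers are typical examples. Complex DSP algorithms can therefore introduce more latency than simpler ones.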
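One common way a processor adds latency is by needing to see upcoming samples before it can produce output. The sketch below is a minimal look-ahead peak limiter written to show that cost; it is deliberately simplified (a hard gain computed per sample, with no attack or release smoothing), and the function name and parameters are illustrative rather than taken from any plugin API.

```python
from collections import deque
from typing import Iterable


def lookahead_limiter(samples: Iterable[float], lookahead: int, ceiling: float = 1.0) -> list[float]:
    """Minimal look-ahead peak limiter (no attack/release smoothing).

    Each sample waits in a delay line for `lookahead` steps, so its gain can be
    computed from the peak of the samples that follow it. The price is a fixed
    output delay of exactly `lookahead` samples.
    """
    delay = deque([0.0] * lookahead)  # pre-filled with silence
    out = []
    # Append `lookahead` zeros to flush the last real samples out of the delay line.
    for x in list(samples) + [0.0] * lookahead:
        delay.append(x)
        current = delay.popleft()  # the sample that entered `lookahead` steps ago
        peak = max([abs(current)] + [abs(s) for s in delay])  # current + upcoming samples
        gain = 1.0 if peak <= ceiling else ceiling / peak
        out.append(current * gain)
    return out


if __name__ == "__main__":
    print(lookahead_limiter([0.5, 2.0, 0.5], lookahead=2))
```

The example prints `[0.0, 0.0, 0.25, 1.0, 0.5]`: the first real output appears exactly two samples late, the 2.0 peak is held at the 1.0 ceiling, and the 0.5 before it is already turned down because the limiter saw the peak coming.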

Buffering: To ensure smooth playback and prevent audio dropouts, audio systems move audio between the hardware and the software in buffers: blocks containing a fixed number of samples. A buffer of N samples at a sample rate of f_s takes N / f_s seconds to fill, so a larger buffer reduces the likelihood of glitches but increases latency, as the system has to wait for a larger block of data to be filled before processing can begin. Conversely, a smaller buffer size reduces latency but increases the risk of audio dropouts if the system cannot process each block before the next one is due. Finding the optimal buffer size is a balance between responsiveness and stability.
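The buffer trade-off can be put in numbers: a buffer of N samples at sample rate f_s lasts N / f_s seconds. The sketch below tabulates that for common sizes and adds a rough round-trip estimate. It assumes the signal passes through exactly one input and one output buffer plus a fixed 1 ms of converter and driver overhead; both are simplifying assumptions, since many drivers add extra safety buffers.

```python
def buffer_latency_ms(buffer_size: int, sample_rate: int) -> float:
    """Time needed to fill one buffer of `buffer_size` samples, in milliseconds."""
    return buffer_size / sample_rate * 1000.0


def estimated_round_trip_ms(buffer_size: int, sample_rate: int, overhead_ms: float = 1.0) -> float:
    """Rough round trip: one input buffer + one output buffer + assumed fixed overhead.

    `overhead_ms` stands in for converter and driver delays; 1.0 ms is an
    illustrative guess, not a measured figure.
    """
    return 2 * buffer_latency_ms(buffer_size, sample_rate) + overhead_ms


if __name__ == "__main__":
    for n in (32, 64, 128, 256, 512, 1024):
        per_buffer = buffer_latency_ms(n, 48_000)
        round_trip = estimated_round_trip_ms(n, 48_000)
        print(f"{n:5d} samples @ 48 kHz: {per_buffer:6.2f} ms per buffer, ~{round_trip:6.2f} ms round trip")
```

Each doubling of the buffer doubles its contribution to the delay, which is why dropping from 512 to 128 samples is so noticeable to a performer.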

Sample Rate and Bit Depth: Sample rate affects latency directly, because buffer sizes are usually set in samples: a 256-sample buffer lasts about 5.8 ms at 44.1 kHz but only about 2.7 ms at 96 kHz. At the same time, higher sample rates mean more audio data to process per second, which places a greater demand on the CPU and may force a larger buffer on a system that is not powerful enough. Bit depth has no direct effect on latency; it only changes how much data is moved and processed.
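The two opposing effects of sample rate can be compared side by side: how long a fixed-size buffer lasts, and how much raw audio the system must handle each second. This sketch assumes uncompressed PCM, stereo, 24-bit audio and a 256-sample buffer; the function names are illustrative.

```python
def buffer_duration_ms(buffer_size: int, sample_rate: int) -> float:
    """How long a buffer of `buffer_size` samples lasts at `sample_rate`."""
    return buffer_size / sample_rate * 1000.0


def data_rate_bytes_per_s(sample_rate: int, bit_depth: int, channels: int) -> float:
    """Raw PCM data the system must move and process every second."""
    return sample_rate * channels * bit_depth / 8


if __name__ == "__main__":
    for rate in (44_100, 96_000):
        print(
            f"{rate / 1000:5.1f} kHz: 256-sample buffer = {buffer_duration_ms(256, rate):.2f} ms, "
            f"stereo 24-bit = {data_rate_bytes_per_s(rate, 24, 2) / 1000:.1f} kB/s"
        )
```

Moving from 44.1 kHz to 96 kHz roughly halves the buffer's duration (5.80 ms to 2.67 ms) while more than doubling the data rate (264.6 kB/s to 576.0 kB/s), which is the trade-off described above.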

Network Latency (for Remote Audio)

In scenarios involving networked audio, such as remote collaboration, live streaming, or even certain FPV systems, network latency becomes a dominant factor.

Transmission Delay: The time it takes for audio data to travel across a network from the source to the destination depends on the physical distance (signals in optical fiber cover roughly 200 km per millisecond), the speed and number of links along the path, and network congestion, which adds queueing delay.
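A rough one-way delay estimate can be broken into components. Besides propagation, serialization and queueing, the sketch includes packetization: the time spent collecting enough samples to fill each packet before it can be sent. It assumes a single link (real paths cross several hops, each adding its own serialization and queueing delay), takes queueing as a caller-supplied guess rather than modelling it, and uses about 200 km per millisecond for propagation in fiber. The function and parameter names are hypothetical.

```python
FIBER_KM_PER_MS = 200.0  # light in optical fiber covers roughly 200 km per millisecond


def one_way_delay_ms(
    distance_km: float,
    samples_per_packet: int,
    sample_rate: int,
    packet_bytes: int,
    link_bps: float,
    queueing_ms: float = 0.0,
) -> dict[str, float]:
    """Break a one-way audio-over-network delay into its main components (ms)."""
    parts = {
        "packetization": samples_per_packet / sample_rate * 1000.0,  # waiting to fill a packet
        "propagation": distance_km / FIBER_KM_PER_MS,                # travel time along the fiber
        "serialization": packet_bytes * 8 / link_bps * 1000.0,       # clocking bits onto one link
        "queueing": queueing_ms,                                     # congestion; assumed, not modelled
    }
    parts["total"] = sum(parts.values())
    return parts


if __name__ == "__main__":
    # Hypothetical case: 1000 km of fiber, 48 samples per packet at 48 kHz,
    # 300-byte packets on a 1 Gbit/s link, no congestion.
    print(one_way_delay_ms(1000, 48, 48_000, 300, 1e9))
```

In this hypothetical case propagation (5 ms) and packetization (1 ms) dominate, while serialization on a gigabit link is only a few microseconds.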

Synchronization: When multiple audio streams are being transmitted over a network, maintaining synchronization between them can be a challenge. This is particularly important in applications like videoconferencing where audio needs to align with video.
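A common way to synchronize streams is to hold back the faster ones so that every stream plays out with the same total delay as the slowest. This sketch assumes each stream's end-to-end latency is already known; in practice it is derived from timestamps carried with the media. The function name and the example figures are hypothetical.

```python
def alignment_delays_ms(latencies_ms: dict[str, float]) -> dict[str, float]:
    """Extra delay to add to each stream so all of them line up with the slowest one."""
    slowest = max(latencies_ms.values())
    return {name: slowest - latency for name, latency in latencies_ms.items()}


if __name__ == "__main__":
    # Assumed end-to-end latencies: video processing takes longer than audio here.
    print(alignment_delays_ms({"video": 120.0, "audio": 40.0}))
```

The result, `{'video': 0.0, 'audio': 80.0}`, means the audio is delayed by 80 ms to stay in sync. Alignment therefore raises every stream to the worst-case latency, which is one reason low latency in each individual path matters.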

Minimizing and Managing Audio Latency

For applications where low audio latency is critical, several strategies can be employed to minimize and manage it.

Optimizing Hardware and Software Settings

The first line of defense against high latency involves meticulous configuration of the audio system.

High-Performance Audio Interfaces: Investing in professional-grade audio interfaces known for their low-latency performance and robust driver support is a fundamental step.

Low-Latency Drivers: Ensure that the correct, optimized drivers (e.g., ASIO on Windows) are installed and selected within audio applications.

Buffer Size Adjustment: Experiment with buffer size settings in the DAW or audio application. Start with a small buffer and increase it one step at a time until playback is free of dropouts; the smallest size that plays reliably gives the lowest usable latency. The optimal setting will vary depending on the computer’s processing power and the complexity of the audio project.
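The step-by-step search can be sketched as a loop over common buffer sizes. Here a hypothetical cost model stands in for "plays without dropouts": processing each buffer must finish before the buffer's own duration has elapsed, with some headroom to spare. The cost model (a fixed per-callback overhead plus a per-sample cost), its parameter values and the 70% headroom are all illustrative assumptions; on a real system you would listen for dropouts or watch the DAW's performance meter instead.

```python
from typing import Optional

CANDIDATE_SIZES = (32, 64, 128, 256, 512, 1024, 2048)  # typical power-of-two settings


def callback_cost_ms(buffer_size: int, fixed_overhead_ms: float, per_sample_us: float) -> float:
    """Assumed cost model: a fixed per-callback overhead plus a per-sample cost."""
    return fixed_overhead_ms + buffer_size * per_sample_us / 1000.0


def smallest_stable_buffer(
    sample_rate: int,
    fixed_overhead_ms: float,
    per_sample_us: float,
    headroom: float = 0.7,
) -> Optional[int]:
    """Walk up from the smallest size until processing fits inside the buffer's duration.

    `headroom` keeps a safety margin: the callback may use at most that fraction
    of the time the buffer lasts. Returns None if no candidate size is stable.
    """
    for size in CANDIDATE_SIZES:
        budget_ms = size / sample_rate * 1000.0 * headroom
        if callback_cost_ms(size, fixed_overhead_ms, per_sample_us) <= budget_ms:
            return size
    return None


if __name__ == "__main__":
    # Hypothetical project: 0.5 ms fixed overhead, 10 microseconds of DSP per sample.
    print(smallest_stable_buffer(48_000, fixed_overhead_ms=0.5, per_sample_us=10.0))
```

For the hypothetical project this returns 128. The fixed overhead explains why very small buffers fail: it does not shrink with the buffer, so it eats a larger share of a shorter time budget. If the per-sample cost alone exceeds the budget, no buffer size helps and the function returns None.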

CPU Optimization: Close unnecessary background applications that consume CPU resources. Some DAWs offer features for optimizing CPU performance. Ensure the computer’s power settings are configured for maximum performance.

Choosing Efficient Processing Tools

The selection of audio software and plugins can also influence latency.

Optimized Plugins: Some audio plugins are more CPU-intensive than others, which pushes the system toward larger buffers, and some (such as look-ahead processors) add latency of their own. Opting for well-optimized plugins can make a difference.

Low-Latency Monitoring Options: Utilize direct monitoring features on audio interfaces, which allow the input signal to be sent directly to the output without passing through the computer’s processing.

Offline Processing: For tasks that do not require real-time feedback, such as applying final mastering effects or other CPU-heavy processing, consider performing them offline. Offline rendering is not bound by real-time deadlines, so the system can take as long as it needs for each block without risking dropouts.

Network Considerations for Remote Audio

When dealing with networked audio, network performance is key.

Stable Network Connection: A wired Ethernet connection is generally preferred over Wi-Fi for its stability and lower latency.

Quality of Service (QoS): In network configurations, QoS settings can prioritize audio traffic over other data, reducing interference and latency.
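QoS has two halves: the sending application marks its packets, and the network switches and routers must be configured to trust and prioritize that marking. The sketch below shows only the sender's half for IPv4 UDP, marking packets with the DSCP value for Expedited Forwarding (46, defined in RFC 3246 and commonly used for real-time media). It assumes an operating system that honours the `IP_TOS` socket option (Linux does; some platforms ignore or restrict it), and the helper names are illustrative.

```python
import socket

DSCP_EF = 46  # Expedited Forwarding (RFC 3246), commonly used for real-time media


def tos_from_dscp(dscp: int) -> int:
    """DSCP occupies the upper six bits of the IPv4 TOS byte."""
    if not 0 <= dscp < 64:
        raise ValueError("DSCP must be a 6-bit value")
    return dscp << 2


def make_marked_udp_socket(dscp: int = DSCP_EF) -> socket.socket:
    """Create a UDP socket whose outgoing packets carry the given DSCP marking."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    # Only effective if the OS applies it and the network is configured to trust it.
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, tos_from_dscp(dscp))
    return sock
```

A sender would then call `sock.sendto(payload, ("192.0.2.10", 5004))`, where the address and port are placeholders. Without matching QoS configuration on the network, the marking has no effect on latency.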

Low-Latency Protocols: Specialized audio-over-IP protocols designed for low latency, such as Dante or AVB, are used in professional installations to achieve near-real-time audio distribution over networks.

The Evolution of Low-Latency Audio Technology

The pursuit of lower audio latency has been a driving force in the development of audio technology. From the early days of digital audio, where latency was often measured in hundreds of milliseconds, to today’s sophisticated systems capable of achieving round-trip times in the single-digit milliseconds, the progress has been remarkable. This evolution has opened up new possibilities for real-time audio manipulation, interactive sound design, and immersive audio experiences.

Advances in Digital Signal Processing

Improvements in processor power and the algorithms used for DSP have been instrumental. More efficient processing allows for complex audio tasks to be completed in shorter timeframes.

Hardware Innovations

The design and integration of components within audio interfaces and other audio hardware continue to evolve. Dedicated low-latency audio chips and optimized internal routing contribute to reduced delay.

Software and Driver Development

The ongoing development of low-latency driver models and audio frameworks (ASIO on Windows, Core Audio on macOS) and the optimization of operating-system audio architectures have significantly lowered the barriers to achieving near-real-time audio.

Conclusion: The Criticality of Low Latency

In summary, audio latency is the measurable delay between the generation of an audio signal and its perceived output. While often negligible in casual listening, it becomes a critical parameter in professional audio applications such as music production, live performance, and specialized audio-visual systems. Understanding the factors that contribute to latency—hardware limitations, driver inefficiencies, software processing, and network conditions—is essential for effective management. By employing strategies like optimizing hardware settings, utilizing low-latency drivers, carefully managing buffer sizes, and selecting efficient processing tools, users can significantly minimize audio latency. The continuous advancements in digital signal processing, hardware design, and software development are steadily pushing the boundaries of what is achievable in real-time audio, ensuring a more responsive, immersive, and professional audio experience across a diverse range of applications.