In an age defined by data, the ability to efficiently gather, process, and analyze information is paramount for technological advancement and strategic decision-making. From powering advanced algorithms in artificial intelligence to informing the design of cutting-edge hardware, data is the raw material of innovation. Among the sophisticated tools developed to harness this deluge of information, the website scraper stands out as a powerful and transformative technology. Far more than a simple copy-paste mechanism, a website scraper is an automated marvel designed to extract vast quantities of structured and unstructured data from the internet, converting the web’s sprawling information into actionable insights.

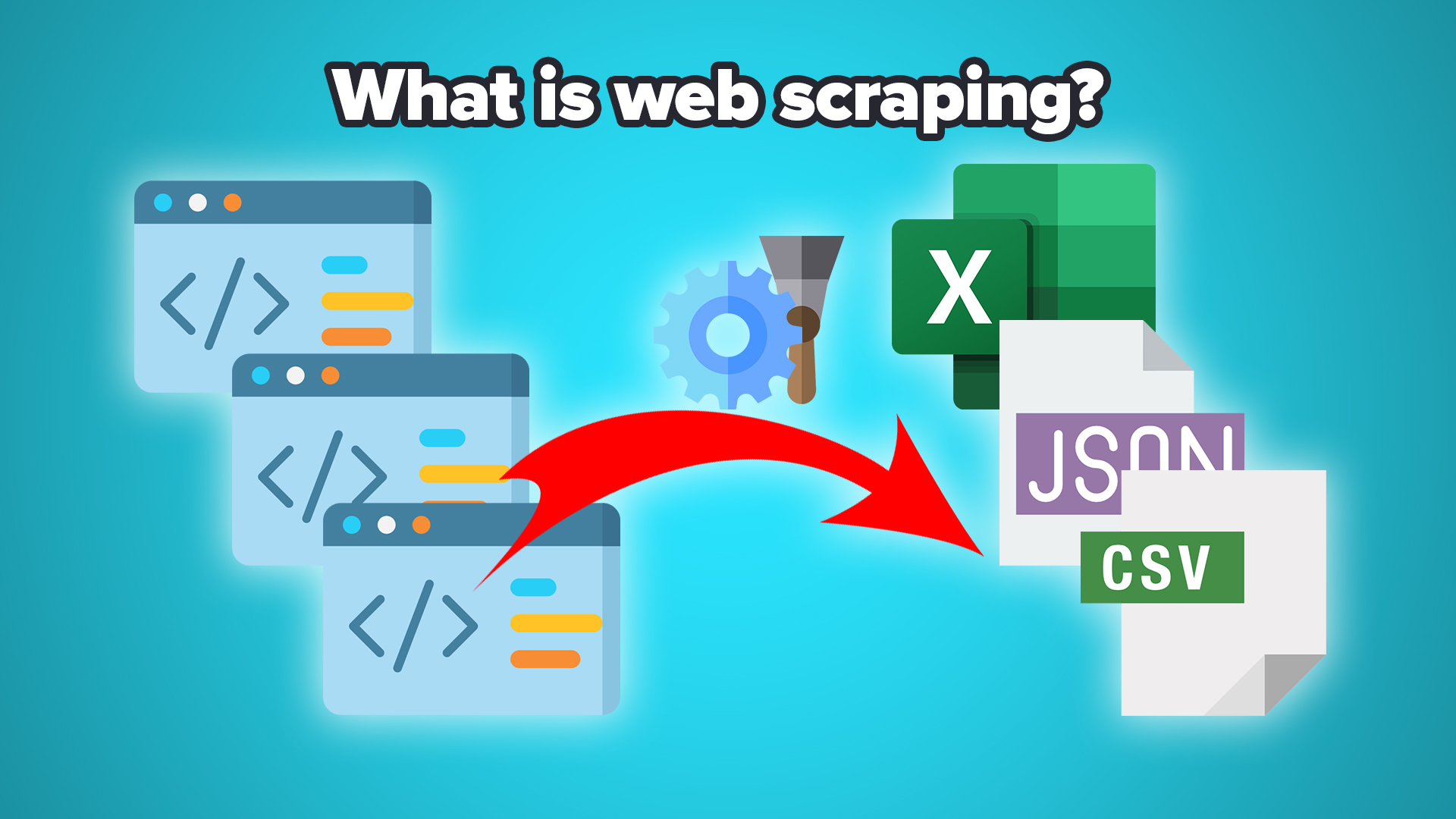

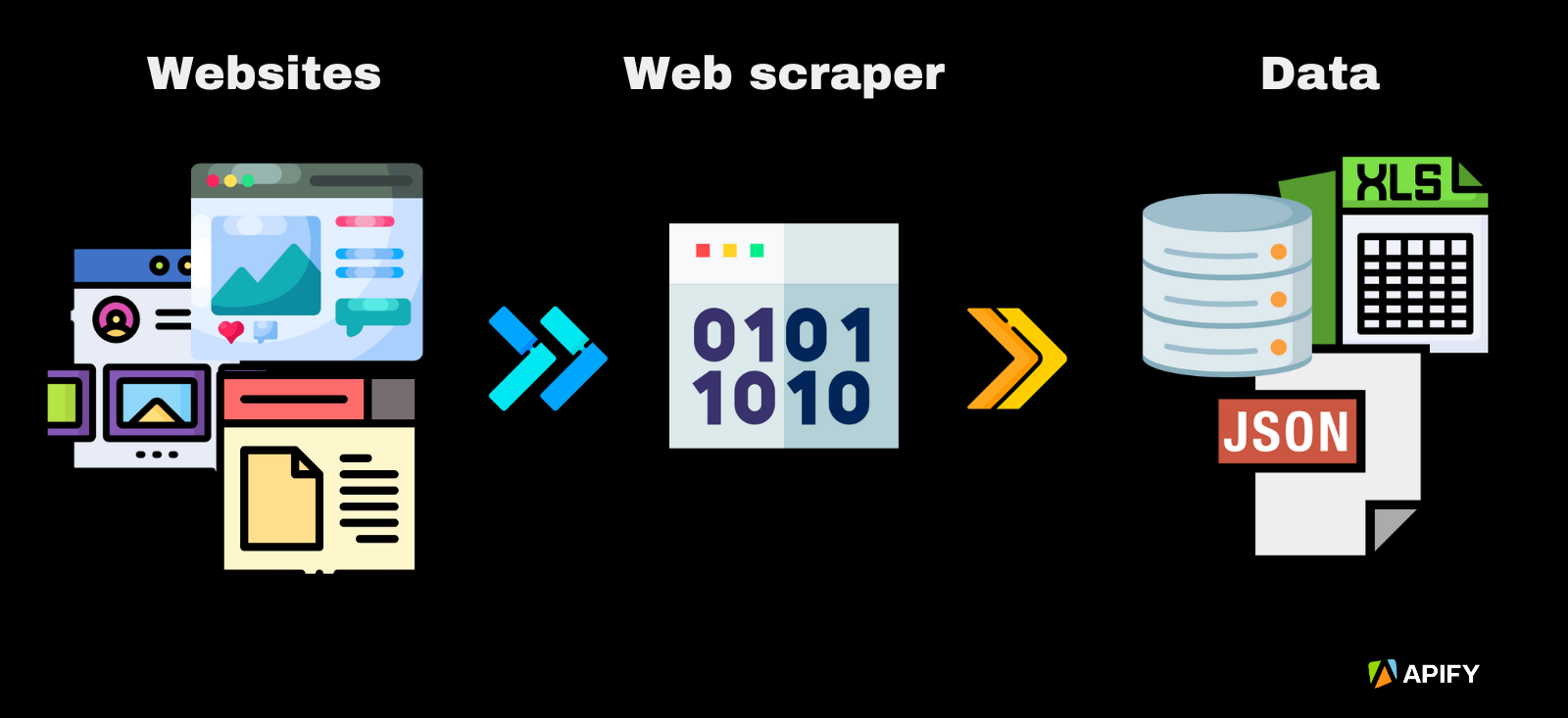

At its core, a website scraper, often referred to as a web scraper or web data extractor, is a program or script that mimics human browsing behavior to navigate websites and systematically collect specific data points. Unlike traditional web crawling, which primarily indexes content for search engines, scraping is focused on data extraction. It’s the digital equivalent of sifting through countless documents to find precisely the pieces of information you need, but at a speed and scale impossible for any human. This technological innovation has become indispensable across a multitude of industries, fueling market research, competitive analysis, lead generation, price monitoring, and even academic research by providing structured access to the world’s largest data repository: the World Wide Web.

The Mechanics of Web Scraping: How Data is Extracted

Understanding how a website scraper works reveals the ingenuity behind its functionality. The process involves a series of steps that emulate a user’s interaction with a website, but with programmed precision and automation.

Requesting and Parsing Web Pages

The initial step in any scraping operation involves making a request to a web server. Just like your browser sends a request when you type a URL, a scraper sends an HTTP GET request to the target website’s server. The server responds with the content of the web page, typically an HTML (HyperText Markup Language) document. That document in turn references CSS (Cascading Style Sheets) and JavaScript files, which are separate resources the scraper fetches with further requests only if it needs them.
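The request step can be sketched with Python's standard library alone. The `USER_AGENT` value and the function names `build_request` and `fetch_html` are assumptions made for this illustration, not part of any particular scraper.

```python
import urllib.request

# Hypothetical identifier for this sketch; a real scraper would choose its own.
USER_AGENT = "example-scraper/0.1"


def build_request(url: str) -> urllib.request.Request:
    """Prepare an explicit HTTP GET request for the given URL."""
    return urllib.request.Request(
        url,
        headers={"User-Agent": USER_AGENT},
        method="GET",
    )


def fetch_html(url: str, timeout: float = 10.0) -> str:
    """Send the request and return the response body decoded as text."""
    with urllib.request.urlopen(build_request(url), timeout=timeout) as response:
        # Fall back to UTF-8 when the server does not declare a charset.
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")
```

Calling `fetch_html("https://example.com/")` would return the page's raw HTML as a string, ready for the parsing step.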

Once the scraper receives this raw HTML, the next critical step is parsing. HTML documents are essentially text files structured with tags that define elements like headings, paragraphs, images, links, and tables. Parsing involves breaking down this raw HTML into a digestible, hierarchical structure—often a Document Object Model (DOM) tree. This tree-like representation allows the scraper to navigate through the web page’s elements programmatically, much like a map, to locate specific data points. Tools and libraries in programming languages like Python (e.g., BeautifulSoup, Scrapy) are commonly used for this parsing stage, providing methods to search for elements by their tag names, IDs, classes, or other attributes.
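To make the idea of a parsed tree concrete, here is a minimal sketch that uses Python's built-in `html.parser` in place of a full library such as BeautifulSoup. The `Node` and `TreeBuilder` classes and the `find_all` method are invented for this example and are much simpler than a real DOM.

```python
from dataclasses import dataclass, field
from html.parser import HTMLParser

# Elements that never have a closing tag, so they are not pushed on the stack.
VOID_TAGS = {"br", "hr", "img", "input", "link", "meta"}


@dataclass
class Node:
    tag: str
    attrs: dict
    children: list = field(default_factory=list)
    text: str = ""

    def find_all(self, tag=None, class_name=None):
        """Yield descendants matching a tag name and/or a class, depth-first."""
        for child in self.children:
            classes = (child.attrs.get("class") or "").split()
            tag_ok = tag is None or child.tag == tag
            class_ok = class_name is None or class_name in classes
            if tag_ok and class_ok:
                yield child
            yield from child.find_all(tag, class_name)


class TreeBuilder(HTMLParser):
    """Turn a stream of start/end tags into a tree of Node objects."""

    def __init__(self):
        super().__init__()
        self.root = Node("document", {})
        self._stack = [self.root]

    def handle_starttag(self, tag, attrs):
        node = Node(tag, dict(attrs))
        self._stack[-1].children.append(node)
        if tag not in VOID_TAGS:
            self._stack.append(node)

    def handle_endtag(self, tag):
        # Close the nearest open element with this tag name, if there is one.
        for i in range(len(self._stack) - 1, 0, -1):
            if self._stack[i].tag == tag:
                del self._stack[i:]
                break

    def handle_data(self, data):
        self._stack[-1].text += data


def parse_html(html: str) -> Node:
    builder = TreeBuilder()
    builder.feed(html)
    builder.close()
    return builder.root
```

With the tree in hand, `parse_html(page).find_all(tag="h1")` walks it to find every heading, and `find_all(class_name="product-price")` finds elements by class. Libraries like BeautifulSoup offer the same kind of search with far better handling of malformed markup.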

Identifying and Extracting Target Data

With the web page’s structure mapped out, the scraper then employs selection rules to pinpoint the desired data. These rules are typically defined by CSS selectors or XPath (XML Path Language) expressions.

- CSS Selectors: These are patterns used to select elements for styling in CSS, but they are also incredibly effective for selecting elements in web scraping. For instance, a selector might target all `<h1>` tags, or elements with a specific `class` attribute like `.product-price`.

- XPath: More powerful and flexible than CSS selectors, XPath can navigate through elements and attributes in an XML (or HTML) document. It allows for complex queries, such as selecting the third `<td>` element within a `<tr>` element that has a specific attribute.
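Both kinds of selection rule can be tried out with the limited XPath support in Python's standard `xml.etree.ElementTree` module. The markup below is invented for this sketch and is deliberately well-formed XML. Real pages rarely are, so a scraper would normally rely on a tolerant HTML parser instead.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed markup made up for this example.
PAGE = """
<html>
  <body>
    <h1>Drone Catalog</h1>
    <span class="product-price">$499.00</span>
    <table>
      <tr data-sku="A1"><td>Falcon</td><td>30 min</td><td>$499.00</td></tr>
      <tr data-sku="B2"><td>Sparrow</td><td>18 min</td><td>$199.00</td></tr>
    </table>
  </body>
</html>
"""

root = ET.fromstring(PAGE)

# Equivalent of the CSS selector `h1`: every <h1> element in the document.
headings = [el.text for el in root.iter("h1")]

# Rough equivalent of the CSS selector `.product-price`. This only matches when
# the class attribute is exactly "product-price", unlike a real CSS engine,
# which also matches elements carrying additional classes.
prices = [el.text for el in root.findall(".//*[@class='product-price']")]

# XPath: the third <td> inside the <tr> whose data-sku attribute is "B2".
third_cell = root.find(".//tr[@data-sku='B2']/td[3]")
```

Here `headings` holds the heading text, `prices` holds the text of the matched price element, and `third_cell.text` is the price cell of the second row.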

Once the target data is identified, the scraper extracts it. This could be text content, image URLs, hyperlinks, numerical values, or any other piece of information displayed on the page. The extracted data is then typically cleaned (e.g., removing extra spaces, formatting inconsistencies) and stored in a structured format, such as a CSV file, JSON file, or a database, making it ready for analysis.
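The cleaning and storage step might look like the following sketch. The field names `name` and `price`, the helper functions, and the sample values are all assumptions chosen for illustration.

```python
import csv
import io
import json
import re


def clean_text(raw: str) -> str:
    """Collapse runs of whitespace into single spaces and trim the ends."""
    return re.sub(r"\s+", " ", raw).strip()


def parse_price(raw: str) -> float:
    """Keep only digits and the decimal point, then convert to a number."""
    return float(re.sub(r"[^\d.]", "", raw))


def to_csv(records: list[dict]) -> str:
    """Serialize records to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "price"], lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


# Hypothetical raw values, as they might come out of the extraction step.
raw_rows = [("  Falcon \n Pro ", " $1,299.50 "), ("Sparrow", "$199")]

records = [{"name": clean_text(n), "price": parse_price(p)} for n, p in raw_rows]

csv_text = to_csv(records)                 # ready to write to a .csv file
json_text = json.dumps(records, indent=2)  # ready to write to a .json file
```

Writing to an in-memory buffer keeps the sketch free of side effects. In practice the same writer would be pointed at an open file or replaced by database inserts.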

Navigating Multiple Pages and Handling Challenges

Most scraping tasks involve more than just a single page. Scrapers are programmed to follow links to subsequent pages (e.g., “next page” buttons, product category links), allowing them to traverse entire websites. This involves queuing URLs to visit and processing them sequentially or concurrently.
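The URL queue described above can be sketched as a breadth-first traversal. This version is sequential only, takes the page-fetching function as a parameter so it can run without network access, and uses a crude regular expression to find links. The `crawl` function and the `FAKE_SITE` dictionary are hypothetical names for this example.

```python
import re
from collections import deque
from typing import Callable
from urllib.parse import urljoin

# Crude link finder, good enough for a sketch; a real scraper would use a parser.
LINK_RE = re.compile(r'href="([^"]+)"')


def crawl(start_url: str, fetch: Callable[[str], str], max_pages: int = 50) -> list[str]:
    """Visit pages breadth-first, following links, and return URLs in visit order."""
    queue = deque([start_url])
    seen = {start_url}  # prevents queuing the same URL twice
    visited = []
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        visited.append(url)
        for href in LINK_RE.findall(html):
            link = urljoin(url, href)  # resolve relative links against the current page
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return visited


# Hypothetical in-memory "site" standing in for real HTTP responses.
FAKE_SITE = {
    "https://example.com/page/1": '<a href="/page/2">next page</a>',
    "https://example.com/page/2": '<a href="/page/3">next page</a> <a href="/page/1">first</a>',
    "https://example.com/page/3": "<p>last page</p>",
}

order = crawl("https://example.com/page/1", fetch=lambda url: FAKE_SITE.get(url, ""))
```

The `seen` set is what keeps the link from page 2 back to page 1 from causing an endless loop, and `max_pages` bounds the crawl on large sites.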

However, web scraping is not without its challenges. Websites often employ various measures to prevent scraping, including:

- CAPTCHAs: These tests are designed to determine whether the user is human.

- IP Blocking: Detecting unusually high request volumes from a single IP address and blocking it.

- User-Agent Checks: Websites might block requests from clients that don’t present common browser user-agent strings.

- JavaScript Rendering: Many modern websites rely heavily on JavaScript to load content dynamically, so a simple HTTP request might not retrieve the full page content. Scrapers often need to drive a headless browser through an automation tool (such as Selenium or Puppeteer) that executes the JavaScript and renders the page fully before scraping.

- Evolving Website Structures: Websites frequently update their layouts and HTML structures, which can break existing scraper code, requiring constant maintenance and adaptation.
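Of these measures, volume-based blocking is one a scraper can address on its own side by spacing out its requests. The sketch below shows one simple way to do that, under the assumption that a fixed minimum interval is acceptable for the target site. The `Throttle` class is a hypothetical helper, and its clock and sleep functions are injectable only so the behavior can be checked without real waiting.

```python
import time
from typing import Callable, Optional


class Throttle:
    """Enforce a minimum delay between consecutive requests."""

    def __init__(
        self,
        min_interval: float,
        clock: Callable[[], float] = time.monotonic,
        sleep: Callable[[float], None] = time.sleep,
    ):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last: Optional[float] = None  # time of the previous request, if any

    def wait(self) -> float:
        """Sleep just long enough to respect min_interval; return seconds slept."""
        now = self._clock()
        delay = 0.0
        if self._last is not None:
            delay = max(0.0, self.min_interval - (now - self._last))
        if delay > 0:
            self._sleep(delay)
        # Record when this request is allowed to go out.
        self._last = now + delay
        return delay
```

A scraper would call `throttle.wait()` immediately before each request, for example with `Throttle(min_interval=2.0)` to stay at or below one request every two seconds.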

Applications Across Industries: Fueling Innovation with Data

The utility of website scrapers extends across virtually every sector, transforming how businesses and researchers gather intelligence and make decisions. This innovation in data collection is critical for staying competitive and driving progress.

Business Intelligence and Market Analysis

For businesses, web scraping is a cornerstone of competitive intelligence. Companies scrape competitor websites to monitor pricing strategies, product features, promotional campaigns, and customer reviews. This allows them to adjust their own strategies in real-time, maintain market share, and identify emerging trends. For instance, an e-commerce platform might scrape millions of product listings daily to ensure its prices are competitive. Similarly, startups can scrape job boards to understand skill demands in their industry or analyze public data to identify potential target markets.

Beyond direct competition, market analysis benefits immensely. Scrapers can collect public sentiment from social media, news sites, and forums, offering a pulse on public opinion regarding products, brands, or events. This data is invaluable for marketing campaigns, product development, and crisis management.

Research, Development, and Public Sector Contributions

In the realm of research and development, website scrapers provide researchers with unparalleled access to large datasets that would be impossible to manually compile. Academics scrape scientific articles, patents, government reports, and historical archives to fuel studies in various fields, from economics and social sciences to linguistics and environmental studies. For example, researchers might scrape real estate listings over time to study housing market trends or public health data to track disease patterns.

Government agencies, NGOs, and journalists use scraping in the public interest. They might scrape public records for investigative reporting, monitor compliance with regulations, or gather data for urban planning and resource allocation. This ability to systematically gather public data contributes to transparency and data-driven policy-making.

Niche Applications and Future Implications

The versatility of web scraping allows for highly specialized applications. In the travel industry, scrapers monitor flight prices and hotel availability across numerous platforms to find the best deals. In finance, they collect news articles and stock market data to inform trading algorithms. Even in sectors like drone technology, market analysts might scrape industry forums, news sites, and e-commerce platforms to identify emerging drone models, assess consumer preferences for features like flight time or camera quality, track regulatory changes in airspace management, or monitor pricing for drone components and services. This kind of scraped data can directly inform R&D, manufacturing, and marketing strategies for drone companies.

Looking ahead, as the web continues to grow in complexity and volume, the sophistication of website scrapers will undoubtedly evolve. Integration with artificial intelligence and machine learning is already enhancing their capabilities, allowing them to understand and extract data from increasingly unstructured or visually complex web pages. The ethical and legal landscape surrounding web scraping also continues to develop, emphasizing the importance of responsible and compliant data extraction practices.

In conclusion, the website scraper is a testament to technological ingenuity, embodying the spirit of innovation by providing an automated solution to a fundamental challenge: converting the internet’s raw information into structured, actionable data. Its pervasive utility across business, research, and specialized domains underscores its role as a critical tool for navigating the data-rich complexities of the modern digital world, empowering advancements that shape our future.