In the rapidly evolving landscape of technology, particularly within the realms of drones, flight systems, and advanced imaging, the ability to make informed decisions based on data is paramount. From optimizing flight paths to verifying the performance of new sensor arrays or assessing the reliability of autonomous navigation, data analysis plays a crucial role. At the heart of this analysis lies the concept of statistical testing. A statistical test is a formal procedure used to make decisions or draw conclusions about a population based on sample data. It provides a rigorous framework for determining whether observed data is likely to have occurred by chance or if it represents a genuine effect or difference. Understanding statistical tests is therefore essential for anyone seeking to extract meaningful insights from the vast datasets generated by modern technological advancements.

The Core Principles of Hypothesis Testing

Statistical testing is fundamentally a process of hypothesis testing. This involves formulating a hypothesis about a population and then using sample data to determine whether there is enough evidence to reject that hypothesis. The process is built upon a foundation of probability and aims to quantify the uncertainty inherent in drawing conclusions from limited observations.

Formulating Hypotheses: Null and Alternative

The cornerstone of any statistical test is the formation of two competing hypotheses: the null hypothesis ($H_0$) and the alternative hypothesis ($H_1$ or $H_a$).

- The Null Hypothesis ($H_0$): This represents the default assumption, or the status quo. It typically states that there is no effect, no difference, or no relationship between the variables under investigation. For instance, when testing a new drone stabilization algorithm, the null hypothesis might be that the new algorithm has no effect on flight stability compared to the existing one. In statistical terms, $H_0$ often posits that a population parameter (like a mean, proportion, or variance) is equal to a specific value or that two parameters are equal.

- The Alternative Hypothesis ($H_1$ or $H_a$): This is the hypothesis that contradicts the null hypothesis. It represents what the researcher is trying to find evidence for. Following the drone stabilization example, the alternative hypothesis could be that the new algorithm does improve flight stability, or that it makes it worse, or simply that it changes stability. Alternative hypotheses can be directional (one-tailed), suggesting a specific direction of the effect (e.g., “greater than,” “less than”), or non-directional (two-tailed), suggesting any difference (e.g., “not equal to”). The choice between one-tailed and two-tailed tests depends on prior knowledge and research objectives.
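For the stabilization example, the three possible formulations can be written out explicitly. The symbols $\mu_{\text{new}}$ and $\mu_{\text{old}}$ are assumptions introduced here for illustration: they stand for the mean stability score under each algorithm, with a higher score taken to mean a more stable flight.

$$
\begin{array}{lll}
\text{Two-tailed (any change):} & H_0: \mu_{\text{new}} = \mu_{\text{old}} & H_1: \mu_{\text{new}} \neq \mu_{\text{old}} \\
\text{One-tailed (improvement):} & H_0: \mu_{\text{new}} \le \mu_{\text{old}} & H_1: \mu_{\text{new}} > \mu_{\text{old}} \\
\text{One-tailed (degradation):} & H_0: \mu_{\text{new}} \ge \mu_{\text{old}} & H_1: \mu_{\text{new}} < \mu_{\text{old}}
\end{array}
$$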

The Role of Probability and Significance Levels

Statistical tests rely heavily on probability to assess the likelihood of obtaining the observed sample data, assuming the null hypothesis is true. This is where the concept of a p-value becomes crucial.

- The p-value: The p-value is the probability of observing a test statistic as extreme as, or more extreme than, the one calculated from the sample data, given that the null hypothesis is true. A small p-value suggests that the observed data is unlikely to have occurred by chance alone if the null hypothesis were correct.

- Significance Level ($\alpha$): Before conducting a statistical test, a significance level, denoted by the Greek letter alpha ($\alpha$), is chosen. This value is the threshold for rejecting the null hypothesis: if the p-value falls below $\alpha$, the null hypothesis is rejected; otherwise it is not. Common choices for $\alpha$ are 0.05 (5%), 0.01 (1%), or 0.10 (10%). The significance level fixes in advance the acceptable risk of making a Type I error.
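How a p-value is obtained and compared with the significance level can be sketched with a one-sample z-test, one of the simplest tests to compute by hand. This is an illustrative sketch, not a prescribed method: the function name `z_test_p_value`, the assumption that the population standard deviation is known, and the hover-drift numbers are all hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def z_test_p_value(sample_mean, mu0, sigma, n, tail="two-sided"):
    """Return (z, p) for a one-sample z-test with a known population sigma."""
    # Standardize: how many standard errors the sample mean lies from mu0.
    z = (sample_mean - mu0) / (sigma / sqrt(n))
    std_normal = NormalDist()  # mean 0, standard deviation 1
    if tail == "two-sided":
        p = 2 * (1 - std_normal.cdf(abs(z)))
    elif tail == "greater":
        p = 1 - std_normal.cdf(z)
    elif tail == "less":
        p = std_normal.cdf(z)
    else:
        raise ValueError("tail must be 'two-sided', 'greater', or 'less'")
    return z, p


ALPHA = 0.05  # significance level, chosen before looking at the data

# Hypothetical data: 36 flights with a mean hover drift of 1.9 cm, tested
# against a nominal drift of 2.0 cm with an assumed known sigma of 0.3 cm.
z, p = z_test_p_value(sample_mean=1.9, mu0=2.0, sigma=0.3, n=36)
reject_null = p < ALPHA
print(f"z = {z:.2f}, p = {p:.4f}, reject H0: {reject_null}")
```

Passing `tail="less"` or `tail="greater"` turns the same calculation into a one-tailed test, which halves the p-value when the observed effect lies in the hypothesized direction.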

Types of Errors in Hypothesis Testing

When making decisions based on sample data, there is always a risk of reaching an incorrect conclusion. Statistical tests are designed to control these risks, but they cannot eliminate them entirely. Two primary types of errors can occur:

Type I Error: False Positive

A Type I error, also known as a false positive, occurs when the null hypothesis is rejected when it is actually true. In simpler terms, you conclude that there is an effect or difference when, in reality, there isn’t one.

- Analogy: Imagine a drone’s obstacle avoidance system incorrectly identifying a clear sky as an obstacle and initiating an emergency maneuver. This would be a false positive.

- Probability: When the null hypothesis is true, the probability of committing a Type I error equals the chosen significance level ($\alpha$). If $\alpha = 0.05$, there is a 5% chance of rejecting a true null hypothesis. Choosing a very small $\alpha$ (e.g., 0.001) reduces the risk of a Type I error but, for a fixed sample size, increases the risk of a Type II error.
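The link between the significance level and the Type I error rate can be checked by simulation. The sketch below is a hypothetical setup, not taken from any particular system: it repeatedly draws samples from a population where the null hypothesis is true (mean 0, standard deviation 1, assumed known), runs a two-sided z-test on each, and counts how often the null is wrongly rejected.

```python
import random
from math import sqrt
from statistics import NormalDist, fmean


def false_positive_rate(alpha=0.05, n=30, trials=4000, seed=0):
    """Estimate how often a two-sided z-test rejects a null that is true."""
    rng = random.Random(seed)  # seeded so the run is reproducible
    critical_z = NormalDist().inv_cdf(1 - alpha / 2)
    rejections = 0
    for _ in range(trials):
        # The null is true by construction: the population mean really is 0.
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
        z = fmean(sample) / (1.0 / sqrt(n))
        if abs(z) > critical_z:
            rejections += 1  # a false positive (Type I error)
    return rejections / trials


rate = false_positive_rate()
print(f"Estimated Type I error rate: {rate:.3f}")
```

With enough trials the estimated rate should settle near the chosen `alpha`, which is the sense in which the significance level sets the false positive risk.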

Type II Error: False Negative

A Type II error, also known as a false negative, occurs when the null hypothesis is not rejected when it is actually false. This means you fail to detect a real effect or difference that exists in the population.

- Analogy: Consider a new camera sensor designed to detect subtle thermal anomalies indicative of engine overheating in a drone. A Type II error would occur if the sensor fails to detect a genuine overheating condition, leading to a potentially catastrophic failure.

- Probability: The probability of committing a Type II error is denoted by the Greek letter beta ($\beta$). The value of $\beta$ depends on several factors, including the sample size, the significance level, and the true magnitude of the effect (effect size).

- Power of a Test: The power of a statistical test is defined as $1 - \beta$. It represents the probability of correctly rejecting a false null hypothesis, or the probability of detecting a true effect. Researchers aim to design studies with high power to minimize the risk of Type II errors.
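For a simple case, power can be computed directly, which makes its dependence on sample size, significance level, and effect size concrete. The sketch below assumes a one-sided (upper-tail) z-test with a known standard deviation; the function name `z_test_power` and the example values are illustrative only.

```python
from math import sqrt
from statistics import NormalDist


def z_test_power(effect, sigma, n, alpha=0.05):
    """Power of an upper-tail z-test when the true mean shift is `effect`."""
    std_normal = NormalDist()
    critical_z = std_normal.inv_cdf(1 - alpha)  # rejection threshold under H0
    shift = effect * sqrt(n) / sigma            # true effect in standard errors
    # Probability that the test statistic exceeds the threshold.
    return 1 - std_normal.cdf(critical_z - shift)


# Hypothetical effect of 0.5 units with sigma = 1.0, at several sample sizes.
for n in (10, 30, 100):
    power = z_test_power(effect=0.5, sigma=1.0, n=n)
    print(f"n={n:3d}  power={power:.3f}  beta={1 - power:.3f}")
```

Calling the function with a smaller `alpha` at a fixed `n` returns lower power, which is the trade-off between Type I and Type II errors in numerical form.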

Common Statistical Tests and Their Applications

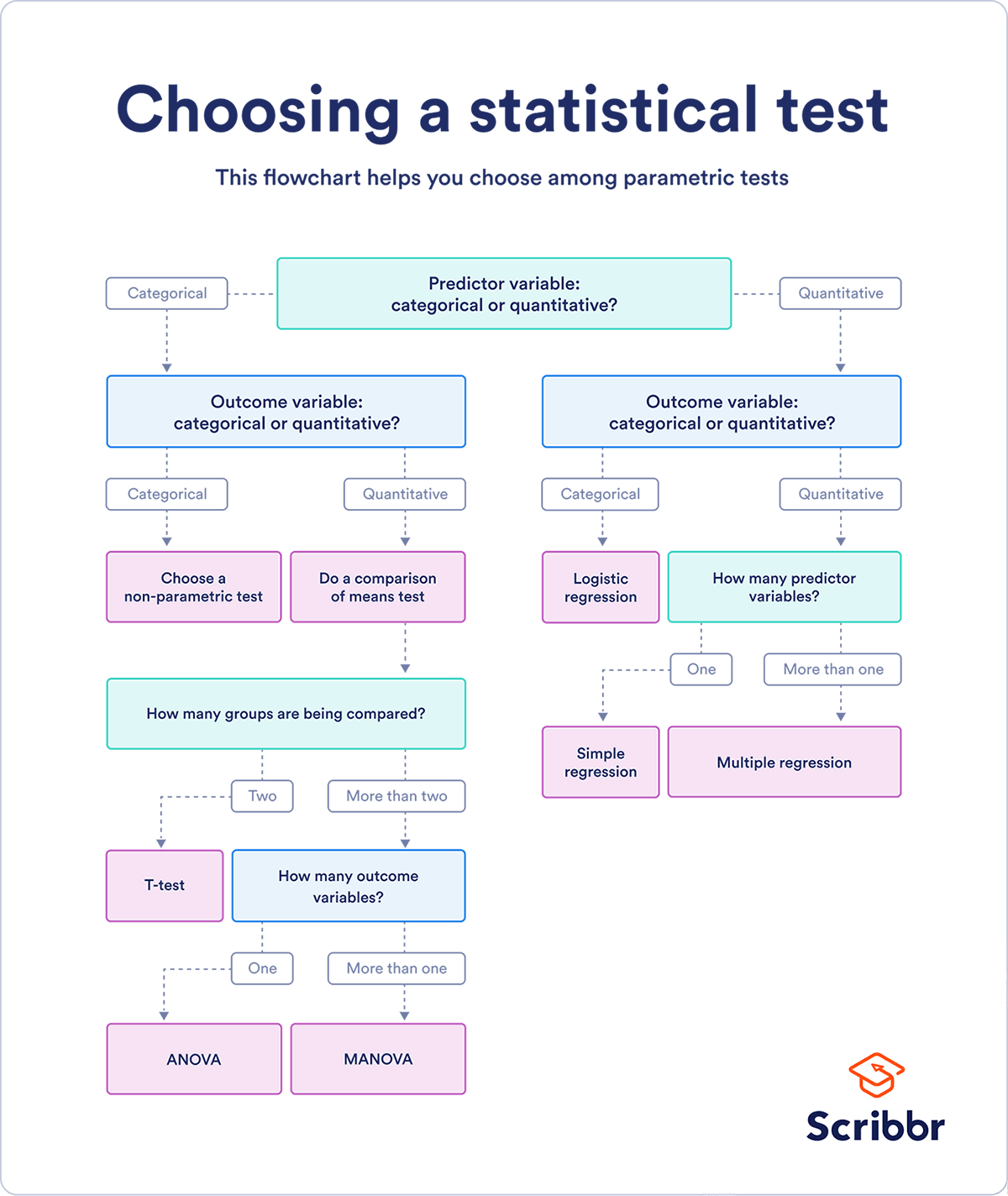

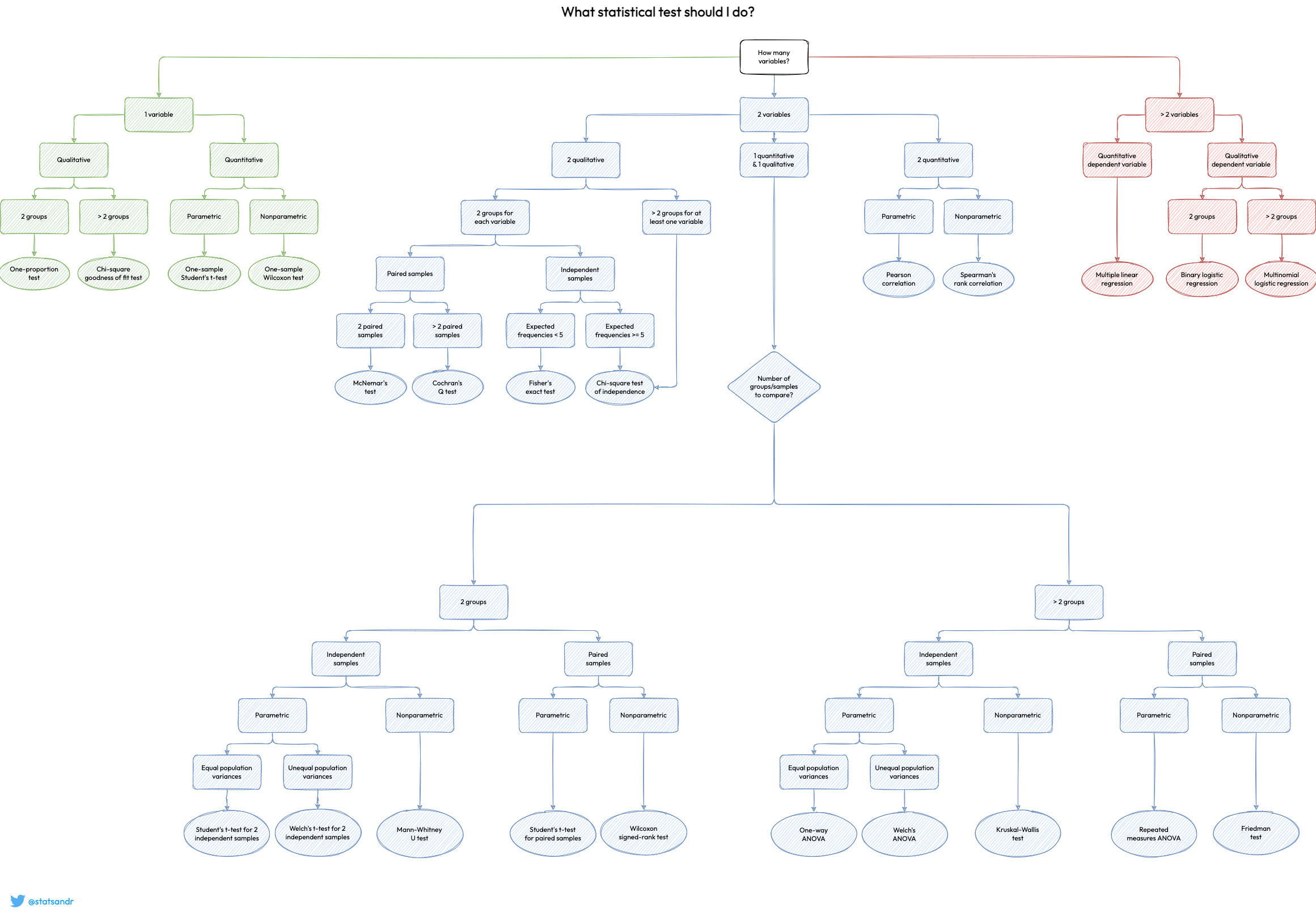

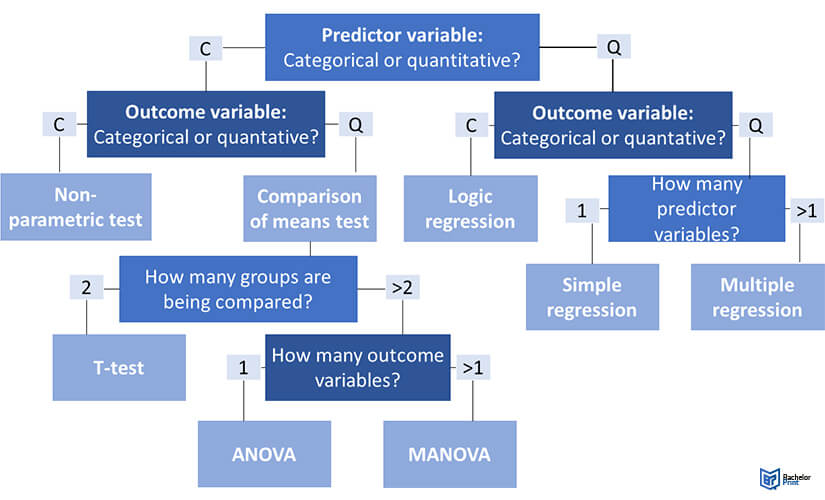

The choice of a specific statistical test depends on several factors, including the type of data being analyzed, the number of groups being compared, and the research question being asked. Here are a few common types of statistical tests and their relevance in technological contexts:

Comparing Means: T-tests and ANOVA

When researchers want to compare the average values of a continuous variable between two or more groups, t-tests and Analysis of Variance (ANOVA) are frequently employed.

- Independent Samples T-test: This test is used to compare the means of two independent groups. For example, one might use an independent samples t-test to determine if there is a statistically significant difference in battery life between drones fitted with two different propeller models. The null hypothesis would be that the mean battery life is the same for both propeller models, and the alternative hypothesis would be that the means differ.

- Paired Samples T-test: This test is used when the same subjects are measured twice, or when subjects are paired in some way. For instance, to assess the effectiveness of a new flight control interface, one might measure drone stability with the old interface and then with the new interface on the same drone. A paired t-test would then be used to see if there’s a significant improvement.

- Analysis of Variance (ANOVA): ANOVA is used to compare the means of three or more independent groups. If you wanted to compare the flight efficiency of drones using three different types of autonomous navigation algorithms, ANOVA would be the appropriate test. It allows you to determine if there’s a significant difference in the mean flight efficiency across the three algorithms, and if so, post-hoc tests can identify which specific algorithms differ from each other.
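The test statistics behind these three tests are short enough to compute by hand. The sketch below uses only Python's standard library and hypothetical measurements. Two caveats: the independent-samples function uses Welch's variant, which does not assume equal variances (the classic Student version pools them), and the code stops at the statistic, because converting it to a p-value requires the t or F distribution, which the standard library lacks. A statistics package such as SciPy provides the full tests as `scipy.stats.ttest_ind`, `scipy.stats.ttest_rel`, and `scipy.stats.f_oneway`.

```python
from math import sqrt
from statistics import fmean, variance


def welch_t(a, b):
    """Welch's t statistic for two independent samples."""
    standard_error = sqrt(variance(a) / len(a) + variance(b) / len(b))
    return (fmean(a) - fmean(b)) / standard_error


def paired_t(before, after):
    """Paired t statistic, computed on the per-subject differences."""
    diffs = [y - x for x, y in zip(before, after)]
    return fmean(diffs) / sqrt(variance(diffs) / len(diffs))


def anova_f(groups):
    """One-way ANOVA F statistic: between-group over within-group variance."""
    all_values = [v for g in groups for v in g]
    grand_mean = fmean(all_values)
    k, n = len(groups), len(all_values)
    ss_between = sum(len(g) * (fmean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((v - fmean(g)) ** 2 for g in groups for v in g)
    return (ss_between / (k - 1)) / (ss_within / (n - k))


# Hypothetical battery life in minutes for two propeller models.
prop_a = [24.1, 25.3, 23.8, 24.9, 25.0]
prop_b = [26.0, 25.7, 26.4, 25.1, 26.8]
print(f"Welch t = {welch_t(prop_a, prop_b):.2f}")

# Hypothetical stability scores for the same five drones, old vs. new interface.
old_ui = [71, 68, 75, 70, 73]
new_ui = [74, 70, 79, 71, 77]
print(f"Paired t = {paired_t(old_ui, new_ui):.2f}")

# Hypothetical flight efficiency for three navigation algorithms.
algorithms = [[8.1, 7.9, 8.4], [8.8, 9.1, 8.6], [7.5, 7.8, 7.2]]
print(f"ANOVA F = {anova_f(algorithms):.2f}")
```

A statistic far from zero (for t) or well above one (for F) is evidence against the null hypothesis of equal means; how far is far enough depends on the degrees of freedom and the chosen significance level.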

Examining Relationships: Correlation and Regression

Statistical tests can also be used to investigate the strength and nature of relationships between variables.

- Correlation: Correlation coefficients (like Pearson’s r) measure the strength and direction of a linear relationship between two continuous variables. For example, one might investigate the correlation between the altitude at which a drone flies and the quality of its GPS signal. A statistical test associated with correlation determines if the observed correlation is statistically significant or likely due to random chance.

- Regression Analysis: Regression goes beyond correlation by allowing us to model the relationship between a dependent variable and one or more independent variables, and to make predictions. Simple linear regression involves one independent variable, while multiple regression involves several. For instance, in drone surveillance, one might use regression to predict the expected range of a sensor based on factors like ambient temperature and humidity. Statistical tests within regression analysis help determine if the independent variables significantly contribute to predicting the dependent variable.
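Both ideas can be sketched in a few lines of standard-library Python. The altitude and signal values below are made up for illustration. The significance check uses Fisher's z-transform, a normal approximation chosen here because it needs no t distribution; the exact test for Pearson's r is t-based, and the approximation is rough for samples as small as this one.

```python
from math import atanh, sqrt
from statistics import NormalDist, fmean


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = fmean(x), fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def correlation_p_value(r, n):
    """Approximate two-sided p-value for H0: no correlation (Fisher z).

    Requires n > 3 and |r| < 1.
    """
    z = atanh(r) * sqrt(n - 3)
    return 2 * (1 - NormalDist().cdf(abs(z)))


def fit_line(x, y):
    """Least-squares slope and intercept for simple linear regression."""
    mx, my = fmean(x), fmean(y)
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    return slope, my - slope * mx


# Hypothetical data: flight altitude (m) and GPS signal-to-noise ratio (dB).
altitude_m = [20, 40, 60, 80, 100, 120]
gps_snr_db = [38.1, 39.0, 40.2, 40.1, 41.5, 42.0]

r = pearson_r(altitude_m, gps_snr_db)
p = correlation_p_value(r, len(altitude_m))
slope, intercept = fit_line(altitude_m, gps_snr_db)
predicted_at_90m = intercept + slope * 90

print(f"r = {r:.3f}, approximate p = {p:.4f}")
print(f"fit: snr = {intercept:.2f} + {slope:.4f} * altitude")
print(f"predicted SNR at 90 m: {predicted_at_90m:.2f} dB")
```

The correlation answers whether the two variables move together; the fitted line goes one step further and produces a prediction for an altitude that was never measured.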

Categorical Data Analysis: Chi-Square Tests

When dealing with categorical data (data that can be divided into groups or categories), chi-square tests are commonly used.

- Chi-Square Test for Independence: This test is used to determine if there is a statistically significant association between two categorical variables. For example, one might want to know if the type of drone (e.g., quadcopter, fixed-wing) is independent of the primary application it’s used for (e.g., photography, mapping, delivery). A chi-square test of independence would help answer this question by comparing the observed frequencies of drone types and applications to what would be expected if there were no association.
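The comparison of observed and expected frequencies can be sketched as follows. The counts are hypothetical. The p-value step relies on a special case: for exactly 2 degrees of freedom, as in this 2 x 3 table, the chi-square tail probability has the closed form exp(-x/2). Other table sizes need a general chi-square distribution, such as the one used by `scipy.stats.chi2_contingency`.

```python
from math import exp


def chi_square_independence(table):
    """Pearson chi-square statistic and degrees of freedom for a count table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Count expected in this cell if the two variables were independent.
            expected = row_totals[i] * col_totals[j] / total
            statistic += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return statistic, dof


# Hypothetical counts. Columns: photography, mapping, delivery.
observed_counts = [
    [40, 25, 15],  # quadcopter
    [10, 30, 20],  # fixed-wing
]

statistic, dof = chi_square_independence(observed_counts)
# Closed-form tail probability, valid only when dof == 2.
p_value = exp(-statistic / 2) if dof == 2 else None
print(f"chi-square = {statistic:.2f}, dof = {dof}, p = {p_value}")
```

A large statistic means the observed counts sit far from what independence would predict, so a small p-value here would suggest that drone type and application are associated.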

The Significance of Statistical Tests in Technological Advancement

The application of statistical testing extends far beyond academic research; it is a vital tool for innovation and quality assurance in every facet of technology.

Ensuring Reliability and Performance

In fields like drone manufacturing and flight system development, statistical tests are indispensable for verifying that products meet rigorous performance standards. Before a new navigation system is integrated into a production drone, its accuracy and reliability must be tested. Statistical tests can quantify the margin of error, assess the consistency of performance under various conditions, and help engineers make data-driven decisions about whether a system is ready for deployment.

Optimizing Design and Functionality

When developing new features or improving existing ones, statistical testing provides a scientific basis for evaluating their effectiveness. For instance, an AI algorithm designed for autonomous obstacle avoidance can be tested using statistical methods to determine if it significantly reduces the number of collisions compared to previous versions or competing algorithms. Similarly, when calibrating sensors for remote sensing applications, statistical tests help ensure that the readings are accurate and free from systematic bias.
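The collision comparison can be framed as a test on two proportions. The sketch below is one possible approach, a one-sided two-proportion z-test with a pooled standard error; the function name and the trial counts are hypothetical, and the normal approximation assumes reasonably large samples.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(events_old, n_old, events_new, n_new):
    """One-sided test of H1: the new event rate is lower than the old rate."""
    rate_old, rate_new = events_old / n_old, events_new / n_new
    # Under H0 both versions share one rate, so pool the data to estimate it.
    pooled = (events_old + events_new) / (n_old + n_new)
    standard_error = sqrt(pooled * (1 - pooled) * (1 / n_old + 1 / n_new))
    z = (rate_new - rate_old) / standard_error
    p = NormalDist().cdf(z)  # lower-tail probability
    return z, p


# Hypothetical simulated runs: 30 collisions in 500 runs for the previous
# version, 15 collisions in 500 runs for the new algorithm.
z, p = two_proportion_z_test(events_old=30, n_old=500, events_new=15, n_new=500)
print(f"z = {z:.2f}, one-sided p = {p:.4f}")
```

If the p-value falls below the chosen significance level, the reduction in collisions is unlikely to be a product of chance alone.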

Validating Complex Systems

Modern technologies often involve intricate systems with numerous interacting components. Statistical tests are crucial for validating the overall performance and identifying potential failure points. For example, in the development of advanced flight control systems for UAVs, statistical analysis can be used to assess the resilience of the system to sensor noise or unexpected environmental disturbances. This ensures that the technology is robust and dependable in real-world scenarios.

In conclusion, statistical tests are not merely abstract mathematical concepts; they are powerful engines of progress. They provide the framework for translating raw data into actionable insights, enabling engineers, developers, and researchers to make informed decisions, validate their innovations, and ultimately build more reliable, efficient, and advanced technologies.