The question “what is a square plus b square” might first evoke memories of geometry class and the Pythagorean theorem. In modern technology, however, and particularly in advanced imaging systems, this basic mathematical idea plays a surprisingly practical role. This article explores how the principle of squaring and summing perpendicular components, the core of the Pythagorean theorem, runs through the sensor fusion and image processing techniques that power the next generation of visual technologies. We will look at how this foundation supports accurate measurement, clear imagery, and the overall performance of high-end cameras and imaging systems, pushing the boundaries of what is visually possible.

The Geometric Foundation: Distance and Magnitude

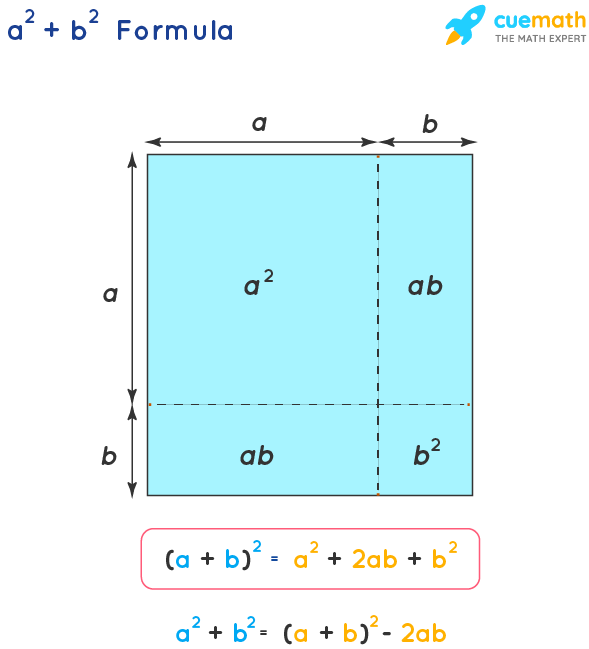

At its heart, “a square plus b square” is the square of the hypotenuse of a right-angled triangle whose two legs have lengths a and b, as stated by the Pythagorean theorem ($a^2 + b^2 = c^2$). While this might seem purely theoretical, its underlying principle, computing a magnitude from perpendicular components, is a cornerstone of how many modern imaging systems perceive and process spatial information.

Understanding Perpendicular Components

In a two-dimensional plane, any point can be described by its horizontal (x) and vertical (y) coordinates. These coordinates can be visualized as the two perpendicular sides of a right-angled triangle, with the origin (0, 0) at one end of the hypotenuse and the point itself at the other. The distance of the point from the origin, or indeed the distance between any two points, can therefore be calculated with the Pythagorean theorem. If we take the difference in x-coordinates as $a$ and the difference in y-coordinates as $b$, the straight-line distance between the two points, the hypotenuse $c$, is $c = \sqrt{a^2 + b^2}$.
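As a minimal sketch, the distance formula can be written in a few lines of Python. Points are assumed to be `(x, y)` tuples in pixel units, and the function name `pixel_distance` is illustrative rather than taken from any library.

```python
import math


def pixel_distance(p, q):
    """Straight-line distance between two (x, y) points, in pixels."""
    a = q[0] - p[0]  # horizontal leg: difference in x
    b = q[1] - p[1]  # vertical leg: difference in y
    # math.hypot(a, b) returns sqrt(a*a + b*b), the hypotenuse c
    return math.hypot(a, b)


# A 3-4-5 triangle: the points differ by 3 px in x and 4 px in y.
print(pixel_distance((10, 20), (13, 24)))  # 5.0
```

Python 3.8 and later also provide `math.dist(p, q)`, which computes the same Euclidean distance for points with any number of coordinates.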

Magnitude in Imaging Sensors

Modern imaging sensors, whether in digital cameras, scientific instruments, or advanced optical systems, essentially capture light intensity at discrete points arranged in a grid. To understand features, movements, and spatial relationships within an image, however, a system often needs to go beyond individual pixel intensities and analyze how intensity changes from pixel to pixel. Detecting an edge or a change in texture, for instance, requires comparing the intensity of a central pixel with that of its neighbors. This local change is the image gradient, which has a horizontal and a vertical component, and its overall magnitude is found by combining those two perpendicular components with the Pythagorean theorem.
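The neighbor comparison can be sketched with simple central differences: subtract the left neighbor from the right one and the upper neighbor from the lower one, then combine the two results. This is one of several possible gradient estimates, chosen here for brevity; the image is assumed to be a plain list of rows of intensity values, and `gradient_magnitude_at` is a hypothetical helper.

```python
import math


def gradient_magnitude_at(image, row, col):
    """Gradient magnitude at an interior pixel using central differences.

    `image` is a list of rows of intensity values; (row, col) must not lie
    on the border, since both neighbours in each direction are needed.
    """
    # Horizontal change: right neighbour minus left neighbour, over 2 px
    gx = (image[row][col + 1] - image[row][col - 1]) / 2.0
    # Vertical change: neighbour below minus neighbour above, over 2 px
    gy = (image[row + 1][col] - image[row - 1][col]) / 2.0
    # The two perpendicular components combine like the legs of a triangle
    return math.hypot(gx, gy)


image = [
    [10, 10, 10],
    [10, 10, 16],
    [10, 18, 10],
]
print(gradient_magnitude_at(image, 1, 1))  # 5.0 (gx = 3, gy = 4)
```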

Vector Analysis and Signal Strength

In more advanced imaging applications, signals might be represented as vectors. In Doppler imaging, for example, the received signal is commonly demodulated into in-phase and quadrature (I/Q) components, and in polarization imaging the light is described by several Stokes parameters. The overall strength, or magnitude, of such a signal is found by summing the squares of its individual components and then taking the square root, directly mirroring the $a^2 + b^2 = c^2$ relationship. This is crucial for accurately quantifying signal strength, which can correlate with various physical properties of the scene being captured.
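For polarization, a short sketch shows the same pattern. It assumes a camera that records intensity behind linear polarizers at 0°, 45°, 90°, and 135°, as division-of-focal-plane polarization sensors do, and uses the standard linear Stokes parameters $S_0 = I_0 + I_{90}$, $S_1 = I_0 - I_{90}$, $S_2 = I_{45} - I_{135}$. The linearly polarized intensity is $\sqrt{S_1^2 + S_2^2}$, and dividing it by $S_0$ gives the degree of linear polarization (DoLP). The function name is hypothetical.

```python
import math


def linear_polarization(i0, i45, i90, i135):
    """Return (polarized_intensity, dolp) from four polarizer-angle readings."""
    s0 = i0 + i90      # total intensity
    s1 = i0 - i90      # horizontal vs vertical preference
    s2 = i45 - i135    # +45 deg vs -45 deg preference
    polarized = math.hypot(s1, s2)  # sqrt(s1^2 + s2^2)
    dolp = polarized / s0 if s0 > 0 else 0.0
    return polarized, dolp


print(linear_polarization(0.5, 0.5, 0.5, 0.5))  # (0.0, 0.0): unpolarized
print(linear_polarization(1.0, 0.5, 0.0, 0.5))  # (1.0, 1.0): fully polarized
```

The same two components also give the angle of polarization, $\tfrac{1}{2}\operatorname{atan2}(S_2, S_1)$, so the pair $(S_1, S_2)$ behaves like a 2-D vector with a length and a direction.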

Application in Image Processing and Enhancement

The fundamental concept of $a^2 + b^2$ extends far beyond basic distance calculations and finds critical applications in how images are processed, enhanced, and analyzed to extract meaningful information.

Edge Detection and Feature Extraction

One of the most common image processing tasks is edge detection: identifying boundaries between different regions in an image. Algorithms such as the Sobel and Prewitt operators calculate the gradient magnitude of the image intensity by computing weighted intensity differences across each pixel's 3×3 neighborhood in the horizontal and vertical directions. If we denote the horizontal gradient as $G_x$ and the vertical gradient as $G_y$, the gradient magnitude $M$ is $M = \sqrt{G_x^2 + G_y^2}$. This value represents the strength of the edge at that pixel: a higher magnitude indicates a more abrupt, higher-contrast change in intensity, which is vital for object recognition, image segmentation, and many other computer vision tasks.
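A minimal pure-Python sketch of Sobel gradient magnitude follows, using the standard 3×3 Sobel kernels. To keep it short it assumes the image is a list of equal-length rows and simply skips border pixels; production code would normally use an array library and an explicit border policy.

```python
import math

# Standard 3x3 Sobel kernels for horizontal (x) and vertical (y) change.
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1],
           [0, 0, 0],
           [1, 2, 1]]


def sobel_magnitude(image):
    """Return M = sqrt(Gx^2 + Gy^2) for every interior pixel of `image`.

    `image` is a list of equal-length rows. Border pixels are skipped, so the
    result is two rows and two columns smaller than the input.
    """
    rows, cols = len(image), len(image[0])
    out = []
    for r in range(1, rows - 1):
        out_row = []
        for c in range(1, cols - 1):
            gx = gy = 0
            # Weighted sum over the 3x3 neighbourhood centred on (r, c)
            for dr in range(3):
                for dc in range(3):
                    value = image[r + dr - 1][c + dc - 1]
                    gx += SOBEL_X[dr][dc] * value
                    gy += SOBEL_Y[dr][dc] * value
            out_row.append(math.hypot(gx, gy))
        out.append(out_row)
    return out


# A vertical step edge: dark on the left, bright on the right.
step = [[0, 0, 10, 10] for _ in range(4)]
print(sobel_magnitude(step))  # [[40.0, 40.0], [40.0, 40.0]]
```

The kernels are applied as a correlation rather than a flipped convolution. Flipping would only negate both $G_x$ and $G_y$, so the magnitude $M$ is the same either way.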

Noise Reduction and Signal Averaging

In imaging, noise is an inherent challenge that degrades image quality and obscures detail. Noise reduction often involves averaging or filtering pixel values. When dealing with more complex noise models or trying to recover faint signals, however, signal-processing concepts built on magnitude calculations come into play. The signal-to-noise ratio (SNR) is a key metric, and improving it requires comparing the power of the signal with the power of the noise, where power is proportional to the square of amplitude. Independent noise sources, such as photon shot noise and sensor read noise, also combine in quadrature: the total noise is $\sigma_{\text{total}} = \sqrt{\sigma_{\text{shot}}^2 + \sigma_{\text{read}}^2}$, the same square-root-of-a-sum-of-squares form as the hypotenuse.
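A sketch of a per-pixel SNR estimate makes the quadrature sum concrete. It assumes a deliberately simple sensor model: photon shot noise that follows Poisson statistics (noise equals the square root of the signal in electrons) plus a fixed RMS read noise, with dark current and other sources ignored. Independent noise sources add in quadrature, so the total noise is the "hypotenuse" of the shot and read noise. The function name `pixel_snr` is hypothetical.

```python
import math


def pixel_snr(signal_e, read_noise_e):
    """SNR of one pixel under a simple shot-noise + read-noise model.

    signal_e: mean collected signal, in electrons
    read_noise_e: RMS read noise, in electrons
    """
    shot_noise = math.sqrt(signal_e)  # Poisson: variance equals the mean
    # Independent noise sources add in quadrature, like the legs of a triangle
    total_noise = math.hypot(shot_noise, read_noise_e)
    return signal_e / total_noise


snr = pixel_snr(16, 3)
print(snr)                    # 3.2  (noise = sqrt(16 + 9) = 5 electrons)
print(20 * math.log10(snr))   # ≈ 10.1 dB
```

The decibel conversion uses `20 * log10` because this SNR is a ratio of amplitudes. The equivalent power ratio is its square, which is why the power form uses `10 * log10`.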

Color Space Transformations and Magnitude

Many color spaces are used in imaging, such as RGB, HSV, and CIELAB (Lab). Transformations between these spaces, and calculations of color intensity and saturation, can involve vector operations. In CIELAB, for instance, the $a^*$ axis runs from green to red and the $b^*$ axis from blue to yellow. Chroma, the colorfulness of a color as distinct from its lightness, is $C^*_{ab} = \sqrt{(a^*)^2 + (b^*)^2}$. Here the $a^2 + b^2$ principle applies quite literally, measuring how far a color lies from the neutral gray axis.
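In CIELAB the Pythagorean pattern appears in three related quantities, sketched below. Chroma is the length of the $(a^*, b^*)$ vector, hue is its angle, and the CIE 1976 color difference $\Delta E^*_{ab}$ is the Euclidean distance between two $(L^*, a^*, b^*)$ triples. The inputs are assumed to be Lab values that have already been converted from RGB; the conversion itself is omitted.

```python
import math


def lab_chroma(a_star, b_star):
    """CIELAB chroma C*ab: distance from the neutral (grey) axis."""
    return math.hypot(a_star, b_star)


def lab_hue_degrees(a_star, b_star):
    """CIELAB hue angle h_ab in degrees, in the range [0, 360)."""
    return math.degrees(math.atan2(b_star, a_star)) % 360


def delta_e_1976(lab1, lab2):
    """CIE 1976 colour difference: Euclidean distance in L*a*b* space."""
    return math.dist(lab1, lab2)


print(lab_chroma(3, 4))                        # 5.0
print(lab_hue_degrees(3, 4))                   # ≈ 53.13 degrees
print(delta_e_1976((50, 0, 0), (50, 3, 4)))    # 5.0
```

Later difference formulas such as CIE94 and CIEDE2000 add weighting terms to match perception more closely. The 1976 version shown here is the plain Euclidean distance.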

Advanced Imaging Systems and Sensor Fusion

The true power of the $a^2 + b^2$ concept in modern imaging lies in its application within complex, multi-sensor systems. When data from various sources needs to be combined and interpreted, this mathematical principle becomes indispensable.

Combining Data from Multiple Sensors

Imagine an advanced camera system equipped not just with a standard optical sensor but also with an infrared or depth sensor. To build a comprehensive understanding of the scene, data from these different sources must be fused. Suppose each sensor provides a measurement of a particular aspect of the scene (e.g., light intensity, heat signature, distance). If these measurements are first normalized to comparable, unitless scales so that they can be treated as components of a single vector, then the overall magnitude of a feature across all sensors can be calculated by summing the squares of the individual sensor contributions and taking the square root. This yields a robust measure of a feature's presence and importance, even when one sensor is less effective in a particular condition.
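A minimal sketch of that idea is shown below. It assumes each sensor has already produced a response to the same candidate feature, and that a per-sensor scale (a hypothetical calibration value) is available to make the responses dimensionless before they are combined. The function name and the example numbers are illustrative only.

```python
import math


def fused_feature_strength(responses, scales):
    """Combine per-sensor feature responses into one magnitude.

    responses: raw response of each sensor to the same candidate feature
    scales: a typical full-scale value for each sensor, used to make the
        responses dimensionless before they are combined (hypothetical
        calibration values; a real system would derive these from data)
    """
    if len(responses) != len(scales):
        raise ValueError("need one scale per sensor")
    normalized = [r / s for r, s in zip(responses, scales)]
    # Euclidean norm: square each contribution, sum, take the square root
    return math.sqrt(sum(x * x for x in normalized))


# Hypothetical readings: visible-light contrast, thermal contrast, depth step.
visible, thermal, depth = 3.0, 40.0, 0.0
print(fused_feature_strength([visible, thermal, depth], [5.0, 50.0, 1.0]))  # ≈ 1.0
```

Here the depth sensor contributes nothing, yet the visible and thermal responses still produce a clear combined magnitude. A plain norm treats all normalized sensors equally, so a real system might also weight each term by how reliable that sensor is under current conditions.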

Inertial Measurement Units (IMUs) and Stabilization

While IMUs are primarily associated with flight and motion control, they are also often integrated into camera gimbals and stabilization systems. An IMU typically contains accelerometers and gyroscopes that measure linear acceleration and angular velocity along three orthogonal axes. The total acceleration experienced by the camera, and its overall rate of rotation, are both derived with the Pythagorean theorem extended to three dimensions. For example, if an accelerometer measures $a_x$, $a_y$, and $a_z$ along the x, y, and z axes respectively, the total acceleration magnitude is $\sqrt{a_x^2 + a_y^2 + a_z^2}$. This amounts to applying the theorem twice: once in the x–y plane and once more with the z component. Magnitudes like these help active stabilization systems detect and counteract unwanted movement, ensuring smooth and steady footage.
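The sketch below computes both IMU magnitudes and uses them for a rough "is the camera at rest?" check. At rest, an accelerometer senses only gravity, so its magnitude is close to 1 g (about 9.81 m/s²), and the angular-velocity magnitude is close to zero. The function names and tolerances are illustrative assumptions, not values from any particular device.

```python
import math

STANDARD_GRAVITY = 9.80665  # m/s^2


def vector_magnitude(x, y, z):
    """3-D Pythagorean theorem: sqrt(x^2 + y^2 + z^2)."""
    return math.sqrt(x * x + y * y + z * z)


def looks_stationary(accel, gyro, accel_tol=0.2, gyro_tol=0.05):
    """Rough check that the camera is at rest.

    accel: (ax, ay, az) in m/s^2; gyro: (wx, wy, wz) in rad/s.
    At rest the accelerometer reads only gravity, so its magnitude is close
    to 1 g, and the angular-velocity magnitude is close to zero.
    The tolerances are illustrative, not values from any real device.
    """
    accel_mag = vector_magnitude(*accel)
    gyro_mag = vector_magnitude(*gyro)
    return abs(accel_mag - STANDARD_GRAVITY) < accel_tol and gyro_mag < gyro_tol


print(vector_magnitude(3.0, 4.0, 12.0))                        # 13.0
print(looks_stationary((0.1, -0.2, 9.79), (0.01, 0.0, 0.02)))  # True
print(looks_stationary((2.0, 0.5, 9.6), (0.4, 0.1, 0.0)))      # False
```

Because only magnitudes are compared, the check does not depend on how the camera is oriented relative to gravity.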

Stereo Vision and Depth Perception

Stereo cameras, which use two or more lenses to capture a scene from slightly different perspectives, are a prime example of geometric principles applied to image understanding. By measuring the disparity, the horizontal offset between corresponding points in the left and right images, a system can generate a depth map. For a rectified camera pair, depth follows from similar triangles: $Z = fB/d$, where $f$ is the focal length in pixels, $B$ is the baseline between the cameras, and $d$ is the disparity. Depth itself is therefore not an $a^2 + b^2$ calculation. Once a point has been triangulated into coordinates $(X, Y, Z)$, however, its straight-line range from the camera is $\sqrt{X^2 + Y^2 + Z^2}$. The accuracy of feature matching and the robustness of depth estimation rely on precise spatial understanding built on these geometric principles.
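The sketch below triangulates one matched pixel from a rectified stereo pair and then computes its range. It assumes the standard pinhole model, in which depth comes from similar triangles as $Z = fB/d$ (focal length $f$ in pixels, baseline $B$, disparity $d$ in pixels), and $X$ and $Y$ follow from the pixel's offset relative to the principal point. The calibration numbers and function names are hypothetical.

```python
import math


def triangulate(u, v, disparity_px, focal_px, baseline_m, cx, cy):
    """Back-project a pixel from a rectified stereo pair to camera coordinates.

    u, v: pixel position in the left image
    disparity_px: horizontal offset of the match in the right image, in pixels
    focal_px: focal length in pixels; baseline_m: distance between cameras
    cx, cy: principal point (optical centre) in pixels
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    z = focal_px * baseline_m / disparity_px  # depth from similar triangles
    x = (u - cx) * z / focal_px
    y = (v - cy) * z / focal_px
    return x, y, z


def range_to_point(x, y, z):
    """Straight-line distance from the left camera centre to the point."""
    return math.sqrt(x * x + y * y + z * z)


# Hypothetical calibration: f = 700 px, baseline = 0.1 m, centre at (640, 360).
x, y, z = triangulate(1165, 360, 35, 700, 0.1, 640, 360)
print(z, range_to_point(x, y, z))  # depth ≈ 2.0 m, range ≈ 2.5 m
```

For a point away from the image center, range and depth differ. In this example the point sits 1.5 m to the side at 2.0 m depth, so its range is the hypotenuse, 2.5 m.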

The Future of Imaging: Quantification and Precision

As imaging technology continues to evolve, driven by advancements in AI, computational photography, and sensor technology, the underlying mathematical principles that enable precise measurement and understanding become even more critical. The seemingly simple question of “what is a square plus b square” points to a fundamental building block that underpins the sophisticated capabilities of modern cameras and imaging systems, enabling them to not just capture light, but to truly understand and interpret the visual world with unprecedented accuracy and detail.

Computational Photography and AI

Computational photography uses sophisticated algorithms to enhance images beyond the limitations of the hardware. Techniques such as High Dynamic Range (HDR) imaging, computational deblurring, and AI-powered scene understanding rely on analyzing pixel data to infer information about the scene. The magnitude of changes in pixel values, the strength of gradients, and the variance across color channels are fundamental inputs to many of these algorithms, and all of them are computed by squaring and summing components in the same way as $a^2 + b^2$. AI models trained on large datasets learn to extract comparable features and use them to reconstruct and enhance images with greater detail and realism.
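As one small example of "variance across color channels", the sketch below computes the standard deviation of a pixel's R, G, and B values. Exposure fusion, one HDR-style technique, uses this kind of per-pixel spread as a saturation weight that favors vividly colored pixels over washed-out ones. The sketch assumes channel values scaled to the range 0 to 1, and the function name is hypothetical.

```python
import math


def channel_spread(r, g, b):
    """Standard deviation of a pixel's R, G, B values (0..1 scale).

    Near zero for greys; larger for strongly coloured pixels.
    """
    mean = (r + g + b) / 3.0
    # Variance: mean of squared deviations, i.e. a scaled sum of squares
    variance = ((r - mean) ** 2 + (g - mean) ** 2 + (b - mean) ** 2) / 3.0
    return math.sqrt(variance)


print(channel_spread(0.5, 0.5, 0.5))  # 0.0 for a neutral grey
print(channel_spread(0.0, 0.5, 1.0))  # ≈ 0.408, a saturated colour
```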

Precision Measurement in Scientific and Industrial Imaging

Beyond consumer applications, imaging systems are increasingly used for precise measurement in scientific research, industrial inspection, and medical diagnostics. Whether it’s measuring the size of microscopic structures, detecting defects in manufactured components, or quantifying tissue characteristics in medical scans, accuracy is paramount. The ability to accurately calculate distances, areas, and volumes from image data directly relies on robust geometric and trigonometric principles. The $a^2 + b^2$ relationship is a foundational element in ensuring the reliability and precision of these critical measurements.
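A typical inspection measurement converts pixel coordinates into physical units before applying the Pythagorean theorem. The sketch below measures the length of a traced feature, such as a scratch on a part, as the sum of its straight segments. It assumes the image has already been corrected for lens distortion and calibrated to a known object-space pixel size, which may differ between the x and y axes. The function name and numbers are hypothetical.

```python
import math


def polyline_length_mm(points_px, mm_per_px_x, mm_per_px_y):
    """Physical length of a traced outline, given a calibrated pixel size.

    points_px: (x, y) pixel coordinates along the feature, in order
    mm_per_px_x, mm_per_px_y: pixel pitch in object space along each axis;
        these may differ if the pixels are not square after calibration
    """
    total = 0.0
    for (x0, y0), (x1, y1) in zip(points_px, points_px[1:]):
        dx_mm = (x1 - x0) * mm_per_px_x  # horizontal leg, in mm
        dy_mm = (y1 - y0) * mm_per_px_y  # vertical leg, in mm
        total += math.hypot(dx_mm, dy_mm)
    return total


# Hypothetical scratch traced through three points at 0.05 mm per pixel.
scratch = [(100, 200), (130, 240), (130, 300)]
print(polyline_length_mm(scratch, 0.05, 0.05))  # ≈ 5.5 mm (2.5 + 3.0)
```

The scaling is applied to each leg before squaring. With non-square pixels, scaling the pixel distance afterwards would give the wrong answer.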

Pushing the Boundaries of Visual Fidelity

Ultimately, the quest for higher resolution, better low-light performance, and more accurate color reproduction in imaging systems is a continuous pursuit of visual fidelity. At the core of achieving these advancements are sophisticated algorithms and sensor designs that meticulously analyze and process the light captured. The mathematical elegance of squaring and summing components to determine magnitude, a concept embodied by “a square plus b square,” remains a vital tool in the engineer’s arsenal, enabling the creation of imaging systems that can see and interpret the world with ever-increasing clarity and insight.