In the realm of linear algebra, the concept of a “pivot” is fundamental. It’s a cornerstone in understanding matrix operations, solving systems of linear equations, and comprehending the underlying structure of vector spaces. While the term might initially sound abstract, its practical implications are far-reaching, impacting fields from computer graphics and data analysis to engineering and scientific computing. For anyone engaging with these disciplines, a solid grasp of pivots is not just beneficial, but essential.

The Role of Pivots in Gaussian Elimination

The most direct and perhaps most intuitive place to encounter pivots is during the process of Gaussian elimination, a systematic algorithm used to transform a matrix into row-echelon form or reduced row-echelon form. These forms simplify matrices, making it easier to extract information and solve associated problems.

Understanding Row-Echelon Form

A matrix is in row-echelon form if it satisfies three conditions:

- All non-zero rows are above any rows of all zeros.

- The leading entry (the first non-zero element from the left, also known as the pivot element) of a non-zero row is always strictly to the right of the leading entry of the row above it.

- All entries in a column below a leading entry are zeros.

The pivots are precisely these leading non-zero entries in each non-zero row of a matrix in row-echelon form. They are the “anchors” that define the structure and rank of the matrix.
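The conditions above can be checked mechanically. The following is a minimal sketch, assuming the matrix is stored as a list of rows; the helper names `leading_index`, `is_row_echelon`, and `pivots` are illustrative and do not come from any library. The third condition needs no separate test, because it follows once zero rows sit at the bottom and each leading entry lies strictly to the right of the one above.

```python
def leading_index(row):
    """Return the column index of the first non-zero entry, or None for a zero row."""
    for j, value in enumerate(row):
        if value != 0:
            return j
    return None


def is_row_echelon(matrix):
    """Check the row-echelon conditions on a list-of-rows matrix."""
    previous_lead = -1
    seen_zero_row = False
    for row in matrix:
        lead = leading_index(row)
        if lead is None:
            seen_zero_row = True
            continue
        # A non-zero row below a zero row violates the first condition.
        if seen_zero_row:
            return False
        # Each leading entry must lie strictly to the right of the one above.
        if lead <= previous_lead:
            return False
        previous_lead = lead
    return True


def pivots(matrix):
    """List (row, column, value) for each pivot of a matrix already in echelon form."""
    found = []
    for i, row in enumerate(matrix):
        lead = leading_index(row)
        if lead is not None:
            found.append((i, lead, row[lead]))
    return found


echelon = [[2, 1, -1], [0, 3, 5], [0, 0, 0]]
not_echelon = [[1, 2], [3, 4]]
print(is_row_echelon(echelon))      # True
print(is_row_echelon(not_echelon))  # False
print(pivots(echelon))              # [(0, 0, 2), (1, 1, 3)]
```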

The Pivot Element and Column

When performing Gaussian elimination, we select a non-zero element in a matrix as a pivot. Typically, we start with the first non-zero entry in the leftmost non-zero column, swapping rows if necessary so that it sits in the top row. This element, and the row it resides in, are crucial for eliminating other entries in the same column.

The pivot element is the non-zero entry used to create zeros in the column below it. After an element has served as a pivot and all entries below it in its column have been made zero, that element and its corresponding row take on a special significance.

A pivot column is a column in a matrix that contains a pivot element. Identifying pivot columns is vital for understanding the properties of a linear system. The number of pivot columns is equal to the rank of the matrix, which directly informs us about the number of linearly independent rows or columns.

The Elimination Process

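The process of Gaussian elimination involves using row operations to transform a matrix. The core operations involving a pivot are multiplying a row by a non-zero scalar and adding a multiple of one row to another. Swapping two rows is also permitted, and is needed when the entry in the intended pivot position is zero.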

- Selection: Choose a non-zero entry as the pivot. Ideally, this is the first non-zero entry in the current row being processed.

- Normalization (Optional but often helpful): Divide the pivot row by the pivot element to make the pivot element equal to 1. This step is required for reduced row-echelon form, where every pivot is 1.

- Elimination: For every other row below the pivot row, subtract a suitable multiple of the pivot row from that row. The multiple is chosen such that the entry in the pivot column of that other row becomes zero.

This systematic procedure ensures that each pivot element effectively “clears out” its column, progressively simplifying the matrix.
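The steps above can be sketched in a few lines of Python. This is an illustrative sketch rather than a production routine: it uses exact `Fraction` arithmetic to avoid rounding, skips the optional normalization step, and swaps rows when the entry in the pivot position is zero (an assumption beyond the three steps listed). The function name `row_echelon` and the sample matrix are hypothetical.

```python
from fractions import Fraction


def row_echelon(matrix):
    """Forward elimination. Returns (echelon_rows, pivot_columns)."""
    rows = [[Fraction(x) for x in row] for row in matrix]
    n_rows = len(rows)
    n_cols = len(rows[0]) if rows else 0
    pivot_columns = []
    pivot_row = 0
    for col in range(n_cols):
        if pivot_row == n_rows:
            break
        # Selection: first row at or below pivot_row with a non-zero entry here.
        candidate = next(
            (r for r in range(pivot_row, n_rows) if rows[r][col] != 0), None
        )
        if candidate is None:
            continue  # no pivot in this column; move one column to the right
        rows[pivot_row], rows[candidate] = rows[candidate], rows[pivot_row]
        pivot = rows[pivot_row][col]
        # Elimination: clear every entry below the pivot.
        for r in range(pivot_row + 1, n_rows):
            factor = rows[r][col] / pivot
            if factor != 0:
                rows[r] = [a - factor * b for a, b in zip(rows[r], rows[pivot_row])]
        pivot_columns.append(col)
        pivot_row += 1
    return rows, pivot_columns


sample = [[1, 2, 1], [2, 4, 0], [3, 6, 1]]
echelon, pivot_cols = row_echelon(sample)
print([[str(x) for x in row] for row in echelon])
# [['1', '2', '1'], ['0', '0', '-2'], ['0', '0', '0']]
print(pivot_cols)  # [0, 2]
```

In this sample the second column has no pivot, because it is twice the first column, so the procedure moves on to the third column without advancing to a new row.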

Example: Gaussian Elimination and Pivots

Consider the matrix:

$$ A = \begin{pmatrix} 2 & 1 & -1 \\ -3 & -1 & 2 \\ -2 & 1 & 2 \end{pmatrix} $$

First Pivot: The top-left element, 2, can be our first pivot.

- Divide Row 1 by 2: $ R_1 \leftarrow \frac{1}{2} R_1 $

$$ \begin{pmatrix} 1 & 1/2 & -1/2 \\ -3 & -1 & 2 \\ -2 & 1 & 2 \end{pmatrix} $$

- Eliminate entries below the pivot: $ R_2 \leftarrow R_2 + 3R_1 $ and $ R_3 \leftarrow R_3 + 2R_1 $

$$ \begin{pmatrix} 1 & 1/2 & -1/2 \\ 0 & 1/2 & 1/2 \\ 0 & 2 & 1 \end{pmatrix} $$

Second Pivot: The next pivot is the leading non-zero entry in the second row, which is 1/2.

- Divide Row 2 by 1/2: $ R_2 \leftarrow 2R_2 $

$$ \begin{pmatrix} 1 & 1/2 & -1/2 \\ 0 & 1 & 1 \\ 0 & 2 & 1 \end{pmatrix} $$

- Eliminate entries below the pivot: $ R_3 \leftarrow R_3 - 2R_2 $

$$ \begin{pmatrix} 1 & 1/2 & -1/2 \\ 0 & 1 & 1 \\ 0 & 0 & -1 \end{pmatrix} $$

Third Pivot: The leading non-zero entry in the third row is -1. This is our third pivot.

- Divide Row 3 by -1: $ R_3 \leftarrow -R_3 $

$$ \begin{pmatrix} 1 & 1/2 & -1/2 \\ 0 & 1 & 1 \\ 0 & 0 & 1 \end{pmatrix} $$

The matrix is now in row-echelon form. The pivot elements are 1 (in row 1), 1 (in row 2), and 1 (in row 3). The pivot columns are the first, second, and third columns.

If we were to continue to reduced row-echelon form, we would use these pivots to create zeros above them as well.
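As a sketch of that continuation, start from the row-echelon form $ \begin{pmatrix} 1 & 1/2 & -1/2 \\ 0 & 1 & 1 \\ 0 & 0 & 1 \end{pmatrix} $ and clear the entries above each pivot, working from the last pivot upward. The order of operations shown here is one choice among several.

$$ R_2 \leftarrow R_2 - R_3, \quad R_1 \leftarrow R_1 + \tfrac{1}{2} R_3: \qquad \begin{pmatrix} 1 & 1/2 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{pmatrix} $$

$$ R_1 \leftarrow R_1 - \tfrac{1}{2} R_2: \qquad \begin{pmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{pmatrix} $$

The reduced row-echelon form is the identity matrix, which is what one expects for a $3 \times 3$ matrix with three pivots.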

Pivots and the Solution of Linear Systems

The concept of pivots is intrinsically linked to solving systems of linear equations represented in matrix form, $Ax = b$. Gaussian elimination, with its focus on pivots, is the primary method for doing so.

Consistent vs. Inconsistent Systems

The presence and position of pivots tell us whether a system of linear equations has a solution and how many solutions it has.

- Consistency: A system is consistent if it has at least one solution. In the context of Gaussian elimination, a system $Ax=b$ is inconsistent if, after transforming the augmented matrix $[A|b]$ to row-echelon form, we obtain a row that represents the equation $0 = c$, where $c$ is a non-zero constant. This signifies a contradiction (e.g., $0x_1 + 0x_2 = 5$). Such a row, with all zeros in the coefficient part and a non-zero entry on the right, arises exactly when the last column of the augmented matrix contains a pivot.

- Inconsistency Example: Consider the system:

$x + y = 3$

$x + y = 5$

The augmented matrix is $ \left(\begin{array}{cc|c} 1 & 1 & 3 \\ 1 & 1 & 5 \end{array}\right) $.

Subtracting Row 1 from Row 2 yields $ \left(\begin{array}{cc|c} 1 & 1 & 3 \\ 0 & 0 & 2 \end{array}\right) $.

The second row, $0x + 0y = 2$, shows inconsistency. The first pivot is in the first column. The leading entry of the second row is the 2 in the last column (representing $b$), so the last column contains a pivot in a row whose coefficient part is all zeros. That is precisely the signal of inconsistency.

Free Variables and Unique Solutions

In a system $Ax=b$, after performing Gaussian elimination on the augmented matrix $[A|b]$ to reach row-echelon form, the variables corresponding to the pivot columns are called basic variables. The variables corresponding to columns without pivots are called free variables.

- Unique Solution: A consistent system has a unique solution if and only if every column of the coefficient matrix $A$ contains a pivot after row reduction. This means there are no free variables.

- Infinitely Many Solutions: A consistent system has infinitely many solutions if there is at least one free variable. Free variables can be assigned any value, and these choices will determine the values of the basic variables. The number of free variables is $n - r$, where $n$ is the number of variables and $r$ is the rank of the matrix (which equals the number of pivots).

- No Solution: As discussed, this occurs when the system is inconsistent.

The positions of the pivots directly dictate which variables are dependent (basic) and which are independent (free), thereby controlling the nature of the solution set.
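The three cases can be read off the pivot columns of the augmented matrix. The sketch below assumes the augmented matrix $[A|b]$ is given as a list of rows with $b$ in the last column; `pivot_columns` and `classify_system` are hypothetical helper names, and the return labels are chosen only for illustration.

```python
from fractions import Fraction


def pivot_columns(matrix):
    """Pivot column indices found by forward elimination (exact arithmetic)."""
    rows = [[Fraction(x) for x in row] for row in matrix]
    found, pivot_row = [], 0
    for col in range(len(rows[0])):
        candidate = next(
            (r for r in range(pivot_row, len(rows)) if rows[r][col] != 0), None
        )
        if candidate is None:
            continue
        rows[pivot_row], rows[candidate] = rows[candidate], rows[pivot_row]
        for r in range(pivot_row + 1, len(rows)):
            factor = rows[r][col] / rows[pivot_row][col]
            rows[r] = [a - factor * b for a, b in zip(rows[r], rows[pivot_row])]
        found.append(col)
        pivot_row += 1
    return found


def classify_system(augmented):
    """Classify [A|b] as 'none', 'unique', or 'infinite'."""
    n_vars = len(augmented[0]) - 1
    found = pivot_columns(augmented)
    if n_vars in found:        # pivot in the b column: some row reads 0 = c
        return "none"
    if len(found) == n_vars:   # every variable column has a pivot
        return "unique"
    return "infinite"          # n_vars - len(found) free variables remain


print(classify_system([[1, 1, 3], [1, 1, 5]]))   # none
print(classify_system([[1, 1, 3], [1, -1, 1]]))  # unique
print(classify_system([[1, 1, 3], [2, 2, 6]]))   # infinite
```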

Pivots and Matrix Rank

The concept of a pivot is intrinsically linked to the rank of a matrix. The rank of a matrix is a fundamental property that tells us about the dimensionality of the vector space spanned by its rows (row space) or columns (column space).

Defining Rank via Pivots

The rank of a matrix $A$, denoted as $\operatorname{rank}(A)$, is precisely equal to the number of non-zero rows in its row-echelon form. Since each non-zero row in row-echelon form begins with a unique pivot element, the rank of a matrix is also equal to the number of pivot elements in its row-echelon form.

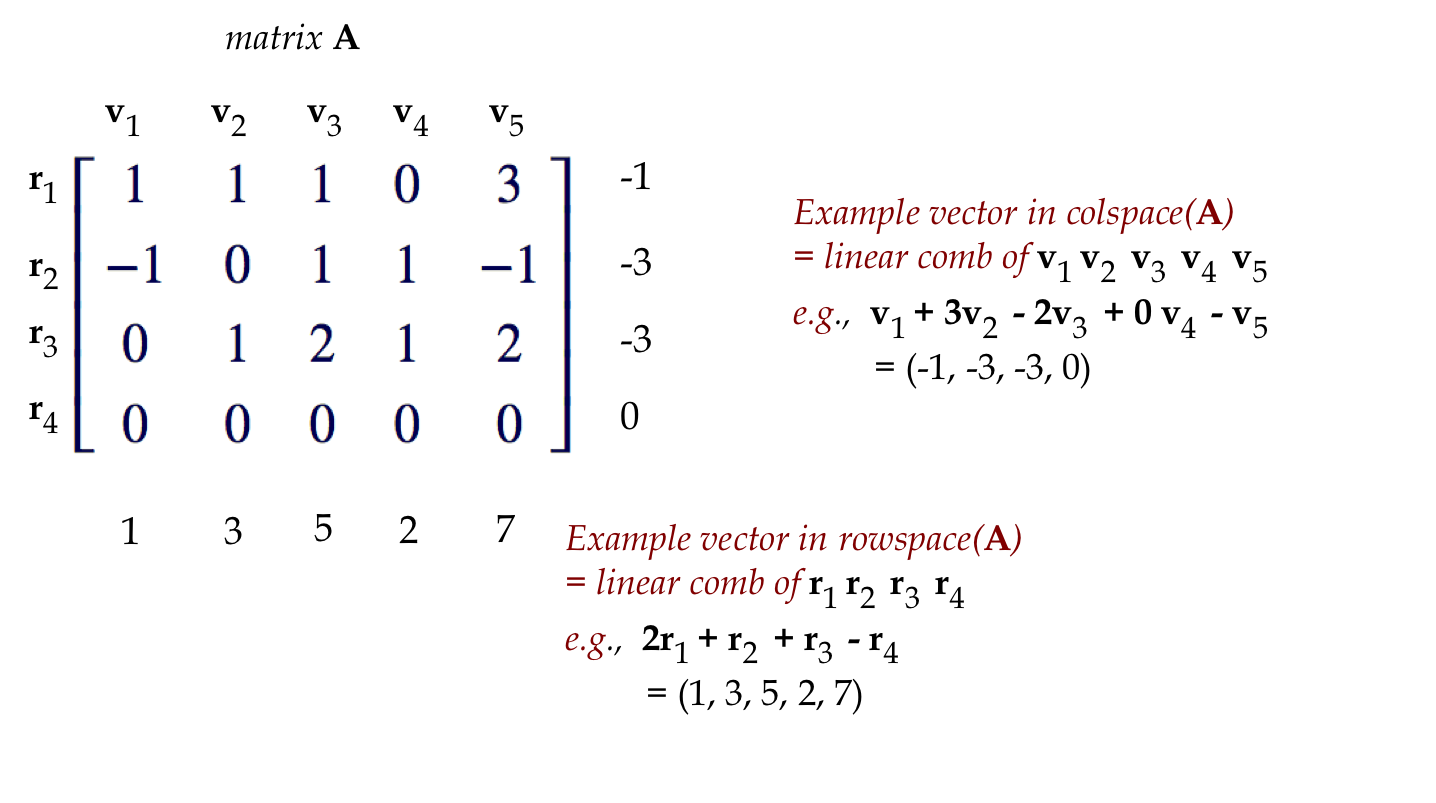

- Row Rank: The dimension of the row space of a matrix is equal to its rank. The non-zero rows in the row-echelon form of a matrix form a basis for its row space.

- Column Rank: The dimension of the column space of a matrix is also equal to its rank. The pivot columns of the original matrix $A$ form a basis for its column space. This is a crucial distinction: we identify the pivot columns in the row-reduced form to know which columns in the original matrix form the basis.
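A small worked example may make the distinction concrete. The matrix below is chosen purely for illustration; $U$ denotes one row-echelon form of $A$, obtained with $R_2 \leftarrow R_2 - 2R_1$, $R_3 \leftarrow R_3 - 3R_1$, and then $R_3 \leftarrow R_3 - R_2$.

$$ A = \begin{pmatrix} 1 & 2 & 1 \\ 2 & 4 & 0 \\ 3 & 6 & 1 \end{pmatrix} \quad \longrightarrow \quad U = \begin{pmatrix} 1 & 2 & 1 \\ 0 & 0 & -2 \\ 0 & 0 & 0 \end{pmatrix} $$

The pivots of $U$ sit in columns 1 and 3, so $\operatorname{rank}(A) = 2$. A basis for the column space is taken from columns 1 and 3 of $A$ itself, and a basis for the row space from the non-zero rows of $U$:

$$ \text{column space: } \left\{ \begin{pmatrix} 1 \\ 2 \\ 3 \end{pmatrix}, \begin{pmatrix} 1 \\ 0 \\ 1 \end{pmatrix} \right\}, \qquad \text{row space: } \left\{ (1, 2, 1),\ (0, 0, -2) \right\} $$

The columns of $U$ would not work as a column-space basis: each has a zero third entry, while the columns of $A$ do not. The non-pivot column of $A$ is dependent on the first, since it equals twice column 1.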

Significance of Rank

The rank of a matrix has profound implications:

- Linear Independence: The rank of a matrix $A$ is the maximum number of linearly independent rows (or columns) in $A$. If $A$ is an $m \times n$ matrix, its rank can be at most $\min(m, n)$.

- Square Matrices and Invertibility: An $n \times n$ square matrix $A$ is invertible if and only if its rank is $n$. This means that all $n$ columns (and rows) are linearly independent, and its reduced row-echelon form is the identity matrix. In this case, there will be $n$ pivots. If the rank is less than $n$, the matrix is singular (non-invertible).

- Solutions to $Ax=0$: The null space (or kernel) of a matrix $A$ is the set of all vectors $x$ such that $Ax=0$. The dimension of the null space (nullity) is related to the rank by the Rank-Nullity Theorem: $\operatorname{rank}(A) + \operatorname{nullity}(A) = n$, where $n$ is the number of columns in $A$. The nullity is equal to the number of free variables in the system $Ax=0$.

Pivots in the Context of Vector Spaces and Transformations

Beyond solving equations, pivots illuminate the structure of vector spaces and the behavior of linear transformations.

Basis of a Vector Space

A basis for a vector space is a set of linearly independent vectors that span the entire space. The concept of pivots helps in identifying bases for the fundamental subspaces associated with a matrix: the column space, row space, null space, and left null space.

- Basis for Column Space: As mentioned, the pivot columns of the original matrix $A$ form a basis for its column space.

- Basis for Row Space: The non-zero rows of the row-echelon form of $A$ form a basis for its row space.

- Basis for Null Space: By solving $Ax=0$ using Gaussian elimination and identifying free variables (corresponding to non-pivot columns), we can construct a basis for the null space. Each free variable, when set to 1 while others are 0, generates a basis vector for the null space.
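The null-space construction can be sketched as follows. This is an illustrative sketch with exact `Fraction` arithmetic: `rref` and `null_space_basis` are hypothetical helper names, and the sample matrix is chosen only to produce two free variables.

```python
from fractions import Fraction


def rref(matrix):
    """Reduced row-echelon form and pivot columns, using exact fractions."""
    rows = [[Fraction(x) for x in row] for row in matrix]
    pivots, pivot_row = [], 0
    for col in range(len(rows[0])):
        candidate = next(
            (r for r in range(pivot_row, len(rows)) if rows[r][col] != 0), None
        )
        if candidate is None:
            continue
        rows[pivot_row], rows[candidate] = rows[candidate], rows[pivot_row]
        pivot = rows[pivot_row][col]
        rows[pivot_row] = [x / pivot for x in rows[pivot_row]]  # make the pivot 1
        for r in range(len(rows)):  # clear entries above and below the pivot
            if r != pivot_row and rows[r][col] != 0:
                factor = rows[r][col]
                rows[r] = [a - factor * b for a, b in zip(rows[r], rows[pivot_row])]
        pivots.append(col)
        pivot_row += 1
    return rows, pivots


def null_space_basis(matrix):
    """One basis vector per free variable: that variable is 1, other free ones 0."""
    reduced, pivots = rref(matrix)
    n_cols = len(matrix[0])
    free = [c for c in range(n_cols) if c not in pivots]
    basis = []
    for f in free:
        vector = [Fraction(0)] * n_cols
        vector[f] = Fraction(1)
        # Row i of the RREF reads: x_pivot + (coefficients of free variables) = 0.
        for i, p in enumerate(pivots):
            vector[p] = -reduced[i][f]
        basis.append(vector)
    return basis


A = [[1, 2, 0, 1], [2, 4, 1, 5]]
basis = null_space_basis(A)
print([[str(x) for x in v] for v in basis])
# [['-2', '1', '0', '0'], ['-1', '0', '-3', '1']]
```

Here the pivots are in columns 1 and 3, so the rank is 2 and the nullity is 2, in line with $\operatorname{rank}(A) + \operatorname{nullity}(A) = 4$.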

Linear Transformations

A linear transformation $T: V \to W$ maps vectors from one vector space $V$ to another vector space $W$. This mapping can be represented by a matrix. Understanding the pivots of this matrix reveals key properties of the transformation.

- Injectivity (One-to-One): A linear transformation $T$ is injective if its null space contains only the zero vector. For a matrix representation $A$, this means the null space dimension (nullity) is 0, which implies $\operatorname{rank}(A) = n$ (the number of columns). This corresponds to having a pivot in every column of $A$.

- Surjectivity (Onto): A linear transformation $T$ is surjective if its image (the set of all possible output vectors) is equal to the entire codomain $W$. For a matrix $A$ representing $T: \mathbb{R}^n \to \mathbb{R}^m$, this means the dimension of the column space (rank) must be equal to the dimension of the codomain $m$. This implies that every row of the row-echelon form of $A$ contains a pivot, so there are no zero rows, and no augmented system $[A|b]$ can reduce to a row of the form $0 = c$ with $c \neq 0$.

- Isomorphism: If a linear transformation is both injective and surjective, it is an isomorphism. For square matrices, this means the matrix is invertible, its rank is $n$, and it represents a transformation that preserves the essential structure of the vector space. All $n$ columns will have pivots.
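A short worked example, using a matrix chosen only for illustration, shows how the two properties can come apart. Take $T: \mathbb{R}^2 \to \mathbb{R}^3$ represented by $A$, and reduce it with $R_3 \leftarrow R_3 - R_1$ followed by $R_3 \leftarrow R_3 - R_2$:

$$ A = \begin{pmatrix} 1 & 0 \\ 0 & 1 \\ 1 & 1 \end{pmatrix} \quad \longrightarrow \quad \begin{pmatrix} 1 & 0 \\ 0 & 1 \\ 0 & 0 \end{pmatrix} $$

Both columns contain a pivot, so $\operatorname{rank}(A) = 2 = n$ and $T$ is injective. The third row has no pivot, so $\operatorname{rank}(A) = 2 < m = 3$ and $T$ is not surjective. For instance, $b = (0, 0, 1)^T$ is not in the image, because $Ax = b$ would require $x_1 = 0$, $x_2 = 0$, and $x_1 + x_2 = 1$ at the same time.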

In essence, pivots act as markers that delineate the essential, independent components of linear systems and transformations, providing clarity on solvability, uniqueness, dimensionality, and structure. Their systematic identification through algorithms like Gaussian elimination makes them indispensable tools in linear algebra and its myriad applications.