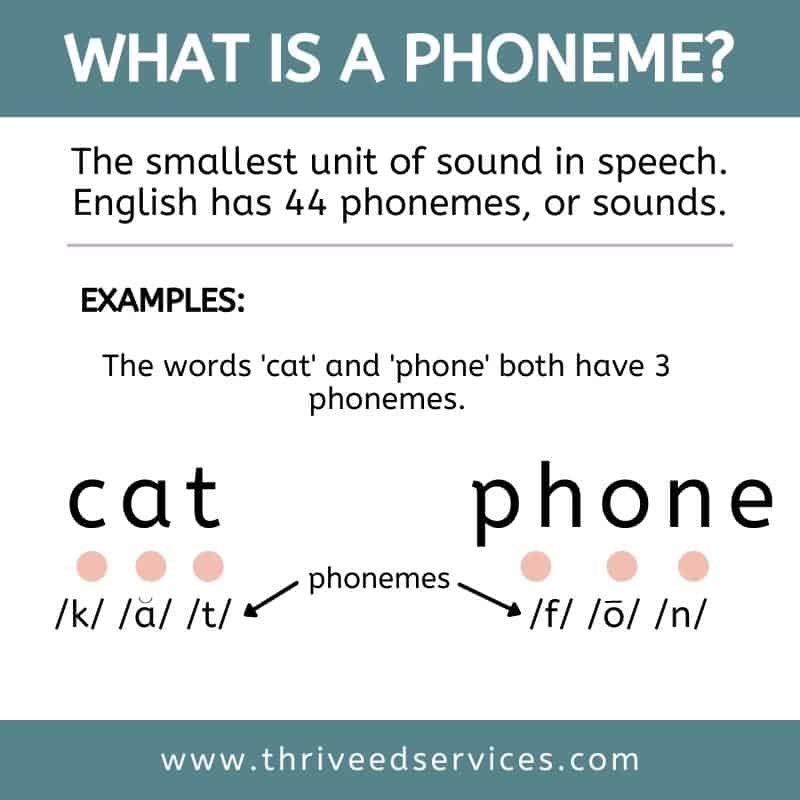

In the realm of linguistics, a phoneme is defined as the smallest unit of sound that can distinguish meaning in a language. It’s a foundational concept, allowing us to break down complex speech into discernible components that, when combined, form words, sentences, and ultimately, coherent communication. But what relevance could such a term possibly hold for the cutting-edge world of drone technology, particularly in areas like AI, autonomous flight, and remote sensing?

The answer lies in metaphor: the search for fundamental units within complex systems. Just as language relies on phonemes as its basic building blocks of meaning, advanced drone systems, especially those driven by artificial intelligence, operate on what we might term “technological phonemes.” These are the smallest meaningful units of data, perception, command, or decision that, when combined, allow a drone to perceive its environment, make autonomous choices, execute intricate maneuvers, and communicate effectively. This article explores how these “phonemes” appear across drone systems, from sensory input interpretation to autonomous action and inter-system communication. Examining these foundational elements clarifies the architectures underpinning modern drone capabilities.

The AI’s Sensory “Phonemes”: Decoding Environmental Input

For an autonomous drone, the world arrives as a flood of data streams: visual, auditory, thermal, spatial, and more. To make sense of it, the drone’s AI must segment, interpret, and assign meaning to these raw inputs. This process is analogous to how our brains interpret a continuous stream of sound as distinct linguistic phonemes. The AI identifies specific, critical units of information that, when processed, form a comprehensive understanding of its surroundings. These are the sensory “phonemes” of drone intelligence.

Sensor Fusion as Information Synthesis

Modern drones are equipped with an array of sensors, each providing a unique perspective on the environment. High-resolution RGB cameras capture visual details, LiDAR scanners map 3D structures with precision, thermal cameras detect heat signatures, and ultrasonic sensors gauge proximity. Individually, each sensor generates vast amounts of data. The challenge for autonomous systems lies in sensor fusion – combining these disparate data streams into a single, coherent, and actionable understanding.

Here, the “phonemes” are not the raw pixels or individual LiDAR points, but rather the interpreted, meaningful features extracted from these data streams by the drone’s processing unit. For instance, a cluster of LiDAR points indicating a sudden change in elevation might be fused with visual data showing a tree trunk. The “phoneme” isn’t just the height data or the visual texture; it’s the fused understanding of “obstacle present, type: tree, dimensions: X, Y, Z.” This fused unit of information becomes a distinct, meaningful “phoneme” that the AI can then use for navigation and decision-making. Without this sophisticated synthesis, the drone would be overwhelmed by noise, much like trying to understand speech without discerning individual sounds.
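As a rough illustration of that fused unit, the sketch below merges one LiDAR cluster with one camera detection into a single obstacle record. The `VisualDetection` and `Obstacle` types, the `fuse` function, the coordinate frame, and both thresholds are hypothetical choices made for this example, not part of any particular drone stack.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in metres; x forward, y left (assumed frame)


@dataclass
class VisualDetection:
    label: str          # class name from a hypothetical vision model
    confidence: float   # 0.0 to 1.0
    bearing_deg: float  # horizontal angle to the detection


@dataclass
class Obstacle:
    kind: str                               # e.g. "tree", or "unknown"
    dimensions: Tuple[float, float, float]  # extent along x, y, z in metres
    bearing_deg: float


def fuse(cluster: List[Point], detection: VisualDetection,
         max_bearing_gap_deg: float = 10.0,
         min_confidence: float = 0.5) -> Optional[Obstacle]:
    """Merge one LiDAR cluster and one visual detection into one obstacle record."""
    if not cluster:
        return None
    # Bearing of the cluster centroid, measured from the forward (x) axis.
    cx = sum(p[0] for p in cluster) / len(cluster)
    cy = sum(p[1] for p in cluster) / len(cluster)
    bearing = math.degrees(math.atan2(cy, cx))
    # Bounding-box extent of the cluster along each axis.
    dims = tuple(max(p[i] for p in cluster) - min(p[i] for p in cluster)
                 for i in range(3))
    # Accept the camera's label only if both sensors point the same way.
    agrees = abs(bearing - detection.bearing_deg) <= max_bearing_gap_deg
    confident = detection.confidence >= min_confidence
    kind = detection.label if agrees and confident else "unknown"
    return Obstacle(kind=kind, dimensions=dims, bearing_deg=bearing)


trunk = [(5.0, -0.2, 0.0), (5.0, 0.2, 0.0), (5.4, 0.0, 6.0)]
obstacle = fuse(trunk, VisualDetection("tree", 0.9, 2.0))
print(obstacle.kind, obstacle.dimensions)
```

The sketch ignores bearing wrap-around near ±180 degrees and matches only one cluster to one detection. A real fusion pipeline would also handle timing, calibration, and many simultaneous objects.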

Identifying Critical Data Units (Visual, Lidar, Thermal)

Delving deeper, let’s consider the nature of these critical data units across different sensor types.

In visual processing, a “phoneme” could be a detected edge, a specific texture pattern, or a recognized object (e.g., another drone, a power line, a human). Advanced computer vision algorithms, often leveraging convolutional neural networks (CNNs), are designed to identify these fundamental visual “features” that differentiate one object or environmental condition from another. For example, the unique shape of a drone propeller, distinct from a bird’s wing, might be a visual “phoneme” crucial for object identification and collision avoidance.
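To make the idea of a detected edge concrete, here is a minimal sketch that uses a hand-written Sobel filter on a tiny grayscale grid. It is a stand-in for illustration only: a CNN learns its own filters rather than using fixed ones, and the function names and threshold below are assumptions of this example.

```python
import math
from typing import List, Tuple

Image = List[List[float]]  # grayscale intensities, indexed as image[row][column]


def sobel_magnitude(image: Image) -> Image:
    """Gradient magnitude at each interior pixel; border pixels are left at 0."""
    height, width = len(image), len(image[0])
    out = [[0.0] * width for _ in range(height)]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            # Horizontal gradient: right column minus left column.
            gx = ((image[y - 1][x + 1] + 2 * image[y][x + 1] + image[y + 1][x + 1])
                  - (image[y - 1][x - 1] + 2 * image[y][x - 1] + image[y + 1][x - 1]))
            # Vertical gradient: bottom row minus top row.
            gy = ((image[y + 1][x - 1] + 2 * image[y + 1][x] + image[y + 1][x + 1])
                  - (image[y - 1][x - 1] + 2 * image[y - 1][x] + image[y - 1][x + 1]))
            out[y][x] = math.hypot(gx, gy)
    return out


def edge_pixels(image: Image, threshold: float) -> List[Tuple[int, int]]:
    """Return (x, y) positions whose gradient magnitude reaches the threshold."""
    magnitude = sobel_magnitude(image)
    return [(x, y)
            for y, row in enumerate(magnitude)
            for x, value in enumerate(row)
            if value >= threshold]


# A dark region on the left and a bright region on the right.
image = [[0, 0, 0, 10, 10, 10] for _ in range(5)]
edges = edge_pixels(image, threshold=20.0)
print(edges)
```

In this toy image the only strong responses lie along the vertical boundary between the dark and bright halves, which is the kind of low-level feature later stages build on.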

For LiDAR data, a “phoneme” might be a precisely measured distance to a surface, a recognized planar surface, or a clustered set of points forming a distinct geometric primitive (e.g., a sphere representing a rock, a cylinder representing a pole). These are the basic spatial components that the drone uses to build its internal 3D map of the world, identifying navigable paths versus obstructions.
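One simple way to obtain such clustered point sets is distance-based grouping, sketched below. The `cluster_points` function and the `max_gap` value are hypothetical, and the brute-force neighbor search is chosen for readability; production systems typically use a spatial index and then fit primitives to each cluster.

```python
import math
from collections import deque
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in metres


def cluster_points(points: Sequence[Point], max_gap: float) -> List[List[int]]:
    """Group point indices so that each point is within max_gap of a cluster mate."""
    unvisited = set(range(len(points)))
    clusters = []
    while unvisited:
        seed = min(unvisited)
        unvisited.remove(seed)
        queue = deque([seed])
        members = [seed]
        while queue:
            i = queue.popleft()
            # Brute-force neighbor search over the points not yet assigned.
            near = [j for j in unvisited
                    if math.dist(points[i], points[j]) <= max_gap]
            for j in near:
                unvisited.remove(j)
                queue.append(j)
                members.append(j)
        clusters.append(sorted(members))
    return clusters


scan = [(0.0, 0.0, 0.0), (0.3, 0.0, 0.0), (0.6, 0.0, 0.0),  # a short wall segment
        (5.0, 5.0, 0.0), (5.2, 5.0, 0.0)]                  # a separate pole
clusters = cluster_points(scan, max_gap=0.5)
print(clusters)
```

Points 0 and 2 are farther apart than `max_gap`, yet they end up in the same cluster because point 1 links them. That chaining is what lets a long surface register as one object.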

In thermal imaging, a “phoneme” could be a discrete hot spot indicating a living creature, a specific temperature gradient signaling a leak, or a pattern of heat dissipation that identifies a malfunctioning component. These thermal “phonemes” are vital for search and rescue operations, wildlife monitoring, or industrial inspection, where visual cues might be obscured.
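A discrete hot spot can be extracted with a threshold followed by a flood fill, as in the sketch below. The function name, the temperature threshold, and the sample frame are illustrative assumptions; a real thermal pipeline would also calibrate the sensor and compensate for ambient temperature.

```python
from collections import deque
from typing import List, Tuple


def find_hot_spots(frame: List[List[float]], threshold_c: float) -> List[Tuple[int, float]]:
    """Return (pixel_count, peak_temperature) for each 4-connected hot region."""
    rows, cols = len(frame), len(frame[0])
    seen = [[False] * cols for _ in range(rows)]
    spots = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or frame[r][c] < threshold_c:
                continue
            # Flood-fill outward from this hot pixel.
            seen[r][c] = True
            queue = deque([(r, c)])
            size, peak = 0, frame[r][c]
            while queue:
                y, x = queue.popleft()
                size += 1
                peak = max(peak, frame[y][x])
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and not seen[ny][nx] and frame[ny][nx] >= threshold_c:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            spots.append((size, peak))
    return spots


# Temperatures in degrees Celsius; two warm regions on a cool background.
frame = [
    [20.0, 21.0, 20.0, 20.0],
    [20.0, 37.0, 38.0, 20.0],
    [20.0, 20.0, 20.0, 20.0],
    [36.0, 20.0, 20.0, 20.0],
]
hot_spots = find_hot_spots(frame, threshold_c=35.0)
print(hot_spots)
```

Each returned pair is a compact summary of one region, which is closer to the “phoneme” described above than the raw pixel values are.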

The drone’s AI constantly works to extract, categorize, and prioritize these critical “phonemes” from its sensory input, creating an internal “language” through which it comprehends its operational environment.

Command “Phonemes”: Shaping Autonomous Actions

Beyond understanding its environment, an autonomous drone must act within it. This requires translating its internal comprehension into a series of precise, executable commands. Here, “command phonemes” represent the fundamental, indivisible units of instruction or decision that drive the drone’s physical behavior and strategic choices. These are the equivalent of the motor commands that allow us to articulate speech, transforming abstract thought into tangible action.

Granular Control Inputs and Motor Responses

At the most fundamental level, a multirotor drone’s flight is controlled by varying the speed of its individual motors, which changes the thrust and reaction torque of each propeller and, through them, the vehicle’s attitude and direction of travel. Each minor adjustment, such as a small increment in one motor’s speed, can be considered a “granular control input.” These are the raw, physical “phonemes” of drone motion.

However, the AI doesn’t directly issue thousands of individual motor commands. Instead, it operates at a higher level of abstraction. A “command phoneme” here would be a pre-defined, elementary action or a specific adjustment parameter that results in a predictable physical response. For example, “increase altitude by 1 meter,” “move forward 0.5 meters,” or “rotate yaw by 5 degrees.” These are composite “phonemes” that encapsulate multiple underlying motor adjustments but represent a single, meaningful directive to the flight controller.

The drone’s flight control system then translates these higher-level “command phonemes” into the precise, real-time motor signals necessary to execute the desired maneuver. The accuracy and responsiveness of this translation are paramount for stable and agile autonomous flight.
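The translation from a high-level directive to motor signals can be sketched in two steps: a proportional altitude step that produces a throttle value, and a quad-X mixer that spreads throttle, roll, pitch, and yaw demands across four motors. The motor ordering, sign conventions, hover throttle, and gain are all assumptions of this example; real flight controllers use cascaded PID loops and airframe-specific mixing tables.

```python
from typing import List


def clamp(value: float, low: float = 0.0, high: float = 1.0) -> float:
    """Keep a normalized motor or throttle value inside its valid range."""
    return max(low, min(high, value))


def altitude_step_to_throttle(current_alt_m: float, target_alt_m: float,
                              hover_throttle: float = 0.5,
                              gain: float = 0.2) -> float:
    """Proportional-only throttle for an altitude change (assumed gains)."""
    return clamp(hover_throttle + gain * (target_alt_m - current_alt_m))


def mix_quad_x(throttle: float, roll: float, pitch: float, yaw: float) -> List[float]:
    """Map normalized demands to four motor outputs in the range 0.0 to 1.0.

    Assumed order: front-left, front-right, rear-right, rear-left.
    Assumed signs: positive roll raises the left motors, positive pitch
    raises the front motors, positive yaw raises front-right and rear-left.
    """
    raw = [
        throttle + roll + pitch - yaw,  # front-left
        throttle - roll + pitch + yaw,  # front-right
        throttle - roll - pitch - yaw,  # rear-right
        throttle + roll - pitch + yaw,  # rear-left
    ]
    return [clamp(v) for v in raw]


# "Increase altitude by 1 meter" from 10 m, with no attitude change requested.
throttle = altitude_step_to_throttle(current_alt_m=10.0, target_alt_m=11.0)
motors = mix_quad_x(throttle, roll=0.0, pitch=0.0, yaw=0.0)
print(throttle, motors)
```

A single directive therefore fans out into four coordinated motor values, which is why the text treats the directive, not the individual motor signal, as the meaningful unit.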

AI-Driven Decision Tree Units

More complex than simple physical movements are the strategic decisions an autonomous drone makes. In scenarios like autonomous navigation, obstacle avoidance, or target tracking, the AI constantly evaluates its sensory “phonemes” and generates corresponding “command phonemes” based on its mission objectives and programmed rules. These decision-making processes can be conceptualized as navigating a complex “decision tree.”

Each branch point or logical step in this tree, where the drone chooses one path of action over another based on its current understanding, constitutes a “decision tree unit” or a higher-order “command phoneme.” For example:

- “IF obstacle detected AHEAD, THEN initiate evasive maneuver LEFT.”

- “IF battery LOW, THEN return to charging station.”

- “IF target LOST, THEN commence search pattern.”

These are not merely reactive statements; they are the fundamental rules and choices that form the drone’s operational logic. They represent the AI’s internal “grammar,” dictating how it constructs a sequence of actions from its understanding of the environment. As AI models become more sophisticated with deep learning and reinforcement learning, these “decision tree units” evolve from explicit programming to learned behaviors, allowing the drone to adapt its “command phonemes” to novel situations.
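The three rules listed above can be written as an ordered rule table, shown here as a minimal sketch of the explicitly programmed case. The `DroneState` fields, the action names, the 20 percent battery threshold, and the priority order are assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class DroneState:
    obstacle_ahead: bool
    battery_pct: float
    target_visible: bool


Rule = Tuple[Callable[[DroneState], bool], str]

# Rules are checked top to bottom, so earlier entries take priority (assumed order).
RULES: List[Rule] = [
    (lambda s: s.obstacle_ahead, "EVADE_LEFT"),
    (lambda s: s.battery_pct < 20.0, "RETURN_TO_CHARGER"),
    (lambda s: not s.target_visible, "START_SEARCH_PATTERN"),
]


def decide(state: DroneState) -> str:
    """Return the action of the first rule whose condition holds."""
    for condition, action in RULES:
        if condition(state):
            return action
    return "CONTINUE_MISSION"


print(decide(DroneState(obstacle_ahead=False, battery_pct=15.0, target_visible=True)))
```

Keeping the rules in a list makes the priority explicit: when an obstacle is ahead and the battery is low at the same time, the first matching rule wins. A learned policy would replace this table with a function fitted from experience.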

Communication “Phonemes”: Inter-Drone and Human-Drone Interaction

Beyond a single drone’s internal processing, its ability to interact with other drones and human operators is increasingly vital for complex missions. This interaction involves distinct “communication phonemes” – the standardized, meaningful units of data exchanged to convey status, coordinate actions, or receive directives. These are the elements that form a shared “language” between intelligent agents.

Protocol Elements in Swarm Intelligence

Swarm intelligence represents a frontier in drone innovation, where multiple autonomous drones collaborate to achieve a common goal that would be impossible or impractical for a single drone. This requires robust and efficient inter-drone communication. The “communication phonemes” in this context are the discrete messages and signals exchanged according to established protocols.

These “phonemes” can include:

- Position updates: A drone broadcasting its precise GPS coordinates to the swarm.

- Task assignments: The leader drone distributing specific sub-tasks to individual members.

- Sensor data sharing: One drone transmitting its unique sensory “phonemes” (e.g., a visual identification of a target) to the rest of the swarm.

- Status reports: Drones communicating battery levels, system health, or mission progress.

- Collision avoidance warnings: Peer-to-peer alerts about potential spatial conflicts.

Each of these message types, formulated according to a predefined communication protocol (e.g., MAVLink, custom mesh network protocols), acts as a distinct “phoneme” that contributes to the collective understanding and coordinated action of the swarm. The clarity and unambiguous nature of these “phonemes” are critical for maintaining coherence and preventing chaotic behavior in large drone formations. They form the lexicon of a distributed, multi-agent intelligence.
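The sketch below shows one way such discrete message types might be represented and validated. It uses a made-up JSON encoding purely for illustration; it is not the MAVLink wire format, and the `SwarmMessage` class, the type names, and the payload fields are hypothetical.

```python
import json
from dataclasses import asdict, dataclass

# The five message categories from the list above (names are assumptions).
MESSAGE_TYPES = {"POSITION", "TASK", "SENSOR", "STATUS", "COLLISION_WARNING"}


@dataclass
class SwarmMessage:
    msg_type: str
    sender_id: int
    payload: dict


def encode(message: SwarmMessage) -> bytes:
    """Serialize a message, rejecting types outside the shared vocabulary."""
    if message.msg_type not in MESSAGE_TYPES:
        raise ValueError(f"unknown message type: {message.msg_type}")
    return json.dumps(asdict(message), sort_keys=True).encode("utf-8")


def decode(raw: bytes) -> SwarmMessage:
    """Parse bytes back into a message, applying the same vocabulary check."""
    data = json.loads(raw.decode("utf-8"))
    if data.get("msg_type") not in MESSAGE_TYPES:
        raise ValueError(f"unknown message type: {data.get('msg_type')}")
    return SwarmMessage(msg_type=data["msg_type"],
                        sender_id=int(data["sender_id"]),
                        payload=dict(data["payload"]))


update = SwarmMessage("POSITION", 3, {"lat": 47.3977, "lon": 8.5456, "alt_m": 30.0})
received = decode(encode(update))
print(received)
```

Rejecting unknown types on both ends is what keeps the vocabulary unambiguous: a drone never has to guess what a peer meant.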

Interpreting Human Command “Tokens”

For human operators, interacting with drones involves issuing commands and receiving feedback. In this human-drone interface, “communication phonemes” are the distinct “tokens” of information exchanged. For the drone, this might involve interpreting a voice command, a gesture, a touch-screen input, or a manual joystick movement as a specific, actionable instruction.

For example, a human saying “Go home” translates into a complex sequence of internal “command phonemes” for the drone. The phrase itself acts as a high-level “communication phoneme” from the human to the drone. Similarly, a drone displaying a specific blinking light pattern, an icon on a ground control station, or an auditory alert is sending “communication phonemes” back to the human operator, conveying its status, warnings, or mission updates.
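The “Go home” example can be sketched as a two-stage lookup: the utterance is normalized into an intent token, and the token expands into a sequence of lower-level commands. The phrase list, token names, and command sequences below are hypothetical, and exact-match lookup stands in for real natural language processing.

```python
from typing import Dict, List

# Spoken phrase -> intent token (illustrative vocabulary).
INTENTS: Dict[str, str] = {
    "go home": "RETURN_TO_HOME",
    "return home": "RETURN_TO_HOME",
    "land": "LAND",
    "take off": "TAKEOFF",
}

# Intent token -> ordered internal commands (illustrative sequences).
EXPANSIONS: Dict[str, List[str]] = {
    "RETURN_TO_HOME": ["CLIMB_TO_SAFE_ALTITUDE", "FLY_TO_HOME_POSITION",
                       "DESCEND", "TOUCH_DOWN"],
    "LAND": ["DESCEND", "TOUCH_DOWN"],
    "TAKEOFF": ["ARM_MOTORS", "CLIMB_TO_SAFE_ALTITUDE"],
}


def normalize(utterance: str) -> str:
    """Lowercase, trim, drop trailing punctuation, and collapse whitespace."""
    return " ".join(utterance.lower().strip().strip(".!?").split())


def interpret(utterance: str) -> List[str]:
    """Return the command sequence for an utterance, or [] if not understood."""
    token = INTENTS.get(normalize(utterance))
    return list(EXPANSIONS[token]) if token else []


print(interpret("Go home!"))
```

Returning an empty list for unrecognized input is a deliberate choice here: an ambiguous instruction results in no action rather than a guessed one.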

The development of intuitive user interfaces, natural language processing for voice control, and clear visual/auditory feedback mechanisms are all about refining these human-drone “communication phonemes,” making them as clear, efficient, and unambiguous as possible. This ensures that the human’s intent is correctly translated into drone action, and the drone’s status is accurately understood by the operator.

Learning and Adaptation: Evolving the “Phonemic” Repertoire

Perhaps the most fascinating aspect of advanced drone technology is its capacity for learning and adaptation. Just as a language’s sound system changes over time, with phonemes merging, splitting, or dropping out of use, so too do the “phonemes” of drone intelligence evolve through continuous learning. This adaptation allows drones to perform better in novel environments and to refine their perception and actions over time.

Reinforcement Learning and Behavioral Units

Reinforcement learning (RL) is a powerful paradigm in AI where an agent learns to make optimal decisions by interacting with an environment and receiving rewards or penalties. In the context of drones, an RL agent might be tasked with learning efficient navigation in a cluttered environment or developing optimal flight patterns for energy conservation.

Here, the “behavioral units” learned through reinforcement can be considered emergent “phonemes.” Initially, the drone might explore random actions. Through trial and error, it discovers sequences of actions that lead to rewards (e.g., successfully reaching a destination, avoiding a collision, conserving battery). These successful action sequences, or even the fundamental components of those sequences, become ingrained as effective “behavioral phonemes.” For instance, a drone might learn that a particular sensor interpretation (a sensory “phoneme”) followed by a specific combination of motor inputs (a control “phoneme”) consistently leads to a desired outcome.

Over time, the drone builds a sophisticated “vocabulary” of these learned “behavioral phonemes,” allowing it to construct increasingly complex and adaptive flight strategies. These are no longer hard-coded commands but dynamically learned response patterns that have proven effective.
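A toy version of this learning loop is sketched below using tabular Q-learning, one common reinforcement learning method. The five-cell corridor, the reward values, and every hyperparameter are assumptions chosen to keep the example small; real drone RL works with continuous states and far richer simulators.

```python
import random
from typing import List, Tuple

N_STATES = 5                 # positions 0..4 along a corridor; 4 is the goal
ACTIONS = ["left", "right"]


def step(state: int, action: str) -> Tuple[int, float, bool]:
    """Deterministic toy environment: returns (next_state, reward, done)."""
    if action == "left":
        next_state = max(0, state - 1)
    else:
        next_state = min(N_STATES - 1, state + 1)
    done = next_state == N_STATES - 1
    reward = 1.0 if done else -0.01   # small cost per step, bonus at the goal
    return next_state, reward, done


def train(episodes: int = 500, alpha: float = 0.5, gamma: float = 0.9,
          epsilon: float = 0.2, seed: int = 0) -> List[List[float]]:
    """Learn a table q[state][action_index] by epsilon-greedy trial and error."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _episode in range(episodes):
        state = 0
        for _step in range(100):      # cap on steps per episode
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))                     # explore
            else:
                a = max(range(len(ACTIONS)), key=lambda i: q[state][i])  # exploit
            next_state, reward, done = step(state, ACTIONS[a])
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
            if done:
                break
    return q


def greedy_policy(q: List[List[float]]) -> List[str]:
    """Best-known action for each non-goal state."""
    return [ACTIONS[max(range(len(ACTIONS)), key=lambda i: q[s][i])]
            for s in range(N_STATES - 1)]


policy = greedy_policy(train())
print(policy)
```

The table starts with no preference between actions, and the preference for moving toward the goal emerges only from rewarded experience. That is the sense in which a “behavioral phoneme” is learned rather than programmed.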

Predictive Analytics and Anticipatory “Phonemes”

Advanced drones are not merely reactive; they are increasingly predictive. Using sophisticated algorithms and analyzing historical data, drones can anticipate future events, environmental changes, or the movements of other agents. This capability gives rise to what we might call “anticipatory phonemes” – fundamental units of predictive insight that inform proactive decision-making.

For example, in a dense urban environment, a drone might analyze traffic patterns, weather forecasts, and historical air current data to predict potential congestion points or turbulence zones before they occur. The identification of a specific combination of these factors – “heavy traffic + high winds + building canyon effect” – acts as an “anticipatory phoneme” that triggers a proactive re-routing strategy before the drone even encounters the actual conditions.

Similarly, in remote sensing for agriculture, analyzing historical soil moisture data, weather patterns, and crop growth rates allows a drone to predict areas that will require irrigation or pest control in the near future. The “phoneme” here is the predictive insight itself: “specific area X will show water stress in 48 hours.” This enables precise, targeted actions, optimizing resource use and improving efficiency. These anticipatory “phonemes” are crucial for truly intelligent and proactive autonomous systems, moving beyond mere reaction to informed foresight.
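The water-stress example can be illustrated with the simplest possible forecast: fit a straight line to recent soil moisture readings and extrapolate to the time it crosses a stress threshold. The readings, the 22 percent threshold, and the function names are invented for this sketch, and a linear trend is a strong simplifying assumption; an operational model would also use weather and crop data.

```python
from typing import Optional, Sequence, Tuple


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit; returns (slope, intercept)."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two paired readings")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    spread = sum((x - mean_x) ** 2 for x in xs)
    if spread == 0:
        raise ValueError("readings must span more than one point in time")
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / spread
    return slope, mean_y - slope * mean_x


def hours_until_threshold(times_h: Sequence[float], moisture_pct: Sequence[float],
                          threshold_pct: float) -> Optional[float]:
    """Hours after the last reading until the trend line reaches the threshold.

    Returns None when moisture is not falling, so no crossing is predicted.
    """
    slope, intercept = fit_line(times_h, moisture_pct)
    if slope >= 0:
        return None
    crossing_time = (threshold_pct - intercept) / slope
    return max(0.0, crossing_time - times_h[-1])


# Four daily readings for a hypothetical field zone.
times_h = [0.0, 24.0, 48.0, 72.0]
moisture_pct = [32.0, 30.0, 28.0, 26.0]
lead_time_h = hours_until_threshold(times_h, moisture_pct, threshold_pct=22.0)
print(lead_time_h)
```

With these readings the moisture falls by 2 percentage points per day, so the trend line reaches 22 percent about 48 hours after the last reading. That lead time is the predictive insight the drone would act on.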

Conclusion

The journey from a linguistic concept to a metaphorical framework for understanding drone technology underscores the depth and complexity of modern innovation. By abstracting “phonemes” as the fundamental units of meaning, command, and communication within autonomous drone systems, we gain a valuable lens through which to analyze their workings. A drone decodes the sensory “phonemes” of its environment and translates them into precise “command phonemes” for action. “Communication phonemes” underpin swarm intelligence and human-drone interaction, while “behavioral” and “anticipatory phonemes” emerge through learning and predictive analytics. Each aspect highlights the modularity and sophistication of these systems.

As drone technology continues to advance, pushing the boundaries of autonomy, artificial intelligence, and human-machine collaboration, our understanding of these foundational “phonemes” will become increasingly critical. Discerning these fundamental units allows engineers and researchers to design more robust, intelligent, and adaptable systems, ultimately paving the way for drones that can communicate, learn, and operate in our world with unprecedented capability and insight. The language of drones, like any language, is built upon its fundamental units of meaning, and in the dynamic world of tech and innovation, these are its emergent, technological “phonemes.”