Unmanned aerial vehicle (UAV) technology and linguistics might seem like distant fields. However, as artificial intelligence and autonomous flight advance, the “modal verb,” traditionally a staple of grammar used to express possibility, necessity, and permission, has become a useful metaphor for how drones interpret and execute complex tasks. To understand what a modal verb is in this context is to understand the hierarchical decision-making that allows a drone to navigate a cluttered environment, prioritize safety protocols, and fulfill mission objectives without human intervention.

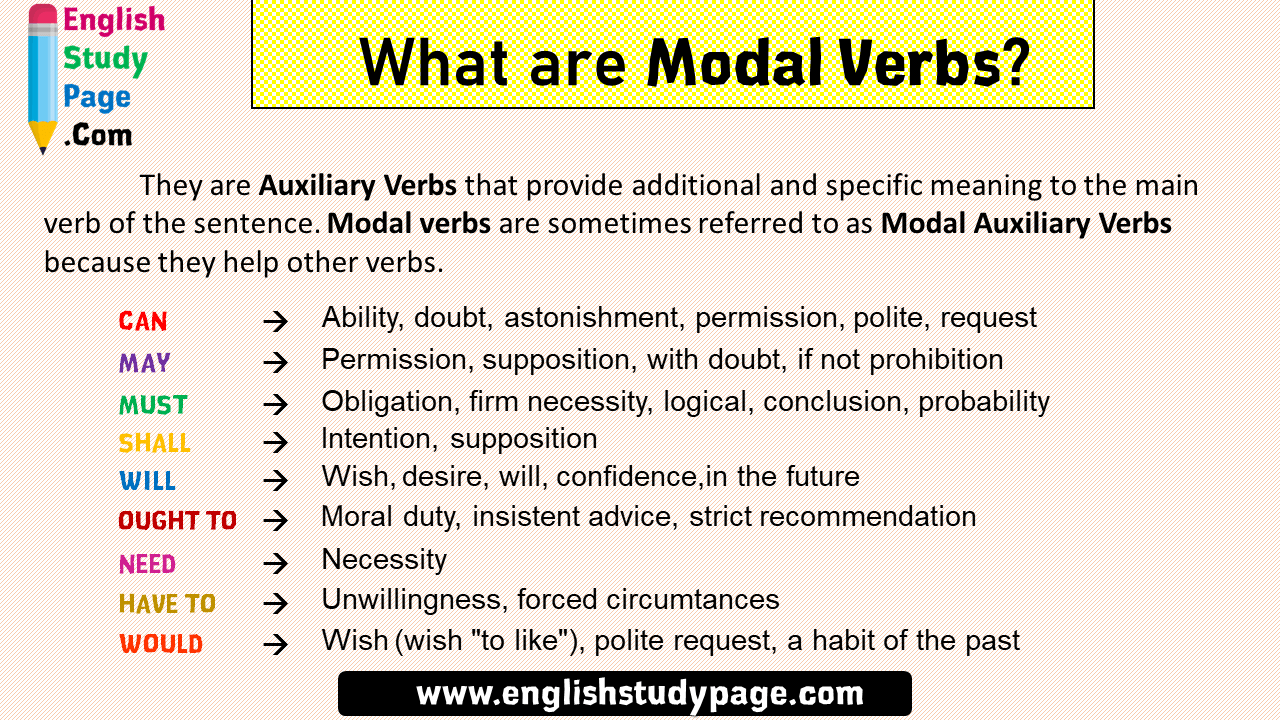

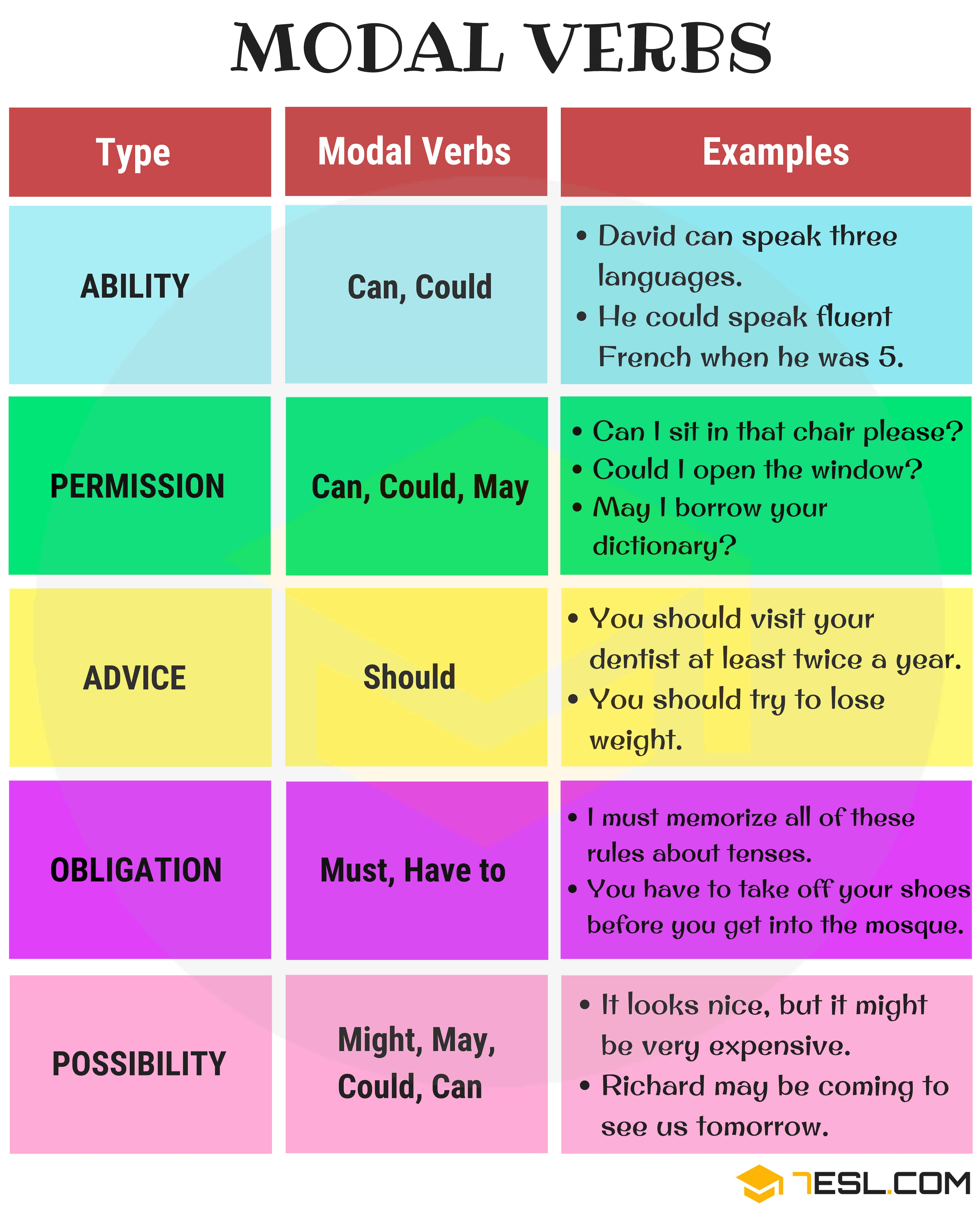

In autonomous systems, modal verbs like must, should, can, and may are translated into lines of code and probabilistic algorithms. These “modalities” define the constraints and capabilities of a drone’s AI. As we delve into the nuances of AI follow modes, remote sensing, and autonomous pathfinding, we see that the future of drone innovation is built upon a digital syntax that mirrors human language’s ability to weigh certainty against possibility.

The Syntax of Autonomy: How Drones Process Commands

At its core, an autonomous drone operates through a series of conditional checks. In traditional programming, these were often simple if-then rules: if X happens, do Y. With more sophisticated AI in the UAV sector, the logic has become “modal.” The drone isn’t just following a static script; it is evaluating its current operating mode and the necessity of each action in real time.

Understanding Modal Logic in UAV Programming

Modal logic, a branch of formal logic, provides a way to express “necessity” and “possibility.” Applied loosely to a drone’s flight controller, it gives a framework in which the drone can distinguish between a command it must follow (such as a hardware fail-safe) and a task it should perform (such as maintaining a specific framing in aerial filmmaking).

This is particularly crucial in autonomous mapping and remote sensing. A drone equipped with LiDAR technology must constantly evaluate its environment. Using modal logic, the system determines that it must maintain a specific altitude to ensure data accuracy, while it can deviate from its path if it detects a temporary obstacle like a bird or a moving crane. This fluid decision-making is what separates basic automation from true innovation in flight technology.
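The must/can split described above can be sketched in Python as a filter followed by a ranking. This is only an illustration: the names (`Candidate`, `satisfies_musts`, `choose`) and the thresholds are hypothetical and do not come from any real flight controller.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Candidate:
    name: str
    altitude_m: float        # altitude the drone would hold
    clearance_m: float       # distance to the nearest detected obstacle
    path_deviation_m: float  # lateral deviation from the planned survey line


# Hypothetical thresholds, chosen only for illustration.
MIN_CLEARANCE_M = 5.0
ALTITUDE_BAND_M = (58.0, 62.0)


def satisfies_musts(c: Candidate) -> bool:
    """Hard constraints: any candidate that violates one is discarded."""
    low, high = ALTITUDE_BAND_M
    return c.clearance_m >= MIN_CLEARANCE_M and low <= c.altitude_m <= high


def choose(candidates: List[Candidate]) -> Optional[Candidate]:
    """Among candidates that pass every 'must', prefer the smallest deviation."""
    feasible = [c for c in candidates if satisfies_musts(c)]
    if not feasible:
        return None  # the caller would fall back to a fail-safe such as hovering
    return min(feasible, key=lambda c: c.path_deviation_m)


options = [
    Candidate("stay_on_line", 60.0, 2.0, 0.0),  # too close to the obstacle
    Candidate("sidestep", 60.0, 8.0, 4.0),      # leaves the line, keeps altitude
    Candidate("climb_over", 70.0, 12.0, 0.0),   # breaks the altitude band
]
best = choose(options)
print(best.name if best else "no feasible option")
```

Here the drone is allowed to leave its path (a “can”) only because doing so keeps both hard constraints intact.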

The Hierarchy of ‘Must’ and ‘Shall’ in Flight Control

In the documentation for flight safety systems and autonomous protocols, the terms “must” and “shall” are more than just words—they are the highest priority flags in the system’s architecture. These represent the hard constraints of the drone’s operational envelope.

For instance, if a drone’s battery drops below a certain threshold, the AI triggers a “must” command: “The drone must return to the home point.” This command overrides all other sub-tasks. Innovation in this space focuses on how these “musts” are communicated across swarms or integrated into complex missions. By refining the “modalities” of the flight code, developers can create drones that are more resilient to unpredictable environmental variables, ensuring that safety is never sacrificed for the sake of mission completion.
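As a rough sketch of this override behavior, the arbiter below checks the battery fail-safe before it looks at any mission request. The `Modality` levels, the `Request` type, and the 25 percent threshold are assumptions made for the example, not values from a specific autopilot.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Iterable


class Modality(IntEnum):
    MAY = 0
    SHOULD = 1
    MUST = 2


@dataclass(frozen=True)
class Request:
    modality: Modality
    action: str


RTH_BATTERY_PCT = 25.0  # hypothetical return-to-home threshold


def arbitrate(battery_pct: float, mission_requests: Iterable[Request]) -> str:
    """Pick one action; the battery fail-safe outranks every mission request."""
    if battery_pct < RTH_BATTERY_PCT:
        return "return_to_home"
    requests = list(mission_requests)
    if not requests:
        return "hover"
    # Otherwise the strongest modality wins (first one listed on a tie).
    return max(requests, key=lambda r: r.modality).action


print(arbitrate(20.0, [Request(Modality.SHOULD, "track_subject")]))
print(arbitrate(80.0, [Request(Modality.MAY, "orbit"),
                       Request(Modality.SHOULD, "track_subject")]))
```

Checking the fail-safe first, rather than adding it to the request list, keeps a mission-level “must” from ever tying with the safety rule.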

AI Follow Mode: Navigating the ‘Should’ and ‘Could’ of Dynamic Environments

One of the most prominent examples of innovation in the drone industry is AI follow mode, marketed under names such as DJI’s ActiveTrack. Here, the “modal verb” framework is applied to visual recognition and motion prediction. When a drone follows a subject, whether a mountain biker or a high-speed vehicle, it is constantly calculating what it could do to maintain the best angle versus what it should do to avoid obstacles.

Predictive Kinematics and Intent Recognition

Advanced drones use neural networks to predict where a subject will move next. This involves a modal calculation of possibility: “The subject might turn left based on current trajectory.” The drone’s AI doesn’t just react; it anticipates. This “might” is quantified through probabilistic models.
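One simple way to put a number on that “might” is sketched below. It is a deliberately small stand-in for the neural networks mentioned above: it fits a normal distribution to recent yaw-rate samples and reports the probability that the next yaw rate exceeds a threshold. The function name, the sign convention (positive means turning left), and the 5 degrees-per-second threshold are all assumptions.

```python
import math
from statistics import mean, stdev
from typing import Sequence


def prob_left_turn(yaw_rates_dps: Sequence[float], threshold_dps: float = 5.0) -> float:
    """P(next yaw rate > threshold) under a normal model of recent samples.

    Needs at least two samples; positive rates are taken to mean a left turn.
    """
    mu = mean(yaw_rates_dps)
    sigma = max(stdev(yaw_rates_dps), 1e-6)  # avoid dividing by zero
    z = (threshold_dps - mu) / sigma
    # Upper tail of the standard normal: 1 - Phi(z)
    return 0.5 * (1.0 - math.erf(z / math.sqrt(2.0)))


turning = prob_left_turn([10.0, 12.0, 11.0, 9.0, 13.0])
straight = prob_left_turn([-1.0, 1.0, 0.0, -2.0, 2.0])
print(f"turning subject: {turning:.3f}, straight subject: {straight:.3f}")
```

A planner could then treat a high probability as “will,” a middling one as “might,” and pre-position the drone accordingly.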

Using onboard sensors such as stereo cameras or LiDAR, with optical flow helping to estimate its own motion, the drone builds a 3D map of its surroundings. It evaluates the “can” factor: “I can fly through these trees, but I should fly above them to maintain a stronger GPS signal.” This layer of decision-making represents a significant step in autonomous flight, moving away from simple obstacle avoidance toward intelligent mission optimization.

Balancing Ambition with Ability: Power Management Constraints

Innovation in drone tech often focuses on the “can”—the capability of the machine. However, the most advanced AI systems are those that understand their own limitations. In follow modes, a drone must constantly assess its kinetic ability. Can the motors handle the current wind resistance? Is the gimbal’s stabilization sufficient for this speed?

Sophisticated drones use “modal” reasoning to throttle their own performance. If the system detects that it cannot safely maintain the required follow speed without risking a motor stall or a crash, it initiates a graceful degradation of the mission. It might widen the shot or slow down, prioritizing the “must” (safety) over the “should” (the specific shot).
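The self-throttling behavior described above might look something like the following sketch. The function `plan_follow`, the 12 m/s safe-airspeed figure, and the simple “ground speed plus headwind” model are illustrative assumptions, not a real performance envelope.

```python
from typing import Tuple


def plan_follow(required_speed_mps: float,
                headwind_mps: float,
                safe_airspeed_mps: float = 12.0) -> Tuple[float, str]:
    """Return (commanded ground speed, mode) without exceeding the safe airspeed.

    Assumes a pure headwind, so airspeed = ground speed + headwind.
    """
    required_airspeed = required_speed_mps + headwind_mps
    if required_airspeed <= safe_airspeed_mps:
        return required_speed_mps, "follow"
    # The 'should' (the exact shot) gives way to the 'must' (staying in the envelope).
    achievable = max(safe_airspeed_mps - headwind_mps, 0.0)
    return achievable, "degraded_wide_shot"


print(plan_follow(8.0, 2.0))
print(plan_follow(12.0, 4.0))
```

In the second call the subject needs 12 m/s over the ground, but a 4 m/s headwind leaves only 8 m/s within the assumed limit, so the drone slows down and switches to a wider shot.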

Remote Sensing and the Probability of ‘Might’

In mapping and remote sensing, the modal verb takes on a statistical meaning. When a drone is used for agricultural monitoring or structural inspection, it collects massive amounts of data, and the AI processing this data must deal with uncertainty.

Uncertainty in 3D Mapping and Photogrammetry

When creating a 3D digital twin of a bridge or a skyscraper, the drone’s sensors may encounter “noise” or gaps in data. Here, the AI uses modal logic to determine what might be there. If a photogrammetry algorithm sees a blur, it evaluates the probability of the structure’s shape. Innovation in this field involves reducing the “might” and moving toward the “is.”

Remote sensing tools like thermal imaging and multi-spectral sensors allow drones to see beyond the visible spectrum. The AI then makes modal assertions: “This area of the crop might be underwatered based on thermal signatures.” By categorizing these findings through degrees of certainty, the drone provides actionable intelligence rather than just raw data.
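Categorizing findings by degree of certainty can be as simple as mapping a model’s probability onto modal phrasing. The cut-off values and the `report` helper below are invented for illustration; a real system would calibrate them against ground-truth data.

```python
def modal_label(p: float) -> str:
    """Map a probability to a modal phrase (thresholds are illustrative)."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    if p >= 0.9:
        return "is"
    if p >= 0.6:
        return "probably is"
    if p >= 0.3:
        return "might be"
    return "is unlikely to be"


def report(zone: str, condition: str, p: float) -> str:
    """Build a human-readable finding that carries its own uncertainty."""
    return f"{zone} {modal_label(p)} {condition} (p={p:.2f})"


print(report("Zone B4", "underwatered", 0.42))
```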

Sensor Fusion as a Modal Interpreter

The most innovative drones don’t rely on a single sensor. They use “sensor fusion,” which is essentially a way of cross-referencing modal data. If the GPS says the drone is at coordinates X, Y, but the visual sensors say there must be an obstacle in the way, the system must resolve this conflict.

This resolution process is the heart of autonomous innovation. The drone weighs the reliability of its sensors (the “may” vs. the “must”) to decide on the safest course of action. High-end industrial drones now feature redundant IMUs and dual-compass systems specifically to provide a more stable modal framework for decision-making in high-interference environments.
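A common textbook way to weigh sensors by reliability is inverse-variance weighting, shown below for a single coordinate. This is a simplified stand-in for the fusion described above; the article does not say which method any particular drone uses, and production systems typically rely on fuller estimators such as Kalman filters. The variances in the example are made up.

```python
from typing import Tuple


def fuse(est_a: float, var_a: float, est_b: float, var_b: float) -> Tuple[float, float]:
    """Combine two independent estimates; the less noisy one gets more weight."""
    if var_a <= 0 or var_b <= 0:
        raise ValueError("variances must be positive")
    w_a = 1.0 / var_a
    w_b = 1.0 / var_b
    fused = (w_a * est_a + w_b * est_b) / (w_a + w_b)
    fused_var = 1.0 / (w_a + w_b)  # never larger than either input variance
    return fused, fused_var


# GPS reports x = 10.0 m (variance 4.0); vision reports x = 12.0 m (variance 1.0).
position, variance = fuse(10.0, 4.0, 12.0, 1.0)
print(f"fused x = {position:.2f} m, variance = {variance:.2f}")
```

The fused estimate lands closer to the vision reading because its variance is lower, which is the numerical form of trusting a “must” over a “may.”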

Human-Machine Interface: Using Language to Direct Flight

Looking ahead, the gap between human language and drone execution is narrowing. We are moving toward a world where the modal verb isn’t just a metaphor for logic, but the actual method of control.

Natural Language Processing in Commercial UAVs

Natural Language Processing (NLP) is beginning to appear in drone controllers and apps. Instead of using joysticks, a pilot could give complex, modal commands: “You should stay at least fifty feet away from the building, but you must get a clear shot of the HVAC unit.”

A drone’s ability to parse these linguistic nuances, distinguishing between a soft constraint (should) and a hard constraint (must), is a demanding test of AI development. It allows for more intuitive operation, reducing the training time for commercial pilots and allowing the drone to function as a true partner in the field rather than a mere tool.
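To make the hard/soft distinction concrete, here is a toy keyword-based classifier. It is far simpler than real NLP: it splits a command into clauses and labels each by the first modal verb it finds. The mapping in `MODAL_KINDS` and the function name are assumptions for this sketch.

```python
import re
from typing import List, Tuple

# Illustrative mapping; treating "can" and "may" as permissions is a simplification.
MODAL_KINDS = {
    "must": "hard",
    "shall": "hard",
    "should": "soft",
    "can": "permission",
    "may": "permission",
}


def classify_clauses(command: str) -> List[Tuple[str, str]]:
    """Split a command into clauses and tag each by its first modal verb."""
    clauses = re.split(r",\s*but\s+|;\s*|\.\s*", command.strip())
    tagged = []
    for clause in clauses:
        for word in re.findall(r"[a-z']+", clause.lower()):
            if word in MODAL_KINDS:
                tagged.append((MODAL_KINDS[word], clause.strip()))
                break  # only the first modal in a clause is considered
    return tagged


cmd = ("You should stay at least fifty feet away from the building, "
       "but you must get a clear shot of the HVAC unit.")
for kind, clause in classify_clauses(cmd):
    print(f"{kind}: {clause}")
```

A planner downstream could then treat “hard” clauses as filters and “soft” clauses as costs to minimize.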

Translating Intent into Action

The challenge in this innovation lies in context. A drone must understand that “you can land here” is a permission, not a command. If a pilot says, “You may return home if the wind picks up,” the drone must define the threshold of “wind picking up.” This requires a deep integration of environmental sensors and semantic understanding.

Innovators are currently working on “context-aware” AI that can interpret these modal commands by looking at historical flight data, local weather reports, and the specific parameters of the mission. This creates a more flexible and responsive system that mirrors human-to-human communication.

The Future of Modal Innovation in Drone Swarms

As we move toward drone swarms—hundreds of UAVs working in unison—the concept of modal logic becomes even more critical. In a swarm, the individual drone must manage not only its own “musts” and “shoulds” but also those of the collective.

Collaborative Logic and Shared Imperatives

In a search and rescue operation, a drone swarm uses collaborative modality. If Drone A discovers a target, it issues a “must” command to the rest of the swarm: “All units must converge on these coordinates.” Simultaneously, the individual drones must maintain their “should” constraints, such as maintaining a safe distance from one another to avoid mid-air collisions.
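The interplay between converging on a target and keeping a safe distance can be sketched as a velocity rule: steer toward the target, add a push away from any neighbor that is too close, then cap the speed. Everything here (the 2D simplification, `step_velocity`, the 3 m spacing, the 5 m/s limit) is hypothetical, and a real swarm would likely treat collision avoidance more strictly than this soft push.

```python
import math
from typing import Iterable, Tuple

Vec = Tuple[float, float]


def step_velocity(pos: Vec, target: Vec, neighbors: Iterable[Vec],
                  max_speed: float = 5.0, safe_dist: float = 3.0) -> Vec:
    """Velocity command: converge on the target while pushing away from close neighbors."""
    tx, ty = target[0] - pos[0], target[1] - pos[1]
    dist = math.hypot(tx, ty)
    if dist == 0:
        vx, vy = 0.0, 0.0
    else:
        vx, vy = tx / dist * max_speed, ty / dist * max_speed
    for nx, ny in neighbors:
        dx, dy = pos[0] - nx, pos[1] - ny
        d = math.hypot(dx, dy)
        if 0 < d < safe_dist:
            # The push grows as the neighbor gets closer.
            push = max_speed * (safe_dist - d) / safe_dist
            vx += dx / d * push
            vy += dy / d * push
    speed = math.hypot(vx, vy)
    if speed > max_speed:
        vx, vy = vx / speed * max_speed, vy / speed * max_speed
    return vx, vy


print(step_velocity((0.0, 0.0), (10.0, 0.0), []))
print(step_velocity((0.0, 0.0), (10.0, 0.0), [(0.0, 1.0)]))
```

With no neighbors the drone flies straight at the target; with a neighbor one meter to its side, the command bends away from that neighbor while still making progress.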

This hierarchical, multi-agent logic is the next frontier of tech and innovation. It requires immense processing power and low-latency communication (often via 5G or dedicated mesh networks). By perfecting the way drones communicate necessity and possibility, we can unlock the potential for large-scale autonomous operations, from environmental monitoring to complex urban delivery systems.

In conclusion, the modal verb in the world of drones is the bridge between human intent and robotic execution. It represents the transition from simple, reflexive machines to intelligent, proactive systems capable of navigating the complexities of the real world. As AI continues to evolve, the “modalities” of drone flight will become increasingly sophisticated, allowing for a level of autonomy that was once the stuff of science fiction.