In the rapidly evolving field of unmanned aerial systems (UAS), innovation is what drives progress. As drones move from niche tools to everyday assets across many industries, demand grows for more sophisticated, autonomous, and integrated operational frameworks. “Mochaccino” emerges in this context, not as a beverage but as the codename for a multi-layered framework designed to extend drone capabilities beyond conventional boundaries.

The “Mochaccino” framework represents a confluence of artificial intelligence, advanced sensor fusion, real-time data analytics, and autonomous decision-making, designed to create a comprehensive operational intelligence layer for complex drone missions. It’s an architectural paradigm shift, moving beyond singular, task-specific drone functions to an integrated ecosystem where data, intelligence, and execution are seamlessly interwoven. This holistic approach addresses the growing complexities of autonomous flight, making drones more intuitive, resilient, and adaptive in dynamic environments. By blending diverse technological elements, “Mochaccino” aims to deliver a richer, more nuanced, and highly performant operational experience, pushing the frontiers of what drones can achieve in critical applications like advanced mapping, infrastructure inspection, environmental monitoring, and disaster response.

The Genesis of Mochaccino: Blending Innovation in Autonomous Systems

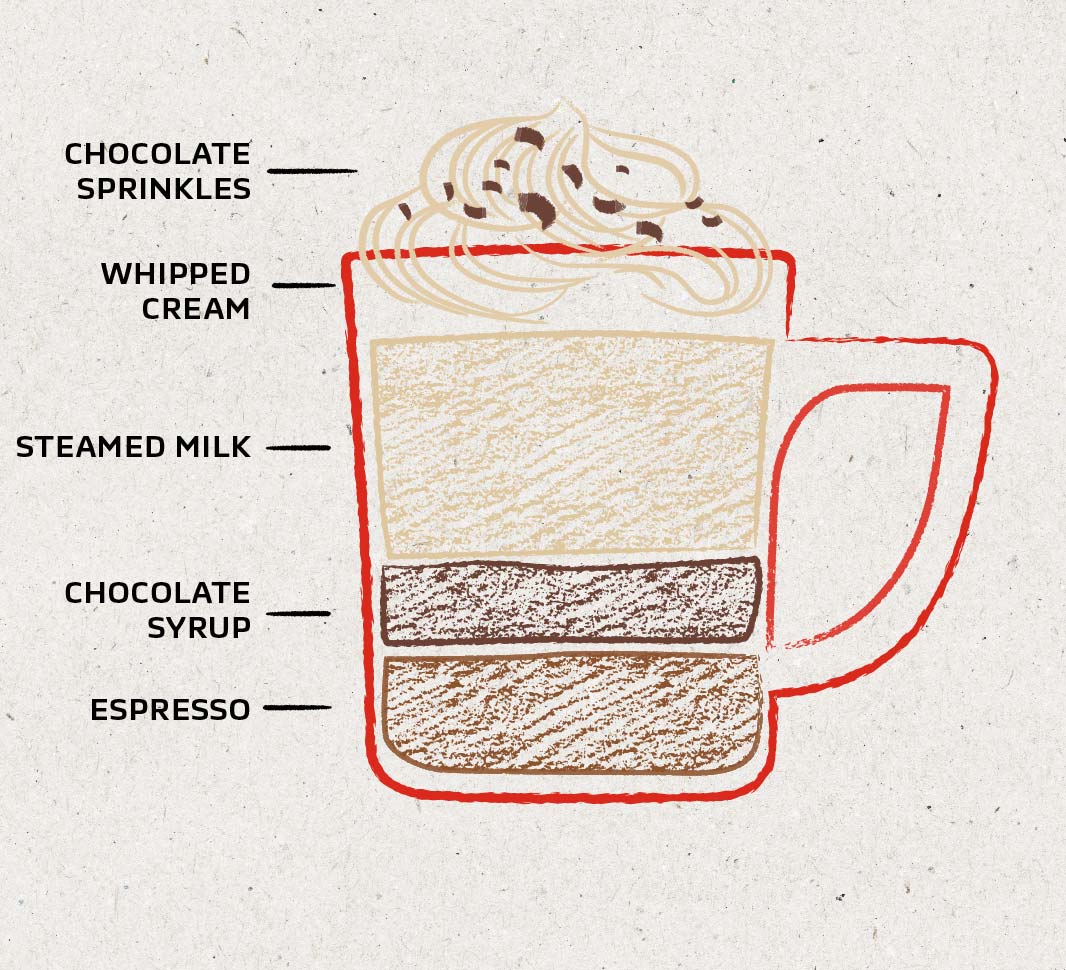

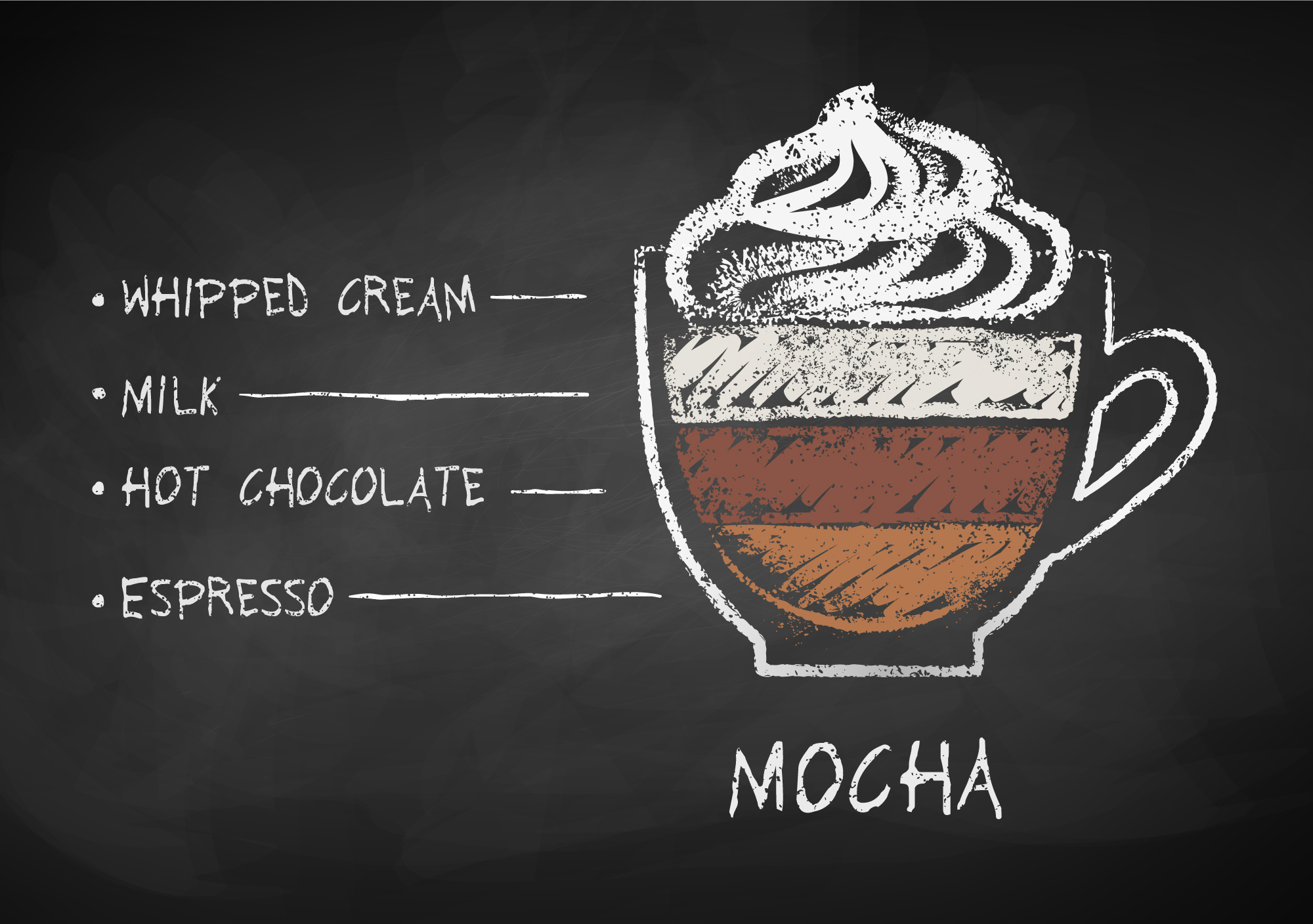

The journey towards the Mochaccino framework began with the recognition that while individual drone technologies were advancing rapidly, their disparate nature often created operational bottlenecks. High-resolution cameras, LiDAR sensors, sophisticated GPS modules, and powerful flight controllers were all critical, yet their integration often remained rudimentary. The vision was to create a unified intelligence layer, a “blend” that would allow these components to communicate, collaborate, and co-evolve, much like the layers of a perfectly crafted coffee drink.

Early autonomous systems, while groundbreaking, often operated within predefined parameters, lacking the dynamic adaptability required for truly complex scenarios. Mochaccino sought to inject a higher degree of cognitive capability into drone operations. This meant moving beyond simple waypoint navigation to systems capable of understanding context, predicting outcomes, and making real-time adjustments based on a flood of incoming data. The genesis was rooted in overcoming the limitations of current autonomous flight systems, which often struggle with unforeseen obstacles, rapidly changing environmental conditions, or the need for on-the-fly mission re-planning without human intervention. The core idea was to develop an operating system for drones that could learn, adapt, and even anticipate, effectively making the drone a proactive, rather than merely reactive, agent in its operational environment.

Overcoming Integration Challenges with Layered Architecture

One of the primary challenges in developing autonomous drone systems has always been the effective integration of diverse hardware and software components. Sensors from different manufacturers, proprietary communication protocols, and varied data formats often lead to fragmented data streams and limited interoperability. Mochaccino tackles this head-on by proposing a modular, layered architecture that provides a universal interface for all integrated components.

At its heart, the Mochaccino framework employs a robust middleware layer that acts as a translator and orchestrator for all data flowing through the system. This layer normalizes data from various sources—be it optical sensors, thermal cameras, radar, or meteorological inputs—and presents it in a unified format to the decision-making engine. This abstraction allows for seamless upgrading or swapping of individual components without requiring a complete overhaul of the core intelligence. Furthermore, the layered design facilitates the development of specialized modules for specific tasks, such as advanced image processing for defect detection or predictive analytics for environmental changes, all while ensuring they operate harmoniously within the broader autonomous system. This architecture ensures that as new technologies emerge, they can be readily incorporated into the Mochaccino ecosystem, enhancing its capabilities without disrupting its fundamental operations.
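The normalization step described above can be sketched as a small adapter registry. This is only an illustration under stated assumptions: the `Reading` record, the two vendor payload formats, and every function name here are hypothetical and not part of any published Mochaccino interface.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Reading:
    """Unified record handed to the decision-making engine (hypothetical schema)."""
    source: str         # which sensor produced the value
    quantity: str       # what was measured, e.g. "temperature"
    value: float        # value expressed in the unified unit
    unit: str           # unified unit label
    timestamp_s: float  # seconds, regardless of the vendor's convention


def from_thermal_cam(raw: dict) -> Reading:
    # Assumed vendor A payload: Fahrenheit, millisecond timestamps.
    celsius = (raw["temp_f"] - 32.0) * 5.0 / 9.0
    return Reading("thermal_cam", "temperature", celsius, "degC", raw["t_ms"] / 1000.0)


def from_weather_feed(raw: dict) -> Reading:
    # Assumed vendor B payload: already Celsius, timestamps in seconds.
    return Reading("weather_feed", "temperature", float(raw["celsius"]), "degC", float(raw["time"]))


# Swapping or adding a sensor means registering one adapter; the engine is untouched.
ADAPTERS: Dict[str, Callable[[dict], Reading]] = {
    "thermal_cam": from_thermal_cam,
    "weather_feed": from_weather_feed,
}


def normalize(source: str, raw: dict) -> Reading:
    """Translate a vendor-specific payload into the unified Reading format."""
    try:
        adapter = ADAPTERS[source]
    except KeyError:
        raise ValueError(f"no adapter registered for {source!r}") from None
    return adapter(raw)


reading = normalize("thermal_cam", {"temp_f": 212.0, "t_ms": 1500})
print(reading)
```

Because the decision-making engine only ever sees `Reading` objects, replacing a sensor changes one adapter rather than the core logic, which is the property the layered design is after.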

The Role of Machine Learning and AI in Cognitive Autonomy

At the conceptual core of Mochaccino lies a suite of machine learning (ML) and artificial intelligence (AI) algorithms. These go beyond object recognition and basic navigation; they form the cognitive engine that enables autonomous decision-making. The framework leverages deep learning models for environmental perception, allowing drones to interpret complex visual data, identify anomalies, and reason about spatial relationships with a high degree of accuracy.

AI within Mochaccino extends to predictive analytics, where historical data, real-time sensor inputs, and environmental models are used to forecast potential risks or optimize flight paths. For instance, in an infrastructure inspection scenario, the AI can learn typical patterns of wear and tear, prioritize areas for closer examination, and even predict future maintenance needs. Furthermore, reinforcement learning is utilized to enable drones to learn from their experiences, improving their decision-making capabilities over successive missions. This constant learning loop allows the Mochaccino-powered drone to adapt to novel situations and become increasingly efficient and reliable over time. The goal is to imbue the drone with a level of operational intelligence that minimizes human intervention, freeing operators to focus on higher-level strategic objectives rather than tactical flight control.

Core Components of the Mochaccino Framework: Layered Intelligence

The Mochaccino framework is characterized by its meticulously designed layered intelligence, where each stratum contributes to the overall autonomy, efficiency, and reliability of drone operations. These layers work in concert, creating a synergistic effect that elevates performance beyond what individual components could achieve.

Advanced Sensor Fusion for Comprehensive Environmental Awareness

A cornerstone of Mochaccino’s layered intelligence is its cutting-edge sensor fusion capability. Rather than relying on a single sensor type, the framework intelligently combines data from multiple sources—such as high-resolution RGB cameras, thermal imaging, LiDAR, radar, and hyperspectral sensors—to construct a comprehensive and highly accurate 3D model of the operational environment. This multi-modal perception system mitigates the limitations of individual sensors; for example, LiDAR excels in depth mapping, while thermal cameras can detect heat signatures obscured by foliage, and RGB cameras provide rich textural information.

The sensor fusion algorithms within Mochaccino employ probabilistic methods such as Kalman filters, which suit approximately linear systems with Gaussian noise, and particle filters, which handle nonlinear or multi-modal cases, to turn noisy and uncertain sensor data into a more robust representation of the world. This environmental awareness is crucial for applications requiring high precision, such as volumetric mapping, precision agriculture, or obstacle avoidance in dynamic urban settings. By continuously integrating and cross-referencing data from diverse sensor types, Mochaccino gives the drone a more complete picture of its surroundings than any single sensor could provide, enabling safer and more effective autonomous navigation and data acquisition.
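To make the Kalman-filter idea concrete, here is a minimal one-dimensional sketch that fuses two altitude readings. The sensor pairing (a barometer and a LiDAR rangefinder), the noise variances, and the function names are illustrative assumptions; a real system would track a multi-dimensional state with a motion model.

```python
def predict(mean, var, process_var):
    """Time update for a static-altitude model: estimate unchanged, uncertainty grows."""
    return mean, var + process_var


def update(mean, var, measurement, measurement_var):
    """Measurement update: blend the estimate and the reading by their variances."""
    gain = var / (var + measurement_var)           # Kalman gain, between 0 and 1
    new_mean = mean + gain * (measurement - mean)  # pull the estimate toward the reading
    new_var = (1.0 - gain) * var                   # uncertainty shrinks after each update
    return new_mean, new_var


def fuse_altitude(readings, prior_mean=0.0, prior_var=1e6, process_var=0.0):
    """Fold a sequence of (measurement, variance) pairs into one estimate."""
    mean, var = prior_mean, prior_var  # a very wide prior means "no idea yet"
    for measurement, measurement_var in readings:
        mean, var = predict(mean, var, process_var)
        mean, var = update(mean, var, measurement, measurement_var)
    return mean, var


# Assumed readings: barometer 102 m (variance 4), LiDAR 100 m (variance 1).
altitude_m, variance = fuse_altitude([(102.0, 4.0), (100.0, 1.0)])
print(round(altitude_m, 2), round(variance, 2))
```

The fused estimate sits closer to the lower-variance LiDAR reading, and its variance is smaller than that of either sensor alone, which is the practical payoff of fusing rather than picking one source.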

Predictive Path Planning and Dynamic Obstacle Avoidance

Beyond basic navigation, Mochaccino introduces a new paradigm in path planning and obstacle avoidance. Leveraging its comprehensive environmental awareness, the system employs predictive algorithms that analyze potential trajectories not just in terms of current obstacles, but also anticipated movements and environmental changes. This allows for proactive path adjustments, minimizing risks and optimizing efficiency.

Dynamic obstacle avoidance is handled with sophisticated real-time processing that can detect unexpected objects (e.g., birds, other drones, moving vehicles) and generate evasive maneuvers almost instantaneously. This isn’t merely about stopping; it’s about re-planning a safe and efficient path on the fly, maintaining mission objectives while ensuring safety. The system uses a combination of deep learning for object recognition and classical control theory for precise flight adjustments, ensuring both intelligent decision-making and rapid, stable execution. This capability is particularly critical for BVLOS (Beyond Visual Line of Sight) operations where human intervention for immediate evasive action is not feasible.
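One simple way to reason about anticipated movement, rather than only current positions, is a closest-point-of-approach check. The sketch below assumes 2D positions, constant velocities over a short horizon, and a hypothetical safety radius; it illustrates the idea only and is not the framework's actual planner.

```python
import math


def closest_approach(p_drone, v_drone, p_obj, v_obj, horizon_s):
    """Return (time, distance) of closest approach within the look-ahead horizon.

    Positions are (x, y) in metres and velocities (vx, vy) in m/s; both
    bodies are assumed to hold constant velocity over the horizon.
    """
    rx, ry = p_obj[0] - p_drone[0], p_obj[1] - p_drone[1]  # relative position
    vx, vy = v_obj[0] - v_drone[0], v_obj[1] - v_drone[1]  # relative velocity
    speed_sq = vx * vx + vy * vy
    # Unconstrained minimiser of |r + v t|; zero if there is no relative motion.
    t = 0.0 if speed_sq == 0.0 else -(rx * vx + ry * vy) / speed_sq
    t = min(max(t, 0.0), horizon_s)  # only the near future matters
    return t, math.hypot(rx + vx * t, ry + vy * t)


def needs_replan(p_drone, v_drone, p_obj, v_obj, horizon_s=10.0, safety_radius_m=5.0):
    """True when the predicted miss distance falls inside the safety radius."""
    _, miss_m = closest_approach(p_drone, v_drone, p_obj, v_obj, horizon_s)
    return miss_m < safety_radius_m


# Head-on encounter: drone flying +x at 10 m/s, object 100 m ahead flying -x at 10 m/s.
print(closest_approach((0, 0), (10, 0), (100, 0), (-10, 0), horizon_s=10.0))
print(needs_replan((0, 0), (10, 0), (100, 0), (-10, 0)))
```

A check like this would only trigger re-planning; generating the new path and executing it stably is the job of the planner and flight controller described above.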

Edge Computing and Real-time Data Analytics

To facilitate rapid decision-making and minimize reliance on external communication links, Mochaccino heavily integrates edge computing capabilities directly onto the drone. This means that significant portions of data processing and AI inference occur onboard, close to the data source. Raw sensor data can be analyzed and compressed in real-time, extracting critical insights before transmission or storage.

This onboard processing drastically reduces latency, which is vital for dynamic applications like search and rescue or precision delivery. Instead of sending large volumes of raw video to a cloud server for analysis, the drone can identify key events or anomalies locally and transmit only the relevant metadata or processed findings. This conserves bandwidth and also enhances operational security and privacy, since less raw footage leaves the aircraft. The real-time data analytics layer provides operators with immediate actionable intelligence, allowing for swift responses to evolving situations, whether that means identifying a structural defect during an inspection or pinpointing a hotspot during a wildfire mapping mission.
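The "analyze locally, transmit only findings" pattern can be illustrated with a toy thermal frame. The frame layout (a list of rows of temperatures in degrees Celsius), the threshold, and the summary fields are assumptions made for this sketch.

```python
import json


def summarize_frame(frame, threshold_c):
    """Reduce a thermal frame to compact metadata, or None if nothing is hot.

    `frame` is a list of rows, each a list of temperatures in degrees Celsius.
    """
    hot = [
        (row_idx, col_idx, temp)
        for row_idx, row in enumerate(frame)
        for col_idx, temp in enumerate(row)
        if temp >= threshold_c
    ]
    if not hot:
        return None  # nothing worth transmitting for this frame
    row_idx, col_idx, temp = max(hot, key=lambda pixel: pixel[2])
    return {
        "hot_pixels": len(hot),
        "max_temp_c": temp,
        "hottest_pixel": [row_idx, col_idx],
    }


# An 8x8 frame at ambient temperature with two hot pixels.
frame = [[20.0] * 8 for _ in range(8)]
frame[1][2] = 350.0
frame[2][2] = 310.0

summary = summarize_frame(frame, threshold_c=300.0)
payload = json.dumps(summary)
print(payload)
print(len(payload), "bytes sent instead of", len(json.dumps(frame)))
```

Frames with no detections produce no payload at all, so link usage scales with the number of events rather than with the camera's frame rate.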

Applications and Impact: Beyond Conventional Drone Operations

The integration and sophistication offered by the Mochaccino framework have profound implications, propelling drone capabilities far beyond their traditional roles. Its layered intelligence unlocks new possibilities across a spectrum of industries, enabling more complex, critical, and autonomous missions.

Advanced Infrastructure Inspection and Maintenance

In infrastructure management, Mochaccino transforms routine inspections. Drones equipped with this framework can autonomously conduct detailed assessments of bridges, pipelines, power lines, and wind turbines. AI-driven defect detection is intended to flag hairline cracks, corrosion, or signs of structural fatigue that are easy to miss in a manual survey, reducing inspection time and cost. Furthermore, the predictive analytics component can track the degradation rate of assets, enabling proactive maintenance scheduling and extending the lifespan of critical infrastructure. By integrating diverse sensor data, Mochaccino creates detailed digital twins of assets, allowing for continuous monitoring and simulation of various stress scenarios, ultimately enhancing safety and operational longevity.
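As a rough illustration of tracking a degradation rate, the sketch below fits a straight line to crack-width measurements from successive inspections and extrapolates to a maintenance limit. The linear-growth assumption, the measurements, and the limit are all hypothetical; real degradation models are usually more involved.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def days_until_limit(days, widths_mm, limit_mm):
    """Estimate days after the last inspection until the limit is reached.

    Returns None when the fitted trend is flat or shrinking, and 0.0 when
    the fitted line has already crossed the limit.
    """
    slope, intercept = fit_line(days, widths_mm)
    if slope <= 0:
        return None
    crossing_day = (limit_mm - intercept) / slope
    return max(0.0, crossing_day - days[-1])


# Hypothetical crack widths (mm) measured on inspection days 0, 30, 60 and 90.
remaining = days_until_limit([0, 30, 60, 90], [0.10, 0.13, 0.16, 0.19], limit_mm=0.30)
print(remaining)
```

An estimate like this is only as good as the growth model behind it, so in practice it would be reported with an uncertainty range and revisited after each new inspection.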

Precision Agriculture and Environmental Monitoring

For agriculture, Mochaccino offers field-level and even plant-level insight for crop management. Drones can fly autonomously, monitoring plant health, detecting pest infestations, and assessing irrigation needs with hyperspectral and thermal sensors. The AI can then estimate the amount of water, fertilizer, or pesticide required for specific areas, minimizing waste and maximizing yield. In environmental monitoring, Mochaccino-powered drones can track wildlife populations, monitor deforestation, detect pollution sources, and assess disaster damage quickly and over large areas, providing crucial data for conservation efforts and rapid response.
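The source does not say which plant-health metric the framework uses. As one widely used example, the sketch below computes the Normalized Difference Vegetation Index (NDVI) from near-infrared and red reflectance and sorts field cells into coarse zones. The zone thresholds and cell names are illustrative assumptions, not agronomic recommendations.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index: (NIR - Red) / (NIR + Red)."""
    total = nir + red
    return 0.0 if total == 0 else (nir - red) / total


def classify(index, stressed_below=0.3, healthy_from=0.6):
    """Map an NDVI value to a coarse zone; thresholds are placeholders."""
    if index < stressed_below:
        return "stressed"
    if index < healthy_from:
        return "moderate"
    return "healthy"


def zone_map(cells):
    """cells: {cell_id: (nir_reflectance, red_reflectance)} -> {cell_id: zone}."""
    return {cell_id: classify(ndvi(nir, red)) for cell_id, (nir, red) in cells.items()}


# Hypothetical reflectance values for three field cells.
field = {"A1": (0.8, 0.1), "A2": (0.5, 0.2), "A3": (0.3, 0.2)}
print(zone_map(field))
```

A variable-rate prescription would then assign different water or fertilizer amounts per zone, with the actual rates set by an agronomist or a calibrated model.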

Enhanced Search and Rescue Operations

Perhaps one of the most impactful applications lies in search and rescue (SAR). Mochaccino-enabled drones can rapidly scan vast, challenging terrains with thermal and optical cameras, identifying individuals or signs of distress far more quickly than ground teams or conventional aerial assets. The real-time data analytics and edge computing ensure that critical information—such as the location of a survivor or a developing hazard—is immediately transmitted to command centers. The autonomous navigation and obstacle avoidance systems allow these drones to operate effectively in complex, dangerous environments, such as collapsed buildings or dense forests, reducing the risk to human rescuers and accelerating intervention times in critical situations.

The Future Horizon: Evolution of the Mochaccino Ecosystem

The Mochaccino framework, while revolutionary today, is designed with future expansion and adaptability in mind. Its modular architecture ensures it can seamlessly integrate emerging technologies and adapt to evolving operational demands, paving the way for even more sophisticated autonomous capabilities.

Interoperability and Swarm Intelligence

One of the key future developments for Mochaccino is enhanced interoperability with other autonomous systems and the expansion into advanced swarm intelligence. Imagine a scenario where multiple Mochaccino-powered drones, each perhaps equipped with specialized sensors, communicate and coordinate in real time to perform a complex mission, like mapping an entire disaster zone or securing a large perimeter. The framework’s ability to facilitate robust inter-drone communication and shared situational awareness will be critical for enabling true swarm autonomy, where drones collectively make decisions, distribute tasks, and adapt to changing conditions as a unified intelligent entity. This would substantially increase their operational capacity, allowing for missions that are currently unfeasible with single-drone deployments.
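Task distribution within a swarm can be sketched with a simple greedy rule: each task goes to the nearest drone that is still free. This is a deliberately naive stand-in, assumed here for illustration; it is neither optimal nor a description of how Mochaccino would coordinate drones, and the identifiers and coordinates are made up.

```python
import math


def assign_tasks(drones, tasks):
    """Greedy one-task-per-drone assignment by straight-line distance.

    drones: {drone_id: (x, y)}, tasks: {task_id: (x, y)}.
    Returns {task_id: drone_id}; tasks left over once every drone is
    busy are omitted from the plan.
    """
    free = dict(drones)  # copy so the caller's mapping is not modified
    plan = {}
    for task_id, task_pos in tasks.items():
        if not free:
            break
        nearest = min(free, key=lambda drone_id: math.dist(free[drone_id], task_pos))
        plan[task_id] = nearest
        del free[nearest]  # each drone takes at most one task in this sketch
    return plan


drones = {"d1": (0.0, 0.0), "d2": (10.0, 0.0)}
tasks = {"north_sector": (9.0, 1.0), "west_sector": (1.0, 1.0)}
print(assign_tasks(drones, tasks))
```

Greedy assignment depends on task order and can miss the best overall pairing; an optimal matching method or an auction-style negotiation between drones would be the natural next step once shared situational awareness is in place.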

Ethical AI and Regulatory Harmonization

As Mochaccino pushes the boundaries of autonomous decision-making, the ethical implications of AI will become increasingly central. Future iterations will incorporate robust ethical AI frameworks, ensuring that autonomous decisions align with human values and regulatory guidelines. This includes explainable AI (XAI) features, providing transparency into how autonomous decisions are made, and built-in safeguards to prevent unintended consequences. Furthermore, the evolution of Mochaccino will necessitate close collaboration with regulatory bodies to harmonize technological advancements with legal and safety standards, particularly concerning Beyond Visual Line of Sight (BVLOS) operations and urban air mobility. Establishing trust through transparent and ethically grounded AI will be paramount for widespread adoption.

In conclusion, “Mochaccino” transcends its whimsical codename to represent a monumental leap in drone technology. It is a sophisticated, integrated, and intelligent framework that merges the best of AI, sensor fusion, and autonomous systems to create a new generation of unmanned aerial capabilities. By fostering deeper environmental understanding, predictive operational planning, and real-time analytical power, Mochaccino empowers drones to tackle some of humanity’s most pressing challenges, from safeguarding infrastructure and optimizing agriculture to enhancing environmental stewardship and saving lives. As this integrated ecosystem continues to evolve, it promises a future where autonomous drones are not just tools, but intelligent partners in innovation, continually redefining the horizons of what’s possible in the skies above.