In the dynamic world of drone technology and innovation, precision is paramount. Every byte of data, every line of code, and every sensor reading contributes to the intricate tapestry of autonomous flight, intelligent decision-making, and accurate environmental mapping. Yet, within this complex ecosystem, there exists a concept that, while not immediately intuitive from its biological origins, provides a profound metaphor for understanding critical deviations and fundamental re-alignments in how drone systems perceive and interact with their world: the “frameshift.”

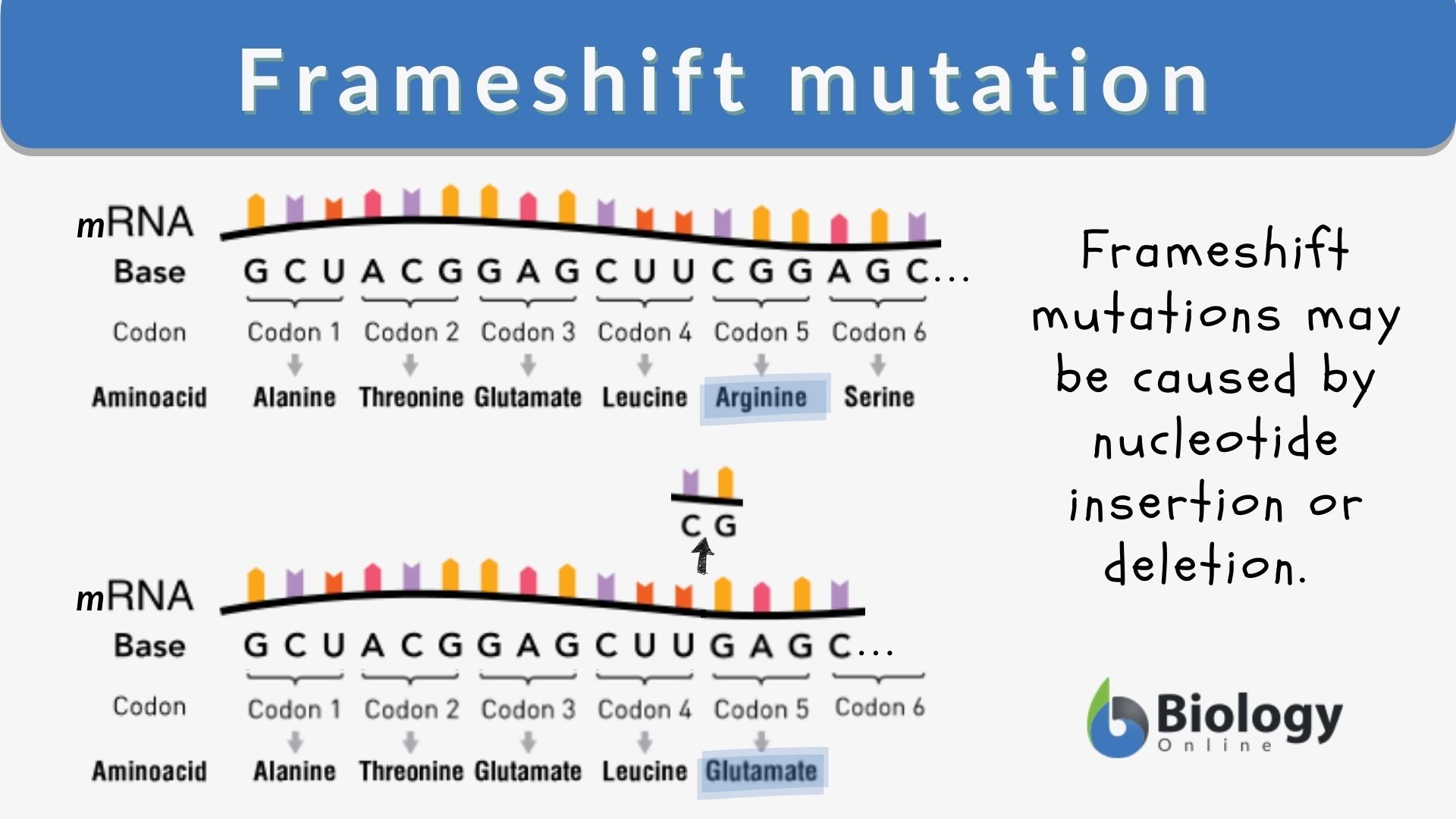

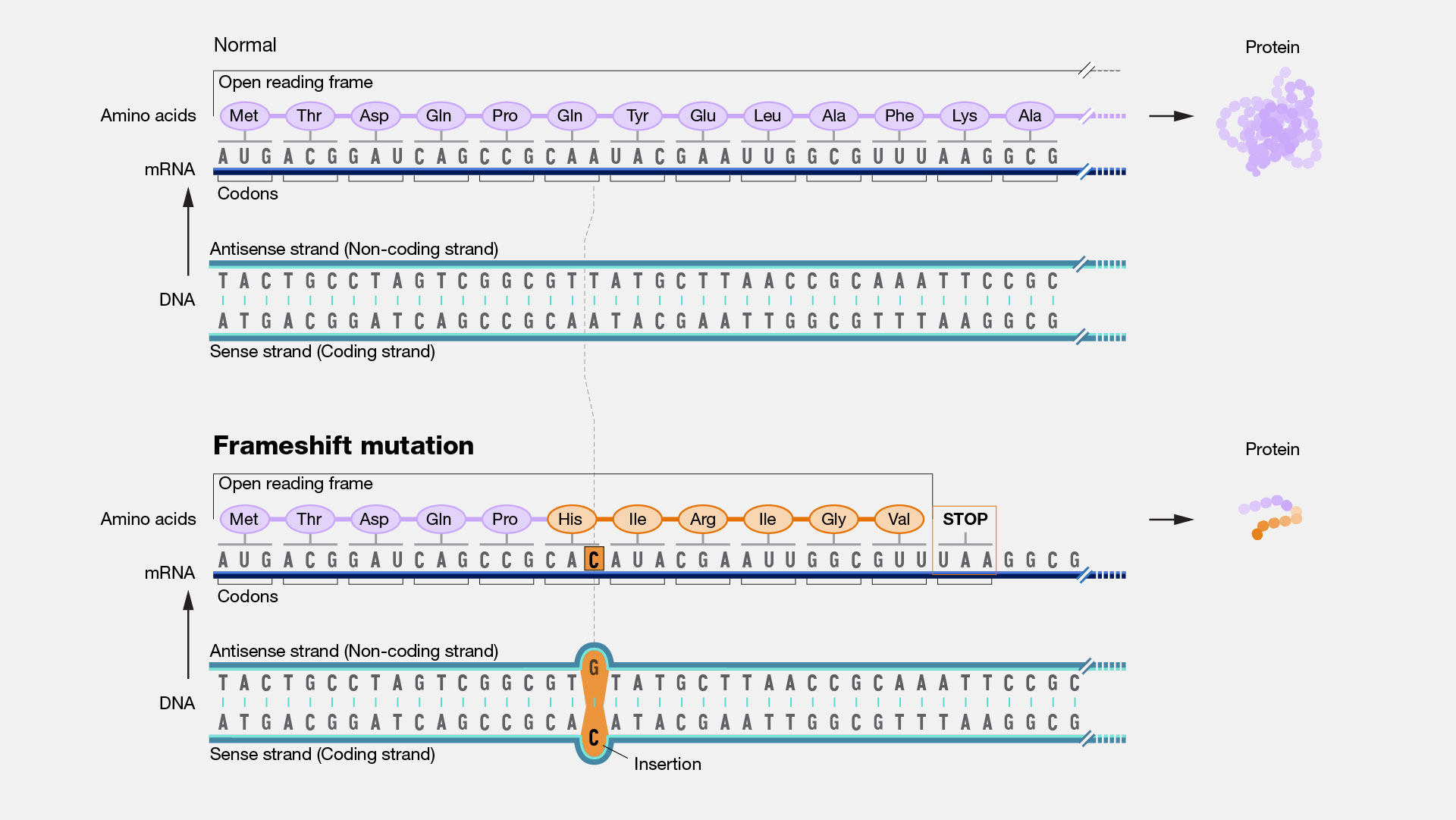

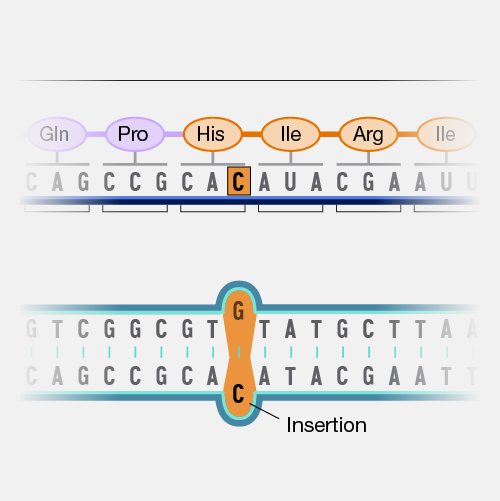

The term comes from molecular biology, where it describes an insertion or deletion of bases (in a number not divisible by three) that alters the reading frame of a gene, so that every codon downstream of the change is read differently and the resulting protein is usually altered beyond function. In the context of drone technology, a “frameshift” refers to a subtle yet significant alteration in the interpretative framework of data. It’s a shift in how information is “read” or processed by the drone’s intelligent systems, leading to a fundamental change in perception, decision-making, or outcome. This isn’t necessarily a catastrophic failure, but rather a re-contextualization or re-interpretation of data that can have wide-ranging implications, from minor inaccuracies in mapping to unexpected behaviors in autonomous navigation. Understanding these frameshifts (how they occur, their impact, and how to intentionally induce or prevent them) is crucial for advancing the reliability, intelligence, and innovative capabilities of modern drone platforms.
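To make the biological origin of the metaphor concrete, here is a minimal sketch of a reading frame being shifted. The sequence and the `codons` helper are made up for illustration; the point is only that one inserted character changes how everything after it is grouped.

```python
def codons(seq: str) -> list[str]:
    """Split a sequence into consecutive three-letter groups, dropping any remainder."""
    usable = len(seq) - len(seq) % 3
    return [seq[i:i + 3] for i in range(0, usable, 3)]

# Illustrative sequence, not taken from a real gene.
original = "ATGGCCTTTAAG"

# Insert a single base after the first group of three.
shifted = original[:3] + "C" + original[3:]

original_codons = codons(original)
shifted_codons = codons(shifted)

print(original_codons)  # ['ATG', 'GCC', 'TTT', 'AAG']
print(shifted_codons)   # ['ATG', 'CGC', 'CTT', 'TAA']
```

The data itself is almost unchanged, yet every group after the insertion reads differently. That is the sense in which the rest of this article uses the word.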

The Invisible Logic: Data Interpretation and Algorithmic Frameworks

At the heart of every advanced drone system lies a sophisticated interplay of sensors, processors, and algorithms. These components work in concert to collect vast amounts of data—visual, thermal, LiDAR, GPS, inertial—and then interpret this raw information into actionable insights. The “frame” in which this data is interpreted is defined by the underlying algorithms and the established logical structure, dictating how the drone “sees” its environment, understands its position, and plans its next move.

Precision in Autonomous Flight

For autonomous drones, the concept of a stable interpretative frame is non-negotiable. Every decision, from maintaining altitude to executing complex maneuvers, relies on a consistent and accurate understanding of sensory input. A drone utilizing AI for obstacle avoidance, for instance, processes camera feeds and LiDAR data to construct a 3D map of its surroundings. If a minor anomaly or an unforeseen bias is introduced into this data stream (perhaps due to sensor calibration drift, environmental interference, or even a subtle bug in a perception algorithm), the drone’s “understanding” of its environment can undergo a frameshift. What was perceived as a clear path might suddenly contain a phantom obstacle, or a genuine threat might be overlooked because its data signature has been misinterpreted. The drone’s internal model of reality shifts, leading to deviations from its intended flight path or mission parameters. This is not necessarily a system crash, but rather a functional misinterpretation within its operational framework.

The Role of Sensors in Data Construction

Sensors are the drone’s eyes and ears, providing the raw data from which its understanding of the world is built. However, raw data is just that – raw. It requires sophisticated processing to become meaningful information. GPS coordinates need to be fused with IMU (Inertial Measurement Unit) data for precise navigation; visual feeds need to be analyzed by computer vision algorithms for object recognition; and LiDAR point clouds must be rendered into coherent spatial maps. Each of these processing steps involves assumptions, filters, and models that together form the interpretative frame. A frameshift can occur if the assumptions of these models are violated, if calibration drifts, or if external conditions introduce noise that the processing framework is not designed to handle. For example, specific lighting conditions might cause a camera’s auto-exposure to adjust in a way that fundamentally alters the visual features an AI model is trained to recognize, essentially shifting the “frame” of visual interpretation for that moment.

Understanding a “Frameshift”: Misinterpretations and Their Consequences

A frameshift in drone technology is often characterized by a discrepancy between expected outcomes and actual drone behavior, stemming from a fundamental misinterpretation of data rather than a hardware failure. These shifts can be subtle, making them difficult to diagnose, but their cumulative effects can be significant, impacting mission success, safety, and data integrity.

Subtle Deviations in AI Perception

The increasing reliance on Artificial Intelligence and Machine Learning in drones introduces new vectors for frameshifts. AI models learn patterns and make predictions based on vast training datasets. If a real-world scenario presents data that, while superficially similar, contains subtle differences not encountered in training, the AI’s “perception” can undergo a frameshift. For example, an AI trained to identify specific crop diseases might struggle if environmental conditions alter the visual presentation of the disease in an unexpected way, leading to misclassification (a frameshift in diagnostic interpretation). Similarly, in AI Follow Mode, a drone might misinterpret the subject’s intention if their movement patterns deviate slightly from trained behaviors, leading to unexpected changes in tracking behavior. These are not errors in the traditional sense, but a shift in the AI’s understanding of the context.
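One hedged way to notice that a classifier may be operating outside its trained frame is to check how spread out its predicted probabilities are. The sketch below uses normalized Shannon entropy over softmax outputs; the logits, the `needs_review` helper, and the 0.6 threshold are illustrative assumptions. Softmax confidence is also known to be overconfident on unfamiliar inputs, so treat this as a crude first signal rather than a reliable detector.

```python
import math

def softmax(logits):
    """Convert raw scores to probabilities (shifted by the max for numerical stability)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def normalized_entropy(probs):
    """Shannon entropy scaled to [0, 1]; 1 means a uniform, maximally unsure prediction."""
    h = -sum(p * math.log(p) for p in probs if p > 0)
    return h / math.log(len(probs))

def needs_review(logits, threshold=0.6):
    """Flag a prediction whose probability mass is too spread out (threshold is an assumption)."""
    return normalized_entropy(softmax(logits)) > threshold

# Hypothetical logits for three crop-disease classes.
confident = [8.0, 0.5, 0.2]   # one class clearly dominates
ambiguous = [1.1, 1.0, 0.9]   # nearly uniform: input may be unlike the training data

confident_flag = needs_review(confident)
ambiguous_flag = needs_review(ambiguous)
print(confident_flag, ambiguous_flag)
```

In practice a flagged frame could be logged for human review or excluded from automated decisions, depending on the mission.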

Cascading Effects in Mapping and Remote Sensing

In applications like mapping, surveying, and remote sensing, a frameshift can have cascading effects on the accuracy and reliability of the generated outputs. Imagine a drone conducting an aerial survey to create a high-resolution 3D model of a construction site. If, due to GPS signal degradation or IMU drift, the drone’s estimated position shifts by a few centimeters at a critical juncture, every subsequent data point collected could be slightly misaligned. This subtle frameshift in spatial understanding can propagate throughout the entire dataset. When these images are stitched together, the resulting map might contain distortions, misaligned features, or inaccurate measurements, rendering the entire dataset less useful or even erroneous. The ‘ground truth’ understood by the processing software has fundamentally shifted from the actual ground truth. Similarly, in remote sensing, a frameshift in how spectral data is interpreted could lead to misidentification of materials or environmental conditions, impacting ecological studies or resource management decisions.
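The propagation described here can be shown with a toy dead-reckoning example. It assumes a drone that estimates its position by summing per-step displacements; the step sizes and the 5 cm fault are invented for illustration, and real pipelines correct against absolute references rather than integrating blindly.

```python
def integrate(steps, start=(0.0, 0.0)):
    """Dead-reckon positions by summing per-step displacements (metres)."""
    x, y = start
    track = [(x, y)]
    for dx, dy in steps:
        x, y = x + dx, y + dy
        track.append((x, y))
    return track

# Ten 1 m steps along the x axis.
true_steps = [(1.0, 0.0)] * 10

# Hypothetical fault: a single 5 cm error in the fourth step only.
faulty_steps = list(true_steps)
faulty_steps[3] = (1.05, 0.0)

true_track = integrate(true_steps)
est_track = integrate(faulty_steps)

# Along-track error at every waypoint, rounded to the millimetre.
errors = [round(est[0] - true[0], 3) for true, est in zip(true_track, est_track)]
print(errors)
```

The error is zero up to the fault and then stays at 5 cm for every later waypoint, even though only one step was wrong. Without an independent correction, every image captured after that point inherits the offset.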

Mitigating Frameshifts: Strategies for Robust Drone Systems

Preventing and managing frameshifts is a critical aspect of developing robust and reliable drone technology. It involves a multi-layered approach encompassing hardware design, software architecture, and sophisticated algorithmic techniques designed to maintain a consistent and accurate interpretative frame.

Redundancy and Cross-Validation

One of the most effective strategies against unwanted frameshifts is the implementation of redundancy and cross-validation in sensory input and data processing. By employing multiple types of sensors that provide overlapping information (e.g., GPS with RTK/PPK corrections, visual odometry, and LiDAR for navigation), the drone’s system can continuously cross-reference data points. If one sensor’s reading suggests a frameshift (e.g., a sudden, unexplained change in position), it can be validated or invalidated by comparing it against data from other, independent sensors. This sensor fusion approach helps to filter out noise, identify faulty readings, and maintain a stable and accurate understanding of the drone’s state and environment, so that a fault in a single sensor does not cause a system-wide interpretative shift.
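A minimal sketch of this kind of cross-check, assuming each source reports a 2D position in a shared local frame (metres). The source names, the readings, and the 0.5 m tolerance are hypothetical. Each estimate is compared against the component-wise median, which a single bad source cannot drag far when at least three sources are available.

```python
import math
from statistics import median

def flag_outliers(estimates, tolerance_m=0.5):
    """Return the names of sources whose position is far from the component-wise median."""
    xs = [p[0] for p in estimates.values()]
    ys = [p[1] for p in estimates.values()]
    cx, cy = median(xs), median(ys)
    return sorted(
        name
        for name, (x, y) in estimates.items()
        if math.hypot(x - cx, y - cy) > tolerance_m
    )

# Hypothetical simultaneous position estimates (x, y) in metres.
readings = {
    "gps": (12.9, 4.1),              # sudden jump, e.g. multipath near a building
    "visual_odometry": (10.1, 4.0),
    "lidar_odometry": (10.0, 3.9),
}

suspect = flag_outliers(readings)
print(suspect)  # ['gps']
```

A full fusion stack would weight each source by its estimated uncertainty instead of using a fixed tolerance, but the principle is the same: a reading is trusted only as far as independent sources agree with it.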

Advanced Error Correction and Machine Learning Models

Beyond hardware redundancy, sophisticated software techniques, particularly advanced error correction algorithms and resilient machine learning models, play a vital role. Kalman filters and their variants are widely used to estimate a drone’s state by combining noisy sensor measurements, continuously refining the interpretative frame. For AI systems, techniques like anomaly detection, uncertainty quantification, and continuous learning from diverse datasets help to minimize frameshifts. Anomaly detection can flag data that falls outside expected parameters, prompting further investigation. Uncertainty quantification allows AI models to express their confidence in a prediction, highlighting areas where a frameshift might be occurring due to novel or ambiguous input. Furthermore, ethical AI design and robust validation processes are crucial to ensure that the AI’s “frame of understanding” remains consistent and aligned with intended operational goals, even under challenging conditions.
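As a rough illustration of how a Kalman filter and anomaly detection can work together, the sketch below runs a one-dimensional, constant-state Kalman filter over altitude readings and rejects any measurement whose innovation (measurement minus prediction) is implausibly large. The noise variances `q` and `r`, the 3-sigma gate, and the altitude values are assumptions chosen for the example, not tuned values from a real flight controller.

```python
def kalman_1d(measurements, q=0.01, r=0.25, gate=3.0):
    """Constant-state 1D Kalman filter with an innovation gate.

    q: process noise variance, r: measurement noise variance,
    gate: reject measurements more than `gate` standard deviations from the prediction.
    Returns the filtered estimates and the indices of rejected measurements.
    """
    x, p = measurements[0], r          # initialise from the first measurement
    estimates, rejected = [x], []
    for i, z in enumerate(measurements[1:], start=1):
        p += q                         # predict: state unchanged, uncertainty grows
        innovation = z - x
        s = p + r                      # innovation variance
        if innovation * innovation > gate * gate * s:
            rejected.append(i)         # anomaly: keep the prediction, skip the update
        else:
            k = p / s                  # Kalman gain
            x += k * innovation
            p *= 1.0 - k
        estimates.append(x)
    return estimates, rejected

# Hypothetical barometric altitude readings (metres) with one spurious spike.
altitude = [50.0, 50.2, 49.9, 50.1, 58.0, 50.0, 49.8]
estimates, rejected = kalman_1d(altitude)

print(rejected)  # [4]
```

The spike at index 4 is flagged and ignored, so the estimate stays near 50 m instead of lurching toward 58 m. A gate that is too tight would also reject genuine rapid changes, so the threshold is a design trade-off rather than a fixed constant.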

The Transformative Potential: Deliberate “Frameshifts” for Innovation

While often discussed in terms of undesirable outcomes, the concept of a “frameshift” also holds immense potential for deliberate innovation. By intentionally inducing or designing systems to embrace a frameshift—a fundamental re-alignment in data interpretation or algorithmic logic—developers can unlock new capabilities, redefine existing paradigms, and push the boundaries of drone intelligence.

Redefining Autonomous Decision-Making

A deliberate frameshift in autonomous decision-making could involve changing the drone’s core objective function or risk assessment framework. For example, current autonomous systems often prioritize efficiency or safety based on predefined rules. A frameshift here might mean enabling drones to dynamically adapt their priorities based on real-time ethical considerations, resource availability, or even crowd psychology in urban environments. Imagine drones that, instead of simply avoiding obstacles, learn to predict human intent and “frameshift” their flight path to proactively manage pedestrian flow at a concert. This requires a fundamental re-evaluation of how the drone interprets its mission and its environment, moving beyond simple task execution to more nuanced, adaptive, and context-aware behavior. This “frameshift” in intelligence could lead to truly symbiotic human-drone interactions.

Pushing the Boundaries of Data Analytics

In remote sensing and mapping, a deliberate frameshift could involve revolutionizing how multi-modal data is fused and interpreted. Instead of simply layering different data types (e.g., thermal over visual), a frameshift would entail developing entirely new analytical frameworks that derive insights from the interdependencies and synergies between these data streams that are currently overlooked. For instance, combining drone-captured spectral data with localized air quality sensor readings and even social media sentiment analysis could create a frameshift in environmental monitoring, allowing for predictions and interventions that were previously impossible. This isn’t just about more data; it’s about fundamentally re-evaluating the “frame” through which we extract knowledge, leading to a richer, more holistic understanding of complex systems. Such a conceptual frameshift could unlock entirely new applications, from hyper-local climate modeling to predictive infrastructure maintenance.

Conclusion

The concept of a “frameshift” provides a powerful lens through which to examine the intricate workings of drone technology and innovation. Whether an unwelcome deviation caused by subtle data misinterpretations or a deliberate re-alignment of algorithmic logic for groundbreaking new capabilities, understanding these shifts is essential. As drones become increasingly autonomous and intelligent, their ability to accurately and consistently interpret their world—or to intentionally adopt new interpretative frameworks—will define the next generation of aerial robotics. By diligently working to prevent undesirable frameshifts through robust engineering and by strategically pursuing deliberate ones, we can unlock the full potential of drones to revolutionize industries, enhance safety, and drive unprecedented technological advancement.