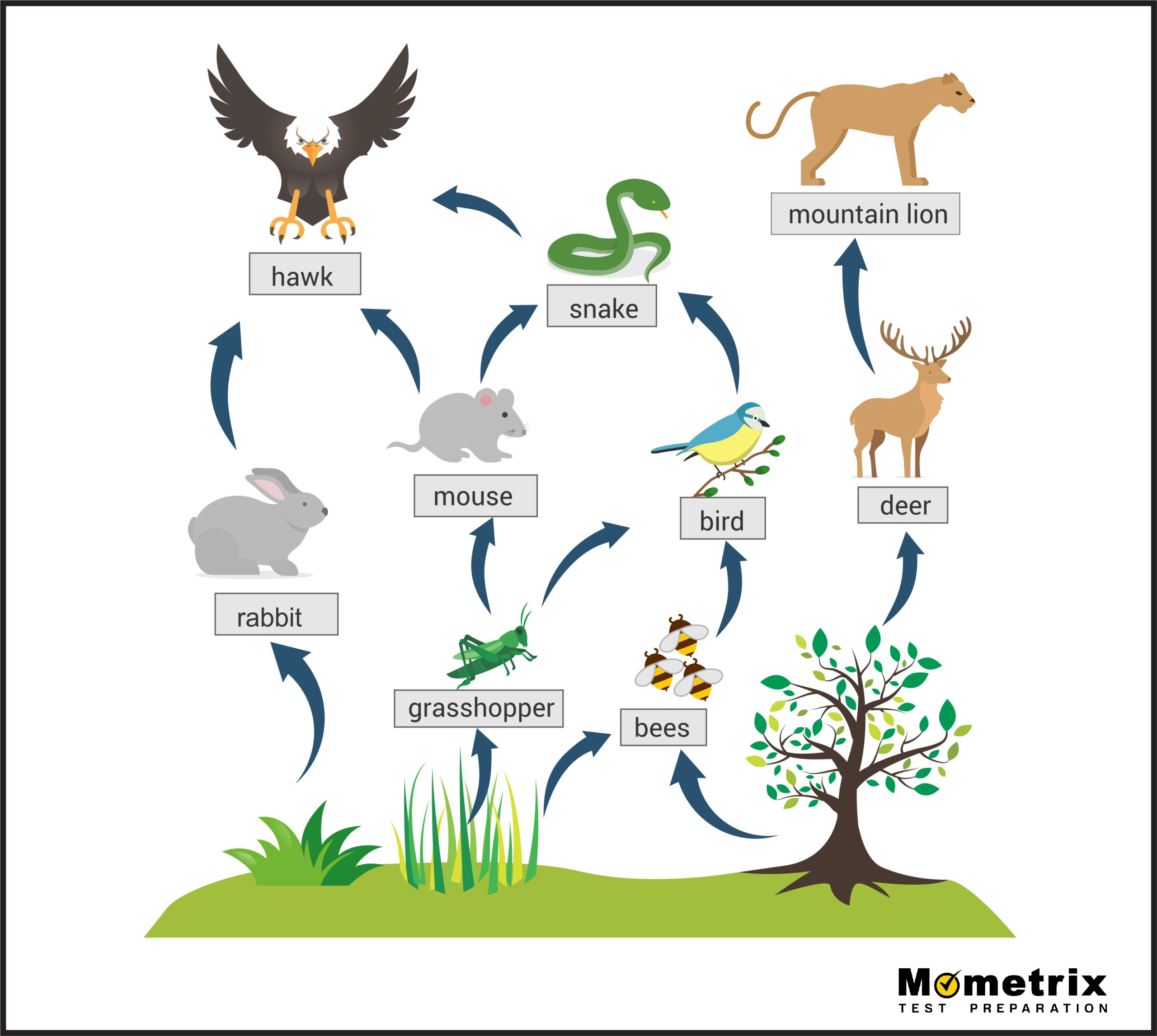

In the context of advanced tech and innovation, the concept of a “food web” serves as a powerful metaphor for the intricate, interdependent systems that constitute modern drone remote sensing and mapping architectures. Just as a biological food web describes the flow of energy and nutrients through an ecosystem, a technological food web describes the flow of data, power, and intelligence through a drone’s operational framework. To understand what this web is made up of, we must look beyond the physical aircraft and examine the complex layers of sensors, processing algorithms, and autonomous systems that allow a drone to perceive, interpret, and act upon the world.

The Foundational Layer: Sensors as the Primary Producers of Data

In any ecosystem, the primary producers—usually plants—convert raw energy into a form that others can use. In the drone technology food web, the “primary producers” are the high-end sensors and remote sensing instruments. These devices capture raw electromagnetic energy and translate it into digital data packets that fuel the rest of the system.

Optical and Multispectral Instruments

The most common “producers” in the drone web are high-resolution optical sensors. These go far beyond standard photography; they include multispectral and hyperspectral cameras that capture wavelengths invisible to the human eye. By measuring reflectance in the red and Near-Infrared (NIR) bands, these sensors provide the foundational data for the Normalized Difference Vegetation Index (NDVI), and a “Red Edge” band supports related indices such as NDRE. This raw data is the base energy of the tech web, providing the per-band reflectance measurements that precision agriculture and environmental monitoring depend on.
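To make the index concrete, here is a minimal sketch of the standard NDVI formula, (NIR - Red) / (NIR + Red), applied to two small reflectance grids. The function names and sample values are illustrative assumptions, and returning 0.0 for a pixel with no signal is a choice made for this sketch rather than a convention from any particular toolkit.

```python
def ndvi(nir, red):
    """Return NDVI for one pixel from NIR and red reflectance values."""
    total = nir + red
    if total == 0:
        # Assumption for this sketch: treat no-signal pixels as 0.0.
        return 0.0
    return (nir - red) / total


def ndvi_grid(nir_band, red_band):
    """Apply ndvi() pixel by pixel to two equally sized 2D grids."""
    return [
        [ndvi(n, r) for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir_band, red_band)
    ]


# Hypothetical reflectance values in the range 0..1.
nir_band = [[0.50, 0.40], [0.30, 0.10]]
red_band = [[0.10, 0.10], [0.30, 0.10]]
grid = ndvi_grid(nir_band, red_band)
```

Values close to 1 generally indicate dense, healthy vegetation, while values near 0 or below suggest bare soil, water, or built surfaces.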

LiDAR and Active Remote Sensing

While optical sensors are passive, relying on ambient light, LiDAR (Light Detection and Ranging) is an active sensor and a more specialized producer. By emitting laser pulses and measuring the time it takes for them to bounce back, LiDAR creates dense 3D point clouds. This represents a different species of data, one that focuses on structural geometry and topographical precision. Within the innovation ecosystem, LiDAR is essential for filtering out returns from vegetation (like forest canopies) to reveal the bare-earth surface (the digital terrain model) beneath.
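The time-of-flight principle behind each LiDAR return can be sketched in a few lines: the pulse travels out and back, so the range is half the round-trip time multiplied by the speed of light. The function names, and the idea of passing the beam direction as a unit vector, are assumptions made for this illustration; a real system would also correct for the aircraft's position and attitude.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def range_from_round_trip(round_trip_s):
    """Distance to the target in meters for a measured round-trip time."""
    # The pulse covers the distance twice (out and back), hence the / 2.
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def point_from_return(sensor_xyz, unit_direction, round_trip_s):
    """Place one return in 3D: sensor position plus range along the beam."""
    distance = range_from_round_trip(round_trip_s)
    return tuple(s + distance * d for s, d in zip(sensor_xyz, unit_direction))


# Hypothetical return: sensor 100 m up, beam pointing straight down.
point = point_from_return((0.0, 0.0, 100.0), (0.0, 0.0, -1.0), 4.0e-7)
```

Millions of such points, each positioned this way, make up the point cloud.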

Thermal and Atmospheric Sensors

Thermal sensors represent the heat-mapping layer of the food web. By detecting long-wave infrared radiation, these sensors allow the drone to perceive temperature differentials. This is critical for industrial inspections, search and rescue, and energy auditing. When combined with atmospheric sensors that measure gas concentrations or particulate matter, the drone moves from being a simple camera platform to a complex mobile laboratory.

The Processing Pipeline: How Information Flows Through the Tech Web

Once the primary producers (the sensors) have generated raw data, that data must be “consumed” and processed. In a technological food web, this is the stage of data ingestion, normalization, and photogrammetry. Without this layer, the raw bits and bytes are disconnected and unusable; the processing pipeline provides the connective tissue that turns individual snapshots into a coherent “web” of information.

Photogrammetric Stitching and Orthomosaics

The first level of data consumption involves photogrammetry software. Here, overlapping images are analyzed to identify common points, which are then used to stitch thousands of photos into a single, georeferenced orthomosaic map. This process requires significant computational “metabolism.” The innovation in this space involves moving from local desktop processing to cloud-based distributed computing, where massive server farms act as the “decomposers” and “rebuilders” of the data, refining raw inputs into highly accurate 2D and 3D models.

Data Fusion and Cross-Layer Analysis

A sophisticated drone food web does not rely on a single data source. Innovation in remote sensing now emphasizes “Data Fusion.” This is the process of overlaying different types of data—such as draping a thermal map over a 3D LiDAR mesh. By fusing these layers, the system creates a holistic view of the environment that is greater than the sum of its parts. This convergence is where the tech web becomes truly resilient, as the strengths of one sensor (e.g., the structural detail of LiDAR) compensate for the weaknesses of another (e.g., the lack of visual color in LiDAR).
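As a rough illustration of draping a thermal map over LiDAR geometry, the sketch below attaches a temperature to each 3D point by looking up the thermal raster cell the point falls in. The raster layout (origin at the lower-left corner, rows increasing with y, square cells) and all names are assumptions for this example; production pipelines typically read the georeferencing from the raster file and may interpolate between cells.

```python
def drape_thermal(points, thermal, origin_x, origin_y, cell_size):
    """Return (x, y, z, temperature) for each point via nearest-cell lookup.

    Points that fall outside the raster get a temperature of None.
    """
    n_rows = len(thermal)
    n_cols = len(thermal[0]) if n_rows else 0
    fused = []
    for x, y, z in points:
        col = int((x - origin_x) // cell_size)
        row = int((y - origin_y) // cell_size)
        if 0 <= row < n_rows and 0 <= col < n_cols:
            fused.append((x, y, z, thermal[row][col]))
        else:
            fused.append((x, y, z, None))
    return fused


# Hypothetical 2x2 thermal raster (degrees C) with 1 m cells.
thermal = [[20.0, 21.0], [30.0, 31.0]]
points = [(0.5, 0.5, 10.0), (1.5, 1.2, 12.0), (5.0, 0.1, 3.0)]
fused = drape_thermal(points, thermal, 0.0, 0.0, 1.0)
```

Each fused point now carries both structure (from LiDAR) and temperature (from the thermal layer), which is the sense in which one sensor fills in for the other.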

The Role of Telemetry and Real-Time Transmission

For a web to function, energy must move efficiently. In drone tech, this movement is facilitated by high-bandwidth data links and telemetry systems. Using OcuSync, 5G, or satellite links, the “energy” (data) can be transmitted from the drone (the edge) to the command center (the core) in real time. When the link latency is low enough, this transmission is what allows the drone web to react dynamically to changing environmental conditions, much like an organism reacting to a stimulus.

AI and Machine Learning: The Apex of the Digital Ecosystem

At the top of the food web sit the apex processors: Artificial Intelligence (AI) and Machine Learning (ML). These systems do not just consume data; they interpret it, draw conclusions, and direct the behavior of the lower levels. The transition from “automated” to “autonomous” is defined by the sophistication of this AI layer.

Computer Vision and Object Recognition

Modern drone innovation relies heavily on onboard AI. Using Convolutional Neural Networks (CNNs), drones can now identify and track objects in real time. Whether it is identifying a specific weed in a field of crops or tracking a vehicle through a dense urban environment, computer vision acts as the “eyes and brain” of the ecosystem. This ability to categorize data as it is being collected reduces the “trophic waste” of unnecessary data storage, allowing the system to keep only what is relevant.
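The storage-saving idea can be sketched without any particular vision library: given per-frame detections, keep only the frames that contain a wanted class above a confidence threshold. The data layout here, (frame_id, [(label, score), ...]), and the 0.5 default threshold are assumptions for illustration and do not reflect the output format of a specific detector.

```python
def frames_to_keep(frames, wanted_labels, min_confidence=0.5):
    """Return IDs of frames with at least one confident, relevant detection."""
    kept = []
    for frame_id, detections in frames:
        relevant = any(
            label in wanted_labels and score >= min_confidence
            for label, score in detections
        )
        if relevant:
            kept.append(frame_id)
    return kept


# Hypothetical detector output for four frames.
frames = [
    (1, [("weed", 0.90)]),
    (2, [("crop", 0.95)]),
    (3, [("weed", 0.30)]),
    (4, []),
]
kept = frames_to_keep(frames, {"weed"})
```

In this toy run only frame 1 is retained, so three of the four frames never need to be stored or transmitted.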

Predictive Analytics and Change Detection

Much of the tech food web’s value lies in its ability to track change over time and forecast what comes next. By analyzing temporal data (maps taken of the same area over weeks or months), AI algorithms can perform “change detection.” They can identify erosion patterns, monitor the progress of a construction site, or feed models that estimate crop yields. This predictive capability is the “apex” of the system, transforming historical data into forward-looking business intelligence.
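At its simplest, change detection compares two co-registered grids of the same area and flags the cells whose values moved by more than a threshold. The sketch below does this for two small elevation grids; the function name, the sample values, and the 0.25 m threshold are assumptions for this example, and AI-based approaches replace the fixed threshold with learned models.

```python
def detect_change(before, after, threshold):
    """Return (row, col, delta) for cells that changed by at least threshold."""
    changed = []
    for r, (row_before, row_after) in enumerate(zip(before, after)):
        for c, (b, a) in enumerate(zip(row_before, row_after)):
            delta = a - b
            if abs(delta) >= threshold:
                changed.append((r, c, delta))
    return changed


# Hypothetical elevation grids (meters) from two surveys of the same site.
survey_1 = [[10.0, 10.0], [10.0, 10.0]]
survey_2 = [[10.0, 9.0], [10.5, 10.05]]
changes = detect_change(survey_1, survey_2, threshold=0.25)
```

Here one cell has lost a meter of elevation (possible erosion or excavation) and one has gained half a meter (possible fill or construction), while the 5 cm difference is treated as noise.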

Edge Computing: Processing at the Source

A major shift in the innovation niche is the move toward “Edge Computing.” Instead of sending all data to a central “stomach” (the cloud) for processing, the drone’s onboard processors (like the NVIDIA Jetson series) handle the computation locally. This “local metabolism” allows for low-latency decision-making, such as obstacle avoidance or autonomous flight path adjustments, without needing a constant link to a home base. This makes the drone a self-sustaining unit within the larger technological web.

The “Cyber-Ecological” Cycle: Remote Sensing for Environmental Impact

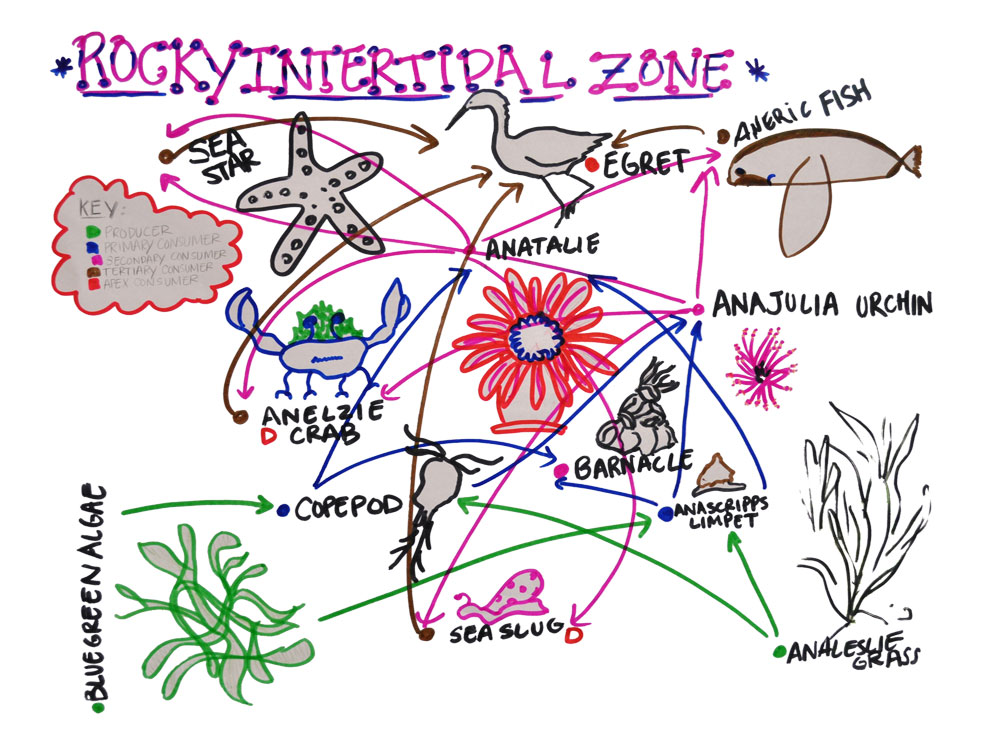

The metaphor of a food web is particularly apt when we consider how these drone technologies are used to study and protect actual biological ecosystems. The integration of remote sensing and AI creates a “Cyber-Ecological” cycle where technology serves the environment.

Biodiversity Mapping and Species Protection

Drones equipped with AI-driven thermal sensors are now used to protect endangered species. In this application, the drone web acts as a silent guardian. By patrolling vast areas of wilderness, the sensors detect the heat signatures of poachers or animals, and the AI classifies them. This data is then fed back to park rangers, creating a feedback loop that protects the biological food web through the digital one.

Forest Management and Carbon Sequestration

In the fight against climate change, the drone mapping web is used to estimate biomass and carbon sequestration. LiDAR-equipped drones can capture the structure of individual trees across a forest stand, allowing scientists to estimate the volume of wood and, by extension, the amount of carbon stored. This high-level remote sensing provides the granular data needed for carbon credit markets and large-scale reforestation efforts.
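The step from wood volume to stored carbon is a short chain of multiplications, sketched below. The wood density (0.5 tonnes of dry matter per cubic meter) is a placeholder assumption that varies widely by species, and the carbon fraction of 0.47 is a commonly cited default for dry woody biomass; neither value comes from this article, and real inventories use species- and region-specific figures.

```python
# Assumed illustrative constants, not values from any specific survey.
WOOD_DENSITY_T_PER_M3 = 0.5   # tonnes of dry matter per cubic meter of wood
CARBON_FRACTION = 0.47        # share of dry biomass that is carbon
CO2_PER_CARBON = 44.0 / 12.0  # approximate molar mass ratio of CO2 to C


def carbon_tonnes(volume_m3, density=WOOD_DENSITY_T_PER_M3,
                  carbon_fraction=CARBON_FRACTION):
    """Estimate tonnes of carbon stored in a given volume of wood."""
    dry_biomass_t = volume_m3 * density
    return dry_biomass_t * carbon_fraction


def co2_equivalent_tonnes(volume_m3):
    """Convert the stored carbon to tonnes of CO2 equivalent."""
    return carbon_tonnes(volume_m3) * CO2_PER_CARBON


# Hypothetical stand with 100 cubic meters of LiDAR-estimated wood volume.
stored_carbon = carbon_tonnes(100.0)
stored_co2e = co2_equivalent_tonnes(100.0)
```

Under these assumptions, 100 cubic meters of wood holds about 23.5 tonnes of carbon, or roughly 86 tonnes of CO2 equivalent.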

The Feedback Loop: Autonomous Iteration

The final component of the drone food web is the feedback loop. Every flight provides data that can be used to optimize the next mission. In an autonomous mapping system, the AI learns which flight paths yield the best data density and adjusts its future behavior accordingly. This “evolutionary” aspect of drone tech means that the web keeps refining itself, becoming more efficient and more capable as it processes more missions.

Conclusion: The Interconnected Future of Drone Tech

What is a food web made up of in the world of drone innovation? It is a complex, tiered system where sensors act as the foundation, data processing acts as the metabolic engine, and AI acts as the guiding intelligence. This web is characterized by its interconnectedness; a failure in the telemetry layer can starve the AI of data, while a breakthrough in sensor technology can provide an influx of “energy” that elevates the entire system’s capabilities.

As we move toward a future of fully autonomous drone swarms and integrated “smart cities,” the technological food web will only become more intricate. The transition from simple remote sensing to integrated, AI-driven spatial intelligence marks the evolution of drones from mere tools into sophisticated digital organisms that are an essential part of our global technological ecosystem. Understanding this web—not just as individual parts, but as a holistic flow of information—is the key to unlocking the next generation of aerial innovation.