In advanced technology, where autonomous systems, artificial intelligence, and complex algorithms govern everything from flight paths to data analysis, the concept of a “breakdown” takes on a critical dimension. The term is traditionally associated with human psychological states, but for a sophisticated machine an “emotional breakdown” can be understood metaphorically as a critical system failure: a moment when its programming and operational parameters cease to function coherently, leading to unexpected behavior, operational paralysis, or even catastrophic failure. This isn’t about silicon chips experiencing feelings. It is about systems reaching a state where their designed resilience and logical processing capabilities are overwhelmed, so that they depart from expected performance. As we push the boundaries of AI, autonomous flight, remote sensing, and mapping, understanding these technological “breakdowns” becomes essential for reliability, safety, and continued innovation. This article examines what constitutes such a breakdown in advanced tech, its causes and manifestations, and the strategies used to prevent and mitigate it.

Defining the “Breakdown” in Advanced Systems

For a technological system, an “emotional breakdown” refers not to a state of sentience but to a profound operational failure that compromises its ability to perform its designed functions reliably and safely. The system’s internal logic, decision-making capabilities, or physical components succumb to stress, errors, or unforeseen circumstances, producing a significant and often unpredictable deviation from its intended operational state. This is distinct from a minor glitch or transient error: it is a more fundamental compromise of the system’s integrity.

Beyond Simple Bugs: The Nature of Systemic Collapse

A systemic collapse, or a true “breakdown,” goes beyond individual software bugs or minor hardware malfunctions. It often involves a cascading failure where an initial fault triggers a chain reaction, overwhelming redundant systems or error-correction protocols. For an autonomous drone, this might mean a sensor input anomaly causing a navigation error, which in turn leads to a critical attitude control failure and an eventual crash, rather than just a temporary loss of GPS signal. Such a collapse signifies that the system’s architecture, despite its layers of complexity and safeguards, has reached a point of incoherence, where its core operational principles are fundamentally undermined. This often occurs under novel or extreme conditions that were not fully anticipated during design and testing.
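One common way to keep a single bad sensor reading from starting such a chain reaction is to reject physically implausible inputs before they reach navigation. The sketch below is a minimal illustration, not a flight-ready filter: the `Fix` type, the `MAX_SPEED_M_S` limit, and the helper names are all hypothetical, and it assumes the first fix in the sequence can be trusted.

```python
import math
from dataclasses import dataclass

@dataclass
class Fix:
    t: float  # timestamp in seconds
    x: float  # metres east of the launch point
    y: float  # metres north of the launch point

MAX_SPEED_M_S = 25.0  # assumed airframe speed limit, purely illustrative

def plausible(prev: Fix, new: Fix, max_speed: float = MAX_SPEED_M_S) -> bool:
    """Return True if travelling from prev to new stays within max_speed."""
    dt = new.t - prev.t
    if dt <= 0:
        return False  # stale or out-of-order fix
    dist = math.hypot(new.x - prev.x, new.y - prev.y)
    return dist / dt <= max_speed

def filter_fixes(fixes):
    """Keep only fixes that are plausible relative to the last accepted one."""
    accepted = [fixes[0]]  # assumption: the first fix is trusted
    for fix in fixes[1:]:
        if plausible(accepted[-1], fix):
            accepted.append(fix)
    return accepted

# The third fix implies a 490 m jump in one second and is discarded.
track = [Fix(0, 0, 0), Fix(1, 10, 0), Fix(2, 500, 0), Fix(3, 30, 0)]
clean = filter_fixes(track)
```

Because each new fix is compared with the last accepted one rather than the last received one, a single outlier cannot drag the estimate away and make the following good readings look implausible.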

Differentiating Malfunction from “Breakdown”

It’s crucial to distinguish between a routine malfunction and a systemic “breakdown.” A malfunction is typically a localized or temporary issue that the system can self-correct or work around, or that can be easily diagnosed and repaired. For instance, a temporary loss of video feed on an FPV drone is a malfunction. A “breakdown,” however, implies a more severe and often widespread failure that affects multiple interconnected subsystems and leads to a loss of overall control or purpose. If that FPV drone suddenly becomes unresponsive to commands and descends uncontrollably because of a flight controller logic error that no failsafe can override, that is much closer to a system “breakdown.” The latter suggests a deeper vulnerability in the system’s design or in its interaction with complex operational environments.

Root Causes of Operational Distress

The factors leading to a technological “breakdown” are multifaceted, often arising from a combination of software, hardware, and environmental challenges. Understanding these root causes is essential for designing more resilient and robust autonomous systems.

Software Imperfections and Algorithmic Flaws

At the heart of many system breakdowns lie imperfections in software and flaws in algorithms. Bugs, logical errors, race conditions, and memory leaks can accumulate or manifest under specific conditions, leading to unexpected behavior. For AI-driven systems, particularly those utilizing machine learning in autonomous flight or remote sensing, biases in training data can lead to skewed decision-making. An AI follow mode drone might misidentify its target due to poor training data, or a mapping drone might misinterpret terrain features if its environmental sensing algorithms are not robust enough for varying light conditions. Furthermore, complex interactions between different software modules can create emergent properties and vulnerabilities that are difficult to predict and debug, making the system prone to failure when faced with real-world complexities.

Hardware Limitations and Environmental Stressors

Beyond software, physical limitations and environmental factors play a significant role. Hardware components—from drone motors and ESCs to critical sensors like gyroscopes and accelerometers—can fail due to manufacturing defects, wear and tear, or external damage. Thermal stress, electromagnetic interference, severe weather conditions (wind, rain, extreme temperatures), and even unexpected physical impacts can push hardware beyond its operational limits. A navigation system reliant on GPS might experience a “breakdown” in accuracy due to signal jamming or urban canyon effects. For systems like micro drones or racing drones, which operate at high speeds and under significant physical stress, hardware integrity is under constant challenge, increasing the likelihood of critical component failure during flight.

Data Integrity and Input Overload

Autonomous systems depend heavily on large streams of accurate and timely data. A “breakdown” can be triggered by compromised data integrity (corrupted, incomplete, or malicious data) or by more input than the system can process efficiently. Remote sensing drones that collect high-rate sensor data might hit processing bottlenecks, leading to delays or errors in critical real-time decisions. Similarly, if an obstacle avoidance system receives conflicting information from multiple sensors in a complex environment, it might become overwhelmed, leading to paralysis or erroneous avoidance maneuvers. Ensuring data quality, implementing robust data filtering, and designing systems with sufficient processing headroom are crucial to prevent these data-induced “breakdowns.”
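The two defenses named here, integrity checks and bounded input, can be sketched together in a few lines. This is an illustrative example only: the `SensorBuffer` class and its counters are hypothetical, CRC-32 is assumed as the checksum (it catches accidental corruption, not deliberate tampering), and dropping the oldest frame is just one possible overload policy.

```python
import zlib
from collections import deque

class SensorBuffer:
    """Bounded frame buffer that rejects corrupted frames and sheds old ones."""

    def __init__(self, capacity: int):
        self.frames = deque(maxlen=capacity)  # oldest frame is evicted when full
        self.rejected = 0  # frames that failed the checksum
        self.dropped = 0   # frames evicted because of overload

    def push(self, payload: bytes, crc: int) -> bool:
        """Store payload if its CRC-32 matches; return whether it was accepted."""
        if zlib.crc32(payload) != crc:
            self.rejected += 1
            return False
        if len(self.frames) == self.frames.maxlen:
            self.dropped += 1  # the append below will evict the oldest frame
        self.frames.append(payload)
        return True

buf = SensorBuffer(capacity=2)
for msg in (b"a", b"b", b"c"):
    buf.push(msg, zlib.crc32(msg))
buf.push(b"d", zlib.crc32(b"d") ^ 1)  # deliberately wrong checksum
```

Counting rejections and drops, rather than discarding silently, gives a monitoring layer something to alert on when the input stream starts to degrade.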

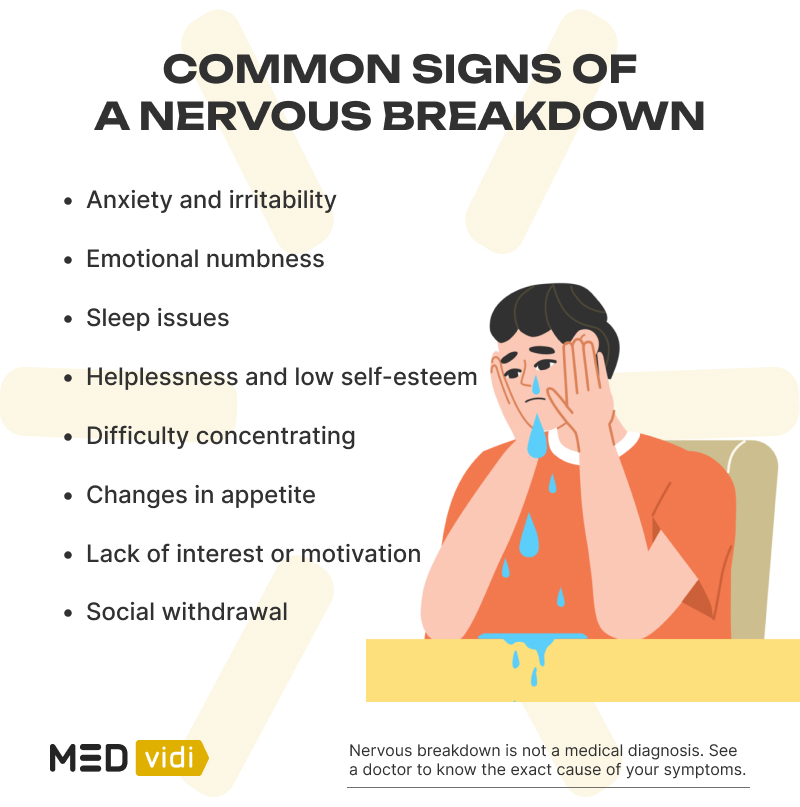

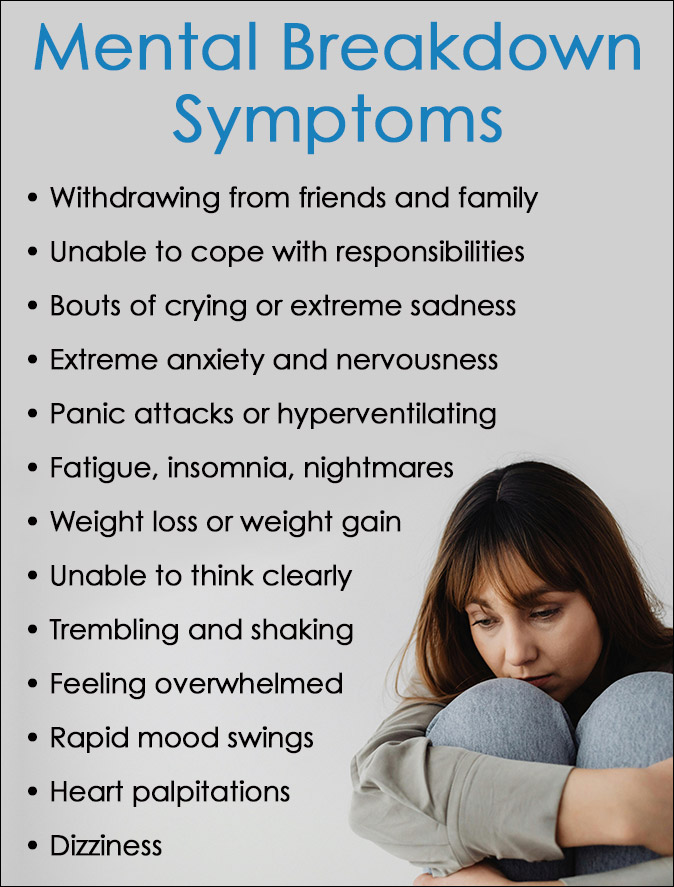

Manifestations: When Systems Go Awry

A technological “breakdown” can manifest in various ways, from subtle performance degradation to outright catastrophic failure. Recognizing these signs early is crucial for intervention and preventing further damage or risk.

Unpredictable Behavior and Loss of Autonomy

One of the most alarming manifestations of a system breakdown is unpredictable behavior. An autonomous drone, instead of following its programmed flight path, might suddenly veer off course, enter an uncontrolled descent, or fail to respond to commands. This loss of dependable autonomous control indicates a fundamental compromise in its decision-making or control systems. In AI-powered mapping or remote sensing, a breakdown could lead to erratic data collection patterns, incomplete scans, or the drone ignoring critical safety parameters. Such unpredictable actions not only jeopardize the mission but also pose significant safety risks to nearby people and property. The system ceases to be a reliable, predictable agent and becomes a liability.

Performance Degradation and System Crashes

Before a complete breakdown, systems often exhibit signs of performance degradation. This might include slower response times, decreased accuracy in tasks like object detection or GPS positioning, or increased energy consumption. For drone technology, this could mean a reduction in flight stability, shaky camera footage despite a gimbal, or a significantly shortened battery life. Ultimately, severe degradation can lead to a complete system crash, where the software halts unexpectedly, requires a hard reboot, or where the physical drone simply ceases to operate effectively and falls out of the sky. These crashes are often the most visible and impactful indicators of a deep-seated “breakdown,” highlighting critical flaws that bypass existing failsafes.
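Gradual degradation such as rising response times can be watched with a smoothed trend instead of raw samples, so that one slow cycle does not raise an alarm but a sustained slowdown does. The sketch below uses an exponentially weighted moving average; the function names, the smoothing factor, and the latency budget are illustrative assumptions.

```python
def ewma(values, alpha=0.3):
    """Exponentially weighted moving average, seeded with the first value."""
    smoothed = values[0]
    out = [smoothed]
    for v in values[1:]:
        smoothed = alpha * v + (1 - alpha) * smoothed
        out.append(smoothed)
    return out

def first_breach(latencies_ms, budget_ms, alpha=0.3):
    """Index of the first sample whose smoothed latency exceeds the budget."""
    for i, s in enumerate(ewma(latencies_ms, alpha)):
        if s > budget_ms:
            return i
    return None  # the smoothed latency stayed within budget

# Sustained slowdown: smoothed values are 10, 10, 10, 20, 25, 27.5.
sustained = first_breach([10, 10, 10, 30, 30, 30], budget_ms=20, alpha=0.5)

# Single spike: smoothed values peak at 25 and never pass a 30 ms budget.
spike = first_breach([10, 10, 40, 10, 10], budget_ms=30, alpha=0.5)
```

A smaller `alpha` makes the detector slower but less sensitive to noise, a trade-off that would need tuning against real telemetry.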

Security Vulnerabilities and Data Corruption

A system “breakdown” can also manifest through heightened security vulnerabilities or data corruption. When a system’s integrity is compromised, it may become susceptible to external attacks, leading to unauthorized access, control hijacking, or data exfiltration. A drone’s autonomous flight system, for example, could be maliciously taken over if its network protocols or software layers suffer a breakdown in their security architecture. Similarly, internal malfunctions can lead to the corruption of collected data, making valuable aerial imagery or sensor readings unusable. This not only erodes trust in the technology but can also have serious consequences for critical applications like infrastructure inspection, environmental monitoring, or public safety surveillance, where data integrity and system security are paramount.
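One standard guard against control hijacking is to authenticate every command so that forged or altered messages are rejected. The following is a minimal sketch using Python's standard `hmac` module. The key, the command strings, and the `sign`/`verify` helpers are hypothetical, and a real link would also need replay protection (for example a counter or timestamp inside the signed message), which is omitted here.

```python
import hashlib
import hmac

def sign(key: bytes, command: bytes) -> bytes:
    """Compute an HMAC-SHA256 tag for a command."""
    return hmac.new(key, command, hashlib.sha256).digest()

def verify(key: bytes, command: bytes, tag: bytes) -> bool:
    """Check a tag in constant time to avoid leaking timing information."""
    return hmac.compare_digest(sign(key, command), tag)

shared_key = b"demo-key-not-for-real-use"  # illustrative only
tag = sign(shared_key, b"LAND")

genuine = verify(shared_key, b"LAND", tag)      # command matches its tag
tampered = verify(shared_key, b"TAKEOFF", tag)  # command was altered in transit
```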

Building Resilience: Strategies for Prevention and Recovery

Preventing technological “breakdowns” requires a multi-layered approach encompassing robust design, rigorous testing, and continuous monitoring. Building resilience into advanced systems is key to mitigating risks and ensuring reliable operation.

Robust Design and Redundancy in Architecture

At the foundational level, preventing breakdowns begins with robust design and architectural redundancy. This involves designing hardware and software components with fault tolerance, meaning they can continue to operate even if parts of the system fail. For drones, this might include redundant flight controllers, multiple independent sensors (e.g., dual GPS modules), and backup power systems. Software architecture should employ modularity, clear interfaces, and comprehensive error handling routines to prevent a failure in one component from cascading throughout the entire system. Implementing failsafe mechanisms that automatically return a drone to its launch point or land it safely upon detecting critical anomalies is a prime example of building resilience through redundancy and intelligent design.
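Sensor redundancy and failsafe selection can be combined in a small voting routine. The sketch below assumes three redundant altitude readings and uses the median as the trusted value; the `vote` and `choose_action` functions, the 2.0 m tolerance, and the action names are illustrative and do not correspond to any particular flight stack.

```python
from statistics import median

def vote(readings, tolerance):
    """Return the median reading and how many readings agree with it."""
    mid = median(readings)
    agreeing = [r for r in readings if abs(r - mid) <= tolerance]
    return mid, len(agreeing)

def choose_action(readings, tolerance=2.0):
    """Map sensor agreement to an action and the value to act on."""
    value, healthy = vote(readings, tolerance)
    if healthy == len(readings):
        return "CONTINUE", value          # all sensors agree
    if healthy >= 2:
        return "RETURN_TO_LAUNCH", value  # one sensor is outvoted
    return "LAND_NOW", None               # no trustworthy majority

all_good = choose_action([100.0, 100.5, 99.8])
one_bad = choose_action([100.0, 100.5, 250.0])
no_agreement = choose_action([10.0, 100.0, 250.0])
```

With three sensors the median is unaffected by a single wild reading, which is why the faulty 250.0 value never reaches the controller in the second case.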

Advanced AI Training and Validation Protocols

For AI-driven systems, particularly those involved in autonomous flight, AI follow mode, or intelligent mapping, preventing “breakdowns” hinges on advanced training and rigorous validation protocols. This means training machine learning models on large, diverse datasets that have been checked for bias, and meticulously testing AI behavior across a wide range of real-world scenarios and edge cases. Techniques like adversarial training and simulation can expose vulnerabilities and improve the AI’s ability to handle novel situations without “breaking down” under pressure. Continuous learning models that adapt and improve after deployment, coupled with human-in-the-loop oversight, help keep the AI reliable and its decision-making transparent and predictable, reducing the chances of unforeseen failures.

Proactive Monitoring and Predictive Analytics

Even with robust design and thorough testing, real-world operation will always present new challenges. Proactive monitoring and predictive analytics are critical for identifying potential “breakdowns” before they occur. This involves equipping systems with sophisticated diagnostic tools that constantly monitor performance metrics, sensor data, and system logs for anomalies. AI and machine learning algorithms can be employed to analyze these data streams, identifying patterns that may indicate impending hardware failure (e.g., unusual motor vibrations, declining battery health) or software degradation (e.g., increasing latency, resource consumption spikes). For drone fleets, predictive maintenance based on such analytics can schedule timely interventions, replacing components or updating software before a critical failure leads to a full “breakdown.”
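As a much simpler stand-in for the machine learning approaches mentioned above, anomaly detection on a telemetry stream can be illustrated with a rolling z-score. In this sketch, `VibrationMonitor`, the window size, the warm-up length of five samples, and the threshold of three standard deviations are all assumptions chosen for illustration.

```python
from collections import deque
from statistics import mean, stdev

class VibrationMonitor:
    """Flags readings that deviate strongly from a rolling baseline."""

    def __init__(self, window=20, threshold=3.0):
        self.history = deque(maxlen=window)
        self.threshold = threshold

    def update(self, value: float) -> bool:
        """Return True if value is anomalous relative to recent history."""
        anomalous = False
        if len(self.history) >= 5:  # wait for a minimal baseline
            mu = mean(self.history)
            sigma = stdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                anomalous = True
        if not anomalous:
            self.history.append(value)  # anomalies do not pollute the baseline
        return anomalous

monitor = VibrationMonitor()
samples = [1.0, 1.1, 0.9, 1.0, 1.1, 0.9, 1.0, 5.0]
flags = [monitor.update(s) for s in samples]
```

Only the final reading is flagged in this run. Keeping anomalies out of the baseline makes the detector sensitive to repeated outliers, at the cost of also flagging a legitimate permanent shift until the monitor is reset.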

The Human Element in Systemic Integrity

While we discuss autonomous systems, the human element remains irreplaceable in ensuring their overall integrity and preventing catastrophic “breakdowns.” From ethical design to operational oversight, human intervention is the ultimate safeguard.

Ethical AI and Trustworthy Autonomous Systems

The development of ethical AI is paramount in preventing “breakdowns” that could have societal or safety consequences. This involves designing AI systems that are transparent in their decision-making, accountable for their actions, and aligned with human values. For autonomous flight and remote sensing applications, this means ensuring that the AI prioritizes safety, respects privacy, and operates within legal and ethical boundaries. When ethical guidelines are neglected during development, the result can be a system that is technically functional yet makes biased or harmful decisions, which amounts to an “ethical breakdown” in its operation. Trustworthy AI principles aim for systems that are not just functional but also reliable, secure, and socially responsible.

Human-in-the-Loop and Emergency Override Protocols

Despite advancements in autonomy, retaining a “human-in-the-loop” or “human-on-the-loop” remains a critical safety measure. For complex drone operations or autonomous vehicles, human operators provide oversight, make critical decisions in unforeseen circumstances, and can initiate emergency override protocols. When an autonomous system approaches a state of “breakdown”—evidenced by unpredictable behavior or critical error alerts—human intervention can prevent catastrophic failure. This often involves clear, intuitive control interfaces and well-defined emergency procedures that allow operators to take manual control, trigger failsafe landings, or shut down a system gracefully. The symbiosis between autonomous capability and human oversight forms the most robust defense against technological “breakdowns.”
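The priority ordering implied here (a live operator first, automatic failsafe second, autopilot last) can be written as a small arbitration function. This is a sketch under stated assumptions: the `Inputs` fields, the 3-second link timeout, and the command strings are hypothetical, and a real system would define its own rules for when operator input counts as current.

```python
from dataclasses import dataclass
from typing import Optional

LINK_TIMEOUT_S = 3.0  # assumed limit before operator input counts as stale

@dataclass
class Inputs:
    autopilot_cmd: str
    operator_cmd: Optional[str]  # None when the operator is not intervening
    critical_alert: bool
    seconds_since_link: float    # time since the last operator heartbeat

def arbitrate(inp: Inputs) -> str:
    """Pick the command to execute for this control cycle."""
    link_alive = inp.seconds_since_link <= LINK_TIMEOUT_S
    if inp.operator_cmd is not None and link_alive:
        return inp.operator_cmd  # a live human override always wins
    if inp.critical_alert:
        return "FAILSAFE_LAND"   # no live operator, so fail safe
    return inp.autopilot_cmd     # normal autonomous operation

normal = arbitrate(Inputs("FOLLOW_ROUTE", None, False, 0.5))
override = arbitrate(Inputs("FOLLOW_ROUTE", "MANUAL_HOVER", True, 0.5))
failsafe = arbitrate(Inputs("FOLLOW_ROUTE", None, True, 0.5))
stale = arbitrate(Inputs("FOLLOW_ROUTE", "MANUAL_HOVER", False, 10.0))
```

Ignoring operator commands once the link has timed out prevents the vehicle from acting on input that may no longer reflect what the operator intends.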

The metaphorical “emotional breakdown” in advanced technological systems is a critical challenge as we venture further into the age of autonomy and AI. It highlights the profound complexities inherent in designing, deploying, and maintaining systems that are robust, reliable, and safe. By understanding the causes of systemic failures, recognizing their diverse manifestations, and diligently implementing strategies for prevention and recovery—from robust engineering and advanced AI training to proactive monitoring and essential human oversight—we can build more resilient technologies. Ultimately, our ability to mitigate these technological “breakdowns” will define the future success and trustworthiness of innovations across aerial filmmaking, drone technology, mapping, remote sensing, and beyond, ensuring that our machines remain steadfast partners rather than sources of unpredictable risk.