In the rapidly evolving field of Unmanned Aerial Vehicles (UAVs), the term “grammar” can be borrowed from linguistics as a useful way to describe autonomous navigation and robotic logic. When we ask what a “determiners grammar” is within the context of drone technology and innovation, we are looking at the structured rules, decision logic, and hierarchical processing systems that allow a machine to interpret its environment. Just as grammar in language determines how words relate to one another to create meaning, a determiners grammar in drone technology dictates how sensor inputs (the determiners) are parsed by the flight controller to execute complex, autonomous maneuvers.

This framework is the invisible scaffolding behind AI follow modes, obstacle avoidance, and remote sensing. It is the difference between a drone that simply reacts to a signal and one that understands its spatial context. By exploring the intersection of deterministic logic and machine learning, we can begin to understand how modern drones translate raw data into the sophisticated “language” of autonomous flight.

The Syntax of Flight: Understanding the Framework of Drone Autonomy

At its core, the autonomy of a drone is governed by a set of rules known as deterministic logic: the same inputs always produce the same response. In this ecosystem, “determiners” are the specific variables (altitude, velocity, proximity to obstacles, and GPS coordinates) that provide the necessary context for the drone’s internal “grammar,” or software architecture. This architecture must be fast enough to process high-rate sensor data in real time while remaining flexible enough to adapt to unpredictable environmental changes.

Defining the Grammar of UAV Logic

The “grammar” of a drone refers to the software protocols that define how different subsystems interact. For example, when an autonomous drone is tasked with mapping a forest canopy, its internal grammar dictates that the data from the downward-facing LiDAR must take precedence over the lateral obstacle avoidance sensors if the goal is to maintain a specific altitude relative to the trees. This hierarchical structure ensures that the drone does not experience “logical conflicts” where two different sensor inputs provide contradictory commands.
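The precedence rule described above can be sketched as a small arbiter. This is only an illustration: the `Command` type, the subsystem names, and the convention that a lower number means higher precedence are all assumptions made for the example, not part of any particular flight stack.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Command:
    source: str        # subsystem that proposed the command (hypothetical names)
    priority: int      # assumed convention: lower number wins
    climb_rate: float  # requested vertical speed in m/s


def arbitrate(commands: List[Command]) -> Optional[Command]:
    """Return the proposal with the highest precedence, or None if there are none."""
    if not commands:
        return None
    return min(commands, key=lambda c: c.priority)


# Two subsystems disagree; the downward LiDAR outranks lateral avoidance here.
proposals = [
    Command("lateral_avoidance", priority=2, climb_rate=-0.5),
    Command("downward_lidar", priority=1, climb_rate=0.8),
]
winner = arbitrate(proposals)
print(winner.source, winner.climb_rate)
```

Because exactly one command is ever returned, two contradictory inputs cannot both reach the motors, which is the “logical conflict” the hierarchy is meant to prevent.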

This grammar is typically written in compiled languages such as C and C++ that prioritize execution speed. However, as we move into the era of AI and neural networks, this grammar is becoming more “generative.” Modern drones are no longer restricted to rigid “if-then” logic; instead, they use probabilistic models to determine the most likely safe path, effectively “writing” their own flight syntax in real time as they encounter new obstacles.

The Role of “Determiners” in Real-Time Decision Making

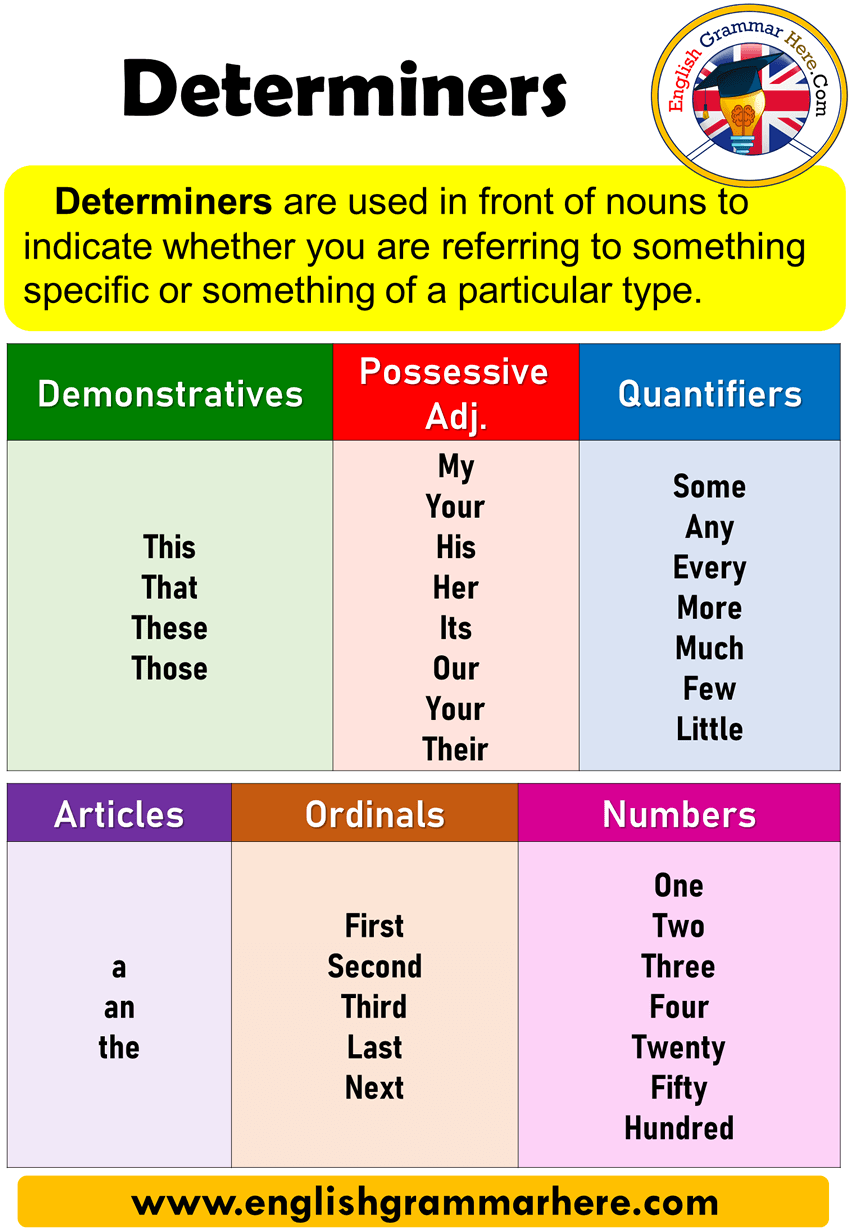

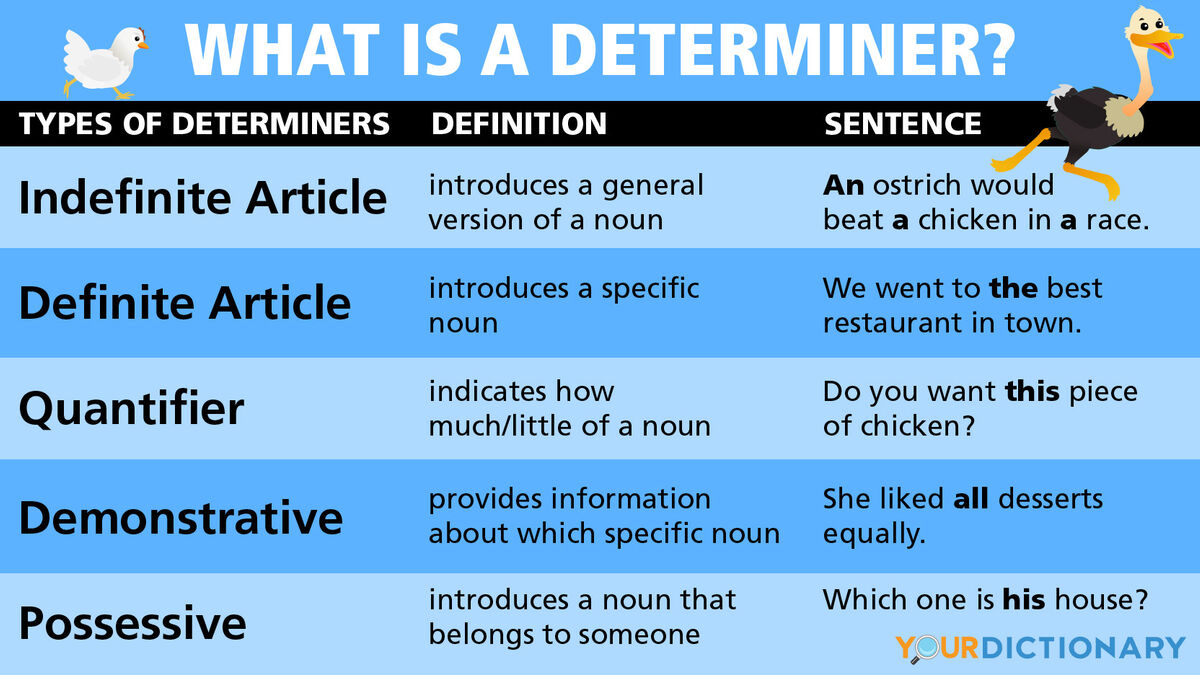

In linguistic grammar, a determiner (like “the,” “a,” or “every”) clarifies the noun it precedes. In drone technology, a determiner is a data point that clarifies the drone’s state within its environment. For instance, a “proximity determiner” from an ultrasonic sensor clarifies the “noun” of an obstacle. Without these determiners, the drone’s processor would be receiving a chaotic stream of numbers with no reference point.

Innovation in this field focuses on “High-Fidelity Determiners”—sensors that provide extremely high-resolution data. When a drone uses a 4K visual sensor combined with AI, the determiners become much more descriptive. Instead of just sensing “an object,” the drone can identify “a moving vehicle” or “a power line.” This level of detail allows for a much more complex grammar of movement, enabling the drone to perform maneuvers that were once thought impossible for autonomous systems.

Sensor Fusion: The Vocabulary of Environmental Awareness

For a drone to exhibit true intelligence, it must do more than just see; it must perceive. This perception is achieved through sensor fusion, a process where multiple determiners are combined to create a single, unified “vocabulary” of the environment. Sensor fusion is the engine that drives tech innovations like SLAM (Simultaneous Localization and Mapping) and autonomous remote sensing.
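One simple way to picture sensor fusion is inverse-variance weighting, where each sensor's reading counts in proportion to how much it is trusted. The sketch below is a minimal illustration of that idea, not the method any specific drone uses; the function name `fuse` and the barometer and LiDAR figures are invented for the example.

```python
def fuse(estimates):
    """Fuse (value, variance) pairs by inverse-variance weighting.

    Returns the fused value and its variance. Assumes the sensor errors
    are independent and every variance is greater than zero.
    """
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, 1.0 / total


# Hypothetical altitude readings: a noisy barometer and a tighter LiDAR.
barometer = (10.0, 4.0)  # metres, variance in m^2
lidar = (12.0, 1.0)
altitude, variance = fuse([barometer, lidar])
print(altitude, variance)  # the result sits closer to the LiDAR reading
```

The fused variance is smaller than either input variance, which is the practical payoff of combining determiners rather than trusting one alone.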

LiDAR and the Parsing of Three-Dimensional Space

LiDAR (Light Detection and Ranging) acts as one of the most powerful determiners in the drone’s toolkit. By emitting laser pulses and measuring the time it takes for them to bounce back, LiDAR creates a high-density point cloud. In our grammar analogy, these points are the “phonemes” of the landscape. The drone’s processor then parses these points to build a 3D map.
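The time-of-flight idea reduces to one line of arithmetic: the pulse travels out and back, so the range is half the round-trip time multiplied by the speed of light. The sketch below shows that conversion and turns one return into a 3D point. The function names and the beam angles are assumptions for illustration, and a real sensor would also need calibration and the drone's own pose.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second, in vacuum


def tof_to_range(round_trip_s):
    """Convert a round-trip pulse time (seconds) into a one-way range (metres)."""
    return SPEED_OF_LIGHT * round_trip_s / 2.0


def polar_to_point(range_m, azimuth_rad, elevation_rad):
    """Turn a range and beam direction into an (x, y, z) point in the sensor frame."""
    horizontal = range_m * math.cos(elevation_rad)
    return (
        horizontal * math.cos(azimuth_rad),
        horizontal * math.sin(azimuth_rad),
        range_m * math.sin(elevation_rad),
    )


# A pulse that returns after 100 nanoseconds hit something roughly 15 m away.
r = tof_to_range(100e-9)
point = polar_to_point(r, math.radians(90), 0.0)
print(round(r, 3), point)
```

Repeating this for every pulse in a scan yields the point cloud that the rest of the pipeline parses.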

The innovation here lies in how the drone handles this massive influx of data. A “determiners grammar” allows the system to filter out “noise”—such as dust particles or rain—while focusing on solid structures. This allows drones to navigate through dense indoor environments or complex industrial sites without human intervention. The ability to distinguish between a temporary obstruction (like a person walking) and a permanent one (like a wall) is a hallmark of advanced algorithmic logic.
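As a rough illustration of noise filtering, the sketch below uses radius outlier removal: a point is kept only if enough other points lie close to it, on the assumption that dust and raindrops show up as isolated returns while walls produce dense clusters. This is a stand-in technique chosen for clarity, not necessarily what a given drone runs, and the brute-force loop is only suitable for small examples.

```python
import math


def radius_outlier_filter(points, radius, min_neighbors):
    """Keep points that have at least `min_neighbors` other points within `radius`."""
    kept = []
    for i, p in enumerate(points):
        neighbors = sum(
            1
            for j, q in enumerate(points)
            if i != j and math.dist(p, q) <= radius
        )
        if neighbors >= min_neighbors:
            kept.append(p)
    return kept


# Three closely spaced wall returns plus one isolated speck (made-up data).
wall = [(0.0, 0.0, 0.0), (0.1, 0.0, 0.0), (0.2, 0.0, 0.0)]
speck = (5.0, 5.0, 5.0)
cleaned = radius_outlier_filter(wall + [speck], radius=0.25, min_neighbors=1)
print(cleaned)
```

Telling a walking person from a wall needs more than one scan; a common approach is to check whether an occupied region persists across several consecutive scans before treating it as permanent.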

Optical Flow and Visual Odometry

While LiDAR provides the structure, optical flow and visual odometry provide the “verbs” of the flight: the movement. Optical flow sensors track the apparent motion of image features between consecutive camera frames to estimate the drone’s speed and direction relative to the ground. This is particularly crucial in GPS-denied environments, such as inside tunnels or under bridges.
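Under a simple pinhole camera model, pixel motion converts to ground speed by scaling with height over focal length. The sketch below assumes a camera pointing straight down over flat ground and ignores the drone's own rotation, which a real system would compensate for using gyroscope data. The function name and all numbers are illustrative.

```python
def flow_to_ground_velocity(flow_px, dt_s, height_m, focal_px):
    """Scale a pixel displacement between two frames into metres per second.

    flow_px:  (dx, dy) apparent displacement in pixels between the frames
    dt_s:     time between the frames in seconds
    height_m: height above ground in metres
    focal_px: focal length expressed in pixels

    The result is the apparent motion of the ground in the image axes; the
    drone's own velocity has the opposite sign, and the axis mapping depends
    on how the camera is mounted.
    """
    scale = height_m / (focal_px * dt_s)
    return tuple(component * scale for component in flow_px)


# 4 px and -2 px of flow over 20 ms, seen from 5 m with a 400 px focal length.
vx, vy = flow_to_ground_velocity((4.0, -2.0), dt_s=0.02, height_m=5.0, focal_px=400.0)
print(vx, vy)
```

The dependence on `height_m` is why optical flow modules are usually paired with a rangefinder: without a height estimate, pixel motion alone cannot be turned into metric speed.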

By integrating optical flow into the determiners grammar, developers allow the drone to maintain a stable hover even when external positioning systems fail. The drone “reads” the ground below it, using visual patterns as anchors. This technological leap has moved drones from being mere toys to essential tools for infrastructure inspection and indoor search-and-rescue operations.

Mapping and Remote Sensing: Constructing the Spatial Narrative

Mapping is not just about taking pictures; it is about constructing a spatial narrative. When a drone performs remote sensing, it is essentially acting as a mobile laboratory that gathers data to determine the health of crops, the structural integrity of a dam, or the progression of coastal erosion.

Photogrammetry as a Descriptive Language

Photogrammetry is the science of making measurements from photographs. In the context of drone grammar, photogrammetry serves as a descriptive tool. By taking hundreds of overlapping images, the drone provides the raw “text” that software then translates into a 3D model.

The “determiner” in this scenario is the ground sample distance (GSD), the distance on the ground that each image pixel represents. Innovation in this space has led to “Autonomous Mapping Missions,” where the drone’s grammar allows it to calculate its own flight path to cover the full survey area at the target resolution, adjusting for wind speed and light conditions automatically.
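For a camera pointing straight down, GSD follows from similar triangles: sensor width times altitude, divided by focal length times image width in pixels. The sketch below computes it and derives a spacing between parallel flight lines from a chosen side overlap. The camera figures are illustrative rather than taken from a specific product, and the spacing formula assumes the image width lies across the flight direction.

```python
def ground_sample_distance(sensor_width_mm, focal_length_mm, altitude_m, image_width_px):
    """Ground distance covered by one pixel, in metres, for a nadir-pointing camera."""
    return (sensor_width_mm * altitude_m) / (focal_length_mm * image_width_px)


def flight_line_spacing(gsd_m, image_width_px, side_overlap):
    """Distance between adjacent flight lines for a given side overlap (0 to 1)."""
    footprint_m = gsd_m * image_width_px  # ground width covered by one image
    return footprint_m * (1.0 - side_overlap)


# Illustrative camera: 13.2 mm sensor, 8.8 mm lens, 5472 px wide, flown at 100 m.
gsd = ground_sample_distance(13.2, 8.8, 100.0, 5472)
spacing = flight_line_spacing(gsd, 5472, side_overlap=0.7)
print(round(gsd * 100, 2), "cm per pixel")  # about 2.74 cm per pixel
print(round(spacing, 1), "m between lines")  # about 45 m
```

Halving the altitude halves the GSD, so a planner that needs finer detail has to fly lower and accept more flight lines to cover the same area.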

Semantic Segmentation and Object Recognition

One of the most significant advancements in drone innovation is semantic segmentation. This is an AI-driven process where the drone’s “grammar” allows it to label every pixel in its field of view. Instead of seeing a collection of brown and green shapes, the drone identifies “soil,” “water,” “corn,” and “weeds.”
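The final step of semantic segmentation is easy to show in isolation: a model produces a score for every class at every pixel, and each pixel takes the label with the highest score. The sketch below covers only that labeling step, with hand-written scores standing in for a neural network's output; the class list mirrors the example in the text and the helper names are assumptions.

```python
from collections import Counter

CLASSES = ["soil", "water", "corn", "weeds"]


def label_pixels(scores):
    """Map per-pixel class scores (rows x cols x classes) to class names."""
    labels = []
    for row in scores:
        labels.append(
            [CLASSES[max(range(len(CLASSES)), key=lambda c: px[c])] for px in row]
        )
    return labels


def class_fractions(labels):
    """Fraction of the image covered by each class."""
    counts = Counter(name for row in labels for name in row)
    total = sum(counts.values())
    return {name: counts[name] / total for name in CLASSES}


# A made-up 2 x 2 image; each pixel holds scores for soil, water, corn, weeds.
scores = [
    [[0.7, 0.1, 0.1, 0.1], [0.1, 0.1, 0.6, 0.2]],
    [[0.2, 0.1, 0.3, 0.4], [0.1, 0.1, 0.7, 0.1]],
]
labels = label_pixels(scores)
print(labels)
print(class_fractions(labels))
```

Summaries such as the weed fraction per field cell are the kind of output that could then feed a prescription map.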

This remote sensing capability is revolutionary for precision agriculture. A drone can fly over a field, use its determiners grammar to identify areas of nitrogen deficiency, and then generate a prescription map for a ground-based fertilizer spreader. The drone isn’t just a camera in the sky; it is an intelligent agent capable of complex environmental analysis.

AI Follow Mode and Predictive Analytics

One of the most popular applications of these logical systems is the AI Follow Mode. This feature requires a highly sophisticated grammar because it involves predicting the future state of a moving object. Whether it is a mountain biker on a trail or a vehicle on a highway, the drone must determine where the target will be seconds before it arrives there.

The Grammar of Motion Prediction

To follow a subject effectively, the drone uses “Predictive Determiners.” It calculates the subject’s current velocity, acceleration, and likely trajectory. The grammar of the follow mode then dictates how the drone should position itself to maintain the desired framing while avoiding obstacles.

If a biker goes behind a tree, the drone’s grammar doesn’t just stop; it uses “persistence logic” to estimate where the biker will emerge based on their previous speed and direction. This “inference” is a high-level grammatical function that mimics human intuition, allowing for seamless cinematic shots without manual control.
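The simplest form of this persistence logic is constant-velocity prediction: estimate the subject's velocity from the last two sightings and extrapolate while the subject is hidden. The sketch below shows that baseline. The function names and coordinates are assumptions for illustration, and production trackers typically use a filter (such as a Kalman filter) that also models uncertainty and acceleration.

```python
def estimate_velocity(prev_obs, last_obs):
    """Velocity from two timestamped sightings, each given as (time_s, (x, y))."""
    (t0, p0), (t1, p1) = prev_obs, last_obs
    dt = t1 - t0
    return tuple((b - a) / dt for a, b in zip(p0, p1))


def predict_position(last_obs, velocity, t_query):
    """Extrapolate from the last sighting, assuming the velocity stays constant."""
    t1, p1 = last_obs
    return tuple(p + v * (t_query - t1) for p, v in zip(p1, velocity))


# The biker is seen at t = 0 s and t = 1 s, then disappears behind a tree.
seen_a = (0.0, (0.0, 0.0))
seen_b = (1.0, (4.0, 1.0))
velocity = estimate_velocity(seen_a, seen_b)
expected = predict_position(seen_b, velocity, t_query=2.5)
print(velocity, expected)  # (4.0, 1.0) (10.0, 2.5)
```

The longer the subject stays hidden, the less reliable the extrapolation becomes, so a practical system would widen its search area over time rather than trust a single predicted point.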

Obstacle Avoidance as a Logical Constraint

Obstacle avoidance is the “punctuation” of a flight path. It provides the constraints within which the drone must operate. Modern drones use a “Voxel-based” approach, where the space around the drone is divided into a 3D grid of cubes (voxels). Each voxel is a determiner: it is either “occupied” or “empty.”
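A voxel grid of this kind can be sketched with a set of integer cell indices: any cell containing at least one sensor return is “occupied,” and everything else is treated as “empty.” The helper names and the 0.5 m cell size below are assumptions for illustration; real systems often store a probability per voxel rather than a yes or no.

```python
import math


def voxel_index(point, voxel_size):
    """Integer (i, j, k) index of the voxel containing a point in metres."""
    return tuple(math.floor(coordinate / voxel_size) for coordinate in point)


def build_occupancy(points, voxel_size):
    """Set of voxel indices that contain at least one point."""
    return {voxel_index(p, voxel_size) for p in points}


def is_occupied(occupied, point, voxel_size):
    """True if the point falls inside a voxel already marked as occupied."""
    return voxel_index(point, voxel_size) in occupied


# Two returns land in the same 0.5 m cell; a third lands in a different one.
returns = [(1.2, -0.3, 2.9), (1.4, -0.1, 2.6), (4.0, 4.0, 0.2)]
occupied = build_occupancy(returns, voxel_size=0.5)
print(sorted(occupied))
print(is_occupied(occupied, (1.3, -0.2, 2.7), 0.5))
```

Using `math.floor` rather than integer truncation keeps negative coordinates in the correct cell, which matters because the drone sits at the origin of its own grid.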

The innovation in autonomous flight is the speed at which the drone can recalculate a path through these voxels. When an obstacle is detected, the grammar triggers an “evasive subroutine,” which must be executed in milliseconds. This requires substantial computational power on the “edge,” meaning the processing happens on the drone itself rather than in the cloud.
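Recalculating a path through voxels can be illustrated with a breadth-first search over free cells. This is a deliberately simple stand-in: real planners tend to use faster methods such as A* or sampling-based search, and they plan in continuous space with the drone's dynamics in mind. The function name, the grid bounds, and the six-neighbor connectivity are assumptions for the example.

```python
from collections import deque

# Moves to the six face-adjacent voxels.
STEPS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def plan_path(start, goal, occupied, bounds):
    """Shortest path in voxel steps from start to goal, or None if blocked."""
    parents = {start: None}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        if current == goal:
            path = []
            while current is not None:
                path.append(current)
                current = parents[current]
            return path[::-1]
        for dx, dy, dz in STEPS:
            nxt = (current[0] + dx, current[1] + dy, current[2] + dz)
            in_bounds = all(0 <= c < b for c, b in zip(nxt, bounds))
            if in_bounds and nxt not in occupied and nxt not in parents:
                parents[nxt] = current
                queue.append(nxt)
    return None


# A 3 x 3 x 1 grid with one obstacle directly between start and goal.
path = plan_path((0, 0, 0), (2, 0, 0), occupied={(1, 0, 0)}, bounds=(3, 3, 1))
print(path)
```

When a new obstacle appears, the occupied set changes and the search is simply run again from the drone's current voxel, which is why replanning speed matters so much.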

The Evolution of Deterministic Logic in Swarm Intelligence

As we look toward the future of drone technology, the “determiners grammar” is expanding from a single unit to a collective. Swarm intelligence involves multiple drones communicating with one another to achieve a common goal, necessitating a “collaborative grammar.”

Collaborative Grammar in Multi-UAV Systems

In a swarm, the determiners are not just environmental; they are also social. Each drone must determine its position relative to its neighbors to avoid collisions while maintaining the swarm’s formation. This requires a shared syntax—a communication protocol where drones “talk” to each other to distribute tasks.

Innovation in swarm logic allows for decentralized control, where there is no “leader” drone. Instead, every drone follows a simple set of grammatical rules that result in complex, emergent behavior. This is being used in light shows, large-scale mapping, and even defense applications, where a swarm can cover a vast area much more efficiently than a single, larger aircraft.
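Decentralized rules of this kind can be sketched with two local behaviors borrowed from classic flocking models: move toward the center of nearby drones (cohesion) and push away from any that come too close (separation). Each drone runs the same function using only its neighbors' positions. The gains, the 2 m minimum gap, and the 2D simplification are all assumptions chosen for illustration.

```python
import math


def local_rule(me, neighbors, min_gap=2.0, cohesion_gain=0.1, separation_gain=1.0):
    """Velocity command (vx, vy) for one drone, computed from neighbor positions only."""
    if not neighbors:
        return (0.0, 0.0)
    # Cohesion: steer toward the average position of the neighbors.
    cx = sum(n[0] for n in neighbors) / len(neighbors)
    cy = sum(n[1] for n in neighbors) / len(neighbors)
    vx = cohesion_gain * (cx - me[0])
    vy = cohesion_gain * (cy - me[1])
    # Separation: push away from any neighbor closer than min_gap.
    for nx, ny in neighbors:
        dx, dy = me[0] - nx, me[1] - ny
        dist = math.hypot(dx, dy)
        if 0 < dist < min_gap:
            push = separation_gain * (min_gap - dist) / dist
            vx += push * dx
            vy += push * dy
    return (vx, vy)


far = local_rule((0.0, 0.0), [(10.0, 0.0)])   # distant neighbor: drift toward it
close = local_rule((0.0, 0.0), [(1.0, 0.0)])  # crowded neighbor: back away
print(far, close)
```

No drone in this sketch knows the overall formation; spacing and grouping emerge from every drone applying the same small rule set, which is the sense in which there is no leader.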

Edge Computing and the Future of Flight Autonomy

The final frontier of this technological evolution is the integration of more powerful AI chips directly into the drone’s hardware. This allows for a much more complex “determiners grammar” that can run deep learning models in real time. As edge computing power grows, drones will move from following pre-programmed rules to possessing “contextual awareness.”

A drone of the future won’t just follow a path; it will understand the mission’s objective. It will recognize that a person on the ground is signaling for help or that a specific part of a bridge shows signs of structural fatigue that weren’t in the original inspection parameters. This shift from reactive logic to proactive intelligence is the ultimate goal of the “determiners grammar” in drone innovation. By refining the rules and expanding the vocabulary of autonomous systems, we are moving toward a world where flight is not just automated, but truly intelligent.