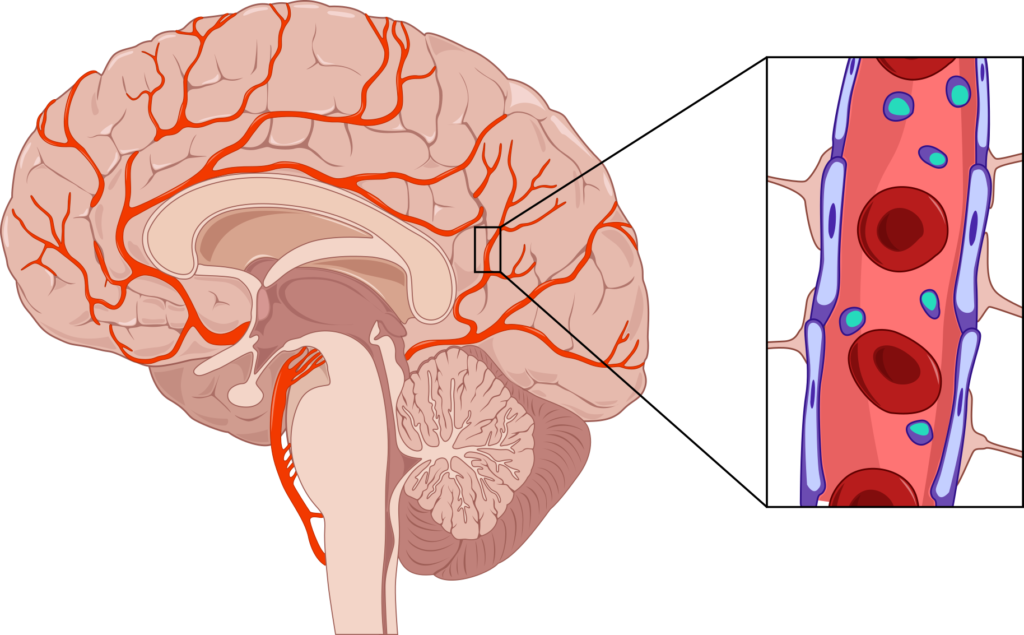

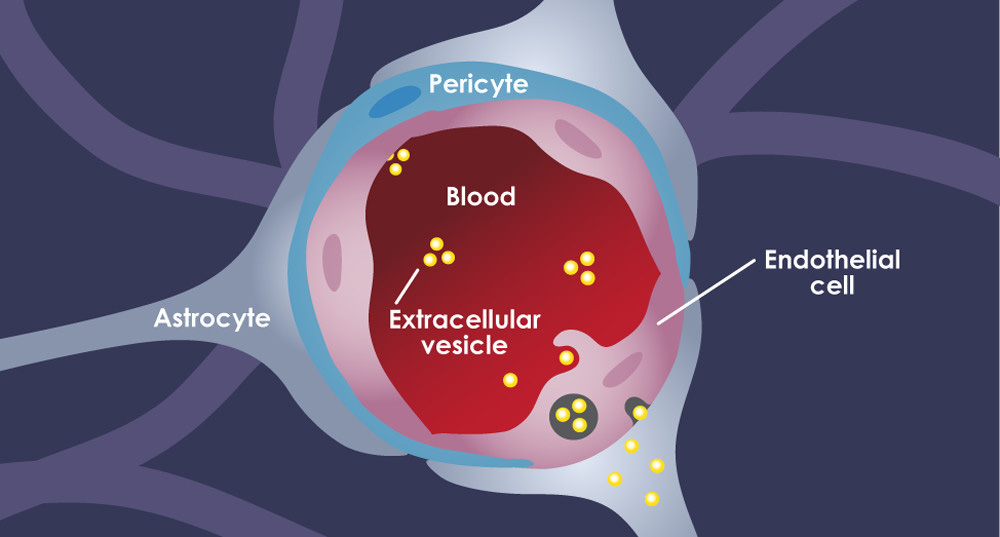

In biology, the “blood-brain barrier” is a highly selective, semipermeable border that separates circulating blood from the brain and the extracellular fluid of the central nervous system. It protects the brain from harmful substances while letting essential nutrients pass through. This defense mechanism offers a useful analogy for unmanned aerial vehicles (UAVs), particularly for how modern drones process large volumes of raw sensor data and turn it into intelligent, autonomous actions.

For advanced drones, especially those engaged in complex tasks like autonomous navigation, precise mapping, or remote sensing, a similar “barrier” is crucial. This metaphorical “blood-brain barrier” represents the interface and processing layers that filter, interpret, and convert the deluge of ‘raw blood’ (unrefined environmental data from various sensors) into actionable ‘brain’ signals that guide the drone’s perception, decision-making, and flight control. Without such a system, a drone would be merely a collection of sensors and motors, incapable of nuanced interaction with its environment. This article examines the components and functions of this technological “barrier” and how it underpins the capabilities of contemporary drones.

The Drone’s Sensory Input: A Deluge of “Blood” Data

A drone, much like a living organism, gathers information about its surroundings through a variety of senses. These senses provide the raw, unfiltered “blood” data that forms the foundation of all subsequent intelligence and action. The volume and complexity of this data are immense, presenting both incredible opportunities and significant processing challenges.

Raw Environmental Data Acquisition

Modern drones are equipped with an array of sophisticated sensors, each contributing a unique perspective to the drone’s understanding of its environment. Visual cameras, ranging from high-resolution RGB sensors to specialized multispectral and hyperspectral imagers, capture detailed photographic and video data. Thermal cameras detect infrared radiation, revealing heat signatures invisible to the human eye, crucial for search and rescue, inspection, and environmental monitoring. LiDAR (Light Detection and Ranging) systems emit laser pulses and measure the time it takes for them to return, creating highly accurate 3D point clouds of the terrain and objects. Radar offers robust performance in adverse weather conditions, detecting objects and measuring distances.
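The time-of-flight principle behind LiDAR ranging can be sketched in a few lines. This is a minimal illustration, not a description of any particular sensor's firmware: the function name `lidar_range_m` is hypothetical, and the pulse is assumed to travel at the vacuum speed of light.

```python
# Speed of light in vacuum, metres per second (exact by SI definition).
SPEED_OF_LIGHT_M_S = 299_792_458.0


def lidar_range_m(round_trip_time_s: float) -> float:
    """Convert a pulse's round-trip time into a one-way distance in metres.

    Hypothetical helper for illustration; real sensors also correct for
    electronics delay and atmospheric effects, which are ignored here.
    """
    if round_trip_time_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the target and back, so halve the path length.
    return SPEED_OF_LIGHT_M_S * round_trip_time_s / 2.0


if __name__ == "__main__":
    # A return after 400 nanoseconds corresponds to a target roughly 60 m away.
    print(f"{lidar_range_m(400e-9):.2f} m")
```

Repeating this calculation for every pulse, together with the known beam direction, is what produces a 3D point cloud.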

Beyond these primary mapping and imaging sensors, drones also rely heavily on internal navigation sensors. Inertial Measurement Units (IMUs), which combine accelerometers and gyroscopes and often a magnetometer, measure linear acceleration and angular velocity, from which the drone’s orientation is estimated. The Global Positioning System (GPS) and other Global Navigation Satellite Systems (GNSS) provide location data, typically accurate to within a few meters and considerably better with correction techniques such as RTK. Barometers measure atmospheric pressure to determine altitude. Each of these sensors constantly streams data, often at high rates, creating a continuous torrent of information. This stream, rich yet unorganized, is the “blood” that must be processed for the drone’s “brain” to function.
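As one concrete case, a barometer reading can be converted to an altitude estimate with the standard barometric formula. The sketch below assumes the International Standard Atmosphere model for the lower atmosphere; the function name `pressure_to_altitude_m` is hypothetical, and real autopilots typically calibrate against a locally measured reference pressure rather than the standard sea-level value.

```python
# International Standard Atmosphere constants (troposphere).
SEA_LEVEL_PRESSURE_PA = 101_325.0
SEA_LEVEL_TEMPERATURE_K = 288.15
LAPSE_RATE_K_PER_M = 0.0065
GAS_CONSTANT_J_PER_MOL_K = 8.314462618
GRAVITY_M_S2 = 9.80665
MOLAR_MASS_AIR_KG_PER_MOL = 0.0289644


def pressure_to_altitude_m(
    pressure_pa: float,
    reference_pressure_pa: float = SEA_LEVEL_PRESSURE_PA,
) -> float:
    """Estimate altitude above the reference pressure level, in metres.

    Assumes standard-atmosphere temperature behaviour; weather changes
    shift the real relationship, so treat the result as approximate.
    """
    if pressure_pa <= 0 or reference_pressure_pa <= 0:
        raise ValueError("pressures must be positive")
    # Exponent R*L / (g*M), approximately 0.1903 for the constants above.
    exponent = (GAS_CONSTANT_J_PER_MOL_K * LAPSE_RATE_K_PER_M) / (
        GRAVITY_M_S2 * MOLAR_MASS_AIR_KG_PER_MOL
    )
    scale_m = SEA_LEVEL_TEMPERATURE_K / LAPSE_RATE_K_PER_M
    return scale_m * (1.0 - (pressure_pa / reference_pressure_pa) ** exponent)


if __name__ == "__main__":
    # Lower pressure means higher altitude.
    print(round(pressure_to_altitude_m(95_000.0), 1))
```

Passing the pressure recorded at takeoff as `reference_pressure_pa` gives an approximate height above the takeoff point instead of above standard sea level.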

The Challenge of Noise and Irrelevance

While abundant, raw sensory data is far from perfect. It is often fraught with noise, ambiguity, and redundancy. Environmental factors play a significant role: varying light conditions, shadows, reflections, atmospheric haze, and precipitation can all introduce inaccuracies or obscure critical features in camera data. LiDAR point clouds can be sparse in certain areas or suffer from multi-path reflections. GPS signals can drift or be temporarily lost. Position and orientation estimates obtained by integrating IMU data drift as small sensor errors accumulate over time.
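The way IMU errors build up can be illustrated with a toy simulation. The sketch assumes a stationary drone whose accelerometer reports a small constant bias; the bias value of 0.05 m/s² and the function name `position_drift_m` are hypothetical choices for illustration. Integrating the biased reading twice (acceleration to velocity, velocity to position) makes the position error grow roughly with the square of elapsed time.

```python
def position_drift_m(
    accel_bias_m_s2: float, duration_s: float, dt_s: float = 0.01
) -> float:
    """Position error from double-integrating a constant accelerometer bias.

    Uses simple Euler integration; the true acceleration is assumed to be
    zero, so everything that accumulates is error.
    """
    velocity_m_s = 0.0
    position_m = 0.0
    for _ in range(round(duration_s / dt_s)):
        velocity_m_s += accel_bias_m_s2 * dt_s  # first integration
        position_m += velocity_m_s * dt_s       # second integration
    return position_m


if __name__ == "__main__":
    for seconds in (10, 20, 60):
        drift = position_drift_m(0.05, seconds)
        print(f"after {seconds:>2} s: about {drift:.1f} m of drift")
```

Doubling the elapsed time roughly quadruples the error, which is why IMU-only position estimates are usually corrected with GNSS, vision, or other absolute references.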

Beyond physical noise, a large portion of the raw data might be irrelevant to the drone’s immediate task. For instance, an inspection drone flying over a power line needs to focus on the structure itself, filtering out the complex background of trees, buildings, and sky. An autonomous drone navigating through a forest needs to distinguish between traversable paths and impenetrable obstacles, ignoring non-critical visual clutter. The “blood-brain barrier” must effectively discern vital information from the overwhelming background noise, much like a biological barrier selectively permits nutrients while blocking toxins. Without this filtering, the drone’s “brain” would be overwhelmed, leading to inefficient processing, poor decision-making, or even catastrophic failure.

The Drone’s “Brain”: The Core of Intelligent Flight

Once the raw sensory data, the “blood,” is collected, it must be routed to the drone’s “brain”: its onboard computational hardware and software systems. This is where raw inputs are transformed into meaningful insights and commands.

Onboard Processing Units and Flight Controllers

At the heart of every advanced drone is a suite of processing units. These often include CPUs (Central Processing Units) for general computation, GPUs (Graphics Processing Units) for the parallel processing needed in image and video analysis, and specialized AI accelerators or NPUs (Neural Processing Units) designed to run machine learning models efficiently. In many designs, the heavier perception workloads run on a companion computer, while the flight controller, typically a real-time microcontroller, acts as the central nervous system responsible for interpreting commands, managing motor speeds, maintaining stability, and executing flight patterns.

The flight controller acts as the primary relay station, taking in the filtered data and applying pre-programmed logic and complex algorithms to ensure the drone flies stably and safely. It continuously processes IMU data to make micro-adjustments to motor output, preventing unwanted drifts and ensuring precise maneuvers. For higher-level autonomy, these processing units also manage the more complex tasks of understanding the environment, planning trajectories, and executing mission objectives based on the intelligent interpretation of sensor data.

Artificial Intelligence and Machine Learning Algorithms

The true intelligence of the drone’s “brain” comes from its artificial intelligence (AI) and machine learning (ML) algorithms. These algorithms are the “gatekeepers” and advanced processors of the metaphorical blood-brain barrier. They are trained on vast datasets to recognize patterns, classify objects, predict outcomes, and make decisions in real time.

For example, deep learning models can perform semantic segmentation, identifying and classifying every pixel in a camera feed to distinguish between roads, buildings, vegetation, and obstacles. Object detection algorithms pinpoint specific items of interest, like damaged infrastructure, missing persons, or agricultural anomalies. Reinforcement learning can enable drones to learn optimal flight strategies through trial and error in simulated environments, improving their ability to navigate complex scenarios. These AI/ML components are not merely data processors; they are the interpreters, turning a stream of numbers into a rich, contextual understanding of the world, much like a brain processes sensory input to form perception. They enable the drone to not just see, but to understand what it is seeing, allowing it to adapt and respond intelligently.

Constructing the “Barrier”: From Data to Decision

The essence of the drone’s “blood-brain barrier” lies in the intricate processes that bridge the gap between raw data acquisition and intelligent decision-making. This involves a multi-layered approach to data handling, purification, and interpretation.

Filtering and Pre-processing Layers

Before raw sensory data can be fed into advanced AI models, it undergoes rigorous filtering and pre-processing. This initial stage is critical for cleaning up the “blood,” removing noise, and preparing it for meaningful analysis. Techniques such as sensor fusion are paramount here. Algorithms like Kalman filters or Extended Kalman Filters combine data from multiple disparate sensors (e.g., GPS, IMU, altimeter, visual odometry) to produce a more accurate and robust estimate of the drone’s state (position, velocity, orientation) than any single sensor could provide alone. This fusion helps to compensate for the weaknesses of individual sensors and to mitigate noise.
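To make the fusion idea concrete, here is a minimal one-dimensional Kalman filter sketch for altitude. It is an illustration under simplifying assumptions, not a production estimator: the class name `AltitudeKalmanFilter`, the noise values, and the choice of a vertical-speed input (for example, derived from the IMU) for prediction with barometer readings for correction are all hypothetical. A real drone would typically use a multi-state Extended Kalman Filter.

```python
from dataclasses import dataclass


@dataclass
class AltitudeKalmanFilter:
    """Scalar Kalman filter: one state (altitude) with its variance."""

    altitude_m: float            # current altitude estimate
    variance_m2: float           # uncertainty of that estimate
    process_noise_m2: float      # uncertainty added by each prediction
    measurement_noise_m2: float  # variance of the altitude sensor

    def predict(self, vertical_speed_m_s: float, dt_s: float) -> None:
        """Propagate the estimate forward using a vertical-speed input."""
        self.altitude_m += vertical_speed_m_s * dt_s
        self.variance_m2 += self.process_noise_m2

    def update(self, measured_altitude_m: float) -> None:
        """Blend in a measurement, weighted by relative uncertainty."""
        gain = self.variance_m2 / (self.variance_m2 + self.measurement_noise_m2)
        self.altitude_m += gain * (measured_altitude_m - self.altitude_m)
        self.variance_m2 *= 1.0 - gain


if __name__ == "__main__":
    kf = AltitudeKalmanFilter(
        altitude_m=0.0, variance_m2=100.0,
        process_noise_m2=0.01, measurement_noise_m2=4.0,
    )
    # Noisy barometer readings around 10 m while hovering (made-up values).
    for reading in (10.8, 9.4, 10.3, 9.9, 10.1):
        kf.predict(vertical_speed_m_s=0.0, dt_s=0.1)
        kf.update(reading)
    print(f"estimate {kf.altitude_m:.2f} m, variance {kf.variance_m2:.2f} m^2")
```

The gain is the key design element: when the estimate is uncertain the filter trusts the measurement, and as confidence grows it weights new readings less, so the fused estimate ends up less noisy than the raw sensor.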

Beyond fusion, data normalization techniques ensure consistency across different data types and scales. Image processing algorithms apply noise reduction, contrast enhancement, and distortion correction to camera feeds. For LiDAR data, outlier removal and point cloud registration ensure that the 3D map is accurate and coherent. These pre-processing layers are the initial defensive lines of the “barrier,” ensuring that only the most relevant and highest-quality information is passed on for deeper cognitive processing. They transform raw, disparate inputs into a unified, clean, and contextually relevant data stream, ready for the drone’s higher-level “brain” functions.
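Outlier removal for point clouds can be sketched with a simple statistical filter: a point is dropped when its average distance to its nearest neighbors is unusually large compared with the rest of the cloud. The version below is a brute-force illustration using only the standard library; the function name `remove_outliers` and the defaults for `k` and `std_ratio` are hypothetical, and point cloud libraries offer much faster equivalents based on spatial indexes.

```python
import math
import statistics
from typing import List, Tuple

Point = Tuple[float, float, float]


def remove_outliers(
    points: List[Point], k: int = 3, std_ratio: float = 1.0
) -> List[Point]:
    """Drop points whose mean distance to their k nearest neighbours is
    more than std_ratio standard deviations above the cloud-wide mean."""
    if len(points) <= k:
        return list(points)  # too few points to judge
    mean_knn_dist = []
    for i, p in enumerate(points):
        # Brute force: distances from p to every other point, sorted.
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        mean_knn_dist.append(sum(dists[:k]) / k)
    threshold = statistics.mean(mean_knn_dist) + std_ratio * statistics.pstdev(
        mean_knn_dist
    )
    return [p for p, d in zip(points, mean_knn_dist) if d <= threshold]


if __name__ == "__main__":
    # Eight corners of a unit cube plus one stray return far away.
    cube = [(x, y, z) for x in (0.0, 1.0) for y in (0.0, 1.0) for z in (0.0, 1.0)]
    cloud = cube + [(50.0, 50.0, 50.0)]
    print(len(remove_outliers(cloud)))  # the stray point is filtered out
```

The thresholds are a trade-off: a stricter `std_ratio` removes more noise but risks deleting thin real structures such as wires.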

Real-time Decision Making and Control Loops

With clean, pre-processed, and fused data, the drone’s “brain” can then engage in real-time decision-making and execute precise control commands. This is where the conceptual “barrier” delivers its most tangible output: translating understanding into action. AI and ML algorithms, having processed the refined data, can now make informed judgments. For instance, an object detection system might identify an impending collision risk, prompting the path planning module to generate an evasion maneuver. A mapping drone might analyze terrain data to optimize its flight path for efficient data collection.

These decisions are then fed into control loops, the feedback mechanisms that allow the drone to maintain stability and execute desired movements. PID (Proportional-Integral-Derivative) controllers are commonly used to adjust motor speeds based on deviations from desired flight parameters (e.g., altitude, heading, speed). The entire cycle, from sensing to filtering, to AI interpretation, to control command generation, and back to sensing, repeats continuously: inner stabilization loops typically run hundreds of times per second or faster, while heavier perception tasks update more slowly. The speed and efficiency of this “blood-brain barrier” are paramount for autonomous flight, where split-second decisions can mean the difference between mission success and failure. This real-time operation is what allows drones to interact with and adapt to their ever-changing environments.
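A PID controller of the kind mentioned above can be sketched as follows. This is a textbook form for illustration only: the class name `PIDController` and the gain values are hypothetical, and flight-grade implementations add safeguards such as integral windup limits, derivative filtering, and output saturation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PIDController:
    """Textbook PID controller operating on a single scalar quantity."""

    kp: float  # proportional gain: reacts to the current error
    ki: float  # integral gain: removes steady-state error
    kd: float  # derivative gain: damps rapid changes
    integral: float = 0.0
    previous_error: Optional[float] = None

    def update(self, setpoint: float, measurement: float, dt_s: float) -> float:
        """Return the control output for one time step of length dt_s."""
        if dt_s <= 0:
            raise ValueError("dt_s must be positive")
        error = setpoint - measurement
        self.integral += error * dt_s
        # No derivative on the first call, since there is no earlier error.
        derivative = (
            0.0 if self.previous_error is None
            else (error - self.previous_error) / dt_s
        )
        self.previous_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


if __name__ == "__main__":
    # Hypothetical altitude hold: target 10 m, drone currently at 8 m.
    pid = PIDController(kp=2.0, ki=0.5, kd=0.1)
    print(pid.update(setpoint=10.0, measurement=8.0, dt_s=0.1))
```

In a multirotor, several such loops are usually nested, for example an outer loop on attitude feeding setpoints to an inner loop on angular rate, with the final outputs mixed into individual motor commands.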

Applications of the Metaphorical “Barrier” in Drone Innovation

The effectiveness of this metaphorical “blood-brain barrier” is evident in the transformative capabilities it enables across various drone applications, pushing the boundaries of what these aerial platforms can achieve.

Enabling Autonomous Navigation and Obstacle Avoidance

One of the most significant advancements powered by this “barrier” is the drone’s ability to navigate complex environments autonomously and avoid obstacles. By intelligently processing sensor data, drones can construct real-time 3D maps of their surroundings, identify potential hazards, and plot safe, efficient trajectories. This capability is vital for operating in confined spaces, urban environments, or dense natural landscapes where manual control is impractical or dangerous. Features like AI Follow Mode, for example, depend on robust object recognition and tracking algorithms within the “barrier” to continuously identify and follow a subject while dynamically avoiding obstacles. Autonomous mapping missions, once requiring extensive human oversight, can now be executed with minimal intervention, as the drone adjusts its flight path to ensure optimal data capture and prevent collisions.
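Trajectory planning around obstacles can be illustrated on a small 2D occupancy grid. The sketch below uses breadth-first search, which finds a shortest path in steps on a grid with uniform movement cost. It is a deliberately simplified stand-in: the function name `plan_path` and the grid layout are hypothetical, and real drones typically plan in 3D with algorithms such as A* or sampling-based planners while accounting for vehicle dynamics.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]


def plan_path(grid: List[List[int]], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Shortest 4-connected path on a grid where 0 is free and 1 is an obstacle.

    Returns the list of cells from start to goal, or None if the goal
    cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])
    if grid[start[0]][start[1]] != 0 or grid[goal[0]][goal[1]] != 0:
        return None  # start or goal lies inside an obstacle
    came_from: Dict[Cell, Optional[Cell]] = {start: None}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        if current == goal:
            # Walk the parent links back to the start, then reverse.
            path = []
            node: Optional[Cell] = current
            while node is not None:
                path.append(node)
                node = came_from[node]
            return path[::-1]
        r, c = current
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and nxt not in came_from:
                came_from[nxt] = current
                queue.append(nxt)
    return None


if __name__ == "__main__":
    # A wall with a single gap on the right-hand side.
    occupancy = [
        [0, 0, 0, 0],
        [1, 1, 1, 0],
        [0, 0, 0, 0],
    ]
    print(plan_path(occupancy, start=(0, 0), goal=(2, 0)))
```

Because the occupancy grid is rebuilt as new sensor data arrives, a planner like this would be rerun frequently so the path reflects newly detected obstacles.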

Advanced Remote Sensing and Data Interpretation

Beyond mere navigation, the “blood-brain barrier” elevates drones into powerful platforms for advanced remote sensing and intelligent data interpretation. Instead of just collecting raw images or point clouds, drones equipped with AI can analyze this data on the fly or post-flight to extract actionable insights. In agriculture, drones can flag likely plant diseases, nutrient deficiencies, or irrigation issues at fine spatial resolution, informing targeted interventions. For infrastructure inspection, AI can automatically detect cracks in bridges, corrosion on power lines, or anomalies in solar panels, significantly reducing inspection times and improving safety. This transformation from raw data capture to meaningful, actionable intelligence is a direct result of the “barrier’s” ability to filter, interpret, and contextualize vast amounts of information, enabling drones to go beyond observation to true understanding.

Future Frontiers: Towards Greater Autonomy and Human-Like Cognition

The ongoing development of the drone’s metaphorical “blood-brain barrier” is propelling the industry towards even greater autonomy and capabilities that verge on human-like cognition. Future innovations will focus on enhancing the “barrier’s” filtering and processing power, particularly through advancements in edge computing, where more data processing occurs directly on the drone rather than relying solely on ground stations. This will lead to faster decision-making and more robust performance in remote or contested environments.

Furthermore, research into explainable AI (XAI) aims to make drone decisions more transparent and trustworthy, allowing operators to understand why a drone took a particular action. Bio-inspired algorithms, drawing insights from biological nervous systems, could lead to more adaptive and resilient AI systems. The ultimate goal is to create drones that can not only perceive and react but also learn, reason, and adapt to unforeseen circumstances with minimal human intervention, effectively possessing a rich situational awareness of their environment. The continuous refinement of this technological “blood-brain barrier” is fundamental to achieving truly intelligent, autonomous, and safe aerial robotics, opening up a future where drones integrate smoothly into our daily lives and industries.

In conclusion, while the biological “blood-brain barrier” protects the most vital organ of living beings, its metaphorical counterpart in drone technology safeguards and empowers the intelligence of aerial robotics. It is the indispensable interface that transforms a chaotic torrent of sensory data into precise, intelligent actions, enabling the remarkable advancements we see in autonomous flight, remote sensing, and beyond. As technology evolves, so too will this “barrier,” paving the way for drones that are increasingly sophisticated, capable, and integral to the future of innovation.