In the dynamic and often unpredictable world of technology and innovation, measuring success is paramount. While baseball has its “batting average” to quantify a player’s offensive reliability, the tech industry, too, relies on analogous metrics to assess performance, inform decisions, and drive progress. This article explores the concept of a “batting average” not as a literal sports statistic, but as a potent metaphor for a crucial success rate or Key Performance Indicator (KPI) within the realm of Tech & Innovation. Just as a hitter’s average reveals their consistency at the plate, a “tech batting average” provides a clear, concise snapshot of a system’s efficacy, an algorithm’s accuracy, or a project’s successful execution. Understanding and applying such metrics allows innovators to navigate complexities, optimize development cycles, and ultimately, hit more “home runs” in their pursuit of groundbreaking solutions.

Defining the “Tech Batting Average”

The essence of a batting average is a ratio: successful outcomes divided by total attempts. In the context of Tech & Innovation, this core principle can be adapted to evaluate a vast array of activities, from the performance of an autonomous drone to the success rate of a new software feature deployment. It serves as a fundamental, intuitive metric to gauge consistency and reliability.

The Core Metric: Success-to-Attempt Ratio

At its heart, a “Tech Batting Average” (TBA) is a simple yet powerful ratio: the number of successful instances of a technological process, system, or innovation divided by the total number of attempts or opportunities. For instance, if an AI-powered drone attempts 100 autonomous deliveries and successfully completes 95 of them, its “delivery batting average” would be .950. Similarly, if a new feature deployment is attempted across 50 user groups and succeeds in 48 without significant issues, its deployment batting average is .960.

This metric offers a direct parallel to the baseball batting average (hits divided by at-bats). It focuses on the most critical aspect: the ability to deliver the intended outcome consistently. It can be applied to diverse scenarios:

- Autonomous Systems: Percentage of successful missions/tasks completed versus total missions/tasks initiated.

- AI/Machine Learning Models: Percentage of accurate predictions or classifications versus total predictions.

- Software Development: Percentage of bug-free feature implementations versus total feature attempts.

- Hardware Prototyping: Percentage of prototypes that meet all design specifications versus total prototypes built.

The strength of the success-to-attempt ratio lies in its clarity and ease of understanding. It provides a universal language for performance, cutting through technical jargon to present a straightforward measure of effectiveness.
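The success-to-attempt ratio and the two examples above (95 of 100 deliveries, 48 of 50 deployments) can be expressed in a few lines. This is a minimal sketch. The function names `tech_batting_average` and `format_average` are hypothetical. The baseball-style formatting, which drops the leading zero (".950"), is a presentational choice, not a requirement.

```python
def tech_batting_average(successes: int, attempts: int) -> float:
    """Return successes / attempts, the basic 'Tech Batting Average'."""
    if attempts <= 0:
        raise ValueError("attempts must be positive")
    if not 0 <= successes <= attempts:
        raise ValueError("successes must be between 0 and attempts")
    return successes / attempts


def format_average(tba: float) -> str:
    """Format a TBA baseball-style, e.g. 0.95 -> '.950' and 1.0 -> '1.000'."""
    text = f"{tba:.3f}"
    # Baseball convention drops the leading zero for averages below 1.
    return text[1:] if text.startswith("0") else text


# The two examples from the text.
delivery = tech_batting_average(95, 100)
deployment = tech_batting_average(48, 50)
print(format_average(delivery))    # .950
print(format_average(deployment))  # .960
```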

Why This Metric Matters in Innovation

In the high-stakes environment of Tech & Innovation, resources are often scarce, and time is of the essence. A clear understanding of performance through a “Tech Batting Average” is invaluable for several reasons:

- Performance Assessment: It offers a tangible way to measure the reliability and effectiveness of new technologies. A low TBA for an autonomous navigation system, for example, signals a critical need for improvement before wider deployment.

- Decision Making: Insights from TBAs can guide strategic decisions. Should more resources be allocated to improving an AI model’s accuracy (its TBA)? Or is a particular hardware design consistently failing (low prototype TBA), suggesting a fundamental re-evaluation is needed?

- Benchmarking and Comparison: TBAs allow for internal benchmarking of different projects, teams, or even iterations of a single technology. They can also provide a basis for comparing performance against industry standards or competitor offerings, where applicable.

- Risk Management: A consistently low TBA in critical systems—like drone obstacle avoidance—highlights significant risks that need immediate mitigation, preventing potential failures or accidents.

- Stakeholder Communication: Presenting complex technical performance as a “batting average” makes it accessible to non-technical stakeholders, fostering clearer communication and trust in the development process. For instance, explaining a drone’s “mission success average” is far more intuitive than delving into intricate sensor fusion algorithms.

Ultimately, the “Tech Batting Average” is more than just a number; it’s a diagnostic tool that provides actionable intelligence, helping innovators understand what’s working, what’s not, and where to focus their efforts for maximum impact.

Calculating and Contextualizing the “Tech Batting Average”

While the basic formula for a “Tech Batting Average” is straightforward, its real value emerges when contextualized. Raw numbers alone can be misleading without understanding the underlying factors that contribute to, or detract from, a system’s performance.

Simple Ratios and Complex Realities

Calculating a TBA typically involves straightforward division:

TBA = (Number of Successful Outcomes) / (Total Number of Attempts)

For example:

- Autonomous Drone Landing Success: If a drone attempts 200 autonomous landings and succeeds in 190, the TBA is 190/200 = 0.950.

- AI Model Anomaly Detection: Suppose an AI security system is evaluated on 10,000 network packets that truly contain malicious activity. It correctly flags 9,800 of them and misses the remaining 200 (false negatives). If “attempts” are defined as the cases where malicious activity actually existed, its detection TBA is 9,800/10,000 = 0.980, a figure better known as recall. Defining “attempts” for AI can be complex, however, and a fuller picture usually requires metrics such as precision, recall, and F1-score.

- Successful Software Deployments: If a development team pushes 30 new code changes to production, and 27 of them are deployed without critical errors or rollbacks, their deployment TBA is 27/30 = 0.900.
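For the anomaly-detection example, the choice of denominator determines which metric is being reported. The sketch below computes recall (attempts = malicious cases), precision (attempts = alerts raised), and their harmonic mean, F1. The 9,800 detections and 200 misses come from the text. The 400 false positives are a hypothetical figure added only to make precision computable, and the `DetectionCounts` type is illustrative.

```python
from dataclasses import dataclass


@dataclass
class DetectionCounts:
    true_positives: int   # malicious cases correctly flagged
    false_negatives: int  # malicious cases missed
    false_positives: int  # benign cases wrongly flagged


def _ratio(num: float, den: float) -> float:
    return num / den if den else 0.0


def recall(c: DetectionCounts) -> float:
    # "Attempts" = every case where malicious activity truly existed.
    return _ratio(c.true_positives, c.true_positives + c.false_negatives)


def precision(c: DetectionCounts) -> float:
    # "Attempts" = every alert the system raised.
    return _ratio(c.true_positives, c.true_positives + c.false_positives)


def f1_score(c: DetectionCounts) -> float:
    # Harmonic mean of precision and recall.
    p, r = precision(c), recall(c)
    return _ratio(2 * p * r, p + r)


# TP and FN from the text; the 400 false positives are HYPOTHETICAL.
counts = DetectionCounts(true_positives=9800, false_negatives=200, false_positives=400)
print(f"recall={recall(counts):.3f}")        # recall=0.980
print(f"precision={precision(counts):.3f}")  # precision=0.961
print(f"f1={f1_score(counts):.3f}")          # f1=0.970
```

A system can post a high recall-style TBA while flooding operators with false alarms. Reporting precision alongside it exposes that trade-off.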

While the calculation is simple, the “reality” is often complex. A 0.950 TBA for drone landings might be phenomenal in extreme weather conditions but mediocre in ideal laboratory settings. The context—the conditions under which the attempts are made, the inherent difficulty of the task, and the definition of “success”—is critical for meaningful interpretation.
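One practical way to keep that context attached to the number is to tag each attempt with the condition under which it ran and to report the TBA per condition alongside the overall figure. The landing log below is hypothetical. It was chosen so that the overall average matches the 0.950 from the drone-landing example while the per-condition averages diverge. The function name `tba_by_condition` is also illustrative.

```python
from collections import defaultdict


def tba_by_condition(attempts):
    """Group (condition, succeeded) records and return {condition: (tba, n)}."""
    tallies = defaultdict(lambda: [0, 0])  # condition -> [successes, attempts]
    for condition, succeeded in attempts:
        tallies[condition][1] += 1
        if succeeded:
            tallies[condition][0] += 1
    return {cond: (s / n, n) for cond, (s, n) in tallies.items()}


# HYPOTHETICAL landing log: 100 lab attempts and 100 high-wind attempts.
log = (
    [("lab", True)] * 98 + [("lab", False)] * 2
    + [("high_wind", True)] * 92 + [("high_wind", False)] * 8
)

overall = sum(ok for _, ok in log) / len(log)
print(f"overall: {overall:.3f} over {len(log)} landings")
for cond, (tba, n) in sorted(tba_by_condition(log).items()):
    print(f"{cond}: {tba:.3f} over {n} landings")
```

Reporting the attempt count `n` next to each average also guards against over-reading a figure computed from only a handful of attempts.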

Factors Influencing a “Tech Batting Average”

Just as a baseball player’s batting average is influenced by everything from their skill to the opposing pitcher, a “Tech Batting Average” is shaped by a multitude of technical, environmental, and operational factors. Understanding these influences is key to improving performance.

- Algorithm Sophistication and Design (Player Skill): The fundamental quality and sophistication of the underlying algorithms, AI models, or software architecture play a huge role. Well-designed, robust, and optimized systems tend to achieve higher success rates.

- Data Quality and Training (Pitching/Defense): For AI and machine learning, the quality, quantity, and relevance of training data are paramount. Poorly labeled, biased, or insufficient data will lower a model’s “batting average” for predictive accuracy, much as strong pitching and defense hold down a batter’s average.

- Hardware Reliability and Calibration (Equipment): The physical components of a system—sensors, processors, actuators, drone airframes—must be reliable and properly calibrated. Faulty sensors or manufacturing defects can drastically reduce a system’s “batting average” for consistent operation.

- Environmental Variables (Ballpark Factors): Real-world deployments often involve unpredictable conditions. Weather (wind, rain), electromagnetic interference, network latency, varying light conditions, and dynamic obstacles can all impact the success rate of autonomous systems, similar to how a ballpark’s dimensions or altitude affect a baseball game.

- Testing Rigor and Validation (Practice/Scouting): Thorough and diverse testing, including unit tests, integration tests, stress tests, and real-world simulations, is crucial. The more rigorously a system is tested and validated against edge cases, the higher its operational “batting average” is likely to be in deployment.

- Operational Procedures and Human Factors (Coaching/Slumps): For complex systems, the operational procedures, training of human operators, and maintenance schedules significantly influence success. Human error in setup or intervention can lower a system’s effective “batting average.” Moreover, continuous iteration and refinement of technology (analogous to a player working through a slump) are vital for sustained high performance.

By meticulously analyzing these influencing factors, development teams can pinpoint weaknesses, prioritize improvements, and systematically enhance their “Tech Batting Average” across various applications.
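A cumulative TBA changes slowly once many attempts have accumulated, so a recent “slump” can hide inside a healthy lifetime average. A rolling window over the most recent attempts is one way to surface it. In the sketch below, the window size, the threshold, and the outcome log are all hypothetical choices. `rolling_tba` and `find_slumps` are illustrative names, not an established API.

```python
from collections import deque
from typing import Iterable, Iterator, List


def rolling_tba(outcomes: Iterable[bool], window: int) -> Iterator[float]:
    """Yield the TBA over the most recent `window` attempts once the window is full."""
    recent = deque(maxlen=window)
    successes = 0
    for ok in outcomes:
        if len(recent) == window:
            successes -= recent[0]  # oldest outcome is about to be evicted
        recent.append(ok)
        successes += ok
        if len(recent) == window:
            yield successes / window


def find_slumps(outcomes: List[bool], window: int, threshold: float) -> List[int]:
    """Return the index of the last attempt in every window whose TBA is below threshold."""
    return [
        i + window - 1
        for i, tba in enumerate(rolling_tba(outcomes, window))
        if tba < threshold
    ]


# HYPOTHETICAL log: ten clean attempts, a three-failure slump, then recovery.
outcomes = [True] * 10 + [False] * 3 + [True] * 7
print(find_slumps(outcomes, window=5, threshold=0.8))  # [11, 12, 13, 14, 15]
```

The same rolling series, plotted across model or firmware versions, gives a rough view of whether iteration is actually improving the average.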

Limitations and Evolving Performance Metrics

While the “Tech Batting Average” offers a simple and intuitive measure of success, it, like its baseball counterpart, has inherent limitations. Relying solely on a single ratio can obscure critical aspects of performance and hinder a comprehensive understanding of technological progress and value.

Beyond the Simple Ratio: The Need for Nuance

The primary limitation of a simple “Tech Batting Average” is that it treats all successes and failures equally, and often ignores the context or magnitude of those outcomes. This can be misleading:

- Impact vs. Mere Success: A “successful” drone delivery to the wrong address is still a failure in terms of user experience, even if the drone completed its flight path. A successful execution of a minor software task might be less valuable than a challenging, partially successful attempt at a breakthrough feature that yields significant learning. The batting average doesn’t differentiate between a single, low-impact hit and a game-winning grand slam.

- Severity of Failure: Not all failures are equal. A minor glitch that causes a system to restart might count as a “failure,” but it pales in comparison to a catastrophic system crash that results in data loss or physical damage. A simple TBA doesn’t reflect this difference in severity.

- Resource Consumption: A system might achieve a high success rate, but at an incredibly high cost in terms of computing power, energy consumption, or development time. The TBA doesn’t account for efficiency or resource utilization.

- Learning from Failure: Some “failures” provide invaluable data and insights that lead to future successes. A simple batting average labels these as failures, potentially discouraging risk-taking and learning from experimentation, which is crucial for innovation.

- What It Doesn’t Measure: The batting average doesn’t inherently account for innovation potential, strategic value of a project, user satisfaction, maintainability of code, or the scalability of a solution.

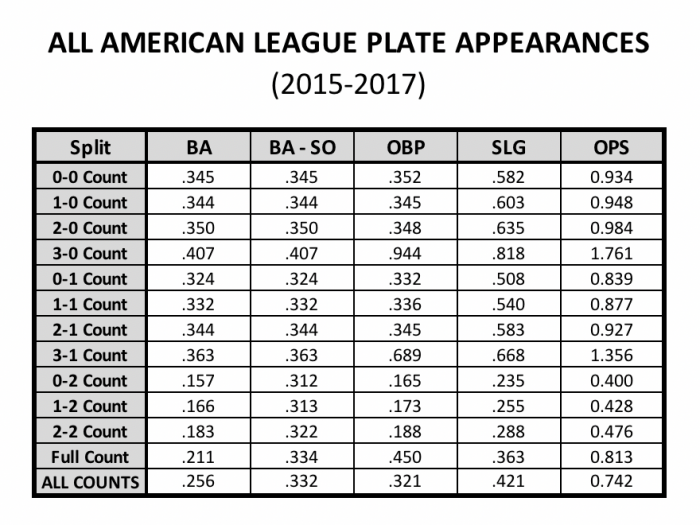

Just as a baseball batting average doesn’t tell the whole story without considering on-base percentage, slugging percentage, and situational hitting, a “Tech Batting Average” needs to be complemented by other metrics for a holistic view.
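The severity point can be made concrete. Two systems with the same raw TBA can carry very different risk once each failure is charged a cost that reflects its consequences. In this sketch, the severity labels and their costs are hypothetical. A real scale would come from the team’s own incident classification.

```python
from typing import Sequence

# HYPOTHETICAL severity scale: the cost charged for each kind of failure.
SEVERITY_COST = {"restart": 1.0, "data_loss": 10.0, "crash": 50.0}


def raw_tba(outcomes: Sequence[str]) -> float:
    """Plain success ratio: every outcome other than 'success' is one failure."""
    return sum(o == "success" for o in outcomes) / len(outcomes)


def failure_cost_per_attempt(outcomes: Sequence[str]) -> float:
    """Average severity cost per attempt; successes cost nothing.

    Unknown failure labels raise KeyError rather than being silently ignored.
    """
    total = sum(0.0 if o == "success" else SEVERITY_COST[o] for o in outcomes)
    return total / len(outcomes)


# HYPOTHETICAL logs: both systems fail 5 times in 100 attempts.
system_a = ["success"] * 95 + ["restart"] * 5
system_b = ["success"] * 95 + ["restart"] * 3 + ["crash"] * 2

for name, log in [("A", system_a), ("B", system_b)]:
    print(name, f"TBA={raw_tba(log):.3f}", f"cost/attempt={failure_cost_per_attempt(log):.2f}")
```

Both systems report a .950 average, but system B’s two crashes make its expected failure cost roughly twenty times higher under this scale.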

The Future of “Tech Batting Averages”: Holistic KPIs

Recognizing these limitations, the tech industry, particularly in advanced fields like AI, robotics, and autonomous systems, is increasingly moving towards more sophisticated and holistic Key Performance Indicators (KPIs). These advanced metrics provide a richer, multi-dimensional view of performance, similar to how modern baseball analytics (Sabermetrics) have revolutionized player evaluation with statistics like OPS (On-base Plus Slugging) or WAR (Wins Above Replacement).

Future “Tech Batting Averages” will integrate:

- Weighted Success Metrics: Assigning different scores or weights to successes and failures based on their impact, severity, or strategic importance. For example, a successful drone delivery in extreme weather might count for more than one in calm conditions.

- Efficiency and Resource Metrics: KPIs that combine success rates with resource consumption, such as “successful tasks per CPU hour,” “accuracy per unit of energy,” or “features deployed per developer hour.”

- Reliability Metrics: Beyond just success/failure, measuring metrics like Mean Time Between Failures (MTBF), Mean Time To Repair (MTTR), and system uptime provides crucial insights into system robustness.

- User Experience (UX) and Satisfaction Metrics: For user-facing innovations, the ultimate “batting average” might be tied to user engagement, task completion rates, or Net Promoter Scores (NPS), reflecting the true impact on the end-user.

- Innovation Velocity and Impact Metrics: Tracking the rate at which new features are successfully introduced and their measurable impact on business goals (e.g., revenue, user retention) provides a forward-looking “batting average” for innovation itself.

- Learning Rate and Adaptability: Metrics that quantify how quickly a system (especially AI) improves its “batting average” over time through new data or updated models, or how effectively it adapts to new, unseen conditions.

By combining the simplicity of a “Tech Batting Average” with these more nuanced and comprehensive KPIs, organizations can develop a truly insightful framework for evaluating, optimizing, and driving innovation across all facets of technology.
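Two of the KPIs listed above have simple textbook forms. MTBF is total operating time divided by the number of failures, MTTR is total repair time divided by the number of repairs, and MTBF / (MTBF + MTTR) gives a common steady-state availability estimate. The sketch below also includes an efficiency-adjusted success count (“successful tasks per CPU hour”). The `FailureEvent` record, the fleet log, and the task and CPU-hour counts are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FailureEvent:
    hours_up_before_failure: float  # operating time since the previous repair
    hours_to_repair: float          # downtime until service was restored


def mtbf(events: List[FailureEvent]) -> float:
    """Mean Time Between Failures: total uptime divided by number of failures.

    This simple estimator ignores any uptime logged after the last failure.
    """
    return sum(e.hours_up_before_failure for e in events) / len(events)


def mttr(events: List[FailureEvent]) -> float:
    """Mean Time To Repair: total repair time divided by number of repairs."""
    return sum(e.hours_to_repair for e in events) / len(events)


def availability(events: List[FailureEvent]) -> float:
    """Steady-state availability estimate: MTBF / (MTBF + MTTR)."""
    up, down = mtbf(events), mttr(events)
    return up / (up + down)


def tasks_per_cpu_hour(successful_tasks: int, cpu_hours: float) -> float:
    """Efficiency-adjusted success: successful tasks per CPU hour consumed."""
    return successful_tasks / cpu_hours


# HYPOTHETICAL fleet log: three failures across 600 operating hours.
log = [FailureEvent(200.0, 1.0), FailureEvent(250.0, 3.0), FailureEvent(150.0, 2.0)]
print(f"MTBF={mtbf(log):.1f} h, MTTR={mttr(log):.1f} h, availability={availability(log):.4f}")
print(f"{tasks_per_cpu_hour(950, 125.0):.1f} successful tasks per CPU hour")  # hypothetical counts
```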

Applying “Tech Batting Average” Across Drone and Flight Technologies

The metaphor of a “Tech Batting Average” finds particularly resonant applications within the drone and flight technology sectors, where performance, reliability, and precision are paramount. These domains offer clear opportunities to quantify success-to-attempt ratios for various critical functions.

Case Study: Autonomous Drone Navigation

In the realm of autonomous drone navigation, the concept of a “batting average” is directly applicable to measure the consistency and reliability of a drone’s ability to operate independently.

- Definition: An autonomous drone navigation batting average could be defined as the percentage of missions successfully completed without any human intervention or critical errors, out of the total missions attempted.

- Success Criteria: A “successful outcome” might mean:

  - Reaching the target destination within a specified tolerance.

  - Adhering to all predefined flight paths and geofences.

  - Successfully avoiding all detected obstacles (static and dynamic).

  - Maintaining stable flight in varying environmental conditions.

  - Performing required tasks (e.g., precise payload drop-off, data collection) at the destination.

- Attempts: Each initiation of an autonomous mission constitutes an “attempt.”

- Impact: A high navigation TBA (e.g., 0.990) signifies a highly reliable and trustworthy autonomous system, crucial for applications like package delivery, infrastructure inspection, or search and rescue, where failure can have significant consequences. A lower average would highlight areas for improvement in sensor fusion, path planning algorithms, or obstacle detection capabilities.
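The navigation definition above is strict: a mission counts as a hit only if every success criterion holds and no human intervention or critical error occurred. The sketch below encodes that rule. The field names in `MissionRecord` are hypothetical and would need to be mapped onto whatever a real flight log records. The mission mix is also hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class MissionRecord:
    # HYPOTHETICAL field names; map them onto your own flight-log schema.
    reached_target_within_tolerance: bool
    stayed_in_geofence_and_path: bool
    avoided_all_obstacles: bool
    flight_remained_stable: bool
    task_completed: bool
    human_intervened: bool
    critical_error: bool

    def succeeded(self) -> bool:
        """A mission counts as a hit only if every criterion holds."""
        return (
            self.reached_target_within_tolerance
            and self.stayed_in_geofence_and_path
            and self.avoided_all_obstacles
            and self.flight_remained_stable
            and self.task_completed
            and not self.human_intervened
            and not self.critical_error
        )


def navigation_tba(missions: List[MissionRecord]) -> float:
    """Successful missions divided by missions initiated (each one is an attempt)."""
    if not missions:
        raise ValueError("no missions recorded")
    return sum(m.succeeded() for m in missions) / len(missions)


# HYPOTHETICAL mission mix.
clean = MissionRecord(True, True, True, True, True, False, False)
took_over = MissionRecord(True, True, True, True, True, True, False)  # operator intervened
missed = MissionRecord(False, True, True, True, False, False, False)  # never reached target
missions = [clean] * 8 + [took_over, missed]
print(f"navigation TBA = {navigation_tba(missions):.3f}")
```

Under this definition, the mission that reached its target but needed an operator still counts as a miss, which is exactly what distinguishes autonomy from mere task completion.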

Case Study: AI-Powered Imaging Systems for Aerial Surveillance

Drones equipped with advanced cameras and AI for aerial surveillance or inspection represent another prime area for applying a “Tech Batting Average,” especially concerning the accuracy of their imaging and analytical capabilities.

- Definition: Here, the TBA would measure the accuracy and reliability of an AI-powered imaging system in correctly identifying specific objects, anomalies, or features from aerial footage. This could be framed as the percentage of correct identifications versus the total number of objects or anomalies the system was tasked to find.

- Success Criteria: A “successful outcome” might involve:

  - Accurately identifying a specific type of vehicle in a parking lot.

  - Correctly detecting a defect on a wind turbine blade.

  - Precisely classifying crop health in an agricultural field.

  - Minimizing false positives (identifying something that isn’t there) and false negatives (missing something that is there).

- Attempts: Each instance where the AI system processes an image or video segment with the opportunity to make an identification constitutes an “attempt.”

- Impact: A high “imaging batting average” (e.g., 0.985 for defect detection) is vital for industries like infrastructure inspection, agriculture, and security, where misidentification or missed anomalies can lead to costly oversights or security breaches. This metric directly informs the trustworthiness and operational efficacy of vision-based AI.
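For object and defect detection, deciding whether an identification was “correct” usually means matching predicted bounding boxes to ground-truth boxes. A common convention is to count a match when Intersection over Union (IoU) reaches 0.5. The sketch below applies that threshold with greedy matching. It assumes detections are already sorted by confidence and that boxes are `(x_min, y_min, x_max, y_max)` tuples. The threshold, the box format, and the example boxes are assumptions rather than details from the text.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over Union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def match_detections(
    truth: List[Box], detections: List[Box], threshold: float = 0.5
) -> Tuple[int, int, int]:
    """Greedily pair each detection with its best unmatched ground-truth box.

    Assumes `detections` is sorted by confidence, highest first.
    Returns (true_positives, false_positives, false_negatives).
    """
    unmatched = list(range(len(truth)))
    tp = fp = 0
    for det in detections:
        best, best_iou = None, threshold
        for i in unmatched:
            score = iou(det, truth[i])
            if score >= best_iou:
                best, best_iou = i, score
        if best is None:
            fp += 1  # nothing real overlaps enough: a false positive
        else:
            unmatched.remove(best)  # each real defect can be claimed once
            tp += 1
    return tp, fp, len(unmatched)  # leftover ground truth = missed defects


# HYPOTHETICAL frame: three real defects, three detections.
truth = [(0, 0, 10, 10), (20, 20, 30, 30), (40, 40, 50, 50)]
detections = [(1, 1, 11, 11), (21, 19, 31, 29), (60, 60, 70, 70)]
tp, fp, fn = match_detections(truth, detections)
print(f"TP={tp} FP={fp} FN={fn}, detection TBA (recall)={tp / (tp + fn):.3f}")
```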

Case Study: FPV Racing and Performance Optimization

Even in the high-octane world of FPV (First-Person View) drone racing, a “batting average” concept can be instrumental for pilot training, drone design, and performance optimization.

- Definition: For an FPV racing pilot or a specific drone configuration, a “racing batting average” could represent the percentage of successful gate passes, lap completions, or even race finishes without a crash or penalty, out of total attempts.

- Success Criteria: “Successful outcomes” could be:

  - Navigating a specific gate cleanly without touching it.

  - Completing an entire race track lap within a target time without crashing.

  - Finishing a multi-lap race without significant errors or disqualifications.

- Attempts: Each gate approach, each lap started, or each race entered can be an “attempt.”

- Impact: Pilots can use their gate-passing TBA to identify weaknesses in their flying technique. Drone engineers can use a “configuration TBA” (e.g., for a specific propeller type or motor setting) to evaluate which setups yield more consistent, error-free flight, directly impacting competitive performance and pushing the boundaries of drone design for speed and agility. This allows for data-driven iteration and continuous improvement in a highly competitive environment.
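When comparing configuration TBAs, the number of attempts behind each figure matters. A .920 from 50 gate passes is much less certain than a .900 from 200. One standard way to show this, swapped in here as an illustration rather than drawn from the text, is the Wilson score interval for a proportion. The configuration names and gate counts below are hypothetical, and z = 1.96 corresponds to an approximate 95% interval.

```python
import math


def wilson_interval(successes: int, attempts: int, z: float = 1.96):
    """Approximate Wilson score interval for a success proportion (z=1.96 ~ 95%)."""
    if attempts <= 0:
        raise ValueError("attempts must be positive")
    p = successes / attempts
    denom = 1 + z * z / attempts
    centre = (p + z * z / (2 * attempts)) / denom
    half = z * math.sqrt(p * (1 - p) / attempts + z * z / (4 * attempts * attempts)) / denom
    return centre - half, centre + half


# HYPOTHETICAL clean-gate counts for two propeller configurations.
configs = {"prop_A": (46, 50), "prop_B": (180, 200)}
for name, (clean, attempts) in configs.items():
    lo, hi = wilson_interval(clean, attempts)
    print(f"{name}: TBA={clean / attempts:.3f} over {attempts} gates, interval [{lo:.3f}, {hi:.3f}]")
```

Here the two intervals overlap substantially, which suggests that more gate attempts are needed before declaring one configuration more consistent than the other.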

These case studies highlight how the “Tech Batting Average” serves as a versatile and intuitive metric across diverse applications within drone and flight technologies, providing clarity on performance and guiding the path to innovation.

Conclusion

The term “batting average,” traditionally rooted in baseball, offers a remarkably potent and intuitive metaphor for measuring success and reliability in the complex landscape of Tech & Innovation. While its literal definition applies to sports, its conceptual framework—the ratio of successful outcomes to total attempts—is universally applicable.

By adopting a “Tech Batting Average,” organizations can gain clear, actionable insights into the performance of autonomous systems, the accuracy of AI models, the efficiency of development processes, and the reliability of hardware. This metric serves as a foundational KPI, simplifying complex technical achievements into an easily understandable figure that informs strategic decisions, fosters effective communication, and helps benchmark progress.

However, just as baseball analytics have evolved beyond simple averages, the tech industry must also embrace more nuanced and holistic KPIs. While the “Tech Batting Average” provides a crucial snapshot, a comprehensive understanding requires supplementary metrics that account for impact, resource efficiency, severity of failure, and the invaluable lessons learned from every attempt. By combining the clarity of a “batting average” with these advanced analytical tools, the world of Tech & Innovation can ensure it is not just hitting for average, but consistently driving towards truly transformative and impactful breakthroughs.