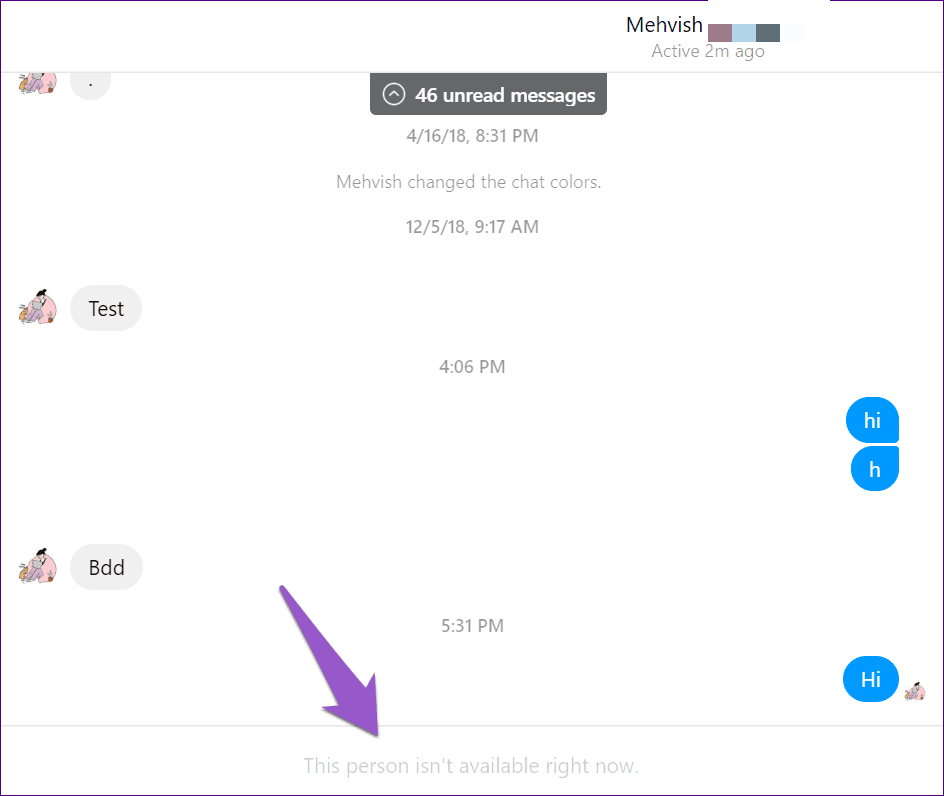

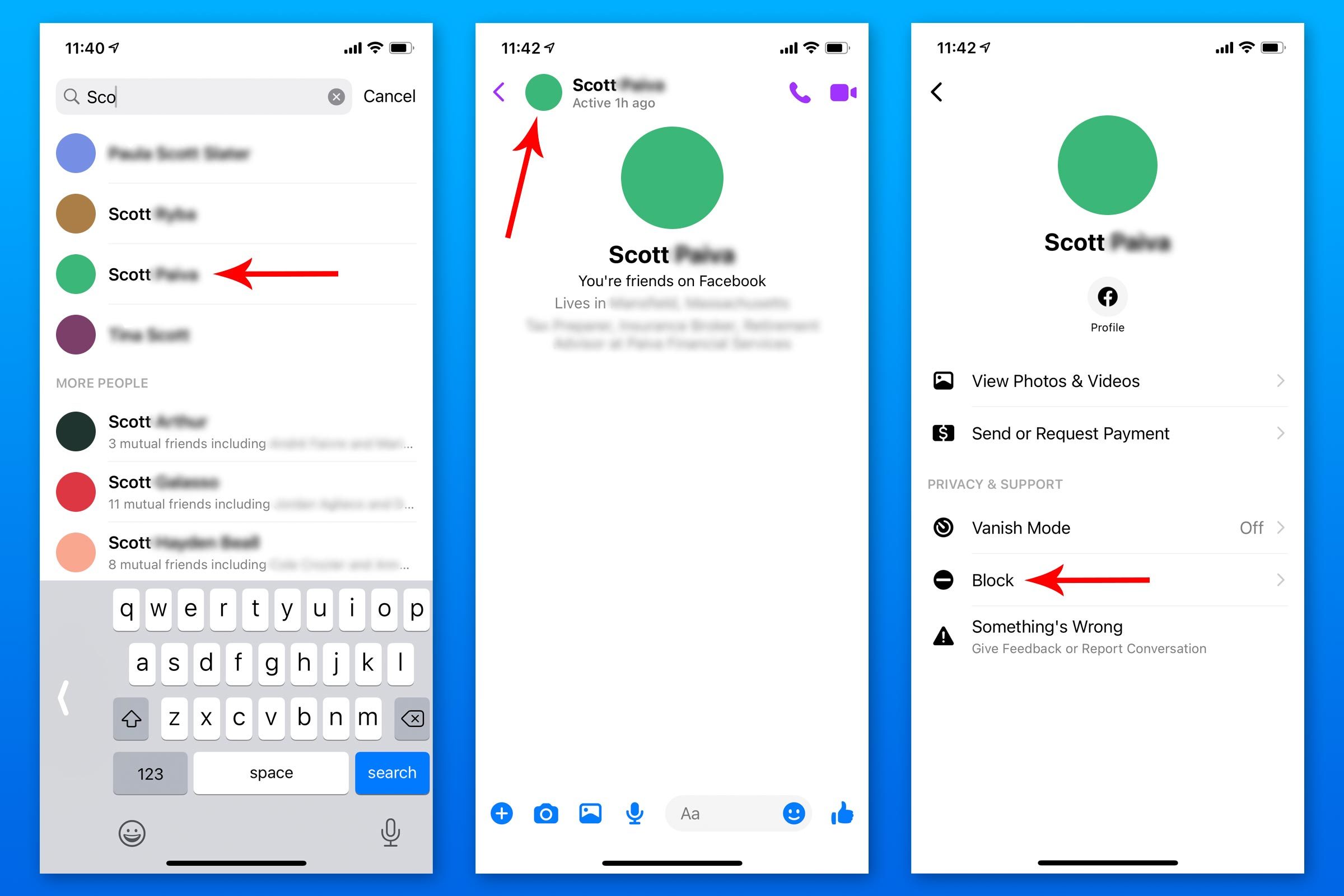

“Blocking” in the digital age is most commonly associated with social media platforms, where it serves as a personal control mechanism to limit interaction and maintain digital boundaries. Beneath this familiar user interface, however, lies a principle that underpins much of modern technology: the deliberate exclusion or restriction of data, access, or interaction. In autonomous systems, advanced sensor networks, and AI-driven platforms, “blocking” takes on a more intricate and critical role. It is not merely about severing a connection; it is about defining operational parameters, ensuring security, enhancing efficiency, and safeguarding privacy. This article examines what “happens when you block someone” in the context of these technologies, covering the technical analogs of blocking and their implications across several domains.

The Digital Gates: Filtering Unwanted Interactions in Autonomous Systems

In the complex landscape of autonomous operations, from drone navigation to smart city management, the ability to control incoming information and define permissible boundaries is paramount. Just as a social media user blocks an unwanted account, technological systems employ sophisticated “blocking” mechanisms to filter out irrelevant data, enforce operational limits, and protect sensitive zones. These digital gates are fundamental to the reliability and safety of advanced tech.

Data Segregation and Privacy Protocols

Autonomous systems, especially those involved in mapping, remote sensing, and aerial surveillance, constantly collect vast amounts of data. Not all of this data is relevant to every operation, nor is all of it permissible for general access. Data segregation protocols act as sophisticated “blockers,” ensuring that sensitive information—whether personal identifiers, classified locations, or proprietary operational details—is isolated and protected.

Consider a drone equipped with high-resolution cameras for aerial mapping. While its primary function is to capture geographical data, it might inadvertently record identifiable human activity or license plates. Privacy protocols built into the drone’s software or cloud processing backend can automatically “block” or redact these sensitive elements by blurring, anonymizing, or completely omitting them from the final dataset. This supports compliance with data protection regulations (like GDPR) and upholds ethical standards. Furthermore, in scenarios involving multi-layered access, data streams are often compartmentalized. A general operator might only see aggregated mapping data, while a specialist might have access to finer details, which effectively “blocks” the general operator from information they are not authorized to see. This principle extends to IoT networks, where different nodes or users are “blocked” from accessing data streams not pertinent to their specific function, thereby reducing the attack surface and helping to maintain data integrity.
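The role-based compartmentalization described above can be sketched as a simple field filter. This is a minimal illustration, not a real privacy pipeline: the role names, field names, and the `VISIBLE_FIELDS` policy table are all hypothetical.

```python
# Hypothetical policy: which record fields each role may see.
VISIBLE_FIELDS = {
    "general_operator": {"tile_id", "elevation_m"},
    "specialist": {"tile_id", "elevation_m", "license_plates", "faces_detected"},
}


def redact(record: dict, role: str) -> dict:
    """Return a copy of the record containing only fields the role may see."""
    allowed = VISIBLE_FIELDS.get(role, set())  # unknown roles see nothing
    return {key: value for key, value in record.items() if key in allowed}


# Illustrative record from a mapping flight (made-up values).
record = {
    "tile_id": "A7",
    "elevation_m": 112.4,
    "license_plates": ["ABC123"],
    "faces_detected": 2,
}

print(redact(record, "general_operator"))
print(redact(record, "specialist"))
```

The filter is deny-by-default: a role that is missing from the policy table receives an empty record rather than the full one.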

Exclusion Zones and Geo-fencing

Perhaps the most direct physical analogy to “blocking” in autonomous systems is the concept of exclusion zones, often implemented through geo-fencing. For drones and other unmanned aerial vehicles (UAVs), this technology defines virtual perimeters that the system is programmed not to enter or exit. When a drone approaches an established geo-fence, its internal navigation system “blocks” further movement in that direction, initiating a hover, a return-to-home sequence, or a course correction.

These exclusion zones are critical for safety and security. They prevent drones from flying into restricted airspace (e.g., near airports, military bases, or critical infrastructure), over private property without permission, or into areas deemed too dangerous for flight. For instance, temporary geo-fences can be established around disaster sites to prevent interference with emergency services. Similarly, in autonomous ground vehicles, geo-fencing can restrict movement to designated routes or prevent entry into pedestrian-only areas. The “block” here is a proactive safety measure, preventing collisions, protecting sensitive locations, and ensuring public safety by preemptively stopping an autonomous agent from entering a forbidden “space.”
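At its core, a geo-fence check is a point-in-polygon test applied to the next waypoint before the vehicle moves. The sketch below uses the standard ray-casting method on flat 2-D coordinates; the function names and the returned action strings are illustrative assumptions, and a real flight controller would also handle altitude, map projection, and safety margins.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) in a flat local coordinate frame


def inside_polygon(p: Point, polygon: List[Point]) -> bool:
    """Ray casting: count polygon edges crossed by a horizontal ray from p."""
    x, y = p
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the ray's y-level can be crossed.
        if (y1 > y) != (y2 > y):
            # x-coordinate where the edge meets that y-level
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def check_waypoint(target: Point, no_fly_zone: List[Point]) -> str:
    """Refuse a waypoint that lies inside the exclusion zone."""
    return "hold_position" if inside_polygon(target, no_fly_zone) else "proceed"


# Hypothetical square exclusion zone, 10 units on a side.
zone = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0), (0.0, 10.0)]
print(check_waypoint((5.0, 5.0), zone))   # target inside the zone
print(check_waypoint((15.0, 5.0), zone))  # target outside the zone
```

Checking the target rather than the current position is what makes the block proactive: the vehicle is stopped before it crosses the perimeter, not after.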

Shielding the System: Cybersecurity and Threat Prevention

The integrity and security of autonomous systems are constantly under threat from malicious actors. In this domain, “blocking” mechanisms are the digital fortresses that defend against cyberattacks, unauthorized access, and data breaches. They are essential for maintaining trust and operational continuity in a connected world.

Safeguarding Against Malicious Interference

Autonomous drones, AI-powered systems, and networked sensors are exposed to many forms of cyberattack, from denial-of-service (DoS) attacks that flood systems with traffic to sophisticated malware designed to hijack control or exfiltrate data. Cybersecurity protocols act as powerful “blockers” against such threats. Firewalls, intrusion detection and prevention systems (IDPS), and network access control (NAC) are designed to identify and “block” suspicious data packets, unauthorized connection attempts, or known malicious software signatures.

For a drone, this could mean blocking a rogue command signal attempting to override its flight path, or identifying and neutralizing a piece of malware trying to compromise its onboard telemetry. In AI systems, it involves robust input validation and anomaly detection to “block” poisoned data intended to corrupt training models or manipulate decision-making processes. The “block” in this context is a defensive action, isolating and neutralizing threats before they can cause significant damage, much like blocking a malicious user from interacting with your social media profile prevents further harm.
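One common way to block a rogue command signal is to require every command to carry a message authentication code and to reject anything that fails verification. The sketch below uses Python’s standard `hmac` and `hashlib` modules; the shared key, command strings, and function names are hypothetical, and real systems add key management and replay protection (for example, a counter or timestamp inside the signed message), which this example omits.

```python
import hashlib
import hmac

# Hypothetical shared secret; real keys belong in secure storage, not source code.
SHARED_KEY = b"demo-key-not-for-production"


def sign(command: bytes, key: bytes = SHARED_KEY) -> bytes:
    """Compute an HMAC-SHA256 tag for a command."""
    return hmac.new(key, command, hashlib.sha256).digest()


def accept_command(command: bytes, tag: bytes, key: bytes = SHARED_KEY) -> bool:
    """Accept a command only if its tag verifies (constant-time comparison)."""
    return hmac.compare_digest(sign(command, key), tag)


genuine_tag = sign(b"RETURN_TO_HOME")
print(accept_command(b"RETURN_TO_HOME", genuine_tag))  # tag matches the command
print(accept_command(b"LAND_NOW", genuine_tag))        # tag was made for another command
```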

Authentication and Access Control

Just as blocking someone on social media prevents them from viewing your private posts, authentication and access control mechanisms in technology “block” unverified entities from interacting with critical systems or sensitive data. Strong authentication, often involving multi-factor verification, ensures that only authorized individuals or other verified systems can gain access. Once authenticated, access control policies define what those entities can do.

In a drone operation center, for instance, a flight controller might be authenticated to command a drone, but “blocked” from accessing its financial billing records. Similarly, an AI development team member might have access to model training data, but be “blocked” from deploying changes to a live production system without additional layers of approval. This hierarchical “blocking” of access ensures that the principle of least privilege is upheld, minimizing the potential for internal misuse or external compromise if an authorized account is breached. Certificates, encryption keys, and digital signatures all play a role in establishing and enforcing these “blocks,” ensuring that only trusted parties can initiate commands or retrieve information from autonomous systems and their vast data repositories.
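The principle of least privilege in the examples above amounts to a deny-by-default permission lookup. The following is a minimal sketch; the role names and the `resource:action` permission strings are invented for illustration and do not correspond to any particular access-control product.

```python
# Hypothetical role-to-permission table.
ROLE_PERMISSIONS = {
    "flight_controller": {"drone:command", "drone:telemetry:read"},
    "billing_clerk": {"billing:read"},
    "ml_engineer": {"training_data:read"},
}


def is_allowed(role: str, action: str) -> bool:
    """Deny by default: permit an action only if it is explicitly granted."""
    return action in ROLE_PERMISSIONS.get(role, frozenset())


print(is_allowed("flight_controller", "drone:command"))  # explicitly granted
print(is_allowed("flight_controller", "billing:read"))   # never granted, so blocked
print(is_allowed("ml_engineer", "production:deploy"))    # needs a separate approval path
```

Because nothing is permitted unless listed, a compromised `flight_controller` account in this sketch still cannot read billing data.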

Optimizing Performance: Enhancing Efficiency Through Exclusion

Beyond security and control, the principle of “blocking” is also a critical tool for enhancing the efficiency, accuracy, and overall performance of technological systems. By strategically excluding noise, irrelevant data, or inefficient pathways, systems can operate more cleanly, make better decisions, and achieve their objectives more effectively.

Noise Reduction and Signal Filtering

In camera systems, sensor technology, and communication networks, raw data is often accompanied by “noise”—unwanted signals, interference, or random fluctuations that can obscure true information. Signal filtering mechanisms act as sophisticated “blockers” of this noise. For instance, in an FPV (First Person View) drone system, electromagnetic interference from motors or power lines can degrade the video feed. Integrated filters “block” these noisy frequencies, resulting in a clearer, more stable image for the pilot.

Similarly, in remote sensing for mapping, various atmospheric conditions or sensor limitations can introduce inaccuracies. Advanced algorithms apply filters to “block” these erroneous data points, thereby improving the precision and reliability of the generated maps. In thermal imaging cameras, noise reduction techniques “block” thermal fluctuations not directly related to the target, providing a sharper, more accurate temperature reading. This strategic “blocking” of interference is fundamental to extracting valuable insights from complex data streams, allowing the “signal” to emerge clearly.
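A median filter is one of the simplest ways to “block” isolated spikes in a sensor stream. The sketch below is a plain-Python illustration with made-up temperature readings; it is not taken from any specific camera or drone firmware, and the window size is an arbitrary choice.

```python
from statistics import median
from typing import List, Sequence


def median_filter(samples: Sequence[float], window: int = 3) -> List[float]:
    """Replace each sample with the median of its neighbourhood.

    The window shrinks at the edges so the output has the same length
    as the input.
    """
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd number")
    half = window // 2
    filtered = []
    for i in range(len(samples)):
        lo = max(0, i - half)
        hi = min(len(samples), i + half + 1)
        filtered.append(median(samples[lo:hi]))
    return filtered


# Illustrative readings with one spurious spike at index 2.
readings = [20.0, 20.1, 95.0, 20.2, 20.1]
smoothed = median_filter(readings)
print(smoothed)
```

Unlike a moving average, the median does not smear the spike into neighbouring samples; the outlier is simply discarded as long as it is shorter than half the window.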

AI Learning and Negative Feedback

In Artificial Intelligence, and machine learning in particular, a loose analog of “blocking” shapes how models learn to make accurate predictions and decisions. Negative feedback tells a model what not to do or what not to recognize. When a model makes an incorrect prediction, the resulting error signal adjusts its parameters so that the incorrect association becomes less likely, in effect “blocking” it from being reinforced.

For example, when training an AI to identify objects in aerial footage (e.g., distinguishing a car from a bush), a bush that the model labels as a car counts as an error against the human-provided label. This negative feedback does not forbid the mistake outright; it nudges the model’s internal parameters so that the mistake becomes less likely, and repeated corrections teach the model to differentiate between the two. In reinforcement learning, an autonomous agent (like a drone learning to navigate an obstacle course) receives “negative rewards” for colliding with obstacles. These negative rewards act as soft “blocks,” teaching the agent to avoid those actions in the future and discover better, collision-free paths. This iterative process of discouraging incorrect or undesirable behaviors is what refines AI models, making them more efficient and reliable in tasks such as autonomous flight control or object recognition.
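The effect of negative rewards can be shown with a deliberately tiny example: a single decision with two actions and a one-step value update. This is the simplest case of reward-driven learning, not a full reinforcement learning algorithm, and the action names, reward values, and learning rate are all assumptions made for illustration.

```python
def train(episodes: int = 50, alpha: float = 0.5) -> dict:
    """Learn action values from rewards using an incremental average."""
    values = {"fly_into_wall": 0.0, "steer_around": 0.0}
    # Hypothetical rewards: collisions are penalised, clean passes rewarded.
    reward = {"fly_into_wall": -1.0, "steer_around": 1.0}
    for _ in range(episodes):
        # Try every action each episode (no randomness, to keep the sketch clear).
        for action in values:
            values[action] += alpha * (reward[action] - values[action])
    return values


values = train()
best_action = max(values, key=values.get)
print(values)
print(best_action)
```

The penalised action is never removed from the agent’s options. Its estimated value simply falls below the alternative, so a value-maximizing agent stops choosing it.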

Ethical Considerations and Systemic Biases in “Blocking” Mechanisms

While “blocking” in tech is crucial for security and efficiency, its implementation is not without ethical considerations and potential pitfalls. Just as a biased block on social media can lead to echo chambers, biased or opaque “blocking” mechanisms in autonomous systems can have significant, sometimes detrimental, consequences.

Unintended Consequences of Exclusion

The strategic “blocking” of certain data, access, or interactions can inadvertently lead to unintended consequences, especially when the underlying algorithms are designed with biases or incomplete understanding of real-world complexities. For instance, an autonomous facial recognition system designed to “block” access to sensitive areas might be less accurate for certain demographic groups if its training data was biased, effectively “blocking” legitimate access for some while allowing others. Similarly, an AI-powered content moderation system, intended to “block” harmful content, might inadvertently suppress legitimate discourse if its rules are too broad or culturally insensitive.
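One way to surface the kind of bias described above is to compare how often legitimate users are wrongly blocked in each group. The sketch below computes a per-group false-block rate; the group labels and the sample decisions are synthetic and exist only to show the calculation.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Each decision is (group, was_authorized, was_blocked).
Decision = Tuple[str, bool, bool]


def false_block_rate_by_group(decisions: Iterable[Decision]) -> Dict[str, float]:
    """Share of authorized people in each group who were blocked anyway."""
    blocked = defaultdict(int)
    total = defaultdict(int)
    for group, was_authorized, was_blocked in decisions:
        if was_authorized:  # only legitimate users can be falsely blocked
            total[group] += 1
            blocked[group] += int(was_blocked)
    return {group: blocked[group] / total[group] for group in total}


# Synthetic sample data for illustration only.
sample = [
    ("group_a", True, False), ("group_a", True, False),
    ("group_a", True, True), ("group_a", True, False),
    ("group_b", True, True), ("group_b", True, True),
    ("group_b", True, False), ("group_b", True, False),
    ("group_b", False, True),  # correctly blocked, so not counted
]

rates = false_block_rate_by_group(sample)
print(rates)
```

A large gap between groups is a signal to re-examine the training data and thresholds before the system is trusted to gate access.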

In drone operations, an overly aggressive geo-fencing policy could inadvertently “block” critical public services, like emergency medical deliveries, from reaching remote areas. The consequence of such “blocking” is not just inconvenience but can be a denial of essential services or the perpetuation of systemic biases present in the data or the design choices. Understanding these unintended consequences is vital for responsible technological development.

Transparency and Accountability in Automated “Blocks”

When an autonomous system makes a decision to “block” something, be it data, a user, or a physical action, the reasoning behind that block is paramount. Lack of transparency in these automated “blocking” mechanisms can erode trust and make accountability difficult. If an AI system rejects a loan application or overrides a medical diagnosis, the affected individual deserves to know why.

For critical autonomous systems, such as those involved in public safety or national security, the ability to audit and understand every “block” decision is non-negotiable. This necessitates explainable AI (XAI) frameworks that can articulate the factors leading to a specific exclusion. Furthermore, clear human oversight and intervention protocols are essential to override or adjust automated “blocks” when necessary, preventing algorithmic mistakes from causing irreversible harm. Establishing accountability means not only understanding what was blocked but who is responsible for the design, deployment, and monitoring of these “blocking” rules. Without transparency and accountability, the power of automated “blocking” risks becoming a black box that could disproportionately affect individuals or groups, mimicking the worst aspects of unchecked power.
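Auditability and human override can be made concrete with a log that records why each block happened and who lifted it. This is a minimal sketch under stated assumptions: the class names, fields, and sample identifiers are hypothetical, and a production system would also need tamper-resistant storage and reviewer authentication.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class BlockDecision:
    subject: str   # what was blocked (user, command, data stream)
    rule_id: str   # which rule fired
    reason: str    # human-readable explanation
    decided_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
    overridden_by: Optional[str] = None  # reviewer who lifted the block, if any


class AuditLog:
    def __init__(self) -> None:
        self._entries: List[BlockDecision] = []

    def record(self, subject: str, rule_id: str, reason: str) -> BlockDecision:
        decision = BlockDecision(subject, rule_id, reason)
        self._entries.append(decision)
        return decision

    def override(self, decision: BlockDecision, reviewer: str) -> None:
        """Lift a block while keeping the original entry for later audit."""
        decision.overridden_by = reviewer

    def active_blocks(self) -> List[BlockDecision]:
        return [d for d in self._entries if d.overridden_by is None]


log = AuditLog()
decision = log.record(
    "delivery-drone-12", "geofence-hospital", "waypoint inside restricted zone"
)
log.override(decision, reviewer="duty-officer")
print(len(log.active_blocks()))
```

Overriding does not delete the entry, so the record of what was blocked, why, and who reversed it stays available for review.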

Conclusion

The seemingly simple act of “blocking someone” on a social platform metamorphoses into a multi-faceted and critical set of operations within the intricate world of Tech & Innovation. From meticulously segmenting data in cloud infrastructure and enforcing no-fly zones for UAVs, to safeguarding against sophisticated cyber threats and refining the learning processes of advanced AI, the principle of exclusion, restriction, and filtering is foundational. It empowers autonomous systems to operate safely, securely, and efficiently, allowing them to navigate complex environments, protect sensitive information, and make informed decisions by effectively “blocking” noise, threats, and undesirable actions.

However, the power of these technical “blocks” comes with significant responsibility. As we embed more of these automated gatekeepers into our technological fabric, ethical considerations surrounding fairness, transparency, and accountability become paramount. Understanding the nuances of what happens when you block someone in these advanced technological contexts is not just about appreciating the engineering marvels, but also about ensuring that these powerful tools serve humanity wisely, preventing unintended exclusions and fostering a more equitable and secure digital future. The digital gates are open for innovation, but they must be built and managed with precision, foresight, and a profound sense of responsibility.