In autonomous systems and drone technology, the notion of a “friend” or a “social network” might seem out of place, since both are rooted in human interaction and digital platforms. Yet modern flight technology is itself an interconnected web of sensors, data streams, algorithmic modules, and collaborative agents, and the metaphor of “unfriending someone on Facebook” offers a useful lens for examining critical system disconnections and their repercussions. Here, “Facebook” represents the integrated operational environment or data network an AI inhabits, and “someone” signifies a vital data source, an algorithmic module, a specific sensor, or even another AI agent within a multi-drone swarm. “Unfriending” then translates into the deliberate or automatic severing of a connection, the rejection of a data stream, or the isolation of an entity within this technical ecosystem. Understanding these metaphorical “unfriend” events is crucial for designing robust, resilient autonomous systems.

The Algorithmic Social Graph: Redefining “Friends” in AI Contexts

For an autonomous drone, every piece of data it receives, every sensor it trusts, and every computational module it relies upon can be considered a “friend” in its operational social graph. These are not relationships born of emotion, but of functional dependency, data fidelity, and algorithmic trust. An AI’s ability to execute complex tasks, from autonomous navigation to object recognition and predictive flight, hinges on the integrity and constant availability of these “friends.”

Defining “Friend” in AI Contexts

In the realm of artificial intelligence and robotics, a “friend” to a drone’s core AI might manifest in several forms:

- Sensor Nodes: A GPS module providing positional data, an IMU (Inertial Measurement Unit) tracking orientation, or a lidar system mapping obstacles. Each sensor is a data-providing entity, crucial for the drone’s perception of its environment.

- Data Streams: Continuous feeds of information, such as real-time weather updates, terrain maps, or telemetry from other drones in a swarm. These streams are the lifeblood of situational awareness.

- Algorithmic Modules: Specialized software components responsible for specific functions like object tracking (e.g., AI Follow Mode), path planning, image processing, or threat assessment. The core AI “trusts” these modules to perform their tasks accurately.

- External Agents: In multi-drone operations or human-on-the-loop scenarios, this could be another autonomous drone sharing its intent and data, a ground control station, or even a human operator providing high-level commands.

These “friends” are interconnected within what we might metaphorically call the drone’s “Facebook”—a sophisticated, dynamic network of dependencies and communication pathways. The “friendship” is defined by active data exchange, mutual reliability, and contribution to shared operational goals. A high trust score, consistent data quality, and predictable behavior are the hallmarks of a good “friend” in this algorithmic social graph.

“Facebook” as the Operational Network

Extending the metaphor, “Facebook” represents the comprehensive, interconnected operational environment an autonomous system inhabits. This isn’t a social media platform but a highly structured, often distributed network where all its “friends” (sensors, data, modules, agents) communicate, share information, and influence decision-making. This environment encompasses:

- Onboard Systems Architecture: The internal network connecting the drone’s flight controller, various sensors, processing units, and communication modules.

- Distributed Sensor Networks: A broader network where the drone might integrate data from static ground sensors, satellites, or other aerial platforms.

- Multi-Agent Swarm Communication: The mesh network or centralized hub facilitating communication and coordination among multiple drones in a collaborative mission.

- AI’s Internal Knowledge Graph: A semantic web of information, rules, and learned patterns that the AI uses to understand its world and make decisions.

Within this “Facebook,” the AI constantly evaluates the status, reliability, and relevance of its “friends.” A robust “friendship” ensures seamless operation, informed decision-making, and mission success.

Algorithmic Disengagement: The Act of “Unfriending”

The concept of “unfriending” in this technical context refers to the deliberate or automated process by which an autonomous system severs a functional connection or deprioritizes an entity within its operational network. This is not an emotional act but a strategic decision based on real-time data, system integrity, and mission objectives.

Triggers for Disconnection

Autonomous systems are designed to adapt and maintain optimal performance, even in challenging environments. An “unfriend” event is typically triggered by:

- Sensor Malfunction or Anomaly Detection: A sensor (e.g., a camera providing blurry images, a GPS module sending erratic coordinates) is detected as faulty or compromised. Continued reliance on its data would degrade performance or endanger the mission.

- Outdated or Conflicting Data Streams: If a data source provides information that is no longer accurate or contradicts more reliable sources, the system might “unfriend” it to prevent erroneous decision-making. For instance, a stale map data stream may conflict with real-time lidar scans of the same area.

- Security Threats or Compromised Nodes: In networked operations, if an external agent or an internal module is identified as compromised or exhibiting malicious behavior, it must be isolated immediately to protect the entire system.

- Optimization and Redundancy: The system might “unfriend” a less efficient or redundant data source when a superior alternative becomes available or if resource allocation dictates minimizing unnecessary connections.

- Dynamic Mission Re-prioritization: If mission parameters change, certain data streams or agents that were previously critical might become irrelevant, leading to their deprioritization or “unfriending” to free up processing power and communication bandwidth.
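
The first trigger above, a GPS module sending erratic coordinates, can be sketched as a simple plausibility check. This is a minimal illustration rather than a production fault detector: the class name `JumpDetector`, the speed limit, and the three-strike rule are all assumptions introduced here. It flags a position source once several consecutive fixes imply a speed the airframe could not plausibly reach.

```python
import math


class JumpDetector:
    """Flags a position source whose consecutive fixes imply an implausible speed.

    Hypothetical helper for illustration; thresholds are assumed, not measured.
    """

    def __init__(self, max_speed_mps: float, strikes_to_unfriend: int = 3):
        self.max_speed_mps = max_speed_mps
        self.strikes_to_unfriend = strikes_to_unfriend
        self.strikes = 0
        self.last = None  # most recent fix as (t, x, y)

    def update(self, t: float, x: float, y: float) -> bool:
        """Feed one fix (seconds, metres, metres); return True once the source should be dropped."""
        if self.last is not None:
            t0, x0, y0 = self.last
            dt = t - t0
            if dt > 0:
                implied_speed = math.hypot(x - x0, y - y0) / dt
                if implied_speed > self.max_speed_mps:
                    self.strikes += 1   # another implausible jump in a row
                else:
                    self.strikes = 0    # a sane fix clears the counter
        self.last = (t, x, y)
        return self.strikes >= self.strikes_to_unfriend


# Usage: a source that keeps jumping hundreds of metres per second is flagged.
detector = JumpDetector(max_speed_mps=20.0)
fixes = [(0.0, 0.0, 0.0), (1.0, 5.0, 0.0), (2.0, 500.0, 0.0),
         (3.0, 10.0, 0.0), (4.0, 600.0, 0.0)]
verdicts = [detector.update(*fix) for fix in fixes]
```

Requiring several strikes in a row is a deliberate choice in this sketch: a single glitch should not cost the system an otherwise healthy sensor.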

The Technical Mechanisms

The actual process of “unfriending” is implemented through various technical mechanisms:

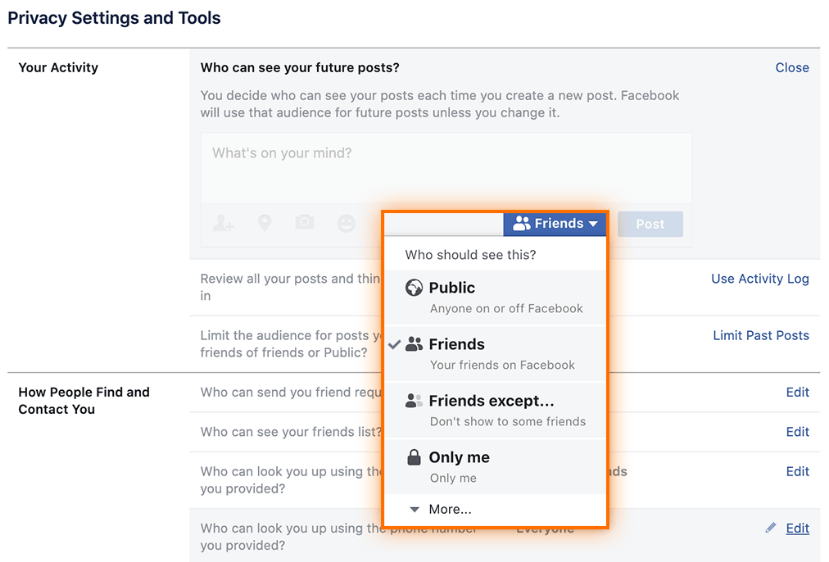

- Trust Score Adjustment: AI algorithms constantly assign trust scores or confidence levels to their data sources and modules. A low score can lead to data being ignored or filtered out.

- Firewalling and Isolation: In cases of security threats, compromised nodes can be programmatically isolated through internal firewalls, preventing data exchange and influence.

- Re-routing Communication Paths: If a communication link (a “friend”) becomes unreliable, the system automatically switches to alternative pathways, effectively “unfriending” the problematic link.

- Module Deactivation: Specific algorithmic modules can be temporarily or permanently deactivated if they are underperforming or no longer required for the current phase of a mission.

- Dynamic Data Fusion Algorithms: These algorithms intelligently weigh and combine data from multiple sources. When one source is “unfriended,” its weight drops to zero, and the system recalculates its understanding based on the remaining “friends.”
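
Trust score adjustment and dynamic data fusion work together, and a small sketch may make the interaction concrete. Everything named here (`Source`, `update_trust`, `fuse`, the gain, penalty, and `min_trust` threshold) is a hypothetical choice for illustration, not a reference to any particular autopilot stack. Trust rises slowly when a source agrees with the consensus and falls sharply when it does not; once it drops below the threshold, the source's weight in the fused estimate becomes zero.

```python
from dataclasses import dataclass


@dataclass
class Source:
    name: str
    value: float  # latest reading, e.g. altitude in metres
    trust: float  # 0.0 (ignored) to 1.0 (fully trusted)


def update_trust(trust: float, agreed: bool, gain: float = 0.1, penalty: float = 0.3) -> float:
    """Raise trust gradually on agreement, cut it multiplicatively on disagreement."""
    if agreed:
        trust = trust + gain * (1.0 - trust)
    else:
        trust = trust * (1.0 - penalty)
    return min(1.0, max(0.0, trust))


def fuse(sources, min_trust: float = 0.2) -> float:
    """Trust-weighted mean; any source below min_trust is 'unfriended' (weight zero)."""
    weights = [s.trust if s.trust >= min_trust else 0.0 for s in sources]
    total = sum(weights)
    if total == 0.0:
        raise ValueError("no trusted sources left to fuse")
    return sum(w * s.value for w, s in zip(weights, sources)) / total


# Usage: the sonar reading is wildly off and already distrusted, so it is ignored.
sources = [
    Source("barometer", 100.0, 0.5),
    Source("gps", 104.0, 0.5),
    Source("sonar", 300.0, 0.125),
]
altitude = fuse(sources)
```

The asymmetric update (slow to earn trust, quick to lose it) is one common design choice; a real system would tune these rates against recorded flight data.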

Immediate and Long-Term Repercussions for Autonomous Operations

The act of “unfriending” an entity within an autonomous system’s operational network carries significant consequences, ranging from immediate operational adjustments to long-term impacts on system intelligence and mission success.

Impact on Real-time Decision-Making

When a critical “friend” (e.g., a primary navigation sensor or a key data stream) is “unfriended,” the most immediate effect is often a degradation in the drone’s real-time decision-making capabilities. The system might experience:

- Loss of Situational Awareness: Without a particular sensor’s input, the drone’s understanding of its environment becomes incomplete, potentially leading to navigation errors, missed obstacles, or incorrect target identification.

- Increased Computational Load: The AI may need to expend more processing power to compensate for the missing data, perhaps by cross-referencing other sources, initiating fallback procedures, or widening the uncertainty estimates in its models.

- Mission Deviation or Failure: In critical scenarios, the loss of a key “friend” could force the drone to abort its mission, return to base, or, in worst-case scenarios, lead to a catastrophic failure if no viable alternatives exist. For example, if the AI Follow Mode “unfriends” the visual tracker of its target due to occlusion, it must immediately switch to predictive algorithms or other sensors to maintain the lock.
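
The Follow Mode example can be illustrated with an alpha-beta filter, a simple tracking technique chosen here for brevity; the source does not say which predictor a real Follow Mode uses. The function name `track_step` and the gain values are assumptions. When the visual tracker reports a position, the filter corrects its estimate; when the target is occluded and the measurement is `None`, it coasts on the last known velocity.

```python
def track_step(state, measurement, dt, alpha=0.5, beta=0.1):
    """Advance an alpha-beta tracker by one time step.

    state:       (x, y, vx, vy) current position and velocity estimate
    measurement: (x, y) from the visual tracker, or None while occluded
    dt:          time step in seconds (must be > 0)
    """
    x, y, vx, vy = state

    # Predict where the target should be under constant velocity.
    px, py = x + vx * dt, y + vy * dt

    if measurement is None:
        # Tracker "unfriended": keep flying on the prediction alone.
        return (px, py, vx, vy)

    # Correct the prediction with the measurement residual.
    rx, ry = measurement[0] - px, measurement[1] - py
    return (
        px + alpha * rx,
        py + alpha * ry,
        vx + (beta / dt) * rx,
        vy + (beta / dt) * ry,
    )


# Usage: two visible frames, then two occluded frames of pure prediction.
state = (0.0, 0.0, 2.0, 0.0)
for z in [(1.0, 0.0), (2.0, 0.0), None, None]:
    state = track_step(state, z, dt=0.5)
```

Coasting is only safe for a short time, since prediction error grows with every frame without a correction. A practical system would cap the number of coasted frames before declaring the lock lost.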

Swarm Dynamics and Collaborative Intelligence

In multi-drone swarm operations, the “unfriending” of an agent (another drone) can profoundly disrupt collaborative intelligence. If one drone in a swarm “unfriends” another due to suspected malfunction or adversarial behavior, several effects can follow:

- Communication Breakdown: The affected drone might be isolated from the shared communication network, losing its ability to contribute to collective decision-making or receive vital commands.

- Loss of Coordination: The swarm’s ability to execute synchronized maneuvers, perform complex data collection patterns, or maintain formation could be compromised, leading to inefficiencies or even collisions.

- Fragmentation of Shared Situational Awareness: Each drone in a swarm builds a portion of the overall environmental map. If a key contributor is “unfriended,” gaps in the shared map emerge, reducing the collective understanding of the mission area.

Data Integrity and Predictive Modeling

Beyond immediate operational impacts, “unfriending” can have significant long-term effects on the data integrity and predictive modeling capabilities of an autonomous system.

- Data Gaps: The ongoing absence of data from an “unfriended” source creates persistent gaps in the AI’s data sets, which can hinder its ability to build complete maps, recognize complex patterns, or perform robust predictive analyses.

- Bias Introduction: If an AI consistently “unfriends” certain types of data or agents, it could inadvertently introduce biases into its learning models, leading to skewed perceptions or suboptimal strategies in future operations.

- Reduced Predictive Accuracy: AI systems rely heavily on historical and real-time data to predict future states or optimal actions. Losing a reliable “friend” means less data for training and inference, potentially diminishing the accuracy of its predictive models for flight paths, object movements, or environmental changes.

Architecting Resilience: Mitigating the Impact of Disengagement

Given the critical nature of these “unfriend” events, the design of autonomous systems places a strong emphasis on resilience—the ability to maintain functionality and mission effectiveness despite disconnections or failures.

Redundancy and Distributed Architectures

The most fundamental strategy for mitigating the impact of an “unfriend” event is redundancy. By having multiple “friends” (i.e., redundant sensors, diverse data sources, and backup communication channels), the system can absorb the shock of losing one connection.

- Sensor Redundancy: Implementing multiple GPS units, IMUs, or visual cameras means if one fails, others can take over, often seamlessly.

- Distributed Data Processing: Spreading data processing and storage across multiple nodes or agents ensures that the failure of one component doesn’t cripple the entire system.

- Mesh Communication Networks: These networks provide multiple pathways for data transfer, ensuring that if one communication link is “unfriended,” data can still reach its destination via an alternative route.
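
The mesh re-routing idea can be shown with a breadth-first search over the link graph. This is a sketch under simplifying assumptions: links are undirected and equally costly, the node names are made up, and `find_route` is a hypothetical helper rather than part of any real mesh protocol. Passing a set of “unfriended” links removes them from consideration, and the search returns the shortest remaining path, or `None` if the destination is cut off.

```python
from collections import deque


def find_route(links, src, dst, unfriended=()):
    """Return the shortest hop path from src to dst, skipping unfriended links.

    links and unfriended are iterables of (node_a, node_b) pairs, treated as undirected.
    """
    blocked = {frozenset(link) for link in unfriended}

    # Build an adjacency map from the links that are still trusted.
    neighbours = {}
    for a, b in links:
        if frozenset((a, b)) in blocked:
            continue
        neighbours.setdefault(a, []).append(b)
        neighbours.setdefault(b, []).append(a)

    queue = deque([[src]])
    seen = {src}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == dst:
            return path
        for nxt in neighbours.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None  # destination unreachable with the remaining links


# Usage: drone A reaches ground station D directly via B, or the long way via C and E.
mesh = [("A", "B"), ("B", "D"), ("A", "C"), ("C", "E"), ("E", "D")]
primary = find_route(mesh, "A", "D")
fallback = find_route(mesh, "A", "D", unfriended=[("B", "D")])
```

In this example the primary route is `A, B, D`; after the `B`-`D` link is dropped, the search falls back to `A, C, E, D`. Real mesh protocols also weigh link quality and latency, which this hop-count sketch ignores.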

Adaptive Learning and Self-Healing Systems

Advanced AI systems incorporate adaptive learning capabilities that enable them to dynamically adjust to “unfriend” events.

- Dynamic Recalibration: Upon losing a data source, the AI can automatically recalibrate its internal models, re-weighing the influence of remaining “friends” and compensating for the loss.

- Proactive Search for Alternatives: An intelligent system might actively search for new “friends” (e.g., activating a secondary sensor, seeking data from a different network node) to fill the void left by a disconnection.

- Predictive Compensation: Using predictive modeling, the AI can temporarily infer missing data based on historical patterns and the inputs from its remaining “friends,” allowing it to continue operating until a full resolution is found.
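
Dynamic recalibration can be made concrete with inverse-variance weighting, a standard way to combine independent estimates; it is used here as an illustrative stand-in, since the text does not name a specific method. The function `fuse_inverse_variance` and the sensor names and variances are assumptions. Each reading is weighted by the reciprocal of its variance, so when a “friend” is removed the remaining weights renormalize automatically and the fused variance grows, reflecting the lost information.

```python
def fuse_inverse_variance(readings):
    """Combine independent estimates by inverse-variance weighting.

    readings: dict mapping source name -> (value, variance), variance > 0
    Returns (fused_value, fused_variance).
    """
    if not readings:
        raise ValueError("at least one reading is required")

    inverse = {name: 1.0 / var for name, (_, var) in readings.items()}
    total = sum(inverse.values())
    value = sum(inverse[name] * readings[name][0] for name in readings) / total
    return value, 1.0 / total


# Usage: position estimate (metres) before and after losing the vision source.
readings = {"gps": (10.0, 4.0), "vision": (12.0, 4.0)}
before = fuse_inverse_variance(readings)

remaining = {name: r for name, r in readings.items() if name != "vision"}
after = fuse_inverse_variance(remaining)
```

With two equally uncertain sources the fused variance is half that of either one; after the disconnection it returns to the single-sensor variance. That increase is a useful signal in its own right, for example as a cue to fly more conservatively.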

Ethical AI and System Audits

While “unfriending” is a technical necessity, it must be governed by clear protocols and transparency, especially as AI systems become more complex and integrated.

- Auditable Disengagement Logs: Every instance of an AI “unfriending” a data source or agent should be logged and auditable, allowing human operators to understand why a connection was severed and whether the decision was sound.

- Purposeful Disconnection: Mechanisms should be in place to ensure that “unfriending” is always purposeful, driven by system integrity, security, or mission optimization, rather than arbitrary or biased algorithmic decisions.

- Prevention of Cascading Failures: Robust system design must prevent a single “unfriend” event from triggering a chain reaction of disconnections that could lead to widespread system failure.
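
Two of these safeguards, auditable logs and cascade prevention, can be combined in one small sketch. The names `DisengagementRecord` and `DisengagementLog`, the record fields, and the rate limit are hypothetical choices made for illustration. Every request to sever a connection is recorded with its reason, and if too many disconnections have been approved within a short window, further requests are refused so that a human operator can review the situation.

```python
import json
import time
from dataclasses import dataclass, asdict


@dataclass
class DisengagementRecord:
    timestamp: float
    source: str
    reason: str
    trust_before: float
    approved: bool


class DisengagementLog:
    """Audit trail with a simple rate limit to stop runaway disconnections."""

    def __init__(self, max_events: int = 3, window_s: float = 10.0):
        self.max_events = max_events
        self.window_s = window_s
        self.records = []

    def request(self, source, reason, trust_before, now=None) -> bool:
        """Log an unfriend request; return True if it may proceed automatically."""
        now = time.time() if now is None else now
        recent = [
            r for r in self.records
            if r.approved and now - r.timestamp <= self.window_s
        ]
        approved = len(recent) < self.max_events
        self.records.append(
            DisengagementRecord(now, source, reason, trust_before, approved)
        )
        return approved

    def to_json(self) -> str:
        """Serialize the full trail, including refused requests, for review."""
        return json.dumps([asdict(r) for r in self.records], indent=2)


# Usage: the third request inside the window is refused and left for an operator.
log = DisengagementLog(max_events=2, window_s=10.0)
decisions = [
    log.request("gps_2", "implausible position jumps", 0.15, now=0.0),
    log.request("camera_left", "persistent blur", 0.18, now=1.0),
    log.request("lidar", "conflicts with fused map", 0.19, now=2.0),
]
```

Refused requests are logged as well, which lets a reviewer see what the system wanted to do and why it was held back.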

In essence, while “unfriending someone on Facebook” carries social implications for humans, for autonomous systems, it represents a critical operational juncture. The ability of advanced drones and AI to intelligently manage these disconnections—to identify unreliable “friends,” sever ties when necessary, and adapt gracefully to their absence—is paramount to their continued evolution and the safe, effective execution of their increasingly complex missions.