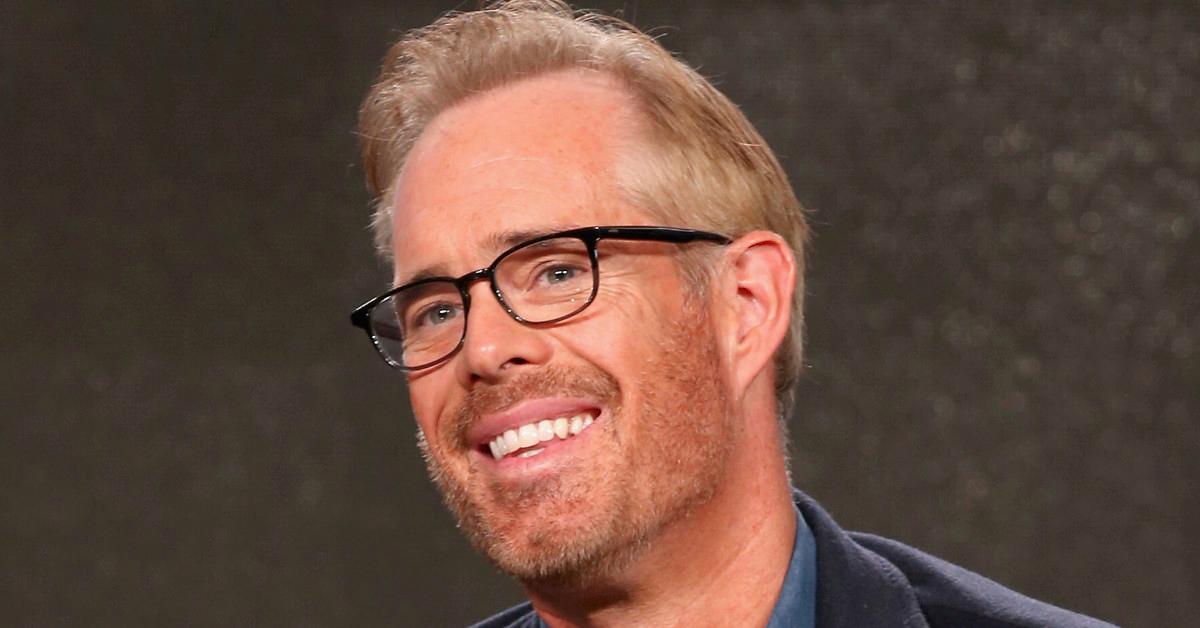

The departure of Joe Buck from his long-standing home at Fox Sports to ESPN marked the end of an era in traditional sports broadcasting. However, the narrative of “what happened” to the industry’s most recognizable voice is inextricably linked to a much larger story: a tectonic shift in the technology and innovation that powers live media. While Buck’s move was a headline-grabbing personnel shift, the underlying reality is that the medium he occupies is being fundamentally redefined by AI follow mode, autonomous flight, and advanced remote sensing.

The transition from the legacy broadcast models of the early 2000s to the high-tech, data-saturated environments of today represents a leap in how we consume live events. We are no longer in an age where a single voice and a few stationary cameras suffice. Instead, the industry has pivoted toward a decentralized, tech-driven approach where innovation dictates the viewer’s experience as much as the commentary does.

The Dawn of AI Follow Mode: Changing the Narrative of Live Sports

The traditional “play-by-play” format, which Joe Buck mastered, is increasingly being supplemented—and in some cases, supplanted—by AI follow mode and computer vision. This technology is the backbone of the “smart” broadcast, allowing for a level of tracking and analysis that was physically impossible for human operators or observers just a decade ago.

Machine Learning and Predictive Play Dynamics

At the heart of the innovation currently sweeping through the broadcasting world is the implementation of neural networks trained to recognize and predict athletic movement. AI follow mode utilizes object detection algorithms—such as YOLO (You Only Look Once) or SSD (Single Shot MultiBox Detector)—to identify players, the ball, and even specific tactical formations in real-time.
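To make the tracking step concrete, here is a minimal sketch of how per-frame detections might be linked into a continuous "follow." It assumes a detector such as YOLO or SSD has already produced bounding boxes as `(x1, y1, x2, y2)` tuples. The names `iou` and `match_detections` and the 0.3 overlap threshold are illustrative assumptions, not part of any particular broadcast system.

```python
def iou(a, b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def match_detections(tracks, detections, min_iou=0.3):
    """Greedily link each known track to the best-overlapping new box.

    tracks: dict of track id -> last known box
    detections: list of boxes from the current frame
    Returns dict of track id -> index into detections; tracks with no
    sufficiently overlapping box are left out.
    """
    pairs = [
        (iou(box, det), tid, i)
        for tid, box in tracks.items()
        for i, det in enumerate(detections)
    ]
    pairs.sort(reverse=True)  # strongest overlaps first
    assigned, used = {}, set()
    for score, tid, i in pairs:
        if score < min_iou:
            break  # everything after this overlaps even less
        if tid in assigned or i in used:
            continue
        assigned[tid] = i
        used.add(i)
    return assigned
```

Production trackers typically add motion prediction and appearance features on top of this kind of overlap matching; the greedy version is only meant to show the basic idea of carrying an identity from one frame to the next.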

What happened to the “standard” broadcast is that it became a data-gathering exercise. These AI systems can maintain a lock on a wide receiver’s route or a soccer ball’s trajectory with a precision limited mainly by camera resolution and frame rate, even when the frame becomes crowded. For commentators like Buck, this means the “narrative” is now backed by near-instantaneous visual proof. The AI doesn’t just follow the action; it responds to context, automatically zooming or adjusting the focal length based on the velocity of the play.
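The velocity-based zoom can be pictured as a simple mapping from on-screen speed to focal length. The sketch below assumes that faster play calls for a wider shot and slower play for a tighter one, and it smooths the result so the lens does not lurch. The focal-length range, the speed ceiling, and the smoothing factor are all hypothetical values chosen for illustration.

```python
def target_focal_length(speed_px_per_s, min_mm=24.0, max_mm=200.0, max_speed=2000.0):
    """Map subject speed to a focal length: fast play -> wide, slow play -> tight."""
    ratio = min(max(speed_px_per_s / max_speed, 0.0), 1.0)  # clamp to [0, 1]
    return max_mm - ratio * (max_mm - min_mm)


def smooth(previous_mm, target_mm, alpha=0.2):
    """Move a fraction of the way toward the target each frame (exponential smoothing)."""
    return previous_mm + alpha * (target_mm - previous_mm)
```

Calling `smooth` once per frame with the latest `target_focal_length` value gives a gradual zoom rather than an abrupt cut between focal lengths.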

Reducing Human Error in High-Stakes Coverage

The move toward AI-driven tracking also addresses the inherent limitations of human reaction time. In the high-velocity world of professional sports, a camera operator might lose a ball in the sun or miss a quick transition. AI follow mode greatly reduces these “blind spots.” By integrating with multi-camera arrays, the system can hand off tracking from one lens to another with little or no visible jitter. This innovation helps ensure that the audience misses fewer moments, automating part of the technical director’s shot selection and allowing the talent to focus on providing deeper insight rather than simply describing what is happening on screen.
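A camera handoff of this kind is often easiest to understand as a rule with hysteresis: stay on the current camera unless another one is clearly better. The following sketch assumes each camera reports a tracking confidence between 0 and 1. The function name `choose_camera` and the 0.15 margin are hypothetical.

```python
def choose_camera(current, scores, margin=0.15):
    """Pick the camera to track from, switching only on a clear improvement.

    current: id of the camera currently holding the track, or None
    scores: dict of camera id -> tracking confidence in [0, 1]
    margin: how much better a rival must be before handing off
    """
    best = max(scores, key=scores.get)
    if current is None or current not in scores:
        return best  # nothing to hold on to, take the best view
    if scores[best] - scores[current] > margin:
        return best  # rival is clearly better, hand off
    return current   # otherwise stay put to avoid flickering between lenses
```

The margin is what prevents rapid back-and-forth switching when two cameras have nearly equal views of the same play.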

Autonomous Flight Systems: Navigating the Stadium Skyline

Perhaps the most visible change in the landscape of professional sports media—and a key component of the new era Joe Buck now finds himself in—is the integration of autonomous flight. Drones are no longer just “novelty” shots used for transitions; they are essential instruments of the broadcast.

The Precision of Programmed Flight Paths

Autonomous flight has revolutionized the “bird’s-eye view.” Unlike manually piloted drones, which depend on a pilot’s stamina and reaction time and on a continuous control link, autonomous systems operate on pre-programmed, high-precision flight paths. These paths are calculated using spatial mapping of the venue so that the drone’s movement stays closely synchronized with the game’s rhythm.

In modern stadium environments, autonomous flight systems use Real-Time Kinematic (RTK) GPS to achieve centimeter-level positioning accuracy. This allows drones to fly safely in tight spaces, such as between stadium tiers or near scoreboard structures, providing angles that were previously restricted due to safety concerns. This innovation provides a “dynamic flow” to the broadcast, where the camera moves with a fluidity that mirrors the action on the field, creating a more immersive experience for the viewer.
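Why positioning accuracy matters for tight spaces can be shown with a small clearance check. The sketch assumes waypoints and obstacle points are expressed in meters in a local stadium coordinate frame, and it pads the required clearance by the expected position error. The function name and the default values are illustrative assumptions, and the check covers only the waypoints themselves, not the segments between them.

```python
import math


def path_is_clear(waypoints, obstacles, clearance_m=1.5, position_error_m=0.02):
    """Return True if every waypoint keeps the required distance from every obstacle.

    The required distance is the safety clearance plus the expected
    positioning error, so a less accurate fix demands a wider berth.
    """
    required = clearance_m + position_error_m
    return all(
        math.dist(w, o) >= required
        for w in waypoints
        for o in obstacles
    )


# A path passing 2 m from a scoreboard corner (hypothetical coordinates).
path = [(0.0, 0.0, 10.0), (5.0, 0.0, 10.0)]
scoreboard = [(5.0, 0.0, 12.0)]

ok_with_rtk = path_is_clear(path, scoreboard, position_error_m=0.02)
ok_with_meter_level_fix = path_is_clear(path, scoreboard, position_error_m=1.0)
```

With a centimeter-level error budget the path passes the check, while the same path fails once the assumed error grows to a full meter, which is the practical reason tight stadium routes depend on RTK-grade positioning.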

Signal Integrity and Redundancy in High-Density Environments

One of the greatest challenges in stadium-based tech is managing the radio frequency (RF) environment. With tens of thousands of fans using cellular data, the potential for signal interference is massive. The industry’s answer has been robust frequency-hopping spread spectrum (FHSS) links combined with redundant autonomous protocols.
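The core idea of frequency hopping is that both ends of the link jump between channels in the same pseudo-random order, so interference on any one channel only affects a brief slice of the transmission. The sketch below illustrates that idea with a shared seed. It is a teaching example only: real FHSS radios use hop sequences defined by their own standards and hardware, and the seed, channel list, and function name here are assumptions.

```python
import random


def hop_sequence(shared_seed, channels, hops):
    """Derive a pseudo-random channel order that both ends can reproduce."""
    rng = random.Random(shared_seed)  # same seed -> same sequence on both sides
    return [rng.choice(channels) for _ in range(hops)]


channels = list(range(16))                      # 16 hypothetical channel indices
drone_side = hop_sequence(42, channels, 50)
ground_side = hop_sequence(42, channels, 50)
```

Because `drone_side` and `ground_side` are generated from the same seed, the two lists are identical, which is what lets the transmitter and receiver land on the same channel at each hop.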

Modern broadcast drones are equipped with edge-computing capabilities that allow them to continue their mission even if the primary command link is momentarily lost. The drone can process its own obstacle avoidance data and maintain its flight path using onboard LiDAR and optical flow sensors. This level of autonomy is what allows networks to confidently deploy aerial assets during a live broadcast, knowing the technology is resilient enough to handle the chaotic RF environment of a sold-out arena.
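The link-loss behavior described above can be sketched as a small decision function that runs onboard each control cycle. The mode names, the two-second hold timeout, and the return-to-home fallback are hypothetical choices for illustration; an actual flight controller would define its own failsafe policy.

```python
def next_mode(link_ok, seconds_since_last_packet, obstacle_ahead, hold_timeout_s=2.0):
    """Decide what the drone should do on this control cycle.

    Obstacle avoidance always wins. With a healthy link the drone follows
    commands; during a brief outage it keeps flying its planned path; after
    a longer outage it falls back to a safe return.
    """
    if obstacle_ahead:
        return "avoid"
    if link_ok:
        return "follow_commands"
    if seconds_since_last_packet <= hold_timeout_s:
        return "continue_path"
    return "return_to_home"
```

Keeping this decision on the drone itself, rather than on the ground station, is what allows the flight to continue through a momentary dropout.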

Remote Sensing and Mapping: The Integration of Real-Time Analytics

While the voice of the announcer provides the “soul” of the game, remote sensing and mapping provide the “brain.” The integration of these technologies has transformed the field of play into a digital twin, where every movement is measured and cataloged.

LiDAR and Photogrammetry in Game Preparation

Before Joe Buck even picks up a microphone, the venue has been meticulously mapped using LiDAR (Light Detection and Ranging) and photogrammetry. These techniques allow networks to create highly accurate 3D models of the stadium. The models serve two purposes: they allow for the calibration of autonomous flight systems, and they provide the framework for advanced augmented reality (AR) overlays.

By using remote sensing to map the exact topography of the turf or the geometry of the stands, broadcasters can “pin” digital information to the physical world. When you see a “first-down line” that stays perfectly in place regardless of the camera angle, or a “stat-tracker” hovering over a player’s head, you are seeing the result of high-resolution spatial mapping. This technology bridges the gap between the physical event and the digital data stream.
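One common way to “pin” a flat field marking to the image is a planar homography, a 3x3 matrix that maps field coordinates to pixel coordinates for a given camera pose. The sketch below assumes such a matrix `H` has already been estimated from the venue mapping and camera calibration, and that field coordinates are in yards. The function names are hypothetical.

```python
def project(H, x, y):
    """Apply a 3x3 homography H (nested lists, row-major) to field point (x, y)."""
    u = H[0][0] * x + H[0][1] * y + H[0][2]
    v = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    return u / w, v / w  # divide out the projective scale


def first_down_line(H, yard_x, field_width=53.33):
    """Return the two pixel endpoints of a line drawn across the field at yard_x."""
    return project(H, yard_x, 0.0), project(H, yard_x, field_width)
```

As the camera pans or zooms, only `H` changes. Recomputing the two endpoints each frame is what keeps the line fixed to the turf rather than to the screen.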

Augmented Reality Driven by Remote Sensors

The “innovation” in this sector is the speed at which data is processed. We have moved from post-game analysis to real-time telemetry. Sensors embedded in player equipment and the ball itself transmit position and motion data many times per second. This information is processed by remote servers and integrated back into the broadcast feed with very low latency.

For the viewer, this means that “what happened” is explained not just by the announcer, but by a suite of visual data. We see the exact speed of a pitch, the launch angle of a home run, or the probability of a catch, all rendered in real-time. This level of remote sensing has elevated the broadcast from a simple viewing experience to an analytical deep dive, satisfying a more sophisticated and tech-savvy audience.
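Figures such as pitch speed and launch angle follow from basic geometry once a tracking system supplies positions over time. The sketch below assumes two consecutive 3D position samples in meters, with the z axis pointing up, and a known time step between them. Real systems fit many samples and filter noise; the function name and coordinates here are illustrative.

```python
import math


def speed_and_launch_angle(p0, p1, dt):
    """Estimate speed (m/s) and launch angle (degrees above horizontal).

    p0, p1: consecutive (x, y, z) positions in meters, z pointing up
    dt: time between the two samples in seconds
    """
    dx, dy, dz = (b - a for a, b in zip(p0, p1))
    horizontal = math.hypot(dx, dy)
    speed = math.sqrt(dx * dx + dy * dy + dz * dz) / dt
    angle = math.degrees(math.atan2(dz, horizontal))
    return speed, angle


# Hypothetical samples taken 10 ms apart.
speed, angle = speed_and_launch_angle((0.0, 0.0, 1.0), (0.3, 0.0, 1.3), 0.01)
```

In this example the ball travels equally far forward and upward between samples, so the estimated launch angle comes out at roughly 45 degrees.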

The Transition from Personality to Precision: The Future of Media Tech

The story of Joe Buck is a microcosm of the broader shift from “personality-led” media to “precision-led” media. As we look toward the next decade, the innovations in AI, autonomous systems, and remote sensing will only become more ingrained in our daily lives.

Swarm Intelligence and Multi-Angle Autonomy

The next frontier in broadcast tech is drone swarm intelligence. Instead of a single autonomous drone, we will see coordinated groups of UAVs working in unison. These “swarms” will be governed by AI that determines the optimal positioning for each unit to capture every possible angle of a play. If a touchdown is scored, one drone might focus on the receiver, another on the quarterback’s release, and a third on the sideline reaction—all coordinated by a central autonomous processor.
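The coordination problem in that touchdown example is, at its simplest, an assignment problem: which drone should cover which subject so that total repositioning is minimized. The sketch below brute-forces every assignment, which is only practical for a handful of drones. The names, coordinates, and distance-only cost are assumptions for illustration, and a real swarm planner would also weigh factors such as battery, airspace limits, and shot composition.

```python
import math
from itertools import permutations


def assign_drones(drones, targets):
    """Assign one drone to each target, minimizing total travel distance.

    drones, targets: dict of name -> (x, y) position
    Requires at least as many drones as targets.
    Returns dict of target name -> drone name.
    """
    target_names = list(targets)
    best_cost, best = math.inf, None
    for perm in permutations(list(drones), len(target_names)):
        cost = sum(
            math.dist(drones[d], targets[t])
            for d, t in zip(perm, target_names)
        )
        if cost < best_cost:
            best_cost, best = cost, dict(zip(target_names, perm))
    return best


drones = {"d1": (0, 0), "d2": (100, 0), "d3": (50, 50)}
targets = {"receiver": (95, 5), "quarterback": (5, 5), "sideline": (50, 60)}
plan = assign_drones(drones, targets)
```

Here each drone ends up covering the subject nearest to it, which matches the intuition of one unit on the receiver, one on the quarterback, and one on the sideline.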

This move toward multi-angle autonomy will drastically reduce the overhead required for live production while increasing the quality of the output. It represents a move toward “automated storytelling,” where the technology itself identifies the most compelling visual narrative and presents it to the audience.

5G Latency and the Cloud-Based Booth

Finally, the evolution of 5G and edge computing is fundamentally changing the “where” of broadcasting. What happened to the traditional broadcast truck? It is increasingly being replaced by the cloud. With high-bandwidth, low-latency 5G connections, the massive amount of data generated by AI follow modes and remote sensors can be processed in the cloud and sent back to viewers with minimal delay.

This allows for a “decentralized booth,” where the talent, the directors, and the tech teams don’t even need to be in the same city, let alone the same stadium. The aim of 5G in this setting is to handle the massive data throughput required for 4K and 8K autonomous feeds with reliability approaching that of a hardwired connection.

In conclusion, when we ask what happened to the traditional icons of broadcasting, we must look at the digital infrastructure rising around them. The industry has moved beyond the simple transmission of images and sound. We are now in an era of intelligent, autonomous, and data-driven innovation. From the AI that tracks the ball to the autonomous drones that circle the stadium, the technology is no longer just a tool—it is the very fabric of the modern spectator experience. Joe Buck’s career transition is simply one chapter in a much larger book about the relentless march of technological progress in the world of high-tech media.