The notion of a “hard drive” conjures images of spinning platters or solid-state chips inside a personal computer. However, as technology advances, particularly within the realm of Tech & Innovation, data storage and processing extend far beyond the confines of a traditional laptop. In the systems that enable autonomous flight, intelligent mapping, and remote sensing, data is the lifeblood, and its management is paramount. This article examines the often abstract ways data is handled in these systems, tracing the evolution from simple storage to dynamic, integrated processing architectures.

The Evolving Landscape of Data in Tech & Innovation

Gone are the days when data was solely about archiving static information. In the context of modern Tech & Innovation, data is a constantly flowing, dynamic entity that is actively processed, analyzed, and utilized in real-time to drive intelligent decision-making. This shift has necessitated a fundamental re-imagining of how data is stored, accessed, and integrated into the operational fabric of advanced systems.

From Static Archives to Real-Time Streams

Historically, storage media like hard drives served primarily as repositories for completed data sets. You would record information, save it, and access it later. This model is increasingly insufficient for applications demanding immediate situational awareness and responsive action. Consider autonomous vehicles: they are not merely recording their journeys; they are processing live sensor feeds – from cameras, lidar, radar – to understand their environment, predict the behavior of other agents, and navigate safely. This requires not just storage, but rapid ingestion and processing of each incoming reading.

The Cloud as a Distributed ‘Hard Drive’

For many large-scale Tech & Innovation applications, the concept of a single, localized “hard drive” is being superseded by distributed cloud-based storage and processing. This offers scalability, accessibility, and computational power that a single device cannot match. When a drone performs aerial mapping of a vast area, the raw imagery and sensor data can be transmitted to cloud servers. These servers, acting as a large, distributed data repository, then process this information using algorithms, often AI-based, to generate detailed maps, 3D models, or environmental assessments. The “drive” in this scenario isn’t a single physical unit but a network of interconnected storage and computing resources.

Edge Computing: Intelligence Closer to the Source

Conversely, for applications requiring ultra-low latency and rapid responses, processing and storage are increasingly moving to the “edge” – closer to where the data is generated. This is particularly relevant in scenarios like remote sensing from high-speed drones or autonomous systems operating in environments with limited connectivity. An edge device, equipped with significant processing power and onboard memory, can perform initial data analysis and decision-making locally. This means that instead of sending raw, voluminous data back to a central cloud server, only processed insights or critical alerts are transmitted. This onboard intelligence acts as a specialized, high-performance local storage and processing unit, making decisions in milliseconds.
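The idea of transmitting processed insights rather than raw data can be sketched in a few lines of Python. This is a minimal illustration, not a description of any particular edge platform: the `EdgeReport` type, the `summarize_on_edge` function, the sample readings, and the alert threshold are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class EdgeReport:
    """Compact message sent upstream instead of the raw samples."""
    count: int
    mean_value: float
    max_value: float
    alert: bool


def summarize_on_edge(samples: list[float], alert_threshold: float) -> EdgeReport:
    """Reduce a window of raw readings to one small report.

    alert_threshold is a hypothetical limit; a real system would
    choose it per sensor.
    """
    if not samples:
        raise ValueError("samples must not be empty")
    peak = max(samples)
    return EdgeReport(
        count=len(samples),
        mean_value=mean(samples),
        max_value=peak,
        alert=peak > alert_threshold,
    )


# Hypothetical window of vibration readings captured on the device.
window = [0.2, 0.3, 0.25, 1.9, 0.3]
report = summarize_on_edge(window, alert_threshold=1.5)
print(report)
```

However many samples the window holds, the device sends a single four-field report. That reduction in transmitted data is the trade the edge approach makes in exchange for losing access to the raw readings upstream.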

The Architecture of Data Management in Advanced Systems

Understanding how data is managed in Tech & Innovation requires looking beyond the physical form factor of a storage device. It involves comprehending the complex interplay of hardware, software, and algorithms that enable data to be captured, stored, processed, and acted upon.

Sensor Data Ingestion and Pre-processing

The journey of data in many advanced technological systems begins with a multitude of sensors. For instance, in autonomous flight systems, cameras capture visual information, lidar generates 3D point clouds, and GPS provides location data. These sensors produce massive volumes of raw data at high frequencies. This raw data must be efficiently ingested and, often, pre-processed. Pre-processing can involve tasks like noise reduction, image enhancement, or data format conversion. Specialized hardware, such as field-programmable gate arrays (FPGAs) or dedicated image signal processors (ISPs), is often employed to handle these high-throughput tasks, acting as the initial gatekeeper of the data stream before it is passed on to longer-term storage or more intensive computation.
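As a rough illustration of the noise-reduction step, the sketch below smooths a short series of readings with a trailing moving average. It is a software stand-in for work that, as noted above, is often done in dedicated hardware; the function name, the window size, and the sample altitude values are assumptions made for the example.

```python
def moving_average(samples: list[float], window: int) -> list[float]:
    """Smooth a signal with a trailing moving average.

    Each output value is the mean of the current sample and up to
    window - 1 samples before it, so the output has the same length
    as the input.
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    smoothed = []
    running_sum = 0.0
    for i, value in enumerate(samples):
        running_sum += value
        if i >= window:
            # Drop the sample that just left the window.
            running_sum -= samples[i - window]
        smoothed.append(running_sum / min(i + 1, window))
    return smoothed


# Hypothetical altitude readings in metres with one noisy spike.
raw = [10.0, 10.0, 13.0, 10.0, 10.0]
print(moving_average(raw, window=3))
```

The spike of 13.0 is spread across neighbouring outputs rather than removed outright. A median filter would be another reasonable choice when isolated spikes are the main concern.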

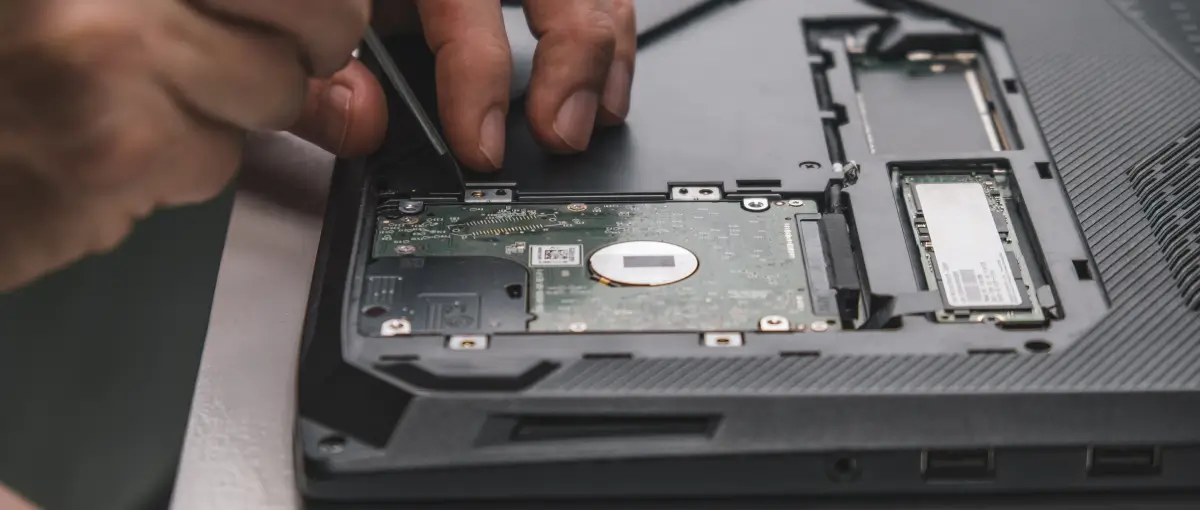

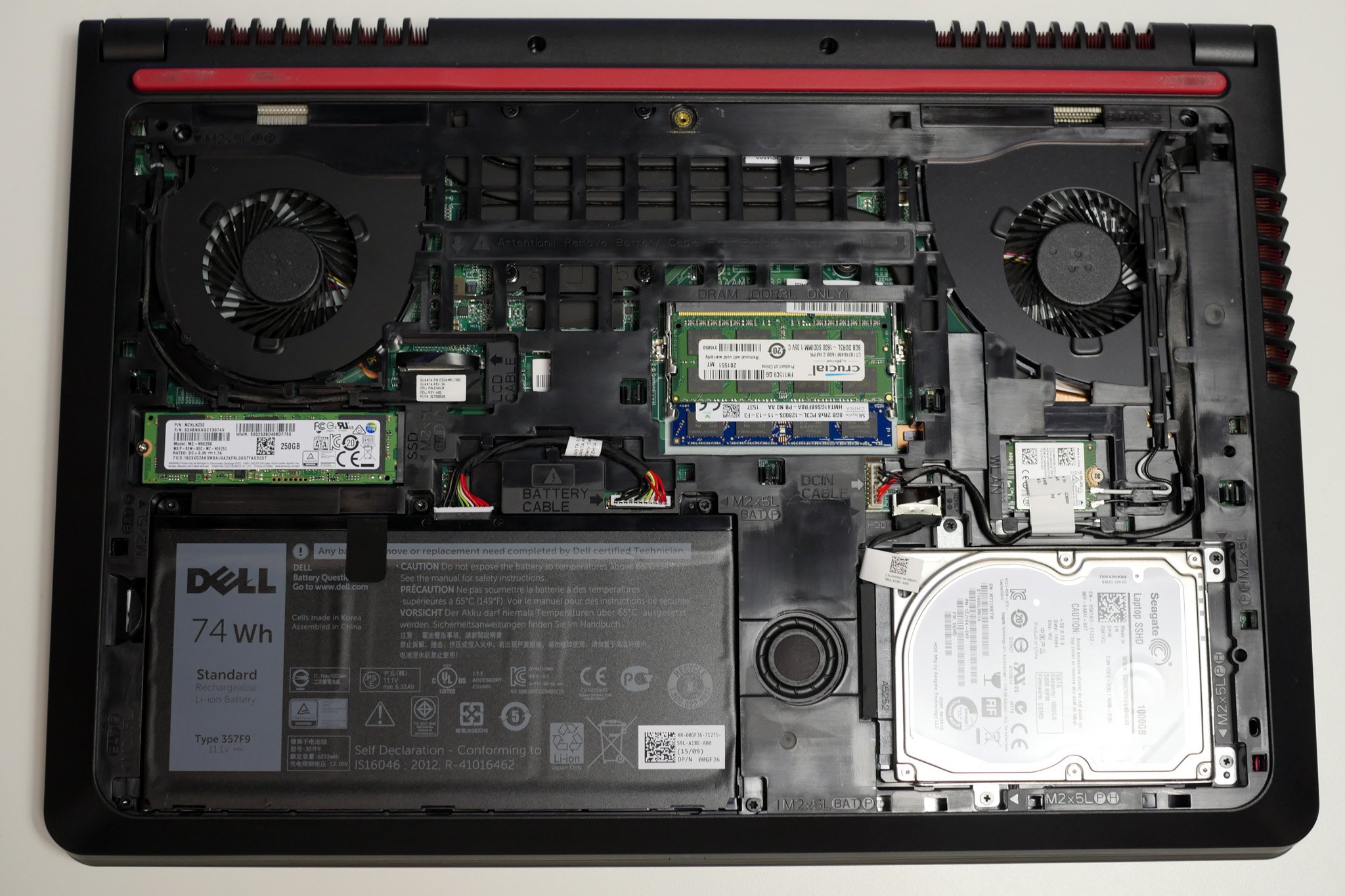

Onboard Data Buffering and Temporary Storage

Before data can be fully processed or transmitted, it often needs to be temporarily stored. This is where high-speed memory buffers and temporary storage come into play. A racing drone, for example, might briefly buffer video frames to ensure smooth playback or to allow post-flight analysis of critical flight segments. In autonomous mapping, preliminary sensor readings might be held in temporary memory while the system calibrates or waits for a stable GPS lock. This temporary storage is crucial for managing the transient nature of real-time data and ensuring that no critical information is lost during processing bottlenecks.
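One common way to implement this kind of temporary storage is a fixed-size ring buffer that always holds the most recent items. The sketch below uses Python's standard `collections.deque` with `maxlen`; the `FrameBuffer` class, its capacity, and the byte-string frames are hypothetical and stand in for whatever a real flight controller would buffer.

```python
from collections import deque


class FrameBuffer:
    """Fixed-capacity buffer that keeps only the newest frames."""

    def __init__(self, capacity: int) -> None:
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        # A deque with maxlen silently drops the oldest item when full.
        self._frames = deque(maxlen=capacity)

    def push(self, frame: bytes) -> None:
        self._frames.append(frame)

    def snapshot(self) -> list[bytes]:
        """Return the buffered frames, oldest first."""
        return list(self._frames)


buffer = FrameBuffer(capacity=3)
for n in range(5):
    buffer.push(f"frame-{n}".encode())
print(buffer.snapshot())
```

Note the design choice: a ring buffer bounds memory use by discarding the oldest frames, so capacity has to be sized for the longest expected processing stall if nothing critical is to be lost.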

Long-Term Data Archiving and Retrieval

Once data has been processed and its immediate utility has passed, it is often archived for future analysis, compliance, or training purposes. This long-term storage can take various forms. For organizations engaged in large-scale remote sensing or environmental monitoring, vast archives of satellite imagery or drone survey data might be maintained. These archives can reside in on-premises data centers, private clouds, or public cloud storage services, optimized for cost-effectiveness and long-term durability. The key here is not just storage capacity but also efficient indexing and retrieval mechanisms, allowing researchers or analysts to quickly access specific historical data points when needed.
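To make the point about indexing concrete, here is a minimal sketch of a time-ordered index that answers range queries with binary search instead of scanning the whole archive. The `ArchiveIndex` class, the integer timestamps, and the file paths are illustrative assumptions; production archives typically rely on a database or a catalog service rather than an in-memory list.

```python
from bisect import bisect_left, bisect_right


class ArchiveIndex:
    """Maps capture timestamps to archive locations, kept sorted by time."""

    def __init__(self) -> None:
        self._timestamps = []
        self._locations = []

    def add(self, timestamp: int, location: str) -> None:
        # Insert at the sorted position so lookups can use binary search.
        pos = bisect_right(self._timestamps, timestamp)
        self._timestamps.insert(pos, timestamp)
        self._locations.insert(pos, location)

    def between(self, start: int, end: int) -> list[str]:
        """Return locations captured in [start, end], oldest first."""
        lo = bisect_left(self._timestamps, start)
        hi = bisect_right(self._timestamps, end)
        return self._locations[lo:hi]


# Hypothetical survey files, added out of order.
index = ArchiveIndex()
index.add(300, "survey/c.tif")
index.add(100, "survey/a.tif")
index.add(200, "survey/b.tif")
print(index.between(150, 300))
```

The lookup cost grows with the logarithm of the archive size plus the number of matches, which is what lets an analyst pull a specific historical slice quickly.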

Processing Power: The Engine of Data Utilization

In the realm of Tech & Innovation, data is rarely stored for its own sake; it is a resource to be analyzed and leveraged. The processing power associated with data management is as critical as the storage itself.

High-Performance Computing (HPC) for Big Data

Many advanced applications, such as complex AI model training for autonomous navigation or sophisticated environmental simulations based on remote sensing data, require immense computational resources. High-Performance Computing (HPC) clusters, consisting of numerous interconnected processors, are employed to tackle these “big data” challenges. These clusters can process terabytes or even petabytes of data, enabling breakthroughs in fields like predictive modeling, scientific research, and complex system design. The data itself might be stored on specialized high-speed storage arrays connected to the HPC cluster, facilitating rapid access for the computationally intensive tasks.

Machine Learning and AI for Data Insights

Machine learning (ML) and artificial intelligence (AI) are at the forefront of transforming raw data into actionable insights. Algorithms are trained on vast datasets to recognize patterns, make predictions, and automate complex tasks. For instance, AI models are used to detect anomalies in infrastructure from aerial imagery, identify species in remote ecological surveys, or predict potential hazards in autonomous navigation. The “hard drive” in this context becomes a sophisticated knowledge base and a training ground for intelligent systems, constantly learning and evolving as new data is ingested and processed.

Real-Time Analytics and Decision Support

In many operational scenarios, the ability to analyze data and make decisions in real-time is paramount. Consider a drone performing an inspection of a critical piece of infrastructure: it might be using onboard AI to identify structural defects as it flies. This requires real-time data analysis and immediate feedback. Similarly, in autonomous flight systems, sensor data is continuously analyzed to make micro-adjustments for stabilization, obstacle avoidance, or optimal pathfinding. This dynamic processing and decision-making are made possible by architectures that prioritize speed and low latency, often involving specialized hardware accelerators and optimized software algorithms.
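A simple way to picture low-latency, per-sample analysis is a detector that updates its statistics in constant time as each reading arrives. The sketch below uses Welford's online algorithm for the running mean and variance and flags readings that sit far from the mean. It is a stand-in for illustration only, not the onboard AI described above; the `StreamingDetector` class, the `z_limit` and `warmup` defaults, and the readings are all assumptions.

```python
import math


class StreamingDetector:
    """Flags readings far from the running mean, using Welford's method."""

    def __init__(self, z_limit: float = 3.0, warmup: int = 5) -> None:
        self.z_limit = z_limit  # hypothetical threshold in standard deviations
        self.warmup = warmup    # samples to observe before flagging anything
        self.count = 0
        self.mean = 0.0
        self._m2 = 0.0          # sum of squared deviations from the mean

    def update(self, value: float) -> bool:
        """Return True if value looks anomalous, then fold it into the stats."""
        anomalous = False
        if self.count >= self.warmup:
            std = math.sqrt(self._m2 / (self.count - 1))
            if std > 0 and abs(value - self.mean) / std > self.z_limit:
                anomalous = True
        # Welford update: constant work per sample, no stored history.
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self._m2 += delta * (value - self.mean)
        return anomalous


# Hypothetical sensor readings with one outlier.
detector = StreamingDetector()
readings = [1.0, 1.1, 0.9, 1.0, 1.1, 0.9, 5.0, 1.0]
flags = [detector.update(r) for r in readings]
print(flags)
```

Because each update touches only three numbers, the cost per reading stays fixed no matter how long the system runs. One limitation of this sketch is that flagged readings are still folded into the statistics, which widens the variance after an outlier.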

The Future of Data Storage in Technological Advancements

The trajectory of data storage and processing within Tech & Innovation points towards increasing integration, intelligence, and efficiency. The boundaries between storage, processing, and networking are becoming increasingly blurred.

Towards Unified Data Fabrics

The future envisions a “data fabric” – a unified, intelligent, and distributed layer that seamlessly integrates data from various sources, regardless of their location or format. This fabric would allow for effortless data access, management, and processing across diverse systems, from edge devices to massive cloud infrastructures. For Tech & Innovation applications, this means that an autonomous drone operating in the field could seamlessly access and contribute to a global data repository, enhancing its capabilities and contributing to collective intelligence.

Neuromorphic Computing and Beyond

Emerging paradigms like neuromorphic computing, which mimics the structure and function of the human brain, hold the promise of radically different approaches to data processing and learning. These systems could process information in a highly parallel and energy-efficient manner, potentially transforming how we handle the ever-increasing volume of data generated by advanced technologies. The concept of a “hard drive” might evolve into something more akin to a dynamic, self-optimizing neural network capable of both storing and processing information in unprecedented ways.

Quantum Computing and Data

While still in its nascent stages, quantum computing has the potential to revolutionize data processing for certain types of complex problems. Its ability to perform calculations that are intractable for even the most powerful classical supercomputers could unlock new possibilities in fields like material science, drug discovery, and advanced AI. If quantum computing matures, the way we store and process extremely complex datasets could be fundamentally altered.

In conclusion, while the term “hard drive” may evoke a specific, tangible piece of hardware from the past, the underlying principle of storing and processing information remains central to the advancements in Tech & Innovation. From the distributed clouds to the intelligent edge, and the evolving architectures that manage these systems, the “look” of data storage is increasingly abstract, dynamic, and intrinsically linked to the intelligence and functionality of the technologies themselves. It is a testament to the relentless evolution of our ability to capture, understand, and leverage the ever-expanding universe of digital information.