Shadow banning, a term that has gained significant traction across various online platforms, refers to the practice of partially or wholly blocking a user’s content or account from public view without their explicit knowledge. Unlike a direct ban, which typically notifies the user of their suspension, shadow banning is a more insidious form of content moderation. It operates in the digital shadows, making it difficult for affected users to ascertain why their reach has been curtailed or if they have even been subjected to such a measure.

The implications of shadow banning are profound, particularly for individuals and entities who rely on online visibility for their livelihood, advocacy, or creative expression. It can stifle legitimate discourse, hinder the growth of emerging creators, and create an uneven playing field on platforms that are increasingly becoming central to modern communication and commerce. Understanding the nuances of shadow banning is crucial for navigating the complexities of the digital landscape and for advocating for greater transparency in online content distribution.

The Mechanics and Manifestations of Shadow Banning

Shadow banning is not a monolithic practice; rather, it manifests in a variety of ways, each with its own distinct impact on user visibility and engagement. The underlying intent is generally to reduce the reach of certain content or users without alerting them, thereby avoiding direct confrontation and making it harder for the user to circumvent the restrictions.

Reduced Visibility and Reach

The most common form of shadow banning is a quiet reduction in how widely a user’s content is shown. This can occur across various social media platforms and online forums. For instance, posts from a shadow-banned user might no longer appear in the main feeds of their followers, might be excluded from trending topics, or might rank far lower in search results.

Algorithmic Demotion

At the heart of this reduced visibility often lies algorithmic demotion. Platforms employ ranking algorithms to curate content and determine what users see. When a user is shadow-banned, those algorithms give the user’s content a lower priority. The trigger could be any of several factors, including perceived violations of community guidelines, repetitive posting patterns, or engagement metrics computed by the platform itself, which the demotion may in turn depress further. The effect is that even if a user posts high-quality, relevant content, it is unlikely to reach its intended audience.
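To make the idea of demotion concrete, here is a minimal sketch in Python. It does not reflect how any real platform ranks content; the `Post` type, the `base_score` field, the `DEMOTION` table, and the 0.1 multiplier are all hypothetical names and values chosen for illustration. The sketch simply scales each post's score by a per-author multiplier before sorting the feed.

```python
from dataclasses import dataclass


@dataclass
class Post:
    author: str
    base_score: float  # hypothetical relevance/engagement score


# Hypothetical per-author visibility multipliers; authors not listed get 1.0.
DEMOTION = {"spammy_account": 0.1}


def rank_feed(posts, demotion=DEMOTION):
    """Sort posts by base score scaled by the author's multiplier, highest first."""
    return sorted(
        posts,
        key=lambda p: p.base_score * demotion.get(p.author, 1.0),
        reverse=True,
    )


posts = [
    Post("spammy_account", 9.0),
    Post("alice", 5.0),
    Post("bob", 2.0),
]

# The demoted author has the highest base score but ends up last in the feed.
print([p.author for p in rank_feed(posts)])
```

Under these assumptions the demoted account is never removed and never notified; its posts just sink below content that would otherwise rank lower, which is why the effect is so hard to notice from the inside.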

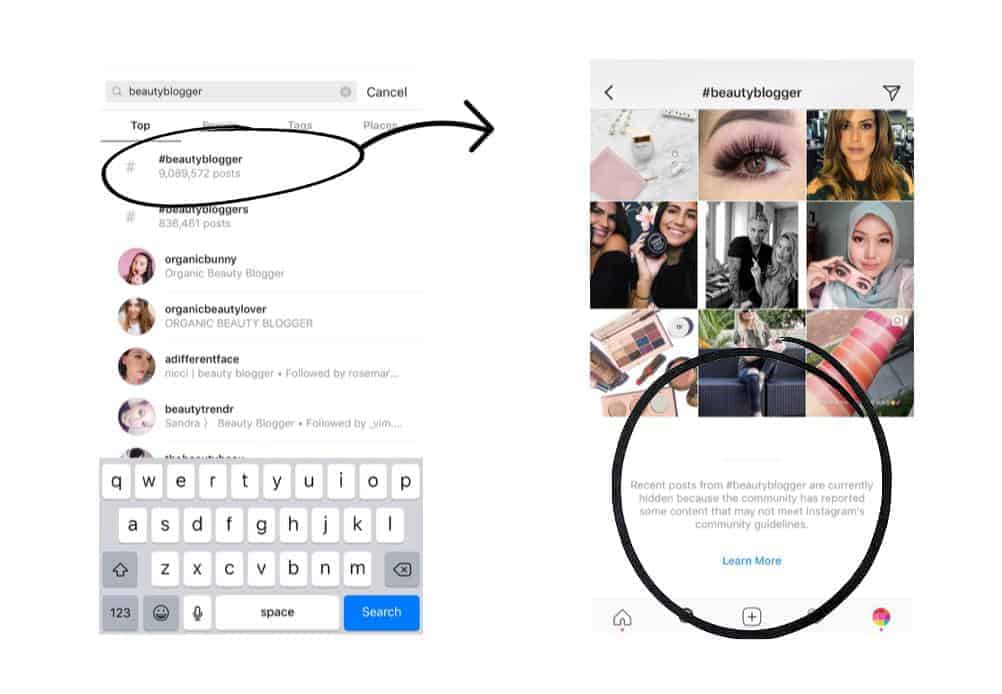

Search and Discovery Penalties

Shadow banning can also severely impact a user’s ability to be discovered through search functions or exploration features. Content from a shadow-banned account might be entirely absent from search results, or it may appear so far down the list as to be effectively invisible. Similarly, features designed to help users discover new content, such as “explore” pages or suggested accounts, may actively exclude shadow-banned users, further isolating them from potential new audiences.

Restricted Interactions and Engagement

Beyond simply reducing the visibility of content, shadow banning can also interfere with a user’s ability to interact with others on a platform. These restrictions can be subtle and cumulative, creating a frustrating experience for the user.

Comment and Reply Suppression

One common manifestation is the suppression of comments or replies made by a shadow-banned user. Their comments might not appear to other users, or they may only be visible to the user themselves. This can lead to a feeling of shouting into the void, as their contributions are effectively silenced within conversations. This is particularly damaging for users who engage in discussions, provide customer support, or participate in community building.
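The "visible only to the author" behavior described above can be sketched as a simple filter. This is an illustrative assumption about how such a rule might look, not a description of any platform's implementation; `Comment`, `visible_comments`, and the `shadow_banned` set are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Comment:
    author: str
    text: str


def visible_comments(comments, viewer, shadow_banned):
    """Return the comments a given viewer would see.

    Comments by suppressed authors are hidden from everyone except
    the author, so the author sees a normal-looking thread.
    """
    return [
        c for c in comments
        if c.author not in shadow_banned or c.author == viewer
    ]


thread = [
    Comment("carol", "Has anyone tried the new release?"),
    Comment("dave", "Yes, it works fine for me."),
]
shadow_banned = {"carol"}  # hypothetical suppression list

print(len(visible_comments(thread, "carol", shadow_banned)))  # carol's own view
print(len(visible_comments(thread, "dave", shadow_banned)))   # another user's view
```

Because the suppressed author still sees their own comment, nothing on their screen hints that others see a shorter thread.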

Direct Message Limitations

In some instances, shadow banning can extend to direct messaging. While less common, users may find that their direct messages are not being delivered, or that they are unable to receive replies from certain individuals. This can disrupt private communications and create a sense of isolation and disconnection from the platform’s social fabric.

Like and Share Invisibility

Even seemingly minor engagement metrics can be targeted. A user’s likes or shares may not be visible to others, or the ranking algorithms may simply disregard these interactions when calculating how widely content is shown. This further compounds the problem of reduced reach and can make it difficult for users to gauge the true impact of their online presence.

The Rationale Behind Shadow Banning

Platforms often resort to shadow banning as a tool to manage their online ecosystems, aiming to maintain a certain quality of user experience and to enforce their terms of service without incurring the backlash that direct bans can sometimes provoke. However, the opacity of this practice raises significant ethical and practical concerns.

Moderating Undesirable Content Without Direct Confrontation

One of the primary drivers for shadow banning is the desire to curb the spread of content that violates community guidelines or terms of service, but which may not warrant a full, immediate ban. This could include spam, repetitive low-quality content, or content that skirts the edges of acceptable discourse. By reducing the visibility of such content, platforms can attempt to mitigate its impact without the user necessarily understanding they are being penalized.

Combating Spam and Bot Activity

Shadow banning is frequently employed as a strategy against spam accounts and automated bots. These entities often flood platforms with unsolicited messages, irrelevant links, or promotional material. Instead of outright banning thousands of ephemeral accounts, platforms may opt to shadow ban them, effectively rendering them invisible and their spamming efforts futile. This is often a more scalable approach to managing bot networks.

Addressing “Gray Area” Violations

Many platform violations fall into a “gray area.” They might not be outright hate speech or illegal activity, but they could be disruptive, misleading, or simply contribute to a negative user experience. Shadow banning allows platforms to address these violations by limiting the reach of the offending content and user without issuing a formal penalty that the user could contest.

Maintaining Platform Health and User Experience

Ultimately, platforms aim to create environments where users feel comfortable and engaged. Shadow banning, from a platform’s perspective, can be seen as a tool to filter out content and users that detract from this goal.

Preventing “Ban Evasion”

When users are directly banned, they often create new accounts to circumvent the restriction. Shadow banning can be a more subtle way to manage problematic users: because it is less obvious that they are being targeted, they have less incentive to create replacement accounts.

Avoiding Negative PR and User Outcry

A direct, widespread ban of a prominent user or a large group of users can often lead to significant public backlash, media scrutiny, and negative publicity for the platform. Shadow banning offers a less visible, and therefore potentially less controversial, method of content moderation. This allows platforms to maintain control over their content ecosystem with a lower risk of public outcry.

The Impact on Users and the Digital Landscape

The consequences of shadow banning extend far beyond the individual user, influencing the broader dynamics of online communication, creativity, and commerce. The lack of transparency surrounding this practice creates a landscape of uncertainty and distrust.

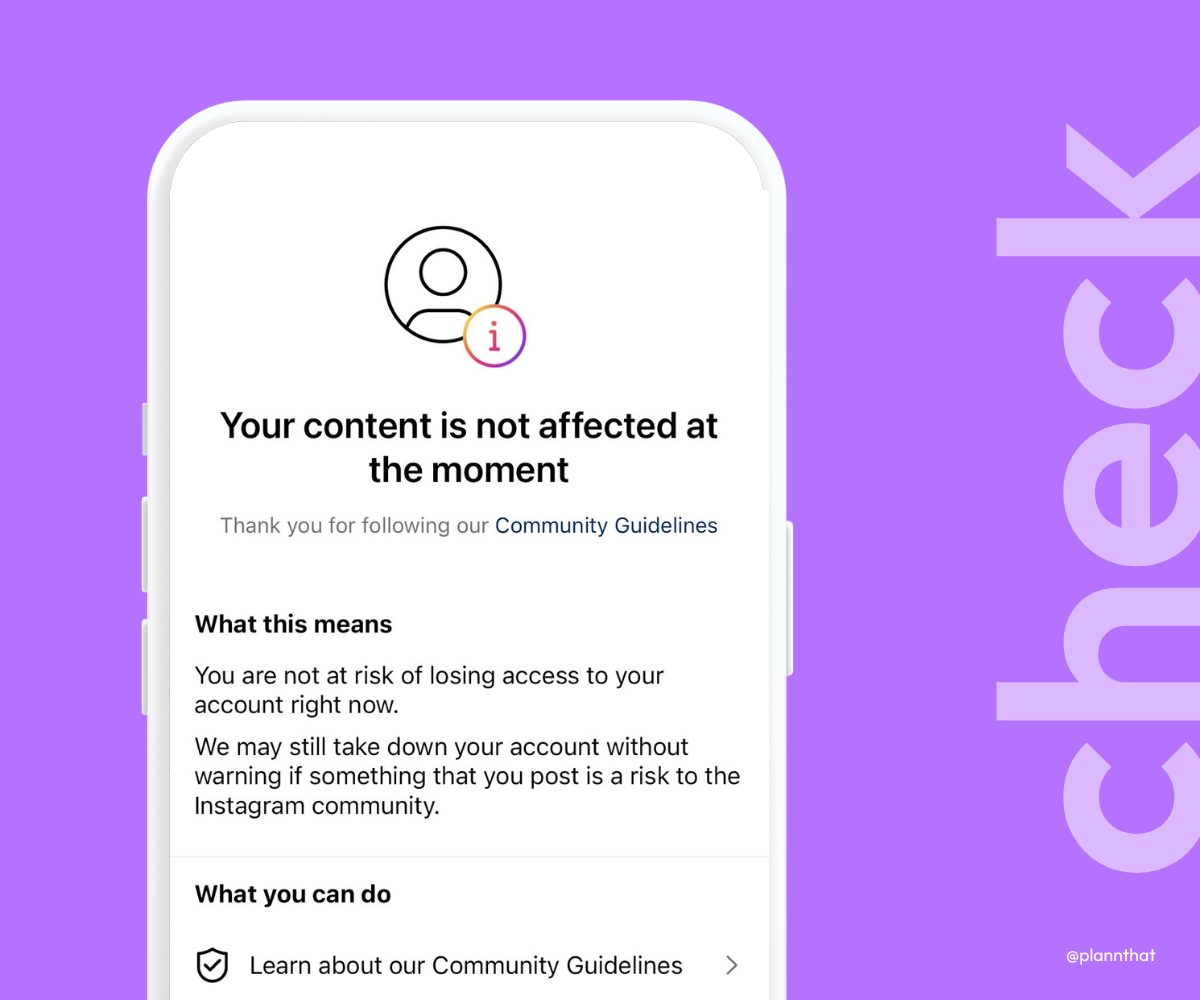

Erosion of Trust and Transparency

The most significant impact of shadow banning is the erosion of trust between users and the platforms they rely on. When users are not informed about why their content is not being seen or why their engagement is declining, they are left to speculate. This can lead to frustration, paranoia, and a general distrust of the platform’s algorithms and moderation policies. The absence of clear communication creates an environment where users feel powerless and subject to unseen forces.

Hindering Free Expression and Discourse

For individuals and organizations who use online platforms to share information, advocate for causes, or express themselves creatively, shadow banning can be a severe impediment. It can silence dissenting voices, limit the reach of important social or political messages, and create an environment where only the most visible or platform-favored content can thrive. This can lead to a less diverse and less robust public sphere.

Economic Ramifications

For content creators, small businesses, and influencers who depend on online visibility for their income, shadow banning can have devastating economic consequences. A sudden and unexplained drop in reach can lead to a significant loss of engagement, leads, and sales. This uncertainty makes it difficult to plan and sustain online businesses, creating financial precarity for many.

The Challenge of Detection and Recourse

One of the most challenging aspects of shadow banning is its inherent stealth. Unlike direct bans, there are no explicit notifications or clear indicators of restricted activity. This makes it difficult for users to confirm if they are indeed being shadow-banned, leading to a cycle of self-doubt and platform-related anxiety.

The “Am I Shadow Banned?” Phenomenon

The ambiguity of shadow banning has given rise to a widespread online phenomenon of users actively seeking confirmation of their status. This involves testing visibility, asking followers about received content, and consulting third-party tools or forums dedicated to identifying shadow bans. This obsessive pursuit of certainty consumes valuable time and energy that could otherwise be directed towards content creation or meaningful engagement.
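One way users test their visibility is to compare recent reach against their own historical baseline. The sketch below is a rough heuristic under stated assumptions: the view counts are made-up illustrative numbers, the 0.7 threshold is arbitrary, and `reach_drop` and `looks_suppressed` are hypothetical helper names. A sharp drop is only a signal worth investigating, since seasonality, content changes, or ordinary algorithm updates can produce the same pattern.

```python
from statistics import median


def reach_drop(baseline, recent):
    """Fractional drop of the recent median reach relative to the baseline median."""
    base = median(baseline)
    if base == 0:
        return 0.0  # no meaningful baseline to compare against
    return 1.0 - median(recent) / base


def looks_suppressed(baseline, recent, threshold=0.7):
    """Flag a drop at or above the (arbitrary) threshold as worth investigating."""
    return reach_drop(baseline, recent) >= threshold


# Illustrative per-post view counts, not real data.
baseline_views = [1000, 1200, 900, 1100]
recent_views = [200, 150, 250]

print(looks_suppressed(baseline_views, recent_views))
```

Medians are used rather than means so that a single viral or failed post does not dominate the comparison.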

Limited Avenues for Appeal and Resolution

Due to the clandestine nature of shadow banning, the avenues for recourse are often limited. Without clear evidence of a restriction or any direct communication from the platform, users struggle to appeal measures they can only suspect. This lack of accountability further entrenches the power imbalance between platforms and their users, leaving many feeling that they have no voice and no means to rectify their situation. The absence of a transparent appeals process is a significant flaw in the current model of online content moderation.