In the early days of unmanned aerial vehicles (UAVs), the concept of multitasking was virtually non-existent. A drone was a singular machine designed for a singular purpose: to maintain stability while responding to manual pilot inputs. If a drone could hover without drifting, it was considered a success. However, rapid advances in onboard computing have transformed the drone from a remotely piloted aircraft into a sophisticated flying computer. When we ask what “multitask” means in the context of modern drone technology, we are referring to the ability of the onboard system to process, analyze, and execute multiple complex computational threads concurrently without compromising flight safety or mission integrity.

Multitasking in a drone is the silent engine behind autonomy. It represents the shift from reactive systems to proactive, intelligent platforms. Today’s high-end enterprise and consumer drones are required to manage flight stability, process high-resolution visual data, run artificial intelligence (AI) algorithms for object recognition, and navigate via GPS—all within milliseconds. This article explores the architectural depth of multitasking in drone technology, the hardware that makes it possible, and how it is redefining the capabilities of autonomous flight.

Decoding Multitasking in the Context of Unmanned Aerial Systems

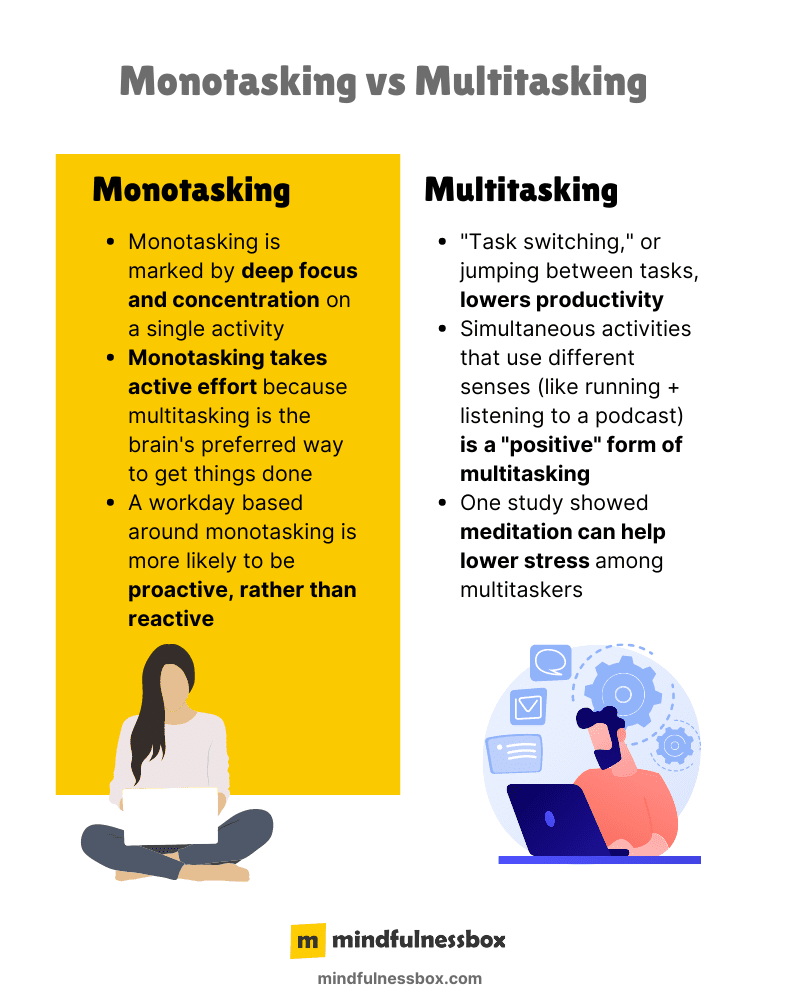

To understand multitasking in a drone, one must look past the physical movement of the propellers and into the “brain” of the aircraft—the flight controller and the companion computer. In computing, multitasking is the concurrent execution of multiple tasks over a certain period. In a drone, these tasks are divided into high-priority flight loops and secondary data-processing tasks.

From Linear Processing to Parallel Execution

Traditional drones operated on a linear processing model. The flight controller would read sensor data (gyroscope, accelerometer), calculate the necessary motor adjustments, and send the signal to the Electronic Speed Controllers (ESCs). This cycle repeated hundreds to thousands of times per second. Because every step ran in sequence on one simple processor, adding a task such as “detect a tree” would often overwhelm it: time spent on the new task delayed the next stabilization update.
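
The read, calculate, and actuate cycle described above can be sketched in a few lines. This is a minimal illustration only: it assumes a PID controller (a common choice, though the article does not name a control law), and the gains, the `control_cycle` helper, and the left/right motor convention are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PID:
    """Textbook PID controller; the gains used below are illustrative, not tuned."""
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    prev_error: float = 0.0

    def update(self, error: float, dt: float) -> float:
        self.integral += error * dt
        derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def control_cycle(pid: PID, target_roll: float, measured_roll: float,
                  dt: float) -> tuple[float, float]:
    """One read -> calculate -> actuate step for the roll axis.

    Returns hypothetical throttle offsets for the (left, right) motors.
    """
    correction = pid.update(target_roll - measured_roll, dt)
    return (correction, -correction)


# Proportional-only controller so the arithmetic is easy to follow.
pid = PID(kp=0.8, ki=0.0, kd=0.0)
left, right = control_cycle(pid, target_roll=0.0, measured_roll=5.0, dt=0.001)
```

Everything here runs in sequence, so any extra work placed inside the loop directly lengthens the time between motor updates.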

Modern innovation has introduced parallel execution. Using multi-core processors and specialized System-on-a-Chip (SoC) architectures, drones can now separate “housekeeping” tasks (keeping the drone level) from “cognitive” tasks (understanding the environment). This means that while one part of the processor is ensuring the drone doesn’t crash due to a gust of wind, another part is running a neural network to identify a specific human subject for an AI Follow Mode mission.
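
As a rough sketch of this separation, the snippet below runs a fast "housekeeping" loop in one thread while a slow "cognitive" task completes in another. The function names and timings are assumptions made for illustration. Python threads share a single interpreter lock, so this shows the structure of the idea rather than true multi-core execution on a flight computer.

```python
import threading
import time


def run_demo(inference_s: float = 0.05) -> tuple[str, int]:
    """Run a fast stabilization loop while a slow detection task completes."""
    stop = threading.Event()
    ticks = 0

    def stabilize() -> None:
        nonlocal ticks
        while not stop.is_set():
            ticks += 1            # stand-in for one attitude-control update
            time.sleep(0.001)     # aims for about 1 kHz; sleep is not precise

    def detect_subject() -> str:
        time.sleep(inference_s)   # stand-in for slow neural-network inference
        return "person"

    stabilizer = threading.Thread(target=stabilize)
    stabilizer.start()
    label = detect_subject()      # the stabilization loop keeps ticking meanwhile
    stop.set()
    stabilizer.join()
    return label, ticks


label, ticks = run_demo()
print(f"detected {label!r} while completing {ticks} stabilization updates")
```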

The Role of Real-Time Operating Systems (RTOS)

The foundation of drone multitasking is the Real-Time Operating System. Unlike a standard computer OS, which might let a background update delay the response to a mouse click, an RTOS in a drone is built for “determinism”: each critical task is guaranteed to run within a known, bounded time. It ensures that critical flight tasks are prioritized above all else. Multitasking in this environment means the system can juggle telemetry logging, obstacle sensing, and gimbal stabilization without ever delaying the motor update loop. If the multitasking architecture fails, the drone doesn’t just “lag”; it can fall out of the sky.
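
To make the prioritization concrete, here is a small simulation of fixed-priority preemptive scheduling, the general policy most RTOS kernels offer. It is not the scheduler of any particular drone or RTOS; the task names, periods, and costs are invented for the example, and time advances in abstract ticks.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    priority: int       # lower number = more urgent
    period: int         # a new job is released every `period` ticks
    cost: int           # ticks of CPU time each job needs
    remaining: int = 0  # work still owed to released jobs


def simulate(tasks: list[Task], ticks: int) -> list[str]:
    """Return the name of the task that ran on each tick."""
    timeline = []
    for t in range(ticks):
        for task in tasks:
            if t % task.period == 0:
                task.remaining += task.cost   # release a new job
        ready = [task for task in tasks if task.remaining > 0]
        if ready:
            # The most urgent ready task always wins, even mid-job for others.
            current = min(ready, key=lambda task: task.priority)
            current.remaining -= 1
            timeline.append(current.name)
        else:
            timeline.append("idle")
    return timeline


tasks = [
    Task("motor_update", priority=0, period=2, cost=1),
    Task("obstacle_sensing", priority=1, period=5, cost=1),
    Task("telemetry_log", priority=2, period=10, cost=2),
]
timeline = simulate(tasks, ticks=10)
print(timeline)
```

In this run the motor update executes on every tick where it is released, while the telemetry job is split across two separate ticks because more urgent work keeps preempting it.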

The Synergy of Artificial Intelligence and Flight Autonomy

The most visible manifestation of multitasking in drone technology is seen in autonomous flight modes. When a drone is set to “AI Follow Mode,” it is performing an incredible feat of computational multitasking that was impossible a decade ago.

Computer Vision and Subject Tracking

For a drone to follow a mountain biker through a forest, it must perform several tasks at once. First, it must use computer vision to distinguish the biker from the background, which involves analyzing each video frame and matching patterns in real time. Second, it must predict the biker’s trajectory to maintain a cinematic composition. Third, it must simultaneously scan the environment for obstacles like branches or power lines. Combining these results relies on “sensor fusion,” the merging of data from multiple sources into a single picture of the scene, and it is among the most demanding multitasking workloads a drone runs.
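
The trajectory-prediction step can be illustrated with the simplest possible model: assume the subject keeps its current velocity. Commercial follow modes are not documented here and likely use more sophisticated filters; the `predict_position` helper and its 2D coordinates in meters are assumptions for the sketch.

```python
def predict_position(prev: tuple[float, float], curr: tuple[float, float],
                     dt: float, lookahead: float) -> tuple[float, float]:
    """Constant-velocity prediction from two tracked positions.

    prev, curr: subject positions (meters) from consecutive detections
    dt:         seconds between those detections
    lookahead:  how far into the future to predict, in seconds
    """
    vx = (curr[0] - prev[0]) / dt
    vy = (curr[1] - prev[1]) / dt
    return (curr[0] + vx * lookahead, curr[1] + vy * lookahead)


# The subject moved 1.0 m east and 0.5 m north in half a second.
predicted = predict_position((0.0, 0.0), (1.0, 0.5), dt=0.5, lookahead=1.0)
print(predicted)  # (3.0, 1.5)
```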

Simultaneous Localization and Mapping (SLAM)

One of the most complex multitasking challenges in drone tech is SLAM. This technology allows a drone to enter an unknown environment (like a collapsed building or a cave) and build a 3D map of the space while simultaneously determining its own location within that map.

This requires the drone to:

- Process data from LiDAR or depth-sensing cameras.

- Identify “landmarks” or geometric features in the environment.

- Calculate the drone’s movement relative to those features.

- Update the 3D model in real-time.

- Plan a safe flight path through the newly mapped area.

All of these threads must run in parallel and stay in step with one another. If localization falls behind, the drone loses its “sense of self”; if mapping falls behind, the drone may collide with an object it has already seen but not yet processed.
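
The sense, map, and plan cycle in the list above can be sketched on a toy grid. This is a deliberately simplified stand-in, not a real SLAM algorithm: the drone's pose is assumed to be known exactly after each move (real SLAM must estimate it from landmarks), the "sensor" only sees adjacent cells, and the world layout, grid size, and function names are all hypothetical.

```python
from collections import deque

WALLS = {(1, 0), (1, 1)}   # hypothetical ground truth, hidden from the planner
NEIGHBOURS = [(1, 0), (-1, 0), (0, 1), (0, -1)]


def sense(pose):
    """Stand-in depth sensor: reports whether each adjacent cell is blocked."""
    x, y = pose
    return {(x + dx, y + dy): (x + dx, y + dy) in WALLS for dx, dy in NEIGHBOURS}


def plan(start, goal, known_map, size=4):
    """Breadth-first search that avoids cells already mapped as blocked."""
    queue, came_from = deque([start]), {start: None}
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell != start:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        for dx, dy in NEIGHBOURS:
            nxt = (cell[0] + dx, cell[1] + dy)
            in_bounds = 0 <= nxt[0] < size and 0 <= nxt[1] < size
            if in_bounds and nxt not in came_from and not known_map.get(nxt, False):
                came_from[nxt] = cell
                queue.append(nxt)
    return []


def explore(start, goal, max_steps=50):
    pose, known_map, visited = start, {}, [start]
    for _ in range(max_steps):
        if pose == goal:
            break
        known_map.update(sense(pose))        # fold new readings into the map
        path = plan(pose, goal, known_map)   # replan through the updated map
        if not path:
            break
        pose = path[0]                       # move one cell; pose assumed exact
        visited.append(pose)
    return visited, known_map


visited, known_map = explore(start=(0, 0), goal=(2, 0))
print(visited)
```

The drone initially plans through an unmapped cell, discovers that it is blocked once it gets close enough to sense it, and reroutes. If the map update were skipped or delayed, the planner would keep routing through that cell.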

Engineering Efficiency: The Hardware of Multitasking

The transition toward true multitasking drones has necessitated a revolution in hardware. Drones are no longer built around simple 8-bit microcontrollers; they now carry AI-optimized silicon that rivals the processors in high-end smartphones.

The Rise of NPUs and GPUs in the Sky

To handle the massive data throughput required for multitasking, manufacturers have integrated Neural Processing Units (NPUs) and Graphics Processing Units (GPUs) into drone architecture. While the CPU (Central Processing Unit) handles general logic and flight commands, the NPU is dedicated solely to AI tasks, such as recognizing a leak in a pipeline or identifying a specific crop type in a field.

This hardware separation is what allows drones to be “multitaskers.” By offloading the heavy mathematical lifting of image recognition to a dedicated chip, the main processor remains free to handle the high-frequency requirements of flight stability and communication with the pilot’s controller.

Latency and Data Throughput

Multitasking is of little use if it introduces latency. In autonomous flight, a delay of even 100 milliseconds can be the difference between a successful mission and a total loss of equipment: a drone flying at 15 m/s travels 1.5 m in that time. Innovation in drone tech now focuses on “low-latency multitasking,” where data moves between sensors and processors with minimal delay. This is particularly vital for remote sensing and mapping, where the drone must timestamp every piece of sensor data with precise GPS coordinates and orientation angles while the data is being captured.
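
One way to attach a position to a sensor reading is to interpolate between the two GPS fixes that bracket its capture time. The sketch below assumes linear motion between fixes, which is reasonable only over short intervals; the `Fix` record and its field names are hypothetical, and orientation angles are left out because they need wrap-around handling.

```python
from dataclasses import dataclass


@dataclass
class Fix:
    t: float     # capture time in seconds
    lat: float   # degrees
    lon: float   # degrees
    alt: float   # meters


def interpolate_fix(before: Fix, after: Fix, t: float) -> Fix:
    """Linearly interpolate a position for time t between two GPS fixes."""
    if not before.t <= t <= after.t:
        raise ValueError("t must lie between the two fixes")
    if after.t == before.t:
        return Fix(t, before.lat, before.lon, before.alt)
    w = (t - before.t) / (after.t - before.t)   # 0 at `before`, 1 at `after`
    return Fix(
        t,
        before.lat + w * (after.lat - before.lat),
        before.lon + w * (after.lon - before.lon),
        before.alt + w * (after.alt - before.alt),
    )


# An image captured halfway between two fixes taken 0.5 s apart.
before = Fix(10.0, 47.0000, 8.0000, 100.0)
after = Fix(10.5, 47.0002, 8.0004, 102.0)
tagged = interpolate_fix(before, after, t=10.25)
print(tagged)
```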

Beyond Flight: Multitasking as a Tool for Data Acquisition

In industrial and enterprise sectors, multitasking defines the efficiency of a mission. A drone is no longer just a camera; it is a data-gathering platform that performs multiple analytical roles while in the air.

Mapping and Remote Sensing

During a mapping mission, a drone doesn’t just take photos. Modern multitasking systems allow the drone to perform real-time “edge computing.” As the drone flies over a construction site, it can capture photogrammetric data, process a low-resolution 2D orthomosaic for the pilot to review on the ground, and monitor its own thermal health, all at once. This ability to analyze data “at the edge” (on the drone itself) rather than waiting to upload it to a cloud server shortens the time between capturing data and acting on it.

Search and Rescue Operations

In search and rescue (SAR) scenarios, the multitasking capability of a drone can save lives. A SAR drone might be equipped with both a visual and a thermal sensor. The multitasking system can run an AI algorithm that scans the thermal feed for human heat signatures while simultaneously providing a high-definition visual feed to the operator. If a signature is found, the system can automatically drop a waypoint, calculate the exact coordinates, and notify ground teams, all while maintaining its search pattern and monitoring its remaining flight time against the distance back to the home point.
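
The last of those background checks, comparing remaining flight time with the distance home, might look like the following. It is a simplified sketch: it uses the haversine great-circle formula for distance, assumes a constant return speed with no wind, and the `must_return_home` helper and its 60-second reserve are assumptions rather than any manufacturer's logic.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def must_return_home(drone: tuple[float, float], home: tuple[float, float],
                     speed_m_s: float, remaining_flight_s: float,
                     reserve_s: float = 60.0) -> bool:
    """True once the trip home plus a safety reserve uses up the remaining time."""
    time_home_s = haversine_m(*drone, *home) / speed_m_s
    return time_home_s + reserve_s >= remaining_flight_s


# About 1.1 km from home at 10 m/s: roughly 111 s of flying plus 60 s reserve.
keep_searching = not must_return_home((0.0, 0.0), (0.0, 0.01), 10.0, remaining_flight_s=200.0)
turn_back = must_return_home((0.0, 0.0), (0.0, 0.01), 10.0, remaining_flight_s=150.0)
```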

The Future of Multitasking: Swarm Intelligence and Edge Computing

The next frontier of drone multitasking isn’t limited to a single aircraft; it extends to the coordination of multiple drones working as a cohesive unit. This is known as “Swarm Intelligence,” and it represents the ultimate expression of multitasking at a systems level.

Swarm Multitasking

In a swarm, the “multitasking” occurs both within each individual drone and across the entire network. Imagine ten drones mapping a massive forest fire. They must communicate with each other to ensure they don’t occupy the same airspace, divide the search area efficiently, and aggregate their data into a single, unified map in real-time. This requires a level of decentralized multitasking where each drone makes autonomous decisions based on the collective data of the group.
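
A very simple form of this division needs no central coordinator at all: if every drone knows its own index, the swarm size, and the bounds of the area, each can compute its own strip independently. The sketch below assumes exactly that shared knowledge; the `my_strip` function is hypothetical, and real swarms would also need to handle drones joining, failing, or finishing early.

```python
def my_strip(drone_index: int, n_drones: int,
             x_min: float, x_max: float) -> tuple[float, float]:
    """Return the (start, end) of the strip this drone should cover.

    The area is cut into equal-width strips along the x axis, in meters.
    """
    if not 0 <= drone_index < n_drones:
        raise ValueError("drone_index must be in range(n_drones)")
    width = (x_max - x_min) / n_drones
    return (x_min + drone_index * width, x_min + (drone_index + 1) * width)


# Ten drones splitting a 1,000 m wide area; each call could run on a different drone.
strips = [my_strip(i, 10, 0.0, 1000.0) for i in range(10)]
print(strips[0], strips[9])  # (0.0, 100.0) (900.0, 1000.0)
```

Because adjacent strips share an edge but never overlap, this also gives a coarse form of airspace separation.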

Autonomous Decision-Making

As we look toward the future, the definition of multitasking will shift from “doing many things at once” to “deciding many things at once.” Innovation in autonomous flight is moving toward a model where the drone is given a high-level objective—such as “inspect this bridge for structural cracks”—and the drone determines the best flight path, camera angles, and data points to collect without human intervention.

In this scenario, the drone is multitasking between execution and strategy. It is flying the mission while simultaneously analyzing the quality of the data it is collecting and adjusting its behavior to improve that data. This “closed-loop” multitasking is the hallmark of the next generation of aerial tech.
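
A closed loop of this kind can be as simple as one rule that feeds a data-quality measurement back into flight behavior. In the hypothetical sketch below, the drone slows down when its images are too blurry and speeds up when they are sharp; the blur score, thresholds, and speed limits are invented for illustration.

```python
def next_speed(current_speed: float, blur_score: float,
               max_blur: float = 0.3, min_speed: float = 1.0,
               max_speed: float = 8.0, step: float = 1.0) -> float:
    """Pick the next flight speed (m/s) from the latest image-quality reading.

    blur_score is assumed to range from 0.0 (sharp) to 1.0 (unusable).
    """
    if blur_score > max_blur:
        return max(min_speed, current_speed - step)   # too blurry: slow down
    return min(max_speed, current_speed + step)       # sharp enough: speed up


speed = 5.0
for blur in (0.5, 0.4, 0.2):   # quality readings from three successive captures
    speed = next_speed(speed, blur)
print(speed)  # 4.0
```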

Conclusion

What does “multitask” mean in the world of drones? It means the disappearance of the gap between hardware and intelligence. It is the ability of a machine to sense the world, understand its position within it, and perform complex tasks, all while maintaining the delicate balance of flight. Through innovations in AI, processing power, and sensor fusion, drones have evolved into some of the most capable multitasking tools in the modern technological arsenal. As we continue to push the boundaries of what is possible, the multitasking drone will move from being an assistant to a truly autonomous partner in industry, science, and exploration.