In the rapidly evolving landscape of unmanned aerial vehicles (UAVs), terms like “AI,” “autonomous flight,” and “machine learning” are frequently used to describe the capabilities of modern drones. However, one specific acronym stands at the core of the visual intelligence revolution in the drone industry: CNN. While many associate these three letters with a global news organization, in the context of tech and innovation—specifically within the realms of robotics and aerial systems—CNN stands for Convolutional Neural Network.

A Convolutional Neural Network is a type of deep learning model designed primarily to process and interpret visual data. For drones, CNNs mark the transition from simple remote-controlled aircraft to systems that can perceive their surroundings and act on what they see. Loosely inspired by how the visual cortex processes images, CNNs allow drones to recognize objects, navigate complex environments, and carry out tasks such as precision mapping and infrastructure inspection with little or no human intervention.

The Architecture of CNNs in Autonomous Systems

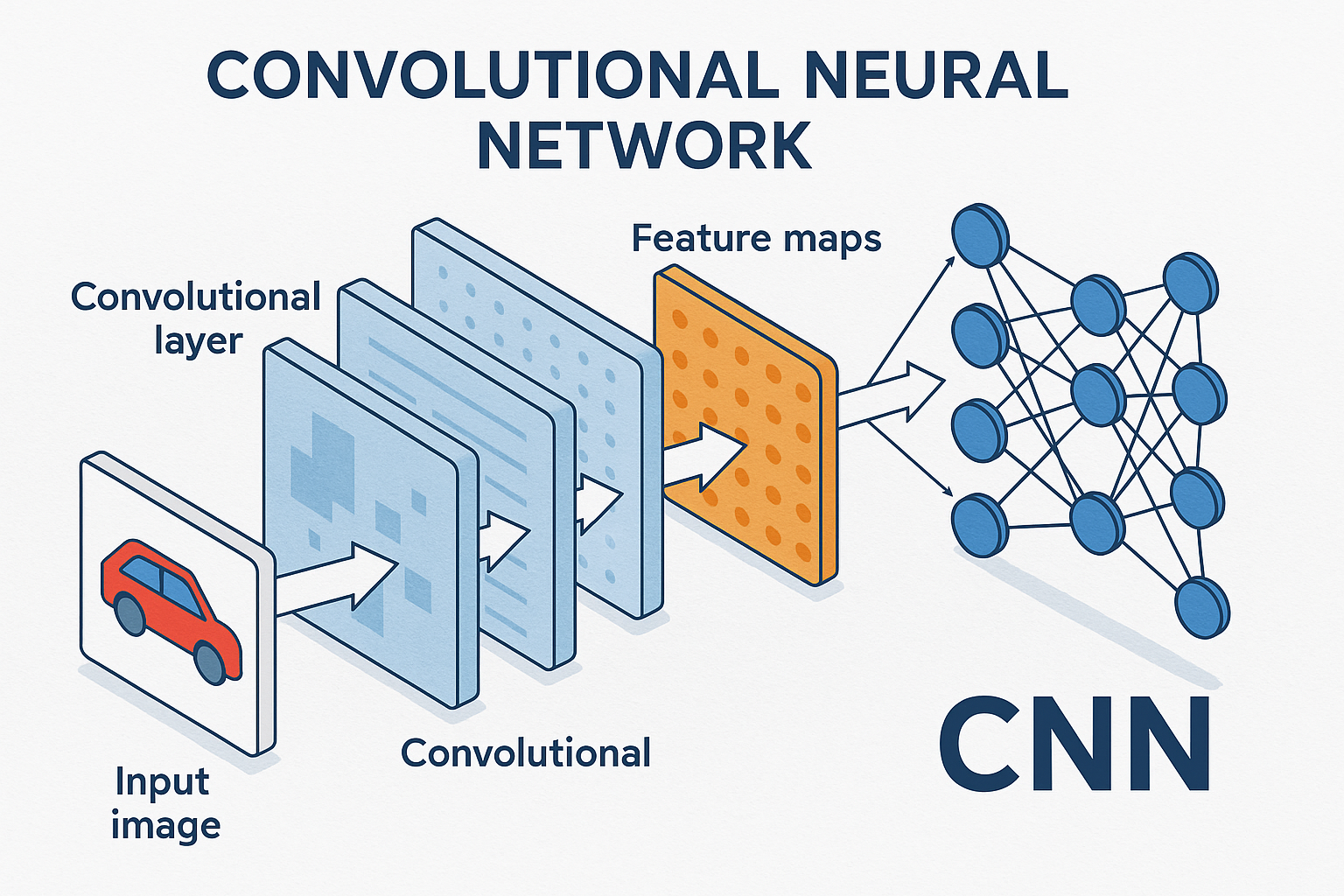

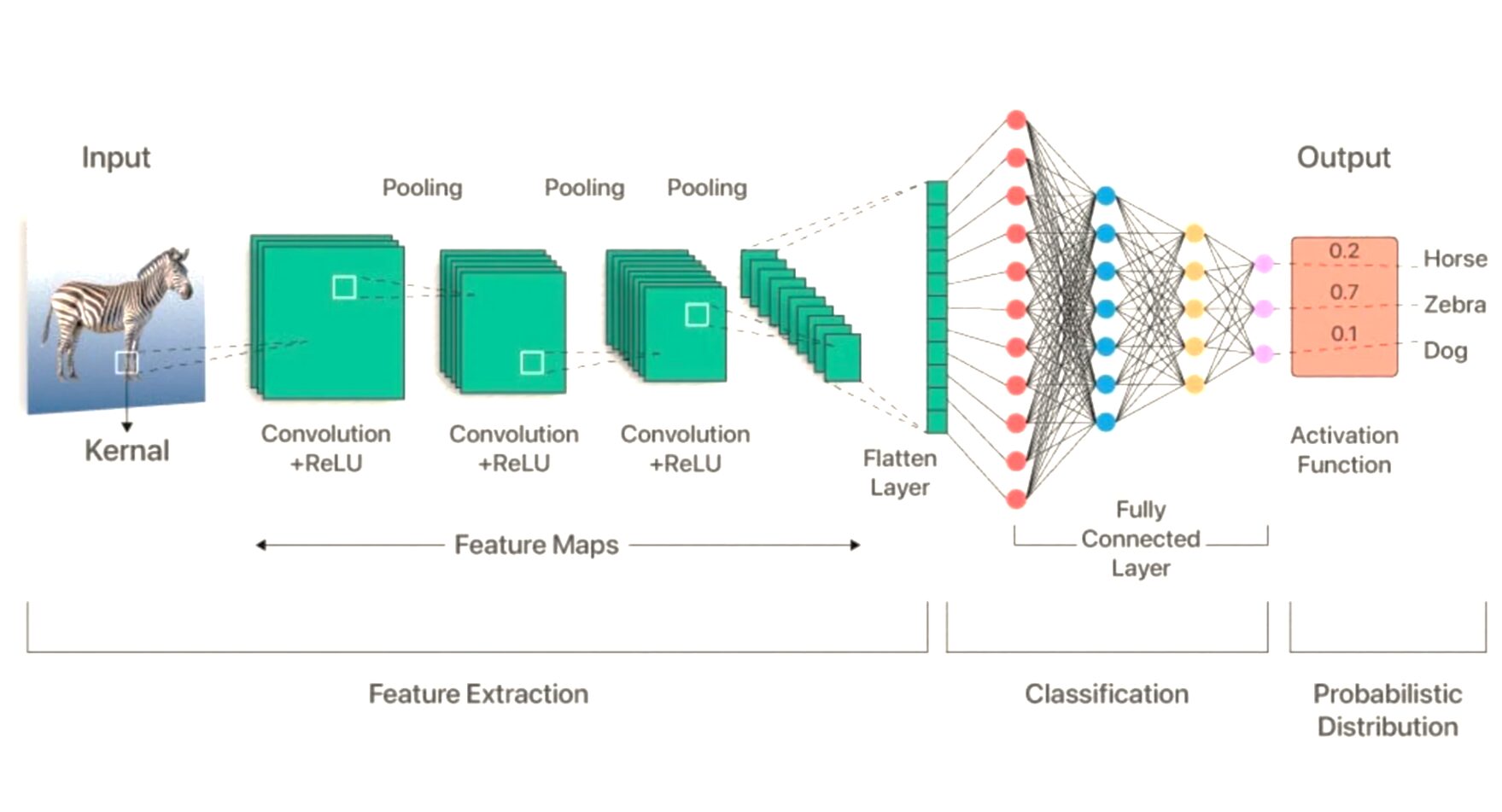

To understand what CNN means for drone technology, one must look at how these networks are structured. Unlike traditional algorithms that follow a rigid set of “if-then” rules, a CNN learns to identify patterns through exposure to massive datasets. This architecture is composed of several layers, each serving a specific purpose in transforming raw pixel data into actionable intelligence.

Convolutional Layers: Filtering Visual Data

The first and most critical component of a CNN is the convolutional layer. In this stage, the network applies various “filters” or “kernels” to the incoming video feed from the drone’s camera. These filters scan the image to detect basic features such as edges, lines, and colors. As the data moves deeper into the network, the layers begin to recognize more complex shapes, such as the curve of a power line, the rectangular shape of a building, or the specific silhouette of a human being.
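The filtering step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not how a production network runs: the 5x5 `frame` is a toy stand-in for a camera image, and the kernel is a hand-written Sobel edge filter, whereas a trained CNN learns its kernel values from data. As in most deep learning libraries, the "convolution" here slides the kernel without flipping it.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            total = 0
            # Multiply the kernel with the image patch under it and sum the products.
            for i in range(kh):
                for j in range(kw):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


# Toy grayscale frame (assumed data): dark on the left, bright on the right.
frame = [
    [0, 0, 0, 9, 9],
    [0, 0, 0, 9, 9],
    [0, 0, 0, 9, 9],
    [0, 0, 0, 9, 9],
    [0, 0, 0, 9, 9],
]

# Hand-written vertical-edge kernel (Sobel); a real CNN would learn these weights.
sobel_x = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]

feature_map = convolve2d(frame, sobel_x)
print(feature_map)
```

The output is zero over the flat dark region and large wherever the window straddles the dark-to-bright boundary, which is exactly the "edge detected here" signal that deeper layers build on.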

This hierarchy of features is what allows a drone to “see” in a practical sense. By breaking an image down into its fundamental components, the CNN builds an internal representation of the scene that later layers can act on. For drones operating in high-stakes environments such as search and rescue missions, the ability to distinguish a person from the surrounding foliage can be decisive.

Pooling and Activation: Reducing Complexity

After the convolutional layers extract features, “pooling” layers are used to simplify the information. Pooling reduces the spatial dimensions of the data, which lowers the amount of computation the drone’s onboard processor has to perform in later layers. This matters for UAVs, which have limited battery life and processing capacity compared to ground-based servers.
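As a rough sketch of what pooling does, the function below implements non-overlapping max pooling, one common variant, in plain Python. The 4x4 `feature_map` values are made up for illustration.

```python
def max_pool2d(feature_map, size=2):
    """Non-overlapping max pooling: keep the largest value in each size x size block."""
    pooled = []
    for r in range(0, len(feature_map) - size + 1, size):
        row = []
        for c in range(0, len(feature_map[0]) - size + 1, size):
            block = [feature_map[r + i][c + j] for i in range(size) for j in range(size)]
            row.append(max(block))
        pooled.append(row)
    return pooled


# Illustrative 4x4 feature map (assumed values).
feature_map = [
    [1, 3, 2, 0],
    [4, 6, 1, 1],
    [0, 2, 9, 5],
    [1, 1, 3, 7],
]

pooled = max_pool2d(feature_map)
print(pooled)
```

Here 16 values shrink to 4, so every later layer has a quarter of the data to process while the strongest response in each region is preserved.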

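“Activation functions” are the other key ingredient. In most architectures an activation such as ReLU is applied directly after each convolution, before pooling, and it determines which filter responses are strong enough to be passed on: weak or negative responses are suppressed, while strong ones continue to the next layer. Combined with training, this helps the network discount “noise” such as lens flare, shadows, or moving grass and concentrate on the objects that matter for its flight path or mission objective.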
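One widely used activation function is ReLU (rectified linear unit), which simply zeroes out negative responses and passes positive ones through unchanged. A minimal sketch, with made-up input values:

```python
def relu(feature_map):
    """Apply ReLU element-wise: negative responses become 0, positive ones pass through."""
    return [[max(0.0, value) for value in row] for row in feature_map]


# Assumed filter responses: negatives mean "this pattern is not present here".
responses = [
    [-2.0, 3.5],
    [0.0, -0.1],
]

activated = relu(responses)
print(activated)
```

Only the strong positive response survives, which is the mechanism by which weak or irrelevant filter matches are kept from propagating deeper into the network.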

Fully Connected Layers: Making the Final Decision

The final stage of the CNN is the fully connected layer. Here, all the features identified in the previous steps are combined to reach a conclusion. For instance, if the network has detected a set of propellers, a specific frame shape, and a landing gear, the fully connected layer identifies the object as “another drone.” This classification is the “brain” of the operation, providing the high-level logic needed for autonomous decision-making.
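The decision step can be illustrated with a tiny fully connected layer followed by a softmax, which is a common way to turn class scores into probabilities. Everything specific here is hypothetical: the three labels, the three input features (standing in for "propellers", "frame shape", and "landing gear"), and the hand-picked weights, which in a real network would be learned during training.

```python
import math


def dense(features, weights, biases):
    """Fully connected layer: each class score is a weighted sum of all input features."""
    return [
        sum(w * x for w, x in zip(class_weights, features)) + bias
        for class_weights, bias in zip(weights, biases)
    ]


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


LABELS = ["drone", "bird", "kite"]  # hypothetical classes

# Hypothetical feature strengths: propellers, frame shape, landing gear.
features = [1.0, 1.0, 1.0]

# Hand-picked weights for illustration only (one row per class).
WEIGHTS = [
    [2.0, 1.5, 1.5],
    [-1.0, 0.5, -1.0],
    [-1.0, 1.0, -0.5],
]
BIASES = [0.0, 0.0, 0.0]

probabilities = softmax(dense(features, WEIGHTS, BIASES))
prediction = LABELS[probabilities.index(max(probabilities))]
print(prediction, probabilities)
```

With all three features present, the "drone" row produces by far the largest score, so it receives almost all of the probability mass.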

How CNNs Enable Advanced Drone Autonomy

The true value of CNNs is realized when their output is integrated with the drone's flight control system, often by running the network on an onboard companion computer that feeds the flight controller. This integration enables a level of autonomy that was previously impractical, allowing the aircraft to handle dynamic environments in real time.

Object Detection and Classification

Object detection is perhaps the most visible application of CNNs in modern drones. High-end consumer and enterprise drones use CNN-based models to identify and track objects. Whether it is a filmmaker using a “Follow-Me” mode to track a mountain biker or a security drone identifying an unauthorized vehicle on a restricted site, CNNs provide the visual recognition engine.

The precision of these networks allows drones to distinguish between similar-looking objects. For example, a drone used in livestock management can use a CNN to not only count sheep but also identify signs of distress or illness based on movement patterns and posture, tasks that require a deep level of visual “understanding.”
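Detectors typically report each object as a bounding box, and a standard way to compare two boxes (for example, to decide whether a detection in the current frame is the same object tracked in the previous frame) is intersection over union (IoU). The sketch below assumes boxes given as `(x_min, y_min, x_max, y_max)` in pixels; that layout is a convention chosen for this example.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    # Corners of the overlapping rectangle, if any.
    ix_min = max(box_a[0], box_b[0])
    iy_min = max(box_a[1], box_b[1])
    ix_max = min(box_a[2], box_b[2])
    iy_max = min(box_a[3], box_b[3])

    inter_w = max(0, ix_max - ix_min)
    inter_h = max(0, iy_max - iy_min)
    intersection = inter_w * inter_h

    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


previous_frame_box = (0, 0, 10, 10)
current_frame_box = (5, 0, 15, 10)
print(iou(previous_frame_box, current_frame_box))
```

An IoU near 1 means the boxes almost coincide, and a value of 0 means they do not overlap at all. A tracker would usually accept a match only above some tuned threshold.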

Real-Time Path Planning and Obstacle Avoidance

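Beyond simple recognition, CNNs are vital for obstacle avoidance systems. By processing data from multiple sensors, including RGB cameras, thermal sensors, and LiDAR, a CNN-based perception stack can help build a real-time 3D map of the environment. Mapping of this kind is often combined with SLAM (Simultaneous Localization and Mapping), a family of techniques in which the drone estimates its own position while building the map. CNNs can supply depth estimates and visual features to a SLAM pipeline, but SLAM itself is not a CNN.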

As the drone flies, the CNN identifies obstacles such as tree branches, power lines, or glass walls. The system doesn’t just see these objects; it calculates their distance and predicts their movement. If a drone is flying through a forest, the CNN-driven autonomy system can calculate the safest path through the canopy at high speeds, making adjustments in milliseconds to avoid collisions.
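To make the path-selection idea concrete, here is a deliberately simplified sketch. It assumes the perception stack has already produced a depth map (a grid of distances in meters, one per image region) and picks the horizontal sector whose nearest obstacle is farthest away. The function name, the three-sector split, and the sample distances are all hypothetical. Real planners also account for vehicle dynamics, uncertainty, and the mission goal.

```python
def safest_sector(depth_map, num_sectors=3):
    """Split the depth map into vertical sectors and return (best_index, clearances).

    The clearance of a sector is the distance to its nearest obstacle.
    Assumes the map is at least `num_sectors` columns wide.
    """
    width = len(depth_map[0])
    sector_width = width // num_sectors
    clearances = []
    for s in range(num_sectors):
        start = s * sector_width
        # The last sector absorbs any leftover columns.
        end = width if s == num_sectors - 1 else start + sector_width
        nearest = min(row[c] for row in depth_map for c in range(start, end))
        clearances.append(nearest)
    best = max(range(num_sectors), key=lambda s: clearances[s])
    return best, clearances


# Assumed depth estimates in meters (left, center, right thirds of the view).
depth_map = [
    [2.0, 3.0, 12.0, 15.0, 4.0, 5.0],
    [2.5, 1.5, 10.0, 14.0, 6.0, 3.5],
]

best, clearances = safest_sector(depth_map)
print(best, clearances)
```

In this example the center sector has the most clearance, so a planner built on this heuristic would steer straight ahead rather than left or right.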

AI-Powered “Follow-Me” Modes

In the world of aerial filmmaking and tech innovation, CNNs have revolutionized the “Follow-Me” feature. Older versions of this technology relied on GPS signals from a controller or wearable device. Modern drones, however, use vision-based tracking powered by CNNs. This allows the drone to maintain a lock on a subject even if the GPS signal is lost or if the subject moves behind an obstacle. The CNN “remembers” the visual characteristics of the subject and can re-acquire the target as soon as it reappears.
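One plausible way to implement this kind of visual "memory" is to store a feature vector (an embedding) for the subject and compare it against the embeddings of new detections when the subject reappears. The sketch below uses cosine similarity for the comparison. The embeddings and the 0.8 threshold are invented for illustration, and commercial trackers may work quite differently.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def reacquire(target_embedding, candidates, threshold=0.8):
    """Return the index of the best-matching candidate, or None if none clears the threshold."""
    best_index, best_score = None, threshold
    for index, embedding in enumerate(candidates):
        score = cosine_similarity(target_embedding, embedding)
        if score >= best_score:
            best_index, best_score = index, score
    return best_index


# Hypothetical embedding remembered for the tracked subject.
target = [1.0, 0.0, 1.0]

# Hypothetical embeddings of objects detected after the occlusion.
candidates = [
    [0.0, 1.0, 0.0],  # looks nothing like the subject
    [0.9, 0.1, 1.1],  # close match
]

print(reacquire(target, candidates))
```

Returning `None` when nothing clears the threshold matters in practice: locking onto the wrong subject is usually worse than hovering and waiting.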

CNNs in Mapping and Remote Sensing

While flight autonomy is a major focus, the impact of CNNs on the data collected by drones is equally profound. In industries like agriculture, construction, and environmental science, drones are used to gather vast amounts of aerial imagery that would be impossible for humans to analyze manually.

Automated Feature Extraction

In large-scale mapping projects, CNNs are used to perform automated feature extraction. For example, in urban planning, a drone can capture thousands of images of a city. A trained CNN can then process these images to automatically identify and categorize features such as roads, buildings, swimming pools, and solar panels. This turns raw imagery into structured GIS (Geographic Information System) data in a fraction of the time it would take a human analyst.
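The last step, turning a pixel-space detection into GIS data, might look roughly like the sketch below. It assumes a north-up orthomosaic with a known top-left origin and a square pixel size in projected map units (for example meters), and it emits a GeoJSON-style feature. All names and numbers are hypothetical. Strict GeoJSON expects WGS 84 longitude and latitude, so a real pipeline would reproject the coordinates first.

```python
def pixel_to_map(col, row, origin_x, origin_y, pixel_size):
    """North-up raster: x grows with the column index, y shrinks with the row index."""
    return origin_x + col * pixel_size, origin_y - row * pixel_size


def detection_to_feature(label, box, origin_x, origin_y, pixel_size):
    """Convert a pixel box (col_min, row_min, col_max, row_max) to a GeoJSON-style Feature."""
    col_min, row_min, col_max, row_max = box
    west, north = pixel_to_map(col_min, row_min, origin_x, origin_y, pixel_size)
    east, south = pixel_to_map(col_max, row_max, origin_x, origin_y, pixel_size)
    # Closed ring, counter-clockwise, starting at the south-west corner.
    ring = [[west, south], [east, south], [east, north], [west, north], [west, south]]
    return {
        "type": "Feature",
        "properties": {"label": label},
        "geometry": {"type": "Polygon", "coordinates": [ring]},
    }


# Hypothetical detection on an orthomosaic with 0.5-unit pixels.
feature = detection_to_feature(
    "building", (10, 20, 30, 40), origin_x=500000.0, origin_y=4000000.0, pixel_size=0.5
)
print(feature)
```

Once detections are expressed this way, they can be loaded into standard GIS tools alongside existing map layers.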

Agricultural Monitoring and Crop Health Analysis

In precision agriculture, CNNs are trained to recognize specific biological signatures. Drones equipped with multispectral cameras capture data that the human eye cannot see. CNNs analyze these images to detect early signs of pest infestations, nutrient deficiencies, or dehydration. By identifying these issues at the “leaf level” across hundreds of acres, CNNs enable farmers to apply treatments only where they are needed, reducing costs and environmental impact.
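For context on what multispectral data adds, a classical (non-CNN) index that is often computed from the near-infrared and red bands is NDVI, defined as (NIR - Red) / (NIR + Red). Healthy vegetation reflects strongly in near-infrared, so low values can indicate stressed plants. The sketch below is a simple baseline of this kind, not the CNN analysis described above. The reflectance values and the 0.4 threshold are assumptions for illustration.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index for one pixel."""
    denominator = nir + red
    return (nir - red) / denominator if denominator != 0 else 0.0


def flag_stressed(nir_band, red_band, threshold=0.4):
    """Return (row, col) positions whose NDVI falls below the threshold."""
    flagged = []
    for r, (nir_row, red_row) in enumerate(zip(nir_band, red_band)):
        for c, (nir, red) in enumerate(zip(nir_row, red_row)):
            if ndvi(nir, red) < threshold:
                flagged.append((r, c))
    return flagged


# Assumed reflectance values in the range 0..1 for a 1x2 patch of field.
nir_band = [[0.8, 0.5]]
red_band = [[0.2, 0.4]]

print(flag_stressed(nir_band, red_band))
```

An index map like this can serve as an extra input channel or a sanity check next to a CNN's predictions.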

Infrastructure Inspection and Fault Detection

Inspecting critical infrastructure such as bridges, wind turbines, and cell towers is hazardous work that often requires technicians to operate at height. Drones powered by CNNs are making this task safer and more efficient. A drone can fly close to a wind turbine blade and use a CNN to identify hairline cracks or signs of erosion in high-resolution imagery. The network can classify the severity of the damage, allowing maintenance crews to prioritize repairs based on observed condition rather than a fixed schedule.
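Once a network has assigned a severity class to each finding, prioritizing repairs is a simple sorting problem. The sketch below assumes three hypothetical severity labels and a per-finding confidence score. The field names and sample findings are invented for illustration.

```python
# Hypothetical severity classes, most urgent first.
SEVERITY_RANK = {"critical": 0, "moderate": 1, "minor": 2}


def prioritize(findings):
    """Order findings by severity, then by classifier confidence (highest first)."""
    return sorted(
        findings,
        key=lambda f: (SEVERITY_RANK[f["severity"]], -f["confidence"]),
    )


# Invented example output from an inspection flight.
findings = [
    {"asset": "blade-2", "severity": "minor", "confidence": 0.95},
    {"asset": "blade-1", "severity": "critical", "confidence": 0.80},
    {"asset": "blade-3", "severity": "critical", "confidence": 0.92},
    {"asset": "tower", "severity": "moderate", "confidence": 0.70},
]

work_queue = prioritize(findings)
print([f["asset"] for f in work_queue])
```

Sorting on confidence within a severity class puts the findings the model is most sure about at the top of each group.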

The Future of CNNs in Drone Innovation

As we look toward the future, the role of CNNs in drone technology is set to expand even further, driven by improvements in hardware and more sophisticated neural architectures.

Edge Computing and On-Board AI

One of the biggest hurdles in drone AI has been the need for heavy processing power. Historically, complex CNNs had to be run on powerful ground stations or in the cloud. However, the rise of “Edge AI” hardware, including embedded modules such as the NVIDIA Jetson family and dedicated Neural Processing Units (NPUs), allows drones to run deep learning models directly on the aircraft. This reduces latency, because the drone does not need to send data back and forth to a server, and it keeps the autonomy working when the radio link is weak or lost.
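A quick back-of-the-envelope calculation shows why latency matters: while the drone waits for a result, it keeps flying. The helper below computes the distance covered during that wait. The speed and the two latency figures are illustrative assumptions, not measurements of any particular hardware or network.

```python
def distance_during_latency_m(speed_m_s, latency_ms):
    """Distance (meters) the drone travels before a perception result is available."""
    return speed_m_s * latency_ms / 1000.0


SPEED_M_S = 15.0          # assumed cruise speed
ONBOARD_LATENCY_MS = 30   # assumed on-board inference time
OFFBOARD_LATENCY_MS = 200 # assumed round trip to a remote server

onboard = distance_during_latency_m(SPEED_M_S, ONBOARD_LATENCY_MS)
offboard = distance_during_latency_m(SPEED_M_S, OFFBOARD_LATENCY_MS)
print(f"on-board: {onboard:.2f} m, off-board: {offboard:.2f} m")
```

Under these assumed numbers the drone travels well under a meter before reacting with on-board inference, versus several meters when it has to wait for a remote server.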

Swarm Intelligence and Collaborative Learning

The next frontier for CNNs in the drone space is swarm intelligence. In this scenario, multiple drones work together to complete a task, such as searching a disaster zone or mapping a massive industrial site. CNNs running on each aircraft turn raw video into compact detections that can be shared over the swarm's communication links, so the drones can benefit from each other’s perspectives. If one drone in the swarm identifies an obstacle or a point of interest, the entire fleet can update its collective understanding of the environment.
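A very simple way to picture the fleet's "collective understanding" is a shared grid in which each drone reports the cells where it saw an obstacle, and a cell is treated as confirmed once enough drones agree. This is only a sketch of the idea: the grid coordinates, the voting rule, and the `min_votes` threshold are assumptions, and real swarms must also deal with localization error and unreliable links.

```python
from collections import Counter


def merge_reports(reports):
    """Count how many drones reported an obstacle in each grid cell."""
    votes = Counter()
    for cells in reports:
        votes.update(set(cells))  # each drone votes at most once per cell
    return votes


def confirmed_obstacles(votes, min_votes=2):
    """Cells reported by at least `min_votes` drones."""
    return {cell for cell, count in votes.items() if count >= min_votes}


# Hypothetical obstacle cells (x, y) reported by three drones.
reports = [
    [(1, 2), (3, 4)],
    [(3, 4)],
    [(3, 4), (5, 5)],
]

votes = merge_reports(reports)
print(confirmed_obstacles(votes))
```

Requiring agreement from more than one drone trades responsiveness for robustness against a single false detection.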

Beyond Vision: Multi-Modal Neural Networks

While CNNs are primarily focused on visual data, the future of drone innovation lies in multi-modal networks. These are systems that combine convolutional layers for vision with other types of neural networks for processing sound, radio frequencies, or chemical signatures. Imagine a drone that uses a CNN to see a leak in a pipeline and a different neural network to “smell” the gas or “hear” the hiss of the pressure release.
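One simple way such signals could be combined is late fusion: each modality's network produces its own confidence that a leak is present, and the scores are merged with a weighted average. The modality names, scores, and weights below are hypothetical, and many multi-modal systems instead fuse features earlier, inside the network.

```python
def late_fusion(scores, weights):
    """Weighted average of per-modality confidence scores (each in 0..1)."""
    total_weight = sum(weights[name] for name in scores)
    return sum(scores[name] * weights[name] for name in scores) / total_weight


# Hypothetical per-modality confidence that a pipeline leak is present.
scores = {"vision": 0.7, "gas": 0.9, "audio": 0.5}

# Hypothetical trust placed in each sensor.
weights = {"vision": 0.5, "gas": 0.3, "audio": 0.2}

leak_confidence = late_fusion(scores, weights)
print(round(leak_confidence, 2))
```

Because the function normalizes by the weights of the modalities actually present, it still returns a sensible value if one sensor drops out and its score is omitted.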

CNNs form the backbone of modern drone intelligence. They are the technology that bridges the gap between a flying camera and an autonomous robot. By enabling machines to interpret visual scenes reliably enough to act on them, Convolutional Neural Networks are more than a feature of modern drones: they are a catalyst for the next generation of aerial innovation. Whether through safer flight, more efficient data collection, or entirely new ways of interacting with our environment, CNNs will continue to shape the drone industry.