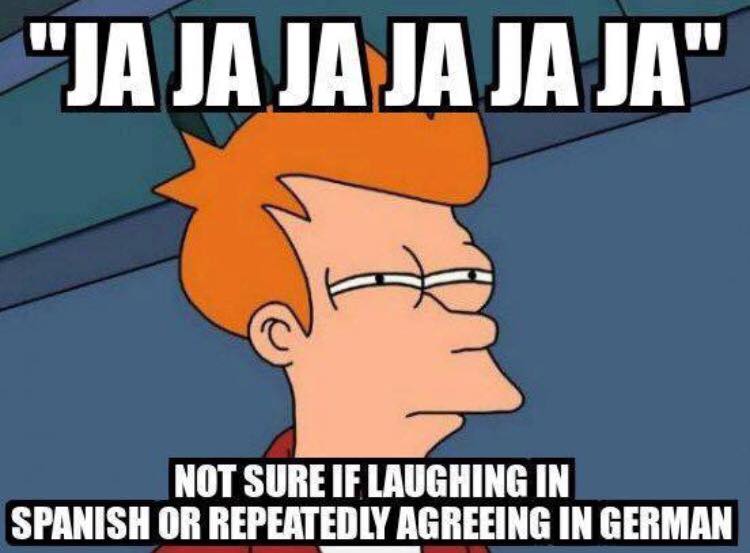

Innovation in unmanned aerial vehicles (UAVs), commonly known as drones, usually centers on flight capability, imaging precision, or operational efficiency. As drones become more integrated into daily life and collaborative work, another frontier emerges: understanding human communication and emotion. The seemingly simple question, “what does jajajaja mean in Spanish,” becomes more than a linguistic inquiry when viewed through the lens of artificial intelligence (AI) and human-drone interaction (HDI). It stands in for a basic challenge facing autonomous systems: interpreting complex human expressions, such as laughter, and folding that interpretation into their operational protocols and interaction models.

The Nuance of Human Expression in an Autonomous World

As drones move from purely mechanical tools to partners in tasks ranging from logistics to creative arts, their ability to recognize and respond to human nuance matters more. “Jajajaja” itself is simple: it is how laughter is written in Spanish, where the letter j sounds much like the English h, so it reads as “hahahaha.” Its implications for drone technology are broader, pointing to a future where machines not only execute commands but also infer emotional states.

Beyond Simple Commands: The Need for Contextual Understanding

Current drone operation relies largely on explicit commands: joystick inputs, pre-programmed flight paths, or specific voice instructions. The next generation of drones, particularly those designed for close human collaboration or sensitive environments, will require a deeper form of intelligence. Imagine a rescue drone working alongside human rescuers who might express frustration, relief, or even a nervous laugh. An AI capable of recognizing these emotional cues could adapt its behavior, for example by slowing down, keeping a less intrusive distance, or prioritizing certain actions based on perceived human stress levels. Interpreting “jajajaja” is not about the drone understanding a joke. It is about recognizing an emotional state that signals comfort or amusement, or that serves as a social signal within a group. This moves beyond basic command interpretation toward a more holistic, empathetic form of interaction.

Consider an aerial filmmaking scenario. If a director playfully exclaims “jajajaja” after a successful, complex shot, a drone system that treats this as positive feedback could log the shot as particularly effective, refine its internal parameters for similar future maneuvers, or show a subtle confirmation in its user interface.

Textual Cues and Emotional Inference

In an increasingly digital world, much human communication happens through text. Whether it appears in a message in a drone control app, a comment on a social media live stream of drone footage, or a textual log from an operator, “jajajaja” is a common example of a text-based emotional cue. For AI systems, interpreting such cues requires natural language processing (NLP). The task is not to translate the string but to infer the underlying emotion: joy, amusement, satisfaction, or even irony. This inference matters because human emotions are rarely singular and their meaning often depends on context. A drone monitoring a construction site, for example, might encounter a textual “jajajaja” from a team leader that indicates a minor mishap being taken lightly, or one that marks a moment of successful problem-solving. Distinguishing between these cases, using additional context such as sensor readings or other verbal cues, would allow the drone to provide more relevant data or assistance. The challenge lies in moving from keyword recognition to sentiment analysis, where the system considers not just what is said, but how it is said and what it signifies in a given situation.

AI’s Role in Bridging the Communication Gap

The ambition to enable drones to understand complex human expressions like “jajajaja” fundamentally rests on advancements in artificial intelligence. AI serves as the bridge between raw linguistic data and actionable operational intelligence for autonomous systems.

Natural Language Processing for Human-Drone Interfaces

Natural Language Processing (NLP) is the cornerstone of allowing drones to understand and interact with human language. For a drone to interpret “jajajaja,” its NLP module would need to go beyond basic dictionary definitions. It would require:

- Lexical Analysis: Recognizing “jajajaja” as a specific textual pattern representing laughter, akin to “lol” or “haha” in English.

- Contextual Understanding: Analyzing the surrounding text, the interaction history, and the operational context (e.g., mission type, current drone status) to gauge the intent and significance of the laughter. Is it a relaxed expression of humor, a signal of agreement, or perhaps a nervous reaction?

- Sentiment Analysis: Determining the emotional tone associated with the expression. “jajajaja” is generally positive, but its intensity and specific emotional valence (e.g., gentle amusement vs. boisterous joy) can vary.

- Cultural Nuance: Recognizing that “jajajaja” is specific to Spanish, and that other languages write laughter differently (Portuguese speakers, for example, more often use “kkkk” or “rsrs”), which calls for diverse linguistic models.
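The lexical step in the list above can be sketched in a few lines of Python. This is a minimal illustration, not a production NLP module: the `LAUGHTER_PATTERNS` table, the `LaughterCue` type, and the `find_laughter` function are hypothetical names introduced here, and match length is used only as a crude stand-in for intensity.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical pattern table: language tag -> regex for written laughter.
# The patterns are illustrative and far from exhaustive.
LAUGHTER_PATTERNS = {
    "es": re.compile(r"\b(?:ja){2,}j?\b", re.IGNORECASE),
    "en": re.compile(r"\b(?:ha){2,}h?\b|\blol\b", re.IGNORECASE),
    "ko": re.compile(r"ㅋ{2,}"),
    "zh": re.compile(r"哈{2,}"),
}


@dataclass
class LaughterCue:
    language: str   # key from LAUGHTER_PATTERNS
    text: str       # the matched laughter string
    intensity: int  # crude proxy: number of characters matched


def find_laughter(message: str) -> Optional[LaughterCue]:
    """Return a cue for the first pattern (in table order) that matches, else None."""
    for language, pattern in LAUGHTER_PATTERNS.items():
        match = pattern.search(message)
        if match:
            matched = match.group(0)
            return LaughterCue(language, matched, len(matched))
    return None
```

Calling `find_laughter("Oh wow, jajajaja, look at that!")` yields a cue with language `"es"` and text `"jajajaja"`. Detection is only the first item in the list: the function says nothing about whether the laughter is relaxed, ironic, or nervous, which is where the contextual and sentiment steps come in.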

Integrating advanced NLP into drone control systems would allow for more intuitive voice commands, more nuanced feedback from operators, and even the ability for drones to respond in ways that feel more natural and less robotic, enhancing overall user experience and reducing cognitive load on human operators. This could mean a drone adjusting its camera angle based on a verbally expressed “Oh wow, jajajaja, look at that!” from an aerial cinematographer, without explicit joystick input.

Interpreting Sentiment: From “jajajaja” to Joy

The true leap in AI for drones is not just in processing words, but in interpreting the sentiment behind them. “jajajaja” typically conveys joy or amusement, though context can shift it toward irony or nervousness. For an AI, recognizing this sentiment involves:

- Emotional Mapping: Linking specific linguistic patterns to known human emotions.

- Predictive Behavior: Using the identified emotion to anticipate human actions or needs. If an operator expresses “jajajaja” during a complex maneuver, it might indicate satisfaction, suggesting the drone’s autonomous execution was successful and could be replicated or enhanced. Conversely, laughter in a stressful situation might be a coping mechanism, prompting the drone’s AI to prioritize safety protocols or offer alternative solutions.

- Adaptive Learning: Continuously refining its understanding of emotional cues based on operator feedback and mission outcomes. Over time, an AI drone could learn the specific emotional communication patterns of its human collaborators, fostering a more personalized and efficient working relationship.
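As a rough sketch of the emotional mapping and predictive behavior items above, the same laughter can be mapped to different interpretations depending on context. Everything here is an assumption made for illustration: the `MissionContext` fields, the 0.7 stress threshold, and the response names are hypothetical and do not come from any real drone SDK.

```python
from dataclasses import dataclass
from enum import Enum


class Interpretation(Enum):
    SATISFACTION = "satisfaction"
    NERVOUS_COPING = "nervous_coping"
    AMBIGUOUS = "ambiguous"


@dataclass
class MissionContext:
    # Hypothetical signals; a real system would have to estimate these somehow.
    maneuver_succeeded: bool
    operator_stress: float  # 0.0 (calm) to 1.0 (high stress)


def interpret_laughter(ctx: MissionContext,
                       stress_threshold: float = 0.7) -> Interpretation:
    """Map detected laughter to an interpretation using mission context."""
    # High stress takes precedence: laughter may be a coping mechanism.
    if ctx.operator_stress >= stress_threshold:
        return Interpretation.NERVOUS_COPING
    # Calm operator and a successful maneuver: read it as satisfaction.
    if ctx.maneuver_succeeded:
        return Interpretation.SATISFACTION
    return Interpretation.AMBIGUOUS


# Hypothetical response names, one per interpretation.
SUGGESTED_RESPONSE = {
    Interpretation.SATISFACTION: "log_maneuver_as_successful",
    Interpretation.NERVOUS_COPING: "prioritize_safety_protocols",
    Interpretation.AMBIGUOUS: "no_change",
}
```

Checking stress first is a deliberate, conservative choice in this sketch: when the signals conflict, the cautious reading wins. A learned model would replace these hand-written rules, but the shape of the decision (cue plus context in, interpretation and suggested response out) stays the same.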

This goes beyond simple operational directives. It moves towards an AI that can understand the ‘why’ behind human expressions, enabling drones to become truly adaptive, intelligent partners rather than mere remote-controlled machines.

Future Paradigms of Human-Drone Collaboration

The ability of drones to interpret human expressions like “jajajaja” unlocks new paradigms for human-drone collaboration, pushing the boundaries of what autonomous systems can achieve in tandem with human partners.

Adaptive Flight Paths and Responsive Behavior

Imagine a drone assisting in crowd monitoring or emergency response. If the drone’s AI can interpret expressions of panic, relief, or collective amusement from the crowd below (for example, by detecting audible laughter, the spoken counterpart of a typed “jajajaja”), it could adapt its flight path and data collection strategy in real time. Celebratory laughter during a public event could signal a positive, relaxed atmosphere, allowing the drone to continue its routine monitoring. Conversely, if expressions of distress are detected, the drone might shift to search and rescue protocols, zoom its camera into areas of concern, or alert human responders more urgently. In aerial filmmaking, if a drone infers from operator chatter containing “jajajaja” that a particular shot is generating excitement, it could autonomously repeat or refine the flight path to capture multiple variations, enhancing creative output without explicit prompting. This dynamic responsiveness, driven by an estimate of human emotional states, would mark a significant shift from rigid, pre-programmed flight patterns to fluid adaptation.

Ethical Considerations and User Experience in Emotionally Aware Systems

Developing drones capable of interpreting human emotions also raises crucial ethical considerations. Privacy concerns, particularly regarding the collection and analysis of human emotional data, must be rigorously addressed. Safeguards are needed to ensure that such capabilities are used responsibly and transparently, respecting individual rights and avoiding misuse. Furthermore, the user experience (UX) design for emotionally aware drone systems becomes critical. How should a drone communicate its understanding of human emotion? Should it offer verbal affirmations, display visual cues, or subtly adjust its behavior? The goal is to create interactions that feel natural and supportive, not intrusive or unnerving. Over-interpretation or misinterpretation of human emotions could lead to undesirable or even dangerous outcomes, underscoring the need for robust, transparent, and user-testable AI models. The design must ensure that the drone’s interpretation of “jajajaja” translates into helpful, predictable, and trustworthy behavior.

Training Data and Algorithmic Development

The foundation for these advanced capabilities lies in comprehensive training data and sophisticated algorithmic development. To accurately interpret expressions like “jajajaja,” AI models require vast datasets of human communication, annotated with emotional labels and contextual information.
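To make “annotated with emotional labels and contextual information” concrete, here is one possible shape for a single training record. The `AnnotatedUtterance` type and its field names are hypothetical, and the example values are invented for illustration rather than drawn from any real dataset.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class AnnotatedUtterance:
    text: str         # the raw message
    language: str     # e.g. "es"
    emotion: str      # annotator's label, e.g. "amusement", "sarcasm"
    intensity: float  # annotator's judgment, 0.0 to 1.0
    context: str      # free-text note on the situation

    def __post_init__(self) -> None:
        # Reject out-of-range intensity values at construction time.
        if not 0.0 <= self.intensity <= 1.0:
            raise ValueError("intensity must be between 0.0 and 1.0")


# An invented example record.
example = AnnotatedUtterance(
    text="jajajaja, perfecto",
    language="es",
    emotion="amusement",
    intensity=0.8,
    context="operator comment after a completed shot",
)

# One JSON object per line is a common way to store such records.
line = json.dumps(asdict(example), ensure_ascii=False)
```

Keeping the situational note next to the label is what lets a model later separate genuine amusement from sarcastic or nervous laughter that looks identical on the page.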

Building Robust Models for Diverse Linguistic and Emotional Inputs

Developing AI models that can accurately interpret “jajajaja” and similar expressions across various languages and cultural contexts is an immense undertaking. It involves:

- Multi-modal Data Collection: Gathering not just text, but also audio and visual data of human expressions, allowing the AI to correlate textual laughter with vocal tone and facial cues.

- Contextual Annotation: Expert annotators are needed to label data, not just for the presence of laughter, but for its specific emotional valence, intensity, and the surrounding situational context. This helps the AI differentiate between genuine amusement, sarcastic laughter, or nervous giggles.

- Cross-Cultural Training: Recognizing that emotional expressions, while universal in principle, manifest differently across cultures. A “jajajaja” in Spanish might have subtle differences in usage compared to “ㅋㅋㅋㅋㅋ” in Korean or “哈哈哈哈” in Chinese. AI models must be trained on diverse datasets to be globally effective.

- Reinforcement Learning: Enabling drones to learn from their interactions. If a drone’s interpretation of an operator’s “jajajaja” leads to a positive outcome (e.g., a successful maneuver, a clear shot), the AI reinforces that interpretation. Conversely, negative outcomes help the AI refine its understanding.
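The reinforcement idea in the last item can be illustrated with a running-value update, which is much simpler than a full reinforcement learning setup but shows the feedback loop. The `InterpretationLearner` class, the reward scale, and the learning rate are all assumptions made for this sketch.

```python
from collections import defaultdict
from typing import Iterable


class InterpretationLearner:
    """Tracks how well each interpretation of a cue has worked out so far."""

    def __init__(self, learning_rate: float = 0.2) -> None:
        self.learning_rate = learning_rate
        # interpretation name -> estimated value, starting at 0.0
        self.values = defaultdict(float)

    def update(self, interpretation: str, reward: float) -> None:
        """Move the estimate a fraction of the way toward the observed reward."""
        old = self.values[interpretation]
        self.values[interpretation] = old + self.learning_rate * (reward - old)

    def best(self, candidates: Iterable[str]) -> str:
        """Return the candidate with the highest current estimate."""
        return max(candidates, key=lambda name: self.values[name])


learner = InterpretationLearner(learning_rate=0.5)
learner.update("satisfaction", 1.0)     # reading it as satisfaction worked out
learner.update("nervous_coping", -1.0)  # reading it as nervousness did not
```

After these two updates, `learner.best(["satisfaction", "nervous_coping"])` returns `"satisfaction"`. A separate learner per operator would be one way to approximate the personalized communication patterns described earlier, though how rewards are defined and measured is the hard part and is left open here.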

This iterative process of data collection, annotation, model training, and real-world testing is essential for building robust AI systems that can reliably understand and respond to the rich tapestry of human emotion and communication, moving beyond the literal meaning of words to grasp the underlying human experience. The journey from “what does jajajaja mean in Spanish” to a drone that truly understands the sentiment it conveys is a testament to the ongoing innovation in AI and its transformative potential for human-drone interaction.