In the realm of audio, the term “DSP” is ubiquitous, appearing on everything from high-end studio gear to the latest smartphone apps. But what exactly does DSP stand for, and more importantly, what does it do? For musicians, audio engineers, and even casual listeners, understanding Digital Signal Processing (DSP) is key to appreciating the sophisticated technology that shapes the sound we experience every day. At its core, DSP refers to the manipulation of digital audio signals. This manipulation can encompass a vast array of processes, from simple adjustments like volume control and equalization to complex transformations like reverberation, pitch shifting, and compression. The fundamental principle is to take an analog audio signal, convert it into a digital format, process that digital information, and then convert it back into an analog signal that can be heard. This digital realm offers unparalleled flexibility and precision, allowing for creative sound design and meticulous audio restoration that would be impractical or impossible with purely analog methods.

The journey of sound through the digital pipeline is a fascinating one, and DSP is the engine that drives its transformation. It’s the invisible hand that sculpts the raw audio captured by microphones, refines it for playback, and imbues it with character and polish. Whether you’re an aspiring producer meticulously crafting a mix, a live sound engineer balancing an orchestra, or simply someone enjoying your favorite album, DSP is silently at work, enhancing your auditory experience. This article will delve into the intricacies of Digital Signal Processing, exploring its fundamental principles, its diverse applications in music production and performance, and its ongoing evolution, demonstrating how this powerful technology has become indispensable in the modern audio landscape.

The Digital Transformation: From Analog Waves to Digital Data

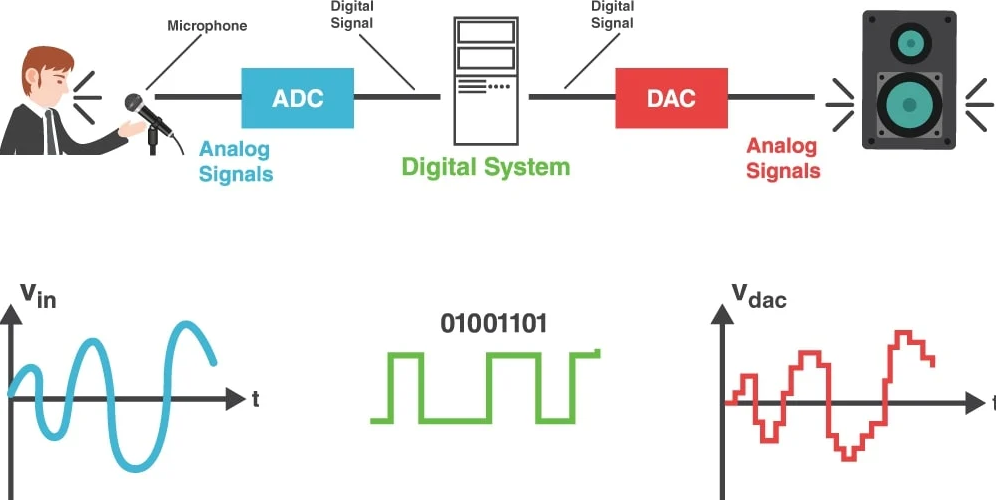

The genesis of DSP in music lies in the necessity of translating the continuous, analog waveform of sound into a discrete, digital representation. This process is fundamental to how all digital audio systems function, from recording studios to streaming services. Without this initial conversion, the sophisticated manipulation that DSP offers would be impossible.

Analog to Digital Conversion (ADC): Capturing the Sound

Sound, in its natural state, is an analog phenomenon. It’s a continuous wave of pressure variations that travel through the air. To process this sound digitally, it must first be converted into a format that computers can understand: numbers. This is where the Analog to Digital Converter (ADC) comes into play.

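An ADC samples the incoming analog audio signal at regular intervals. The number of samples taken per second is known as the sampling rate. Common sampling rates in audio include 44.1 kHz (kilohertz) for CDs, 48 kHz for professional video, and higher rates like 96 kHz or 192 kHz for high-resolution audio. The sampling rate sets the highest frequency that can be captured: according to the Nyquist-Shannon sampling theorem, a signal sampled at a given rate can only represent frequencies below half that rate (the Nyquist frequency). At 44.1 kHz that limit is 22.05 kHz, just above the roughly 20 kHz upper limit of human hearing. Higher rates extend the captured bandwidth further and leave more room for the anti-aliasing filter that removes content above the limit before sampling.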
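The Nyquist limit is easy to see numerically. The sketch below uses NumPy and hypothetical helper names (`sample_sine`, `alias_frequency`). It idealizes an ADC as reading a sine wave at exact instants, then shows where a tone above half the sampling rate ends up if no anti-aliasing filter removes it first. Real converters filter such content out before sampling.

```python
import numpy as np

def sample_sine(freq_hz, fs, duration_s):
    """Sample a unit-amplitude sine wave at fs samples per second (an idealized ADC)."""
    n = np.arange(int(round(duration_s * fs)))
    return np.sin(2 * np.pi * freq_hz * n / fs)

def alias_frequency(freq_hz, fs):
    """Frequency (Hz) at which a tone appears after sampling at fs with no anti-aliasing filter."""
    f = freq_hz % fs          # sampling cannot tell f apart from f + k*fs
    return min(f, fs - f)     # and folds everything into the range 0 .. fs/2

fs = 44_100
print(fs / 2)                        # the Nyquist frequency for CD audio
print(alias_frequency(1_000, fs))    # below Nyquist: unchanged
print(alias_frequency(30_000, fs))   # above Nyquist: folds down into the audible band
```

A 30 kHz tone sampled at 44.1 kHz produces exactly the same samples as a 14.1 kHz tone with inverted sign. Once sampled, the two are indistinguishable, which is why the filtering has to happen before conversion.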

Alongside the sampling rate, the bit depth determines the resolution of each sample. Bit depth refers to the number of bits used to represent the amplitude (loudness) of each sample. For instance, a 16-bit system can represent 2^16 (65,536) different amplitude levels, while a 24-bit system offers 2^24 (16,777,216) levels. A higher bit depth results in a wider dynamic range (the difference between the loudest and quietest sounds) and lower quantization noise, leading to a more detailed and nuanced audio signal.
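To make bit depth concrete, here is a minimal sketch that rounds a sine wave to a given number of levels and measures the resulting signal-to-noise ratio. It uses NumPy, and the helper names `quantize`, `dynamic_range_db` and `snr_db` are illustrative, not from any library. Each extra bit doubles the number of levels and adds roughly 6 dB of dynamic range. That is where the familiar figures of about 96 dB for 16-bit audio and about 144 dB for 24-bit audio come from.

```python
import numpy as np

def quantize(x, bits):
    """Round samples in [-1, 1) to the nearest of 2**bits evenly spaced levels."""
    step = 2.0 / 2 ** bits                                           # spacing between adjacent levels
    levels = np.round(x / step)
    levels = np.clip(levels, -2 ** (bits - 1), 2 ** (bits - 1) - 1)  # stay inside the integer range
    return levels * step

def dynamic_range_db(bits):
    """Ratio of full scale to one quantization step, in dB (about 6.02 dB per bit)."""
    return 20 * np.log10(2 ** bits)

def snr_db(clean, quantized):
    """Signal-to-quantization-noise ratio in dB."""
    noise = quantized - clean
    return 10 * np.log10(np.mean(clean ** 2) / np.mean(noise ** 2))

t = np.arange(48_000) / 48_000
tone = 0.9 * np.sin(2 * np.pi * 997 * t)      # one second of a near-full-scale test tone
for bits in (8, 16, 24):
    print(bits, round(dynamic_range_db(bits), 1), round(snr_db(tone, quantize(tone, bits)), 1))
```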

Digital to Analog Conversion (DAC): Bringing Sound Back to Life

Once the audio has been processed digitally, it needs to be converted back into an analog signal that our ears can perceive. This is the role of the Digital to Analog Converter (DAC). The DAC takes the digital data (the sampled and quantized values) and reconstructs a continuous analog waveform. The quality of the DAC is crucial, because a poor-quality conversion can introduce artifacts such as noise, distortion, and timing jitter that degrade the overall sound. A DAC does more than simply “connect the dots” between samples. A reconstruction (low-pass) filter smooths its output into a continuous waveform containing only frequencies below half the sampling rate. The result mimics the original sound, but now potentially transformed by DSP.
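In theory, a band-limited signal can be rebuilt exactly from its samples by placing a sinc pulse at every sample and summing them (Whittaker-Shannon interpolation). The sketch below, with a hypothetical `reconstruct` helper, applies that idea to a short, finite block of samples, so it is only approximate near the block's edges. Practical DACs approximate the same ideal with oversampling and finite-length digital filters followed by a simpler analog filter.

```python
import numpy as np

def reconstruct(samples, fs, t):
    """Ideal (Whittaker-Shannon) reconstruction: a sum of sinc pulses, one per sample."""
    n = np.arange(len(samples))
    # np.sinc(u) = sin(pi*u) / (pi*u): it equals 1 at u = 0 and 0 at every other integer,
    # so at a sample instant only that sample contributes
    return np.array([np.sum(samples * np.sinc(fs * ti - n)) for ti in t])

fs = 8_000
n = np.arange(64)
samples = np.sin(2 * np.pi * 440 * n / fs)       # 64 samples of a 440 Hz tone
t_fine = np.linspace(0, 63 / fs, 1_000)          # evaluate between the sample instants too
smooth = reconstruct(samples, fs, t_fine)        # a continuous-looking curve through the samples
```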

The Core of DSP: Algorithmic Manipulations of Digital Audio

With the audio signal now in a digital format, the true power of DSP can be unleashed. This involves applying a variety of algorithms to modify the digital audio data in specific ways. These algorithms are essentially sets of mathematical instructions that dictate how the signal should be altered. The versatility of these algorithms is what makes DSP so transformative in the music industry.

Time-Based Effects: Shaping Space and Dimension

Perhaps the most widely recognized and creatively utilized DSP effects are those that manipulate the time domain of an audio signal. These effects are crucial for creating a sense of space, depth, and character within a mix.

Reverberation (Reverb): Simulating Acoustic Spaces

Reverb is the simulation of the complex reflections of sound that occur in an acoustic environment. Algorithmic reverbs model spaces, from small rooms to grand cathedrals, with networks of delay lines and filters that generate a dense series of echoes from the dry audio signal. Convolution reverbs instead apply an impulse response recorded in a real space. Key parameters in digital reverb include:

- Decay Time: The duration it takes for the reverb to fade away.

- Pre-Delay: The time between the original sound and the onset of the first reflections.

- Diffusion: The density and complexity of the reflections.

- Damping: How high frequencies are absorbed over time, simulating air absorption in real spaces.

By adjusting these parameters, engineers can place instruments in virtual rooms, create vast sonic landscapes, or simply add a touch of ambience.
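One classic way to build an algorithmic reverb is Manfred Schroeder's design: several feedback comb filters in parallel, followed by all-pass filters in series. The sketch below is a minimal, unoptimized Python/NumPy version that maps the parameters listed above onto that structure. The mapping is an illustrative assumption; commercial reverbs use their own, usually undisclosed, designs. The comb and all-pass delays are Schroeder's commonly cited values, and `diffusion` is assumed to be the all-pass gain. The decay time is only approximate once damping is added, because the low-pass filter inside each loop removes extra energy.

```python
import numpy as np

def comb_feedback_gain(delay, decay_time_s, fs):
    # Gain that makes a recirculating loop of `delay` samples fall by 60 dB in decay_time_s
    return 10 ** (-3.0 * delay / (decay_time_s * fs))

def damped_comb(x, delay, gain, damping):
    # Feedback comb filter with a one-pole low-pass in the loop, so highs die away faster
    y, buf, lp, i = np.zeros(len(x)), np.zeros(delay), 0.0, 0
    for n in range(len(x)):
        out = buf[i]                               # value written `delay` samples ago
        lp = (1 - damping) * out + damping * lp    # damping=0 leaves the loop unfiltered
        buf[i] = x[n] + gain * lp
        y[n] = out
        i = (i + 1) % delay
    return y

def allpass(x, delay, g):
    # Schroeder all-pass: smears echoes in time while keeping a flat magnitude response
    y, buf, i = np.zeros(len(x)), np.zeros(delay), 0
    for n in range(len(x)):
        delayed = buf[i]
        v = x[n] + g * delayed
        y[n] = -g * v + delayed
        buf[i] = v
        i = (i + 1) % delay
    return y

def schroeder_reverb(x, fs, decay_time_s=1.5, pre_delay_s=0.02,
                     diffusion=0.7, damping=0.2, wet=0.3):
    x = np.asarray(x, dtype=float)
    pre = int(pre_delay_s * fs)
    delayed = np.concatenate([np.zeros(pre), x])[:len(x)]    # pre-delay before the reverb tail
    tail = np.zeros(len(x))
    for d_s in (0.0297, 0.0371, 0.0411, 0.0437):              # parallel combs (seconds)
        d = max(1, int(d_s * fs))
        tail += damped_comb(delayed, d, comb_feedback_gain(d, decay_time_s, fs), damping)
    tail /= 4
    for d_s in (0.005, 0.0017):                               # series all-pass diffusers (seconds)
        tail = allpass(tail, max(1, int(d_s * fs)), diffusion)
    return (1 - wet) * x + wet * tail                         # blend dry and reverberant signal
```

Feeding a single click (an impulse) through `schroeder_reverb` gives the reverb's impulse response. The response is silent for the pre-delay, then a thickening cloud of reflections decays over roughly `decay_time_s`.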

Delay (Echo): Creating Rhythmic Repetitions

Delay effects, often referred to as echo, create distinct repetitions of the original sound at specific intervals. Unlike the dense wash of reverb, delay is characterized by discrete, audible repeats. Parameters for delay include:

- Delay Time: The time between each echo. This is often synchronized to the tempo of the music (e.g., a quarter note delay, an eighth note delay).

- Feedback: The proportion of the delayed signal fed back into the delay line, which determines how many repeats are heard before the echoes fade out.

- Damping/Filtering: Similar to reverb, this controls how the echoes change in character over time.

Delay can be used creatively to create rhythmic patterns, add width and stereo interest, or to emphasize specific musical phrases.
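Tempo sync is simple arithmetic: at a given tempo, one quarter note lasts 60/BPM seconds. Here is a minimal sketch of a tempo-synced feedback delay. It assumes a quarter note is one beat and uses hypothetical function names; the optional damping filter darkens each successive repeat.

```python
import numpy as np

def tempo_delay_samples(bpm, note_fraction, fs):
    """Delay length for a note value at a given tempo, e.g. note_fraction=1/4 for a quarter note."""
    seconds_per_quarter = 60.0 / bpm           # one beat = one quarter note (x/4 time assumed)
    return int(round(seconds_per_quarter * 4 * note_fraction * fs))

def feedback_delay(x, delay, feedback=0.4, mix=0.5, damping=0.0):
    """Echo line: each repeat is `feedback` times the previous one, optionally low-passed."""
    y, buf, lp, i = np.array(x, dtype=float), np.zeros(delay), 0.0, 0
    for n in range(len(x)):
        echo = buf[i]                              # what was written `delay` samples ago
        lp = (1 - damping) * echo + damping * lp   # one-pole low-pass; damping=0 leaves repeats unfiltered
        buf[i] = x[n] + feedback * lp              # feed the (filtered) echo back into the line
        y[n] += mix * echo                         # dry signal plus echoes
        i = (i + 1) % delay
    return y
```

At 120 BPM and 48 kHz, `tempo_delay_samples(120, 1/4, 48_000)` gives 24,000 samples (half a second). With `feedback=0.5`, each repeat has half the amplitude of the one before (about 6 dB quieter). The feedback amount, rather than a separate repeat count, therefore sets how long the echoes last.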

Dynamic Range Processing: Controlling Loudness and Impact

Dynamic range processing manipulates the difference between the loudest and quietest parts of an audio signal. This is essential for making tracks sound more polished, impactful, and consistent in volume.

Compression: Reducing Dynamic Range

Compression is a fundamental tool for controlling dynamics. It reduces the level of the parts of a signal that rise above a set threshold, narrowing the gap between loud and quiet passages and making the overall sound more even. Key parameters include:

- Threshold: The level at which compression begins to act.

- Ratio: How strongly the signal above the threshold is reduced (e.g., a 4:1 ratio means that for every 4 dB the input rises above the threshold, the output rises by only 1 dB).

- Attack: The time it takes for the compressor to engage once the signal crosses the threshold.

- Release: The time it takes for the compressor to disengage once the signal drops below the threshold.

- Knee: The sharpness of the transition into compression.

Compression can be used subtly to glue a mix together, or more aggressively to create a pumping or “squashed” effect.
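The compressor parameters fit together as a static gain curve followed by attack/release smoothing. The sketch below follows one common textbook structure: a quadratic soft knee plus one-pole smoothing of the gain in dB. It is one of many possible designs, and the function names are hypothetical. Reading the level from each sample's absolute value is a simplification; real compressors use a peak or RMS envelope detector.

```python
import numpy as np

def gain_computer_db(level_db, threshold_db, ratio, knee_db=0.0):
    """Static compression curve: output level (dB) for a given input level (dB)."""
    over = level_db - threshold_db
    if knee_db > 0 and abs(over) <= knee_db / 2:
        # Soft knee: a quadratic blend between 1:1 and 1:ratio across the knee width
        return level_db + (1 / ratio - 1) * (over + knee_db / 2) ** 2 / (2 * knee_db)
    if over > 0:
        return threshold_db + over / ratio     # e.g. at 4:1, 4 dB over becomes 1 dB over
    return level_db                            # below threshold: untouched

def compress(x, fs, threshold_db=-20.0, ratio=4.0, attack_s=0.01, release_s=0.1, knee_db=6.0):
    a_att = np.exp(-1.0 / (attack_s * fs))     # smoothing coefficients for attack and release
    a_rel = np.exp(-1.0 / (release_s * fs))
    gain_db = 0.0
    y = np.empty(len(x))
    for n, s in enumerate(x):
        level_db = 20 * np.log10(max(abs(s), 1e-9))
        target_db = gain_computer_db(level_db, threshold_db, ratio, knee_db) - level_db
        coeff = a_att if target_db < gain_db else a_rel   # more reduction: attack; less: release
        gain_db = coeff * gain_db + (1 - coeff) * target_db
        y[n] = s * 10 ** (gain_db / 20)
    return y
```

For example, `gain_computer_db(-10, -20, 4)` returns -17.5. The input is 10 dB over the threshold, and a 4:1 ratio lets only 2.5 dB of that through.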

Gating: Removing Unwanted Noise

A noise gate works at the opposite end of the level range from a compressor. Rather than turning down signals above a threshold, it attenuates or silences a signal when its level drops below a specific threshold. This is invaluable for removing unwanted background noise, such as amplifier hum or bleed between microphones, during pauses in the wanted signal.

- Threshold: The level below which the gate will close.

- Attack/Release: How quickly the gate opens and closes.

- Hold: A parameter that keeps the gate open for a short duration after the signal drops below the threshold.
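A gate can be sketched with a threshold test, a hold timer and smoothed gain changes. This is a minimal illustration with a hypothetical `noise_gate` function and arbitrary default values. It reads the level directly from each sample, which is a simplification. The hold time keeps the gate from chattering at a waveform's zero crossings.

```python
import numpy as np

def noise_gate(x, fs, threshold_db=-40.0, attack_s=0.001, hold_s=0.05,
               release_s=0.1, floor_db=-80.0):
    """Open when |x| exceeds the threshold, stay open for hold_s, then fade to the floor."""
    threshold = 10 ** (threshold_db / 20)
    floor = 10 ** (floor_db / 20)              # "closed" gain; -80 dB is effectively silence
    a_att = np.exp(-1.0 / (attack_s * fs))     # how fast the gate opens
    a_rel = np.exp(-1.0 / (release_s * fs))    # how fast it closes
    hold_len = int(hold_s * fs)
    hold_left, gain = 0, floor
    y = np.empty(len(x))
    for n, s in enumerate(x):
        if abs(s) >= threshold:
            hold_left = hold_len               # loud: open and re-arm the hold timer
            target = 1.0
        elif hold_left > 0:
            hold_left -= 1                     # recently loud: keep the gate open
            target = 1.0
        else:
            target = floor                     # quiet for longer than hold_s: close
        coeff = a_att if target > gain else a_rel
        gain = coeff * gain + (1 - coeff) * target
        y[n] = s * gain
    return y
```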

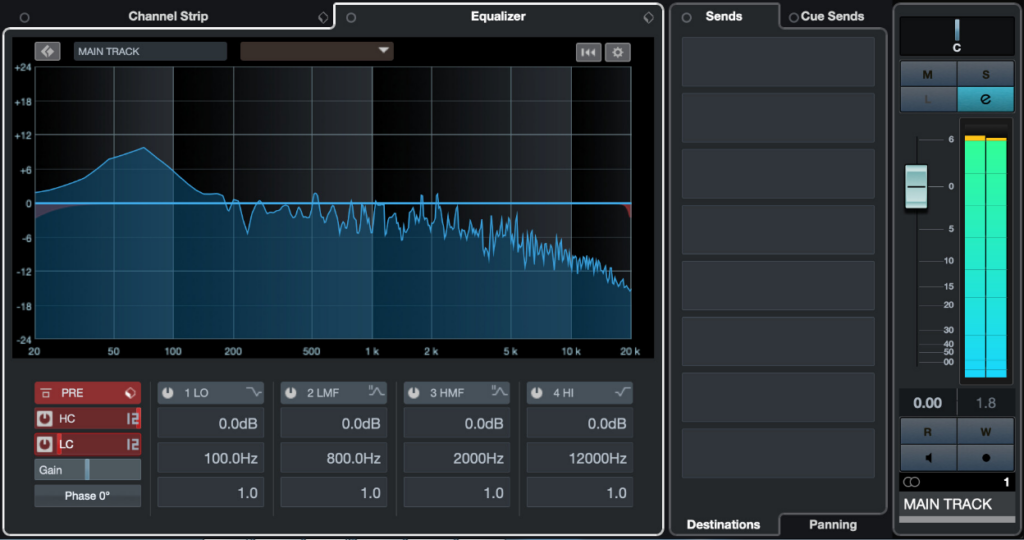

Frequency Domain Processing: Sculpting Tone and Clarity

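Frequency domain processing, most notably equalization (EQ), allows for precise control over the tonal balance of an audio signal. It involves boosting or cutting specific frequencies within the audible spectrum. Most digital EQs are implemented as filters running directly on the samples, but they are designed and adjusted in terms of frequency.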

Equalization (EQ): Shaping Tonal Character

EQ is used to enhance desirable frequencies, reduce problematic ones, and generally shape the sonic character of an instrument or voice. Digital EQs offer a wide array of filter types and precise control.

- Filter Types: High-pass (cuts low frequencies), low-pass (cuts high frequencies), shelving (boosts or cuts all frequencies above or below a chosen corner frequency), and peaking or bell filters, which in a parametric EQ allow precise control over frequency, gain, and bandwidth (Q).

- Frequency Bands: Different bands are associated with different sonic characteristics. For example, low frequencies provide warmth and power, mid-frequencies contain the core body of most sounds, and high frequencies add air, clarity, and sparkle.
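A single parametric band is commonly implemented as a second-order IIR (biquad) filter. The sketch below computes peaking-filter coefficients with the formulas from Robert Bristow-Johnson's widely used Audio EQ Cookbook and applies them in Direct Form I. The function names are illustrative. At the center frequency the gain equals `gain_db`, and far from it the response returns to 0 dB.

```python
import numpy as np

def peaking_eq_coeffs(fs, f0, gain_db, q):
    """Biquad coefficients (b, a) for a peaking band, after the Audio EQ Cookbook."""
    A = 10 ** (gain_db / 40)
    w0 = 2 * np.pi * f0 / fs
    alpha = np.sin(w0) / (2 * q)
    b = np.array([1 + alpha * A, -2 * np.cos(w0), 1 - alpha * A])
    a = np.array([1 + alpha / A, -2 * np.cos(w0), 1 - alpha / A])
    return b / a[0], a / a[0]                  # normalize so that a[0] == 1

def biquad(x, b, a):
    """Direct Form I: y[n] = b0 x[n] + b1 x[n-1] + b2 x[n-2] - a1 y[n-1] - a2 y[n-2]."""
    y = np.zeros(len(x))
    x1 = x2 = y1 = y2 = 0.0
    for n, xn in enumerate(x):
        yn = b[0] * xn + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1, y2, y1 = x1, xn, y1, yn
        y[n] = yn
    return y

def response_db(b, a, f, fs):
    """Magnitude response in dB at frequency f (Hz)."""
    z = np.exp(-1j * 2 * np.pi * f / fs)       # z here stands for e^(-jw)
    return 20 * np.log10(abs(np.polyval(b[::-1], z) / np.polyval(a[::-1], z)))

fs = 48_000
b, a = peaking_eq_coeffs(fs, 1_000, 6.0, 1.0)  # +6 dB bell at 1 kHz, Q = 1
```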

Modulation Effects: Adding Movement and Texture

Modulation effects introduce periodic variations to an audio signal, creating movement, width, and unique textures.

Chorus: Creating a Thicker, Wider Sound

Chorus takes a copy of the audio signal, delays it by a short time that is continuously varied by a low-frequency oscillator (LFO), and mixes it back in with the original. The changing delay slightly detunes the copy, creating the illusion of multiple instruments playing simultaneously and resulting in a richer, wider sound.
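Here is a minimal chorus sketch. It assumes a single voice, a sinusoidal LFO and linear interpolation for the fractional delay; the parameter names and defaults are illustrative. Because the delay time is constantly changing, the delayed copy is alternately sped up and slowed down slightly. That is where the subtle detuning comes from.

```python
import numpy as np

def chorus(x, fs, base_delay_s=0.02, depth_s=0.003, rate_hz=0.8, mix=0.5):
    """Mix the dry signal with a copy whose delay is swept by a low-frequency oscillator."""
    x = np.asarray(x, dtype=float)
    n = np.arange(len(x))
    # Time-varying delay in samples: a base delay plus a slow sinusoidal wobble
    delay = (base_delay_s + depth_s * np.sin(2 * np.pi * rate_hz * n / fs)) * fs
    read_pos = n - delay                        # fractional read position into the past
    # Linear interpolation between neighbouring samples; positions before the start read silence
    wet = np.interp(read_pos, n, x, left=0.0)
    return (1 - mix) * x + mix * wet
```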

Flanger and Phaser: Distinctive Sweeping and Swirling Tones

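Flangers and phasers create sweeping, swirling, or jet-like effects by mixing the signal with a modulated copy of itself. A flanger uses a very short delay (a few milliseconds) swept by a low-frequency oscillator, often with feedback. This produces a series of evenly spaced notches that move up and down the spectrum. A phaser instead passes the signal through a chain of all-pass filters whose phase shift is modulated, producing fewer notches that are not evenly spaced. Both are often used to add character and movement to guitars, synthesizers, and vocals.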
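A little arithmetic shows why a flanger sounds like a sweep. When a signal is mixed equally with a copy delayed by τ seconds, the two cancel at frequencies where the delay equals an odd number of half-periods, f = (2k + 1) / (2τ). The helper names below are hypothetical. The sketch ignores feedback, which makes the effect more resonant.

```python
import numpy as np

def flanger_notches(delay_s, max_freq_hz):
    """Notch frequencies when a signal is mixed 50/50 with a copy delayed by delay_s (no feedback)."""
    k = np.arange(int(max_freq_hz * delay_s + 0.5) + 1)
    f = (2 * k + 1) / (2 * delay_s)            # odd multiples of 1 / (2 * delay)
    return f[f <= max_freq_hz]

def comb_magnitude(delay_s, f):
    """|1 + e^(-j 2 pi f delay)| / 2: the feed-forward comb filter a flanger sweeps."""
    return np.abs(1 + np.exp(-2j * np.pi * np.asarray(f) * delay_s)) / 2
```

With a 1 ms delay the first notch sits at 500 Hz. Sweeping the delay from 1 ms to 5 ms slides that notch down to 100 Hz and squeezes the whole comb of notches closer together, which produces the characteristic jet-like sweep.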

Advanced DSP Applications: From AI to Immersive Audio

Beyond these foundational effects, DSP continues to evolve, enabling increasingly sophisticated and innovative audio manipulation techniques. The integration of artificial intelligence and the demands of new listening formats are pushing the boundaries of what’s possible.

AI-Powered Audio Enhancement and Restoration

Artificial intelligence is beginning to play a significant role in DSP. Machine learning models trained on large collections of audio can now perform complex tasks, such as those below, that are difficult to solve with hand-designed algorithms.

De-noising and De-reverberation

AI-powered tools can intelligently identify and remove unwanted noise or excessive reverb from recordings, often with greater precision than traditional methods. This is invaluable for restoring damaged audio or cleaning up problematic live recordings.

Source Separation

AI is also enabling the separation of individual instruments or vocal tracks from a mixed audio recording – a process that was once incredibly difficult and time-consuming. This has profound implications for remixing, sampling, and audio forensics.

Spatial Audio and Immersive Soundscapes

The advent of spatial audio technologies like Dolby Atmos and Sony 360 Reality Audio, which aim to place sounds in a three-dimensional space around the listener, heavily relies on advanced DSP.

Object-Based Audio

DSP algorithms are used to position and move individual audio “objects” within a virtual soundfield. This allows for a more immersive and dynamic listening experience, where sounds can appear to come from above, below, or all around the listener.
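Commercial object-based formats such as Dolby Atmos use their own rendering algorithms to map objects onto arbitrary speaker layouts. The simplest version of the idea is positioning one mono object between two loudspeakers. That can be sketched with a constant-power pan law, a swapped-in textbook technique rather than how any particular format renders objects. The function name is hypothetical.

```python
import numpy as np

def constant_power_pan(mono, azimuth):
    """Place a mono object between two speakers; azimuth runs from -1 (hard left) to +1 (hard right)."""
    theta = (np.asarray(azimuth) + 1) * np.pi / 4   # map -1..+1 onto 0..pi/2
    left, right = np.cos(theta), np.sin(theta)
    # left**2 + right**2 == 1, so perceived loudness stays roughly constant as the object moves
    return left * mono, right * mono
```

Passing an array of azimuth values, one per sample, moves the object across the stereo field over time.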

Binaural Rendering

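For headphone listening, DSP techniques like binaural rendering are employed to simulate the way our ears perceive sound direction and space. Binaural rendering reproduces the cues our auditory system uses to localize sound: the interaural time difference (ITD), the interaural level difference (ILD), and the spectral filtering of the head and outer ears. It usually does this by filtering each source through a pair of head-related transfer functions (HRTFs), creating a convincing 3D soundscape.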
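A full binaural renderer convolves each source with measured HRTFs. As a rough illustration of the two main interaural cues, the sketch below only delays and attenuates the far ear. The time difference uses Woodworth's spherical-head formula, ITD = (r / c)(θ + sin θ), which applies to a distant source with |θ| ≤ 90°. The 8.75 cm head radius and the broadband 6 dB maximum level difference are assumptions for illustration; real level differences vary strongly with frequency. The function names are hypothetical.

```python
import numpy as np

HEAD_RADIUS_M = 0.0875      # assumed average head radius
SPEED_OF_SOUND = 343.0      # m/s in air at about 20 degrees C

def woodworth_itd(azimuth_rad):
    """Interaural time difference (s) for a distant source, spherical-head approximation."""
    return HEAD_RADIUS_M / SPEED_OF_SOUND * (azimuth_rad + np.sin(azimuth_rad))

def crude_binaural(mono, fs, azimuth_deg, max_ild_db=6.0):
    """Delay and attenuate the far ear; returns (left, right). Not a substitute for HRTFs."""
    az = np.radians(azimuth_deg)                   # 0 = straight ahead, +90 = hard right
    mono = np.asarray(mono, dtype=float)
    lag = int(round(abs(woodworth_itd(az)) * fs))  # the far ear hears the sound later...
    ild_db = max_ild_db * abs(np.sin(az))          # ...and quieter (assumed broadband ILD)
    far = 10 ** (-ild_db / 20) * np.concatenate([np.zeros(lag), mono])[:len(mono)]
    return (far, mono) if az > 0 else (mono, far)
```

For a source at 90° this gives an ITD of about 0.66 ms, or 31 samples at 48 kHz.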

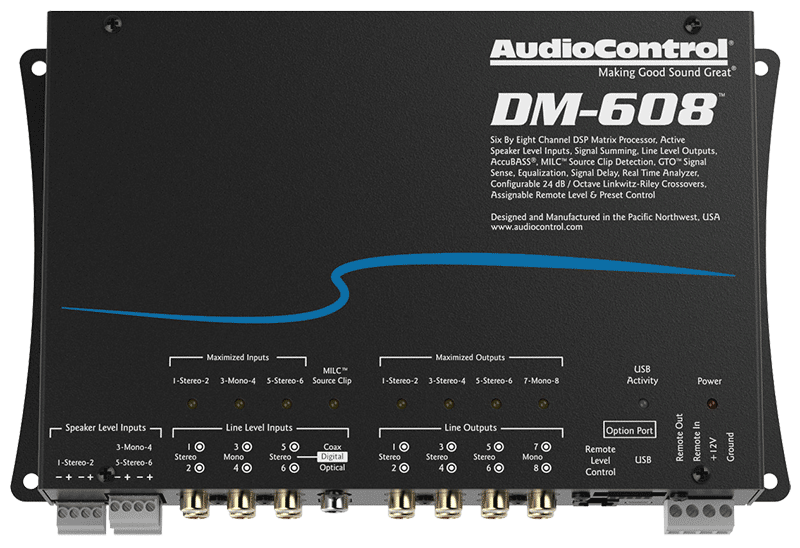

Real-Time Processing and Live Performance

DSP is not confined to the studio; it’s an essential component of live sound reinforcement and performance.

Live Effects and Mixing

Digital mixing consoles and effect units utilize DSP to provide instantaneous manipulation of live audio. This includes EQ, compression, reverb, and delay, allowing sound engineers to sculpt the sound of a performance in real-time.

Modeling and Simulation

DSP is used to model the characteristics of classic analog hardware, allowing musicians and engineers to access the sonic qualities of vintage compressors, EQs, and amplifiers in a digital environment. This provides a vast sonic palette for creativity without the need for expensive and bulky physical equipment.
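Detailed analog models capture circuit behavior closely. A common starting point for amplifier and saturation effects, however, is a memoryless waveshaper. Here is a minimal sketch, assuming a tanh curve and illustrative drive and output settings (the `soft_clip` name is hypothetical). Because the curve is symmetric, it adds only odd harmonics to a pure tone.

```python
import numpy as np

def soft_clip(x, drive_db=12.0, output_db=-6.0):
    """Memoryless tanh saturation, a simple stand-in for an overdriven analog stage."""
    drive = 10 ** (drive_db / 20)
    y = np.tanh(drive * np.asarray(x, dtype=float))   # rounds off peaks smoothly, never exceeds +/-1
    return 10 ** (output_db / 20) * y                 # output trim after the drive
```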

In conclusion, “DSP” stands for Digital Signal Processing, and it is the bedrock of modern audio manipulation. From the fundamental conversion of analog sound to digital data, through a vast array of algorithmic transformations that shape tone, space, and dynamics, to cutting-edge AI applications and immersive audio experiences, DSP is the unseen force that makes the music we love sound as good as it does. As technology continues to advance, the capabilities and applications of Digital Signal Processing will undoubtedly continue to expand, offering ever more exciting possibilities for sound creation and appreciation.