In drone technology, and especially in AI follow modes, autonomous flight, mapping, and remote sensing, the foundational principles of geometry are practical cornerstones rather than purely academic concepts. Mathematics treats point, line, and plane as “undefined terms”: they are accepted without definition so that a logical system can be built on them. In drone operations these same abstractions are understood intuitively and applied constantly. For aerial systems to navigate, map, and interact with their environment autonomously, they must rely, often implicitly, on these geometric primitives. Seeing how these “undefined” yet intuitively grasped concepts underpin drone applications explains much of the intelligence and precision behind modern UAV capabilities.

The Foundational Geometry of Drone Mapping and Remote Sensing

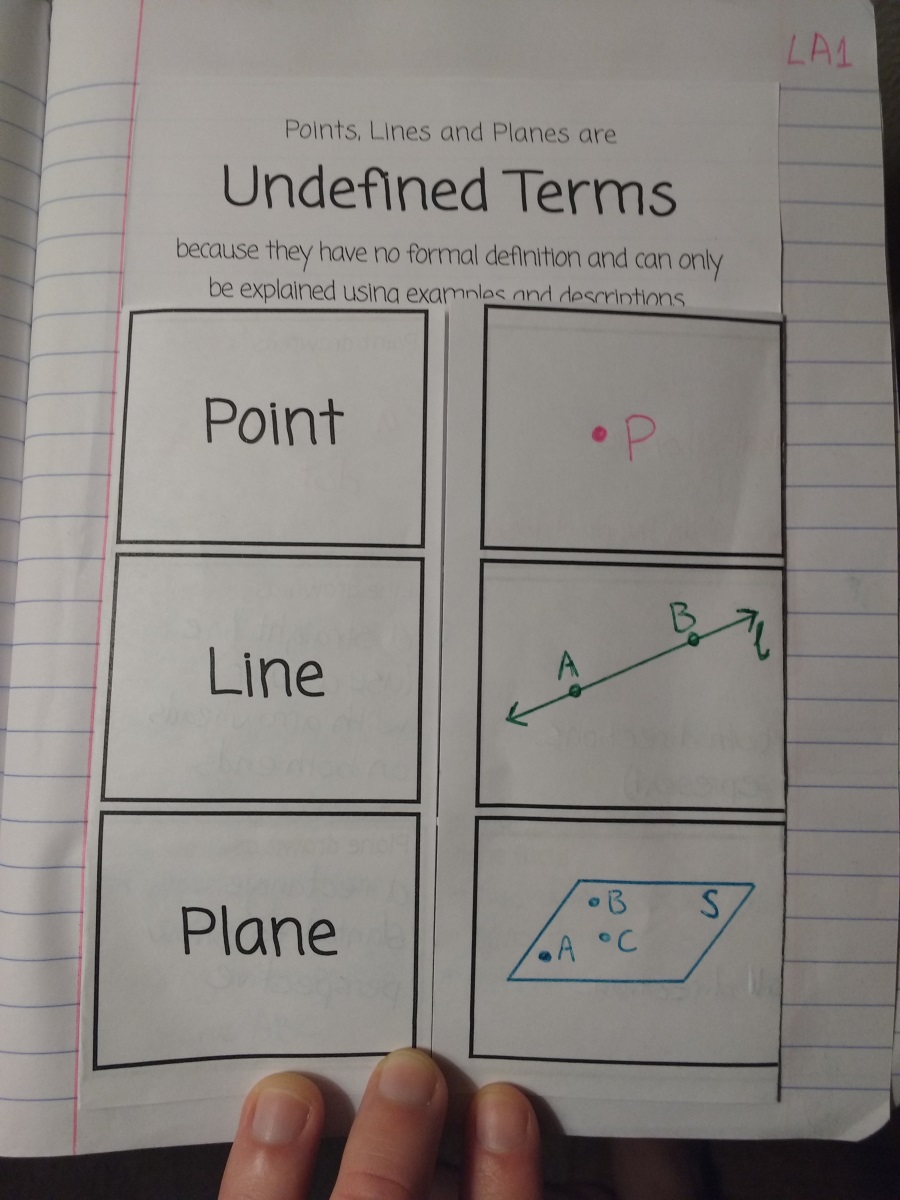

Drone mapping and remote sensing are inherently geometric endeavors. From generating precise orthomosaics to constructing complex 3D models, every piece of data collected by a drone needs to be accurately situated in space. This spatial understanding is built upon the practical application of points, lines, and planes, acting as the fundamental building blocks for representing and analyzing the physical world.

Points: The Atomic Units of Spatial Data

In the context of drone mapping and remote sensing, a “point” represents the most fundamental unit of spatial information. While mathematically undefined, its practical meaning is clear: a specific location in 3D space. For drones, every laser return from a LiDAR scan and every GPS coordinate logged is a point, and every pixel in an image samples a point on the scene below. These points are not abstract entities: they carry attributes such as elevation, color, intensity, and time that together form a comprehensive dataset.

- Photogrammetry: In photogrammetry, thousands, even millions, of corresponding points across multiple overlapping images are identified and triangulated to reconstruct 3D space. Each point represents a distinct feature on the ground, enabling the creation of detailed point clouds, which are essentially vast collections of precisely located points.

- LiDAR: LiDAR (Light Detection and Ranging) systems on drones emit laser pulses and measure the time each pulse takes to return. Each return yields a single point in 3D space, and one pulse can produce several returns (for example, from a tree canopy and from the ground beneath it), capturing the topography and features of the terrain below. The density and precision of these points support detailed terrain modeling and object detection.

- GPS and IMU: The drone’s onboard Global Positioning System (GPS) receiver records the aircraft’s position as a series of time-stamped points in a global coordinate system, while the Inertial Measurement Unit (IMU) measures acceleration and rotation rate, from which orientation is estimated. Together, this position and orientation data is crucial for georeferencing all other collected data, ensuring that every captured point is accurately placed on the earth’s surface.
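
As a minimal sketch of how a single LiDAR return becomes a point, the example below converts a round-trip time into a range and projects that range along the beam direction. The function names, the local metric coordinate frame, and the assumption that the beam direction is already a unit vector are illustrative choices, not taken from any particular sensor SDK.

```python
# Speed of light in vacuum, metres per second.
SPEED_OF_LIGHT_M_S = 299_792_458.0


def lidar_range_m(round_trip_time_s):
    """Range to the target: the pulse travels out and back, so halve the path."""
    return SPEED_OF_LIGHT_M_S * round_trip_time_s / 2.0


def return_to_point(sensor_xyz, unit_direction, round_trip_time_s):
    """Place one return in 3D: sensor position plus range along the beam.

    Assumes sensor_xyz is in a local metric frame and unit_direction has
    length 1 (hypothetical inputs; a real system derives both from GPS/IMU).
    """
    r = lidar_range_m(round_trip_time_s)
    return tuple(p + r * d for p, d in zip(sensor_xyz, unit_direction))


if __name__ == "__main__":
    # A pulse fired straight down from 100 m that returns after about 667 ns.
    t = 200.0 / SPEED_OF_LIGHT_M_S
    print(return_to_point((0.0, 0.0, 100.0), (0.0, 0.0, -1.0), t))
```

In practice the sensor position and beam direction come from the GPS and IMU data described above, which is why timing and georeferencing accuracy matter as much as the range measurement itself.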

Lines: Defining Paths and Boundaries from Above

Just as a point is a location, a “line” represents a continuous one-dimensional extent. In drone operations, lines are crucial for defining trajectories, mapping linear features, and understanding boundaries. They emerge from the connection of multiple points and provide structure and direction.

- Flight Paths and Trajectories: The most obvious application of lines is in defining a drone’s flight path. Autonomous missions are pre-programmed with a series of waypoints (points), which the drone connects to form a defined trajectory (a line or sequence of lines). The drone’s actual movement through space during a mission can be visualized as a continuous line, critical for mission planning, execution, and post-flight analysis.

- Feature Extraction: From remote sensing data, lines are extracted to represent linear features on the ground. Roads, rivers, property boundaries, power lines, and fault lines are all mapped and analyzed as geometric lines. Advanced algorithms identify these features within point clouds or orthomosaics, converting raw data into meaningful linear representations for various applications like infrastructure inspection or environmental monitoring.

- Vector Data: Geographic Information Systems (GIS), heavily utilized with drone data, represent many features as vector lines. This allows for precise measurements of length, calculation of network properties, and spatial analysis of connectivity, all foundational to creating comprehensive digital maps.
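
The idea that a line emerges from connected points can be sketched as a polyline length calculation. This is an illustrative example only: `polyline_length` is a hypothetical helper, and it assumes the waypoints are already expressed in a local metric frame (metres east, north, up) rather than latitude and longitude.

```python
import math


def polyline_length(points):
    """Sum the straight-line distances between consecutive points."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


if __name__ == "__main__":
    # Three waypoints: 5 m across the ground, then a 12 m vertical climb.
    waypoints = [(0.0, 0.0, 0.0), (3.0, 4.0, 0.0), (3.0, 4.0, 12.0)]
    print(polyline_length(waypoints))  # 17.0
```

The same function applies equally to a planned flight path or to a road or river centerline extracted from an orthomosaic.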

Planes: Understanding Surfaces and Territories

A “plane,” mathematically an infinitely extending flat surface, translates in drone technology to the concept of surfaces and areas. While a true infinite plane is an abstraction, drones operate within and model various finite planar or curvilinear surfaces—from flat agricultural fields to the intricate facades of buildings.

- Surface Modeling: Drone data is widely used to create Digital Surface Models (DSMs), which include buildings and vegetation, and Digital Terrain Models (DTMs), which represent the bare ground. Both represent a surface as a collection of interconnected points that form a continuous, often triangulated, mesh in which each triangle is a small planar facet, a practical manifestation of a plane. These 2.5D surfaces are vital for calculating volumes, analyzing slopes, and understanding hydrological flow.

- Area Definition and Coverage: When planning a mapping mission, operators define the area of interest—a rectangular or polygonal “plane” on the ground—that the drone needs to cover. The drone’s flight plan is then generated to ensure comprehensive photographic or LiDAR coverage of this defined planar region.

- Volume Calculation: For applications like quarry management or construction progress monitoring, drones capture data that allows for the precise calculation of material volumes (e.g., stockpiles). These calculations are based on defining a base plane and then measuring the geometric shape above it, leveraging the concept of planes to quantify physical quantities.
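
The base-plane approach to volume calculation can be sketched as follows. The example assumes a gridded surface model with square cells and a horizontal base plane; the function name and inputs are hypothetical, and production tools typically support tilted or fitted base surfaces as well.

```python
def volume_above_base_m3(dsm, base_elevation_m, cell_size_m):
    """Approximate the volume between a gridded surface and a flat base plane.

    dsm is a list of rows of elevations in metres. Each cell contributes a
    column of height (elevation - base), ignoring cells below the base.
    """
    cell_area = cell_size_m * cell_size_m
    total_height = sum(
        max(z - base_elevation_m, 0.0) for row in dsm for z in row
    )
    return total_height * cell_area


if __name__ == "__main__":
    # A tiny 2 x 2 grid with 2 m cells and a base plane at 10 m elevation.
    grid = [[10.0, 11.0],
            [12.0, 9.0]]
    print(volume_above_base_m3(grid, 10.0, 2.0))  # 12.0
```

Finer grids reduce the error of this column-sum approximation, which is one reason point density matters for stockpile surveys.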

Geometric Principles in Autonomous Flight and Navigation

Beyond mapping, the very act of a drone flying autonomously—navigating, avoiding obstacles, and maintaining stability—is a continuous application of geometric principles. The drone’s “understanding” of its environment and its ability to respond are deeply rooted in processing spatial relationships defined by points, lines, and planes.

Trajectories as Geometric Sequences of Points and Lines

Autonomous drones follow pre-programmed flight plans or generate real-time trajectories. These are essentially sequences of points connected by lines, forming a geometric path through 3D space.

- Waypoint Navigation: Modern drones can execute complex missions by autonomously moving between a series of user-defined waypoints. Each waypoint is a distinct point in space (latitude, longitude, altitude), and the drone calculates the optimal line (or curved path) to connect them, considering factors like wind, speed, and desired camera angle.

- Path Planning Algorithms: For more complex autonomous tasks, such as inspecting infrastructure or flying through challenging terrain, path planning algorithms dynamically generate collision-free trajectories. These algorithms model the drone’s environment using geometric primitives (points for obstacles, lines for constrained paths) and compute a safe, efficient line-segment-based path.
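
To make collision-free path planning concrete, here is a deliberately simple sketch: a breadth-first search over a 2D occupancy grid, where 0 marks a free cell and 1 marks an obstacle. This is a stand-in for illustration, not the planner any specific drone uses; real systems generally work in 3D and use methods such as A* or sampling-based planners. All names are hypothetical.

```python
from collections import deque


def shortest_grid_path(grid, start, goal):
    """Return a list of (row, col) cells from start to goal, or None.

    Moves are restricted to the four axis-aligned neighbours, so the
    result is a sequence of short line segments between cell centres.
    """
    rows, cols = len(grid), len(grid[0])
    came_from = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links back to the start, then reverse.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            in_bounds = 0 <= nr < rows and 0 <= nc < cols
            if in_bounds and grid[nr][nc] == 0 and nxt not in came_from:
                came_from[nxt] = cell
                queue.append(nxt)
    return None  # goal is unreachable


if __name__ == "__main__":
    occupancy = [[0, 0, 0],
                 [1, 1, 0],
                 [0, 0, 0]]
    print(shortest_grid_path(occupancy, (0, 0), (2, 0)))
```

Because breadth-first search expands cells in order of distance from the start, the first path that reaches the goal is also a shortest one in number of moves.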

Spatial Awareness: Perceiving Obstacles and Environments as Geometric Forms

For drones to operate safely and effectively in complex environments, they must have robust spatial awareness. This involves perceiving their surroundings and understanding objects as geometric forms relative to their own position.

- Obstacle Avoidance: Drones equipped with obstacle avoidance systems use sensors (optical, ultrasonic, LiDAR) to detect objects in their flight path. These systems interpret sensor data to identify the location (point), extent (line), and surface (plane) of obstacles. They then perform rapid geometric calculations to adjust the drone’s trajectory, generating a new line of flight to bypass the detected geometric form.

- Visual Odometry: When GPS signals are weak or unavailable, drones use visual odometry—analyzing the apparent motion of features between consecutive camera frames. This technique essentially tracks the movement of identifiable points and uses geometric triangulation to estimate the drone’s own position and orientation relative to its environment.
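
The geometric calculation behind a basic clearance check can be sketched as the distance from an obstacle point to a planned flight segment. This is an illustrative simplification that treats obstacles as points and the drone as a sphere of a given clearance radius; the function names are hypothetical.

```python
import math


def point_segment_distance(p, a, b):
    """Shortest distance from point p to the segment from a to b."""
    ab = [bi - ai for ai, bi in zip(a, b)]
    ap = [pi - ai for ai, pi in zip(a, p)]
    ab_len_sq = sum(c * c for c in ab)
    if ab_len_sq == 0.0:
        return math.dist(p, a)  # degenerate segment: a and b coincide
    # Project p onto the line, then clamp so we stay on the segment.
    t = sum(x * y for x, y in zip(ap, ab)) / ab_len_sq
    t = max(0.0, min(1.0, t))
    closest = [ai + t * c for ai, c in zip(a, ab)]
    return math.dist(p, closest)


def segment_is_clear(a, b, obstacles, clearance_m):
    """True if every obstacle point is at least clearance_m from the segment."""
    return all(point_segment_distance(o, a, b) >= clearance_m for o in obstacles)


if __name__ == "__main__":
    start, end = (0.0, 0.0, 0.0), (10.0, 0.0, 0.0)
    print(point_segment_distance((5.0, 3.0, 0.0), start, end))  # 3.0
    print(segment_is_clear(start, end, [(5.0, 3.0, 0.0)], 2.0))  # True
```

If the check fails, a planner would replace the segment with a detour, which is the "new line of flight" described above.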

Real-time Localization and Mapping (SLAM) Through Geometric Correlation

SLAM (Simultaneous Localization and Mapping) is a critical technology for autonomous drones, particularly in GPS-denied environments. It allows a drone to build a map of an unknown environment while simultaneously keeping track of its own location within that map.

- Feature-based SLAM: This approach identifies distinct geometric features (points, lines, planes) in the environment from sensor data. As the drone moves, it continually matches newly observed features with those already in its map. The geometric correlation between these features allows the drone to refine its position and update the map simultaneously.

- Dense SLAM: More advanced SLAM techniques build dense 3D maps by combining millions of individual depth measurements, effectively constructing a detailed geometric representation of the environment as a continuous surface or point cloud. The drone’s localization within this map is based on its geometric relationship to these dense features.
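
The localization half of feature-based SLAM can be illustrated with a small sketch: given point features already matched between the current observation and the map, estimate the rotation and translation that best align them in the least-squares sense. The example assumes 2D points, known correspondences, and no outliers, which are strong simplifications; the function name is hypothetical.

```python
import math


def estimate_rigid_transform_2d(map_pts, observed_pts):
    """Find (theta, tx, ty) such that map ~= R(theta) * observed + t.

    Closed-form least-squares alignment for matched 2D point pairs.
    """
    n = len(map_pts)
    mcx = sum(x for x, _ in map_pts) / n
    mcy = sum(y for _, y in map_pts) / n
    ocx = sum(x for x, _ in observed_pts) / n
    ocy = sum(y for _, y in observed_pts) / n

    s_cos = 0.0
    s_sin = 0.0
    for (mx, my), (ox, oy) in zip(map_pts, observed_pts):
        ax, ay = ox - ocx, oy - ocy  # observed point, centred
        bx, by = mx - mcx, my - mcy  # map point, centred
        s_cos += ax * bx + ay * by   # dot product accumulates cos(theta)
        s_sin += ax * by - ay * bx   # cross product accumulates sin(theta)

    theta = math.atan2(s_sin, s_cos)
    c, s = math.cos(theta), math.sin(theta)
    tx = mcx - (c * ocx - s * ocy)
    ty = mcy - (s * ocx + c * ocy)
    return theta, tx, ty


if __name__ == "__main__":
    observed = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
    # The same features in the map frame: rotated 90 degrees, shifted by (2, 3).
    in_map = [(2.0, 3.0), (2.0, 4.0), (1.0, 3.0)]
    print(estimate_rigid_transform_2d(in_map, observed))
```

A full SLAM system repeats this kind of alignment continuously in 3D, rejects bad matches, and feeds the refined pose back into the map update.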

Innovation at the Intersection: AI, Computer Vision, and Geometric Abstractions

The future of drone technology, particularly in AI follow modes, remote sensing analysis, and autonomous decision-making, increasingly relies on sophisticated geometric understanding. AI and computer vision systems process raw sensor data to extract meaningful geometric information, enabling drones to interpret their environment in ways that begin to resemble human scene understanding.

AI’s Reliance on Geometric Feature Extraction

Artificial intelligence algorithms, especially those employed in computer vision for drones, fundamentally rely on geometric feature extraction. Before an AI can “understand” an object or a scene, it must identify and process its geometric properties.

- Object Recognition: When an AI-powered drone identifies a person for ‘follow mode’ or classifies a specific crop in an agricultural field, it depends on geometric cues such as edges (lines), corners (points), and overall shape (plane/surface) in the image data. Classical pipelines compute these descriptors explicitly before classification; deep learning models typically learn comparable features from the raw pixels in their early layers and use them for classification and tracking.

- Semantic Segmentation: This advanced computer vision technique segments an image into regions based on the semantic meaning of objects (e.g., distinguishing “road” from “car” from “tree”). Each segment represents a distinct geometric region or plane, allowing the drone to understand the composition and layout of its environment at a granular, geometrically informed level.
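
Edge extraction, the simplest of the geometric features mentioned above, can be sketched with a Sobel gradient on a small grayscale image. This is a hand-rolled illustration on nested lists rather than how a production vision stack would do it, and the function name is hypothetical.

```python
import math


def sobel_magnitude(image):
    """Gradient magnitude per pixel using 3x3 Sobel kernels.

    image is a list of rows of grayscale values. Border pixels are left
    at 0.0 because the 3x3 window does not fit there.
    """
    rows, cols = len(image), len(image[0])
    out = [[0.0] * cols for _ in range(rows)]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            # Horizontal gradient: right column minus left column.
            gx = (image[r - 1][c + 1] + 2 * image[r][c + 1] + image[r + 1][c + 1]
                  - image[r - 1][c - 1] - 2 * image[r][c - 1] - image[r + 1][c - 1])
            # Vertical gradient: bottom row minus top row.
            gy = (image[r + 1][c - 1] + 2 * image[r + 1][c] + image[r + 1][c + 1]
                  - image[r - 1][c - 1] - 2 * image[r - 1][c] - image[r - 1][c + 1])
            out[r][c] = math.hypot(gx, gy)
    return out


if __name__ == "__main__":
    # A vertical step edge between dark (0) and bright (10) columns.
    step = [[0, 0, 10, 10] for _ in range(3)]
    for row in sobel_magnitude(step):
        print(row)
```

Large values mark pixels where brightness changes sharply, which is where object outlines tend to lie.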

Semantic Segmentation and Geometric Understanding

Semantic segmentation takes geometric understanding a step further by assigning a label to every pixel in an image, effectively outlining the boundaries (lines) and areas (planes) of different objects and surfaces. This allows drones to build a rich, semantically aware geometric model of their surroundings.

- Precise Environmental Interaction: For complex autonomous tasks like precision agriculture (spraying only specific plant areas) or inspection (focusing on damaged sections of a bridge), semantic segmentation provides the drone with a detailed geometric map of its operational area, enabling highly targeted and efficient actions.

- Dynamic Scene Understanding: As drones move, their semantic segmentation algorithms continually update, providing a real-time, geometrically informed understanding of a dynamic environment. This is crucial for navigating crowded spaces or adapting to changing conditions.
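
To show how a per-pixel label map turns into areas and boundaries, the sketch below computes the ground area covered by each class and lists the pixels of one class that touch a different class. It assumes the segmentation has already been produced and that each pixel covers a square of known ground sample distance; the labels and function names are hypothetical.

```python
from collections import Counter


def class_areas_m2(label_grid, gsd_m):
    """Ground area per label, given the ground sample distance in metres."""
    counts = Counter(label for row in label_grid for label in row)
    return {label: n * gsd_m * gsd_m for label, n in counts.items()}


def boundary_pixels(label_grid, label):
    """Pixels of `label` with at least one 4-neighbour of another label."""
    rows, cols = len(label_grid), len(label_grid[0])
    result = []
    for r in range(rows):
        for c in range(cols):
            if label_grid[r][c] != label:
                continue
            for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
                if 0 <= nr < rows and 0 <= nc < cols and label_grid[nr][nc] != label:
                    result.append((r, c))
                    break
    return result


if __name__ == "__main__":
    mask = [["road", "road", "crop"],
            ["road", "crop", "crop"]]
    print(class_areas_m2(mask, 0.5))
    print(boundary_pixels(mask, "road"))  # [(0, 1), (1, 0)]
```

The area dictionary corresponds to the "planes" of each class and the boundary list to the "lines" between them, which is the information a sprayer or inspection routine would act on.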

The Future: Predictive Geometry for Smarter Drones

The evolution of drone technology points towards systems that can not only understand existing geometry but also predict future geometric states. This ‘predictive geometry’ will be a hallmark of truly intelligent autonomous flight.

- Predictive Path Planning: Future drones will use advanced AI to not just avoid obstacles, but to predict the movement of dynamic objects (people, cars, other drones) and generate geometrically optimal and safe flight paths in anticipation. This involves modeling predicted lines of motion and potential collision points.

- Digital Twin Generation: Remote sensing and mapping will evolve to create ‘digital twins’: continuously updated, geometrically accurate virtual replicas of physical environments. These digital twins, built from a continuous stream of points, lines, and planes, will enable drones to operate within a virtual world that mirrors reality, allowing for advanced simulation, planning, and control.

- Adaptive Geometric Modeling: Drones will become adept at adaptively building and refining geometric models of their environment, focusing computational resources on areas of high uncertainty or change. This dynamic geometric understanding will be paramount for robust autonomy in highly variable and unpredictable scenarios.
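
The notion of predicted lines of motion and potential collision points can be sketched with a closest-point-of-approach calculation. The example assumes both the drone and the other object keep a constant velocity, which is the simplest possible motion model; real predictive planners would use learned or probabilistic models. The function name is hypothetical.

```python
import math


def closest_approach(p_drone, v_drone, p_obj, v_obj):
    """Return (time_s, distance_m) of closest approach from now onward.

    Positions are in metres and velocities in metres per second, all in
    the same local frame. Both motions are assumed to be straight lines.
    """
    dp = [o - d for o, d in zip(p_obj, p_drone)]  # relative position
    dv = [o - d for o, d in zip(v_obj, v_drone)]  # relative velocity
    dv_sq = sum(c * c for c in dv)
    if dv_sq == 0.0:
        t = 0.0  # same velocity: the separation never changes
    else:
        # Minimise |dp + t * dv|, but never look into the past.
        t = max(0.0, -sum(a * b for a, b in zip(dp, dv)) / dv_sq)
    separation = [a + t * b for a, b in zip(dp, dv)]
    return t, math.sqrt(sum(c * c for c in separation))


if __name__ == "__main__":
    # Head-on: drone flying +x at 1 m/s, object 10 m ahead flying -x at 1 m/s.
    print(closest_approach((0.0, 0.0, 0.0), (1.0, 0.0, 0.0),
                           (10.0, 0.0, 0.0), (-1.0, 0.0, 0.0)))  # (5.0, 0.0)
```

A planner could compare the returned distance with a safety radius and, if it is too small, replan before the returned time elapses.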

In essence, while “point, line, and plane” remain undefined in the axiomatic sense of pure geometry, their conceptual clarity and practical utility are foundational to every facet of advanced drone technology. From the precise mapping of our world to the intricate dance of autonomous flight, these geometric primitives are the intuitive bedrock upon which the most sophisticated innovations in the drone industry are built and will continue to evolve.