The Core Concept of Mipmaps in Geospatial Data

The term “mipmap” combines MIP, an acronym of the Latin multum in parvo (much in little), with “map.” Mipmaps are a fundamental technique in computer graphics and are particularly important for efficient, high-quality rendering of large textures and geospatial datasets. At its heart, a mipmap is a sequence of images, each one a progressively lower-resolution representation of a single base texture. This hierarchy lets the graphics processor select the resolution level that best matches how large a textured surface appears on screen, which usually depends on its distance from the viewer, improving both rendering performance and visual fidelity.

In the realm of drone-based mapping and remote sensing, where vast quantities of high-resolution imagery and 3D models are routinely generated, mipmaps transition from a simple graphics optimization to an indispensable tool for data visualization and analysis. Imagine trying to display a gigapixel orthomosaic map covering hundreds of square kilometers. Without mipmaps, rendering such a massive texture would overwhelm even the most powerful systems, leading to extreme lag, visual artifacts, and a frustrating user experience. Mipmaps provide the intelligent scaling mechanism that makes interactive exploration of these complex datasets not just possible, but smooth and intuitive.

Addressing Aliasing and Performance Challenges

One of the primary motivations for the development of mipmaps was to combat aliasing artifacts, specifically minification aliasing. This phenomenon occurs when a texture is rendered at a much smaller size than its native resolution, causing distant or small details to shimmer, flicker, or disappear entirely. It happens because each screen pixel then covers many texture pixels (texels), yet the renderer samples only one or a few of them. Which texels get sampled changes with the slightest camera movement, so the result is an undersampled, unstable value rather than a proper average of the covered area.

In mapping applications, where a user might be viewing an entire city map at once, or zooming out from a highly detailed industrial site survey, minification aliasing would severely degrade the visual quality. Mipmaps preemptively solve this by providing pre-filtered, downsampled versions of the texture. When a texture is far away or occupies only a few pixels on the screen, the graphics processor automatically selects a smaller mipmap level. This level has already been properly filtered and averaged during its creation, ensuring that distant details are represented smoothly and accurately, without the shimmering associated with raw downsampling.
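The level selection described above can be sketched in a few lines. This is a simplified illustration, not how any particular GPU or driver implements it: the function name `select_mip_level` and the `texels_per_pixel` parameter are assumptions made for this example, and real hardware derives the ratio from screen-space derivatives and usually works with a fractional level.

```python
import math


def select_mip_level(texels_per_pixel: float, num_levels: int) -> int:
    """Pick an integer mip level for a given minification ratio.

    texels_per_pixel is a hypothetical input: how many base-level texels
    span one screen pixel along one axis (1.0 means native size).
    """
    if texels_per_pixel <= 1.0:
        # Texture is shown at native size or magnified: use full resolution.
        return 0
    # Each level halves the resolution, so the level is the base-2 logarithm
    # of the ratio. Flooring favors the sharper of the two candidate levels.
    level = int(math.log2(texels_per_pixel))
    # Never go past the smallest level in the chain.
    return min(level, num_levels - 1)


# A 2048x2048 texture has 12 levels (2048 down to 1).
print(select_mip_level(1.0, 12))   # native size
print(select_mip_level(4.0, 12))   # four texels per pixel
print(select_mip_level(1e6, 12))   # extremely distant, clamped to last level
```

Flooring is one possible choice; rounding to the nearest level or blending two levels are equally common and trade sharpness against residual aliasing.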

Beyond visual quality, mipmaps bring a substantial performance benefit. They drastically reduce the amount of texture data the GPU has to read and process for each frame. Instead of always fetching the full-resolution texture (which might be many gigabytes) and then scaling it down in real time, the system fetches only the relevant, smaller mipmap level. This conserves memory bandwidth, improves cache efficiency, and reduces the computational load on the GPU, leading to faster rendering and a more responsive user interface, which is crucial for the massive datasets common in remote sensing. The price is modest: a complete mipmap chain adds roughly one third to the storage needed for the base texture.
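To put rough numbers on the savings, the sketch below computes the uncompressed size of individual levels. The texture size (16384×16384), the 4 bytes per pixel, and the helper name `level_bytes` are assumptions chosen for illustration; real datasets are often compressed and tiled.

```python
def level_bytes(width: int, height: int, level: int, bytes_per_pixel: int = 4) -> int:
    """Uncompressed size in bytes of one mip level of a width x height texture."""
    # Shifting right by `level` halves the dimension `level` times (floored),
    # and no dimension is allowed to drop below one pixel.
    w = max(1, width >> level)
    h = max(1, height >> level)
    return w * h * bytes_per_pixel


base = level_bytes(16384, 16384, 0)       # full resolution
distant = level_bytes(16384, 16384, 5)    # 512x512, used when zoomed far out

# 16384 = 2**14, so the chain has 15 levels (level 0 through level 14).
chain_total = sum(level_bytes(16384, 16384, k) for k in range(15))
overhead = chain_total / base

print(f"level 0: {base / 2**20:.0f} MiB")
print(f"level 5: {distant / 2**20:.0f} MiB")
print(f"whole chain relative to level 0: {overhead:.3f}")
```

Under these assumptions, sampling from level 5 touches about a thousandth of the data in level 0, while storing every level costs only about a third more than storing level 0 alone.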

How Mipmaps Are Generated

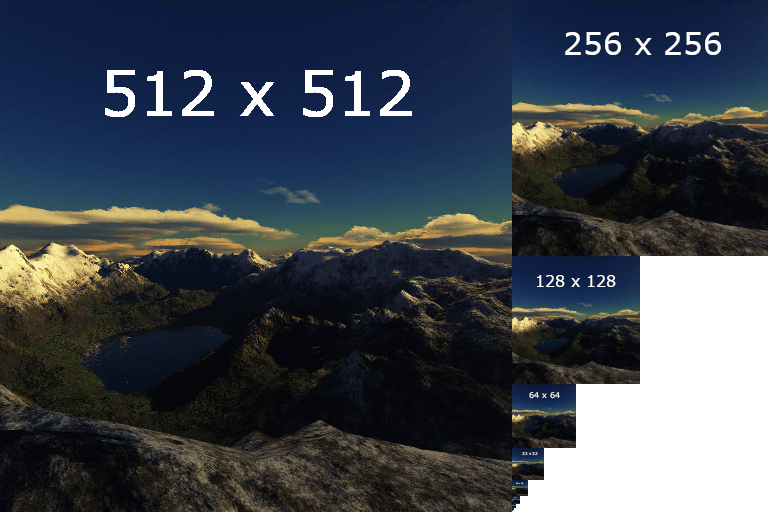

The generation of mipmaps is a straightforward, yet critical, process. Starting with the highest-resolution base image (level 0), each subsequent mipmap level (level 1, level 2, and so on) is created by downsampling the previous level. Typically, this involves reducing the dimensions by half (e.g., a 2048×2048 image becomes 1024×1024, then 512×512, down to 1×1).
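The halving sequence can be written out directly. This is a minimal sketch; the function name `mip_chain_dimensions` is made up for this example, and the floor-and-clamp handling of odd or non-square sizes is one common convention that may differ between tools.

```python
def mip_chain_dimensions(width: int, height: int) -> list:
    """Return the (width, height) of every level, from level 0 down to 1x1."""
    if width < 1 or height < 1:
        raise ValueError("dimensions must be positive")
    dims = [(width, height)]
    while width > 1 or height > 1:
        # Halve each dimension, rounding down, but never below one pixel.
        width = max(1, width // 2)
        height = max(1, height // 2)
        dims.append((width, height))
    return dims


chain = mip_chain_dimensions(2048, 2048)
print(len(chain))   # number of levels
print(chain[:3])    # first three levels
print(chain[-1])    # smallest level

# Non-square, non-power-of-two input, as is typical for an orthomosaic.
print(mip_chain_dimensions(1000, 600))
```

With this convention the number of levels equals floor(log2(max(width, height))) + 1, which for positive integers is the same as `max(width, height).bit_length()`.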

During this downsampling, a low-pass filter is applied. This filtering is vital: it averages or blends pixel values from the higher-resolution image to produce each pixel of the lower-resolution image. Simple nearest-neighbor sampling, which keeps one pixel from each block and discards the rest, would bake aliasing into the chain and defeat the purpose. Instead, a box filter (averaging each 2×2 block), bilinear filtering, or a higher-quality kernel such as Lanczos is used so that the downsampled image represents the original content faithfully. Trilinear filtering, by contrast, is applied at render time to blend between two adjacent mipmap levels; it is not a downsampling filter. This pre-filtering is what removes most of the shimmering seen when a full-resolution texture is minified in real time.
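The difference between filtered and unfiltered downsampling shows up clearly on a worst-case input. The sketch below uses a 2×2 box filter, the simplest of the filters in use, on a single-channel image stored as a list of rows; the name `downsample_box` is an assumption for this example, and production tools operate on multi-band rasters with more careful edge handling.

```python
def downsample_box(image: list) -> list:
    """Halve a grayscale image by averaging each 2x2 block (box filter).

    `image` is a list of equal-length rows of numbers. A trailing odd row
    or column is dropped in this simplified version.
    """
    height, width = len(image), len(image[0])
    result = []
    for y in range(0, height - 1, 2):
        row = []
        for x in range(0, width - 1, 2):
            block_sum = (image[y][x] + image[y][x + 1]
                         + image[y + 1][x] + image[y + 1][x + 1])
            row.append(block_sum / 4)
        result.append(row)
    return result


# A one-pixel checkerboard: the hardest case for minification.
checker = [[255 if (x + y) % 2 else 0 for x in range(4)] for y in range(4)]

# Nearest-neighbor: keep every second pixel in every second row.
nearest = [row[::2] for row in checker[::2]]

print(downsample_box(checker))  # uniform mid-gray: the true average
print(nearest)                  # uniform black: half the signal is lost
```

The box filter reports mid-gray, which is what the pattern looks like from a distance, whereas nearest-neighbor happens to land only on black pixels; shifting its sampling grid by one pixel would flip the result to white, which is exactly the flicker seen on screen.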

For drone-derived mapping products like orthomosaics, this generation often happens during the post-processing phase, after the initial high-resolution image has been stitched and georeferenced. Mapping software platforms or GIS (Geographic Information System) applications compute the full chain of reduced-resolution levels, which GIS tools commonly call overviews or image pyramids, and store these levels alongside the primary data. Because of this pre-computation, when a user zooms in or out or pans across vast areas, the system can read an already scaled and filtered level directly instead of resampling the full-resolution data on the fly.

Mipmaps in Drone-Based Mapping and Remote Sensing

The utility of mipmaps truly shines in the specialized domain of drone-based mapping and remote sensing. Drones equipped with high-resolution cameras and LiDAR sensors capture immense volumes of data, which are then processed into detailed orthomosaics, 3D point clouds, and intricate mesh models. The sheer scale and detail of these outputs demand sophisticated visualization strategies, and mipmaps provide an elegant solution to manage this complexity.

Efficient Visualization of Orthomosaics

Orthomosaics, which are geometrically corrected, high-resolution aerial images stitched together to form a seamless map, are a cornerstone of drone mapping. These can easily span hundreds of gigabytes or even terabytes for large projects. Without mipmaps, viewing such a dataset would be akin to trying to load a massive, uncompressed image into a web browser – slow, memory-intensive, and prone to crashes.

Mipmaps enable orthomosaics to be rendered efficiently across all zoom levels. When a user is zoomed out, viewing an entire land parcel or construction site, the mapping software fetches a low-resolution mipmap level, providing a quick overview. As the user zooms in, progressively higher-resolution mipmap levels are loaded and displayed. This dynamic loading ensures that only the necessary data for the current view is in memory and processed by the GPU. The transition between these levels is often seamless, creating the illusion of infinite detail and smooth exploration. This efficiency is critical for professionals who need to quickly navigate large areas, identify features, and perform initial assessments without being bogged down by rendering delays.
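For a georeferenced image, the same choice can be expressed in ground units. The sketch below assumes each level doubles the ground sampling distance (GSD) of the previous one; the function `pick_overview` and its parameters are hypothetical names for this illustration, not the API of any GIS package.

```python
def pick_overview(base_gsd_m: float, extent_width_m: float,
                  screen_width_px: int, num_levels: int) -> tuple:
    """Choose the coarsest level that is still at least as fine as the screen.

    base_gsd_m:      ground size of one pixel at level 0, in meters
    extent_width_m:  ground width of the area currently in view, in meters
    screen_width_px: width of the map window, in screen pixels
    Returns (level, gsd_of_that_level_in_meters).
    """
    # Ground distance represented by one screen pixel in the current view.
    target_gsd = extent_width_m / screen_width_px
    level = 0
    # Step to a coarser level only while it does not fall below the
    # resolution the screen can actually show.
    while level + 1 < num_levels and base_gsd_m * 2 ** (level + 1) <= target_gsd:
        level += 1
    return level, base_gsd_m * 2 ** level


# A 2 cm/pixel orthomosaic with 10 levels, shown in a 1000-pixel-wide window.
print(pick_overview(0.02, 2000.0, 1000, 10))  # whole site, 2 km across
print(pick_overview(0.02, 10.0, 1000, 10))    # zoomed in to a 10 m strip
```

Choosing a level that is slightly finer than needed, rather than slightly coarser, avoids upsampling and keeps the display crisp at the cost of reading somewhat more data.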

Enhancing 3D Model Rendering

Beyond 2D orthomosaics, drones are increasingly used to generate highly detailed 3D models of structures, terrains, and environments through photogrammetry or LiDAR. These models are typically textured with high-resolution imagery captured during the flight. Displaying these textured 3D models interactively, especially when they consist of millions of polygons and enormous texture maps, presents an even greater challenge than 2D maps.

Mipmaps are indispensable here. Each texture applied to the 3D model benefits from its own mipmap chain. As the viewer moves closer to or further away from a building or a section of terrain in the 3D model, the appropriate mipmap level for that specific texture is chosen. This prevents the aliasing artifacts that would otherwise make distant parts of the model shimmer or look noisy, while close-up views still draw on the full-resolution level and remain sharp. Moreover, by reducing the texture data load, mipmaps improve frame rates during navigation, allowing engineers, urban planners, and surveyors to smoothly inspect models, identify defects, or plan interventions. This optimization is crucial for collaborative review and client presentations where real-time, high-fidelity visualization is paramount.

Real-Time Data Exploration and Analysis

The ability to interactively explore and analyze drone-collected geospatial data in real-time is a significant advantage offered by mipmaps. In applications ranging from precision agriculture to environmental monitoring and infrastructure inspection, users need to quickly pan, zoom, and query information across vast areas. Mipmaps facilitate this by ensuring that the underlying visual data is always rendered optimally.

For instance, an agricultural expert might be examining a crop health map derived from multispectral drone imagery. With mipmaps, they can smoothly zoom from a regional overview down to individual plant rows without any noticeable lag, allowing them to pinpoint problem areas efficiently. Similarly, in remote sensing for disaster response, quickly navigating through damaged areas visualized by drone imagery requires instant feedback, which mipmaps provide by intelligently managing data resolution. This seamless experience translates directly into improved productivity and more effective decision-making, as analysts can focus on the data itself rather than waiting for slow renders.

The Technical Advantages for Drone Applications

The technical underpinnings of mipmaps offer distinct and critical advantages for the highly data-intensive nature of drone-based mapping and remote sensing. These benefits extend beyond mere visual quality, impacting system performance, memory management, and overall operational efficiency.

Reduced Memory Bandwidth and Improved Cache Coherency

One of the most significant technical benefits of mipmaps is the dramatic reduction in memory bandwidth consumption. When a graphics processor needs to sample a texture, especially a large one, it must fetch data from the system’s memory. If only the full-resolution texture were available, the GPU would constantly be requesting huge chunks of data, even for objects that only occupy a few screen pixels. This repetitive fetching of large, unnecessary data leads to memory bandwidth bottlenecks, slowing down the entire system.

Mipmaps alleviate this by ensuring that the GPU usually fetches only a small fraction of the data. When an object is distant, a small mipmap level is selected, and that compact level requires far less data transfer. The reduction in data movement frees memory bandwidth for other work. Smaller footprints also improve cache coherency, meaning here that neighboring screen pixels read neighboring texels: at a well-matched mipmap level, adjacent pixels sample adjacent texels, so the data is far more likely to already sit in the GPU’s fast on-chip texture cache than if each pixel jumped across a full-resolution image. A cache hit is much faster than a fetch from main memory. Together these effects boost rendering speed and overall system responsiveness, which is crucial when dealing with the gigabytes or terabytes of data generated by modern drone surveys.

Smoother Zooming and Panning Experiences

The user experience in mapping and GIS applications is heavily reliant on the smoothness of interaction, particularly during zooming and panning operations. Without mipmaps, changing zoom levels would often result in noticeable stutters, slow loading times, or jarring visual pop-ins as the system struggles to render or re-sample the full-resolution data.

Mipmaps make this interaction far smoother. As a user zooms out, the system automatically transitions to smaller, pre-computed mipmap levels. When zooming in, higher-resolution levels are progressively loaded. Because the GPU selects among pre-filtered levels, and with trilinear filtering blends between the two nearest ones, these transitions are often imperceptible to the user. The map or 3D model therefore looks consistent across zoom levels instead of popping between resolutions. This seamless interaction is not just about aesthetics; it improves workflow efficiency by allowing users to rapidly navigate and scrutinize complex datasets without frustration, enabling faster identification of features or anomalies.
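One common way transitions between levels are hidden is to blend the two nearest levels according to a fractional level of detail, which is the idea behind trilinear filtering. The sketch below shows only the level-blending step, under the assumption that a sample has already been taken from each level; the function names are illustrative.

```python
import math


def blend_levels(lod: float, num_levels: int) -> tuple:
    """Split a fractional level of detail into two levels and a blend weight.

    Returns (lower_level, upper_level, weight), where weight is the share
    contributed by upper_level (the coarser of the two).
    """
    # Keep the level of detail inside the available chain.
    lod = min(max(lod, 0.0), float(num_levels - 1))
    lower = int(math.floor(lod))
    upper = min(lower + 1, num_levels - 1)
    weight = lod - lower
    return lower, upper, weight


def blend_samples(sample_lower: float, sample_upper: float, weight: float) -> float:
    """Linearly interpolate between samples taken from the two levels."""
    return (1.0 - weight) * sample_lower + weight * sample_upper


lower, upper, weight = blend_levels(2.25, 12)
print(lower, upper, weight)
# Hypothetical intensity values read from level 2 and level 3.
print(blend_samples(100.0, 200.0, weight))
```

As the viewer zooms, the weight slides continuously from 0 to 1, so the image fades from one level to the next instead of switching abruptly.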

Optimizing Remote Sensing Workflows

The optimization provided by mipmaps extends deep into various remote sensing workflows. For data providers, generating mipmaps during the post-processing phase (after orthorectification and mosaicking) is a standard practice because it makes the final data products far more usable and performant for their clients.

For end-users in fields like environmental science, civil engineering, or urban planning, mipmaps simplify the handling of massive datasets. Instead of having to downsample data manually for different viewing scales, which is time-consuming and prone to introducing artifacts, the mipmap structure handles this automatically. This allows analysts to focus on extracting insights from the data, rather than wrestling with data management or visualization issues. Furthermore, when sharing data, the mipmap structure allows for streaming solutions where only the necessary resolution tiles are sent over a network, making collaboration and cloud-based analysis much more efficient and accessible.

Future Implications and Advanced Techniques

As drone technology and remote sensing capabilities continue to evolve, so too will the methods for handling and visualizing the ever-growing volumes of data. Mipmaps, while a foundational technique, are also subject to ongoing innovation and integration with advanced technologies, further enhancing their utility in drone applications.

Adaptive Mipmapping and Dynamic Level of Detail

Traditional mipmapping generates a fixed set of resolution levels, and the GPU chooses among them from the texture’s footprint on screen, with anisotropic filtering compensating for oblique viewing angles. Future advancements in drone-based mapping and real-time 3D reconstruction are likely to see more widespread adoption of adaptive mipmapping and dynamic level of detail (LOD) systems. Adaptive mipmapping can generate levels on demand or bias their selection using factors beyond distance and screen-space coverage, such as semantic importance. For instance, a critical infrastructure component might always render at a higher detail level than surrounding, less significant terrain, even at the same distance.

Dynamic LOD takes this a step further by integrating mipmaps within a broader system that manages the complexity of the entire scene, not just individual textures. This could mean that detailed 3D models derived from photogrammetry could have their mesh geometry simplified in distant views, while their textures are simultaneously managed by mipmaps. This combined approach offers unparalleled optimization, ensuring that highly complex scenes, such as digital twins of entire cities generated from drone data, can be explored interactively and smoothly on a wider range of hardware, from powerful workstations to portable devices.

Integration with Cloud-Based Processing

The trend towards cloud-based processing and storage for drone data is accelerating. Mipmaps play a crucial role in making these cloud platforms efficient. When large orthomosaics or 3D models reside in the cloud, streaming the entire high-resolution dataset over the internet for every user interaction is impractical.

Here, mipmaps enable tile-based streaming where only the relevant mipmap levels and geographical tiles for the user’s current view are transmitted. This drastically reduces network bandwidth requirements and accelerates load times for remote users. Future developments will see even tighter integration, where mipmap generation itself might become a serverless cloud function, automatically triggered upon upload of new drone data. This automation ensures that data is immediately accessible and optimized for visualization across various client applications – from web-based GIS portals to mobile field applications – facilitating truly collaborative and distributed remote sensing workflows. The continued evolution of mipmap techniques, therefore, is not just about graphics; it’s about enabling the next generation of data-driven insights from the skies.
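The tile selection behind such streaming can be sketched as follows. This assumes a simple scheme in which every level is cut into square tiles of a fixed pixel size and the viewport is given as a half-open pixel rectangle in level-0 coordinates; `visible_tiles`, its parameters, and the 256-pixel tile size are assumptions for this example rather than the layout of any specific tiling standard.

```python
def visible_tiles(level: int, x_min: int, y_min: int, x_max: int, y_max: int,
                  base_width: int, base_height: int, tile_size: int = 256) -> list:
    """List the (level, column, row) tiles that intersect a viewport.

    The viewport [x_min, x_max) x [y_min, y_max) is in level-0 pixels.
    """
    # Size of the requested level and its tile grid (ceiling division).
    level_w = max(1, base_width >> level)
    level_h = max(1, base_height >> level)
    cols = -(-level_w // tile_size)
    rows = -(-level_h // tile_size)

    # Convert the viewport corners to this level, then to tile indices,
    # clamping to the grid so out-of-range views request nothing invalid.
    col_first = max(0, (x_min >> level) // tile_size)
    row_first = max(0, (y_min >> level) // tile_size)
    col_last = min(cols - 1, ((x_max - 1) >> level) // tile_size)
    row_last = min(rows - 1, ((y_max - 1) >> level) // tile_size)

    return [(level, col, row)
            for row in range(row_first, row_last + 1)
            for col in range(col_first, col_last + 1)]


# A 65536x65536 orthomosaic: level 0 alone holds 256 x 256 = 65536 tiles.
overview = visible_tiles(6, 0, 0, 65536, 65536, 65536, 65536)  # whole map
detail = visible_tiles(0, 0, 0, 1000, 1000, 65536, 65536)      # small corner

print(len(overview), len(detail))
```

In this example both the full-map overview and the close-up view need the same small number of tiles, which is why the amount of data sent to the client stays roughly constant regardless of zoom level.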