In an era defined by rapid technological advancement, from autonomous drones navigating complex environments to artificial intelligence making split-second decisions, the sophistication of our digital tools often obscures the fundamental mechanisms that power them. We interact with intuitive graphical interfaces, write code in human-readable programming languages, and marvel at the seamless operation of complex systems. Yet, beneath these layers of abstraction lies a silent, foundational language: machine code.

Machine code is the lowest-level programming language, a system of instructions and data that a computer’s central processing unit (CPU) can directly understand and execute. It is computation in its rawest form: a sequence of bits, written as zeros and ones, that dictates every operation a computer performs. For anyone delving into the intricacies of modern tech and innovation, understanding machine code isn’t merely an academic exercise; it shows how digital systems work underneath, revealing how AI follow modes operate, how autonomous flight paths are executed, and how mapping data is processed at its most granular level. This article aims to demystify machine code, exploring its structure, its critical role, and its enduring relevance in the ever-evolving landscape of technology.

The Foundation of Digital Computing

At its core, every digital device, from a supercomputer to a micro-drone’s flight controller, operates by executing a series of instructions. These instructions are not the Python, Java, or C++ code that developers typically write. Instead, they are machine code – binary patterns precisely tailored to a specific CPU architecture. This deep-level interaction is what fundamentally drives all computing processes, making machine code the true lingua franca between software and hardware.

From High-Level to Low-Level: A Translation Process

The vast majority of software development today occurs using high-level programming languages. These languages, like Python, C++, Java, or Rust, are designed to be human-readable, abstract away hardware specifics, and facilitate complex problem-solving with less effort. They feature constructs like variables, functions, loops, and conditional statements that closely resemble human thought processes and natural language.

However, a computer’s CPU does not understand these high-level abstractions. Before a program written in C++ can run, it must be translated into machine code. This translation is performed by a compiler, which reads the source code and produces an executable file containing machine code specific to the target CPU architecture. For instance, a program compiled for an Intel x86 processor will not run natively on an ARM-based chip found in many smartphones or embedded systems without recompilation or emulation. Interpreters take a different route: the interpreter is itself a machine-code program that reads the source (or an intermediate bytecode) and carries out each statement at runtime, which offers flexibility but usually costs performance. Either way, the process bridges the semantic gap between human-centric programming and machine-centric execution, and everything the CPU ultimately runs is machine code.
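Python’s standard `dis` module offers a convenient, if indirect, way to watch this kind of lowering happen. One caveat: CPython compiles source to bytecode for its own virtual machine, not to CPU machine code, so the sketch below is only an analogy for what a C++ compiler does when it targets x86 or ARM. The function `add` and the helper `lowered_instructions` are made-up names for illustration.

```python
import dis


def add(a, b):
    """A tiny high-level function to translate."""
    return a + b


def lowered_instructions(func):
    """Return the names of the low-level instructions CPython compiled func into."""
    return [ins.opname for ins in dis.get_instructions(func)]


if __name__ == "__main__":
    # The exact instruction names differ between Python versions.
    for name in lowered_instructions(add):
        print(name)
    # The encoded form is a plain byte string, much as machine code is.
    print(add.__code__.co_code.hex(" "))
```

The single line `return a + b` becomes several simpler steps (load the operands, add, return), which is the same kind of expansion a native compiler performs on its way to machine code.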

Binary Language and CPU Execution

The ultimate form of machine code is binary: a sequence of 0s and 1s. This binary representation is not arbitrary; it corresponds directly to the electrical states within a computer’s circuitry (e.g., high voltage vs. low voltage). Each CPU family (such as x86, ARM, MIPS, or RISC-V) implements its own instruction set architecture (ISA), which defines the complete set of machine code instructions that the CPU can execute.
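To make the link between instructions and bits concrete, the short sketch below prints two x86 instructions as the zeros and ones the CPU actually receives. The byte values are the standard x86 encodings of `mov eax, 42` and `ret`; the helper name `to_bits` is an illustrative choice.

```python
def to_bits(code: bytes) -> str:
    """Render each byte as eight binary digits, separated by spaces."""
    return " ".join(format(byte, "08b") for byte in code)


# x86 encoding of `mov eax, 42`: opcode 0xB8, then a 32-bit little-endian constant.
MOV_EAX_42 = bytes([0xB8, 0x2A, 0x00, 0x00, 0x00])
# x86 encoding of `ret` (near return): the single byte 0xC3.
RET = bytes([0xC3])

if __name__ == "__main__":
    print(to_bits(MOV_EAX_42))  # 10111000 00101010 00000000 00000000 00000000
    print(to_bits(RET))         # 11000011
```

These bit patterns only mean "move" and "return" to an x86 processor; a CPU with a different ISA would decode the same bytes as something else entirely.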

When a program runs, the CPU fetches these binary instructions from memory, decodes them, and then executes them. In the classic five-stage pipeline model, this process is broken down as follows:

- Fetch: Retrieve the next instruction from memory.

- Decode: Interpret what the instruction means (e.g., “add these two numbers,” “load data from this memory location,” “jump to this part of the code”).

- Execute: Perform the operation specified by the instruction.

- Memory Access: Read from or write to memory if the instruction requires it.

- Write Back: Store the result of the operation in a register. (Results destined for memory are written during the Memory Access step.)

This cycle happens millions or billions of times per second, making up the fabric of all digital computation. Understanding that every single action, from rendering a high-resolution drone image to calculating a flight correction, boils down to these fundamental binary operations provides critical insight into the limits and capabilities of any computing system.
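The stages listed above can be sketched as a toy interpreter loop. Everything here is hypothetical: the 16-bit instruction format, the five opcodes, and the names `encode`, `run`, and `PROGRAM` are invented for illustration and do not correspond to any real ISA. The loop also handles one instruction at a time, whereas a real pipelined CPU overlaps the stages of several instructions.

```python
# Hypothetical 16-bit instruction word: [opcode:4][reg:4][operand:8]
HALT, LOADI, LOAD, ADD, STORE = range(5)


def encode(opcode, reg=0, operand=0):
    """Pack the three fields into one 16-bit instruction word."""
    return (opcode << 12) | (reg << 8) | operand


def run(program, data_size=16):
    """Run a list of instruction words; return (registers, data memory)."""
    regs = [0] * 16
    data = [0] * data_size
    pc = 0  # program counter: index of the next instruction
    while True:
        # Fetch: read the next instruction word.
        word = program[pc]
        pc += 1
        # Decode: split the word into its fields.
        opcode = (word >> 12) & 0xF
        reg = (word >> 8) & 0xF
        operand = word & 0xFF
        if opcode == HALT:
            break
        # Execute / Memory Access: compute a result or touch data memory.
        result = None
        if opcode == LOADI:
            result = operand                      # constant from the instruction
        elif opcode == LOAD:
            result = data[operand]                # read from data memory
        elif opcode == ADD:
            result = (regs[reg] + regs[operand & 0xF]) & 0xFFFF
        elif opcode == STORE:
            data[operand] = regs[reg]             # write to data memory
        else:
            raise ValueError(f"unknown opcode {opcode}")
        # Write Back: store the result in the destination register.
        if result is not None:
            regs[reg] = result
    return regs, data


PROGRAM = [
    encode(LOADI, 1, 40),  # R1 = 40
    encode(LOADI, 2, 2),   # R2 = 2
    encode(ADD, 1, 2),     # R1 = R1 + R2
    encode(STORE, 1, 0),   # data[0] = R1
    encode(HALT),
]

if __name__ == "__main__":
    regs, data = run(PROGRAM)
    print(regs[1], data[0])  # prints: 42 42
```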

Anatomy of a Machine Instruction

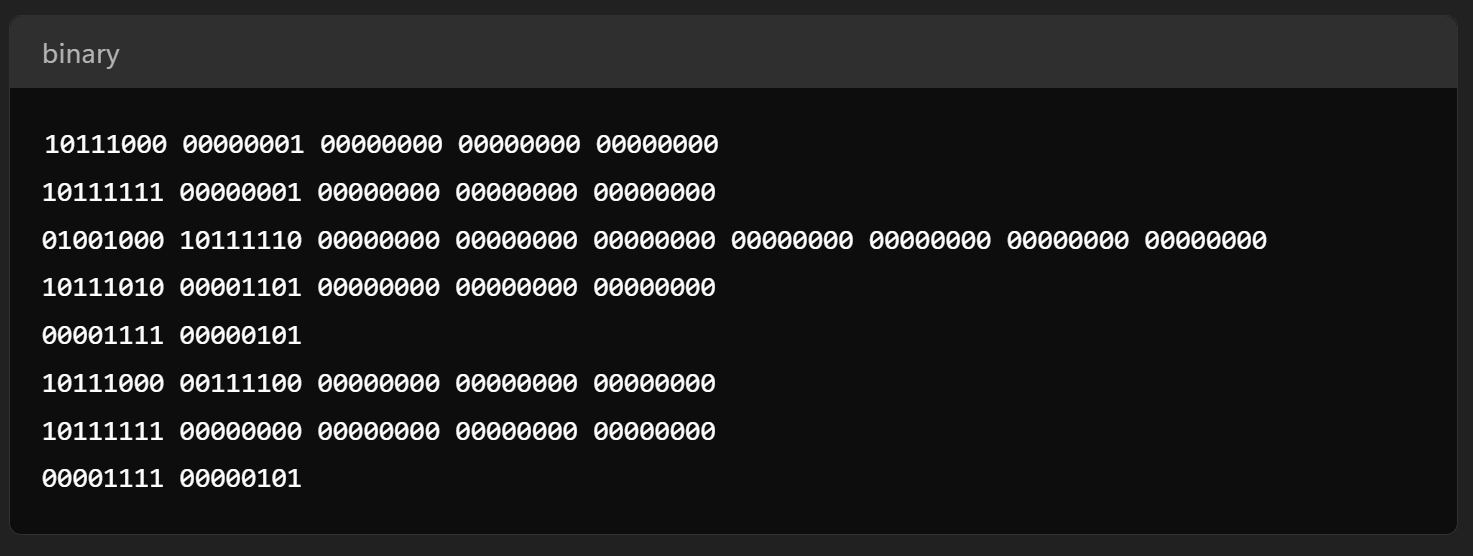

While machine code might appear as an intimidating stream of binary digits, individual instructions possess a structured format that dictates their purpose and operation. Breaking down these instructions reveals the precision with which a CPU performs its tasks.

Opcodes and Operands: The Building Blocks

Every machine instruction is typically composed of two primary components: the opcode and the operands.

- Opcode (Operation Code): This is the part of the instruction that specifies the operation to be performed. For example, there’s an opcode for “add,” another for “subtract,” one for “move data,” one for “jump to a different part of the program,” and so on. Each specific operation the CPU can perform has a unique binary opcode associated with it. When the CPU decodes an instruction, the opcode tells it what to do.

- Operands: These are the data or memory addresses that the operation will act upon. If the opcode is “add,” the operands would specify which two numbers to add and where to store the result. Operands can be registers (small, fast storage locations directly on the CPU), immediate values (constants embedded within the instruction itself), or memory addresses (locations in RAM where data is stored). The number and type of operands vary depending on the instruction and the CPU’s ISA.

For example, a simplified machine instruction might look like 00101000 01100010 00000011. In this made-up encoding, the first byte (00101000) could be the opcode for “ADD,” while the remaining bits (01100010 and 00000011) would encode the operands: which registers hold the two numbers to add (e.g., R2 and R3) and which register receives the sum (e.g., R1). A real ISA specifies exactly which bit positions carry each of these fields.
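Packing an opcode and its operands into bit fields, and unpacking them again, takes only a few shifts and masks. The sketch below assumes a toy 16-bit format of its own, `[opcode:4][rd:4][rs1:4][rs2:4]`, which is separate from the bit pattern in the paragraph above; the opcode values and the names `Instruction`, `encode`, and `decode` are likewise hypothetical.

```python
from typing import NamedTuple

# Hypothetical opcode values for a toy 16-bit format: [opcode:4][rd:4][rs1:4][rs2:4]
OPCODES = {"ADD": 0b0010, "SUB": 0b0011}
MNEMONICS = {value: name for name, value in OPCODES.items()}


class Instruction(NamedTuple):
    mnemonic: str  # operation name, e.g. "ADD"
    rd: int        # destination register number
    rs1: int       # first source register number
    rs2: int       # second source register number


def encode(ins: Instruction) -> int:
    """Pack an instruction into a 16-bit word."""
    for reg in (ins.rd, ins.rs1, ins.rs2):
        if not 0 <= reg <= 15:
            raise ValueError(f"register number out of range: {reg}")
    return (OPCODES[ins.mnemonic] << 12) | (ins.rd << 8) | (ins.rs1 << 4) | ins.rs2


def decode(word: int) -> Instruction:
    """Unpack a 16-bit word into its opcode and operand fields."""
    return Instruction(
        MNEMONICS[(word >> 12) & 0xF],
        (word >> 8) & 0xF,
        (word >> 4) & 0xF,
        word & 0xF,
    )


if __name__ == "__main__":
    word = encode(Instruction("ADD", rd=1, rs1=2, rs2=3))  # R1 = R2 + R3
    print(format(word, "016b"))  # 0010000100100011
    print(decode(word))
```

Fixed-width fields like these keep decoding simple, which is one reason many RISC-style ISAs favor them; variable-length encodings such as x86 trade that simplicity for compactness.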

Addressing Modes and Data Manipulation

Beyond opcodes and operands, understanding how CPUs access data is crucial. Addressing modes define the ways in which an instruction can specify where its operand is: inside the instruction itself, in a register, or at a memory address that the CPU calculates. Common addressing modes include:

- Immediate Addressing: The operand value is directly specified in the instruction itself.

- Register Addressing: The operand is stored in a CPU register. Because no memory access is needed, this is among the fastest ways to reach an operand.

- Direct Addressing: The instruction contains the full memory address of the operand.

- Indirect Addressing: The instruction specifies a register or memory location that contains the address of the operand.

- Indexed/Base-Register Addressing: An offset is added to a base register’s value to find the operand’s address, useful for array access.

These addressing modes give the CPU flexibility and efficiency in manipulating data. Complex data structures, large arrays used in mapping algorithms, or even the rapidly changing sensor data from a drone’s stabilization system are all accessed and processed using these fundamental mechanisms. The efficiency of data manipulation at this level directly impacts the overall performance and responsiveness of any technological system.
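The addressing modes listed above differ only in how the operand is located, which a small lookup function can make visible. This is a simplified model with assumed names (`Mode`, `fetch_operand`, `REGS`, `MEMORY`); registers and memory are plain Python lists, and the indirect mode is shown only in its register-indirect form.

```python
from enum import Enum, auto


class Mode(Enum):
    IMMEDIATE = auto()
    REGISTER = auto()
    DIRECT = auto()
    INDIRECT = auto()
    INDEXED = auto()


def fetch_operand(mode, value, regs, memory, offset=0):
    """Return the operand selected by `mode`.

    `value` is the field taken from the instruction: a constant,
    a register number, or a memory address, depending on the mode.
    """
    if mode is Mode.IMMEDIATE:
        return value                         # the constant itself
    if mode is Mode.REGISTER:
        return regs[value]                   # contents of the register
    if mode is Mode.DIRECT:
        return memory[value]                 # memory at the given address
    if mode is Mode.INDIRECT:
        return memory[regs[value]]           # the register holds the address
    if mode is Mode.INDEXED:
        return memory[regs[value] + offset]  # base register plus offset
    raise ValueError(f"unsupported mode: {mode}")


REGS = [0, 4, 0, 0]                        # R1 holds the address 4
MEMORY = [10, 11, 12, 13, 14, 15, 16, 17]  # eight words of toy memory

if __name__ == "__main__":
    print(fetch_operand(Mode.IMMEDIATE, 7, REGS, MEMORY))          # 7
    print(fetch_operand(Mode.REGISTER, 1, REGS, MEMORY))           # 4
    print(fetch_operand(Mode.DIRECT, 2, REGS, MEMORY))             # 12
    print(fetch_operand(Mode.INDIRECT, 1, REGS, MEMORY))           # 14
    print(fetch_operand(Mode.INDEXED, 1, REGS, MEMORY, offset=2))  # 16
```

The indexed case is the one behind array access: the base register points at the start of the array and the offset selects the element.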

Why Machine Code Matters in Modern Tech & Innovation

While high-level languages dominate software development, machine code remains highly relevant, particularly at the cutting edge of tech and innovation. It matters most where performance, efficiency, and direct hardware control are paramount, such as AI, autonomous systems, and specialized hardware design.

Performance and Efficiency: The Need for Speed

In domains like autonomous flight, real-time AI decision-making, or high-fidelity remote sensing, every nanosecond counts. High-level languages, by design, introduce layers of abstraction that can sometimes lead to less-than-optimal machine code generation by compilers. While compilers are highly sophisticated, they cannot always produce code that is as finely tuned as hand-optimized machine code (or its human-readable counterpart, assembly language) crafted by an expert programmer.

Understanding machine code allows developers to write code that directly leverages specific CPU features, minimizes unnecessary operations, and optimizes memory access patterns. This capability is critical for:

- Embedded Systems: Devices with limited processing power and memory, like drone flight controllers or specialized sensors, where every byte of code and every CPU cycle is precious.

- Real-time Systems: Applications where operations must complete within strict time constraints, such as the guidance system of a racing drone or an obstacle avoidance system.

- Performance-Critical Libraries: Core algorithms in areas like AI (e.g., neural network inference engines) or graphics processing often have segments optimized at the assembly/machine code level to extract maximum performance.

Embedded Systems and Resource Constraints

Many modern innovations, especially in robotics and IoT, rely on embedded systems. These are specialized computer systems designed to perform dedicated functions, often with tight constraints on cost, power consumption, and physical size. Think of the tiny computers inside a micro-drone, an AI-powered smart camera, or a specialized remote sensing unit.

In these resource-constrained environments, the directness of machine code is invaluable. Developers working with embedded systems often need to:

- Directly control hardware: Access specific registers or memory-mapped I/O ports to interact with sensors, motors, or communication modules without the overhead of operating system layers.

- Optimize memory footprint: Write compact code that fits into limited ROM/RAM, as every additional instruction or variable consumes precious resources.

- Manage power consumption: Craft code that puts the CPU into low-power states or carefully schedules operations to conserve battery life, crucial for extended drone flight times or remote deployments.

Understanding machine code enables precise control over these factors, which is essential for the practical implementation and long-term viability of many innovative technologies.
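Direct hardware control usually comes down to setting and clearing individual bits in a control register. The sketch below simulates that on an ordinary integer; the 8-bit register layout (`MOTOR_ENABLE`, `SENSOR_ENABLE`, `SLEEP`) is invented for illustration, and on a real microcontroller the same read-modify-write pattern would typically be written in C or assembly against a memory-mapped address taken from the chip’s datasheet.

```python
# Hypothetical bit layout of an 8-bit control register.
MOTOR_ENABLE = 1 << 0   # bit 0: drive the motor
SENSOR_ENABLE = 1 << 1  # bit 1: power the sensor
SLEEP = 1 << 7          # bit 7: request a low-power state


def set_bits(reg: int, mask: int) -> int:
    """Return reg with every bit in mask switched on."""
    return (reg | mask) & 0xFF


def clear_bits(reg: int, mask: int) -> int:
    """Return reg with every bit in mask switched off."""
    return reg & ~mask & 0xFF


def is_set(reg: int, mask: int) -> bool:
    """True if every bit in mask is on in reg."""
    return (reg & mask) == mask


if __name__ == "__main__":
    ctrl = 0
    ctrl = set_bits(ctrl, MOTOR_ENABLE | SENSOR_ENABLE)
    ctrl = clear_bits(ctrl, MOTOR_ENABLE)  # stop the motor, keep the sensor on
    ctrl = set_bits(ctrl, SLEEP)           # ask for the low-power state
    print(format(ctrl, "08b"))             # 10000010
```

Each helper corresponds to one or two machine instructions (an OR, or an AND with an inverted mask), which is why this style of code is so compact on constrained hardware.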

Security, Debugging, and Reverse Engineering

Beyond performance, machine code plays a vital role in ensuring the security and reliability of digital systems.

- Security: Attackers often analyze machine code (or assembly) to find vulnerabilities, understand how software functions, or reverse engineer proprietary algorithms. Conversely, security professionals use the same techniques to analyze malware, patch vulnerabilities, and verify software integrity. In sensitive applications like autonomous vehicles or critical infrastructure, understanding what the underlying machine code is actually doing is paramount for preventing exploits.

- Debugging: When a high-level program crashes or misbehaves, understanding its corresponding machine code can be crucial for deep-level debugging. Tools like debuggers allow developers to step through machine instructions, inspect register values, and observe memory states, providing insights that high-level debugging might miss, especially when dealing with race conditions or hardware-related issues.

- Reverse Engineering: For proprietary systems where source code isn’t available, reverse engineering the machine code is the primary method to understand its functionality. This can be for interoperability, security analysis, or competitive analysis – a critical skill in understanding the inner workings of cutting-edge tech.
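The first step in most of these tasks is simply looking at the raw bytes. Below is a minimal hex dump helper; the name `hexdump` and its parameters are illustrative choices, and the sample bytes are the x86 encodings of `mov eax, 42` followed by `ret`. Dedicated tools such as disassemblers and debuggers go much further by translating the bytes back into assembly mnemonics.

```python
def hexdump(data: bytes, width: int = 8, base: int = 0) -> list:
    """Format bytes as lines of 'address  hex bytes', `width` bytes per line."""
    lines = []
    for offset in range(0, len(data), width):
        chunk = data[offset:offset + width]
        hex_part = " ".join(f"{byte:02x}" for byte in chunk)
        lines.append(f"{base + offset:08x}  {hex_part}")
    return lines


# x86 machine code for `mov eax, 42` followed by `ret`.
SAMPLE = bytes.fromhex("b82a000000c3")

if __name__ == "__main__":
    # `base` stands in for the address at which the code would be loaded.
    for line in hexdump(SAMPLE, width=4, base=0x1000):
        print(line)
```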

The Future of Machine Code and Low-Level Programming

The relevance of machine code is not diminishing but evolving. As technology pushes boundaries, the interaction between high-level concepts and low-level execution becomes ever more critical, particularly with the emergence of specialized hardware and advanced AI.

The Rise of Specialized Architectures

The era of one-size-fits-all CPU architectures is giving way to an explosion of specialized hardware. Graphics Processing Units (GPUs) for parallel computation, Field-Programmable Gate Arrays (FPGAs) for custom logic, and increasingly, Application-Specific Integrated Circuits (ASICs) designed for AI workloads (e.g., Google’s TPUs, NVIDIA’s AI inference chips) are becoming commonplace. GPUs and AI accelerators have their own instruction sets and, consequently, their own machine code, while FPGAs are configured with a bitstream that defines the logic itself rather than a sequence of instructions.

Designing and programming these specialized architectures often requires a deeper understanding of their underlying machine code or low-level assembly to unlock their full potential. Projects like RISC-V, an open-standard instruction set architecture, are democratizing the design of custom processors, further emphasizing the importance of understanding the fundamental building blocks of computation. This trend means that while most developers won’t write machine code daily, the principles of efficient low-level execution are becoming increasingly vital for engineers driving innovation in these areas.

The Interplay with AI and Automation

Perhaps paradoxically, Artificial Intelligence itself is beginning to interact with and even generate machine code.

- AI for Compiler Optimization: Machine-learning techniques can analyze code patterns and guide optimization decisions, in some cases producing more efficient machine code than a traditional compiler’s fixed heuristics.

- AI for Hardware Design: AI is being used to design custom chip architectures, where the design process implicitly involves defining new instruction sets and their corresponding machine code.

- AI for Code Generation: While nascent, the idea of AI generating highly optimized, even assembly or machine code-level, implementations for specific tasks is a future possibility. This could allow for unprecedented performance gains in specialized applications.

- Firmware Analysis and Security: AI is also employed in analyzing vast amounts of machine code for security vulnerabilities, reverse engineering, and identifying malicious patterns in firmware, which is critical for protecting the integrity of autonomous systems and IoT devices.

This symbiotic relationship suggests that machine code, far from becoming obsolete, will remain a cornerstone, influencing and being influenced by the very technologies it enables.

Conclusion

Machine code, the binary language directly understood by a computer’s CPU, stands as the invisible backbone of all modern technology. From the intricate computations driving AI-powered autonomous drones to the precise control required for remote sensing and mapping, every sophisticated operation ultimately distills down to a sequence of machine instructions. While abstracted by layers of high-level programming languages and user-friendly interfaces, its fundamental importance endures, particularly in areas of technology and innovation where performance, efficiency, security, and direct hardware control are paramount.

Understanding machine code provides a profound insight into how digital systems truly operate, revealing the logic gates, registers, and memory manipulations that turn abstract algorithms into tangible results. As technology continues its relentless march forward, embracing specialized hardware and more intelligent software, the mastery of these low-level principles will remain a distinguishing characteristic of engineers and innovators pushing the boundaries of what is possible. Machine code is not just a relic of computing’s past; it is the enduring, unseen language that will continue to shape its future.