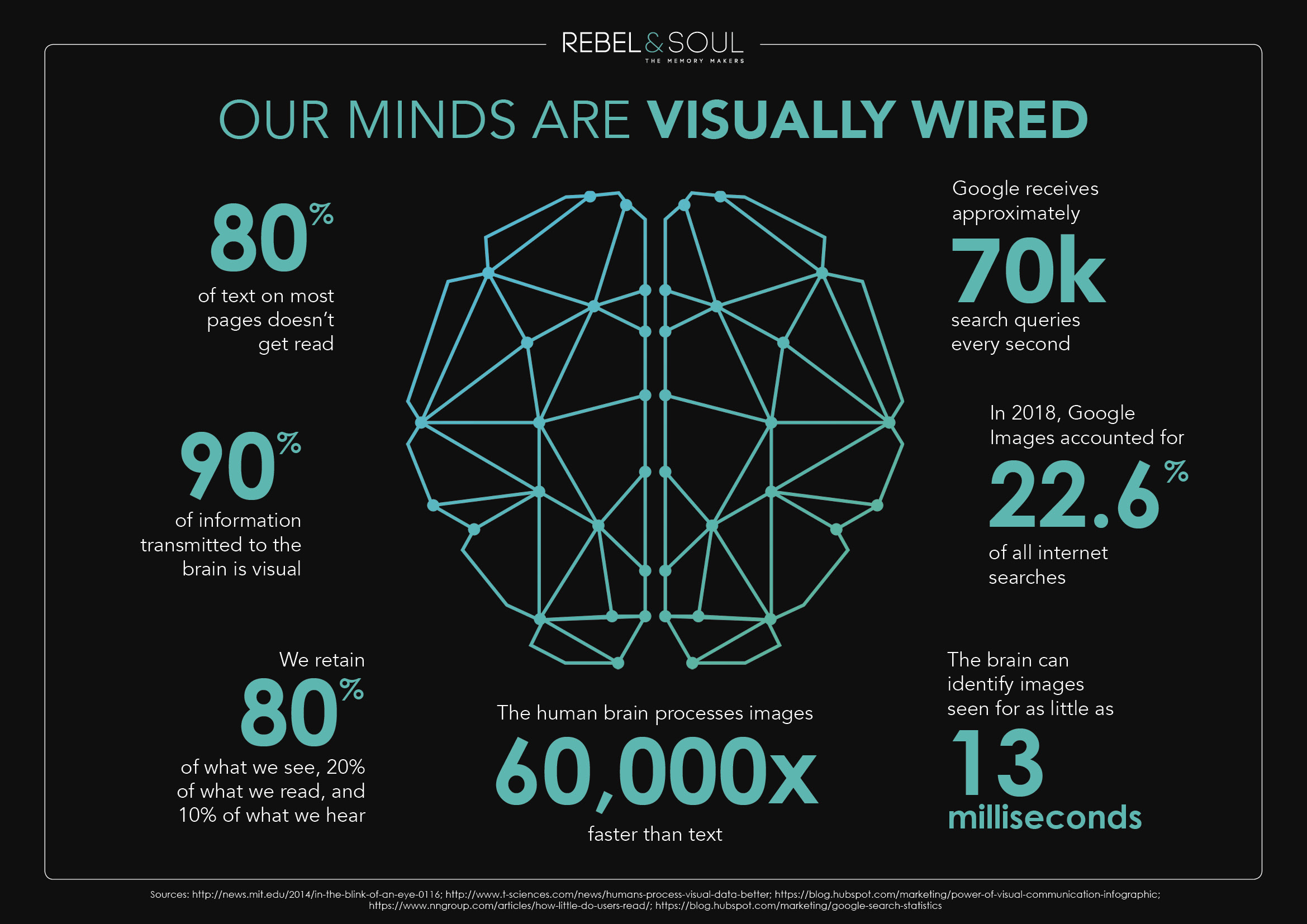

In the world of high-end digital imaging and aerial cinematography, we often obsess over specifications: 4K resolution, 120 frames per second (fps), and bitrates in the hundreds of megabits per second. However, the most critical component of the imaging chain isn’t the CMOS sensor or the image processor; it is the human brain. To understand why we demand high-performance camera systems, we must first answer a fundamental physiological question: how often does your brain update what you are seeing?

Understanding the “refresh rate” of human consciousness is not merely a neurological curiosity. For professionals in the imaging industry, it is the blueprint for creating immersive, realistic, and effective visual content. By aligning camera technology with the biological limitations and strengths of the human eye, we can push the boundaries of what is possible in digital storytelling.

The Physiological “Frame Rate”: Understanding Human Visual Perception

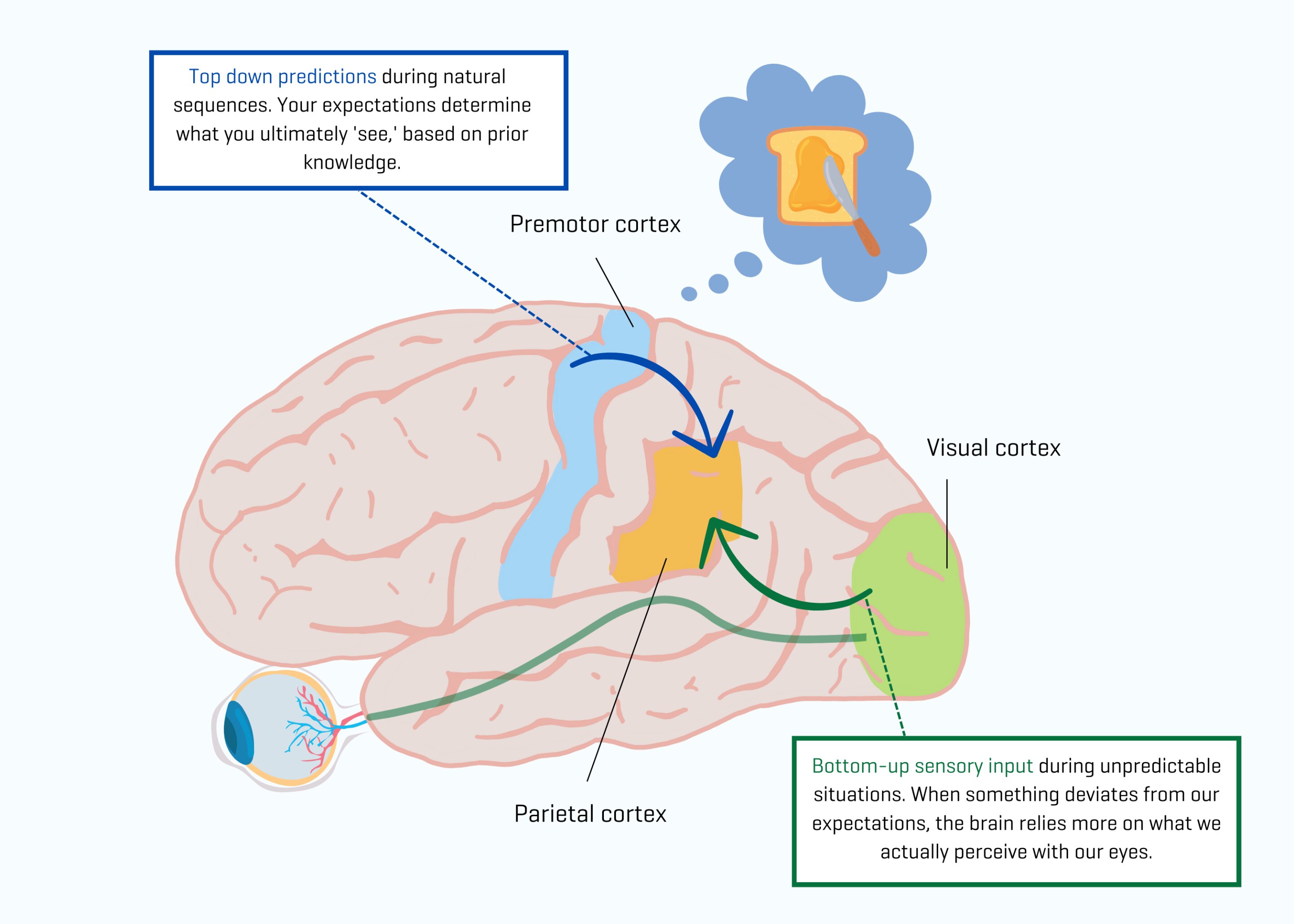

The human eye does not operate like a digital camera. While a camera captures a series of discrete, static images and strings them together, the brain perceives the world as a continuous flow of information. However, this flow is an illusion constructed by the visual cortex through a process of rapid sampling and integration.

The Flicker Fusion Threshold

One of the most important concepts in imaging is the “Flicker Fusion Threshold.” This is the frequency at which an intermittent light stimulus appears completely steady to the average human observer. For most people under typical viewing conditions, this threshold sits between 50 and 60 Hertz (Hz), although it rises with brightness and in peripheral vision. This is why mains-powered lightbulbs, whose output pulses at twice the frequency of the power grid (100 or 120 Hz), appear to be constantly on. In the context of imaging, this threshold helps explain why 60fps video appears significantly “smoother” than 24fps: it nears the point where the brain can no longer distinguish between individual frames, even during rapid motion.

Latency and the Brain’s Processing Speed

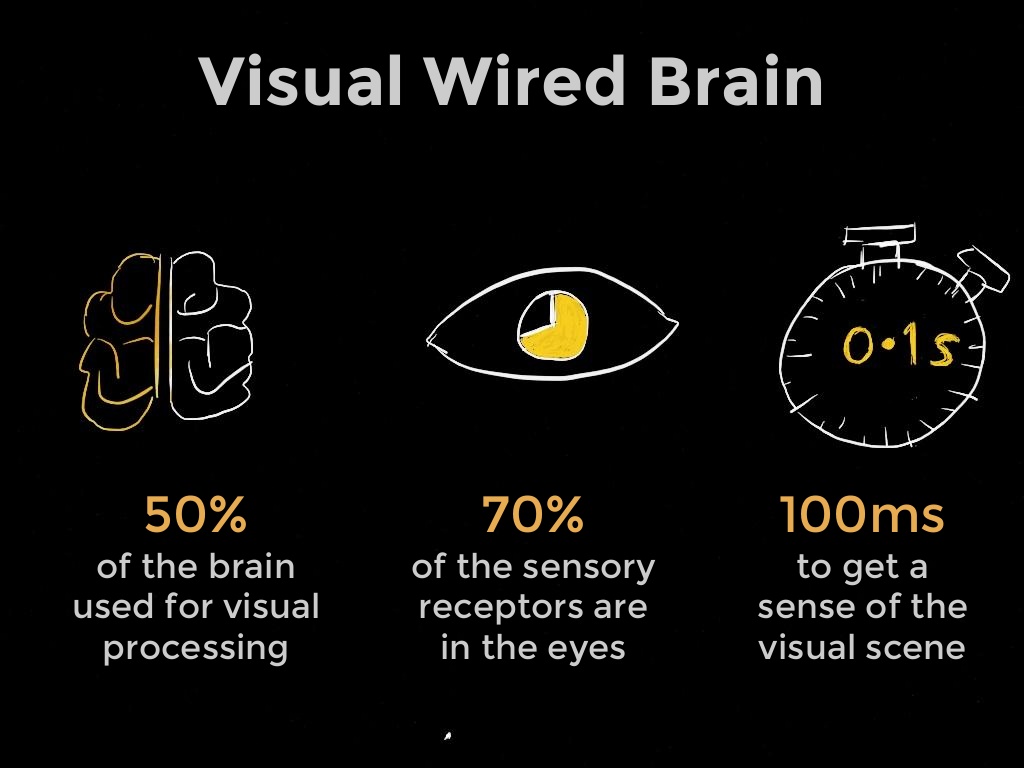

While we can detect flicker at high frequencies, our ability to process complex changes, such as identifying a specific object moving through a frame, is slower. Research suggests that the brain takes approximately 13 to 80 milliseconds to process an image. In First-Person View (FPV) imaging and remote camera operation, the delay the equipment adds on top of this, from the moment light reaches the sensor to the moment the image appears on the display, is known as “end-to-end latency.” If a camera system introduces more than 30-40ms of delay, it begins to conflict with the brain’s internal update cycle, leading to a “disconnected” feeling or, in some cases, motion sickness.

Bridging the Gap: How Digital Imaging Syncs with Human Biology

Modern camera systems are designed to exploit the quirks of human vision. To create a “cinematic” look, we often intentionally slow down the capture rate, whereas to create a “realistic” look, we accelerate it. This choice is dictated by how the brain interprets motion blur and detail.

Frame Rates (FPS) vs. Refresh Rates (Hz)

It is a common misconception that the brain cannot see past 60fps. In reality, the human visual system can detect artifacts and subtle motion improvements at much higher rates, up to 500Hz in certain conditions. This is why high-end imaging systems now offer 120fps and 240fps modes. When these frames are played back on a display with a matching high refresh rate (Hz), the brain receives more “updates” per second, which reduces the “stroboscopic effect” (the choppy look of fast-moving objects). For imaging professionals, higher frame rates provide the temporal resolution the brain needs to track movement without visual fatigue.

The “Soap Opera Effect” and Hyper-Realism

A common explanation for why 24fps remains the standard for cinema is that, at typical shutter settings, it produces roughly the amount of motion blur the brain expects when it isn’t focusing on a single, sharp point. When we increase the frame rate to 60fps or higher, we enter the realm of “hyper-realism.” The brain receives so much information that the image begins to look “too real,” a look often referred to as the “Soap Opera Effect.” Understanding the brain’s update cycle allows cinematographers to choose the right frame rate for the intended emotional response: 24fps for storytelling and 60fps+ for sports, action, or immersive FPV applications.

FPV Systems and the Criticality of Real-Time Visual Updates

In the niche of FPV (First-Person View) imaging, the relationship between the brain’s update rate and the camera’s output is a matter of precision and safety. When a pilot is navigating a camera-equipped UAV through a complex environment, the “hand-eye-brain” loop must be as tight as possible.

Reducing Signal Latency for Immersive Flight

When the brain commands or senses a movement but the eyes see a delayed version of it, a sensory mismatch often described as “vestibular-ocular conflict” occurs. This is widely regarded as a primary cause of nausea in VR and FPV systems. To counter this, modern digital FPV systems focus on “constant latency” transmission, keeping the delay both low and steady from frame to frame. By ensuring that the camera captures and transmits images fast enough to match the brain’s expectation for real-time feedback (typically under 28ms), imaging technology allows the brain to “forget” it is looking at a screen and instead accept the digital feed as its primary reality.
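To make the latency target concrete, here is a minimal sketch of an end-to-end latency budget. The stage names and per-stage delays are hypothetical placeholders chosen for illustration, not measurements from any real FPV system; only the 28ms target comes from the text above.

```python
# Hypothetical per-stage delays in milliseconds.
# Real values depend entirely on the camera, link, and goggles in use.
STAGES_MS = {
    "sensor_exposure_and_readout": 8.0,
    "encode": 5.0,
    "radio_link": 6.0,
    "decode": 4.0,
    "display_scanout": 4.0,
}

BUDGET_MS = 28.0  # the "under 28ms" target quoted in the text


def total_latency_ms(stages):
    """Sum the per-stage delays into one end-to-end figure."""
    return sum(stages.values())


def within_budget(stages, budget_ms=BUDGET_MS):
    """True when the summed delay stays under the budget."""
    return total_latency_ms(stages) < budget_ms


if __name__ == "__main__":
    total = total_latency_ms(STAGES_MS)
    print(f"end-to-end: {total:.1f} ms, within budget: {within_budget(STAGES_MS)}")
```

Laying the chain out stage by stage shows that no single component has to be slow for the total to exceed the target; a few extra milliseconds in each stage is enough.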

Ocular Strain and Digital Smoothing

Low-quality imaging systems often suffer from “frame drops” or “micro-stutter.” Because the brain is conditioned to expect a consistent update interval from the environment, these digital hiccups cause significant ocular strain. Advanced image processors now use algorithms to smooth out these transitions. By maintaining a rock-solid frame delivery, the camera system reduces the cognitive load on the pilot or operator, allowing for longer sessions and more complex maneuvers without mental fatigue.
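As a rough illustration of what “micro-stutter” means in measurable terms, the sketch below flags frames that arrive noticeably later than the nominal interval. The function names and the 50% tolerance are assumptions made for this example; they are not taken from any particular image processor.

```python
def frame_intervals_ms(timestamps_ms):
    """Gaps between consecutive frame arrival times."""
    return [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]


def find_stutters(timestamps_ms, fps, tolerance=0.5):
    """Return the indices of frames that arrived late.

    A frame counts as late when the gap before it exceeds the nominal
    frame interval by more than `tolerance` (0.5 means 50% over, an
    assumed threshold for illustration).
    """
    nominal = 1000.0 / fps
    limit = nominal * (1.0 + tolerance)
    gaps = frame_intervals_ms(timestamps_ms)
    return [i + 1 for i, gap in enumerate(gaps) if gap > limit]


if __name__ == "__main__":
    # Arrival times for a nominal 60fps feed with one dropped frame.
    arrivals = [0, 17, 33, 50, 83, 100]
    print(find_stutters(arrivals, fps=60))
```

In this sample the gap before the fifth frame is about twice the nominal interval, which is the signature of a single dropped frame.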

High-Resolution Imaging and the Limits of Human Detail Retention

While temporal resolution (how often the brain updates) is one side of the coin, spatial resolution (how much detail is in each update) is the other. The brain does not see the entire world in “4K.” Our central vision (the fovea) is high-resolution, while our peripheral vision is much lower.

Spatial vs. Temporal Resolution

There is a trade-off in imaging technology between how many pixels we can capture (spatial) and how fast we can capture them (temporal). Because the brain updates its “mental map” of a scene frequently, a fast-moving subject can look sharper in 1080p/60fps video than in 4K/24fps video, even though each 4K frame holds more pixels. The brain integrates the additional temporal samples to fill in the gaps between frames. For high-action imaging, prioritizing frame rate over raw pixel count often results in a perceptually “sharper” image for the viewer.
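The trade-off can be put into numbers with a short sketch. It compares raw pixel throughput for the two formats and how far a subject jumps between frames if it crosses the full frame width in one second. The one-second crossing time is an assumed scenario, and the arithmetic describes sampling density only; it does not model perception.

```python
def pixels_per_second(width, height, fps):
    """Raw pixel throughput of a video format."""
    return width * height * fps


def displacement_per_frame(frame_width_px, crossing_time_s, fps):
    """Pixels a subject moves between frames when it crosses the
    full frame width in `crossing_time_s` seconds."""
    return frame_width_px / (crossing_time_s * fps)


if __name__ == "__main__":
    formats = {
        "1080p/60fps": (1920, 1080, 60),
        "4K/24fps": (3840, 2160, 24),
    }
    for name, (w, h, fps) in formats.items():
        jump = displacement_per_frame(w, 1.0, fps)
        print(
            f"{name}: {pixels_per_second(w, h, fps):,} px/s, "
            f"{jump:.0f} px jump per frame ({jump / w:.1%} of frame width)"
        )
```

Under these assumptions the 4K/24fps clip carries more pixels per second, yet the subject leaps about 4.2% of the frame width between samples, compared with about 1.7% at 1080p/60fps.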

Why 60fps is the Gold Standard for Modern Aerial Cinematography

For many years, 30fps was the standard for digital video. However, as screen sizes have grown and our understanding of visual processing has evolved, 60fps has become the benchmark for professional imaging. At 60fps, the camera provides an update roughly every 16.7 milliseconds (1000 ÷ 60). This sits near the fast end of the 13 to 80 millisecond processing range cited earlier. For aerial shots, where the entire frame is often in motion, 60fps provides the fluid motion needed to keep the brain from noticing the transition between frames, resulting in a seamless visual experience.
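The per-frame interval follows directly from the frame rate: divide 1000 milliseconds by the number of frames per second. The short sketch below tabulates it for the frame rates mentioned in this article.

```python
def frame_interval_ms(fps):
    """Time between consecutive frames, in milliseconds."""
    return 1000.0 / fps


if __name__ == "__main__":
    for fps in (24, 30, 60, 120, 240):
        print(f"{fps:>3} fps -> {frame_interval_ms(fps):.2f} ms per frame")
```

Seen this way, moving from 24fps to 60fps shortens the gap between updates from about 41.67ms to about 16.67ms.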

Future Innovations: Adaptive Imaging and AI-Driven Visual Syncing

As we look toward the future of camera and imaging technology, the goal is to create systems that even more closely mimic the human brain’s own processing methods.

Gaze-Tracking and Variable Rate Shading

In the near future, imaging systems may utilize “foveated rendering” and adaptive capture. By using sensors to track where a human viewer is looking, a camera or display system can allocate more processing power and higher resolution to that specific area, while reducing the resolution and update rate in the periphery. This loosely mirrors how the brain prioritizes the center of the visual field, allowing for very high-resolution imaging without the massive data overhead usually required.
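A gaze-driven allocation scheme of this kind might be sketched as follows. The tier boundaries, resolution scales, and update rates are illustrative assumptions only, as is the `tier_for_tile` helper; real foveated pipelines are considerably more involved.

```python
import math

# Illustrative tiers only:
# (max distance from gaze as a fraction of frame width,
#  resolution scale, update rate in Hz)
TIERS = [
    (0.10, 1.0, 120),       # around the gaze point: full detail
    (0.30, 0.5, 60),        # near periphery
    (math.inf, 0.25, 30),   # far periphery
]


def tier_for_tile(tile_center, gaze, frame_width):
    """Pick a (resolution scale, update rate) pair for an image tile
    based on how far its center lies from the tracked gaze point."""
    dist = math.dist(tile_center, gaze) / frame_width
    for max_dist, scale, rate_hz in TIERS:
        if dist <= max_dist:
            return scale, rate_hz


if __name__ == "__main__":
    gaze = (960, 540)  # viewer looking at the center of a 1920x1080 frame
    for tile in [(960, 540), (1400, 540), (100, 100)]:
        print(tile, tier_for_tile(tile, gaze, frame_width=1920))
```

Only the tiles nearest the gaze point receive full resolution and the highest update rate, which is where the data savings come from.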

The Evolution of Ultra-Low Latency Transmissions

As AI becomes more integrated into image sensors, we are seeing the rise of “predictive frame generation.” By using AI to predict the next few milliseconds of motion, camera systems can theoretically present an image that compensates for the processing delay before the traditional pipeline would have delivered it. While still in its infancy, this technology aims to bring perceived digital imaging latency down to near-zero, closely syncing the camera’s output with the brain’s internal clock.
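The core idea of prediction can be shown with a far simpler stand-in than an AI model: constant-velocity extrapolation of a tracked point. This is a minimal sketch for intuition only. The function name and parameters are hypothetical, and it does not represent how any specific predictive frame generation system works.

```python
def predict_position(prev, curr, frame_dt_ms, lookahead_ms):
    """Extrapolate a tracked point, assuming it keeps the velocity
    it showed over the last frame interval.

    prev, curr   -- (x, y) positions in the previous and current frame
    frame_dt_ms  -- time between those two frames
    lookahead_ms -- how far ahead to predict (the latency to hide)
    """
    vx = (curr[0] - prev[0]) / frame_dt_ms
    vy = (curr[1] - prev[1]) / frame_dt_ms
    return (curr[0] + vx * lookahead_ms, curr[1] + vy * lookahead_ms)


if __name__ == "__main__":
    # A point moved from (100, 200) to (110, 205) in 10 ms;
    # estimate where it will be 20 ms from now.
    print(predict_position((100, 200), (110, 205), 10.0, 20.0))
```

A learned model would replace the constant-velocity assumption with something that copes with acceleration and scene changes, but the goal is the same: show the viewer where things will be rather than where they were.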

In conclusion, the development of modern imaging technology is a constant race to catch up with the human brain. By understanding that our “visual update rate” is a complex mix of flicker fusion, processing latency, and motion integration, engineers can design cameras and FPV systems that feel natural, immersive, and incredibly sharp. Whether it’s the choice between 24fps and 60fps or the implementation of ultra-low latency digital links, every advancement in imaging is ultimately designed to satisfy the most sophisticated image processor in existence: the human mind.