The question “What ethnic do I look like?” has evolved from a casual social inquiry into a hard technical problem. Human observers draw on a lifetime of social context to guess at heritage; Unmanned Aerial Vehicles (UAVs) equipped with Artificial Intelligence (AI) and Computer Vision attempt an answer through biometrics and statistical pattern recognition. A drone’s ability to detect, categorize, and analyze human features is one of the most demanding applications of edge computing and neural networks.

This article explores the sophisticated intersection of drone technology and human identification, examining how autonomous systems interpret physical characteristics and the innovative frameworks that allow machines to “see” and “classify” human diversity from hundreds of feet in the air.

The Evolution of Biometric Identification in Drone Technology

The journey from simple motion detection to the granular analysis of human features has been one of the most rapid advancements in the drone industry. Early UAVs were little more than flying cameras, dependent entirely on a human pilot to interpret what the lens was seeing. Today, the integration of powerful On-Board Neural Processing Units (NPUs) has transformed these devices into autonomous analysts.

The Shift from Simple Motion Detection to Advanced Facial Analysis

In the early stages of drone innovation, “computer vision” was limited to detecting contrast and movement. A drone could recognize that a “blob” was moving across a field, but it could not distinguish between a human, an animal, or a swaying tree. As processing moved from ground-based servers onto the drone’s own hardware (edge computing), the depth of analysis possible in flight grew substantially.

Modern drones use Convolutional Neural Networks (CNNs) to perform real-time feature extraction. When a drone’s camera captures an image, the software encodes the detected face or body as a numerical feature vector. Classical approaches to describing “what someone looks like” measure explicit geometry, such as the distance between the eyes, the width of the nasal bridge, and the curvature of the jawline; CNNs instead learn comparable features directly from training data. This transition from “motion tracking” to “feature analysis” is the foundation of modern demographic identification.
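The classical, hand-measured version of this idea can be sketched in a few lines. This is only an illustration under stated assumptions: the landmark names and pixel coordinates are hypothetical and do not come from any particular detector, and a production CNN would learn its features rather than compute ratios like these.

```python
import math

# Hypothetical 2D landmark positions in pixels (x, y). The names are
# illustrative only and are not tied to any real landmark detector.
landmarks = {
    "left_eye": (120.0, 100.0),
    "right_eye": (180.0, 100.0),
    "nose_left": (138.0, 140.0),
    "nose_right": (162.0, 140.0),
    "chin": (150.0, 200.0),
}


def distance(a, b):
    """Euclidean distance between two (x, y) points."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


def geometry_features(points):
    """Return distance ratios normalized by the interocular distance.

    Dividing by the eye-to-eye distance makes the features independent of
    how large the face appears in the image.
    """
    interocular = distance(points["left_eye"], points["right_eye"])
    if interocular == 0:
        raise ValueError("eye landmarks coincide; cannot normalize")
    eye_mid = (
        (points["left_eye"][0] + points["right_eye"][0]) / 2,
        (points["left_eye"][1] + points["right_eye"][1]) / 2,
    )
    return {
        "nose_width_ratio": distance(points["nose_left"], points["nose_right"]) / interocular,
        "face_height_ratio": distance(eye_mid, points["chin"]) / interocular,
    }


features = geometry_features(landmarks)
print(features)
```

Normalizing by the interocular distance is the design choice that matters here: the same face photographed from twice as far away yields the same ratios, which raw pixel distances would not.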

Understanding the Algorithms: How AI Classifies Human Features

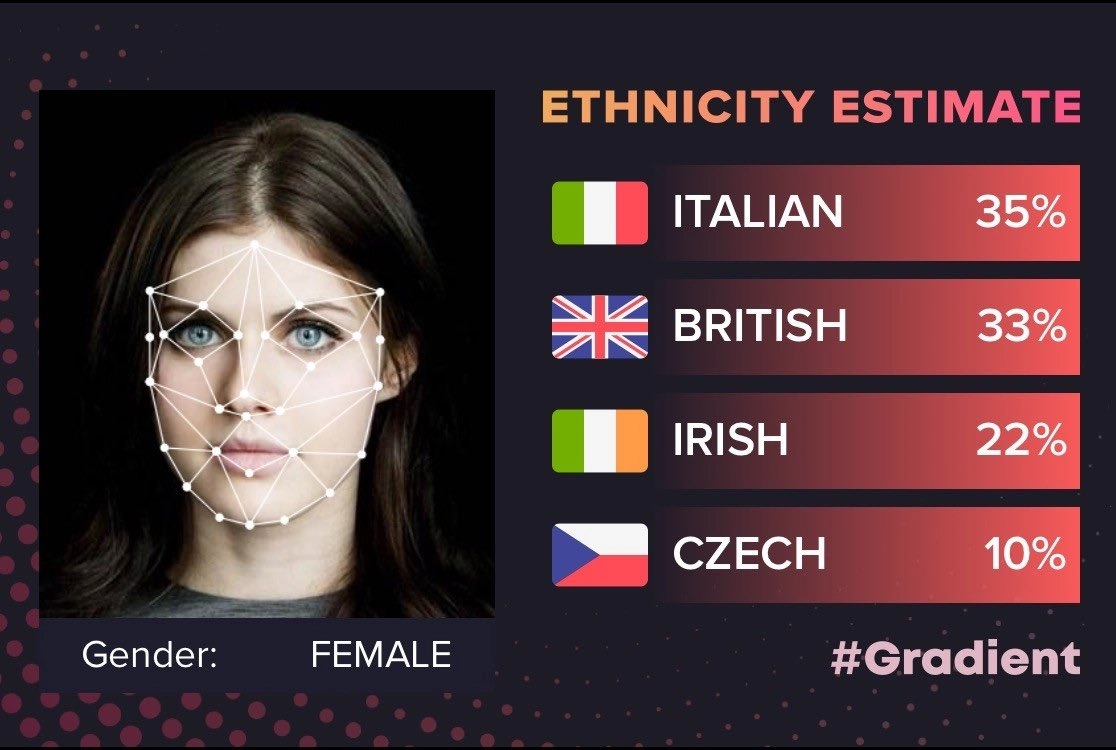

A drone’s AI has no social concept of ethnicity or appearance; it works with a data set composed of “biometric landmarks.” Algorithms are trained on large datasets containing millions of labeled face images from around the globe. Through deep learning, the model learns to associate clusters of physical traits with the geographic or demographic labels attached to its training images, so its output reflects those labels rather than any ground truth about a person’s heritage.

When a drone identifies a subject, it creates a “biometric template.” This template is a compact numerical representation of the face that is designed to be robust to temporary factors like lighting or expression and to capture the underlying geometry. This technology is what allows high-end surveillance and research drones to sort subjects into categories, giving a technical answer to what a subject “looks like” that is a statistical estimate from visual data rather than a certainty.
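One common way to compare two templates is cosine similarity between their feature vectors. The sketch below assumes templates are plain lists of floats; the four-dimensional vectors and the 0.8 threshold are made-up values for illustration, since real templates have hundreds of dimensions and thresholds are tuned per system.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("templates must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("zero-length template")
    return dot / (norm_a * norm_b)


def is_match(probe, enrolled, threshold=0.8):
    """Treat two templates as the same subject if similarity meets the threshold.

    The threshold is an illustrative assumption, not a recommended value.
    """
    return cosine_similarity(probe, enrolled) >= threshold


# Toy templates (hypothetical values).
enrolled = [0.2, 0.9, 0.1, 0.4]
same_subject = [0.25, 0.85, 0.15, 0.35]
other_subject = [0.9, 0.1, 0.4, 0.2]

print(is_match(same_subject, enrolled))
print(is_match(other_subject, enrolled))
```

Raising the threshold reduces false matches at the cost of more missed matches, which is why the result is best read as a probability-like score rather than a definitive identification.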

The Role of Computer Vision in Demographic and Ethnic Identification

At the heart of Tech and Innovation in the drone sector is Computer Vision (CV), the field dedicated to giving machines a high-level understanding of digital images and video. For identifying human characteristics, CV goes beyond simple photography and chains several tasks together: detecting people in the frame, separating them from the background through “semantic segmentation” (assigning every pixel a class label such as person, vegetation, or ground), and then analyzing the isolated region.

Deep Learning and Pattern Recognition in Aerial Imaging

Aerial imaging presents unique challenges for identification. Unlike a stationary security camera at eye level, a drone often views subjects from high angles, at varying distances, and in shifting light, and at altitude a face may span only a handful of pixels. To cope with the viewing angle, drone AI uses “pose-invariant” recognition: features are learned so that they change little as the head or body rotates, and some systems go further by fitting a 3D face or body model to the 2D aerial image to normalize the pose.
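How much distance costs in image detail can be estimated with a simple pinhole-camera calculation. The camera parameters below (13.2 mm sensor width, 8.8 mm focal length, 5472-pixel image width) and the 0.15 m face width are assumed values chosen for illustration, not the specification of any particular drone.

```python
def ground_sample_distance_m(distance_m, sensor_width_mm, focal_length_mm, image_width_px):
    """Metres of scene covered by one pixel at the given camera-to-subject distance.

    Uses the pinhole model and assumes the subject faces the camera squarely.
    """
    pixel_pitch_mm = sensor_width_mm / image_width_px
    return distance_m * pixel_pitch_mm / focal_length_mm


def pixels_on_target(target_width_m, distance_m, sensor_width_mm, focal_length_mm, image_width_px):
    """Number of pixels spanned by a target of the given physical width."""
    gsd = ground_sample_distance_m(distance_m, sensor_width_mm, focal_length_mm, image_width_px)
    return target_width_m / gsd


# Assumed example: a 0.15 m wide face viewed from 60 m (roughly 200 feet).
face_pixels = pixels_on_target(
    target_width_m=0.15,
    distance_m=60.0,
    sensor_width_mm=13.2,
    focal_length_mm=8.8,
    image_width_px=5472,
)
print(f"{face_pixels:.2f} pixels across the face")
```

Under these assumptions the face spans only about nine pixels, which is why aerial systems depend on zoom optics, lower altitude, or whole-body cues rather than facial detail at long range.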

Deep learning models, specifically those using “Attention Mechanisms,” allow the drone to weight the most “discriminative” parts of a human face, meaning the areas that remain most consistent regardless of environment. By analyzing these patterns, the drone can compare a subject’s features against a database of reference phenotypes. This isn’t just about skin tone; the AI relies on the structural geometry of the skull and soft tissue to categorize the subject’s physical appearance.

The Ethical Challenges of Algorithmic “Ethnic” Profiling

As we push the boundaries of what drone AI can do, the tech industry must grapple with the ethical implications of “ethnic” identification. The question “What ethnic do I look like?” takes on a different weight when answered by a surveillance drone. Innovation in this sector is currently focused on “Algorithmic Fairness.”

Developers are working to ensure that the datasets used to train drone AI are diverse enough to prevent “algorithmic bias,” where the AI might misidentify or fail to recognize people from certain backgrounds. The goal of current innovation is to create “Blind Recognition” systems that can identify a specific individual for search and rescue or security purposes without relying on or reinforcing harmful ethnic stereotypes.
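A basic bias audit compares error rates across groups in a labeled evaluation set. The sketch below is a minimal version of that idea; the group names and the trial outcomes are fabricated toy data, and real audits use larger samples and several metrics.

```python
from collections import defaultdict


def error_rate_by_group(records):
    """Compute the fraction of incorrect results per group.

    `records` is an iterable of (group_label, was_correct) pairs.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, was_correct in records:
        totals[group] += 1
        if not was_correct:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


def max_disparity(rates):
    """Gap between the worst and best per-group error rates."""
    return max(rates.values()) - min(rates.values())


# Toy evaluation results (hypothetical): 10 trials per group.
records = (
    [("group_a", True)] * 9 + [("group_a", False)] * 1
    + [("group_b", True)] * 7 + [("group_b", False)] * 3
)

rates = error_rate_by_group(records)
print(rates)
print(max_disparity(rates))
```

A large gap between groups signals that the training data or model needs attention before deployment, even when the overall accuracy looks acceptable.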

Applications of Human-Centric Recognition in Modern UAVs

The ability of a drone to analyze human characteristics isn’t just a technical feat; it has vital real-world applications. From finding a lost hiker to securing large-scale events, the tech behind “what someone looks like” is a life-saving tool.

Search and Rescue: Identifying Specific Traits in Emergency Scenarios

In a Search and Rescue (SAR) mission, time is the most critical factor. When a drone is deployed to find a missing person, rescuers can input specific descriptors into the drone’s AI. These might include height, hair color, and other physical traits. The drone’s “Object Detection” system then scans thousands of acres of terrain, filtering out everything that doesn’t match the biometric profile of the missing individual.

Innovation in this space has led to “Attribute-Based Person Re-Identification” (Re-ID). If a person is lost in a crowded mountainous region, the drone can use its AI to pick out that specific person from their physical characteristics and clothing, even if their face is obscured. This level of precision depends on models that capture fine-grained differences in human appearance.
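Filtering detections against a rescuer-supplied description can be sketched as a simple attribute match. The `Detection` fields, the tolerance, and the confidence cutoff are all hypothetical choices for illustration; a real Re-ID system would compare learned embeddings rather than exact attribute values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """One person detection with estimated attributes (hypothetical schema)."""
    track_id: int
    est_height_cm: float
    jacket_color: str
    confidence: float


def matches_profile(det, height_cm, jacket_color, height_tol_cm=15.0, min_confidence=0.5):
    """Return True if a detection is consistent with the described person.

    Height is compared with a tolerance because estimates from the air are noisy.
    """
    return (
        det.confidence >= min_confidence
        and abs(det.est_height_cm - height_cm) <= height_tol_cm
        and det.jacket_color == jacket_color
    )


detections = [
    Detection(1, 178.0, "red", 0.91),
    Detection(2, 165.0, "blue", 0.88),
    Detection(3, 181.0, "red", 0.42),   # right attributes, low confidence
    Detection(4, 150.0, "red", 0.95),   # right color, height too far off
]

# Missing hiker described as about 180 cm tall, wearing a red jacket.
candidates = [d.track_id for d in detections if matches_profile(d, 180.0, "red")]
print(candidates)
```

In practice the filter would rank candidates for a human operator to review rather than discard everything else outright, since a missed detection in a rescue is costlier than a false alarm.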

Security and Surveillance: Precision Monitoring in Diverse Environments

In the realm of security, “Tech and Innovation” has moved toward “Non-Cooperative Biometrics.” This refers to identifying individuals without their active participation, for example when they are not looking at the camera. By analyzing gait (the way a person walks) and body proportions, a drone can characterize a person from a distance at which facial features are too blurred to use.

This is particularly useful in international border security or large-scale public safety operations. The drone’s ability to categorize human features allows for “automated alerts.” For instance, if a drone is programmed to look for a specific person of interest, it can scan a crowd of thousands and use its “Human Analysis” module to flag individuals who match the physical profile, significantly reducing the workload for human operators.

The Technical Infrastructure Behind High-Fidelity Human Recognition

To achieve the level of analysis required to answer “what someone looks like” from the air, drones require more than just a good camera. They need a sophisticated ecosystem of hardware and software working in perfect synchronization.

Edge Computing and On-Board Neural Processing

One of the most significant innovations in recent years is the move away from the “Cloud” and toward the “Edge.” Processing high-definition video to identify human features requires substantial computational power. In the past, this data had to be streamed to a ground station or cloud server for analysis, introducing latency (delay) and a dependence on the radio link.

Modern “Smart Drones” now feature dedicated AI chips, such as the NVIDIA Jetson series or custom TPUs (Tensor Processing Units). These chips allow the drone to run complex neural networks locally. By processing the “What do I look like?” query directly on the aircraft, the drone avoids the network round trip and can react within a fraction of a second, adjusting its flight path to get a better angle or zooming in to confirm a biometric match without needing a constant data link to a server.
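The latency argument can be made concrete with a rough per-frame budget. Every number below (frame size, link speed, round-trip time, inference times) is an assumed value for illustration; actual figures vary widely with hardware and radio conditions.

```python
def offload_latency_ms(frame_kilobytes, uplink_mbps, network_rtt_ms, server_inference_ms):
    """Estimated time to get a result when the frame is sent off the aircraft.

    Assumes 1 kB = 1000 bytes and 1 Mbps = 1,000,000 bits per second.
    """
    frame_bits = frame_kilobytes * 8000
    transfer_s = frame_bits / (uplink_mbps * 1_000_000)
    return transfer_s * 1000 + network_rtt_ms + server_inference_ms


def onboard_latency_ms(onboard_inference_ms):
    """Estimated time to get a result when the model runs on the drone."""
    return onboard_inference_ms


# Assumed scenario: 500 kB frame, 10 Mbps uplink, 60 ms round trip,
# 15 ms on a fast server versus 45 ms on a slower on-board chip.
offload = offload_latency_ms(500, 10, 60, 15)
onboard = onboard_latency_ms(45)
print(f"offload: {offload:.0f} ms, on-board: {onboard:.0f} ms")
```

Under these assumptions the on-board path wins even though its chip is three times slower than the server, because the radio transfer dominates the offload budget.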

The Integration of Multispectral Imaging and Thermal Sensors

Standard RGB (Red, Green, Blue) cameras are often insufficient for accurate human identification in poor lighting or through foliage. Innovation in “Sensor Fusion” has led to drones that combine standard visual data with thermal and multispectral sensors.

Thermal imaging detects the heat emitted by human skin, while multispectral sensors can pick up “biological signatures,” differences in reflectance that distinguish human tissue from synthetic materials such as masks. When combined with AI, these sensors help the drone detect a subject in total darkness and flag some disguises, although thermal imagery resolves far less facial detail than a visible-light camera. This multi-layered approach to imaging provides a more complete “picture” of a person’s physical makeup than a single photograph could.
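One simple form of sensor fusion is a weighted average of per-sensor detection confidences, with the weights shifting according to ambient light. This is a minimal sketch of that "late fusion" idea under assumed inputs in the range 0 to 1; it is not the method of any specific product, and real systems often fuse at the feature level instead.

```python
def fuse_confidence(rgb_conf, thermal_conf, ambient_light):
    """Blend RGB and thermal detection confidences.

    `ambient_light` runs from 0.0 (total darkness) to 1.0 (full daylight) and
    is used directly as the RGB weight, so thermal dominates at night.
    """
    for name, value in (("rgb_conf", rgb_conf),
                        ("thermal_conf", thermal_conf),
                        ("ambient_light", ambient_light)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    rgb_weight = ambient_light
    return rgb_weight * rgb_conf + (1.0 - rgb_weight) * thermal_conf


# Night: the RGB detector is unsure, the thermal detector is confident.
night_score = fuse_confidence(rgb_conf=0.2, thermal_conf=0.9, ambient_light=0.1)
# Day: the RGB detector is confident, the thermal contrast is weaker.
day_score = fuse_confidence(rgb_conf=0.9, thermal_conf=0.6, ambient_light=0.9)
print(round(night_score, 2), round(day_score, 2))
```

The linear weighting is the simplest possible choice; it keeps the fused score interpretable, at the cost of ignoring cases where both sensors are unreliable at once.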

Future Horizons: Towards Unbiased and Precise Autonomous Identification

The future of drone technology lies in the refinement of these identification systems to be more accurate, more ethical, and more autonomous. We are moving toward a world where the “Tech and Innovation” within a drone will understand human diversity better than ever before.

Refining the Dataset: Overcoming Bias in AI Vision

One of the most exciting areas of innovation is the development of “Synthetic Data” for AI training. To help a drone identify what someone “looks like” consistently across all ethnicities, researchers are using 3D modeling to create millions of “virtual humans.” These virtual subjects can be generated in equal numbers for every group, giving a dataset that is balanced by construction and free of the sampling biases found in historical photo databases. The aim is a more “equitable” computer vision system whose accuracy is consistent across demographic groups, though models trained on synthetic faces still need to be validated on real imagery.
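The balancing step itself is simple bookkeeping: count the real samples per group and generate enough synthetic ones to bring every group up to the size of the largest. The group names and counts below are invented for illustration.

```python
def synthetic_samples_needed(counts):
    """Return how many synthetic samples each group needs to match the largest group.

    `counts` maps a group label to its number of real training samples.
    """
    if not counts:
        return {}
    target = max(counts.values())
    return {group: target - n for group, n in counts.items()}


# Hypothetical real-image counts in an unbalanced training set.
real_counts = {"group_a": 5000, "group_b": 1200, "group_c": 300}

to_generate = synthetic_samples_needed(real_counts)
print(to_generate)
```

Topping up to the largest group keeps all real data in use; the alternative of downsampling to the smallest group balances the set too, but discards most of the available images.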

The Convergence of Generative AI and Aerial Robotics

As we look forward, the integration of Generative AI with drone technology could allow for “Predictive Identification.” Imagine a drone that can be given a verbal description, such as “Search for a male of Mediterranean descent, approximately 6 feet tall,” and whose Generative AI then builds a visual model of that person to search for.

This convergence of Natural Language Processing (NLP) and Computer Vision represents the next frontier. The drone will no longer just be a tool that captures images; it will be an intelligent agent capable of understanding the complex nuances of human appearance and heritage, answering the question “What do they look like?” with a level of technological sophistication that was once the stuff of science fiction.