In the world of high-resolution digital imaging, from 4K gimbal cameras to advanced thermal sensors, the volume of data generated is staggering. A single minute of raw, uncompressed 4K video at 60 frames per second can consume tens of gigabytes of storage space. To manage this data deluge, imaging systems rely on a critical process known as video compression. But what exactly does compressing a video do, and why is it the backbone of modern imaging technology? At its core, video compression is the art and science of reducing the file size of a digital video while maintaining as much visual fidelity as possible. It is a sophisticated balancing act between data economy and image quality.

Understanding the Fundamentals of Video Compression

To understand what compression does, one must first understand the “raw” state of digital video. Digital images are composed of pixels, each containing data about color and brightness. In an uncompressed stream, every single pixel of every single frame is recorded and stored. Video compression works by identifying and removing “redundancy” within this data.
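To put a number on the “raw” state, here is a rough back-of-the-envelope sketch. It assumes 8-bit RGB (24 bits per pixel) with no chroma subsampling; real sensors and pipelines vary, so treat the result as an order of magnitude rather than a specification. The function name is only illustrative.

```python
def raw_data_rate_bytes_per_s(width, height, fps, bits_per_pixel=24):
    """Bytes per second for uncompressed video (assumes every pixel is stored)."""
    return width * height * fps * bits_per_pixel / 8

rate = raw_data_rate_bytes_per_s(3840, 2160, 60)   # 4K UHD at 60 fps
per_minute_gb = rate * 60 / 1e9

print(f"{rate / 1e9:.2f} GB/s, {per_minute_gb:.1f} GB per minute")
# 1.49 GB/s, 89.6 GB per minute
```

Under these assumptions, one minute of raw 4K60 footage is close to 90 GB, which is the scale of data that compression has to bring down.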

The Concept of Bitrate and Data Density

Bitrate refers to the amount of data processed over a specific period, usually measured in megabits per second (Mbps). When you compress a video, you are essentially lowering the bitrate. High-end imaging systems, such as those used in professional cinema or high-resolution mapping, often operate at high bitrates to preserve every minute detail. Compressing the video reduces this density, making the file manageable for standard storage media like SD cards or for transmission over wireless frequencies. By lowering the bitrate, compression allows a camera to record for hours rather than minutes, without requiring industrial-scale storage arrays.
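The link between bitrate and recording time can be sketched with simple arithmetic. The 128 GB card and the 100 Mbps compressed bitrate below are illustrative assumptions, not figures for any particular camera, and the calculation ignores audio and filesystem overhead.

```python
def recording_minutes(card_gb, bitrate_mbps):
    """Minutes of video that fit on a card at a constant bitrate."""
    card_bits = card_gb * 1e9 * 8
    return card_bits / (bitrate_mbps * 1e6) / 60

CARD_GB = 128  # hypothetical card size

# About 11,944 Mbps is uncompressed 4K60 at 24 bits per pixel.
for label, mbps in [("raw 4K60", 11944), ("compressed", 100)]:
    print(f"{label:>10}: {recording_minutes(CARD_GB, mbps):6.1f} minutes")
#   raw 4K60:    1.4 minutes
# compressed:  170.7 minutes
```

The same card holds under two minutes of raw footage but nearly three hours at the compressed bitrate.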

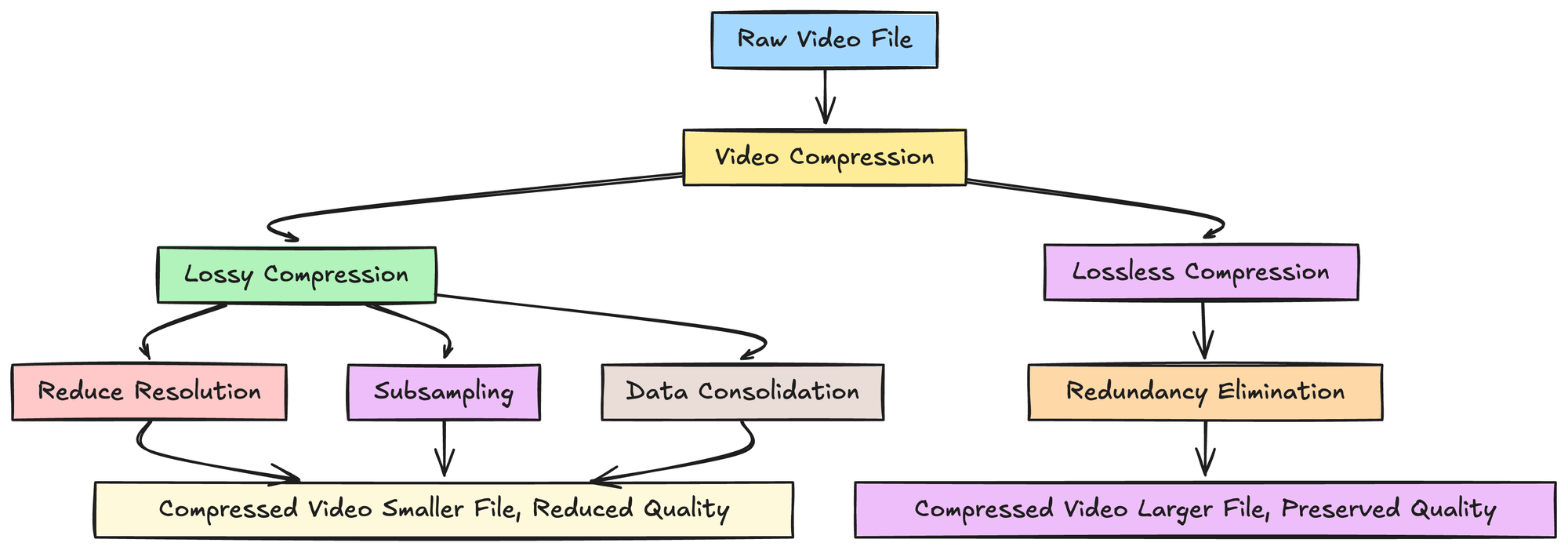

Lossy vs. Lossless Compression in Imaging

In the niche of cameras and imaging, we primarily deal with two types of compression: lossy and lossless.

- Lossless Compression allows the original data to be reconstructed perfectly. While it preserves 100% of the sensor’s detail, the reduction in file size is minimal (often only 2:1 or 3:1).

- Lossy Compression is the standard for most imaging applications. It permanently discards data that the human eye is less likely to perceive—such as subtle variations in color shades in a bright sky. What compression “does” here is prioritize “essential” visual information over “expendable” data, allowing for massive reductions in file size (often 100:1 or more) with remarkably little perceived loss in quality.
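The difference between the two approaches can be illustrated with a toy experiment. This is only a sketch: it uses Python's general-purpose `zlib` module and a crude bit-masking step as a stand-in for the "discard" stage, whereas real video codecs discard detail in far more sophisticated ways. The synthetic "sky" data is made up for the example.

```python
import random
import zlib

random.seed(0)  # fixed seed so the synthetic data is repeatable

# Hypothetical 8-bit grayscale "sky": brightness rises row by row, plus slight noise.
WIDTH, HEIGHT = 256, 64
pixels = bytes(
    100 + y + random.randint(-1, 1)
    for y in range(HEIGHT)
    for _ in range(WIDTH)
)

# Lossless: every byte comes back exactly as it went in.
lossless = zlib.compress(pixels, 9)
assert zlib.decompress(lossless) == pixels

# Lossy (toy version): drop the 4 least significant bits of each sample
# before compressing. The discarded detail cannot be recovered afterwards.
quantized = bytes(p & 0xF0 for p in pixels)
lossy = zlib.compress(quantized, 9)
max_error = max(p - q for p, q in zip(pixels, quantized))

print(f"raw:      {len(pixels)} bytes")
print(f"lossless: {len(lossless)} bytes (exact reconstruction)")
print(f"lossy:    {len(lossy)} bytes (max error {max_error} of 255 levels)")
```

The lossy version is much smaller because subtle variations have been thrown away, which is the same trade the bullet above describes.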

How Video Compression Works: Codecs and Containers

When we discuss compression, we often use terms like H.264, HEVC, or MP4. These represent the tools and frameworks that execute the compression process. Understanding these is vital to understanding how data is manipulated during the imaging workflow.

The Role of the Video Codec (H.264, H.265, and ProRes)

A “codec” (short for coder-decoder) is the algorithm that performs the compression and the matching decompression. The codec analyzes each video frame, typically in small blocks of pixels, and decides which detail to store precisely and which to approximate.

- H.264 (AVC): This is the most common codec. It provides a great balance between quality and compatibility.

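- H.265 (HEVC): The High-Efficiency Video Coding standard is the successor to H.264. It is significantly more efficient, often delivering the same visual quality as H.264 at roughly half the bitrate. For 4K and 8K imaging, H.265 is valuable because it keeps the massive pixel counts within the limits of storage media and transmission links. The trade-off is that encoding and decoding are more computationally demanding than with H.264, which is why cameras typically rely on dedicated hardware encoders for it.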

- Apple ProRes: Used in high-end imaging, this codec is “less” compressed, focusing on maintaining maximum image data for post-production, though at the cost of much larger file sizes.

Interframe vs. Intraframe Compression

The choice between intraframe and interframe coding shows one of the most ingenious things compression does.

- Intraframe (All-I) compression treats every frame as a standalone image. It compresses each frame individually. This results in higher quality and easier editing but larger files.

- Interframe (IPB) compression looks for changes between frames. The name refers to the three frame types involved: I-frames are stored whole, P-frames are predicted from earlier frames, and B-frames are predicted from both earlier and later frames. If you are filming a stationary landscape and only a bird flies across the sky, the codec records only the bird’s movement and “re-uses” the background data from the previous frame. This approach, which relies on motion estimation to find where picture content has moved, is what allows high-definition video to be streamed over the internet or stored on small devices. It drastically reduces the amount of data by not recording redundant, unchanging pixels.
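The bird-over-a-landscape idea can be sketched in a few lines. This is a deliberately simplified illustration using plain frame differencing: the first frame is stored whole and each later frame is stored as a list of changed pixels. Real codecs go much further, predicting blocks with motion vectors, and all names and frame sizes here are hypothetical.

```python
def make_frame(width, height, bird_x):
    """Hypothetical grayscale frame: flat sky (200) with a 2x2 dark 'bird' (30)."""
    frame = [[200] * width for _ in range(height)]
    for dy in range(2):
        for dx in range(2):
            frame[4 + dy][bird_x + dx] = 30
    return frame

def encode_delta(prev, cur):
    """Record (y, x, value) only for pixels that differ from the previous frame."""
    return [(y, x, v) for y, row in enumerate(cur)
            for x, v in enumerate(row) if prev[y][x] != v]

def apply_delta(prev, delta):
    """Rebuild a frame from the previous frame plus its recorded changes."""
    frame = [row[:] for row in prev]
    for y, x, v in delta:
        frame[y][x] = v
    return frame

W, H = 32, 16
frames = [make_frame(W, H, bird_x) for bird_x in range(0, 10, 2)]  # bird moves right

# Frame 0 is stored whole; every later frame is stored as changes only.
deltas = [encode_delta(a, b) for a, b in zip(frames, frames[1:])]

decoded = [frames[0]]
for d in deltas:
    decoded.append(apply_delta(decoded[-1], d))
assert decoded == frames  # the sequence is reconstructed exactly

full_pixels = W * H * len(frames)
delta_pixels = W * H + sum(len(d) for d in deltas)
print(full_pixels, delta_pixels)  # 2560 544
```

Storing every frame whole costs 2,560 pixel values, while one full frame plus four sets of changes costs 544, because the unchanged sky is never recorded twice.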

The Impact of Compression on Image Quality and Storage

While compression is a necessity, it is not without its trade-offs. What compression “does” to the physical look of your footage depends entirely on the intensity of the process.

Balancing Resolution and Artifacts

When a video is compressed too heavily (low bitrate), the algorithm begins to struggle. This results in “compression artifacts.” You might see “macroblocking,” where the image breaks down into small square blocks, or “color banding,” where a smooth gradient (like a sunset) turns into distinct, ugly steps of color. In professional imaging, the goal is to find the “sweet spot” where the file size is small enough for the hardware to handle, but the artifacts remain invisible to the viewer. Effective compression ensures that the sharpness of a 4K sensor is maintained, even if some of the underlying “invisible” data is stripped away.
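Color banding is easy to reproduce in miniature. The sketch below takes an ideal 256-level gradient and rounds each value down to a multiple of a step size, a simplified stand-in for the coarse quantization that heavy compression applies. The step sizes are arbitrary choices for illustration.

```python
def quantize(value, step):
    """Snap an 8-bit value down to a multiple of `step` (coarser step = fewer levels)."""
    return (value // step) * step

gradient = list(range(256))  # an ideal smooth ramp, one brightness level per pixel

for step in (1, 8, 32):
    bands = len({quantize(v, step) for v in gradient})
    print(f"step {step:>2}: {bands} distinct levels")
# step  1: 256 distinct levels
# step  8: 32 distinct levels
# step 32: 8 distinct levels
```

With only 8 levels left, a sunset that should fade smoothly is rendered as 8 visible stripes.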

Storage Management and Transmission Efficiency

From a hardware perspective, compression is a logistics manager. Without it, the raw stream would saturate the camera’s internal data bus, and the storage media could not sustain the required write speeds. By compressing the video stream in real time, the imaging system can write data to a standard UHS-II SD card. Furthermore, in FPV (First Person View) systems, compression is what allows a high-definition image to be transmitted wirelessly from a camera to a set of goggles with minimal lag. Smaller data packets do not travel faster, but each frame takes far less airtime on a link of fixed bandwidth, so it arrives much sooner than a raw frame would.
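A quick estimate shows why a wireless video link depends on compression. The 25 Mbps link budget and the 20 Mbps compressed stream below are hypothetical figures chosen for illustration, and the sketch ignores protocol overhead, retransmissions, and encoding delay.

```python
def airtime_ms(frame_bits, link_mbps):
    """Milliseconds needed to push one frame through a link of fixed bandwidth."""
    return frame_bits / (link_mbps * 1e6) * 1000

FPS = 60
LINK_MBPS = 25                      # hypothetical wireless link budget
frame_interval_ms = 1000 / FPS      # a new frame is produced every ~16.7 ms

raw_frame_bits = 1920 * 1080 * 24   # one uncompressed 1080p frame, 8-bit RGB
compressed_frame_bits = 20e6 / FPS  # average frame in an assumed 20 Mbps stream

for label, bits in [("raw", raw_frame_bits), ("compressed", compressed_frame_bits)]:
    t = airtime_ms(bits, LINK_MBPS)
    verdict = "keeps up" if t <= frame_interval_ms else "falls behind"
    print(f"{label:>10}: {t:8.1f} ms per frame -> {verdict}")
#        raw:   1990.7 ms per frame -> falls behind
# compressed:     13.3 ms per frame -> keeps up
```

On this assumed link a raw frame would need about two seconds of airtime, while an average compressed frame fits inside the 16.7 ms window between frames.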

Why Compression is Essential for Modern Imaging Systems

In the current landscape of imaging technology—encompassing everything from thermal sensors used in industrial inspections to the 10-bit color cameras used in cinema—compression is not just a feature; it is a fundamental requirement.

Real-time Processing in High-Resolution Cameras

Modern camera processors handle compression at the hardware level, typically with a dedicated encoder working alongside the Image Signal Processor (ISP). When light hits the CMOS sensor, it is converted into electrical signals and then into raw digital data. The ISP processes that data into frames, which are compressed immediately. This real-time processing allows for features like high-frame-rate (HFR) recording. For instance, shooting at 120fps generates an enormous amount of data every second. Compression shrinks this stream so the camera’s buffer doesn’t overflow, enabling slow-motion capture that would otherwise be impractical on portable hardware.
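The buffer-overflow point can be made concrete with a rough model: data flows into the buffer at the capture rate and drains at the card's write speed. The 2 GB buffer, the 90 MB/s write speed, and the 200 Mbps compressed stream are all assumptions picked for illustration, not figures from any specific camera.

```python
def seconds_until_full(buffer_bytes, in_bytes_per_s, out_bytes_per_s):
    """Time until a buffer overflows, or None if the output keeps pace with the input."""
    net = in_bytes_per_s - out_bytes_per_s
    return None if net <= 0 else buffer_bytes / net

BUFFER = 2e9        # hypothetical 2 GB frame buffer
CARD_WRITE = 90e6   # hypothetical sustained card write speed, 90 MB/s

raw_4k120 = 3840 * 2160 * 3 * 120   # bytes/s, uncompressed 4K at 120 fps, 8-bit RGB
compressed = 200e6 / 8              # bytes/s for an assumed 200 Mbps stream

raw_limit = seconds_until_full(BUFFER, raw_4k120, CARD_WRITE)
compressed_limit = seconds_until_full(BUFFER, compressed, CARD_WRITE)

print(f"raw: buffer full after {raw_limit:.2f} s")   # raw: buffer full after 0.69 s
print(f"compressed: {compressed_limit}")             # compressed: None
```

In this model the raw stream overruns the buffer in under a second, while the compressed stream drains faster than it arrives and never fills it.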

Facilitating Low-Latency Wireless Transmission and Remote Sensing

In specialized imaging, such as thermal or multispectral sensing, the data being captured isn’t just for “looks”; it’s for analysis. However, this data often needs to be viewed remotely in real time. Compression allows these specialized imaging systems to transmit a “proxy,” a compressed version of the live feed, to a remote station. This ensures that an operator can see what the camera sees with low latency. By reducing the bandwidth requirement, compression makes remote imaging over long distances viable, bridging the gap between high-fidelity sensors and the practical limitations of wireless radio links.

Conclusion

So, what does compressing a video do? It transforms an unmanageable firehose of raw sensor data into a streamlined, efficient, and usable digital file. It enables our cameras to record longer, our screens to display 4K clarity without stuttering, and our wireless systems to transmit high-definition visuals across miles. While it involves a delicate trade-off—sacrificing absolute data purity for practical efficiency—the sophisticated codecs of today ensure that the visual experience remains stunning. In the niche of cameras and imaging, compression is the invisible engine that makes high-resolution digital media possible, turning the theoretical potential of a high-megapixel sensor into a functional reality.