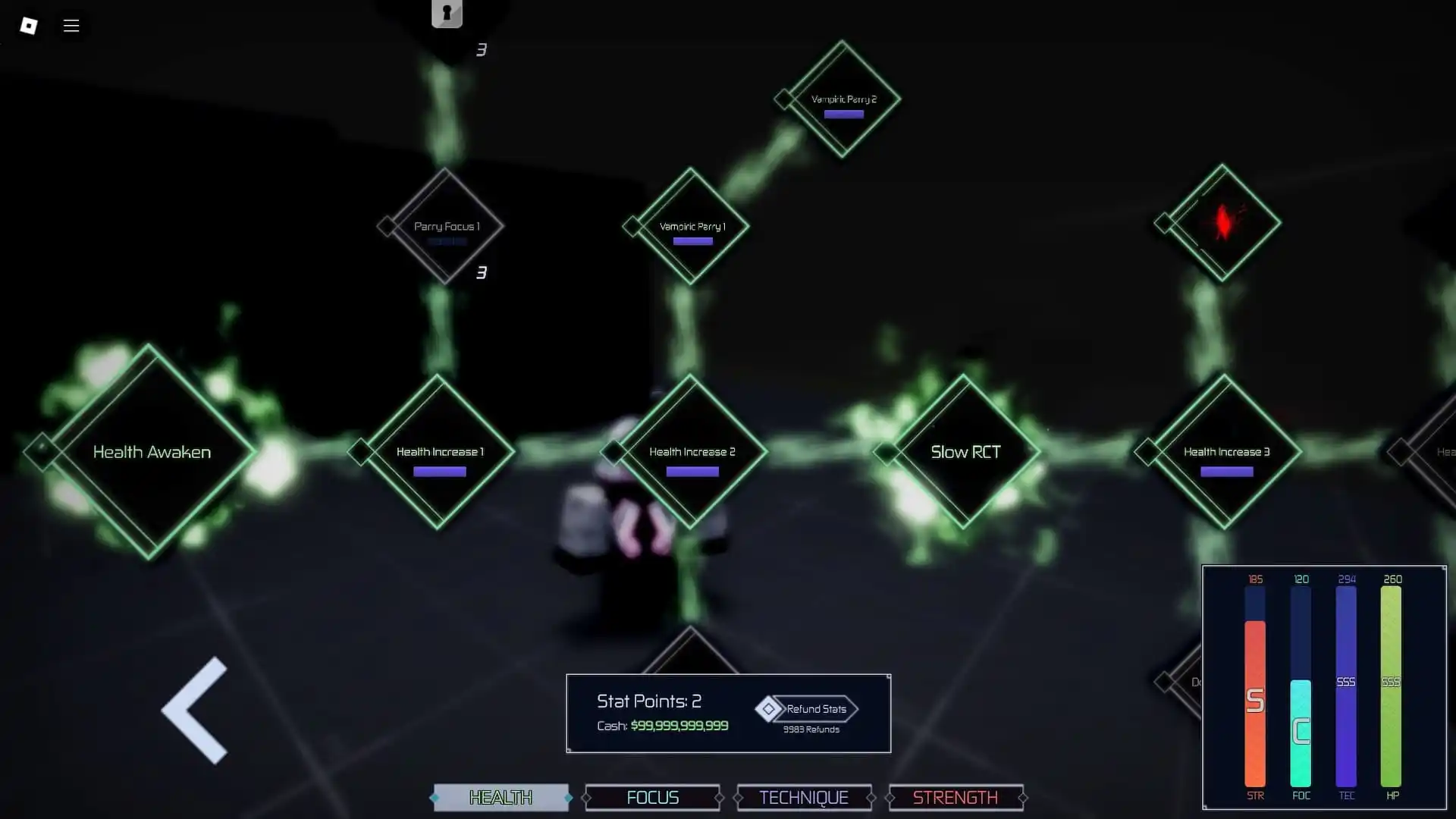

In the digital landscapes of popular culture and gaming, such as in “Jujutsu Infinite,” the concept of a “max level” represents the absolute zenith of power, skill, and capability. It is the point where a character or system has transcended all previous limitations, achieving a state of mastery that defines the current boundaries of that universe. When we translate this concept into the realm of modern technology—specifically the rapidly evolving sector of Unmanned Aerial Vehicles (UAVs)—the question of “what is the max level” takes on a technical and transformative meaning.

In the niche of Tech and Innovation, the “max level” is currently defined by the transition from simple remote-controlled flight to Level 5 Autonomy. This level of innovation represents a future where drones are not just tools piloted by humans, but intelligent agents capable of sensing, mapping, and reacting to the world with “infinite” precision. Understanding this pinnacle requires a deep dive into AI follow modes, autonomous flight protocols, and the cutting-edge remote sensing technologies that make “Max Level” performance a reality.

The Spectrum of Autonomy: Defining Level 5 in UAV Systems

To understand where the current ceiling of drone technology lies, one must first look at the levels of autonomy. No single scale is formally standardized for UAVs; the tiers most often cited, Level 0 through Level 5, are borrowed from the SAE's levels of driving automation for road vehicles. Just as a player in a complex RPG progresses through tiers of experience, drone innovation is categorized by the degree of human intervention required. Currently, the industry is striving toward what is effectively the “max level”: Full Autonomy.

The Transition from Pilot-Assist to Independent Systems

For years, the industry standard hovered around Level 2 and Level 3 autonomy. These levels covered basic stabilization, GPS-guided hovering, and return-to-home functions. The “Max Level” in today’s innovation landscape, however, spans Level 4 and Level 5. At Level 4, the drone is capable of performing all safety-critical functions within a defined area or during a specific mission without human intervention. Level 5, the true pinnacle, implies that the drone can operate in any environment, under any conditions, with the same or better decision-making capabilities as a human pilot.

Achieving this requires a massive leap in onboard processing. It is no longer about following a pre-programmed path; it is about “thinking” in three dimensions. This innovation is what allows modern drones to be deployed in disaster recovery zones where the landscape is constantly shifting, rendering traditional GPS waypoints useless.

AI Algorithms and Real-Time Decision Making

The heart of high-level autonomy is the artificial intelligence (AI) that governs flight logic. In the context of tech innovation, the “max level” involves sophisticated neural networks trained on large volumes of flight and image data. These algorithms allow the drone to perform real-time “object classification.” This means the drone isn’t just seeing an “obstacle”; it understands that the obstacle is a swaying tree branch, a moving vehicle, or a human bystander. By classifying these objects, the drone can predict their movement and adjust its flight path accordingly, achieving a fluid, “infinite” smoothness in its trajectory that simple stop-and-hover obstacle avoidance cannot match.
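The classify-then-predict idea can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's flight logic: the class labels, the clearance values, and the names `TrackedObject`, `predict_position`, and `waypoint_is_safe` are all assumptions made for the example, and the motion model is a plain constant-velocity extrapolation.

```python
import math
from dataclasses import dataclass
from typing import Tuple

# Hypothetical per-class clearance in metres (illustrative values only).
CLEARANCE_M = {"branch": 1.0, "vehicle": 5.0, "person": 8.0}
DEFAULT_CLEARANCE_M = 3.0  # used for any label not listed above


@dataclass
class TrackedObject:
    label: str                      # output of the classifier
    position: Tuple[float, float]   # metres, local x/y frame
    velocity: Tuple[float, float]   # metres per second


def predict_position(obj: TrackedObject, dt: float) -> Tuple[float, float]:
    """Extrapolate the object dt seconds ahead at constant velocity."""
    return (obj.position[0] + obj.velocity[0] * dt,
            obj.position[1] + obj.velocity[1] * dt)


def waypoint_is_safe(waypoint, eta_s, objects) -> bool:
    """True if no object is predicted inside its clearance at arrival time."""
    for obj in objects:
        px, py = predict_position(obj, eta_s)
        gap = math.hypot(waypoint[0] - px, waypoint[1] - py)
        if gap < CLEARANCE_M.get(obj.label, DEFAULT_CLEARANCE_M):
            return False
    return True
```

The point of the sketch is that the label changes the decision: a person walking toward a waypoint blocks it from farther away than a stationary branch would.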

The Eyes of the Machine: Remote Sensing and Mapping in Achieving Peak Performance

If autonomy is the brain of the drone, remote sensing and mapping are its senses. To reach the “max level” of innovation, a drone must be able to reconstruct its environment in real-time. This is where the integration of LiDAR and high-speed photogrammetry comes into play, transforming a simple flying camera into a high-tech data collection powerhouse.

LiDAR and Photogrammetry: Creating a Digital Twin

The most advanced drones today utilize Light Detection and Ranging (LiDAR) to map their surroundings. Unlike traditional cameras, which passively record ambient light, LiDAR sends out laser pulses and times their return from surfaces, building a “point cloud” of the environment. Because it supplies its own illumination, it works in total darkness, and because some pulses slip through gaps in the canopy, it can often map the ground beneath dense foliage. In the tech and innovation niche, the “max level” is achieved when a drone can generate a 3D “digital twin” of its surroundings while traveling at high speeds.
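The ranging principle behind each point in the cloud is simple arithmetic: the pulse travels out and back, so the distance is half the round-trip time multiplied by the speed of light. The sketch below shows that conversion and how one return becomes an x/y/z point; the function names and the angle convention (azimuth in the horizontal plane, elevation above it) are assumptions for illustration.

```python
import math

SPEED_OF_LIGHT_MPS = 299_792_458.0


def tof_range_m(round_trip_s: float) -> float:
    """Distance to the surface: the pulse covers it twice, so halve the path."""
    return SPEED_OF_LIGHT_MPS * round_trip_s / 2.0


def to_point(range_m: float, azimuth_rad: float, elevation_rad: float):
    """Convert one range/angle return into sensor-frame x, y, z in metres."""
    horizontal = range_m * math.cos(elevation_rad)
    return (horizontal * math.cos(azimuth_rad),
            horizontal * math.sin(azimuth_rad),
            range_m * math.sin(elevation_rad))
```

A return that arrives one microsecond after the pulse left corresponds to a surface roughly 150 metres away.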

This capability is essential for industrial inspections and complex mapping missions. By processing hundreds of thousands of range measurements per second, the drone can help flag structural weaknesses in bridges or calculate the volume of a stockpile with accuracy that is often quoted at around 99% under good survey conditions. This level of precision is the benchmark for what we consider “infinite” capability in the current hardware cycle.
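One common way to turn a point cloud into a stockpile volume is to lay a grid over the pile and sum the height of each cell. The sketch below assumes a flat base at a known elevation and uses only the standard library; `stockpile_volume_m3`, `cell_m`, and `ground_z` are names chosen for this example, and real survey software typically fits a ground surface rather than assuming one.

```python
from collections import defaultdict


def stockpile_volume_m3(points, cell_m=1.0, ground_z=0.0):
    """Estimate volume by averaging point heights in each grid cell.

    points: iterable of (x, y, z) tuples in metres.
    cell_m: grid cell edge length in metres.
    ground_z: assumed elevation of the flat base under the pile.
    """
    cells = defaultdict(list)
    for x, y, z in points:
        key = (int(x // cell_m), int(y // cell_m))
        cells[key].append(z)

    volume = 0.0
    for heights in cells.values():
        mean_height = sum(heights) / len(heights) - ground_z
        # Cells that fall below the base contribute nothing.
        volume += max(mean_height, 0.0) * cell_m * cell_m
    return volume
```

Smaller cells follow the surface more closely but need a denser cloud, since a cell with no points contributes nothing to the total.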

Edge Computing and Onboard Processing Power

A major bottleneck in reaching the “max level” has historically been the lag between data collection and data processing. Traditional drones would capture data, which would then be processed on a powerful ground-based computer. Innovation in “Edge Computing” has changed this.

Modern high-tier drones possess onboard GPUs (Graphics Processing Units) whose throughput is measured in trillions of operations per second (TOPS). This allows the drone to process its 3D maps and sensor data “at the edge,” meaning right there in the air. This low-latency processing is what enables true autonomous flight; the drone doesn’t have to wait for instructions from a server. It perceives, analyzes, and acts in milliseconds.
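Why milliseconds matter is easy to show with arithmetic: until a decision arrives, the drone keeps flying on stale information. The helper below computes that "blind" distance. The function name and the latency figures in the usage note are illustrative assumptions, not measurements of any particular drone or network.

```python
def blind_distance_m(speed_mps: float, latency_s: float) -> float:
    """Distance flown between sensing an obstacle and acting on it."""
    return speed_mps * latency_s
```

At 15 m/s, a hypothetical 20 ms onboard loop means about 0.3 m of blind travel, while a hypothetical 200 ms round trip to a ground server means about 3 m, which can be the difference between clearing a branch and hitting it.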

AI Follow Mode and the Future of Predictive Motion

One of the most visible signs of a drone reaching its “max level” is the sophistication of its “Follow Mode.” In the early days, this was a simple tethering to a GPS signal. Today, tech innovation has pushed this into the realm of computer vision and predictive kinetics.

Human-Centric Tracking and Predictive Motion

The “Max Level” of AI follow mode does not rely on a GPS beacon in the user’s pocket. Instead, it uses visual recognition to lock onto a subject’s body pose (an estimated skeleton of joints and limbs) and movement patterns. Through “deep learning,” the drone can predict where a subject will be even if they momentarily disappear behind a tree or a building.

This predictive motion is the hallmark of innovation. The drone’s AI calculates the subject’s velocity and acceleration, adjusting its own pitch, roll, and yaw to maintain a perfect geometric relationship with the target. This creates a seamless “ghost pilot” effect, where the drone moves with an organic grace that mimics a professional human cinematographer, yet is controlled entirely by code.
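The idea of carrying a track through a brief occlusion can be illustrated with an alpha-beta filter, a classic and much simpler stand-in for the learned predictors described above. The sketch tracks one axis; a real system would run something comparable per axis or use a full Kalman filter. The class name and the gain values are assumptions for illustration.

```python
class AlphaBetaTracker:
    """One-dimensional position/velocity tracker that coasts when blind."""

    def __init__(self, x0: float, v0: float = 0.0,
                 alpha: float = 0.85, beta: float = 0.005):
        self.x = x0          # estimated position (m)
        self.v = v0          # estimated velocity (m/s)
        self.alpha = alpha   # how strongly position follows a measurement
        self.beta = beta     # how strongly velocity follows a measurement

    def step(self, dt: float, measurement=None) -> float:
        # Predict: move the estimate forward at the current velocity.
        self.x += self.v * dt
        # Correct only if the subject was actually seen this frame.
        if measurement is not None:
            residual = measurement - self.x
            self.x += self.alpha * residual
            self.v += (self.beta / dt) * residual
        return self.x
```

Passing `measurement=None` models the frames where the subject is hidden behind a tree: the estimate keeps advancing at the last known velocity, so the camera is already pointed at roughly the right place when the subject reappears.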

Overcoming Environmental Obstacles at High Speeds

The true test of a “max level” tech stack is the ability to maintain an AI follow mode in a cluttered environment. Imagine a drone following a mountain biker through a dense forest at 30 miles per hour. The innovation required here is staggering. The drone must simultaneously track the rider, map the surrounding branches, calculate its own momentum, and find a path that avoids collisions while keeping the rider in the frame. This requires a fusion of ultrasonic sensors, binocular vision, and high-speed AI processing. Reaching this level of performance represents the “infinite” potential of autonomous systems to augment human activity.
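The juggling act described here, keeping the rider framed while staying clear of branches, can be reduced to a toy selection rule: discard any candidate position too close to a mapped obstacle, then pick the one that best preserves the desired following distance. Everything in this sketch (`pick_next_position`, the default distances, the 2D geometry) is an assumption made for illustration; a real planner also accounts for momentum, camera angle, and full 3D.

```python
import math


def pick_next_position(candidates, rider, obstacles,
                       follow_dist_m=5.0, clearance_m=1.5):
    """Return the collision-free candidate closest to the follow distance.

    candidates, obstacles: lists of (x, y) points in metres.
    rider: (x, y) position of the subject.
    Returns None when every candidate is blocked.
    """
    best, best_cost = None, math.inf
    for cand in candidates:
        # Hard constraint: stay clear of every mapped obstacle point.
        if any(math.dist(cand, obs) < clearance_m for obs in obstacles):
            continue
        # Soft goal: keep the rider at the desired distance.
        cost = abs(math.dist(cand, rider) - follow_dist_m)
        if cost < best_cost:
            best, best_cost = cand, cost
    return best
```

Treating collision avoidance as a hard filter and framing as a cost reflects the priority order: the drone may lose the shot, but it should never trade clearance for it.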

Challenges in Reaching the Infinite Horizon of Drone Capability

Even as we define the “max level” of current technology, there are inherent barriers that innovators are working to overcome. Just as a player in a game must navigate challenges to reach the cap, drone engineers face physical and regulatory hurdles.

Regulatory Barriers and the Evolution of BVLOS

Innovation often moves faster than legislation. While we have the technology to reach “Max Level” autonomy, global regulations often require drones to stay within the “Visual Line of Sight” (VLOS) of a pilot. The next great leap in the innovation niche is the widespread adoption of “Beyond Visual Line of Sight” (BVLOS) operations.

To achieve this, drones must be equipped with “Detect and Avoid” (DAA) systems that are reliable enough to satisfy aviation authorities. One building block is ADS-B (Automatic Dependent Surveillance-Broadcast): manned aircraft equipped with it broadcast their position and velocity, and a drone carrying an ADS-B receiver can pick up those broadcasts and steer clear. Because not every aircraft carries ADS-B, it typically works alongside onboard sensors such as cameras or radar. Reaching the “max level” of innovation means creating a drone that can safely integrate into the national airspace alongside commercial airliners.
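Once a drone knows the position and velocity of nearby traffic, a basic detect-and-avoid check is the closest point of approach: assuming both aircraft hold course, when will they be nearest, and how near? The sketch below works in a flat 2D frame with relative position and velocity. The function name is an assumption, and any alert threshold applied to the result would be a design choice, not a regulatory value.

```python
import math


def closest_approach(rel_pos, rel_vel):
    """Time (s) and distance (m) of closest approach on straight courses.

    rel_pos: intruder position minus own position, (x, y) in metres.
    rel_vel: intruder velocity minus own velocity, (x, y) in m/s.
    """
    px, py = rel_pos
    vx, vy = rel_vel
    speed_sq = vx * vx + vy * vy
    if speed_sq == 0.0:
        t_cpa = 0.0  # no relative motion: the gap never changes
    else:
        # Minimise |rel_pos + rel_vel * t|; clamp so we never look backward.
        t_cpa = max(0.0, -(px * vx + py * vy) / speed_sq)
    miss = math.hypot(px + vx * t_cpa, py + vy * t_cpa)
    return t_cpa, miss
```

An intruder 1,000 m ahead, offset 100 m to the side and closing at 50 m/s, gives a closest approach in 20 seconds at a miss distance of 100 m, which is enough warning to plan an avoidance maneuver.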

Hardware Constraints vs. Software Ambition

We often find that our software (AI and Autonomy) is ready for the “max level,” but our hardware is still “leveling up.” Battery energy density remains the greatest challenge: the high-performance GPUs and LiDAR units required for Level 5 Autonomy draw significant power, and every watt spent on computing is a watt not spent on staying airborne.

Innovations in solid-state batteries and hydrogen fuel cells are the current “end-game” research areas. Furthermore, the heat generated by onboard AI processing requires advanced thermal management systems. The “max level” drone of the future will not only be smarter but will be built with materials and power sources that allow for “infinite” endurance, or at least flight times that far exceed the current 30-to-40-minute industry average.
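The trade-off between computing power and endurance comes down to a one-line estimate: usable battery energy divided by average power draw. The sketch below is a rough model; the function name, the 80% usable fraction, and the wattages in the usage note are illustrative assumptions rather than figures for any specific aircraft.

```python
def flight_time_min(battery_wh: float, avg_power_w: float,
                    usable_fraction: float = 0.8) -> float:
    """Rough endurance estimate in minutes.

    battery_wh: rated pack energy in watt-hours.
    avg_power_w: average total draw (motors + sensors + compute) in watts.
    usable_fraction: share of the pack used before landing (assumed reserve).
    """
    return battery_wh * usable_fraction / avg_power_w * 60.0
```

With a hypothetical 100 Wh pack and a 160 W average draw, the estimate is 30 minutes; adding 40 W of onboard AI and LiDAR load cuts it to 24 minutes, which is why better batteries and smarter software have to advance together.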

Conclusion: The Infinite Pursuit of Excellence

In the world of “Jujutsu Infinite,” reaching the max level is the end of one journey and the beginning of a new tier of play. In the niche of Drone Tech and Innovation, the “max level” is a moving target. Today, it is Level 5 Autonomy, Edge Computing, and LiDAR-driven 3D mapping. Tomorrow, it may be swarm intelligence and global-scale autonomous networks.

As we have explored, the “Max Level” in this sector is defined by the seamless integration of AI and hardware. It is the point where the machine no longer requires a human to “tell” it how to fly, but instead understands the “why” of its mission. Whether it is through revolutionary AI follow modes that track with uncanny precision or remote sensing capabilities that map the world in real-time, the innovation in UAV technology is rapidly approaching a state of “infinite” possibility. We are standing at the threshold of an era where the sky is no longer a limit, but a vast, autonomous playground for the most advanced technology ever created.