In the realm of internet folklore, “The Backrooms” represents an infinite, procedurally generated maze of empty office rooms, characterized by the hum of fluorescent lights and a sense of unsettling familiarity. While this concept originated as a “creepypasta,” it has evolved into a fascinating theoretical benchmark for the world of drone technology and autonomous systems. When we ask, “What is the last level of the Backrooms?” from a technical perspective, we are not searching for a physical floor number, but rather the “Last Level” of technical capability: the point where autonomous flight, mapping, and remote sensing can master an infinite, repetitive, and GPS-denied environment.

To navigate the Backrooms—or any complex, indoor, multi-level structure—modern drones must transcend traditional flight limits. This involves a convergence of SLAM (Simultaneous Localization and Mapping), AI-driven pathfinding, and advanced sensor fusion.

The Computational Challenge of Liminal Spaces

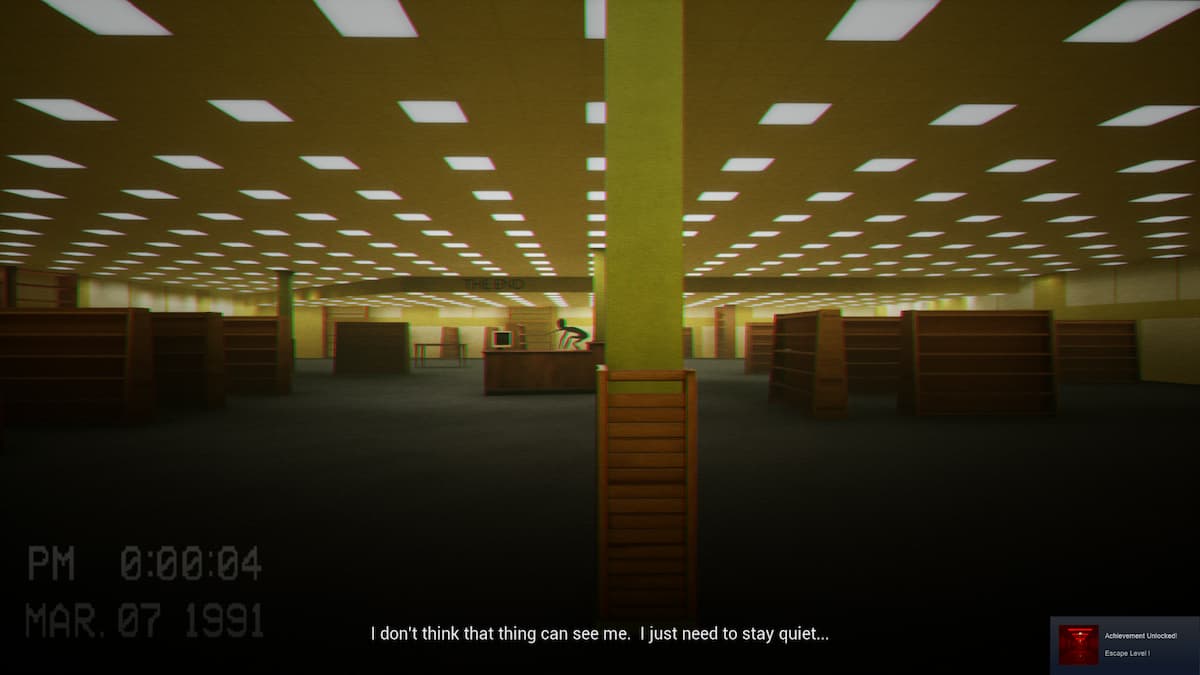

The primary obstacle in mapping a “Backrooms” style environment is the lack of distinct visual landmarks. In drone tech, most navigation systems rely on “features” to understand where they are. In a repetitive yellow-wallpapered hallway, those features disappear.

Visual Odometry and Feature Deserts

Most autonomous drones use Visual Odometry (VO) to track their movement. By identifying high-contrast points in an environment, such as the corner of a table or a window frame, and tracking them from frame to frame, the drone’s onboard processor can estimate its velocity and position. However, the “Backrooms” represents a “feature desert.” When every wall is the same shade and every corner looks identical, standard optical pipelines either find too few features to track or match a feature to the wrong look-alike. The “Last Level” of navigation technology involves developing AI that can detect fine surface textures or use non-visible spectra to maintain orientation where human eyes (and standard cameras) fail.
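To make the “feature desert” idea concrete, here is a minimal sketch in plain Python. It is not a real VO front end: the function `count_corner_features`, the threshold, and the tiny 8x8 test images are all assumptions made for illustration, and the gradient test is only a crude stand-in for a proper corner detector.

```python
def count_corner_features(image, threshold=50):
    """Count pixels whose intensity changes strongly in BOTH x and y.

    `image` is a list of rows of grayscale values (0-255). Requiring a
    strong gradient in both directions rejects flat areas and plain edges,
    which is the basic intuition behind corner detectors.
    """
    count = 0
    for y in range(1, len(image) - 1):
        for x in range(1, len(image[0]) - 1):
            gx = abs(image[y][x + 1] - image[y][x - 1])
            gy = abs(image[y + 1][x] - image[y - 1][x])
            if gx >= threshold and gy >= threshold:
                count += 1
    return count


# Hypothetical uniform wallpaper patch: every pixel has the same intensity.
blank_wall = [[200] * 8 for _ in range(8)]

# Hypothetical patch with one dark rectangular object in the lower right.
cluttered = [[200] * 8 for _ in range(8)]
for y in range(4, 8):
    for x in range(4, 8):
        cluttered[y][x] = 20

print(count_corner_features(blank_wall))  # 0
print(count_corner_features(cluttered))   # 1
```

The blank wall yields nothing to track, while the single object contributes exactly one usable corner. A real pipeline needs many such points per frame, which is why uniform walls are so damaging.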

The Problem of Non-Euclidean Mapping

In the lore of the Backrooms, geometry often defies logic: hallways may loop back on themselves in ways that shouldn’t be possible. For an autonomous drone, this is the ultimate test of “Loop Closure.” In SLAM technology, loop closure is the ability of a drone to recognize a place it has been before and “snap” its internal map together to correct accumulated drift. If a drone is navigating an infinite series of repetitive levels, verifying whether “Room A” is the same as “Room 1002” means comparing each new view against an ever-growing history of past views, which demands substantial onboard processing power and sophisticated spatiotemporal memory. A false match is as dangerous as a missed one, because it snaps together two parts of the map that are not actually the same place.
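The “snap” can be illustrated with a deliberately simple sketch. It assumes the drone has already recognized that its last pose is the same physical spot as its first, and it spreads the mismatch linearly along the path. Production SLAM systems typically solve a pose-graph optimization instead; the function `close_loop` and the drift values below are hypothetical.

```python
def close_loop(poses):
    """Spread the end-to-start mismatch linearly along the trajectory.

    `poses` is a list of (x, y) position estimates in meters. The last pose
    is assumed to be the same physical place as the first one.
    """
    n = len(poses) - 1
    err_x = poses[-1][0] - poses[0][0]
    err_y = poses[-1][1] - poses[0][1]
    corrected = []
    for i, (x, y) in enumerate(poses):
        w = i / n  # 0 at the start, 1 at the end of the loop
        corrected.append((x - w * err_x, y - w * err_y))
    return corrected


# Hypothetical dead-reckoned lap around a 4 m square room.
# Each leg picks up 0.5 m of drift in x, so the lap ends 2 m from its start.
drifted = [(0.0, 0.0), (4.5, 0.0), (5.0, 4.0), (1.5, 4.0), (2.0, 0.0)]

print(close_loop(drifted))
# [(0.0, 0.0), (4.0, 0.0), (4.0, 4.0), (0.0, 4.0), (0.0, 0.0)]
```

The correction only works if the place recognition was right. If two different but identical-looking rooms are matched, the same arithmetic bends a correct map into a wrong one.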

Autonomous Flight in GPS-Denied Environments

The “Backrooms” is, by definition, an indoor environment where GPS signals cannot penetrate. Reaching the metaphorical “last level” of drone exploration requires a total reliance on internal sensors and edge computing.

SLAM Algorithms and Real-Time Processing

Simultaneous Localization and Mapping (SLAM) is the heartbeat of modern autonomous drones. To conquer a complex structure, the drone must build a 3D voxel map of its surroundings while simultaneously locating itself within that map. In a multi-leveled, infinite environment, the “Last Level” of SLAM is the transition from static mapping to dynamic understanding. This involves the drone not just seeing a wall, but predicting the structural layout of the building based on architectural AI models, allowing it to navigate more efficiently than a human pilot ever could.
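As a rough illustration of the mapping half of SLAM, the sketch below bins 3D points into a sparse voxel set. It assumes the points are already expressed in the map frame (that is, localization has been solved elsewhere); the function name `voxelize` and the 0.5 m voxel size are illustrative choices, not a reference to any particular library.

```python
import math


def voxelize(points, voxel_size=0.5):
    """Return the set of occupied voxel indices for (x, y, z) points in meters."""
    occupied = set()
    for x, y, z in points:
        # floor() keeps negative coordinates in the correct voxel.
        occupied.add((
            math.floor(x / voxel_size),
            math.floor(y / voxel_size),
            math.floor(z / voxel_size),
        ))
    return occupied


# Hypothetical sensor returns, already transformed into the map frame.
points = [(0.1, 0.1, 0.1), (0.2, 0.3, 0.4), (1.2, 0.0, 0.0), (-0.1, 0.0, 0.0)]

print(sorted(voxelize(points)))  # [(-1, 0, 0), (0, 0, 0), (2, 0, 0)]
```

Storing only occupied voxels in a set keeps memory proportional to the mapped surface rather than to the volume of the building, which matters when the environment is large and mostly empty.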

Beyond GPS: Inertial Navigation Systems (INS)

When GPS fails, drones rely on Inertial Measurement Units (IMUs), which combine accelerometers and gyroscopes. However, IMUs are prone to “drift”: position comes from integrating acceleration twice, so tiny measurement errors compound over time until the drone believes it is several meters away from its actual location. The frontier of flight technology involves techniques that bound this drift without a satellite link, such as “Zero-Velocity Update” (ZUPT) algorithms, which reset the velocity estimate whenever the vehicle is known to be stationary, and ultra-wideband (UWB) beacons, which provide range measurements to fixed anchors so the drone can correct its position in real time.
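A short worked example shows how quickly drift grows. A constant accelerometer bias b, integrated twice, produces a position error of roughly ½·b·t². The sketch below simulates a drone that is actually stationary while its sensor reports only a bias; the bias value, time step, and function name are assumptions chosen for illustration.

```python
def integrate_position(accels, dt, zupt_flags=None):
    """Dead-reckon 1D position by integrating acceleration twice.

    zupt_flags[i] is True when some external cue says the vehicle is
    stationary at step i; the velocity estimate is then reset to zero.
    """
    velocity = 0.0
    position = 0.0
    for i, a in enumerate(accels):
        velocity += a * dt
        if zupt_flags is not None and zupt_flags[i]:
            velocity = 0.0  # zero-velocity update
        position += velocity * dt
    return position


bias = 0.05   # m/s^2, hypothetical accelerometer bias
dt = 0.01     # s, 100 Hz sample rate
steps = 6000  # 60 seconds of data

# The drone is not moving, so every reading is pure bias.
readings = [bias] * steps

drift = integrate_position(readings, dt)
with_zupt = integrate_position(readings, dt, zupt_flags=[True] * steps)

print(round(drift, 1))  # about 90 m of phantom motion after one minute
print(with_zupt)        # 0.0
```

The zero-velocity update removes the error only while the stationary assumption holds, so it helps most during landings or perched pauses rather than in continuous flight.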

Reaching the “Last Level”: The Peak of Remote Sensing

If we define the “Last Level” as the ultimate achievement in drone data acquisition, we must look at the sensors that allow a machine to “see” through the infinite yellow haze of the Backrooms.

LIDAR vs. Photogrammetry in Low-Texture Zones

While cameras (photogrammetry) struggle in repetitive environments, LIDAR (Light Detection and Ranging) is far more robust. LIDAR emits anywhere from thousands to millions of laser pulses per second, depending on the unit, and times their returns to create a precise “point cloud.” In a hypothetical Backrooms exploration, a drone equipped with solid-state LIDAR would be able to map the geometry of the environment regardless of lighting conditions or wallpaper patterns. LIDAR is not immune to monotony, however: in a long, uniform corridor the geometry itself is ambiguous along the direction of travel, so LIDAR is usually fused with inertial and visual data. The technical “Last Level” here is the miniaturization of high-definition LIDAR to fit on micro-drones, allowing them to zip through narrow vents and crawlspaces that were previously inaccessible.
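The step from raw laser returns to a point cloud is simple geometry. The sketch below converts one planar scan (a list of ranges at evenly spaced angles) into 2D points in the sensor frame. The function name, parameter names, and the 30 m maximum range are assumptions for illustration and do not correspond to a specific sensor driver.

```python
import math


def scan_to_points(ranges, angle_min, angle_step, max_range=30.0):
    """Convert a planar lidar scan into (x, y) points in the sensor frame.

    ranges[i] is the measured distance in meters for the beam at angle
    angle_min + i * angle_step (radians). Readings at or beyond max_range,
    or non-positive readings, are treated as "no return" and skipped.
    """
    points = []
    for i, r in enumerate(ranges):
        if r <= 0.0 or r >= max_range:
            continue
        theta = angle_min + i * angle_step
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points


# Hypothetical three-beam scan: straight ahead, 90 degrees left, and behind.
# The third beam hit nothing within range.
scan = [2.0, 2.0, 30.0]
cloud = scan_to_points(scan, angle_min=0.0, angle_step=math.pi / 2)

print(len(cloud))  # 2
```

Nothing in this conversion depends on color or texture, which is why the resulting geometry is unaffected by lighting or wallpaper.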

AI-Driven Pathfinding and Autonomous Exploration

The true “Last Level” of autonomy is “Frontier Exploration.” This is a behavior in which the drone identifies the boundary between “mapped space” and “unmapped space” (the frontier) and autonomously decides to explore the unknown. Instead of a pilot saying “go left,” the drone’s onboard AI analyzes the topology of the map and picks the frontier most likely to lead to an exit or a new level. Doing this well also requires neural networks capable of “semantic labeling”: understanding that a door is a “passable opening” while a glass pane is a “solid obstacle,” even if they look visually similar.
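The boundary between mapped and unmapped space can be found with a simple grid scan. The sketch below is a minimal version of frontier detection on a 2D occupancy grid: a frontier cell is a known-free cell with at least one unknown neighbor. The cell encoding and the example grid are assumptions for illustration.

```python
UNKNOWN, FREE, OCCUPIED = -1, 0, 1


def find_frontiers(grid):
    """Return (row, col) of every free cell that touches an unknown cell."""
    frontiers = []
    rows, cols = len(grid), len(grid[0])
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != FREE:
                continue
            # Check the four edge-adjacent neighbors.
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == UNKNOWN:
                    frontiers.append((r, c))
                    break
    return frontiers


# Hypothetical map: a small walled room with one opening on the right
# that leads into space the drone has not observed yet.
grid = [
    [OCCUPIED, OCCUPIED, OCCUPIED, OCCUPIED],
    [OCCUPIED, FREE,     FREE,     UNKNOWN],
    [OCCUPIED, FREE,     OCCUPIED, UNKNOWN],
    [OCCUPIED, OCCUPIED, OCCUPIED, UNKNOWN],
]

print(find_frontiers(grid))  # [(1, 2)]
```

An exploration loop would then plan a path to one of the returned cells, fly there, update the map, and repeat until no frontiers remain. How to rank competing frontiers is where the pathfinding intelligence comes in.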

From Theory to Reality: Search and Rescue Applications

While the “Backrooms” may be a digital myth, the technology required to navigate it is being used today in some of the most dangerous real-world environments. The “Last Level” of this tech is the transition from a single drone to a coordinated swarm.

Swarm Intelligence in Complex Structures

In a massive, multi-level building collapse or a deep subterranean cave system, a single drone is limited by battery life and signal range. The future of tech and innovation lies in “Swarm Intelligence.” In this scenario, dozens of small drones enter the structure. They communicate with one another, sharing mapping data in real time. If one drone finds a “stairwell” (a transition to a new level), it relays that coordinate to the rest of the swarm. This creates a “distributed brain” that can map a large complex far faster than any single drone could.
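Sharing map data can be sketched as a merge of per-drone occupancy maps. The example below assumes every drone already reports cells in one shared coordinate frame, which is itself a hard problem in practice, and it resolves disagreements conservatively by letting “occupied” win. The function name and the sample maps are hypothetical.

```python
FREE, OCCUPIED = 0, 1


def merge_maps(local_maps):
    """Fuse per-drone maps (dict of cell -> state) into one shared map.

    If any drone reports a cell as OCCUPIED, the merged map keeps it
    OCCUPIED, since wrongly trusting a cell to be free risks a collision.
    """
    merged = {}
    for local in local_maps:
        for cell, state in local.items():
            merged[cell] = max(merged.get(cell, FREE), state)
    return merged


# Hypothetical maps from two drones exploring the same corridor.
drone_a = {(0, 0): FREE, (0, 1): FREE, (0, 2): OCCUPIED}
drone_b = {(0, 2): FREE, (0, 3): FREE}

shared = merge_maps([drone_a, drone_b])

print(len(shared))     # 4
print(shared[(0, 2)])  # 1
```

Each drone contributes cells the others never saw, so the shared map covers more ground than any individual map.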

The Future of Subterranean and Deep-Space Exploration

The lessons learned from solving the “Backrooms” problem (navigating repetitive, GPS-denied, enclosed spaces) are the same ones that NASA and other agencies would need for exploring lunar lava tubes or the subsurface of icy moons such as Europa. The “Last Level” of drone technology isn’t just about office buildings; it’s about creating machines that can enter a dark, unknown void and return with an accurate 3D digital twin of the interior.

Conclusion: The Ultimate Technical Mastery

When we strip away the internet lore, the “Last Level of the Backrooms” represents the absolute ceiling of autonomous machine intelligence. It is the point where a drone no longer needs a human, no longer needs a satellite, and no longer needs a pre-existing map.

To reach this level, flight technology must evolve to handle infinite procedural data, sensors must become immune to visual monotony, and AI must develop a sense of spatial logic that mirrors—or exceeds—human intuition. We are currently in the middle levels of this technological journey. With every advancement in LIDAR precision, SLAM efficiency, and edge computing, we move one floor closer to the “Last Level,” turning the once-impossible challenge of navigating the infinite unknown into a standard mission profile for the next generation of autonomous UAVs.