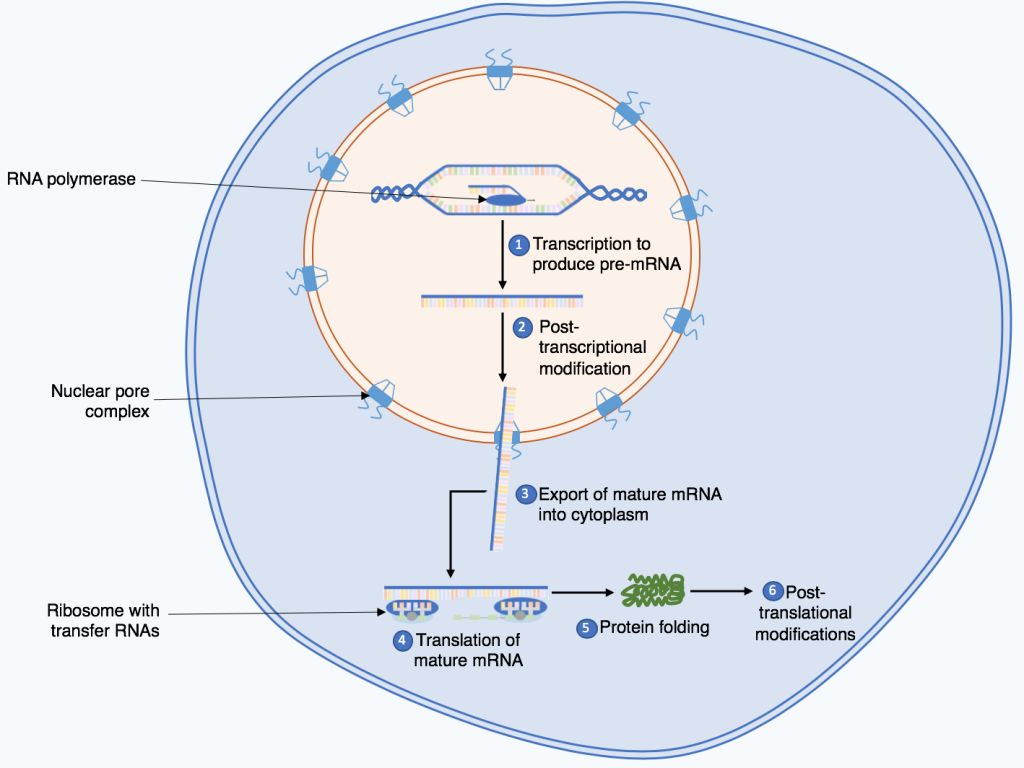

In biology, the first step in protein synthesis is transcription, the process in which a specific segment of DNA is copied into RNA by the enzyme RNA polymerase. That may seem far removed from unmanned aerial vehicles (UAVs) and autonomous systems, but the conceptual framework is strikingly similar. In AI follow modes, autonomous flight, and remote sensing, a digital version of this “synthesis” runs continuously, many times per second.

Just as a cell must transcribe genetic instructions before it can build a functional protein, an autonomous drone must “transcribe” raw environmental data into a digital code that the flight controller can understand. This article explores the “transcription” phase of drone technology—the foundational step where sensor data is converted into actionable intelligence—and how this synthesis defines the future of autonomous flight.

Defining the “Transcription” Phase of Autonomous Systems

In the context of drone innovation, the “first step” in any autonomous mission—be it mapping a forest or following a high-speed cyclist—is the acquisition and encoding of data. This is the drone’s equivalent of biological transcription. Without a precise digital “transcript” of the world around it, the drone cannot synthesize a flight path or execute complex maneuvers.

Digital Encoding of the Physical World

The first step in any sophisticated tech-driven drone operation is the translation of physical reality into digital language. This happens through an array of sensors that act as the “enzymes” of the system. For an AI-driven drone, the “DNA” is the mission objective programmed by the user, but the “RNA”—the working copy of the instructions—is the real-time map generated by onboard processors.

In applications like remote sensing, this step involves digitizing reflected light, heat signatures, or laser returns (LiDAR) into numeric samples the onboard computer can store and process. This process is critical because the quality of the “transcript” determines the success of the entire synthesis. If the initial data capture is flawed, the resulting “protein” (the flight path or the 3D map) will be non-functional or “folded” incorrectly, leading to crashes or data errors.
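To make this encoding step concrete, here is a minimal Python sketch of how a single LiDAR return might become bytes. It assumes a time-of-flight sensor that reports the round-trip time of each pulse. The function names and the 4-byte millimetre encoding are illustrative choices, not any particular sensor's format.

```python
import struct

SPEED_OF_LIGHT_M_S = 299_792_458.0  # exact, by definition of the metre


def tof_to_range_m(round_trip_s: float) -> float:
    """Convert a pulse's round-trip time (seconds) into a one-way range (metres)."""
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    # The pulse travels out and back, so halve the total path length.
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def encode_range(range_m: float) -> bytes:
    """Pack a range as an unsigned 32-bit little-endian millimetre count.

    This wire format is hypothetical and exists only for illustration.
    """
    return struct.pack("<I", round(range_m * 1000))


def decode_range(payload: bytes) -> float:
    """Inverse of encode_range: bytes back to metres (millimetre resolution)."""
    return struct.unpack("<I", payload)[0] / 1000.0


range_m = tof_to_range_m(1e-6)  # a pulse that returns after one microsecond
payload = encode_range(range_m)
print(round(range_m, 3), payload.hex(), decode_range(payload))
```

A timing error of one nanosecond shifts the computed range by roughly 15 cm, which is one reason the quality of the initial capture matters so much.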

The Role of Remote Sensing as the Genetic Template

Remote sensing is one of the most active areas of UAV development. By using multispectral and hyperspectral sensors, drones can see beyond the visible spectrum. In our synthesis analogy, these sensors allow the drone to read “hidden” genetic markers in the environment, such as moisture levels in crops or structural weaknesses in a bridge.

The first step here is not just taking a picture; it is the systematic logging of data points. When a drone performs autonomous mapping, it begins by “transcribing” the terrain into a point cloud. This point cloud serves as the template for all subsequent processing. Innovators in the field are currently focusing on how to make this transcription faster and more efficient, reducing the latency between sensing (transcription) and action (translation).
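As a rough illustration of how terrain becomes a point cloud, the sketch below converts individual sensor returns into XYZ points. It assumes each return arrives as a range plus azimuth and elevation angles in the sensor's own frame; the function names are hypothetical, and a real pipeline would also correct for the drone's position and attitude.

```python
import math


def polar_to_xyz(range_m, azimuth_rad, elevation_rad):
    """Convert one return (range, azimuth, elevation) to sensor-frame XYZ in metres."""
    horizontal = range_m * math.cos(elevation_rad)  # projection onto the XY plane
    x = horizontal * math.cos(azimuth_rad)
    y = horizontal * math.sin(azimuth_rad)
    z = range_m * math.sin(elevation_rad)
    return (x, y, z)


def build_point_cloud(returns):
    """Turn an iterable of (range, azimuth, elevation) tuples into a list of points."""
    return [polar_to_xyz(r, az, el) for r, az, el in returns]


# Three made-up returns: straight ahead, to the left, and 30 degrees downward.
scan = [
    (10.0, 0.0, 0.0),
    (10.0, math.pi / 2, 0.0),
    (20.0, 0.0, -math.pi / 6),
]
for point in build_point_cloud(scan):
    print(tuple(round(c, 2) for c in point))
```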

From Code to Kinetic Action: The Synthesis Workflow

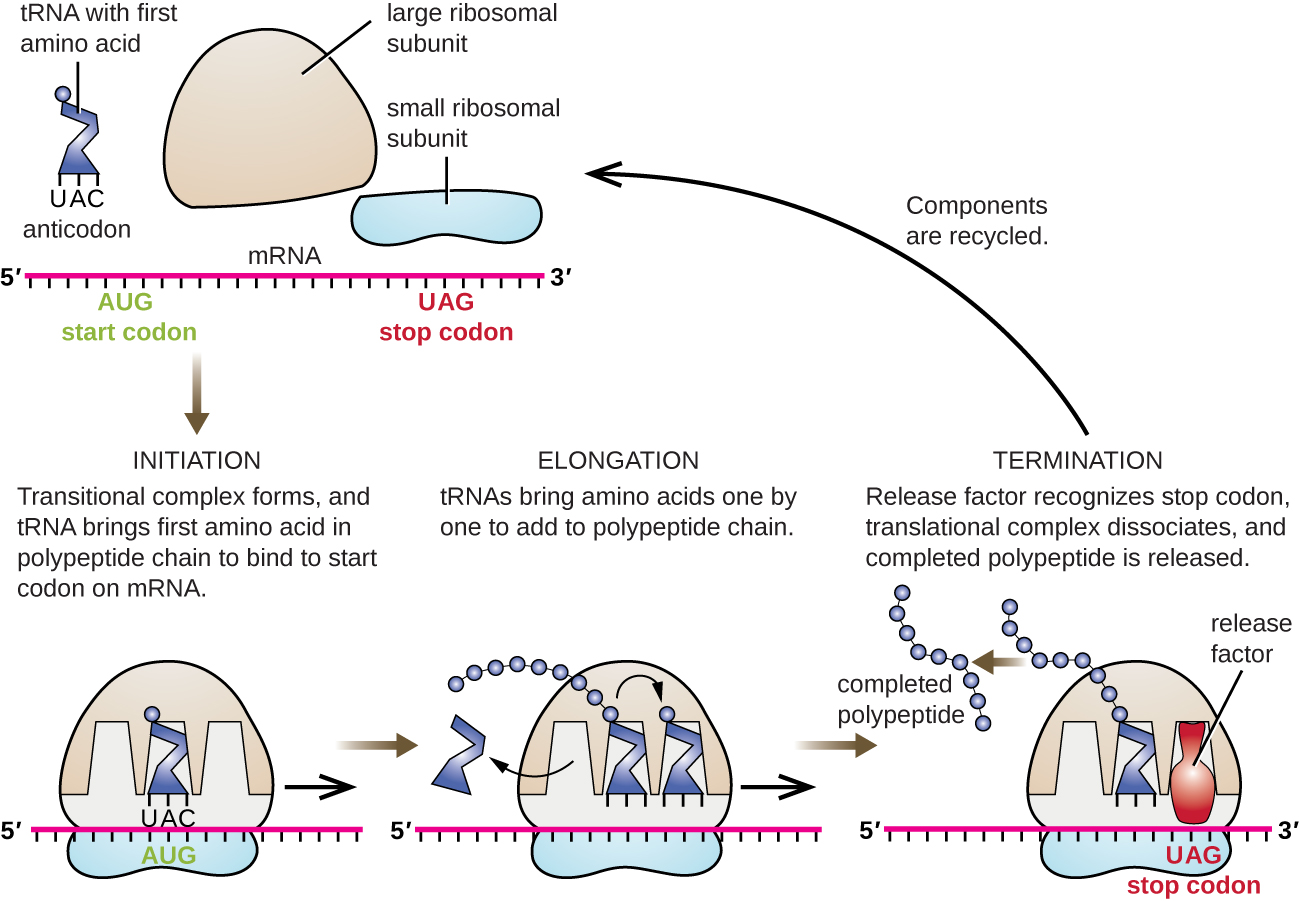

Once the environment has been transcribed into a digital format, the drone moves into the “translation” phase. This is where the AI follow mode and autonomous flight logic come into play. The drone’s onboard computer acts as the ribosome, reading the digital transcript and assembling the necessary motor commands to maintain stability and reach the destination.

Neural Networks as the Processing Engine

The “synthesis” of flight requires a powerful engine. In modern tech-driven drones, this engine is often a deep neural network. These AI systems are trained on massive datasets to recognize patterns—much like how biological systems recognize specific codon sequences. When a drone is in “Follow Mode,” it isn’t just seeing a person; it is synthesizing a geometric understanding of that person’s movement relative to the drone’s own position in 3D space.

The innovation here lies in how these networks handle “mutations”—unexpected obstacles like a bird flying across the path or a sudden gust of wind. A robust autonomous system can re-transcribe its surroundings in real-time, adjusting its synthesis to ensure the “protein” (the mission) remains viable despite environmental stressors.
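The geometric side of follow mode can be sketched without any neural network. The snippet below assumes a vision system has already estimated the subject's position in the same flat 2D frame as the drone, and it uses a simple proportional controller to hold a fixed standoff distance. The function name, gain, and limits are illustrative assumptions, not values from any real flight stack.

```python
import math


def follow_velocity(drone_xy, target_xy, standoff_m=5.0, gain=0.5, max_speed_m_s=10.0):
    """Return a (vx, vy) command that moves the drone toward its standoff distance.

    Positive speed closes the gap; negative speed backs away from a subject
    that has come closer than the standoff distance.
    """
    dx = target_xy[0] - drone_xy[0]
    dy = target_xy[1] - drone_xy[1]
    distance = math.hypot(dx, dy)
    if distance == 0.0:
        return (0.0, 0.0)  # direction is undefined, so hold position
    error = distance - standoff_m
    # Proportional response, clamped to the allowed speed range.
    speed = max(-max_speed_m_s, min(max_speed_m_s, gain * error))
    return (speed * dx / distance, speed * dy / distance)


print(follow_velocity((0.0, 0.0), (15.0, 0.0)))  # subject far ahead: approach
print(follow_velocity((0.0, 0.0), (2.0, 0.0)))   # subject too close: back off
```

Calling this function again on every new frame, with freshly estimated positions, is the simplest form of re-transcribing the scene: each command is based only on the latest observation.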

Obstacle Avoidance and the Logic of Real-Time Translation

Obstacle avoidance is perhaps the most visible example of drone synthesis in action. Using stereoscopic vision or ultrasonic sensors, the drone creates a real-time transcript of nearby hazards. Many systems pair this with SLAM (Simultaneous Localization and Mapping).

SLAM is a cornerstone of autonomous synthesis. It allows a drone to build a map of an unknown environment while simultaneously estimating its own position within that map. This dual process is what enables drones to fly through dense forests or inside warehouses without human intervention. How quickly and accurately this loop runs is a key measure of success for modern UAV developers.
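For the stereoscopic case mentioned above, depth follows from the standard pinhole relation: depth equals focal length times baseline divided by disparity. The sketch below applies that relation and then makes a crude hazard decision. The camera parameters, reaction time, and safety margin are assumed values for illustration only.

```python
import math


def stereo_depth_m(disparity_px, focal_px, baseline_m):
    """Depth from stereo disparity using depth = focal * baseline / disparity."""
    if disparity_px <= 0:
        return math.inf  # no measurable shift: treat the point as very far away
    return focal_px * baseline_m / disparity_px


def is_hazard(depth_m, speed_m_s, reaction_s=0.5, margin_m=1.0):
    """Flag an obstacle that lies inside the distance covered while reacting, plus a margin."""
    return depth_m < speed_m_s * reaction_s + margin_m


# Assumed camera: 700 px focal length, 10 cm between the two lenses.
depth = stereo_depth_m(disparity_px=20, focal_px=700, baseline_m=0.10)
print(round(depth, 2), is_hazard(depth, speed_m_s=8.0), is_hazard(depth, speed_m_s=2.0))
```

Because depth is inversely proportional to disparity, distant obstacles produce only small pixel shifts, so depth estimates tend to become less precise with range.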

The Role of AI in Scaling Flight Data Synthesis

Beyond individual drone performance, the focus shifts to the “synthesis” of massive datasets. AI is no longer just a tool for flight; it increasingly shapes the entire data pipeline.

Machine Learning and Pattern Recognition

In remote sensing, the synthesis process often continues long after the drone has landed. Machine learning algorithms take the transcribed data—thousands of high-resolution images or LiDAR points—and synthesize them into actionable insights. For example, in precision agriculture, the synthesis involves identifying “stress zones” in a field that are invisible to the human eye.

The innovation here is the move from “descriptive” synthesis (what happened?) to “predictive” synthesis (what will happen?). By analyzing the transcribed history of a landscape, AI can predict future changes, such as where a wildfire is likely to spread or where a crop might fail. This is the ultimate expression of the “protein synthesis” metaphor: taking basic instructions and building a complex, functional output that has a real-world impact.
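One common way to surface crop “stress zones” from multispectral imagery is the Normalized Difference Vegetation Index (NDVI), computed per pixel as (NIR - Red) / (NIR + Red). The sketch below is a descriptive example only, with no prediction step. It assumes the two bands arrive as equally sized grids of reflectance values, and the 0.3 threshold is an arbitrary illustration rather than an agronomic recommendation.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index for one pixel."""
    total = nir + red
    if total == 0:
        return 0.0  # no signal in either band; avoid dividing by zero
    return (nir - red) / total


def stress_mask(nir_band, red_band, threshold=0.3):
    """Return a grid of booleans marking pixels whose NDVI falls below the threshold."""
    return [
        [ndvi(n, r) < threshold for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir_band, red_band)
    ]


# Made-up 2x2 reflectance grids (values between 0 and 1).
nir = [[0.50, 0.20], [0.45, 0.10]]
red = [[0.10, 0.15], [0.08, 0.09]]
for row in stress_mask(nir, red):
    print(row)
```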

Edge Computing: Speeding Up the Synthesis Process

One of the biggest hurdles in autonomous flight is latency. In the past, drones had to send their “transcript” (raw data) to a powerful ground station or a cloud server to be processed. The round trip added delay before the drone could act on what it had sensed, which made for a slow and inefficient synthesis.

Edge computing removes much of that delay. By placing high-performance AI chips directly on the drone, transcription and translation both happen on board, in flight. This enables real-time decision-making, which is essential for high-speed racing drones or autonomous search-and-rescue missions in complex environments. This “on-the-fly” synthesis represents the current frontier of UAV technology.
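A quick back-of-the-envelope calculation shows why latency matters at speed: the distance a drone covers before it can react is simply its speed multiplied by the processing delay. The latency figures below are assumptions chosen for illustration, not measurements of any real system.

```python
def blind_distance_m(speed_m_s, latency_s):
    """Distance travelled between sensing something and being able to act on it."""
    return speed_m_s * latency_s


SPEED_M_S = 20.0  # assumed cruise speed

# Hypothetical end-to-end delays, in seconds.
scenarios = {
    "remote processing (assumed 200 ms)": 0.200,
    "onboard processing (assumed 20 ms)": 0.020,
}

for label, latency_s in scenarios.items():
    print(f"{label}: {blind_distance_m(SPEED_M_S, latency_s):.1f} m travelled before reacting")
```

Under these assumed numbers, cutting the delay by a factor of ten shrinks the “blind” distance by the same factor, which is the core argument for processing on the drone itself.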

Future Innovations in Drone “Synthesis” Technology

The future of drone technology lies in perfecting the “first step” and the subsequent synthesis. We are moving toward a world where drones do not just follow a script but exhibit “emergent behavior” based on their digital transcription of the world.

Swarm Intelligence and Distributed Logic

The next leap in innovation is swarm intelligence. In a swarm, the synthesis process is distributed across multiple units. Each drone transcribes a small portion of the environment and shares that “code” with the rest of the group. Together, they synthesize a collective response.

This is highly reminiscent of how multicellular organisms function. Each “cell” (drone) performs its own transcription, but the overall “protein” (the mission objective) is a result of the collective synthesis. This allows for massive scaling in mapping, surveillance, and even atmospheric research, where a single drone would be insufficient.
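The idea of each drone contributing a partial “transcript” can be sketched as a map merge. The example assumes every drone reports a dictionary that maps grid cells to an obstacle confidence between 0 and 1, and it resolves overlaps by keeping the highest confidence. Both the data layout and the merge rule are illustrative assumptions; real swarms use more sophisticated fusion.

```python
def merge_maps(partial_maps):
    """Combine per-drone {cell: confidence} maps, keeping the highest confidence per cell."""
    merged = {}
    for drone_map in partial_maps:
        for cell, confidence in drone_map.items():
            merged[cell] = max(confidence, merged.get(cell, 0.0))
    return merged


# Two hypothetical drones whose survey areas overlap at cell (0, 0).
drone_a = {(0, 0): 0.9, (0, 1): 0.2}
drone_b = {(0, 0): 0.4, (1, 0): 0.7}

shared_map = merge_maps([drone_a, drone_b])
print(shared_map)
```

Because taking the maximum is order-independent, the drones can share their maps in any sequence and still arrive at the same collective picture.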

Autonomous Mapping and the Path to True Autonomy

The ultimate goal of innovation in this sector is often described as “Level 5 autonomy,” a term borrowed from the SAE levels of driving automation: a state where the drone requires zero human input for any part of the synthesis process. To achieve this, the “first step” of transcription must become far more reliable than it is today. The drone must be able to recognize not just objects, but the context of those objects.

For instance, a truly autonomous drone would not just see a “tree” but would understand that the tree is swaying in a way that indicates strong, gusty wind near the ground, and it would adjust its synthesis accordingly. This level of nuanced data interpretation is likely to be a major focus of development over the next decade.

By understanding the “first step” in this digital protein synthesis, the transcription of the physical world into a digital blueprint, we gain a clearer picture of how autonomous drones are evolving. They are no longer just flying cameras; they resemble biological systems that take in code, process it through neural “ribosomes,” and produce the “proteins” of data, movement, and intelligence that are reshaping our modern world.