Depth vision, in the context of drone flight technology, refers to a drone’s ability to perceive the three-dimensional structure of its surrounding environment. Unlike traditional two-dimensional imaging, which captures only height and width, depth vision adds the crucial third dimension: distance from the sensor to objects. This spatial awareness is not merely a convenience; it is a foundational technology that underpins safe, autonomous, and efficient drone operations, directly impacting critical aspects such as navigation, obstacle avoidance, and precise positioning. Without the capacity to understand depth, a drone operates effectively blind to the physical world, unable to accurately judge distances, identify hazards, or execute complex maneuvers with the necessary precision.

The Imperative of Spatial Awareness in Drone Flight

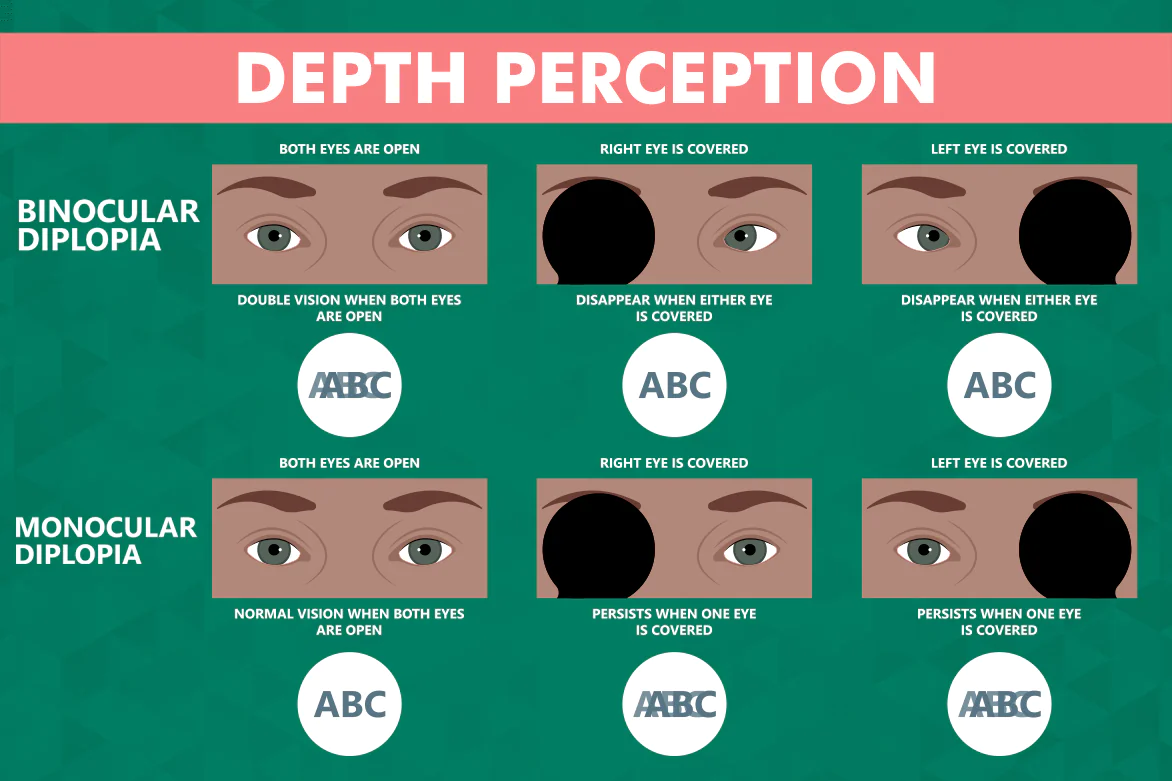

For any airborne vehicle, especially an unmanned aerial vehicle (UAV) operating in dynamic and often complex environments, an accurate understanding of its surroundings is paramount. Human pilots rely on binocular vision, depth perception, and cognitive processing to navigate through three-dimensional space, assess distances, and react to changing conditions. Drones, however, must replicate this capability through sophisticated sensor systems and computational algorithms.

The limitations of purely 2D vision for drones are significant. A standard camera provides an image in which objects appear larger or smaller depending on their distance, but without a direct measure of that distance, a single image cannot tell the drone whether it is looking at a small object nearby or a large object far away. This ambiguity can lead to severe navigation errors and collisions. For instance, an obstacle in the drone’s path might occupy only a few pixels in a 2D image, leading the drone to overestimate its distance and fly into it. Depth vision resolves this by attaching an explicit distance measurement to each pixel or sample in the sensor’s field of view, transforming a flat image into a 3D map of the operational space. This capability is indispensable for operations ranging from automated package delivery in urban settings to industrial inspections in confined spaces, where collision avoidance and precise flight paths are non-negotiable requirements.
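The size-versus-distance ambiguity can be illustrated with the ideal pinhole camera model, in which an object's height in the image is its real height scaled by focal length over distance. The following is a minimal sketch; the focal length and object sizes are assumed values chosen for illustration only.

```python
def projected_height_px(object_height_m, distance_m, focal_length_px):
    """Pinhole model: image height (pixels) of an object facing the camera."""
    return focal_length_px * object_height_m / distance_m


FOCAL_LENGTH_PX = 800.0  # assumed focal length, expressed in pixels

# A small object close to the camera and a large object far away.
near_small = projected_height_px(0.5, 2.0, FOCAL_LENGTH_PX)   # 0.5 m tall at 2 m
far_large = projected_height_px(5.0, 20.0, FOCAL_LENGTH_PX)   # 5 m tall at 20 m

print(near_small, far_large)  # 200.0 200.0
```

Both objects cover the same number of pixels, so a single 2D image cannot separate the two cases without an independent distance measurement.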

Core Technologies for Perceiving Depth

Drones employ several distinct technologies to acquire depth information, each with its own principles, advantages, and limitations. The choice of technology often depends on the specific application, environmental conditions, and available processing power.

Stereo Vision: Mimicking Human Eyes

Stereo vision systems are inspired by biological binocular vision, utilizing two or more cameras mounted with a known separation (the baseline) to capture slightly different perspectives of the same scene. By comparing a pair of these images, algorithms can identify corresponding points in each. The apparent shift in position of a point between the left and right images, known as disparity, is inversely proportional to its distance from the cameras: objects closer to the cameras exhibit a larger disparity than those farther away.

The process involves several steps:

- Calibration: Accurately determining the intrinsic parameters of each camera and their relative poses.

- Rectification: Transforming the images so that corresponding points lie on the same horizontal scanline, simplifying the matching process.

- Correspondence Matching: Identifying identical points in the left and right rectified images. This is often the most computationally intensive step, requiring algorithms to find features that correlate between the two views.

- Disparity Map Generation: Creating a map where each pixel represents the calculated disparity value.

- Depth Map Calculation: Converting disparity values into actual distance measurements using triangulation, based on the camera’s focal length and the baseline distance.
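The final step in the list above can be sketched with the standard triangulation relation for a rectified stereo pair, Z = f · B / d, where f is the focal length in pixels, B the baseline in meters, and d the disparity in pixels. The camera parameters and disparity values below are assumptions made up for the example.

```python
def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Triangulate depth Z = f * B / d for a rectified stereo pair.

    Returns None when the disparity is not positive, which means either
    no valid match was found or the point is effectively at infinity.
    """
    if disparity_px <= 0:
        return None
    return focal_length_px * baseline_m / disparity_px


FOCAL_LENGTH_PX = 800.0  # assumed focal length in pixels
BASELINE_M = 0.125       # assumed camera separation in meters

# One row of a hypothetical disparity map, in pixels.
disparity_row = [50.0, 25.0, 10.0, 0.0]
depth_row = [
    depth_from_disparity(d, FOCAL_LENGTH_PX, BASELINE_M) for d in disparity_row
]

print(depth_row)  # [2.0, 4.0, 10.0, None]
```

Halving the disparity doubles the depth, which is the inverse relationship between disparity and distance in numerical form.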

Advantages: Stereo vision is a passive technology, meaning it does not emit light or signals. The sensors themselves therefore draw little power, and the system is suitable for covert operations. It works across a wide range of ambient lighting (though very dark or very bright scenes are challenging) and can provide high-resolution depth maps.

Limitations: Performance degrades in scenes with little texture (e.g., plain walls, clear skies) or with shiny, reflective surfaces, where correspondence matching becomes ambiguous. It is also sensitive to lighting changes and requires significant computational resources for real-time processing.
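To see why low-texture scenes are a problem, consider a toy version of correspondence matching along one rectified scanline. The sketch below uses a sum-of-absolute-differences (SAD) cost, which is one common matching cost among several; it is not necessarily what any particular drone uses. The pixel rows are invented data.

```python
def sad_costs(left_row, right_row, x, half_window, max_disparity):
    """SAD cost of matching the window around column x for each disparity."""
    costs = []
    for d in range(max_disparity + 1):
        cost = 0
        for k in range(-half_window, half_window + 1):
            # A point at column x in the left image sits at x - d in the right.
            cost += abs(left_row[x + k] - right_row[x + k - d])
        costs.append(cost)
    return costs


def best_disparities(costs):
    """Return every disparity that ties for the lowest cost."""
    lowest = min(costs)
    return [d for d, c in enumerate(costs) if c == lowest]


# Textured scene: the left row is the right row shifted by 2 pixels.
right_textured = [10, 50, 20, 90, 30, 70, 40, 80, 60, 15, 25, 35]
left_textured = [0, 0] + right_textured[:-2]

# Textureless scene: every pixel has the same intensity.
flat = [100] * 12

textured_matches = best_disparities(sad_costs(left_textured, right_textured, 6, 1, 3))
flat_matches = best_disparities(sad_costs(flat, flat, 6, 1, 3))

print(textured_matches)  # [2]
print(flat_matches)      # [0, 1, 2, 3]
```

On the textured row the cost has a single minimum at the true disparity. On the flat row every candidate ties, so the matcher has no basis for choosing a depth.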

LiDAR (Light Detection and Ranging): Active Depth Sensing

LiDAR systems operate on an active principle, emitting laser pulses and measuring the time it takes for these pulses to return after reflecting off objects in the environment. This “time-of-flight” measurement converts directly to distance: because the pulse travels to the object and back, the range is the speed of light multiplied by half the round-trip time. By rapidly scanning a scene, a LiDAR sensor can build a dense “point cloud”, a collection of up to millions of 3D data points that map the drone’s surroundings.
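As a rough sketch of the arithmetic, the snippet below converts a round-trip time into a range and then turns one range-plus-beam-angle return into a 3D point. The axis convention (x forward, y left, z up) and the sample values are assumptions for illustration; real sensors document their own conventions.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def range_from_round_trip(round_trip_s):
    """Range in meters: the pulse covers the distance twice, so halve it."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def point_from_return(range_m, azimuth_rad, elevation_rad):
    """Convert one return to (x, y, z), assuming x forward, y left, z up."""
    horizontal = range_m * math.cos(elevation_rad)
    x = horizontal * math.cos(azimuth_rad)
    y = horizontal * math.sin(azimuth_rad)
    z = range_m * math.sin(elevation_rad)
    return (x, y, z)


# A pulse that returns after 200 nanoseconds hit something about 30 m away.
range_m = range_from_round_trip(200e-9)
print(round(range_m, 3))  # 29.979

# A 10 m return straight ahead lies on the x axis.
print(point_from_return(10.0, 0.0, 0.0))  # (10.0, 0.0, 0.0)
```

Repeating the second step for every return in a scan yields the point cloud.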

Modern LiDAR units for drones can be mechanical (using spinning mirrors or rotating assemblies to direct laser beams) or solid-state (steering or spreading the beam with few or no moving parts, for example with MEMS mirrors, flash illumination, or optical phased arrays). The data collected provides accurate and dense spatial information, forming the backbone for detailed 3D mapping and real-time obstacle detection.

Advantages: LiDAR offers extremely accurate depth measurements and is largely unaffected by ambient light conditions, operating effectively in both bright sunlight and complete darkness. It excels in creating dense and precise 3D models of environments, making it invaluable for applications requiring high geometric fidelity.

Limitations: LiDAR sensors can be more expensive, heavier, and consume more power than camera-based systems, which can be a significant factor for smaller drones with limited payload capacity and battery life. Processing the vast amount of point cloud data in real-time also demands substantial computational power.

Structured Light and Time-of-Flight (ToF) Cameras

While less common for outdoor, long-range drone applications than stereo vision or LiDAR, structured light and dedicated Time-of-Flight (ToF) cameras offer alternative methods for depth sensing, particularly relevant for closer-range or indoor operations.

Structured Light: These systems project a known pattern of light (e.g., dots, grids, or stripes) onto a scene. A camera then captures the distorted reflection of this pattern. By analyzing how the projected pattern is deformed by the objects’ surfaces, algorithms can triangulate the depth of each point.

Advantages: Can provide very accurate and high-resolution depth maps over short to medium ranges.

Limitations: Performance is highly susceptible to ambient light, as sunlight can wash out the projected pattern. For this reason, these systems are typically used in controlled indoor environments.

Time-of-Flight (ToF) Cameras: Similar in principle to LiDAR but using LED or laser illumination that floods the whole scene and a specialized sensor array, ToF cameras measure either the round-trip time of emitted light pulses or the phase shift of intensity-modulated light that bounces back from objects. This allows for direct per-pixel depth measurement across an entire image frame simultaneously.

Advantages: Can be compact and offer real-time depth data at video frame rates.

Limitations: Range is generally limited compared to LiDAR, and accuracy can be affected by ambient light and reflective surfaces. They are often used for proximity sensing and gesture recognition rather than broad environmental mapping.
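For the phase-shift variant of ToF, the usual relation is d = c · φ / (4π · f_mod), where φ is the measured phase shift and f_mod the modulation frequency. Because phase wraps every 2π, distances beyond c / (2 · f_mod) alias back into range, which is one reason for the limited range noted above. The sketch below assumes a 20 MHz modulation frequency purely as an example value.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def unambiguous_range_m(mod_freq_hz):
    """Largest distance measurable before the phase wraps past 2*pi."""
    return SPEED_OF_LIGHT_M_S / (2.0 * mod_freq_hz)


def distance_from_phase(phase_rad, mod_freq_hz):
    """Distance implied by a phase shift of the modulated light."""
    return SPEED_OF_LIGHT_M_S * phase_rad / (4.0 * math.pi * mod_freq_hz)


MOD_FREQ_HZ = 20e6  # assumed modulation frequency

max_range = unambiguous_range_m(MOD_FREQ_HZ)
quarter_turn = distance_from_phase(math.pi / 2, MOD_FREQ_HZ)

print(round(max_range, 3))     # 7.495
print(round(quarter_turn, 3))  # 1.874
```

Raising the modulation frequency improves depth resolution but shortens the unambiguous range, so the choice of frequency is a trade-off.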

The Role of Depth Vision in Advanced Flight Systems

The data acquired through depth vision technologies is not an end in itself; it is the raw material for intelligent flight systems that enable drones to perform complex tasks safely and autonomously.

Obstacle Avoidance: The Primary Safety Net

Perhaps the most direct and critical application of depth vision in drone flight technology is obstacle avoidance. By continuously mapping the 3D environment, the drone’s flight controller can identify obstacles (trees, buildings, power lines, other drones) in its flight path. This real-time understanding of its surroundings allows the drone to:

- Predict Collisions: Calculate the drone’s trajectory relative to detected obstacles and predict potential impact points.

- Path Planning: Dynamically adjust its flight path to steer around identified hazards, maintaining a safe standoff distance.

- Emergency Braking: If an avoidance maneuver is impossible or too risky, the system can trigger an emergency stop or hover to prevent a collision.

- Dynamic Avoidance: In complex, changing environments (e.g., flying through a forest or an active construction site), depth vision enables the drone to react to moving obstacles or newly appearing hazards.
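The decision logic behind the behaviors listed above can be sketched with basic kinematics: compare the measured distance to an obstacle against the distance the drone needs to stop. This is a simplified illustration, not a flight-controller implementation. The function names, the action labels, and the rule of replanning inside twice the stopping envelope are all hypothetical choices.

```python
def time_to_collision_s(distance_m, closing_speed_m_s):
    """Seconds until impact at constant closing speed (inf if not closing)."""
    if closing_speed_m_s <= 0:
        return float("inf")
    return distance_m / closing_speed_m_s


def stopping_distance_m(speed_m_s, max_decel_m_s2, reaction_s):
    """Distance covered during the reaction delay plus constant braking."""
    return speed_m_s * reaction_s + speed_m_s ** 2 / (2.0 * max_decel_m_s2)


def choose_action(distance_m, speed_m_s, max_decel_m_s2, reaction_s, margin_m):
    """Pick a response from the depth-derived distance to the obstacle."""
    if speed_m_s <= 0:
        return "continue"
    needed = stopping_distance_m(speed_m_s, max_decel_m_s2, reaction_s) + margin_m
    if distance_m <= needed:
        return "emergency_brake"
    if distance_m <= 2.0 * needed:  # assumed threshold for early replanning
        return "replan_path"
    return "continue"


# Assumed drone: 10 m/s forward, 5 m/s^2 braking, 0.1 s latency, 2 m margin.
for obstacle_distance in (12.0, 20.0, 40.0):
    print(obstacle_distance, choose_action(obstacle_distance, 10.0, 5.0, 0.1, 2.0))
```

With these numbers the drone needs 11 m to stop plus the 2 m margin, so an obstacle at 12 m triggers braking, one at 20 m triggers replanning, and one at 40 m requires no action yet.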

Advanced obstacle avoidance systems combine depth data with other sensor inputs (e.g., ultrasonic sensors for very close proximity) and sophisticated algorithms to create a robust and reliable safety mechanism, greatly enhancing operational safety and allowing for operations in environments previously deemed too hazardous.

Precise Navigation and Positioning

While GPS provides global positioning, standard receivers are typically accurate only to within a few meters, well short of the centimeter-level accuracy some tasks require, and the signal degrades or disappears entirely in GPS-denied environments (indoors, urban canyons, under dense foliage). Depth vision systems complement or even replace GPS in these scenarios.

- Visual-Inertial Odometry (VIO): By tracking visual features and their estimated depth changes over time, depth vision combined with inertial measurement units (IMUs) allows drones to estimate their position, velocity, and orientation with high accuracy, even without external signals. This is crucial for maintaining stable flight in areas where GPS signals are weak or unavailable.

- Indoor Navigation: Depth cameras and LiDAR are fundamental for drones navigating within buildings, warehouses, or mines where GPS signals cannot penetrate. They create a real-time 3D map, allowing the drone to locate itself within that map and navigate to specific waypoints.

- Landing Precision: For automated landings, particularly on moving platforms or in designated landing zones, depth vision provides the fine-grained spatial data needed to accurately judge the height, relative speed, and precise coordinates of the landing spot, ensuring a soft and accurate touchdown.
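A building block shared by the navigation tasks above is back-projection: turning a pixel and its measured depth into a 3D point in the camera frame. The sketch below uses the ideal pinhole model with no lens distortion. The intrinsic parameters (fx, fy, cx, cy) are assumed example values that would normally come from calibration.

```python
def backproject(u, v, depth_m, fx, fy, cx, cy):
    """Map pixel (u, v) with depth Z to camera-frame coordinates (X, Y, Z).

    Uses the pinhole model: X = (u - cx) * Z / fx, Y = (v - cy) * Z / fy.
    """
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)


# Assumed intrinsics for a 640x480 depth camera.
FX, FY = 500.0, 500.0
CX, CY = 320.0, 240.0

# A landing marker seen 100 pixels right of the image center, 5 m away.
marker = backproject(420.0, 240.0, 5.0, FX, FY, CX, CY)
print(marker)  # (1.0, 0.0, 5.0)
```

In this example the marker sits 1 m to the right of the optical axis and 5 m along it, which is the kind of offset a landing controller would then drive toward zero.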

Terrain Following and Environmental Interaction

Depth vision also empowers drones to interact intelligently with their environment in specific applications:

- Terrain Following: Drones equipped with depth sensors can maintain a constant altitude above varied terrain, automatically adjusting their height to match the contours of the ground. This is vital for applications like agricultural spraying, pipeline inspection, or mapping irregular landscapes, ensuring consistent data collection or application.

- Structure Inspection: For inspecting bridges, wind turbines, or power lines, depth vision allows the drone to maintain a precise and constant standoff distance from the surface it is examining. This ensures consistent image quality for inspection purposes and prevents accidental contact.

- Object Tracking and Following: By understanding the 3D position and movement of a target object (e.g., a person, vehicle), depth vision enables drones to autonomously track and follow subjects, maintaining a safe distance and optimal viewing angle.
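Terrain following and standoff keeping both reduce to holding a measured distance at a target value. One simple way to sketch this is a proportional controller with a rate limit, shown below for height above ground. The gain and limit are made-up values, and a real autopilot would typically add filtering and further control terms.

```python
def climb_rate_command(measured_agl_m, target_agl_m, gain_per_s, max_rate_m_s):
    """Proportional climb-rate command (m/s, positive up), clamped to a limit.

    measured_agl_m is the height above ground reported by the depth sensor.
    """
    error_m = target_agl_m - measured_agl_m
    rate = gain_per_s * error_m
    return max(-max_rate_m_s, min(max_rate_m_s, rate))


TARGET_AGL_M = 10.0   # assumed desired height above ground
GAIN_PER_S = 0.5      # assumed proportional gain
MAX_RATE_M_S = 3.0    # assumed vertical speed limit

# The ground rises under the drone, then falls away sharply.
for measured in (8.0, 10.0, 20.0):
    print(measured, climb_rate_command(measured, TARGET_AGL_M, GAIN_PER_S, MAX_RATE_M_S))
```

The drone climbs at 1 m/s when it is 2 m too low, holds when on target, and descends at the clamped 3 m/s limit when it is 10 m too high.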

Challenges and Future Directions

Despite the immense capabilities depth vision brings to drone technology, challenges remain.

- Computational Demands: Processing vast amounts of 3D data in real-time requires powerful onboard processors, which can increase drone cost, weight, and power consumption.

- Sensor Fusion: No single depth sensing technology is perfect for all scenarios. The future lies in robust sensor fusion – intelligently combining data from stereo cameras, LiDAR, ultrasonic sensors, and traditional 2D cameras to achieve a more comprehensive, robust, and reliable understanding of the environment.

- AI and Machine Learning: Integrating advanced AI and machine learning algorithms is critical for interpreting depth data more effectively, differentiating between different types of obstacles, predicting their movement, and making more intelligent flight decisions.

- Miniaturization and Cost Reduction: As depth vision technologies become more compact, lighter, and more affordable, they will be integrated into an even broader range of drones, from consumer models to highly specialized industrial platforms, democratizing advanced autonomous capabilities.
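As a small illustration of the sensor fusion point above, two range estimates of the same obstacle can be combined by inverse-variance weighting, so the more certain sensor counts for more. This sketch assumes independent, roughly Gaussian errors. The readings and variances are invented, and production systems generally use fuller estimators such as Kalman filters.

```python
def fuse_ranges(estimates):
    """Fuse (range_m, variance_m2) pairs by inverse-variance weighting.

    Returns the fused range and its variance, assuming independent errors.
    """
    weights = [1.0 / variance for _, variance in estimates]
    total_weight = sum(weights)
    fused = sum(w * r for w, (r, _) in zip(weights, estimates)) / total_weight
    return fused, 1.0 / total_weight


# Hypothetical readings: a noisier stereo estimate and a tighter LiDAR one.
stereo = (10.5, 0.25)    # range in meters, variance in square meters
lidar = (10.0, 0.0625)

fused_range, fused_variance = fuse_ranges([stereo, lidar])
print(round(fused_range, 3), round(fused_variance, 3))  # 10.1 0.05
```

The fused estimate lands closer to the LiDAR reading, and its variance is lower than that of either sensor alone.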

In essence, depth vision transcends merely “seeing” the world; it empowers drones to “understand” it in three dimensions. This fundamental capability is continually evolving, pushing the boundaries of what autonomous flight systems can achieve in terms of safety, precision, and efficiency across countless applications.