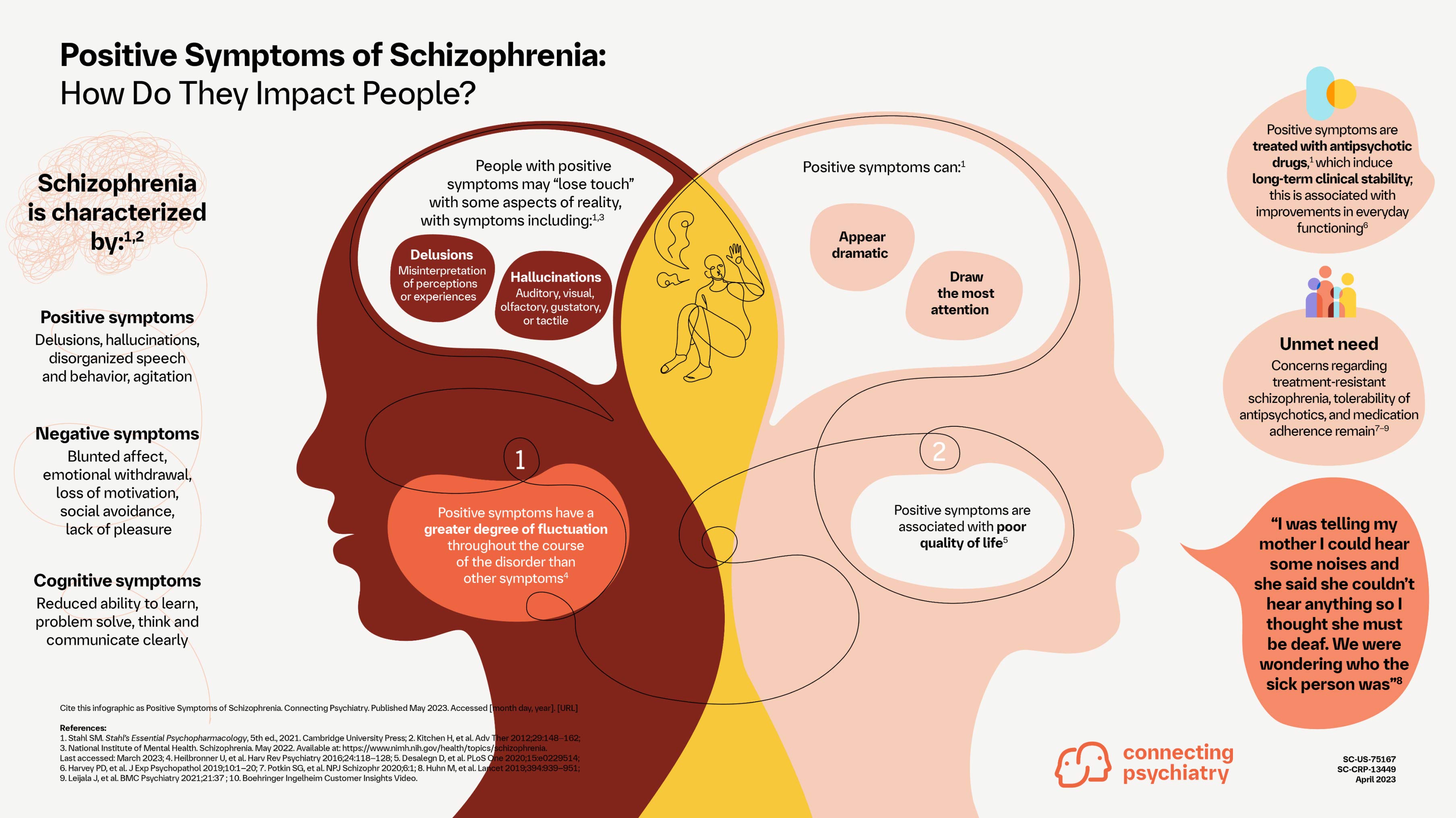

The term “positive symptoms of schizophrenia” typically refers to experiences that are added to normal functioning, such as hallucinations, delusions, or disorganized thought and speech. In human cognition and mental health, these phenomena represent significant deviations from typical perception and belief. As artificial intelligence systems grow more complex and show emergent behaviors, a metaphorical parallel can be drawn to these concepts in advanced technology. Viewing “positive symptoms” through a technological lens lets us examine the unexpected, often unwanted, additions to an AI’s operational reality or its interpretation of data, and what they imply about autonomous systems and their potential for generating “synthetic realities.”


Defining “Positive Symptoms” in an AI Context: From Aberration to Analogous Behavior

In human psychology, positive symptoms like hallucinations (perceiving things that aren’t there) and delusions (firmly held false beliefs) are distinct indicators of certain neurocognitive conditions. When we consider artificial intelligence, particularly advanced machine learning models, we encounter phenomena that, while fundamentally different from biological processes, share conceptual similarities. An AI system doesn’t experience consciousness or suffer from a medical condition. Yet, it can “perceive” non-existent patterns, “believe” erroneous information based on flawed logic, or generate outputs that deviate significantly from its intended function, creating a kind of “synthetic positive symptom.” These aren’t indicators of an AI’s “illness,” but rather emergent properties or failures within intricate algorithmic structures, often stemming from data deficiencies, model biases, or the inherent complexity of deep neural networks. Understanding these technological “aberrations” is crucial for developing robust, reliable, and trustworthy AI.

AI Hallucinations: Synthetic Realities and Data Distortions

One of the most prominent technological analogies to hallucinations manifests in generative AI models. These systems, designed to create new content such as images, text, or audio, can often produce outputs that are entirely fabricated, convincing, and yet completely divorced from reality or factual accuracy. This phenomenon is widely referred to as “AI hallucination.”

Generative Models and Fabricated Outputs

Large Language Models (LLMs), for instance, are trained on vast text datasets to predict the next token (roughly, the next word or word fragment) in a sequence. While powerful for generating coherent and contextually relevant prose, they can also produce plausible-sounding but incorrect information, invent facts, or cite sources that do not exist. This is not intentional deception but a byproduct of their probabilistic nature: they are trained to produce fluent, human-like text, and fluency is not the same as factual accuracy. When asked a question their training data does not cover well, an LLM may “hallucinate” an answer that looks correct but is factually baseless. Similarly, image generation models can create scenes or objects that defy physics and logic, or add extra fingers to a hand in an otherwise photorealistic image, a visual “hallucination.”
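To make the probabilistic point concrete, here is a minimal Python sketch of next-token sampling. The prompt, candidate tokens, and scores are invented for illustration and do not come from any real model; the point is only that a fluent but wrong continuation can carry substantial probability.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn raw scores into a probability distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for continuing "The capital of Australia is".
# Both the candidates and the numbers are assumptions made for this sketch.
candidates = ["Canberra", "Sydney", "Melbourne"]
logits = [2.0, 1.6, 0.5]

probs = softmax(logits)

# Sample many continuations with a fixed seed so the run is repeatable.
rng = random.Random(0)
samples = rng.choices(candidates, weights=probs, k=1000)
wrong_share = sum(1 for s in samples if s != "Canberra") / len(samples)

print(dict(zip(candidates, probs)))
print("share of wrong continuations:", wrong_share)
```

With these made-up scores, the incorrect continuations together carry just under half of the probability mass, so sampling returns a wrong city in a large share of draws even though the correct answer is the single most likely token.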

Sensor Misinterpretations and Perceptual Illusions

Beyond generative AI, “hallucinations” can also occur in perception-based AI systems, such as those used in autonomous vehicles or remote sensing drones. These systems rely on sensory data (cameras, lidar, radar) to build a model of their environment. However, under certain conditions, a system might misinterpret ambiguous sensory input, leading to a “perceptual illusion” where it “sees” something that isn’t there, or misidentifies an object. For example, a self-driving car might temporarily “hallucinate” an obstacle due to glare or sensor noise, triggering unnecessary braking. Adversarial attacks can exploit these vulnerabilities, introducing subtle perturbations to an image that are imperceptible to humans but cause an AI to misclassify an object entirely, creating a targeted “hallucination” for the machine. These misinterpretations are analogous to visual or auditory hallucinations, as the system perceives a reality that does not objectively exist.
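The adversarial case can be sketched with a toy linear classifier. This is an illustration in the spirit of the fast gradient sign method, not a description of any production perception stack; the weights, input features, and `epsilon` budget are hypothetical.

```python
def score(weights, bias, x):
    """Linear decision score; a positive value means 'stop sign detected'."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def sign(v):
    """Return -1, 0, or 1 according to the sign of v."""
    return (v > 0) - (v < 0)


def perturb(weights, x, epsilon):
    """Shift every feature by at most epsilon in the direction that lowers the score.

    For a linear model the gradient of the score with respect to the input
    is the weight vector, so stepping against its sign is the worst case
    under a per-feature (L-infinity) budget.
    """
    return [xi - epsilon * sign(w) for w, xi in zip(weights, x)]


# Hypothetical model and input, chosen only to make the arithmetic easy to follow.
weights = [0.5, -0.3, 0.8, -0.6]
bias = -0.1
x = [0.6, 0.4, 0.5, 0.3]

x_adv = perturb(weights, x, epsilon=0.2)
clean_score = score(weights, bias, x)
adv_score = score(weights, bias, x_adv)

print("clean score:", clean_score)
print("perturbed score:", adv_score)
```

Here the clean input scores positive and the perturbed one scores negative, although no feature moved by more than `epsilon`. Real image classifiers have thousands of input dimensions, which is why a much smaller per-pixel change can add up to the same flip.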

Algorithmic Delusions: Biased Reasoning and Persistent Errors

Just as delusions in humans represent firmly held false beliefs resistant to contrary evidence, AI systems can exhibit patterns of “delusional” reasoning. These are not beliefs in the human sense, but persistent, systematic errors in inference, prediction, or decision-making that arise from flaws in their design or training.

The Impact of Biased Datasets

A primary source of algorithmic “delusions” is biased training data. If an AI model is trained on data that is unrepresentative, incomplete, or contains inherent prejudices, it will learn and perpetuate those biases. For example, an AI designed for loan approvals might consistently deny applications from certain demographics, not due to explicit programming, but because its training data reflected historical human biases. The AI “believes” these patterns are correct and valid because they are deeply embedded in its learned parameters. Even when presented with new, unbiased data, the system’s learned “delusion” might be difficult to override, leading to systematically unfair or incorrect decisions. This reflects a rigid adherence to a flawed internal model of reality.
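One simple way to surface this kind of learned bias is to compare outcome rates across groups. The sketch below is a minimal audit under stated assumptions: the group labels and decision counts are fabricated, and the 0.8 cutoff is a rule-of-thumb threshold chosen for illustration rather than a universal standard.

```python
from collections import defaultdict


def approval_rates(records):
    """Compute the approval rate per group from (group, decision) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, decision in records:
        totals[group] += 1
        approved[group] += decision  # decision is 1 (approved) or 0 (denied)
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact(rates):
    """Ratio of the lowest group approval rate to the highest."""
    return min(rates.values()) / max(rates.values())


# Fabricated decisions standing in for a model's output on two groups.
decisions = (
    [("A", 1)] * 80 + [("A", 0)] * 20
    + [("B", 1)] * 40 + [("B", 0)] * 60
)

rates = approval_rates(decisions)
ratio = disparate_impact(rates)
flagged = ratio < 0.8  # assumed threshold for this sketch

print(rates, ratio, flagged)
```

In this invented example group B is approved half as often as group A, so the audit flags the model. A check like this says nothing about why the gap exists, only that it is there and worth investigating.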

Deep Learning and Unintended Inferences

The black-box nature of many deep learning models can also contribute to “delusional” reasoning. Due to their immense complexity, it can be challenging to understand precisely why a neural network makes a particular decision. The model might discover correlations in data that humans would consider spurious or nonsensical, yet it integrates these into its decision-making framework. If these spurious correlations lead to consistently incorrect or irrational outputs in certain contexts, the AI is effectively operating under a “delusion” – a false belief about the underlying causal relationships or patterns in its environment. For example, an AI might “believe” that cloudy weather always precedes a stock market crash, simply because it observed this pattern a few times in its training data, even though there’s no causal link. Such unintended inferences, if uncorrected, become fundamental to the AI’s “understanding” and decision-making, representing a persistent, false “belief.”
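A few lines of Python show how easily a small sample can suggest such a link. The observations below are fabricated for illustration (1 means a cloudy day or a market drop, 0 means neither), and `pearson` is a plain hand-written correlation helper.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Six fabricated days in which clouds and market drops happen to coincide.
cloudy_small = [1, 1, 0, 0, 0, 0]
crash_small = [1, 1, 0, 0, 0, 0]

# A larger fabricated sample in which every combination occurs equally often.
cloudy_large = [0, 0, 1, 1] * 25
crash_large = [0, 1, 0, 1] * 25

r_small = pearson(cloudy_small, crash_small)
r_large = pearson(cloudy_large, crash_large)

print("small sample correlation:", r_small)
print("large sample correlation:", r_large)
```

In the six-day sample the two series move in lockstep, while in the larger, balanced sample the correlation vanishes. A model fitted only to the first sample has no way to tell the difference.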

Managing “Positive Symptoms” in Advanced AI Systems

Mitigating these technological “positive symptoms” is paramount for the ethical and effective deployment of AI. It involves a multi-faceted approach centered on data integrity, model transparency, and continuous validation.

The Role of Explainable AI (XAI)

Explainable AI (XAI) is an area of research aimed at making AI models more transparent and understandable to humans. By providing insight into why a model makes a particular decision or prediction, XAI can help identify the roots of “hallucinations” and “delusions.” If an LLM hallucinates, attribution tools might indicate which parts of the prompt, or which training examples, most influenced the erroneous generation, or show why a false statement was assigned high probability. For a perception system, XAI could visualize the features the model focused on when it misclassified an input, revealing sensor blind spots or adversarial vulnerabilities. Such explanations are approximations rather than complete accounts of a model’s reasoning, but increased transparency allows developers and users to diagnose and address the underlying causes of anomalous behavior, rather than simply observing the symptoms.
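For the simplest possible model, a linear scorer, an explanation can be computed exactly: each feature’s contribution is its weight times its value. The sketch below uses hypothetical feature names, weights, and inputs for a loan model; attribution methods for deep networks are more involved and only approximate.

```python
def explain(weights, bias, features):
    """Return the model score and per-feature contributions, largest first."""
    contributions = {
        name: weights[name] * value for name, value in features.items()
    }
    total = bias + sum(contributions.values())
    ranked = sorted(
        contributions.items(), key=lambda item: abs(item[1]), reverse=True
    )
    return total, ranked


# Hypothetical model parameters and applicant, invented for this sketch.
weights = {"income": 0.8, "debt_ratio": -1.2, "zip_code_risk": -2.0}
bias = 0.2
applicant = {"income": 0.5, "debt_ratio": 0.3, "zip_code_risk": 0.9}

total, ranked = explain(weights, bias, applicant)

print("score:", total)
for name, contribution in ranked:
    print(name, contribution)
```

The ranking shows that the hypothetical `zip_code_risk` feature dominates the negative score, the kind of finding that would prompt a check on whether that feature is acting as a proxy for a protected attribute.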

Data Integrity and Adversarial Robustness

Ensuring the integrity and diversity of training data is fundamental to preventing algorithmic “delusions.” Rigorous data curation, bias detection, and augmentation techniques can help create datasets that accurately reflect the real world, reducing the likelihood of an AI forming skewed “beliefs.” Furthermore, developing adversarial robustness is crucial for combating “hallucinations” stemming from sensor misinterpretations and adversarial attacks. This involves training models to be resilient against malicious inputs designed to trick them. Techniques like adversarial training, where models are exposed to perturbed data during training, can significantly improve their ability to correctly classify inputs even under challenging or deceptive conditions, thus making them less prone to “perceptual illusions.”

The conceptualization of “positive symptoms” in AI serves as a powerful metaphor to understand the challenges inherent in building truly intelligent and reliable systems. While AI will never “suffer” from schizophrenia in the human sense, recognizing the analogous phenomena of AI hallucinations and algorithmic delusions drives innovation in data science, machine learning explainability, and robust system design, ultimately leading to more trustworthy and beneficial artificial intelligence.