Reporting content on a platform like Instagram looks like a single button click, but each report sets off a chain of automated and human processes. Machine learning models and human review teams work together to apply the community guidelines and maintain platform integrity. These systems are revised continually to keep pace with the changing challenges of user-generated content, misinformation, and online abuse. Understanding what happens after a report is filed means looking at the architecture of modern content moderation, a critical component of today’s digital landscape.

The Algorithmic Backbone of Content Moderation

At the heart of any large-scale content platform’s moderation strategy lies an algorithmic infrastructure. When a user reports content, whether a photo, video, comment, or profile, the report enters a pipeline that assesses and categorizes the potential violation. This initial phase is predominantly automated, relying on artificial intelligence and machine learning to process a very large volume of reports in close to real time.

Automated Detection Systems and Machine Learning

Before a human even sees a reported piece of content, automated detection systems are already at work. These systems are powered by sophisticated machine learning models, meticulously trained on vast datasets of previously reviewed content, including both policy-compliant and violative material. When a report comes in, these models rapidly analyze various attributes of the reported item. For images and videos, this might involve object recognition, facial recognition (where permissible and privacy-compliant), text overlay analysis, and even sentiment analysis of accompanying captions. For text-based content, natural language processing (NLP) algorithms are deployed to identify hate speech, harassment, threats, or other forms of harmful language.

These AI systems are not static; they are retrained as new data arrives. Moderation decisions, particularly those confirmed or corrected by human reviewers, feed back into the models as training data, improving their accuracy and their ability to detect nuances in harmful content. This iterative process of training and refinement is crucial, especially as malicious actors constantly devise new ways to circumvent detection. The value lies not just in the initial deployment of these models but in their continued adaptation, which helps them remain effective against evolving threats.

Real-time Analysis and Predictive Analytics

Speed matters when reports are processed. In an environment where viral content can spread globally in minutes, delays in moderation can amplify harm. Consequently, platforms employ computational frameworks designed for near-instantaneous processing of reports. This rapid analysis isn’t limited to reported content; many platforms also use predictive analytics to proactively identify potentially violative content before it’s even reported.

Predictive models analyze user behavior patterns, content attributes, and network connections to flag suspicious activity or content that might violate policies. For instance, an algorithm might identify a new account rapidly posting a high volume of identical images across multiple profiles, a common indicator of spam or bot activity. This proactive approach, driven by advanced data science and machine learning, shifts content moderation from a purely reactive process to a more preventative one, representing a significant innovation in digital safety. The challenge, however, is to maintain this speed and proactive stance without inadvertently penalizing legitimate users or stifling free expression.
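The spam indicator described above, a new account posting identical images across many profiles, can be illustrated with a small Python sketch. This is not how Instagram or any specific platform implements detection: the `Post` fields, the function name, and both thresholds are hypothetical, and an exact SHA-256 match only catches byte-identical files, whereas production systems would more plausibly use perceptual hashing to catch near-duplicates.

```python
from collections import defaultdict
from dataclasses import dataclass
import hashlib


@dataclass(frozen=True)
class Post:
    """Hypothetical record of one image post (all fields are illustrative)."""
    account_id: str
    account_age_days: int
    target_profile: str  # profile the image was posted to
    image_bytes: bytes


def flag_duplicate_posters(posts, max_account_age_days=7, min_profiles=3):
    """Return IDs of young accounts that posted one image to many profiles.

    Both thresholds are assumptions chosen for the example, not real policy.
    """
    # (account, image hash) -> distinct profiles that received that image
    spread = defaultdict(set)
    for post in posts:
        if post.account_age_days > max_account_age_days:
            continue  # this sketch only looks at recently created accounts
        digest = hashlib.sha256(post.image_bytes).hexdigest()
        spread[(post.account_id, digest)].add(post.target_profile)

    flagged = {
        account
        for (account, _digest), profiles in spread.items()
        if len(profiles) >= min_profiles
    }
    return sorted(flagged)
```

A flag from a heuristic like this would normally be one signal among several rather than grounds for enforcement on its own, which is how a system can stay proactive without penalizing legitimate users.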

The Hybrid Approach: Integrating AI with Human Oversight

While AI forms the bedrock of modern content moderation, human judgment remains indispensable. The nuances of human language, cultural contexts, satire, and complex real-world situations often exceed the current capabilities of even the most advanced algorithms. This necessitates a hybrid approach, where AI efficiently handles the vast majority of straightforward cases, while human moderators step in for more intricate decisions. This integration of artificial intelligence and human intelligence is a hallmark of sophisticated digital governance.

Escalation Protocols and Expert Review

When an automated system flags content but cannot make a definitive decision, or when the severity of a potential violation warrants additional scrutiny, the content is escalated to a human review team. These teams consist of trained professionals who specialize in understanding platform policies, legal frameworks, and various cultural contexts. They assess reported content against detailed guidelines, taking into account subtleties that AI might miss, such as intent, context, and potential real-world impact.

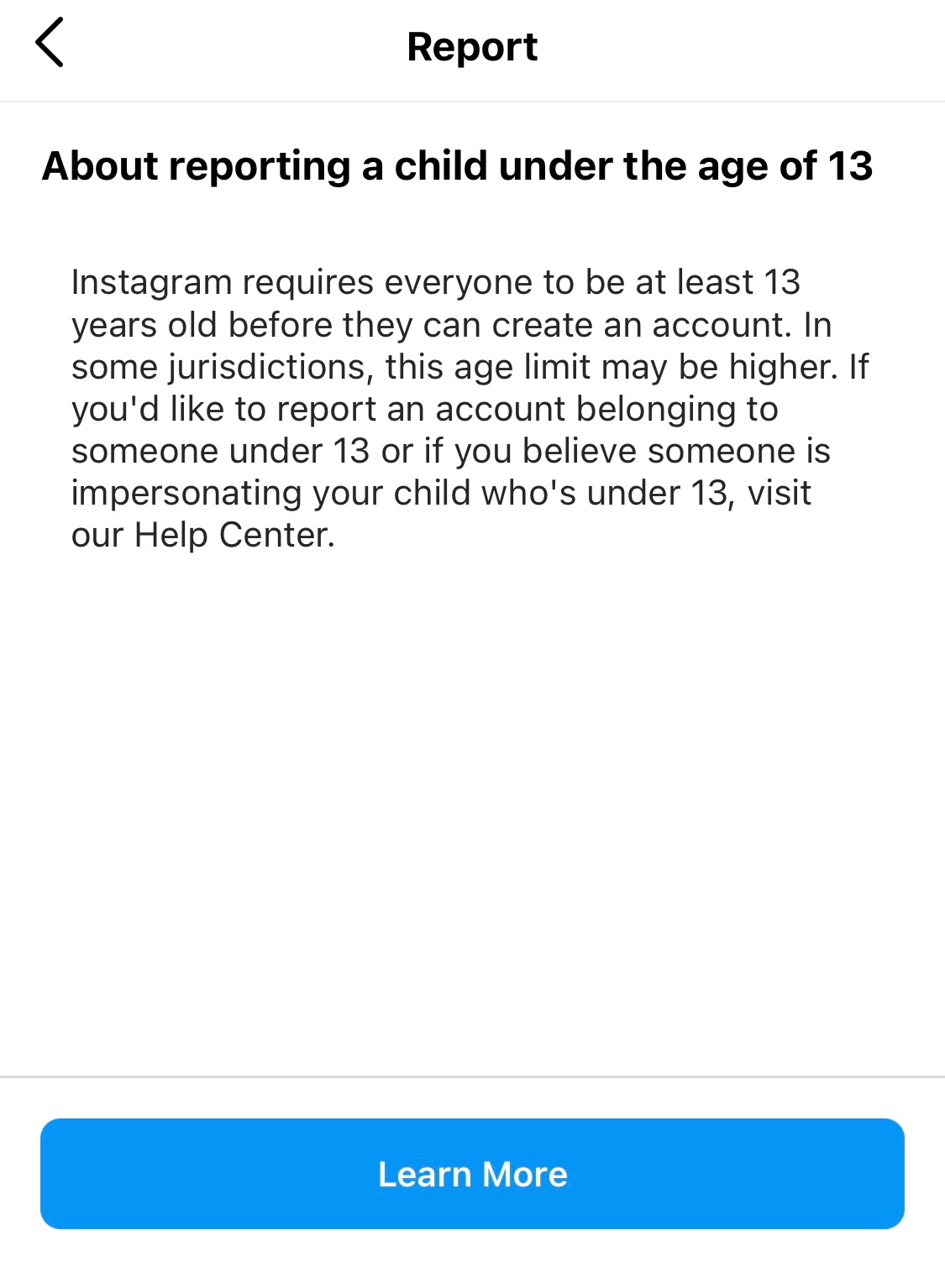

The process often involves multiple layers of review, particularly for highly sensitive categories like hate speech, child safety, or incitement to violence. A case might be reviewed by a general moderator, then escalated to a specialist, and potentially even to legal teams for specific jurisdictional considerations. This multi-tiered human review process is meant to make critical decisions more accurate and accountable, and it serves as a vital check against algorithmic errors or biases. The innovation here lies not only in the efficiency of the escalation but also in the continuous training and support provided to human moderators, who operate under immense pressure.
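One way to picture the escalation logic is as a routing function that looks at the model's confidence and the report category. The following Python sketch is purely illustrative: the route names, category labels, and threshold values are assumptions made for the example and do not describe any platform's actual rules.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_ACTION = "auto_action"              # model is confident: act automatically
    NO_ACTION = "no_action"                  # model is confident nothing is wrong
    GENERAL_REVIEW = "general_review"        # uncertain: general human moderator
    SPECIALIST_REVIEW = "specialist_review"  # sensitive: specialist human reviewer


# Hypothetical labels for categories that always receive specialist review.
SENSITIVE_CATEGORIES = {"hate_speech", "child_safety", "incitement_to_violence"}


@dataclass
class Report:
    category: str
    violation_score: float  # model's estimated probability of a violation, 0..1


def route_report(report, act_above=0.95, dismiss_below=0.05):
    """Pick a review route; the thresholds are assumed values, not real policy."""
    if not 0.0 <= report.violation_score <= 1.0:
        raise ValueError("violation_score must be between 0 and 1")
    # Sensitive categories go to specialists regardless of model confidence.
    if report.category in SENSITIVE_CATEGORIES:
        return Route.SPECIALIST_REVIEW
    if report.violation_score >= act_above:
        return Route.AUTO_ACTION
    if report.violation_score <= dismiss_below:
        return Route.NO_ACTION
    # Everything in the uncertain middle band is escalated to a person.
    return Route.GENERAL_REVIEW
```

Checking the category before the score reflects the idea that severity, not just model uncertainty, can justify human scrutiny.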

Feedback Loops and Model Refinement

The interplay between AI and human moderators forms a feedback loop. Every decision made by a human reviewer can serve as a new labeled data point for the machine learning models. If an AI system incorrectly flags benign content, or fails to detect a clear violation, the human override provides a correction that helps retrain and refine the algorithms. Conversely, when a reviewer confirms that the AI correctly identified violative content, the confirmation becomes a positive training example and evidence that the model is performing as intended.

This continuous exchange ensures that the AI systems are constantly learning from human expertise, adapting to new forms of harmful content, and improving their ability to differentiate between policy violations and acceptable expression. This iterative process of human validation and algorithmic adjustment is crucial for the long-term effectiveness and fairness of any content moderation system. It’s a testament to the collaborative innovation where machines augment human capabilities, and humans, in turn, enhance machine intelligence.
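As a rough sketch of what this feedback loop might collect, the Python snippet below turns human review outcomes into training labels and separates out the two kinds of model error. The `ReviewedItem` structure and the function name are hypothetical; the actual retraining step is left out, since it depends entirely on the model in use.

```python
from dataclasses import dataclass


@dataclass
class ReviewedItem:
    """Hypothetical record pairing a model decision with a human decision."""
    content_id: str
    model_flagged: bool    # what the automated system decided
    human_violation: bool  # what the human reviewer decided


def summarize_feedback(items):
    """Turn human-reviewed items into training labels and error lists."""
    # The human decision is treated as the ground-truth label for retraining.
    labels = {item.content_id: item.human_violation for item in items}
    # Model flagged it, human found it benign: a false positive.
    false_positives = [
        i.content_id for i in items if i.model_flagged and not i.human_violation
    ]
    # Model missed it, human found a violation: a false negative.
    false_negatives = [
        i.content_id for i in items if not i.model_flagged and i.human_violation
    ]
    return {
        "labels": labels,
        "false_positives": false_positives,
        "false_negatives": false_negatives,
    }
```

Tracking false positives and false negatives separately matters because they correspond to different harms: the first penalizes acceptable expression, the second leaves violations in place.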

Navigating Platform Policies and User Trust

The efficacy of content moderation technologies is not solely measured by their ability to detect violations but also by their contribution to fostering user trust and maintaining transparency. Platforms like Instagram operate under immense scrutiny, balancing the need for a safe environment with the imperative to protect free expression. The technologies employed in moderation play a direct role in navigating these often-conflicting objectives.

Transparency Challenges and System Explainability

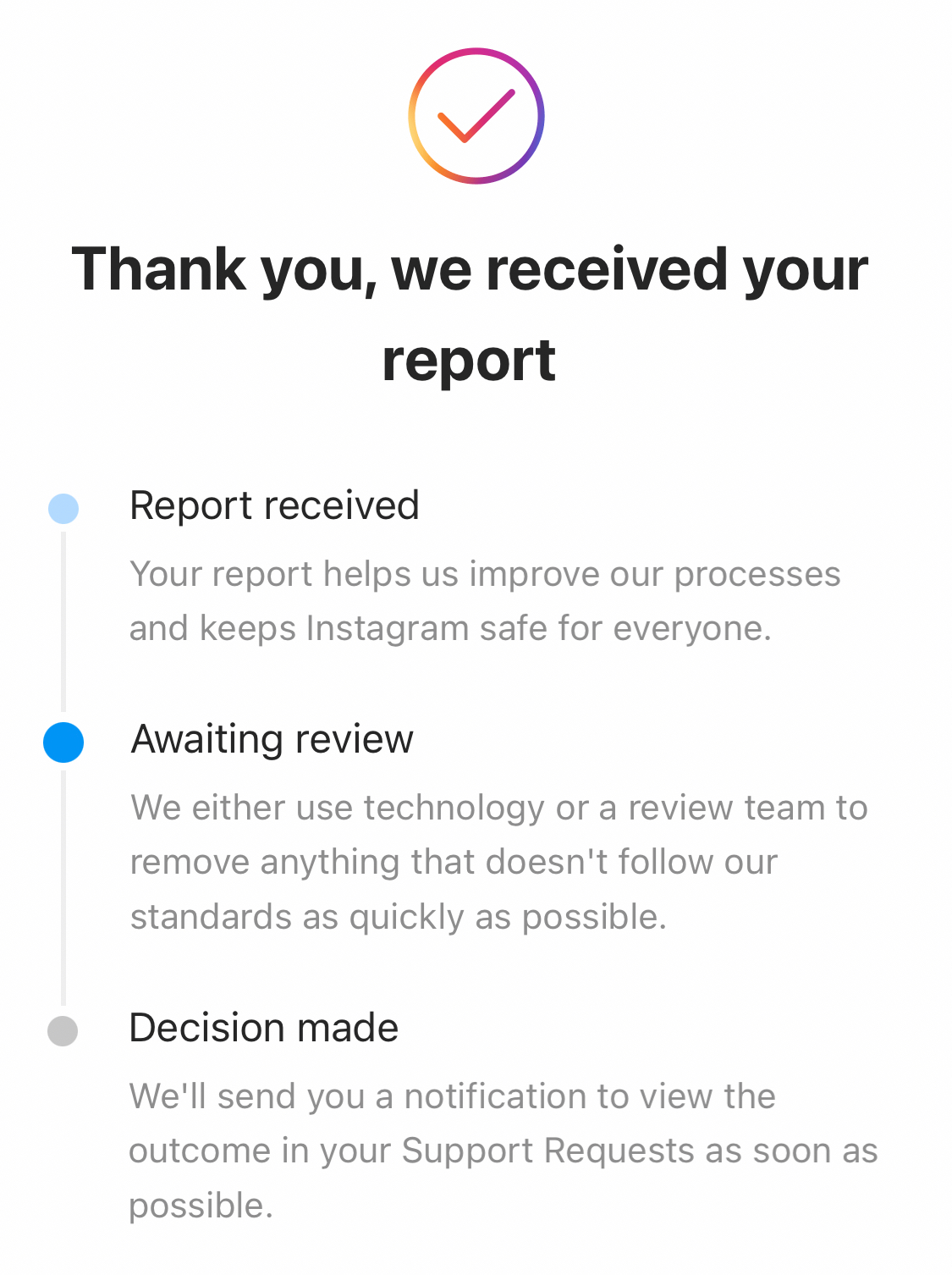

One of the significant challenges in employing sophisticated AI for content moderation is the inherent “black box” nature of many advanced algorithms. Users often find it difficult to understand why their content was removed or why a report was acted upon (or not). This lack of explainability can erode user trust, leading to perceptions of unfairness or censorship. Innovative efforts are underway to make these systems more transparent, providing users with clearer reasons for moderation decisions, including the specific policy violated and, where possible, an explanation of how the AI identified the violation.

While full algorithmic transparency remains an elusive goal due to proprietary concerns and the complexity of the models, platforms are investing in technologies that can offer more actionable insights to users. This includes improved notification systems, accessible appeal processes, and public reporting on moderation metrics. These innovations aim to bridge the gap between automated enforcement and user understanding, fostering a more transparent and trustworthy digital environment.

Safeguarding Against Abuse and Misinformation

The ultimate purpose of content moderation technologies is to safeguard users and maintain the integrity of the platform. This encompasses a broad spectrum of harms, from cyberbullying and harassment to the spread of dangerous misinformation and propaganda. Advanced AI systems are increasingly vital in identifying and mitigating these threats at scale. They can detect patterns of coordinated inauthentic behavior, identify deepfakes or manipulated media, and track the dissemination of harmful narratives across vast networks.

The innovation in this domain is constant, driven by the ever-evolving tactics of those seeking to exploit platforms. From natural language generation to advanced image and video manipulation, the sophistication of harmful content demands equally sophisticated countermeasures. Content moderation technologies are on the front lines of this digital battle, constantly adapting and deploying new defenses to protect vulnerable users and ensure that platforms remain spaces for constructive engagement rather than harmful exploitation.

The Evolving Landscape of Digital Governance Technologies

The field of content moderation technology is in a state of rapid evolution, driven by the increasing scale of online interactions and the growing complexity of digital harms. Future innovations promise even more proactive, nuanced, and perhaps federated approaches to managing online content.

Future of Proactive Moderation

Looking ahead, the focus on proactive moderation is set to intensify. This involves leveraging technologies like federated learning, in which models are trained locally on decentralized datasets and only model updates, rather than the underlying user data, are shared for aggregation, which can improve privacy while still improving detection. Further advancements in neural networks and graph analysis could allow platforms to identify emerging threats and harmful trends even earlier, moving beyond reactive reporting to predictive intervention. The goal is to create resilient systems that can anticipate and neutralize threats before they inflict widespread damage, while simultaneously minimizing false positives that impact legitimate speech.
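To make the federated learning idea more concrete, here is a minimal Python sketch of the aggregation step used in federated averaging: each client trains locally and reports only its model weights and example count, and the server combines them into a weighted average. The function name and data layout are assumptions for illustration; local training, secure aggregation, and communication are all omitted.

```python
def federated_average(client_updates):
    """Weighted average of client weight vectors.

    client_updates: list of (weights, num_examples) pairs, where weights is a
    list of floats with the same length for every client. Raw training data
    never appears here; only the locally trained weights are shared.
    """
    if not client_updates:
        raise ValueError("need at least one client update")
    total_examples = sum(n for _weights, n in client_updates)
    if total_examples <= 0:
        raise ValueError("total number of examples must be positive")

    dim = len(client_updates[0][0])
    averaged = [0.0] * dim
    for weights, n in client_updates:
        if len(weights) != dim:
            raise ValueError("all clients must report the same number of weights")
        # Clients with more local examples contribute proportionally more.
        for i, w in enumerate(weights):
            averaged[i] += w * n / total_examples
    return averaged
```

For example, `federated_average([([1.0, 2.0], 1), ([3.0, 4.0], 3)])` weights the second client three times as heavily as the first.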

Balancing Free Expression with Safety Protocols

The continuous technological challenge for platforms lies in striking the delicate balance between enabling free expression and enforcing safety protocols. Innovations in “contextual AI” are crucial here, allowing algorithms to better understand the intent and surrounding circumstances of content, rather than merely identifying keywords or images in isolation. This could lead to more nuanced moderation decisions, reducing the likelihood of over-moderation or under-moderation. The development of AI-powered tools that help users understand platform policies and self-moderate before posting could also play a significant role. As digital governance technologies mature, they will increasingly shape the contours of online discourse, demanding ongoing ethical consideration and technological ingenuity to build truly safe, inclusive, and vibrant digital communities.